CS 484: Computational Vision

Yuri Boykov

Estimated study time: 6 hr 2 min

Table of contents

Introduction to Computational Vision

What Is Computer Vision?

Human vision begins when light reflected from objects reaches the eye’s photoreceptors, producing signals that travel to the brain, which interprets the scene — inferring a garden, a bridge, the geometry of 3D space, the identities of objects, and their spatial relationships. Computer vision asks: can we build machines that replicate this interpretive process? The sensor is no longer a biological eye but a grid of electronic sensing elements arranged on an image plane inside a camera. The signals those elements produce — a rectangular array of intensity values — are then analysed by algorithms that attempt to recover scene understanding from raw pixel data.

Computer Vision and Its Relationship to Computer Graphics

Computer graphics and computer vision are, in a meaningful sense, inverses of each other. Graphics starts from a computer model — geometry, materials, lighting — and renders it into an image. Vision starts from an image and attempts to recover the underlying model: shapes, lighting, motion, object identities. Both fields share a substantial intersection in modelling, where the representation of 3D shape, surface properties, and illumination is central. Vision draws further on detection, tracking, motion estimation, and recognition, while graphics focuses on surface design, animation, and user interfaces for controlling rendered scenes.

An Interdisciplinary Field

Computational vision sits at the intersection of many disciplines. Biology and neuroscience inform our understanding of how living organisms perceive visual scenes, motivating both the problems we study and some of the algorithmic approaches we take. Physics underlies the formation of images — how light travels, reflects, and refracts. Mathematics — particularly linear algebra, probability, optimization, and geometry — provides the formal backbone of virtually every algorithm in the field. Psychology and cognitive science shed light on how perception and attention operate in humans. Machine learning has become the dominant paradigm for learning visual representations from data. Robotics provides some of the most demanding application contexts, while medical imaging connects the field to engineering and clinical practice.

The goal of computational vision, stated simply, is to bridge the gap between pixels and meaning. A computer sees only a two-dimensional table of numbers. The task of the vision system is to infer from those numbers the rich semantic content that a human perceives immediately and effortlessly.

Applications of Computer Vision

Optical Character Recognition

One of the earliest successful applications, optical character recognition (OCR) allows computers to read printed or handwritten text. Reliable systems for printed text were available by the early 1990s and are now ubiquitous in postal sorting, document scanning, and mobile applications.

Object Detection and Tracking

Object detection is the task of locating instances of specific object categories within an image, typically by predicting bounding boxes. Face detection became practical around 2000 using methods such as the Viola-Jones cascade classifier, and modern deep-learning detectors can localise hundreds of object categories simultaneously in real time. Tracking extends detection across time, maintaining the identity of objects across video frames. Methods such as pictorial structures decompose articulated objects into parts with spatial constraints, enabling tracking of human body configurations across a sequence.

Segmentation

Segmentation is the problem of assigning a label to every pixel in an image, producing a pixel-accurate partitioning of the scene. Early energy minimisation methods — Livewire (1995) and graph cuts (2001–2004) — enabled interactive segmentation tools in which a user sketches a rough outline and the algorithm snaps to precise object boundaries. These methods found rapid adoption in medical image segmentation, where graph-cut techniques enabled reliable 3D organ extraction from CT volumes as early as 2001. Later, combining detection with segmentation led to panoptic segmentation systems (exemplified by the CityScapes dataset) that simultaneously identify object instances and label every pixel with a semantic category.

Activity Recognition

Activity recognition systems interpret the temporal structure of video, identifying human actions such as walking, running, or gesturing. Bottom-up tracking methods that aggregate motion evidence across frames provide the foundation for such recognition pipelines.

Vision-Based Interaction and Assistive Technology

The Microsoft Kinect (2010) brought structured-light 3D sensing to consumer hardware, enabling markerless body tracking for gaming. The same depth-sensing technology underpins assistive technologies that help people with mobility impairments interact with computers and environments through gesture.

Stereo and Multi-View Reconstruction

Humans perceive depth partly because our two eyes observe the world from slightly different positions — the disparity between the two views provides a powerful depth cue. Stereo reconstruction algorithms recover a dense depth map from pairs of calibrated images; competitive results were demonstrated as early as 1999. By 2005, systems using many images from different viewpoints were producing full 3D surface models. Structure from Motion (SfM) methods take an unordered collection of photographs and simultaneously recover camera positions and a sparse 3D point cloud, enabling the reconstruction of large scenes from casual photography.

3D Scene Reconstruction

Beyond stereo, scenes can be reconstructed from point clouds obtained by laser scanners using surface-fitting algorithms. Impressive results from just a handful of images were achieved by Debevec, Taylor, and Malik at SIGGRAPH 1996. More remarkably, monocular depth estimation — recovering depth from a single image — has become possible through learning. Godard et al. (CVPR 2017) demonstrated that a neural network trained on stereo pairs can predict plausible depth maps at test time from a single photograph, exploiting learned priors about scene geometry.

Vision for Robotics

NASA’s Mars Exploration Rover Spirit used an onboard vision system to navigate hostile terrain autonomously. The system solved multiple simultaneous tasks: panorama stitching of wide-angle imagery, 3D terrain modelling to identify safe traversal paths, position tracking to maintain localisation without GPS, and obstacle detection to avoid rocks and craters. This application illustrates how computer vision underpins autonomous systems that must operate far beyond the reach of direct human control.

Image Modalities

Part I: Photo and Video Data

The Pinhole Camera and Camera Obscura

To understand digital imaging, it is useful to reason from first principles about how cameras form images. Consider the simplest possible “camera”: a piece of film placed directly in front of a scene. Every point on the film receives light from every visible point in the scene simultaneously, so the film records a completely blurred superposition of the entire scene — no useful image is formed.

The key insight behind the Camera Obscura (Latin: dark room), known to Aristotle and described in detail by Athanasius Kircher in 1646, is that introducing a barrier with a small hole dramatically improves image formation. Most rays from each scene point are blocked; only the ray passing through the hole reaches the film. The result is a dim but spatially coherent image, with the depth of the room corresponding to the focal length of the device. The pencil of rays — all rays passing through the aperture from a single point — converges on the film to produce a sharp pixel, as long as the aperture is small enough.

This principle carries a fundamental consequence. Because 3D space is projected onto a 2D image plane, depth information is lost: a nearby small object and a distant large object can produce identical images. Depth is therefore inherently ambiguous in a single photograph. Recovering it requires additional information — multiple viewpoints, prior knowledge about scene geometry, or learning from data — which is a recurring theme throughout the course.

Aperture Size and Diffraction

There is a tension in pinhole camera design: making the aperture smaller sharpens the image by blocking more extraneous rays, but it also reduces the amount of light reaching the film and eventually introduces diffraction effects. When the aperture diameter becomes comparable to the wavelength of visible light (roughly 400–700 nm), the wave nature of light causes rays to spread after passing through the hole, reintroducing blur. In practice, extremely small apertures are therefore impractical.

Lenses

Lenses resolve the aperture dilemma by gathering light over a large area and refracting it so that all rays from a single scene point converge to a single image point. A lens of sufficient size can collect far more light than a pinhole while still producing a sharp image — provided the scene point lies at the correct depth. Points at other depths focus behind or in front of the image plane, producing a circle of confusion: a small disk rather than a point. Moving the image plane (changing the image distance) shifts which depths are in focus.

The focal length of a lens is defined as the image distance at which objects at infinity appear sharply focused. Focal length depends on the physical construction of the lens (the curvature of its surfaces and the refractive index of the glass). Many camera lenses are multi-element systems that allow the effective focal length to be varied (zoom lenses).

The Pinhole Camera Model

Because modelling circles of confusion and depth of field adds considerable complexity, computational vision typically adopts the simplified pinhole camera model. In this model, a single point — the optical centre \(C\), also called the viewpoint — replaces the lens, and an image plane is positioned at distance \(f\) (the focal length) in front of it. Every scene point is mapped to the image plane by the ray connecting it to \(C\). Equivalently, one can draw the image plane as a virtual plane on the same side of \(C\) as the scene (in front of the camera), which avoids the inversion inherent in a real pinhole and is the convention used throughout the course.

Projective Geometry of the Pinhole Camera

To describe the mapping from 3D scene points to 2D image pixels mathematically, we adopt a camera-centred coordinate system. The optical centre \(C\) is placed at the origin. The \(x\)- and \(y\)-axes lie parallel to the image plane, aligned with the horizontal \(u\) and vertical \(v\) axes of the image coordinate system respectively. The \(z\)-axis, called the optical axis, points forward through the centre of the image.

Under these assumptions, a 3D world point \((x, y, z)\) projects onto image pixel \((u, v)\) according to:

\[ (x,\, y,\, z) \;\longmapsto\; \left( f\,\frac{x}{z},\; f\,\frac{y}{z} \right) \]The factor \(1/z\) embodies the key perceptual fact that objects appear smaller as they recede: the apparent size of any 3D object in the image is inversely proportional to its distance \(z\) from the camera. This mapping is called a perspective projection, and it is the foundation for all the geometric reasoning covered in later lectures (warping, homographies, stereo, and multi-view geometry).

The Human Eye as a Camera

The human eye is a biological realisation of the camera principle. The iris is a coloured annulus of radial muscles that controls the pupil — the aperture through which light enters. The pupil adjusts dynamically to regulate the amount of incoming light. The lens of the eye (which can change shape to adjust focal length, a process called accommodation) focuses light onto the retina at the back of the eye. The retina is lined with photoreceptor cells: rods (sensitive to low light, monochromatic) and cones (sensitive to colour, concentrated in the fovea at the centre of the visual field). These cells play the role of the film or sensor in a camera.

Digital Image Formation

Real cameras transduce light into electrical signals using CCD or CMOS image sensors. The intensity recorded at each sensor element depends on two physical quantities:

\[ f(x, y) = \text{reflectance}(x, y) \times \text{illumination}(x, y) \]Reflectance is a material property — the fraction of incident light that the surface reflects — and lies in \([0, 1]\). Illumination is the amount of light falling on the surface from the environment and can in principle be unbounded. Their product gives the image irradiance at each point.

Sampling and Quantization

Before we can process an image on a computer, the continuous signal must be converted to a discrete array through two operations:

- Spatial sampling: The image plane is divided into a regular grid. The continuous intensity value at each grid location is measured. If the grid spacing is \(\Delta\), the sampled image is written \(f[i, j] = f(i\Delta, j\Delta)\). The density of the grid determines the spatial resolution, typically expressed in pixels.

- Intensity quantization: Each sampled intensity value is rounded to the nearest integer from a finite set. An 8-bit image stores values in \(\{0, 1, \ldots, 255\}\). The number of bits used is the bit depth (also called intensity depth or colour depth).

Increasing spatial resolution captures finer detail; increasing bit depth captures more subtle intensity gradations. Insufficient resolution causes aliasing; insufficient bit depth causes visible contouring artefacts.

Images as Functions

Mathematically, a greyscale image is a function \(f : \mathbb{R}^2 \to \mathbb{R}\), where \(f(x, y)\) gives the intensity at continuous image coordinates \((x, y)\). In practice we restrict attention to a rectangle and a bounded intensity range:

\[ f : [a,b] \times [c,d] \;\to\; [0, 1] \]A colour image is a vector-valued function with one component per colour channel. In the standard RGB representation:

\[ \mathbf{f}(x,y) = \begin{pmatrix} r(x,y) \\ g(x,y) \\ b(x,y) \end{pmatrix} \]where \(r\), \(g\), and \(b\) give the red, green, and blue channel intensities respectively.

After sampling and quantization, a digital image is represented as a 2D matrix of integers. This functional/matrix duality is central to image processing: filtering algorithms are naturally described as operations on the continuous function \(f\), but the implementation operates on the discrete matrix.

Image Data Tensors

Modern vision systems generalise the 2D image to multi-dimensional tensors:

- Colour image: A \(H \times W \times 3\) array with height \(H\), width \(W\), and 3 colour channels (RGB).

- Video: A \(H \times W \times T\) array, where \(T\) indexes frames over time. Processing video requires algorithms that exploit both spatial and temporal structure.

- Medical volumetric data: A \(H \times W \times Z\) array, where \(Z\) indexes slices along the third spatial dimension. CT and MRI scanners produce such volumes by acquiring a series of parallel 2D cross-sections.

In deep learning, images are typically represented as tensors of shape \((C, H, W)\) or \((H, W, C)\) and batched into \((N, C, H, W)\) for training.

Part II: Medical Images and Volumes

Medical imaging provides some of the most scientifically important and technically challenging applications of computer vision. Unlike natural photographs, medical images are acquired using physical phenomena other than visible-light photography, and they frequently require 3D volumetric analysis.

X-Ray Imaging

X-rays were discovered by Wilhelm Conrad Röntgen in 1895 — the “X” standing for unknown at the time — and Röntgen was awarded the first Nobel Prize in Physics in 1901 for this discovery. X-ray imaging (also known as radiography or Röntgen imaging) remained the dominant modality in medical imaging until the 1970s.

X-rays are high-energy electromagnetic radiation that penetrate soft tissue but are strongly absorbed by dense materials. Calcium in bone absorbs X-rays most strongly, producing bright white regions on a film or detector. Fat and other soft tissues absorb less, appearing in grey tones, while air absorbs almost nothing and renders as black — which is why the lungs appear dark on a chest radiograph.

Conventional X-ray imaging is fundamentally 2D projection imaging: it collapses the entire 3D body along the direction of the beam into a single 2D shadow image. Depth information along the beam axis is completely lost, though it can be partially recovered by combining images from multiple angles — the insight that eventually led to computed tomography.

Computed Tomography

The mathematical foundation for tomographic reconstruction — recovering a 2D cross-sectional image from its 1D projections — was laid by Johann Radon in 1917. The Radon transform \(g(s, \theta)\) represents the integral of a function \(f(x, y)\) along a line parameterised by offset \(s\) and angle \(\theta\). Its inversion formula (filtered backprojection) provides a practical algorithm for CT reconstruction.

The first clinical Computed Tomography (CT) scanner was developed by Godfrey Hounsfield at EMI PLC in 1972. Hounsfield shared the 1979 Nobel Prize in Physiology or Medicine with Allan Cormack, who had independently developed the mathematical theory of reconstruction. The word tomography derives from the Greek tomos (slice), reflecting the fact that CT reconstructs thin cross-sectional slices through the body rather than projections through its full depth.

The evolution of CT resolution has been remarkable: early 1970s scanners produced coarse 80×80 images with 3 mm pixels and 13 mm thick slices, requiring 80 seconds per slice. Modern multi-slice CT systems produce 512×512 images with sub-millimetre pixels and slice thicknesses below 0.5 mm, complete a full rotation in 0.5 seconds, reconstruct each slice in another 0.5 seconds, and acquire up to 16 slices simultaneously using spiral (helical) scanning. This isotropic resolution — comparable in all three spatial dimensions — enables true 3D volumetric reconstruction and multiplanar reformatting.

Despite initial scepticism (one neuroradiologist reportedly dismissed early CT images as “pretty pictures that will never replace radiographs”), CT rapidly became indispensable. It is now a cornerstone of diagnostic radiology, particularly for trauma, oncology, and neurological assessment.

Magnetic Resonance Imaging

Magnetic Resonance Imaging (MRI) exploits the quantum-mechanical property of nuclear spin. Hydrogen nuclei (protons) are abundant in biological tissue (particularly in water and fat). When placed in a strong magnetic field and excited by radiofrequency (RF) pulses, they precess at a characteristic frequency and emit a small RF signal as they return to equilibrium. Spatial encoding using magnetic field gradients allows reconstruction of a 3D image of proton density and relaxation times.

The key figures in MRI’s development were Paul Lauterbur, who demonstrated the first magnetic resonance images in Nature in 1973, and Sir Peter Mansfield, who developed the mathematics of rapid imaging sequences. Raymond Damadian built the first whole-body MRI scanner in 1977. Lauterbur and Mansfield shared the 2003 Nobel Prize in Physiology or Medicine.

Unlike CT, MRI does not use ionising radiation, making it safe for repeated examinations. It provides exceptional soft-tissue contrast, distinguishing structures that appear nearly identical on CT. The signal depends on tissue-specific relaxation times T1 and T2, which are exploited to produce different contrasts by varying the RF pulse sequence.

Advanced MRI Modalities

Beyond standard T1 and T2-weighted imaging, a range of specialised sequences have expanded MRI’s capabilities:

- Magnetic Resonance Angiography (MRA): By tuning the scanner to detect moving structures, MRA produces 3D images of blood vessels without requiring X-ray contrast agents. Gadolinium-based contrast agents can further enhance vascular conspicuity.

- Magnetic Resonance Spectroscopy (MRS): Rather than imaging anatomy, MRS measures the chemical composition of tissue — the relative concentrations of metabolites such as choline, creatine, and N-acetylaspartate. This provides information about brain chemistry and muscle metabolism that is invisible to anatomical imaging.

- Functional MRI (fMRI): Active brain regions demand increased oxygen delivery via blood flow. Oxygenated and deoxygenated haemoglobin have different magnetic properties, producing a Blood Oxygen Level-Dependent (BOLD) signal change of roughly 1%. By correlating these changes with a stimulus or task (for example, watching a flickering disk), fMRI maps brain regions involved in specific cognitive and sensory functions. It is an essential tool in both neuroscience research and pre-surgical planning.

- Diffusion-Weighted MRI (DWI) and Tractography: DWI measures the microscopic diffusion of water molecules, which in neural tissue is anisotropic — faster along the long axis of nerve fibres than perpendicular to them. Fitting a diffusion tensor to the measured diffusion in each voxel yields a field of ellipsoids encoding local fibre orientation. Tractography algorithms then trace streamlines through this field, reconstructing the large white-matter fibre tracts that connect different brain regions — results that, as one neuroanatomist observed, look “just like Gray’s Anatomy.”

- Perfusion-Weighted MRI (PWI): Measures blood flow and volume at the tissue level, important for stroke diagnosis.

Ultrasound

Ultrasound imaging uses high-frequency sound waves (typically 1–20 MHz) rather than electromagnetic radiation. A transducer emits a pulse; echoes reflected from tissue interfaces are timed to reconstruct a depth profile (A-mode) or 2D cross-section (B-mode). Ultrasound is real-time, portable, and uses no ionising radiation, making it the modality of choice for foetal imaging, cardiac assessment, and point-of-care applications. Its main limitation is poor penetration in bone and gas, and operator-dependent image quality.

Each of these modalities provides a different window into biological structure and function. Computational vision and medical image analysis draw on all of them, applying segmentation, registration, detection, and reconstruction algorithms to tasks ranging from tumour delineation to surgical guidance.

Image Pre-Processing and Low-Level Features

Overview: Low-Level Features and the Role of Filtering

Computer vision approaches the problem of image understanding at many levels of abstraction. High-level understanding concerns itself with semantic categories — what objects are present in a scene, where they are located, and what relationships exist among them. Low-level features, by contrast, operate closer to the raw signal: pixel intensities, colors, local contrast, edges, and corners. These low-level measurements are the foundation upon which all higher reasoning is built, and understanding them thoroughly is essential before tackling the more sophisticated machinery of neural networks or 3D reconstruction that appears later in the course.

The pipeline of feature extraction can be visualized as a cascade. Raw sensor output flows through a layer of low-dimensional, hand-designed low-level filters that produce filtered features — edges, corners, texture descriptors, and similar measurements. Later in the course, these hand-designed filters give way to compositions of learnable filters (deep neural networks), which produce high-dimensional features suited for semantic discrimination. Lecture 3 is concerned entirely with the first stage of this pipeline.

The mathematical apparatus central to low-level feature extraction is filtering, implemented through the operation of convolution or cross-correlation. Filtering is a form of neighborhood processing: rather than transforming each pixel in isolation, the output at any given location depends on a small spatial neighborhood around that location. This is a crucial conceptual step beyond simple point processing. As a motivating example, consider what happens when all pixels in an image are randomly shuffled: the histogram of intensities is completely unchanged, so no amount of point processing could recover the original appearance, yet any human can instantly recognize that the spatial structure — the very thing that makes the image intelligible — has been destroyed. Spatial information is indispensable, and filtering is the tool that exploits it.

Point Processing

Before neighborhood processing, the simplest form of image transformation is point processing, in which each output pixel depends only on the corresponding input pixel. Formally, if \( f \) is the original image and \( g \) the output, then

\[ g(x, y) = t(f(x, y)) \]where \( t \) is an intensity transforming function mapping the input intensity range to the output intensity range. Because every pixel is processed independently and spatial relationships play no role, point processing cannot capture or exploit any structure in the image. What it can do is globally redistribute the intensity values in useful ways.

Domain vs. Range Transformations

Image processing operations broadly fall into two families. Domain (geometric) transformations rearrange pixel locations without altering their intensities:

\[ g(x, y) = f(t_x(x, y),\; t_y(x, y)) \]where \( t_x \) and \( t_y \) are coordinate mapping functions. Flipping, rotating, or translating an image are all domain transformations. Range transformations leave positions fixed but alter intensity values, and point processing is the simplest member of this family. Filtering is a generalization of range transformations that incorporates a neighborhood of size \( \epsilon \) around each pixel:

\[ g(x, y) = \int_{|u| \le \epsilon} \int_{|v| \le \epsilon} h(u, v)\, f(x - u, y - v)\, du\, dv \]where \( h \) is the filter function. Domain transformations are the topic of the next lecture; point processing and filtering are the subjects of the present one.

Grayscale Intensity Transformations

To understand point processing concretely, consider various choices of the function \( t \) and visualize them as plots with input intensity on the horizontal axis and output intensity on the vertical axis. The identity transformation, \( t(I) = I \), leaves all intensities unchanged. The negative transformation, \( t(I) = 255 - I \), reverses the ordering of intensities so that dark regions become bright and vice versa; medical imaging sometimes employs this because certain tissue boundaries are more visible in the negative. Monotonically increasing functions that are not the identity stretch or compress different parts of the intensity range without reversing order.

Gamma Correction

A particularly important family of point-processing transforms is gamma correction, defined by

\[ t(I) = c \cdot I^{\gamma} \]where \( c \) is a scaling constant (often set to 1) and \( \gamma \) is the key parameter. When \( \gamma = 1 \) the transform is the identity. When \( \gamma > 1 \) (for example, \( \gamma = 5 \), the function effectively ignores dark input intensities and compresses them toward zero while spreading the upper range of highlights across the full output range — useful for images that are overexposed. Conversely, when \( \gamma < 1 \) (for example, \( \gamma = 0.4 \), the transform expands the lower intensity range, bringing out detail in dark regions. In practice, gamma correction is routinely applied to compensate for camera acquisition artifacts such as over- or underexposure. An overexposed image has almost all pixels concentrated in the upper half of the intensity range; applying an appropriate gamma pushes these values back toward a more uniform distribution. The key point is that no new information is created — the same image data is simply remapped to better exploit the available dynamic range, producing an image that looks more contrasty and informative to human observers.

Image Histograms and Contrast

A image histogram (or intensity histogram) provides a compact summary of how the available intensity range is used by an image. The horizontal axis represents possible intensity values (for an 8-bit grayscale image, \( 0 \) to \( 255 \), and the height of each bar indicates the count of pixels with that intensity. Normalizing by the total pixel count converts counts to probabilities, so the histogram can be viewed as the probability distribution \( p(i) \) of intensity \( I \) across the image.

Different histogram shapes encode different image qualities. A dark image concentrates counts in the lower intensity bins; a bright, overexposed image concentrates them in the upper bins; a low-contrast “gray” image has a narrow peak in the middle. A high-quality, well-exposed image uses the full range from 0 to 255, with counts spread across all bins. This breadth corresponds to high contrast — not to be confused with dynamic range, which measures the maximum minus minimum intensity. Contrast in this sense refers to the degree to which available intensity levels are actually exploited.

Contrast stretching is the practice of applying a point transformation that redistributes intensities from a narrow sub-range out across the full available range. However, one must be careful: extreme contrast stretching that maps everything below a midpoint to pure black and everything above to pure white (intensity thresholding) does achieve maximum spread but destroys fine gradations within each half, reducing the information content of the image. Moreover, because the number of distinct intensity values in an image cannot be increased by any point transformation, the stretched histogram will always contain gaps (holes) if the original image used fewer than 256 distinct values.

Histogram Equalization

The most principled automatic contrast enhancement is histogram equalization. The transformation function \( t \) is set equal to the cumulative distribution of image intensities:

\[ t(i) = \sum_{j=0}^{i} p(j) = \sum_{j=0}^{i} \frac{n_j}{n} \]where \( n_j \) is the count of pixels with intensity \( j \) and \( n \) is the total number of pixels. The theoretical justification comes from a standard result in probability: if a random variable \( I \) has an arbitrary probability density \( p(i) \) over \( [0, 1] \) and \( t(i) \) is its cumulative distribution function, then the transformed random variable \( I' = t(I) \) has a uniform distribution over \( [0, 1] \). Applied to image processing, this means that if each pixel is treated as an independent sample from the intensity distribution, histogram equalization produces a new image whose histogram is as uniform as possible — spreading intensities across the full range and maximizing contrast in the sense of uniformity of usage. This operation is available as a one-button command in most image editing software.

Window-Center Adjustment

A specialized point-processing problem arises in medical imaging. CT and MRI scanners store pixel intensities with 16-bit precision (up to 65,000 distinct values), while a standard display monitor has only 8-bit output (256 levels). Naively mapping this large range to 256 values causes many distinct tissue intensities to be collapsed into the same displayed gray level, losing diagnostic detail.

The window-center adjustment solves this by focusing the available 256 output levels on a selected sub-range of the input. The window parameter specifies the width of the input sub-range of interest; the center parameter specifies the midpoint of that window. Intensities below the window floor are clipped to black, and those above the ceiling are clipped to white, while those within the window are spread linearly across the 0–255 output range. By adjusting the window and center, a radiologist can focus on lung parenchyma, bony structures, or soft tissue in turn — each requiring different intensity sub-ranges. In the limiting case where the window width is zero, all pixels are mapped to either black or white based solely on whether their intensity falls above or below the center, producing binary thresholding.

Linear Filtering and Convolution

Motivation: Spatial Information

A fundamental insight is that pixel intensities alone, stripped of their spatial relationships, convey far less information than pixels in context. Two images with identical histograms — one a natural scene, the other its randomly shuffled version — look completely different because spatial structure has been destroyed. Filters that examine neighborhoods restore the spatial dimension to the analysis.

Linear Transforms and the Filter Matrix

To build intuition, consider a one-dimensional scan line of \( k \) pixels represented as a vector \( \mathbf{f} \in \mathbb{R}^k \). Any linear transformation of this vector can be written as \( \mathbf{g} = M\mathbf{f} \) for a \( k \times k \) matrix \( M \). The identity matrix leaves the signal unchanged. A matrix with ones in the first column copies the first element to all output positions. A subdiagonal matrix of ones performs a cyclic shift. The interesting case arises when \( M \) has a banded structure: all non-zero entries lie near the main diagonal, and — crucially — the same pattern of weights repeats in every row. Such a matrix corresponds to a linear shift-invariant (LSI) filter.

Linear Shift-Invariant Filters and the Kernel

When the matrix \( M \) has this repeated-row structure, the full matrix can be replaced by a compact kernel (also called a mask or window function) \( h \). For a 1D kernel of half-width \( k \), the filtering operation is:

\[ g[i] = \sum_{u=-k}^{k} h[u]\, f[i + u] \]The kernel \( h \) specifies the weight given to each neighbor at offset \( u \) from the center. For a simple three-tap kernel \( h = [a, b, c] \), the output at position \( i \) is \( a\,f[i-1] + b\,f[i] + c\,f[i+1] \) — a weighted average of the pixel, its left neighbor, and its right neighbor.

Two defining properties characterize this class of operations. Linearity means that \( H(\mathbf{f} + \mathbf{g}) = H\mathbf{f} + H\mathbf{g} \) — the filter applied to a sum of signals equals the sum of the filter applied to each signal separately. Shift-invariance means that \( H(S\mathbf{f}) = S(H\mathbf{f}) \) for any shift operator \( S \) — it does not matter whether you shift the signal first and then filter, or filter first and then shift. These two properties together are equivalent, in the if-and-only-if sense, to the kernel-based representation above. Any operator that is both linear and shift-invariant must be expressible as a convolution with some kernel.

A terminological caution: the word kernel in this context means a window function defining filter weights. This usage is unrelated to the algebraic term “kernel” meaning null space of a matrix, even though kernels here are often described alongside matrices. The computer vision and machine learning communities use the word in the filter sense, and one must infer meaning from context.

2D Filtering: Cross-Correlation and Convolution

Extending to two-dimensional images, a 2D image \( f[i,j] \) can be filtered by a 2D kernel \( h[u,v] \) to produce output \( g[i,j] \). The cross-correlation operation is:

\[ g[i, j] = \sum_{u=-k}^{k} \sum_{v=-k}^{k} h[u, v]\, f[i + u, j + v] \]written compactly as \( g = h \star f \). The closely related convolution operation differs by negating the indices inside \( f \):

\[ g[i, j] = \sum_{u=-k}^{k} \sum_{v=-k}^{k} h[u, v]\, f[i - u, j - v] \]written as \( g = h * f \). The difference amounts to flipping the kernel both horizontally and vertically before applying it. If the kernel happens to be symmetric — \( h[u,v] = h[-u,-v] \) — then convolution and cross-correlation are identical. In general they are not, but since one can always flip the kernel, the two operations are equivalent in practice, and practitioners often use the terms interchangeably. Convolution enjoys additional mathematical properties — commutativity and associativity, as well as clean behavior in the Fourier domain — which make it the preferred formulation in theoretical treatments. Cross-correlation, on the other hand, has a natural statistical interpretation as a similarity measure, which becomes important for template matching. Convolutional neural networks, despite their name, often implement cross-correlation internally.

Impulse Response

A useful way to characterize any linear shift-invariant filter is its impulse response: the output produced when the input is a unit impulse (a single pixel of intensity 1 surrounded by zeros). For an LSI filter, the impulse response is exactly the kernel \( h \) itself. This equivalence means the kernel can be thought of as the “fingerprint” of the filter — the pattern it stamps onto an image when it encounters a single bright point.

Common Linear Filters

Mean Filter (Box Filter)

The simplest smoothing filter is the mean filter (also called a box filter). For a window of size \( (2k+1) \times (2k+1) \), every pixel in the neighborhood receives equal weight:

\[ h[u, v] = \frac{1}{(2k+1)^2} \]For example, a \( 3 \times 3 \) mean filter has kernel entries \( \frac{1}{9} \) everywhere. Applying this filter replaces each pixel’s value with the average of its neighborhood. The primary effect is smoothing: high-frequency noise is attenuated because isolated noisy spikes are averaged with their neighbors. The primary side effect is blurring: sharp boundaries between regions, where intensity changes abruptly, become gradual transitions in the output. As the window size increases, smoothing and blurring both intensify.

The mean filter also lacks rotational invariance. Its square kernel shape responds differently to features aligned with the horizontal/vertical axes versus those oriented diagonally, producing visible grid-like (horizontal and vertical striping) artifacts in the output. This means that applying the mean filter and then rotating an image gives a different result than rotating first and then filtering — a property that is generally undesirable.

For Gaussian noise (where each pixel is corrupted by an independent additive sample from a normal distribution), the mean filter gives reasonable results at modest window sizes, though larger windows over-blur the image. For salt-and-pepper noise (random isolated pixels set to either pure black or pure white), the mean filter performs poorly: even at large window sizes, high-contrast speckles persist because their extreme values pull the local average away from the true underlying intensity.

Gaussian Filter

The Gaussian filter addresses the mean filter’s limitations by weighting contributions according to distance from the center of the kernel. The 2D Gaussian kernel is:

\[ G_\sigma(u, v) = \frac{1}{2\pi\sigma^2} \exp\!\left(-\frac{u^2 + v^2}{2\sigma^2}\right) \]where \( \sigma \) is the standard deviation, controlling the breadth of the kernel: larger \( \sigma \) produces more smoothing. A discrete approximation commonly used for a \( 3 \times 3 \) kernel is:

\[ \frac{1}{16}\begin{bmatrix} 1 & 2 & 1 \\ 2 & 4 & 2 \\ 1 & 2 & 1 \end{bmatrix} \]This kernel is denoted \( G_\sigma \) in course notation. Because the Gaussian function is circularly symmetric, the filter is rotationally invariant: applying the Gaussian filter and then rotating produces the same result as rotating and then filtering. This eliminates the axis-aligned artifacts seen with the mean filter and makes the Gaussian the standard choice for smoothing in computer vision.

The Gaussian also has the property of separability: a 2D Gaussian convolution can be implemented as two sequential 1D convolutions, one along rows and one along columns. This reduces computational cost from \( O(n^2) \) to \( O(n) \) per pixel (where \( n \) is the number of kernel taps), a significant savings for large kernels.

The parameter \( \sigma \) encodes the scale at which the image is analyzed. A small \( \sigma \) (e.g., \( \sigma = 1 \) barely smooths the image, and the filter responds to fine details including hair-thin textures. A large \( \sigma \) (e.g., \( \sigma = 7 \) suppresses all fine structure and the filter responds only to coarse, large-scale features. This scale-dependence is not a bug but a fundamental feature that reappears in the construction of the Gaussian pyramid and SIFT later in this lecture.

Non-Linear Filters: The Median Filter

While the mean and Gaussian filters are linear operations expressible as convolution, some noise types demand a fundamentally different approach. The median filter is a non-linear operation that replaces each pixel with the median intensity within its neighborhood window, rather than the mean. The median is found by sorting all values in the window and selecting the middle one.

The median filter is not a convolution — it cannot be expressed as a linear combination of neighboring pixel values — and it is a homework exercise to prove this formally. The intuition is that sorting is an inherently non-linear operation, and the result cannot be written as a weighted sum of the input values with fixed weights.

The crucial advantage of the median over the mean is its robustness to outliers. Salt-and-pepper noise corrupts only a small fraction of pixels with extreme values (0 or 255). In any sufficiently large window, these extreme values are a minority; the median simply ignores them in favor of the majority’s more typical values. In contrast, even a single outlier pulls the mean toward it proportionally. As a result, a \( 3 \times 3 \) median filter almost completely eliminates salt-and-pepper noise with minimal blurring, while a \( 7 \times 7 \) mean filter still leaves visible speckles and produces significant blur. For Gaussian noise, however, where every pixel is corrupted to a moderate degree, the mean or Gaussian filter is adequate and computationally cheaper; the median’s robustness is not needed in this case.

Image Gradients and Edge Detection

From Smoothing to Feature Extraction

The second major use of filtering is feature extraction. Rather than suppressing variation in the image, the goal is now to highlight specific structures — most importantly, edges, locations where image intensity changes rapidly. Edges encode much of the semantic and geometric information in an image: they delineate object boundaries, reveal surface markings, and support reasoning about geometry such as vanishing points.

Partial Derivatives and the Gradient

Because images are functions of two spatial variables, the appropriate mathematical tool is the gradient from multivariate calculus. For a scalar function \( f(x, y) \), the partial derivative with respect to \( x \) captures the rate of intensity change in the horizontal direction, holding \( y \) constant:

\[ \frac{\partial f}{\partial x} = \lim_{\epsilon \to 0} \frac{f(x + \epsilon, y) - f(x, y)}{\epsilon} \]and similarly for the vertical direction:

\[ \frac{\partial f}{\partial y} = \lim_{\epsilon \to 0} \frac{f(x, y + \epsilon) - f(x, y)}{\epsilon} \]The gradient at a point \( (x, y) \) is the two-dimensional vector assembling these two partial derivatives:

\[ \nabla f(x, y) = \left(\frac{\partial f}{\partial x},\; \frac{\partial f}{\partial y}\right) \]This vector points in the direction of the steepest ascent of the intensity surface. Its magnitude,

\[ \|\nabla f\| = \sqrt{\left(\frac{\partial f}{\partial x}\right)^2 + \left(\frac{\partial f}{\partial y}\right)^2} \]measures the steepness of that ascent. Where the gradient magnitude is large, the image intensity changes rapidly — these are edge locations. Where the gradient magnitude is near zero, intensity is approximately constant. The direction of the gradient is always orthogonal to the edge at that location, pointing from the darker to the brighter side.

The directional derivative in any direction \( \hat{n} \) can be obtained from the gradient by taking the dot product: \( \nabla f \cdot \hat{n} \). This is the rate of change of intensity along direction \( \hat{n} \), and it achieves its maximum when \( \hat{n} \) is aligned with the gradient itself.

Numerical Estimation: Central Differences

Since image intensities are available only at discrete pixel locations, partial derivatives must be approximated numerically. The central difference approximation is preferred for its symmetry:

\[ \frac{\partial f}{\partial x}\bigg|_{(x_i, y_i)} \approx \frac{f(x_{i+1}, y_i) - f(x_{i-1}, y_i)}{2\Delta x} \]This is equivalent to convolution with the kernel:

\[ \nabla_x = \frac{1}{2\Delta x}\begin{bmatrix} 0 & 0 & 0 \\ 1 & 0 & -1 \\ 0 & 0 & 0 \end{bmatrix} \]and an analogous kernel \( \nabla_y \) estimates the vertical derivative. That differentiation is expressible as a convolution is not a coincidence: differentiation is a linear, shift-invariant operation, so the general theory guarantees it must correspond to convolution with some kernel.

Sobel and Prewitt Operators

In practice, the simple central-difference kernel is sensitive to noise because isolated noisy pixels create large spurious differences. Standard edge detection operators — the Sobel operator and the Prewitt operator — incorporate a small amount of smoothing across the perpendicular direction to improve robustness. The Sobel operator for horizontal gradient estimation uses the kernel:

\[ S_x = \frac{1}{8}\begin{bmatrix} -1 & 0 & 1 \\ -2 & 0 & 2 \\ -1 & 0 & 1 \end{bmatrix} \]and \( S_y \) is its transpose. The Prewitt operator uses uniform weights in the smoothing direction:

\[ P_x = \frac{1}{6}\begin{bmatrix} -1 & 0 & 1 \\ -1 & 0 & 1 \\ -1 & 0 & 1 \end{bmatrix} \]Both operators estimate the gradient while averaging over a small region perpendicular to the differentiation axis, reducing the impact of noise.

Derivative of Gaussian

A more principled approach is the derivative of Gaussian filter. The gradient computation is noise-sensitive because differentiation amplifies high-frequency components of the signal, and noise is concentrated at high frequencies. The standard remedy is to smooth the image with a Gaussian before differentiating. By the associativity of convolution, these two operations can be pre-combined into a single kernel:

\[ \nabla_x (G_\sigma * f) = (\nabla_x G_\sigma) * f \]Computing \( \nabla_x G_\sigma \) once (the x-derivative of the Gaussian kernel) produces a single kernel that simultaneously smooths and differentiates. This kernel resembles a smoothed version of the simple \( [-1, 0, 1] \) differencing kernel, with values gradually transitioning from positive on one side to negative on the other. The parameter \( \sigma \) again plays the role of scale: a small \( \sigma \) produces a sharp derivative kernel responsive to fine-scale edges, while a large \( \sigma \) produces a broader kernel responsive only to prominent coarse-scale intensity transitions.

Edge Detection via Non-Maximum Suppression

Computing the gradient magnitude \( \|\nabla f\| \) at every pixel and thresholding produces a set of pixels with strong intensity changes, but these tend to form thick bands spanning several pixels perpendicular to the edge direction. To produce thin, crisp edge maps, non-maximum suppression is applied: a pixel is retained as an edge point only if its gradient magnitude is a local maximum along the gradient direction. Concretely, at each candidate pixel \( q \), the two neighbors in the direction of the gradient (points \( p \) and \( r \), interpolated if they do not fall exactly on pixel grid positions) are compared to \( q \); if \( q \) is not larger than both, it is suppressed. The result is a one-pixel-wide ridge of local maxima corresponding to the true edge locus. Combining gradient thresholding and non-maximum suppression, with adaptive hysteresis thresholding, yields the well-known Canny edge detector.

The Laplacian of Gaussian and Difference of Gaussians

Unsharp Masking

Unsharp masking is a classical image sharpening technique. If a blurred version of an image is \( G_\sigma * I \), then the information lost by blurring — the high-frequency content — is captured by the unsharp mask:

\[ U = I - G_\sigma * I \]Adding a scaled version of this mask back to the original amplifies fine detail:

\[ I_{\text{sharp}} = I + \alpha U = (1 + \alpha) I - \alpha\, G_\sigma * I \]This entire operation can be expressed as a single convolution with a kernel known as the Difference of Gaussians (DoG):

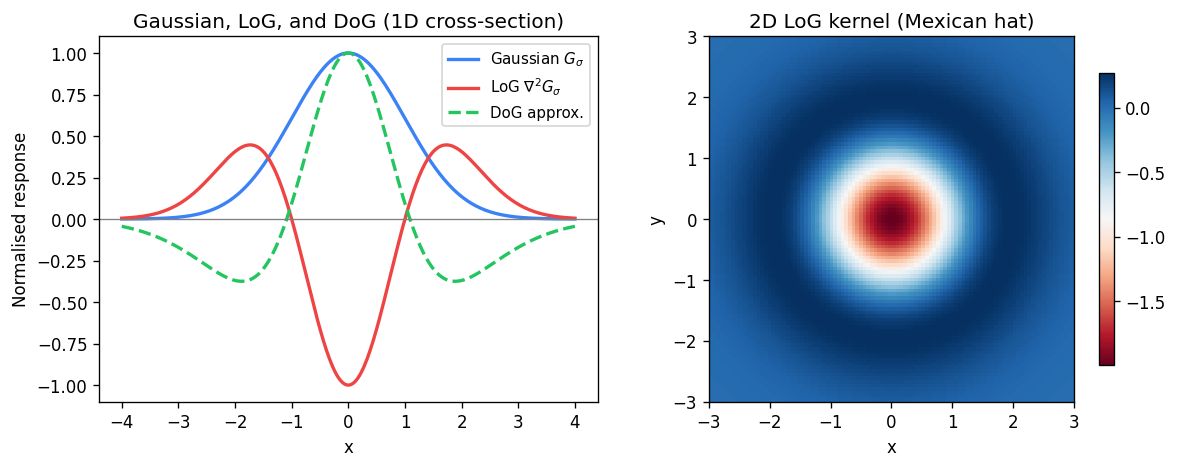

\[ \text{DoG} \approx G_\epsilon - G_\sigma \]where \( G_\epsilon \) is a very narrow Gaussian approximating a delta function (corresponding to the \( I \) term) and \( G_\sigma \) is the broader smoothing Gaussian. The DoG kernel has a characteristic appearance: a bright positive center surrounded by a negative annular ring, sometimes described as a “Mexican hat” shape.

Laplacian of Gaussian (LoG)

The Laplacian of Gaussian (LoG) is a closely related operator defined as the Laplacian (sum of second-order partial derivatives) applied to a Gaussian-smoothed image:

\[ \text{LoG} = \nabla^2 G_\sigma = \frac{\partial^2 G_\sigma}{\partial x^2} + \frac{\partial^2 G_\sigma}{\partial y^2} \]Like the DoG, it has a bright center and negative surround (or vice versa depending on sign convention). The LoG and DoG are closely approximated by each other for appropriate choices of the two Gaussian widths, and both are used as blob detectors: they respond strongly at circular intensity blobs — regions that are brighter (or darker) than their surroundings on a scale determined by \( \sigma \). The extrema (maxima and minima) of the DoG or LoG response over the image indicate locations and scales of blob-like features.

An important observation is that these kernels look visually like the patterns they are designed to detect. The derivative-of-Gaussian kernel in the x-direction resembles a vertical stripe pattern, and indeed it produces strong responses at vertical edges. A DoG kernel resembles a circular blob, and it produces strong responses at blob-like features. This visual correspondence between a kernel and the features it detects is the foundation of template matching and normalized cross-correlation.

Normalized Cross-Correlation (NCC) for Template Matching

Cross-Correlation as Similarity

Any linear filter applied to a location in an image can be interpreted as computing the dot product between the kernel \( h \) (unrolled into a vector) and the image patch \( f_t \) at that location (also unrolled). This is precisely cross-correlation. The numerical output of plain cross-correlation depends on the absolute magnitudes of the kernel and patch values, making it sensitive to illumination changes. To obtain a similarity measure that is invariant to scaling, one divides by the norms of both vectors, computing the cosine of the angle between them:

\[ \text{NCC}[t] = \frac{h \cdot f_t}{\|h\|\, \|f_t\|} = \cos\theta \]This is the Normalized Cross-Correlation (NCC). Its values lie in \( [-1, +1] \): a value of \( +1 \) means the patch is a positive scalar multiple of the template (perfect similarity); \( -1 \) means it is a negative multiple (opposite appearance); \( 0 \) means the two are orthogonal (uncorrelated).

Mean Subtraction and the Correlation Coefficient

A practical refinement is to subtract the mean value from both the template and the patch before computing the dot product and norms:

\[ \text{NCC}[t] = \frac{(h - \bar{h}) \cdot (f_t - \bar{f}_t)}{\|h - \bar{h}\|\, \|f_t - \bar{f}_t\|} \]This is equivalent to the standard Pearson correlation coefficient between the two samples. Equivalently, it can be written in terms of covariance and standard deviations:

\[ \text{NCC}[t] = \frac{\text{cov}(h, f_t)}{\sigma_h\, \sigma_{f_t}} \]Mean subtraction has two important effects. First, it removes additive intensity biases, making the similarity measure invariant to uniform brightness offsets between the template and the image patch. Second, because image intensities are always positive, the raw vectors always lie in the positive quadrant of their vector space; subtracting the mean centers them so they can point in any direction, allowing the full range from \( -1 \) to \( +1 \) to be achieved in practice.

Template matching via NCC produces a response image where local maxima indicate positions where the template best matches the underlying image content. Non-maximum suppression can be applied to these response maps to extract a sparse set of detected feature locations. As a simple but illustrative application, one can detect facial landmarks — left eye, right eye, mouth corners — by sliding templates for each part across a face image and finding local NCC maxima, then using spatial consistency constraints among the detected parts to prune false positives.

Feature Detectors

Discriminant Feature Points

For many applications — image alignment, 3D reconstruction, panoramic stitching, object recognition — one needs to establish reliable correspondences between points in two images of the same scene taken from different viewpoints. This requires discriminant feature points: locations whose appearance is sufficiently distinctive that they can be reliably identified in both images and matched correctly. Edges alone fail this criterion: along a straight edge, every local patch looks nearly identical to every other patch, and there is no way to determine which point on one edge corresponds to which point on another.

Harris Corner Detector

Corners are far more discriminant. At a corner, intensity gradients vary significantly in direction across the local neighborhood — there are multiple distinct edge directions — making the appearance unique. The Harris corner detector formalizes this intuition mathematically.

The key idea is to measure how much the image patch changes when the analysis window is shifted in each direction. Define the change function for a window \( w \) shifted by \( \mathbf{ds} = (u, v)^T \):

\[ E_w(u, v) = \sum_{x, y} w(x, y)\, [I(x + u, y + v) - I(x, y)]^2 \]where \( w(x, y) \) is 1 inside the window and 0 outside (or a Gaussian weighting). Using a first-order Taylor expansion of \( I(x+u, y+v) \) around \( (x, y) \):

\[ I(x + u, y + v) - I(x, y) \approx I_x\, u + I_y\, v = \nabla I \cdot \mathbf{ds} \]where \( I_x = \partial I/\partial x \) and \( I_y = \partial I/\partial y \) are the image gradients. Substituting and simplifying:

\[ E_w(u, v) \approx \mathbf{ds}^T\, M_w\, \mathbf{ds} \]where \( M_w \) is the \( 2 \times 2 \) structure tensor (also called the Harris matrix or autocorrelation matrix):

\[ M_w = \sum_{x, y} w(x, y)\, \nabla I\, \nabla I^T = \sum_{x, y} w(x, y) \begin{bmatrix} I_x^2 & I_x I_y \\ I_x I_y & I_y^2 \end{bmatrix} \]This matrix is computed by summing the outer products of the gradient vector at every pixel within the window, and it is always positive semi-definite.

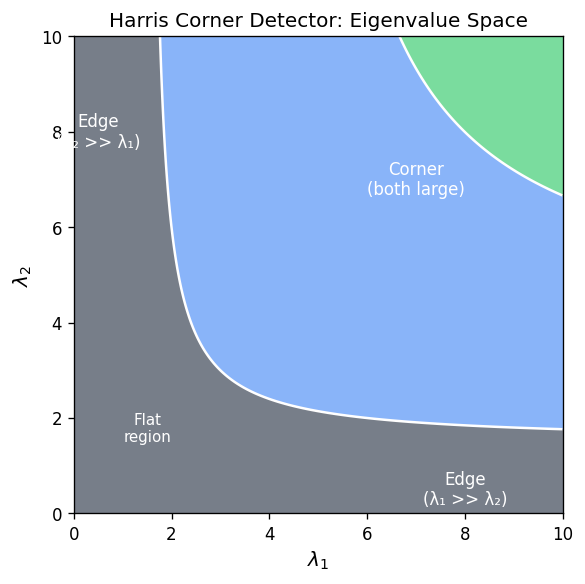

Eigenvalue Analysis

The function \( E_w(u, v) = \mathbf{ds}^T M_w \mathbf{ds} \) is a quadratic form whose isolines (level sets) are ellipses in the \( (u, v) \) plane. The shape of these ellipses is determined by the two eigenvalues \( \lambda_1, \lambda_2 \) of \( M_w \), and the orientation of the principal axes is given by the corresponding eigenvectors.

- If both \( \lambda_1 \) and \( \lambda_2 \) are small, the error \( E_w \) remains nearly zero even for large shifts in any direction: the window lies in a flat region with no texture.

- If one eigenvalue is large and the other small (say \( \lambda_2 \gg \lambda_1 \), the error grows rapidly in one direction but slowly in the other: the window lies on an edge, where sliding along the edge produces no change but crossing it produces a large change.

- If both \( \lambda_1 \) and \( \lambda_2 \) are large, the error grows rapidly in all directions: the window is at a corner, meaning the appearance changes significantly no matter which direction one moves.

The semi-axes of the ellipse are proportional to \( 1/\sqrt{\lambda_i} \), so large eigenvalues correspond to small ellipses (rapid change), and small eigenvalues correspond to large ellipses (slow change).

Harris Corner Response Measure

To avoid explicitly computing eigenvalues (which is computationally more expensive than computing determinant and trace), a common corner response function is:

\[ R = \frac{\det M_w}{\operatorname{trace} M_w} = \frac{\lambda_1 \lambda_2}{\lambda_1 + \lambda_2} \]Since \( \det M_w = \lambda_1 \lambda_2 \) and \( \operatorname{trace} M_w = \lambda_1 + \lambda_2 \), this ratio is large only when both eigenvalues are large, corresponding to a corner. Thresholding \( R > T \) and then applying non-maximum suppression yields a sparse set of corner locations.

Properties of the Harris detector:

- Rotation invariance: The eigenvalues of \( M_w \) (and hence \( R \) are invariant to image rotation, because rotating the image merely rotates the ellipse without changing its shape. Corner locations detected are therefore the same regardless of image orientation.

- Partial invariance to affine intensity changes: Adding a constant bias to all intensities does not affect gradients, so \( R \) is invariant to bias. Multiplying all intensities by a gain \( a \) scales the gradients and hence scales \( R \), but does not change the locations of local maxima — only potentially moving some above or below the detection threshold.

- Non-invariance to image scale: At a fine scale, a curved boundary segment may appear to be an edge; at a coarser scale (after zooming out), the same segment exhibits the abrupt directional change characteristic of a corner. The window size needed to detect the corner correctly depends on the scale at which it occurs, and Harris corners with a fixed window size will miss corners at other scales.

Scale-Invariant Feature Detection and the Gaussian Pyramid

The Scale Problem

The Harris detector’s non-invariance to scale is a fundamental limitation for matching across images taken at different distances or zoom levels. The solution is to search for features not just at a fixed scale but across a range of scales, selecting for each candidate location the scale at which the feature response is locally maximal. This adds scale as a third coordinate alongside \( x \) and \( y \).

Gaussian Pyramid

Rather than enlarging the filter kernel (which is computationally expensive), the standard approach is to downsample the image while keeping the kernel fixed. The Gaussian pyramid is a multi-resolution representation constructed by repeated blur-and-downsample operations:

- Start with the original image at full resolution.

- Blur with a Gaussian and downsample by a factor of 2, producing a half-resolution image.

- Repeat, producing progressively coarser levels.

Each level of the pyramid corresponds to a different scale. Because each successive image has half the resolution, the total storage cost of the pyramid is only about \( 4/3 \) times that of the original image (geometric series). Convolving each pyramid level with the same DoG kernel is equivalent to convolving the original image with a DoG kernel scaled up by the pyramid factor — a much more efficient approach.

The Gaussian pyramid bears a resemblance to the encoder portion of segmentation convolutional neural networks: both apply repeated convolution and downsampling to process an image at multiple resolutions. This connection is not coincidental and will resurface when CNNs are covered later in the course.

Scale-Space Feature Detection with DoG

To find blob-like features at their natural scale, convolve the DoG kernel with each level of the Gaussian pyramid, producing a 3D volume indexed by position \( (x, y) \) and scale \( s \). Local maxima in this 3D volume — points that are larger than all their neighbors in position and in scale — are reported as detected features. Each feature is characterized by its location \( (x, y) \) and its scale \( s \), often visualized as a circle whose center is the feature location and whose radius is proportional to the feature scale.

Feature Descriptors

Detection vs. Description

Detecting a feature point gives its location and scale. To match feature points across images, one also needs a descriptor: a compact representation of the local image appearance that is stable across viewpoint and illumination changes, and distinctive enough to avoid incorrect matches. A good descriptor must be both invariant (stable to common transformations) and discriminant (different for different features).

The hierarchy of discriminancy, from least to most:

- Color (RGB): Very few distinct colors; almost any two nearby patches can be confused. Non-discriminant.

- Edges (gradient magnitude extrema): More distinctive but still insufficient — patches at different positions along the same edge look identical.

- Corners: Highly distinctive in location; the basis for MOPS and SIFT descriptors.

Rotation Invariance via Dominant Gradient

A key invariance requirement is robustness to rotation: if the same scene is photographed from a rotated viewpoint, descriptors for the same physical point should be similar. The standard trick is to define a canonical orientation for each feature based on the dominant gradient direction in its neighborhood.

The dominant gradient is computed at a coarser scale (by blurring the image heavily) to get a stable, noise-robust estimate of the overall intensity gradient direction. This direction is then used to rotate the analysis patch into a consistent canonical frame. If the same feature is observed from a rotated camera, both detections will estimate the same (rotated) dominant gradient and both will canonically orient their patches accordingly — so the resulting patches, though extracted from differently oriented images, will be aligned and comparable.

Multi-Scale Oriented Patches (MOPS)

MOPS (Multi-Scale Oriented Patches) is a complete feature description system built on Harris corners. For each detected Harris corner at scale \( s \) and location \( (x, y) \):

- Scale and location come from the Harris detector applied at each pyramid level.

- Orientation is determined by the dominant blurred-gradient direction in the neighborhood, making the descriptor rotation-invariant.

- Descriptor vector: A square image patch of size proportional to \( 5s \times 5s \) pixels is sampled around the feature center, aligned with the canonical orientation. This patch is resampled to a standard \( 8 \times 8 \) grid using grayscale intensities.

- Normalization: The 64 grayscale values are normalized by subtracting the mean and dividing by the standard deviation within the patch: \( I' = (I - \mu)/\sigma \). This achieves bias/gain invariance: if the same patch is observed under different uniform lighting (additive bias \( I \to I + b \) or multiplicative gain (\( I \to aI \), the normalized descriptor is unchanged.

Matching is performed by comparing descriptors at the same scale: a feature at scale \( s \) in one image is matched against features at scale \( s \) in another, using Euclidean distance between descriptor vectors as the matching score.

SIFT (Scale-Invariant Feature Transform)

SIFT (Scale-Invariant Feature Transform), introduced by David Lowe, is arguably the most widely cited paper in the history of computer vision and remains a benchmark for hand-crafted feature descriptors.

Detection: SIFT uses DoG extrema in the Gaussian pyramid — the same mechanism described above for scale-space feature detection — to find interest points at their natural scale. Like MOPS, each detected point has a location \( (x, y) \) and a scale \( s \).

Orientation assignment: Like MOPS, SIFT establishes a dominant orientation from the distribution of gradient orientations in the neighborhood, computed from the Gaussian-smoothed image at the detection scale. Typically, a histogram of gradient orientations is built from the neighborhood, with each gradient contribution weighted by its magnitude and by a Gaussian distance weight. The peak of this histogram defines the canonical orientation. Multiple peaks above 80% of the maximum can produce multiple descriptors for the same keypoint, increasing robustness.

Descriptor construction: The distinctive element of SIFT is its descriptor. Rather than using raw intensity values like MOPS, SIFT uses gradient orientation histograms. The neighborhood around the feature is divided into a \( 4 \times 4 \) grid of sub-regions. Within each sub-region, an 8-bin histogram of gradient orientations is computed, with gradient magnitudes providing the voting weights. Concatenating these \( 4 \times 4 \times 8 = 128 \) values produces the 128-dimensional SIFT descriptor. This representation is much more robust to small geometric distortions, local illumination variations, and minor viewpoint changes than raw intensity patches. The descriptor is subsequently L2-normalized and then clamped at 0.2 to reduce sensitivity to non-linear illumination effects, and renormalized.

The use of gradient orientation histograms gives SIFT invariance to intensity shifts and gains (since gradients are invariant to additive offsets), and the histogramming provides robustness to small spatial displacements. The result is a descriptor that is highly discriminant and stable across a wide range of photometric and geometric changes, which explains why SIFT was for many years the de-facto standard for feature-based image matching in tasks such as panoramic stitching, 3D reconstruction, and object recognition.

Summary

This lecture has covered the full pipeline from raw pixel values to sophisticated, matchable feature descriptors:

Point processing (gamma correction, histogram equalization, window-center adjustment) modifies pixel intensities independently to improve visual quality or adapt dynamic range.

Linear filtering via convolution or cross-correlation extracts neighborhood-based features. The mean filter smooths by uniform averaging; the Gaussian filter smooths with rotational invariance and explicit scale control through \( \sigma \). The derivative-of-Gaussian filter simultaneously smooths and differentiates, producing gradient estimates robust to noise. Sobel and Prewitt operators are practical approximations that incorporate local smoothing.

Non-linear filtering via the median filter handles impulsive (salt-and-pepper) noise more robustly than any linear filter, at the cost of no longer being expressible as a convolution.

Image gradients provide the fundamental low-level features for edge detection. The gradient vector at each pixel points in the direction of steepest intensity ascent; its magnitude quantifies edge strength. Non-maximum suppression thins thick edge bands to one-pixel-wide ridges. The Laplacian of Gaussian (LoG) and Difference of Gaussians (DoG) extend differentiation to second-order operators that detect blob-like structures.

Normalized Cross-Correlation unifies filtering with template matching, providing a statistically grounded similarity measure — the Pearson correlation coefficient — between a template and image patches at all locations.

Feature detectors identify discriminant locations for reliable cross-image matching. The Harris corner detector uses the eigenvalues of the structure tensor \( M_w \) to classify image patches as flat, edge, or corner. Its corner response function \( R = \det M_w / \operatorname{trace} M_w \) is rotation-invariant but scale-dependent.

Scale-invariant detection via the Gaussian pyramid finds features at their natural scale as local maxima in a \( (x, y, s) \) scale-space volume. MOPS adds orientation normalization and uses normalized intensity patches as descriptors. SIFT further replaces raw intensities with gradient orientation histograms, producing a robust 128-dimensional descriptor that was the state of the art in hand-crafted feature matching for over a decade.

Image Warping

Parametric Transformations

Image warping — also called a domain transformation — is distinct from the point processing (range transformations) studied in the previous lecture. Where point processing changes the intensity values of pixels while leaving their positions fixed (producing, for example, a brighter image whose geometric structure is unchanged), image warping moves pixels to new locations while preserving their intensity values. Formally, if \( f \) is the source image and \( T \) is a coordinate transform, then point processing produces \( g(\mathbf{x}) = T(f(\mathbf{x})) \), whereas image warping produces \( g(\mathbf{x}) = f(T(\mathbf{x})) \). The domain of the image is changed; the range (the set of intensity values) is merely rearranged.

This lecture focuses exclusively on parametric (global) warps — transformations that are described by a small, fixed number of parameters and that apply the same coordinate-changing rule to every pixel in the image. The word “global” emphasizes this uniformity: a single mathematical formula, controlled by just a handful of numbers, dictates the fate of every point. The simplest parametric warps use only four parameters (a 2×2 matrix), and even the most general class covered here — the projective transformation or homography — requires only eight independent parameters. This economy of description stands in sharp contrast to an arbitrary pixel permutation, which would require storing one pair of coordinates for every pixel in the image.

The full family of parametric warps studied here forms a nested hierarchy: linear transformations (scale, rotation, shear, reflection) are embedded within affine transformations (which add translation), which are in turn embedded within projective transformations. Each step up the hierarchy adds degrees of freedom and relaxes geometric invariants. The practical motivation for understanding this hierarchy is that the simplest transformation sufficient for a given task should always be preferred — simpler models are easier to estimate, are less prone to overfitting, and have faster inverses.

Linear Transformations: Scaling, Rotation, Shear, and Reflection

A 2D linear transformation maps every point \( \mathbf{p} = (x, y)^T \) to a new point \( \mathbf{p}' = (x', y')^T \) by matrix multiplication with a 2×2 matrix \( M \):

\[ \begin{pmatrix} x' \\ y' \end{pmatrix} = M \begin{pmatrix} x \\ y \end{pmatrix} = \begin{pmatrix} a & b \\ c & d \end{pmatrix} \begin{pmatrix} x \\ y \end{pmatrix}. \]The four entries of \( M \) constitute the four parameters of the transformation. A key property shared by all linear transformations is that the origin always maps to the origin: setting \( (x, y) = (0, 0) \) always yields \( (x', y') = (0, 0) \).

Scaling is the simplest non-trivial example. A uniform scaling by factor \( s \) multiplies both coordinates equally; non-uniform scaling uses a diagonal matrix with independent scale factors \( s_x \) and \( s_y \) along each axis:

\[ S = \begin{pmatrix} s_x & 0 \\ 0 & s_y \end{pmatrix}. \]The inverse is obtained by reciprocating the diagonal entries. When \( s_x \neq s_y \) the transformation changes the aspect ratio of the image, stretching it along one axis relative to the other.

2D rotation about the coordinate origin by angle \( \theta \) (counterclockwise) is derived by expressing a point in polar form. If a point has polar coordinates \( (r, \phi) \), so that \( x = r\cos\phi \) and \( y = r\sin\phi \), then after rotation by \( \theta \) it becomes \( x' = r\cos(\phi+\theta) \) and \( y' = r\sin(\phi+\theta) \). Expanding with the angle-addition identities and substituting back:

\[ x' = x\cos\theta - y\sin\theta, \qquad y' = x\sin\theta + y\cos\theta. \]In matrix form this is the rotation matrix:

\[ R(\theta) = \begin{pmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{pmatrix}. \]Although \( \sin \) and \( \cos \) are nonlinear functions of the parameter \( \theta \), the mapping itself is still linear in \( (x, y) \) — each output coordinate is a linear combination of the input coordinates. Rotation matrices have only one genuine degree of freedom (the angle \( \theta \), even though the matrix has four nonzero entries. A crucial property is that the inverse of a rotation matrix equals its transpose: \( R^{-1} = R^T \). This holds because rotating by \( -\theta \) reverses the rotation, and substituting \( -\theta \) into the formula for \( R \) yields exactly the transpose.

Shear is a transformation that slides points parallel to one axis by an amount proportional to their coordinate along the other axis. A horizontal shear is written:

\[ \begin{pmatrix} x' \\ y' \end{pmatrix} = \begin{pmatrix} 1 & sh_x \\ 0 & 1 \end{pmatrix} \begin{pmatrix} x \\ y \end{pmatrix}, \]so that \( x' = x + sh_x \cdot y \) while \( y' = y \) is unchanged. Points on the same horizontal scan line (same \( y \) all shift by the same amount, but points at different heights shift differently. This is not a rotation: although lines through the origin map to lines at a different angle, the individual points translate horizontally rather than rotating around the origin.

Reflection is represented by diagonal matrices with negative entries. Mirroring about the \( y \)-axis negates only the \( x \)-coordinate:

\[ \begin{pmatrix} -1 & 0 \\ 0 & 1 \end{pmatrix}, \]while point reflection through the origin negates both coordinates with \( \text{diag}(-1, -1) \).

A fundamental result characterizes the complete geometric scope of 2×2 linear transformations: any linear transformation is some combination of scaling, rotation, shear, and reflection — and conversely, any such combination is linear. This can be made precise using the singular value decomposition (SVD): every 2×2 matrix \( A \) factors as

\[ A = R(\theta) \begin{pmatrix} \lambda_1 & 0 \\ 0 & \lambda_2 \end{pmatrix} R(-\phi), \]where the two rotation matrices define a pair of orthogonal coordinate axes, and the diagonal matrix performs a (possibly anisotropic) scaling along those axes. The scalars \( \lambda_1, \lambda_2 \) can be negative, which corresponds to a reflection. The overall area scaling factor is \( |\det A| = |\lambda_1 \lambda_2| \), and this factor is the same everywhere in the plane — ratios of areas are preserved under any linear transformation.

Key properties shared by all 2D linear transformations are: the origin maps to the origin; lines map to lines; parallel lines remain parallel; ratios of lengths along parallel lines are preserved; ratios of areas are preserved; and the set of linear transformations is closed under composition (the product of two 2×2 matrices is another 2×2 matrix).

What linear transformations cannot do: translation. The transformation \( x' = x + t_x, \; y' = y + t_y \) shifts every point by the fixed vector \( (t_x, t_y) \). It is not a linear transformation because \( T(0, 0) = (t_x, t_y) \neq (0, 0) \), violating the requirement that the origin maps to the origin. No 2×2 matrix can represent translation. This limitation motivates the introduction of homogeneous coordinates.

Linear Transformations as Space Deformation and Change of Basis

It is worth noting two complementary interpretations of the equation \( \mathbf{q} = M\mathbf{p} \). In the active (space deformation) interpretation, the matrix moves a point \( \mathbf{p} \) to a new location \( \mathbf{q} \) within a fixed coordinate system. In the passive (change of basis) interpretation, the columns of \( M \) are treated as new basis vectors \( \mathbf{u} \) and \( \mathbf{v} \), and the equation expresses that the coordinates \( (4, 3) \) (for example) in the new basis \( \{\mathbf{u}, \mathbf{v}\} \) correspond to the point \( \mathbf{q} \) expressed in the original basis \( \{\mathbf{i}, \mathbf{j}\} \). The inverse matrix \( M^{-1} \) then converts back — its columns play the role of \( \mathbf{i} \) and \( \mathbf{j} \) expressed in the \( \{\mathbf{u}, \mathbf{v}\} \) system. When both bases are orthonormal, the matrix \( M \) reduces to a rotation (or rotoreflection).

Homogeneous Coordinates

To include translation within the framework of matrix multiplication, we adopt homogeneous coordinates. A 2D point \( (x, y) \) is represented by a 3-vector \( (x, y, 1)^T \), or more generally by any scalar multiple \( (wx, wy, w)^T \) for \( w \neq 0 \). The rule for converting back to Cartesian coordinates is to divide the first two components by the third: the homogeneous triple \( (X, Y, W) \) represents the 2D point \( (X/W, \; Y/W) \). The triple \( (0, 0, 0) \) is forbidden since it yields \( 0/0 \).

This representation is deliberately redundant: infinitely many triples represent the same 2D point, since scaling all three components by any nonzero constant \( \lambda \) leaves the ratio unchanged. For instance, \( (2, 1, 1) \), \( (4, 2, 2) \), and \( (6, 3, 3) \) all represent the Cartesian point \( (2, 1) \).

With this representation, translation becomes a linear operation. Setting \( w = 1 \) and multiplying by the 3×3 translation matrix:

\[ \begin{pmatrix} 1 & 0 & t_x \\ 0 & 1 & t_y \\ 0 & 0 & 1 \end{pmatrix} \begin{pmatrix} x \\ y \\ 1 \end{pmatrix} = \begin{pmatrix} x + t_x \\ y + t_y \\ 1 \end{pmatrix}, \]which corresponds to the translated Cartesian point \( (x + t_x,\; y + t_y) \).

A second major advantage of homogeneous coordinates is that they give a finite representation to points at infinity. As the third coordinate \( w \to 0 \) with \( (x, y) \) held fixed, the Cartesian point \( (x/w, y/w) \) recedes to infinity along the ray in direction \( (x, y) \). The homogeneous triple \( (x, y, 0) \) — perfectly representable with ordinary floating-point numbers — encodes this point at infinity. This is the 2D analogue of the way \( \pm\infty \) extend the real line; here we get a whole circle’s worth of “directions at infinity” added to the Euclidean plane. The resulting space is the projective plane \( \mathbb{P}^2 \).

Affine Transformations