CS 486: Introduction to Artificial Intelligence

Alice Gao

Estimated study time: 5 hr 2 min

Table of contents

Introduction to AI and CS 486/686

Applications of Artificial Intelligence

What is artificial intelligence? Artificial Intelligence is a hard term to define, but before we formally define it, it is best to look at some applications of AI to get a feeling of what it is. Due to the limited time of this course, we cannot touch upon every possible application of AI — there are too many applications everywhere in our daily lives. This introduction will focus mostly on games.

What is the state of the art of AI? The grand goal of AI is to build a general intelligence agent. Unfortunately, there has not been much success towards this goal, but there has been a lot of progress made in restricted domains. We will go through a few examples to show the kind of progress that has been made.

Checkers

Checkers can be thought of as a simplified version of chess. The goal of the game is to capture or block all of your opponent’s pieces. On a checkerboard, there are around 1020 possible positions if we are able to put the pieces in all possible positions.

Marion Tinsley was the world champion of checkers from 1950 to 1990. During his entire 40 year span, he only lost a total of 5 games. It was said that Tinsley was “to checkers what Leonardo da Vinci was to science, what Michelangelo was to art and what Beethoven was to music.”

Jonathan Schaeffer, a professor at the University of Alberta, worked on checkers from 1988 to 2007. He developed a program called Chinook to play checkers, which uses search and reinforcement learning.

Chinook and Marion Tinsley played two matches in total. The first one was in 1992. Chinook defeated Tinsley in two games, but had to resign due to an error. In 1994, Schaeffer invited Tinsley to play another match against Chinook. They played six games in total, and all of them were draws. Tinsley had a stomach ache, and eventually had to go to the hospital. He withdrew from the match, and Chinook officially became the first computer program in history to win a human world championship. However, Schaeffer was disappointed since Tinsley never really lost a match to Chinook, and soon after, Tinsley died from pancreatic cancer.

Schaeffer decided that if he was not going to be able to beat the world champion, he was going to beat the game. He spent the next few years working on Chinook, and in 2007, he published an article in Science titled “Checkers is solved.”

“From the end of the Tinsley saga in ‘94–‘95 until 2007, I worked obsessively on building a perfect checkers program. The reason was simple: I wanted to get rid of the ghost of Marion Tinsley. People said to me, ‘You could never have beaten Tinsley because he was perfect.’ Well, yes, we would have beaten Tinsley because he was only almost perfect. But my computer program is perfect.”

— Jonathan Schaeffer

Assuming that both players play checkers perfectly, the player who goes first has a strategy to guarantee a draw. This tells us that the game of checkers is quite difficult — there is no strategy to guarantee a win if both players can play perfectly.

Chess

Compared to checkers, chess is a much more complex game. Where checkers has 1020 possible positions, chess has 10100.

In 1980, a Carnegie Mellon University professor, Edward Fredkin, created a prize of $100,000 for any computer scientist who could create a computer that could beat the best human chess player in the world. This prize was claimed 17 years later by IBM researchers in 1997. IBM claimed this prize by developing a program called Deep Blue. Deep Blue uses heuristic search to play the game of chess, and it could look 7 or 8 moves ahead in a game.

In 1996, Deep Blue played Gary Kasparov who was the world champion. Unfortunately for IBM, Deep Blue lost this match, but in 1997, after Deep Blue was heavily upgraded, it beat Kasparov in a six-game match.

Go

Go is a game that originated from China. It has very simple rules — you play by putting black and white stones on the board — but it is a very difficult game to master. Where checkers has 1020 possible positions and chess has 10100 possible positions, Go has 10360 possible positions.

AlphaGo was developed by Google DeepMind, and it uses search and deep neural networks to try to solve the game of Go. In March 2016, AlphaGo beat Lee Sedol, a world-renowned Go player, in a match with five games. Lee Sedol was able to win only one of the five games. In March 2017, AlphaGo also beat another player named Ke Jie who at the time was the current world number one ranking player.

Poker

Checkers, chess, and Go are all games of complete information. For games of complete information, all the information about the game is in front of the players. The only thing that is needed to win is to look ahead more moves than the opponent. With poker, the players cannot see the cards of the opponents. This is a game of imperfect information, so this uncertainty has to be modelled in some way.

There are two main difficulties in poker compared to checkers, chess, and Go. The first is uncertainty — we must model the opponents and try to guess what their cards are. The second difficulty is that poker is not a one-shot game. When a player is playing one hand versus another hand, the decisions are not independent. In contrast, in Go, playing one game after another, the two games are completely independent. For poker, the end goal is to maximize the stack of chips over multiple games, requiring considerations for long-term payoff.

Two research teams are closely involved in developing programs to play poker. Michael Bowling leads the team from the University of Alberta, and Tuomas Sandholm leads the team from Carnegie Mellon University.

The University of Alberta team recently tackled both limit and no-limit poker. With limit poker, every time a player makes a bet, there is an upper limit on how much the player can bet. Limit poker is a much easier problem to solve than no-limit poker. Michael Bowling’s team was able to develop a program called Cepheus which plays essentially perfect games of heads-up limit hold-em poker. The team also tackled heads-up no-limit poker and developed a program called DeepStack which defeated professional poker players at heads-up no-limit Texas hold’em.

The Carnegie Mellon University team developed a program called Libratus which defeats professionals in no-limit poker. In 2017, from Jan 11–31, Libratus spent 20 days playing against four top human poker players. They played a total of 120,000 hands, and Libratus was able to defeat all four human players.

Jeopardy!

Jeopardy! is a popular TV game show, where Alex Trebek is the host and the players answer questions by category. Watson, developed by IBM, is a program named after its founder, Thomas Watson. Watson played a match with Ken Jennings (who had the longest unbeaten run on Jeopardy: 74 winning appearances) and Brad Rutter (who won the biggest prize ever on the show: $3.25 million) in 2011. Watson won a total of $77k, leaving Rutter with $21k and Jennings with $24k.

How did Watson become so good at playing this game? The game show is happening in real time, so Watson needed very advanced capabilities in natural language processing and machine learning. Watson cannot just go on the internet and search for the answers — that would be considered cheating. Instead, Watson needed to understand natural language, respond very quickly, and go through its own knowledge base to find the answer and express it in natural language. After Watson proved that it could beat the top human players, the program was repurposed for healthcare.

Other Applications

There are many other applications of AI, like autonomous cars, FCC spectrum auctions, vacuum robots, spam filtering, automated planning and scheduling for transportation during the Persian Gulf Crisis of 1991, and automated phone systems.

Topics in CS 486/686

This course is a broad and shallow course, introducing a large number of topics in AI with limited time to talk about each one. However, after taking this course, you should have a broad understanding of AI. This course prepares you to explore some topics in more depth by taking other courses or by learning more on your own.

The topics covered in the 24 lectures of this course are organized into five main units:

Intro to AI and the Course — Lectures 1 and 2 describe some applications of AI, introduce the components of this course, and discuss several definitions of AI.

Search — Lectures 2 through 5 cover uninformed search, heuristic search, constraint satisfaction problems (CSP), and local search algorithms.

Supervised Learning — Lectures 6 through 9 cover an introduction to machine learning and decision trees, followed by two lectures on neural networks. Decision trees are simple and intuitive, whereas neural networks are complex and like black boxes.

Reasoning Under Uncertainty — Lectures 10 through 15 cover probabilities, independence and Bayesian networks, the variable elimination algorithm, and two lectures on hidden Markov models.

Decision Making Under Uncertainty — Lectures 16 through 21 cover decision theory and decision networks, Markov decision processes (solved using value iteration and policy iteration), and passive and active reinforcement learning algorithms.

Multi-agent Systems — Lectures 22 and 23 cover game theory concepts we can use to determine our best actions in a multi-agent setting.

Definitions of AI and Uninformed Search

Definitions of Artificial Intelligence

There are many ways of defining artificial intelligence. We will follow the four definitions given in the book Artificial Intelligence: A Modern Approach by Peter Norvig and Stuart J. Russell. The four definitions are arranged in a table:

| Human-based | Rationality-based | |

|---|---|---|

| Thinking | Cognitive Modeling: Systems that think like humans | Laws of Thought: Systems that think rationally |

| Acting | Turing Test: Systems that act like humans | Rational Agent: Systems that act rationally |

The definitions on the left and the definitions on the right differ by how to measure the performance of the system being developed. Should performance be measured against humans? Or should performance be measured against rationality, which is an ideal concept of intelligence that can be developed mathematically?

The definitions on the top and bottom differ by what kinds of things to care about. Should we care about how the systems think and how they reason? Or should we care about how the systems act and behave? The difference is that thinking and reasoning is something that goes on internally in a system, whereas behaviours and actions are things that can be observed.

Cognitive Modeling

The first definition is called the cognitive modelling approach. For this definition, we want to develop a system that thinks like a human. We might use humans as a benchmark because in the real world, there are not many examples of intelligence, and humans are one of the few we do have.

How do we figure out how humans think? We could try to examine our own thoughts through introspection. We could also conduct psychological experiments: bring people into a lab and observe what they do, and try to infer what kind of thinking and reasoning process led to their behaviour. We also now have technology like MRI which can be used to observe the brain in action.

This idea led to the development of a big area of study called cognitive science. The main purpose of this area is to develop a theory of the mind using AI modelling and by conducting psychological experiments.

Turing Test

The second definition is called the Turing test approach. For this definition, we want to develop a system that acts like humans.

The Turing test was an idea proposed by Alan Turing, the father of computer science, around the 1950s. The idea is as follows: we have an interrogator that is communicating with an entity that could be a human or a computer program. They communicate via a text interface, and the interrogator does not know who they are talking to. The purpose of the entity is to prove that it is intelligent. If the entity is able to behave in such a way that the interrogator cannot distinguish the entity from the human, then the entity passes the Turing test and is considered intelligent.

Some argue that the Turing test is not useful since it gives us a way to recognize if something is intelligent, but does not give us a way to realize intelligence — it does not tell us how to build a system that can pass the test. On the other hand, the idea of the Turing test gave rise to a lot of important areas of AI. To pass a Turing test, an entity needs to: understand natural language (NLP), represent and store knowledge (knowledge representation), reason and perform inference, learn (machine learning), perceive objects (computer vision), and move and manipulate objects (robotics).

Laws of Thought

The third definition is called the law of thought approach. For this definition, we want to build a system that thinks rationally.

Greek philosopher Aristotle first tried to formally define what it means for something to think correctly. Aristotle defined syllogisms, which are patterns of argument structures where if given the correct premises, it is possible to draw the correct conclusions. A famous example is “All people are mortal; Socrates is a person; therefore, Socrates is mortal.”

This led to the development of the field of logic. The logicist tradition tells us to use logic to express our knowledge: encode all the objects we have in the world, and then encode their relationships as well. However, there are problems with this approach. It is often difficult to express things in logic, and even if we could encode all knowledge in logic, searching through all of these statements would be incredibly slow and impractical.

Rational Agent

The fourth definition is called the rational agent approach. For this definition, we want to build a system that acts rationally. The word “agent” comes from a Latin word that means “to do” — an agent is something that acts.

A rational agent acts to achieve the best outcome in a world without uncertainty. In a world with uncertainty, a rational agent acts to achieve the best expected outcome, where the expectation is taken over our uncertainty. A rational behaviour is the creation and pursuit of goals. The agent should operate autonomously, be able to perceive the environment, and be able to learn and adapt to changes.

Which Definition Should We Choose?

In this course, we focus on the rational agent definition — we want to develop systems that act rationally. There are a few reasons for this choice:

Why care about behaviour over thoughts? Acting rationally is a more general idea than thinking rationally. Thinking correctly and performing inference is only one way to achieve rational behaviour. In some scenarios there is simply no correct answer or no correct way to think, yet we still have to act. As humans, we often rely on reflexes to avoid dangers — we have to act quickly in order to survive.

Why measure performance against rationality rather than humans? First, humans often act in ways that we do not consider intelligent. A book called Predictably Irrational by Dan Ariely explores how humans behave irrationally in predictable ways. Second, rationality is a well-defined concept mathematically — we can develop theoretical models, analyze them, and perform experiments. The analogy is similar to how modern aircraft was developed: rather than mimicking birds, we studied the underlying principles of aerodynamics.

Applications of Search

Before exploring the topic of search, let us look at some applications of search algorithms.

Propositional Satisfiability

Given a propositional formula, such as \( (((a \land b) \lor c) \land d) \lor (\neg e)) \), the problem of propositional satisfiability asks whether there is a way to assign truth values to the variables to make the formula true.

Interestingly, such a simple problem actually has applications in the real world. One of these applications is the FCC spectrum auction. The FCC wanted to buy back radio spectrums from TV broadcasters and sell them to telecom companies — a resource reallocation problem that ends up being in the form of a propositional satisfiability problem involving tens of thousands of variables.

Sliding Puzzles

The eight puzzle involves tiles numbered 1 to 8 arranged in a 3×3 grid. The rule is that we can slide the tiles either horizontally or vertically. The goal is to move the tiles from the initial configuration to the final configuration where the tiles are arranged from 1 to 8 in order.

Another classic sliding puzzle is called Huarong Dao, a Chinese sliding puzzle based on the Battle of the Red Cliffs between the Wei kingdom and the Shu kingdom. The story involves Cao Cao’s escape through the narrow Huarong Dao, guarded by commanders Zhang Fei, Ma Chao, Zhao Yun, Huang Zhong, and Guan Yu. The puzzle represents this battlefield, and the goal is to slide pieces horizontally and vertically until Cao Cao escapes from the bottom opening.

Missionaries and Cannibals

Three missionaries and three cannibals need to cross a river. They start on the left side, and there is one boat that can carry at most two people at a time. At any point, if there are more cannibals than missionaries on either side of the river, the cannibals will eat the missionaries. The goal is to transport all six people from the left side to the right side without letting any missionaries be eaten.

N-Queens Problem

The goal of the N-Queens problem is to place \(n\) queens on an \(n \times n\) board so that no pair of queens can attack each other. A queen attacks anything in the same row, in the same column, and in the same diagonal. This is a classic constraint satisfaction problem.

Formulating a Search Problem

Why would we want to use a search algorithm in the first place? If we are facing a difficult problem, we would want an algorithm to solve it. It is often easy to recognize a solution to these problems, but it is hard to know how to get to a solution. This is where we would want to use search. The description of a search problem may remind you of NP-hard problems — problems that are very easy to verify a solution for, but have no efficient algorithm to solve.

Components of a Search Problem

A search problem is defined by:

- A set of states

- A start state (S)

- Goal states or a goal test (G) — a Boolean function which tells us whether a given state is a goal state

- A successor (neighbour) function — an action which takes us from one state to other states

- (Optionally) a cost associated with each action

A solution to this problem is a path from the start state to a goal state, optionally with the smallest total cost. If we have costs associated with the directed arcs, our goal will be to find the path with the least total cost — in other words, the shortest path.

For a real-world problem, there are often multiple ways of formulating the problem into a search problem. The formulation you choose is going to affect how efficiently you can find a solution. First, the state definition can affect how difficult it is to implement the successor function. Second, the successor function can affect the size and structure of the search graph.

Solving a Search Problem

When solving a search problem, we often do not directly work with the search graph. Rather, we generate a search tree, which is the process by which we explore the search graph.

We start with the initial node, and at any point in time, we maintain something called a frontier. The frontier consists of a set of paths. It contains all the leaf nodes that are available for expansion. To expand a particular node, we first choose the path from the frontier which ends with the node, remove it from the frontier, then generate all the neighbours of that particular node and add them to the frontier.

This leads to the generic search algorithm:

procedure SEARCH(Graph, Start node s, Goal test goal(n))

frontier := {⟨s⟩}

while frontier is not empty do

select and remove path ⟨n₀, ..., nₖ⟩ from frontier

if goal(nₖ) then

return ⟨n₀, ..., nₖ⟩

for every neighbour n of nₖ do

add ⟨n₀, ..., nₖ, n⟩ to frontier

return no solution

There are different search strategies that produce a number of uninformed search algorithms: depth-first search, breadth-first search, iterative deepening search, and lowest-cost-first search.

Two important points about this algorithm: First, how we select a path from the frontier determines our search strategy. If we select the newest path added to the frontier, we get depth-first search. If we select the oldest path, we get breadth-first search. Second, we perform the goal test when the path is removed from the frontier, not when it is added — this is because the goal test could be costly to perform, and because there could be multiple solutions and we might want to find the path with the lowest cost.

Depth-First Search

Depth-first search (DFS) treats the frontier as a stack. A stack follows the last in, first out (LIFO) principle. DFS goes down one path until completion, and if it does not find the goal, it backtracks and goes down the next path.

Properties of Depth-First Search

We use the following quantities:

- \(b\): branching factor — the maximum number of successors that any node has

- \(m\): maximum depth of the search tree

- \(d\): depth of the shallowest goal node

Space complexity: DFS only needs to store the frontier. It needs to remember the current path it is exploring (at most length \(m\) and the alternative nodes at every level (at most \(b\) siblings per level). Therefore, the space complexity is \(O(bm)\) — linear in the maximum depth of the tree.

Time complexity: In the worst case, DFS may have to explore the entire search tree. The number of nodes grows exponentially with depth: 1 node at the top level, at most \(b\) at the next, \(b^2\) at the next, and so on up to \(b^m\) at the bottom. The time complexity is \(O(b^m)\) — exponential in the maximum depth.

Completeness: DFS is not guaranteed to find a solution if one exists, since there may be infinite paths in the search tree (for example, cycles). If the algorithm goes down a cycle, it is essentially going down an infinite path and will not terminate.

Breadth-First Search

Breadth-first search (BFS) treats the frontier as a queue. A queue follows the first in, first out (FIFO) principle. This means that removing an item from the frontier using BFS means removing the oldest node that was added to the frontier. BFS explores the search tree level by level, expanding nodes from left to right on every level.

Properties of Breadth-First Search

Space complexity: The frontier grows to the size of the level containing the shallowest goal node. At depth \(d\), there are \(b^d\) nodes. Therefore, the space complexity is \(O(b^d)\) — exponential in the depth of the shallowest goal node.

Time complexity: In the worst case, BFS will visit all the nodes up to and including depth \(d\). The time complexity is dominated by the size of level \(d\), which is \(O(b^d)\).

Completeness: Yes — since BFS explores nodes level-by-level, it will not encounter the problem of going down an infinite path forever. If a goal node exists at a finite depth, BFS is guaranteed to find it.

Optimality: BFS is guaranteed to find the shallowest goal node, again because it explores the tree level by level.

Iterative Deepening Search

The motivation for iterative deepening search (IDS) comes from the trade-off between BFS and DFS:

| BFS | DFS | |

|---|---|---|

| Space | \(O(b^d)\) exponential | \(O(bm)\) linear |

| Completeness | Guaranteed to find a solution if one exists | May get stuck on infinite paths |

IDS combines the best of both: it searches iteratively for every depth limit. Up to a particular depth, IDS does depth-first search until it reaches the depth limit. If no goal is found, the depth limit is increased and the search continues. Every time the depth limit is changed, the frontier is completely reset.

Properties of Iterative Deepening Search

Space complexity: IDS performs depth-first search for every depth limit. It will stop at depth \(d\) (the depth of the shallowest goal node). So the space complexity is \(O(bd)\) — linear in \(d\), similar to depth-first search.

Time complexity: IDS may visit all the nodes up to depth \(d\). The total number of nodes is dominated by the nodes at depth \(d\), which is \(b^d\). So roughly the time complexity is \(O(b^d)\). The exact bound is a little worse because of repeated computation — every time the depth limit increases, the earlier levels are searched again — but this repeated computation is not too bad.

Completeness: Yes — because of the depth limit, IDS will not get stuck on infinite paths like DFS.

Optimality: Yes — IDS is guaranteed to find the shallowest goal node, same as BFS.

In summary, iterative deepening search is a rare win-win situation. By combining ideas from breadth-first search and depth-first search, it gets the good properties of both algorithms while not doing much worse on other aspects.

Thought Questions and Practice Problems

- What is the difference between the search graph and the search tree?

- For the search algorithms that we consider, we only store the frontier, not the search graph nor the search tree. If we were able to store the search graph or the search tree, would our search algorithm be better? Can we avoid certain problems for storing the search graph or the search tree?

- For IDS, can we increase the depth limit by more than 1 each time?

- For IDS, whenever we increase the depth limit, can we re-use the results of the previous depth-first search instead of performing the depth-first search from scratch?

- I learned BFS and DFS in CS 341. However, the complexity analyses of these algorithms in this course look quite different from those in CS 341. Why is this the case?

- How do we derive the goal path from the expansion? We know we reached the final node but do we also keep track of how we got there?

Heuristic Search

Why Use Heuristic Search?

We have talked about a few uninformed or blind search algorithms already: depth-first search, breadth-first search, iterative deepening search, and lowest-cost-first search.

Consider the 8-puzzle problem with two states currently on the frontier. An uninformed search algorithm treats each state as a black box — it does not know anything other than the fact that these are two states and that there is a goal test it can apply. An uninformed search algorithm will specify some arbitrary order and expand accordingly.

Now consider how humans would approach this. Humans would notice that the states are quite different — one might be chaotic with all tiles out of place, while the other looks very close to the goal state. Humans have an intuition that tells us to expand the state that is much closer to the goal. Since uninformed search algorithms treat each state as a black box, they cannot find the goal state in a fast and efficient way. They go through the nodes in a systematic way, hoping to stumble upon the goal state at some point.

In contrast, a heuristic search algorithm can do much better. It makes use of something called a heuristic function — essentially an estimate of how close the current state is to the goal state. “Estimate” is the key word here. There is usually some domain knowledge which estimates that one state is closer to the goal state than another. However, the domain knowledge may not be entirely correct, hence why it is an estimate.

A search heuristic \(h(n)\) is an estimate of the cost of the cheapest path from node \(n\) to a goal node. It has the following properties:

- \(h(n)\) is arbitrary, non-negative, and problem-specific

- If \(n\) is a goal node, \(h(n) = 0\)

- \(h(n)\) must be easy to compute (without search)

Overview

In this lecture, we will discuss three related algorithms: Lowest-cost-first search, Greedy best-first search, and A* search. For these algorithms, let us consider search problems where we have costs associated with the arcs in the search graph. Every action has an associated non-negative cost, and our goal is to find the optimal solution — the path with the minimum cost.

These three algorithms are related since they use one or both of two sources of information: the cost function and the heuristic function. For all three, the frontier is implemented as a priority queue — they differ in the order in which they remove paths from the priority queue.

The Cost Function

The cost function gives us the actual cost of a path from the start state to any state \(n\). This is an evaluation of our past — it tells us how long the paths are that we have already found. This number is accurate as we have already found the path.

The Heuristic Function

In contrast, the heuristic function estimates the future. It takes a state and makes an educated guess about how far the state is to a goal state. More formally, given any state, the heuristic value is an estimate of the cost of the cheapest path from the current state to any goal state. The heuristic function is supposed to help us and make the problem easier, but search is an expensive procedure. If it is difficult to calculate the heuristic function, it defeats the purpose.

Relevant Search Algorithms

- Lowest-cost-first search (LCFS): Uses only the cost function. Removes the path with the lowest cost from the frontier. Always selects the best/cheapest path found so far.

- Greedy best-first search (GBFS): Uses only the heuristic function. Removes the path with the lowest heuristic value. Always selects the path/node that we believe is closest to a goal node.

- A*: Uses both the cost and the heuristic functions. Removes the path with the lowest sum of the cost and the heuristic values.

Technically, LCFS is an uninformed search algorithm since it does not use the heuristic function. GBFS and A* are heuristic search algorithms.

Lowest-Cost-First Search

LCFS is technically an uninformed search algorithm because it does not make use of the heuristic function. So far, the uninformed search algorithms focus on finding any solution, with very little guarantee on the quality of the solution found. DFS has no guarantee. BFS and IDS are guaranteed to find the solution with the fewest arcs. If all arcs have the same costs, this solution is optimal. If the arcs have different costs, we cannot say anything about the solution.

LCFS maintains a frontier which is a priority queue ordered by costs of the paths; it selects the path with the lowest total cost to be removed from the frontier and explores the cheapest path first. You have probably learned this algorithm in a previous course — it is also called Dijkstra’s shortest path algorithm.

Properties of LCFS

Time and space complexity are both exponential, since LCFS generates all paths whose cost is less than that of the optimal solution.

Quality of the solution found:

- Complete: Guaranteed to find a solution if one exists? Yes

- Optimal: Guaranteed to find the optimal solution? Yes

These properties hold under mild conditions: the branching factor is finite, and the cost of every arc is bounded below by some positive constant (the cost of an arc cannot be arbitrarily small). This is to make sure that there are only finitely many paths with a finite cost.

Greedy Best-First Search

The Greedy Best-First Search algorithm (GBFS) makes use of the heuristic function. In fact, it relies on the heuristic function as the only information about the states. For GBFS, the frontier is a priority queue ordered by the heuristic value. At every step, the algorithm removes the path with the smallest heuristic value. Intuitively, we are choosing the state that we think is closest to the goal, according to our heuristic function.

Properties of GBFS

Space and time complexity are exponential — the heuristic function does not improve the worst case scenarios. In the worst case, the heuristic function can be completely uninformative, making GBFS equivalent to the worst of the uninformed search algorithms.

GBFS is neither complete nor optimal. If the heuristic function is extremely inaccurate, it will cause the algorithm to go off track, explore paths that do not terminate, or explore paths that are not optimal. The high-level intuition is that GBFS relies on the heuristic function, and we have absolutely no guarantee on how good the heuristic function is.

A* Search

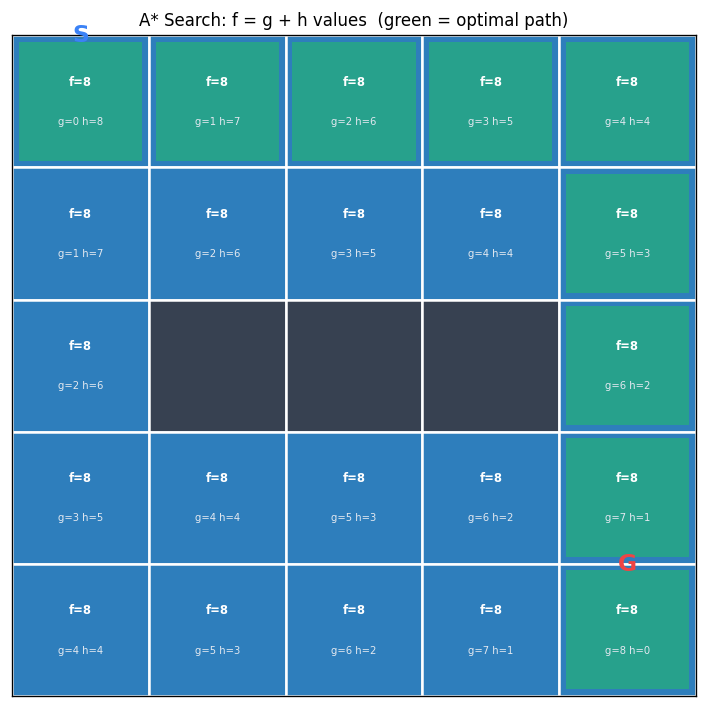

The A* search algorithm is going to do much better than the greedy search algorithm because it takes advantage of the cost information as well. A* search implements the frontier as a priority queue, ordered by \(f(n)\) where \(n\) is the current node:

\[f(n) = cost(n) + h(n)\]Think of \(f(n)\) as an estimate of the cost of the cheapest path from the start state to a goal state through the current state \(n\). Intuitively, A* combines the ideas of lowest-cost-first search and greedy best-first search.

Properties of A* Search

For complexity, A* does not do better than the other heuristic search algorithms — space and time complexity are both exponential. The heuristic function does not improve theoretical guarantees since the guarantees only consider the worst case. In practice, A* performs much better if the heuristic value is accurate.

A* is complete and optimal as long as the heuristic function satisfies a mild condition: the heuristic function must be admissible.

An admissible heuristic is one that does not over-estimate the cost of the cheapest path from the current node \(n\) to a goal node. Let \(h^*(n)\) be the cost of the cheapest path from \(n\) to a goal. Then we must have:

\[0 \leq h(n) \leq h^*(n)\]You can think of an admissible heuristic as a lower bound on the actual cost of the best path, or like an optimistic person who always tells you a cost value that is smaller than the actual cost.

Furthermore, A* is optimally efficient: given a particular admissible heuristic \(h\), no search algorithm could do better. Among all the optimal algorithms that start from the same start node and use the same heuristic \(h\), A* expands the minimum number of paths \(p\) for which \(f(p) \neq f^*\), where \(f^*\) is the cost of the cheapest path.

Designing an Admissible Heuristic

Since A* has the nice property that if the heuristic function is admissible then it is guaranteed to find an optimal solution, an important question is: how do we come up with an admissible heuristic function?

Two Heuristics for the 8-Puzzle

Manhattan distance heuristic: For every tile on the puzzle except the empty one, compute the Manhattan distance (horizontal distance plus vertical distance) from its current position to its goal position, and sum all these distances. For the initial state with tiles 5,3,_,8,7,6,2,4,1, the Manhattan distance heuristic value is 16.

Misplaced tile heuristic: Count the number of non-empty tiles that are not in their goal positions. For the same initial state, seven out of the eight numbers are not in their goal positions, so the heuristic value is 7.

A Procedure for Constructing an Admissible Heuristic

The general procedure is:

- Take the original problem and relax it. Usually the problem has some requirements expressed as constraints. Simplify the constraints or remove some constraints.

- Solve the relaxed problem optimally. The cost of the optimal solution to the relaxed problem is an admissible heuristic function for the original problem.

It is important that the relaxed problem is easy to solve — solving it should not require search.

Constructing Admissible Heuristics for the 8-Puzzle

In the 8-puzzle, there are two requirements when moving a tile from position A to position B:

- A and B must be adjacent

- The target position B must be empty

Relaxation 1: Remove the “B must be empty” constraint (keep adjacency). A tile can move from square A to square B if A and B are adjacent. The optimal solution to this relaxed problem gives us the Manhattan distance heuristic.

Relaxation 2: Remove both constraints. A tile can move from any square A to any square B. Since we can move a tile directly to its goal position in one move, the optimal cost is just the number of misplaced tiles — this gives us the Misplaced tile heuristic.

A third heuristic can be derived by removing only the adjacency constraint (keeping “B must be empty”), which leads to Gaschnig’s heuristic (Gaschnig, 1979).

Comparing Heuristic Functions

When we have multiple admissible heuristic functions, which one should we choose?

First, we must require that the heuristic is admissible, so that A* can find the optimal solution. Next, it is nice if the heuristic function produces different values for different states — this helps us distinguish different states and decide which state to expand next. If the heuristic function is constant, it has the same value for all states, which is not very useful. Finally, we want the heuristic values to be as close to the true costs as possible — the more accurate the heuristic, the better.

Dominating Heuristic

Given heuristics \(h_1(n)\) and \(h_2(n)\), we say that \(h_2(n)\) dominates \(h_1(n)\) if and only if:

- \(\forall n: h_2(n) \geq h_1(n)\) (for every state, \(h_2\) produces a weakly higher value than \(h_1\)

- \(\exists n: h_2(n) > h_1(n)\) (there exists at least one state where \(h_2\) has a strictly larger value than \(h_1\)

One consequence of this relationship is that if \(h_2\) dominates \(h_1\), then running A* with \(h_2\) will not expand more nodes than running A* with \(h_1\). By using \(h_2\), A* will spend less time exploring the search graph and find an optimal solution faster.

For the 8-puzzle, the Manhattan distance heuristic dominates the misplaced tile heuristic. For every tile that is not in its goal position, the misplaced tile heuristic adds 1, while the Manhattan distance heuristic adds at least 1 (more if the positions are not adjacent). The Manhattan distance is always greater than or equal to the misplaced tile count, and strictly greater for many states.

Pruning the Search Space

It is often a good idea to combine search algorithms with pruning strategies. For example, depth-first search will not terminate on a graph with cycles, which can be solved with cycle pruning. For breadth-first search, using multiple-path pruning can reduce the number of nodes we need to expand and make the search more efficient.

Cycle Pruning

A cycle cannot be part of an optimal solution. As soon as we detect that we are in a cycle, we should discard the path right away. Cycles can cause problems for search algorithms — DFS gets trapped in the cycle and does not terminate.

To check whether the new node is in a cycle, we check whether it is on the current path. In the worst case, we need to go through all the nodes on the path — this takes time linear in the length of the path. However, for DFS, which only remembers one path at a time, we can store all nodes in a set or hash map and check in constant time.

procedure SEARCH(Graph, Start node s, Goal test goal(n))

frontier := {⟨s⟩}

while frontier is not empty do

select and remove path ⟨n₀, ..., nₖ⟩ from frontier

if goal(nₖ) then

return ⟨n₀, ..., nₖ⟩

for every neighbour n of nₖ do

if n ∉ ⟨n₀, ..., nₖ⟩ then // cycle check

add ⟨n₀, ..., nₖ, n⟩ to frontier

return no solution

Multiple-Path Pruning

When solving a search problem, we only need to find one path to any node. Once we have found a path to a node, we can discard all other paths to the same node. We maintain an explored set that stores all the nodes to which we have found a path. When we remove a path from the frontier, we check whether the last node is in the explored set. If it is, we discard the path. Otherwise, we expand the path and add the node to the explored set.

Cycle pruning is a special case of multi-path pruning — multi-path pruning subsumes cycle pruning.

procedure SEARCH(Graph, Start node s, Goal test goal(n))

frontier := {⟨s⟩}

explored := {}

while frontier is not empty do

select and remove path ⟨n₀, ..., nₖ⟩ from frontier

if nₖ ∉ explored then

add nₖ to explored

if goal(nₖ) then

return ⟨n₀, ..., nₖ⟩

for every neighbour n of nₖ do

add ⟨n₀, ..., nₖ, n⟩ to frontier

return no solution

A Problem with Multi-Path Pruning

Multi-path pruning specifies that we should only keep the first path found to any node. But what if the first path is not the shortest path? For LCFS, multi-path pruning will not discard the optimal solution, because LCFS always explores paths in order of increasing costs — it will never find a longer path first and then a shorter path later.

For A* with an admissible heuristic, multi-path pruning can discard the optimal solution. The issue arises when the heuristic values cause A* to find a longer path to a node before a shorter path. The culprit is that admissibility allows the heuristic to overestimate the difference between the costs to reach intermediate nodes, even though it does not overestimate the total cost to the goal.

To fix this, we need a stronger condition on the heuristic. An admissible heuristic requires:

\[h(m) - h(g) \leq cost(m, g)\]for any node \(m\) and any goal node \(g\). For A* with multi-path pruning to be optimal, the heuristic must satisfy a stronger condition for any node \(m\) and any other node \(n\):

\[h(m) - h(n) \leq cost(m, n)\]If the heuristic satisfies this inequality, it is said to be a consistent heuristic. If the heuristic is consistent, then A* search with multi-path pruning is optimal.

Fortunately, most admissible heuristic functions are consistent. For example, in a multi-dimensional space, the Euclidean distance is often a consistent heuristic. It is challenging to come up with a heuristic that is admissible but not consistent. Therefore, it is often sufficient to construct an admissible heuristic and verify that it is also consistent using the definition.

Constraint Satisfaction Problems

Motivation for CSPs

In the previous lectures, the search algorithms we studied treated each state as a black box — the algorithms did not know or use the internal structure of states. This was a deliberate design choice, allowing us to use the same algorithm on many different problems. However, not knowing a state’s internal structure means the algorithm cannot detect that a partial state will never lead to a solution.

Consider the 4-queens problem. A partial state with only 2 queens might already violate the row constraint, making it impossible to reach a goal state. Knowing this internal structure allows us to prune large parts of the search tree. When we realize a partial state can never lead to a goal, we immediately backtrack and try something else.

A constraint satisfaction problem (CSP) explicitly models the internal structure of each state. These internal structures enable the development of specialized algorithms that solve CSPs much more efficiently than generic search.

Defining a CSP

A CSP is a special type of search problem. In addition to the usual components (initial state, goal test, successor function, cost function), we explicitly model the internal structure of a state with three components:

- A set \(X\) of variables: \(\{X_1, X_2, \ldots, X_n\}\)

- A set \(D\) of domains: \(D_i\) is the domain for variable \(X_i\)

- A set \(C\) of constraints specifying allowable value combinations

A solution to a CSP is an assignment of values to all the variables that satisfies all the constraints.

The 4-Queens Problem as a CSP

To formulate the 4-queens problem as a CSP, we assume there is exactly one queen per column. We define four variables \(x_0, x_1, x_2, x_3\), where the subscript \(i\) refers to the column and the value is the row position of the queen. The domain of each variable contains all four possible row positions: \(D_i = \{0, 1, 2, 3\}\) for each \(x_i\).

The constraints require that no two queens can be in the same row or the same diagonal, expressed mathematically as:

\[\forall_i \forall_j \; (i \neq j) \rightarrow ((x_i \neq x_j) \land (|x_i - x_j| \neq |i - j|))\]The first part encodes the row constraint (different row positions), and the second part encodes the diagonal constraint (the difference in row positions cannot equal the difference in column positions).

Backtracking Search

CSPs can be solved using backtracking search, a special type of depth-first search. We use an incremental formulation: start with an empty board and add queens one by one from left to right, ensuring constraints are satisfied at each step.

The search components are:

- State: one queen per column in the leftmost \(k\) columns with no pair of queens attacking each other

- Initial state: no queens on the board

- Goal state: 4 queens on the board with no pair attacking each other

- Successor function: add a queen to the leftmost empty column such that it is not attacked by any existing queen

When tracing the backtracking algorithm on the 4-queens problem, we expand nodes in lexicographical order. Starting with the empty state, we place the first queen in row 0. After eliminating attacked positions, we find the second queen can only go in row 2 or 3. Choosing row 2 leads to a dead end (no valid position for the third queen), so we backtrack and try row 3. Continuing this process, we eventually find the solution state 1,3,0,2 — queens placed in rows 1, 3, 0, and 2 for columns 0 through 3 respectively.

The key advantage of backtracking search over plain DFS is that it recognizes when a partial state cannot possibly lead to a solution, immediately backtracking and pruning large portions of the search tree.

Arc Consistency

Motivation for Arc Consistency

When solving the 4-queens problem with backtracking search, placing the first queen in row 0 leads to a dead end — the entire leftmost subtree fails. Can we detect this dead end earlier without exploring it?

The answer is yes. Arc consistency helps us achieve this. After placing the first queen in row 0, we can eliminate positions for other queens by applying the row and diagonal constraints. Eventually, we discover that no valid positions remain for some variable, proving that the assignment \(x_0 = 0\) cannot lead to a solution.

Constraint Graphs

To apply arc consistency, we represent a CSP as a constraint graph. Nodes represent variables, and undirected arcs represent constraints. For the 4-queens problem, we have four nodes and six constraints (one between every pair of variables). If there are multiple constraints between two variables, we combine them into one.

The number of variables involved in a constraint is called its arity. A unary constraint involves one variable, a binary constraint involves two. Any constraint of higher arity can be decomposed into binary constraints by introducing additional variables, so it suffices to consider only binary constraints in a constraint graph.

Definition of Arc Consistency

Given two variables \(X\) and \(Y\) with domains \(D_X\) and \(D_Y\), joined by a constraint \(c(X,Y)\), each binary constraint has two arcs: \(\langle X, c(X,Y) \rangle\) and \(\langle Y, c(X,Y) \rangle\). The first element of the tuple is the primary variable.

The arc \(\langle X, c(X,Y) \rangle\) is arc-consistent if and only if for every value \(v\) in \(D_X\), there exists a value \(w\) in \(D_Y\) such that \((v, w)\) satisfies the constraint \(c(X,Y)\). In predicate logic:

\[\langle X, c(X,Y) \rangle \text{ is arc-consistent} \iff \forall v \in D_X \; \exists w \in D_Y \; (v,w) \text{ satisfies } c(X,Y)\]An important property: arc consistency is not symmetric. If \(\langle X, c(X,Y) \rangle\) is arc-consistent, it does not necessarily mean that \(\langle Y, c(X,Y) \rangle\) is also arc-consistent. Both arcs must be verified separately.

If an arc is not consistent, we can remove values from the primary variable’s domain to make it consistent. This domain reduction never eliminates valid solutions — it only removes values that cannot participate in any solution.

The AC-3 Algorithm

The AC-3 algorithm, proposed by Alan Mackworth in 1977, uses arc consistency to reduce variable domains and bring us closer to solving a CSP. The algorithm works as follows:

procedure AC-3

S := every arc in the constraint graph

while S is not empty do

select and remove ⟨X, c(X,Y)⟩ from S

remove every value in D_X that doesn't have a

supporting value in D_Y satisfying c(X,Y)

if D_X was reduced then

if D_X is empty then return false

for every Z ≠ Y, add ⟨Z, c'(Z,X)⟩ to S

return true

The algorithm initializes a set S with every arc. It repeatedly selects and removes an arc, makes it arc-consistent by removing unsupported values from the primary variable’s domain, and if the domain was reduced, adds back arcs where the reduced variable is the secondary variable (except for the arc to Y, since reducing X’s domain cannot break arc consistency from Y’s perspective).

Why Add Arcs Back?

The most confusing part of AC-3 is why we add arcs back to S after reducing a domain. Consider an arc \(\langle X, c(X,Y) \rangle\) that was previously verified as arc-consistent. If we later remove a value from \(D_Y\), the arc \(\langle X, c(X,Y) \rangle\) may no longer be arc-consistent — the support for some value in \(D_X\) might have been the removed value in \(D_Y\). In contrast, reducing the primary variable’s domain (\(D_X\) cannot break the arc’s consistency, since fewer values need support.

Tracing AC-3 on the 4-Queens Problem

Starting with \(x_0 = 0\) and full domains for the other variables, AC-3 systematically reduces domains:

- Processing \(\langle x_1, c(x_0, x_1) \rangle\): removes 0 and 1 from \(D_{x_1}\), leaving \(\{2, 3\}\)

- Processing \(\langle x_2, c(x_0, x_2) \rangle\): removes 0 and 2, leaving \(\{1, 3\}\)

- Processing \(\langle x_3, c(x_0, x_3) \rangle\): removes 0 and 3, leaving \(\{1, 2\}\)

- Further propagation reduces \(x_2\) to \(\{3\}\), then \(x_3\) to \(\{1\}\)

- Eventually \(x_2\)’s domain becomes empty — AC-3 returns false

This confirms that \(x_0 = 0\) has no solution, matching our earlier finding with backtracking search, but detected without any search.

Properties of AC-3

Does arc processing order matter? No — any order leads to the same final domains.

Three possible outcomes:

- A domain becomes empty → no solution exists

- Every domain has exactly one value → unique solution found

- Every domain has at least one value and at least one has multiple → AC-3 is inconclusive; search or domain splitting is needed

Termination: AC-3 is guaranteed to terminate.

Complexity: With \(n\) variables, at most \(d\) values per domain, and \(c\) binary constraints, there are \(2c\) arcs. Each arc can be re-added at most \(d\) times, and checking consistency takes \(O(d^2)\). The overall runtime is \(O(cd^3)\).

Local Search

Introduction

The search algorithms discussed so far explore the search space systematically, which can be very slow if the space is too large or infinite. They also track paths from the initial state to the goal, which is unnecessary for many problems. For the 4-queens problem, the order in which queens are placed does not matter — only the final board matters.

Local search addresses both concerns by giving up on systematic exploration and path tracking. Local search algorithms explore only a portion of the search space in an ad hoc way, requiring very little memory. They can find solutions quickly on average and work well for CSPs and general optimization problems. However, they provide no guarantee that a solution will be found even if one exists.

What Is Local Search?

Local search starts with a complete assignment of values to all variables and iteratively improves the solution. This contrasts with the incremental formulation used for backtracking search, where we started with an empty state and built it step by step.

Components of a Local Search Problem

A local search problem consists of:

- A state: a complete assignment to all variables

- A neighbour relation: which states to explore next

- A cost function: how good each state is

For the 4-queens problem formulated as local search, the variables are \(x_0, x_1, x_2, x_3\) (row positions), the initial state has 4 queens in random positions, the goal is zero attacking pairs, and the cost function counts the number of pairs of queens attacking each other. Two natural neighbour relations are: (A) move a single queen to a different row in the same column, or (B) swap the row positions of two queens.

Iterative Best Improvement (Greedy Descent)

The first local search algorithm is greedy descent (also called hill climbing when maximizing a fitness function). The algorithm starts with a random state, moves to the neighbour with the lowest cost if it improves the current state, and stops when no neighbour has a lower cost.

Greedy descent can be summarized as: descend into a canyon in a thick fog with amnesia. Descending means minimizing cost. The thick fog means only seeing immediate neighbours. Amnesia means no memory of where we have been.

Properties of Greedy Descent

Greedy descent performs well in practice, often making rapid progress toward a solution. However, it is not guaranteed to find the global optimum given enough time — it can get stuck at local optima.

A local optimum is a state where no neighbour has a strictly lower cost. A global optimum is a state with the lowest cost among all states — it is a special case of a local optimum.

There are several types of problematic regions in the search landscape: strict local optima (valleys that are not the deepest), flat local optima (plateaus where all neighbours have equal cost), and shoulders (flat regions that eventually lead downhill).

Escaping Flat Local Optima

To escape flat local optima, we can allow sideway moves: the algorithm moves to a neighbour with the same cost. To ensure termination, we limit the number of consecutive sideway moves. A tabu list — a short-term memory of recently visited states — prevents cycling.

For the 8-queens problem (approximately 17 million states), greedy descent without sideway moves solves only 14% of instances in 3-4 steps. With up to 100 consecutive sideway moves allowed, the success rate jumps to 94%, though at the cost of more steps (21 on success, 64 on failure).

Greedy Descent with Randomization

Two randomization strategies help escape even strict local optima:

Random restarts: Jump to an entirely different part of the search space and run greedy descent again. This is effective for smooth landscapes with few, wide local optima — random walks would not escape the large valleys.

Random walks: Occasionally move to a higher-cost neighbour. This is effective for jagged landscapes with many small local optima — random restarts would just land in another local optimum.

Greedy descent with random restarts runs greedy descent multiple times from random initial states and keeps the best result. Given enough time, this approach finds the global optimum with probability approaching 1, since eventually the random start will be the global optimum itself.

Simulated Annealing

Simulated annealing combines optimization (exploitation) with exploration. Inspired by the physical process of slowly cooling molten metals, the algorithm starts with a high temperature and reduces it slowly.

At each step, the algorithm chooses a random neighbour. If the neighbour is an improvement, it moves there. If not, it moves with probability \(p = e^{-\Delta C / T}\), where \(\Delta C = \text{cost}(A') - \text{cost}(A) > 0\) is the cost increase and \(T\) is the current temperature.

Two key properties of this probability function:

- As \(T\) decreases, we are less likely to move to a worse neighbour (more conservative over time)

- As \(\Delta C\) increases, we are less likely to move to the neighbour (worse neighbours are riskier)

procedure SIMULATED-ANNEALING

current ← initial-state

T ← a large positive value

while T > 0 do

next ← a random neighbour of current

ΔC ← cost(next) - cost(current)

if ΔC < 0 then

current ← next

else

current ← next with probability p = e^(-ΔC/T)

decrease T

return current

The annealing schedule determines how \(T\) decreases. A popular choice is geometric cooling: multiply \(T\) by a factor close to 1 (e.g., 0.99) at each step. Starting with \(T = 10\) and multiplying by 0.99, after 500 steps \(T \approx 0.07\). If the temperature decreases slowly enough, simulated annealing is guaranteed to find the global optimum with probability approaching 1.

An analogy: imagine a hilly surface where we drop a tennis ball and want it to reach the deepest valley. Gravity pulls the ball down (optimization), but we shake the surface to bounce the ball around (exploration). Initially we shake vigorously; over time we shake less, and the ball settles into a deep valley.

Population-Based Algorithms

All local search algorithms so far maintain a single state. Population-based algorithms maintain multiple states simultaneously, allowing information sharing between parallel searches.

Beam Search

Beam search maintains \(k\) states. At each step, it generates all neighbours of the entire population and keeps the \(k\) best. When \(k = 1\), beam search reduces to greedy descent. Unlike \(k\) random restarts in parallel (where searches are independent), beam search shares information — a state in a promising region contributes many neighbours to the next population. However, beam search suffers from lack of diversity: the population can quickly concentrate in a small region.

Stochastic Beam Search

Stochastic beam search addresses the diversity problem by choosing the next \(k\) states probabilistically rather than deterministically. The probability of choosing a neighbour is proportional to its fitness. This mimics natural selection — neighbours are like offspring, and survival depends on fitness. Stochastic beam search is analogous to asexual reproduction.

Genetic Algorithm

The genetic algorithm extends the biological analogy to sexual reproduction. It maintains a population of \(k\) states and probabilistically selects two parent states (with probability proportional to fitness) to produce a child through crossover — combining portions of each parent. The child may also undergo mutation (random small changes). This process repeats until a stopping criterion is met.

Introduction to Machine Learning

Introduction to Learning

Machine learning has been hugely successful in many applications: medical diagnosis (processing MRI, X-ray, and CT images), facial recognition, handwriting recognition, speech recognition, and spam filtering.

Learning is the ability of an agent to improve its performance on future tasks based on experience. We want agents that can do more, do things better, and do things faster. Learning is essential because we cannot anticipate all possible solutions, all changes over time, and for some tasks we may not know how to program a solution directly.

The Learning Architecture

Any learning problem has four key components:

- Problem/task: the behaviour we want to improve

- Experiences/data: the training examples

- Background knowledge/bias: initial knowledge that may bias performance

- Measure of improvement: how we track progress

Types of Learning Problems

Learning problems fall into three broad categories:

Supervised learning: Given input features, target features, and training examples, predict target features for new examples. This requires labeled data — each training example has a known correct answer.

Unsupervised learning: Learning from examples without target labels. Examples include clustering (grouping similar examples) and dimensionality reduction (projecting high-dimensional data to lower dimensions).

Reinforcement learning: Learning from rewards and punishments, somewhere between supervised (exact feedback) and unsupervised (no feedback).

Classification vs. Regression

Supervised learning problems divide into two categories:

- Classification: target features are discrete (e.g., classifying weather as sunny, cloudy, or rainy)

- Regression: target features are continuous (e.g., predicting tomorrow’s temperature)

Supervised Learning

In supervised learning, we are given training examples of the form \((x, f(x))\), where \(x\) is a vector of input features and \(f(x)\) is the target. We return a hypothesis \(h\) that approximates the true function \(f\).

Learning as a Search Problem

Learning can be viewed as searching through a hypothesis space. We need:

- A search space of hypotheses

- An evaluation function to compare hypotheses

- A search method to explore the space

Often the hypothesis space is prohibitively large for systematic search, so machine learning techniques use local search methods.

Generalization

The goal of machine learning is not to find a function that fits all training examples perfectly, but to find a hypothesis that can predict unseen examples correctly. A hypothesis that predicts unseen examples well is said to generalize well.

Two techniques for choosing a hypothesis that generalizes:

- Ockham’s razor: prefer the simplest hypothesis consistent with the data

- Cross-validation: a more principled approach to estimating generalization error

Bias-Variance Trade-off

The bias-variance trade-off explains how model performance changes with complexity:

- Bias describes how well we can fit the data with infinite training data. High bias means the model is too simplistic — strong assumptions, few degrees of freedom, poor fit to training data.

- Variance describes how much the learned hypothesis varies given different training data. High variance means the model is too flexible — changes drastically with the data, fits training data well but generalizes poorly (over-fitting).

The optimal model complexity balances these two sources of error. Too simple → high bias (under-fitting). Too complex → high variance (over-fitting). The sweet spot is in the middle.

Cross-Validation

Cross-validation is a principled technique for finding a model with low bias and low variance. The steps are:

- Break the training data into \(K\) equally sized partitions

- Train on \(K-1\) partitions (training set)

- Test on the remaining partition (validation set)

- Repeat \(K\) times, each time using a different partition for validation

- Calculate the average error over the \(K\) validation sets

This gives a reliable estimate of how well the model generalizes.

Over-Fitting

As model complexity increases, the error on the training set always decreases. However, the validation set error follows a U-shape: it first decreases (under-fit region), reaches a minimum (best fit), then increases (over-fit region). The best model has enough complexity to capture the common patterns but not so much that it over-fits to training-specific characteristics.

Decision Trees

Examples of Decision Trees

Our first machine learning algorithm is the decision tree. A decision tree is a very common algorithm that we humans use daily — “Which language should you learn?”, “What kind of pet is right for you?”, and “Should you use emoji?” are all examples of decision tree reasoning.

We will use the following running example throughout this unit: Jeeves is a valet to Bertie Wooster. Each morning, Jeeves records the weather (Outlook, Temperature, Humidity, Wind) and whether Bertie plays tennis. The training set has 14 examples.

Definition and Classification

A decision tree is a simple model for supervised classification. Each internal node performs a test on an input feature. The edges are labeled with the feature values. Each leaf node specifies a value for the target feature. If we convert a decision tree to a program, it becomes a big nested if-then-else structure.

To classify an example, we traverse down the tree, evaluating each test and following the corresponding edge until we reach a leaf. The classification on that leaf is our prediction.

The Decision Tree Learning Algorithm

Issues in Learning a Decision Tree

Given a data set, we can generate many different decision trees. Two key decisions must be made:

- What order to test features? Different orders produce different trees. Finding the optimal order is computationally expensive, so we use a greedy (myopic) approach — choosing the best feature at each step without considering future effects.

- Should we grow a full tree or stop early? A full tree may over-fit the training data, so a smaller tree might generalize better (Occam’s razor).

Growing a Full Tree

To build a tree given a testing order: at each node, check if all examples share the same class. If so, create a leaf with that class label. Otherwise, test the next feature and split examples into branches. Repeat recursively.

Stopping Criteria

There are three base cases:

- All examples are in the same class → return the class label

- No features left (noisy data) → return the majority class

- No examples left (unseen feature combination) → use the parent node’s examples and return the majority class

Pseudocode

procedure DECISION-TREE-LEARNER(examples, features)

if all examples are in the same class then

return the class label

else if no features left then

return the majority decision

else if no examples left then

return the majority decision at the parent node

else

choose a feature f

for each value v of feature f do

build edge with label v

build sub-tree using examples where f = v

Determining the Order of Testing Features

We want to choose the feature that makes the biggest difference to the classification — the feature that reduces our uncertainty the most. This is measured by the expected information gain.

Entropy

Entropy from information theory measures the uncertainty in a probability distribution. For a distribution over \(k\) outcomes with probabilities \(P(c_1), \ldots, P(c_k)\):

\[I(P(c_1), \ldots, P(c_k)) = -\sum_{i=1}^{k} P(c_i) \log_2(P(c_i))\]For a binary distribution \((p, 1-p)\): entropy is maximized at 1 bit when \(p = 0.5\) (uniform distribution, maximum uncertainty) and minimized at 0 bits when \(p = 0\) or \(p = 1\) (point mass, no uncertainty). By convention, \(0 \cdot \log_2(0) = 0\).

Expected Information Gain

Consider a feature with \(k\) values \(v_1\) to \(v_k\). Before testing, we have \(p\) positive and \(n\) negative examples. The entropy before testing is:

\[I_{\text{before}} = I\left(\frac{p}{p+n}, \frac{n}{p+n}\right)\]After testing, the expected entropy is the weighted average of the entropy of each branch:

\[EI_{\text{after}} = \sum_{i=1}^{k} \frac{p_i + n_i}{p + n} \cdot I\left(\frac{p_i}{p_i + n_i}, \frac{n_i}{p_i + n_i}\right)\]The expected information gain is the difference:

\[\text{InfoGain} = I_{\text{before}} - EI_{\text{after}}\]We select the feature with the highest expected information gain. This greedy algorithm for building a decision tree by selecting the most informative feature at each step is known as ID3.

A Full Example

For the Jeeves dataset (9 positive, 5 negative), the entropy before any split is approximately 0.94 bits. Computing information gain for each feature:

| Feature | Gain |

|---|---|

| Outlook | 0.247 |

| Humidity | 0.151 |

| Wind | 0.048 |

| Temp | 0.029 |

Outlook has the highest gain, so it becomes the root. For the Overcast branch, all examples are positive (entropy 0, pure node). For Sunny and Rain, we continue splitting. In the Sunny branch, Humidity has the highest gain (0.97); in the Rain branch, Wind has the highest gain (0.97). The resulting tree is small and shallow — exactly what we wanted.

Real-Valued Features

So far, the algorithm handles only discrete features. For real-valued features (like temperature as a number rather than Hot/Mild/Cool), we use binary splits.

Choosing a Split Point

To handle a real-valued feature:

- Sort the instances by the feature value

- Identify possible split points at midpoints between consecutive values where the target label changes

- Calculate the expected information gain for each split point

- Choose the split point with the highest gain

A midpoint \((X + Y)/2\) between consecutive values \(X\) and \(Y\) is a possible split point only if the labels at \(X\) and \(Y\) differ. This smart approach reduces the number of candidate split points from \(n-1\) to only those where the label actually changes.

Over-Fitting

Decision trees are simple, interpretable, and work well even with tiny datasets. However, over-fitting is a common problem. The decision tree learner is a perfectionist — it keeps growing until it perfectly classifies all training examples, which can be undesirable.

Consider the Jeeves dataset with one corrupted data point (day 3 changed from Yes to No). This tiny change causes the algorithm to grow an entire subtree for the Overcast branch (which was previously a pure Yes leaf), dramatically changing the tree and increasing the test error from 0/14 to 2/14.

Dealing with Over-Fitting: Pruning

Two approaches to prevent over-fitting:

Pre-pruning (stop growing the tree early): We use criteria to decide when to stop splitting:

- Maximum depth: stop if the tree reaches a preset depth

- Minimum examples: stop if too few examples remain at a node

- Minimum information gain: stop if the gain from splitting is too small

- Reduction in training error: stop if the error reduction is below a threshold

Post-pruning (grow a full tree, then trim): Post-pruning is particularly useful when individual features are uninformative but multiple features working together are very informative (as in the XOR problem). With pre-pruning, we would stop too early because each feature alone has zero information gain. With post-pruning, the full tree is grown first, capturing the joint effect, and then trimmed where subtrees do not help. To post-prune, we examine nodes whose children are all leaves: if the information gain is below a threshold, we replace the subtree with a single leaf using the majority class.

Neural Networks, Part 1

Introduction to Artificial Neural Networks

There is currently a great deal of excitement around neural networks. To understand why, it helps to situate them within the broader landscape of artificial intelligence. Artificial intelligence is the endeavour of building machines that behave intelligently. Machine learning is a branch of AI that lets computers learn without being explicitly programmed, primarily using statistical methods. Deep learning is a branch of machine learning that develops hierarchical networks that mimic the human brain.

Deep learning has been around for decades, but it only became very successful in the late 2000s. Two factors drove this resurgence: the availability of more powerful computers and access to vast amounts of data.

Two landmark events from 2012 illustrate this success. First, a deep learning algorithm called AlexNet won the ImageNet challenge, a supervised learning application in image classification. Second, the Google Brain project made a breakthrough in unsupervised learning: after processing over 10 million images from YouTube videos, one node of the neural network developed a strong affinity for cats and was able to recognize when an image contained a cat. This has been called the infamous Cat Experiment.

Learning Complex Relationships

Consider applications such as image interpretation, speech recognition, or machine translation. In all of these, the relationship between inputs and outputs is complex. How can we build a model to learn such complex relationships? Humans can learn these relationships very well, so the original motivation for artificial neural networks was to build a model that mimics the human brain.

The Human Brain

Thanks to neuroscience, we have some understanding of the structure of the human brain. The brain is a set of densely connected neurons. Each neuron is a very simple unit with several key components: dendrites receive inputs from other neurons, the soma controls the activity of the neuron, the axon sends outputs to other neurons, and synapses link neurons together. A neuron takes input, does some simple computation, and decides on the strength of its output.

As a computational model, this contrasts with other models we have encountered. For example, a random forest combines multiple decision trees, and each model is very complex. The model of the human brain is very different: it has a large number of simple components rather than a small number of complex components. No one model is inherently better than another.

A Simple Mathematical Model of a Neuron

McCulloch and Pitts first proposed this model of a neuron in 1943. It is a very simple model; actual neurons have much more complex behaviour. The model says that a neuron is a linear classifier: it “fires” when a linear combination of its inputs exceeds some threshold.

Neuron \( j \) computes a weighted sum of its input signals \( a_i \), where \( w_{ij} \) is a weight on the connection from unit \( i \) to unit \( j \):

\[ in_j = \sum_{i=0}^{n} w_{ij} a_i \]Neuron \( j \) then applies an activation function \( g \) to the weighted sum to derive the output:

\[ a_j = g(in_j) = g\left( \sum_{i=0}^{n} w_{ij} a_i \right) \]Notice that the input \( a_0 \) is always set to 1; this is called a bias or dummy input. Because our model is a linear classifier, we want to represent a linear function, but our inputs are all variables. We need the possibility of having a constant term. Setting \( a_0 = 1 \) lets us have that constant term through the weight \( w_{0j} \).

Desirable Properties of the Activation Function

The activation function is very important in our model. There are three desirable properties an activation function should have.

First, it should be non-linear. Combining linear functions will not produce a non-linear function, but complex relationships are often non-linear. Using a non-linear activation function allows us to model non-linear relationships by interleaving linear functions (weighted sums of inputs) with non-linear functions (activation functions).

Second, it should mimic the behaviour of real neurons. If the weighted sum of input signals is large enough, the neuron fires. Otherwise, it does not fire. There is no need for a hard threshold; the function can fire an output signal of some amount.

Third, it should be differentiable almost everywhere. We want to learn a neural network using gradient descent or other optimization algorithms, which require the activation function to be differentiable.

Common Activation Functions

The step function is defined as:

\[ g(x) = \begin{cases} 1 & \text{if } x > 0 \\ 0 & \text{if } x \le 0 \end{cases} \]It is simple to use but not differentiable, so it is not used in practice. It is useful for explaining concepts.

The sigmoid function is defined as:

\[ g(x) = \frac{1}{1 + e^{-kx}} \]The sigmoid can approximate the step function (larger \( k \) gives a better approximation). It gives clear, bounded predictions and is differentiable. It was preferred for a long time but suffers from the vanishing gradient problem: the gradient is very small for extreme values of \( x \), and it is computationally expensive.

The rectified linear unit (ReLU) is defined as:

\[ g(x) = \max(0, x) \]It is computationally efficient, non-linear, and differentiable. However, it suffers from the dying ReLU problem: the gradient is 0 for negative \( x \) or \( x \) approaching 0, which can prevent learning.

The leaky ReLU is defined as:

\[ g(x) = \max(0.1x, x) \]It behaves like a ReLU but enables learning for negative input values by allowing a small, non-zero gradient when \( x < 0 \).

Introduction to Perceptrons

Feed-Forward vs. Recurrent Neural Networks

There are two major types of neural networks. A feed-forward neural network is a directed acyclic graph with no loops. Values flow in one direction through the network: they come in as input values, get transformed through the edges and nodes, and finally go out as output values. They never go backwards. In a feed-forward network, the outputs are a function of the inputs alone. If you know the inputs, you can determine the outputs.

A recurrent neural network can have loops. An output from a node can be fed back into the node as an input. The advantage of having a loop is that it gives the network memory: the circuit can remember some information while it is doing computations. The output value is no longer a function of its inputs alone; it may also depend on what the network remembers about the historical inputs. Arguably, a recurrent network is a better model of the human brain because we have memory. Unfortunately, because of the loops, it is also more difficult to train a recurrent network and to interpret its learned function. In this course we focus on feed-forward networks.

Perceptrons