CS 475/675: Computational Linear Algebra

Leili Rafiee Sevyeri

Estimated study time: 4 hr 42 min

Table of contents

Instructor: Leili Rafiee Sevyeri

Term: Spring 2021

Topics: Linear systems, least squares, eigenvalue problems, singular value decomposition

Course Overview

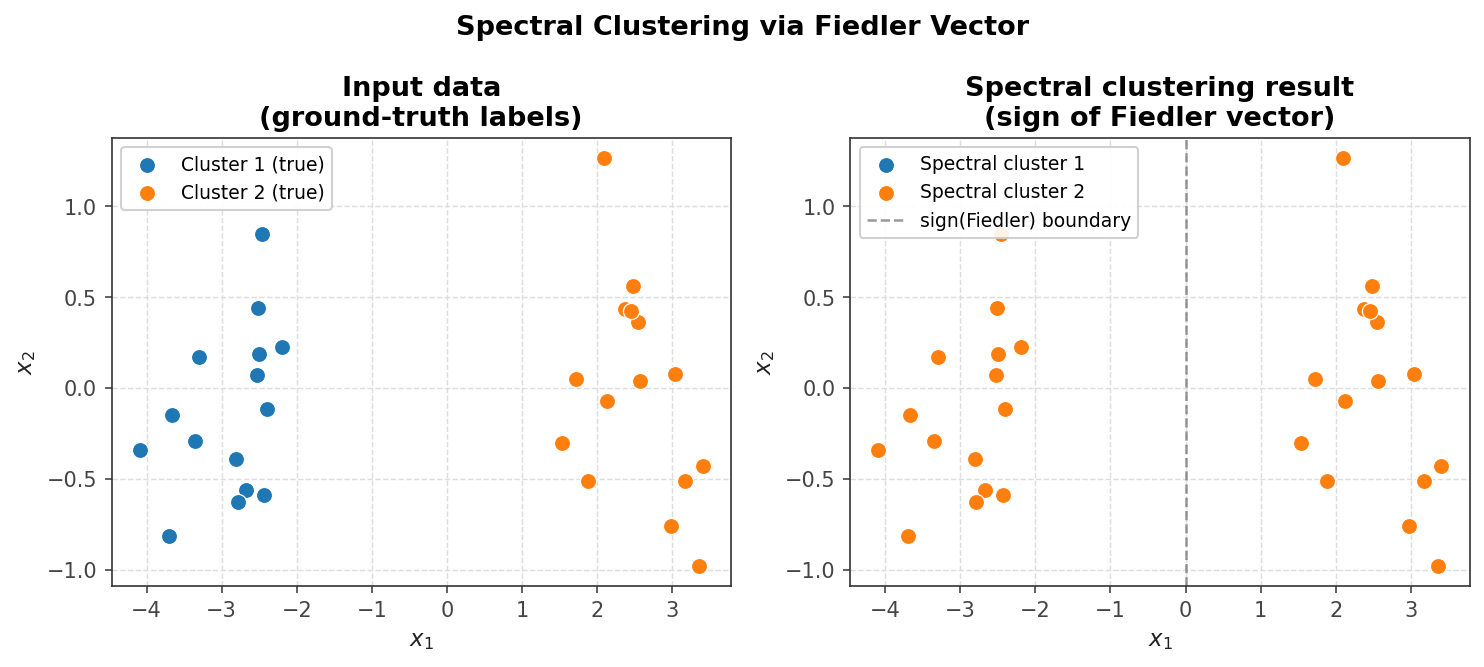

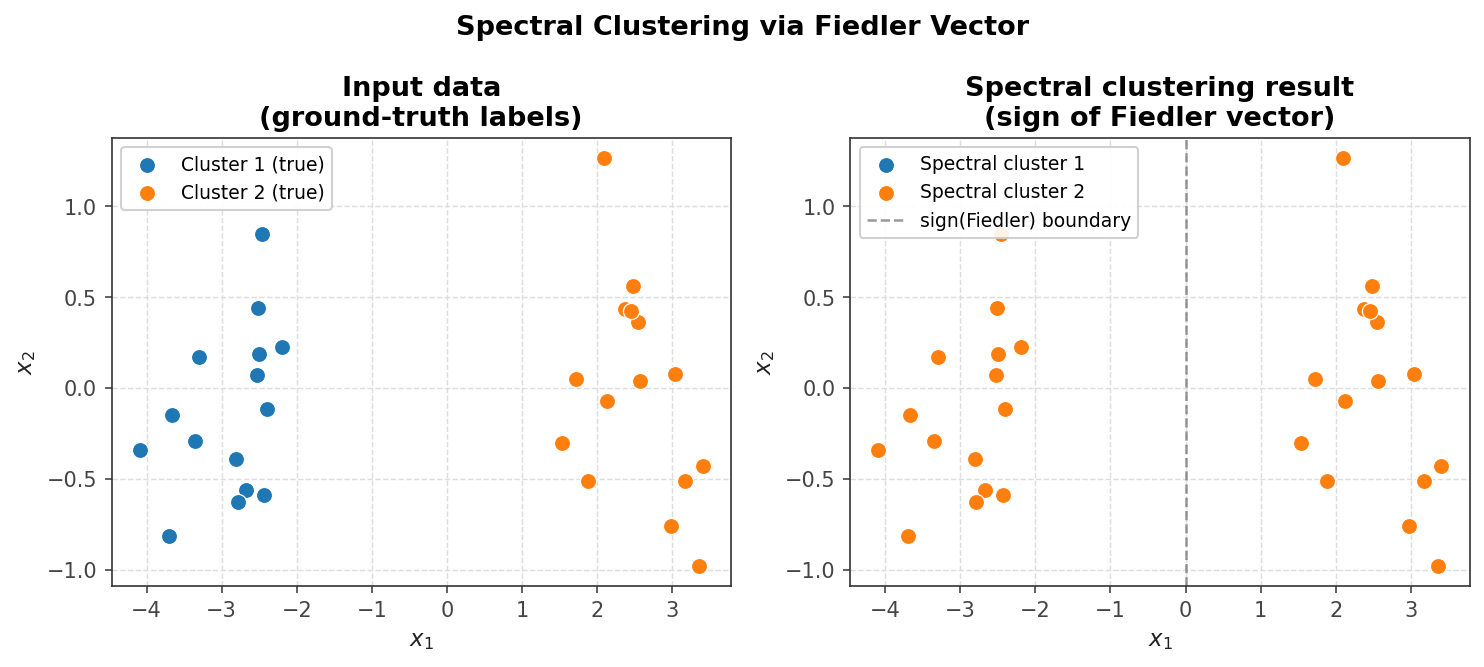

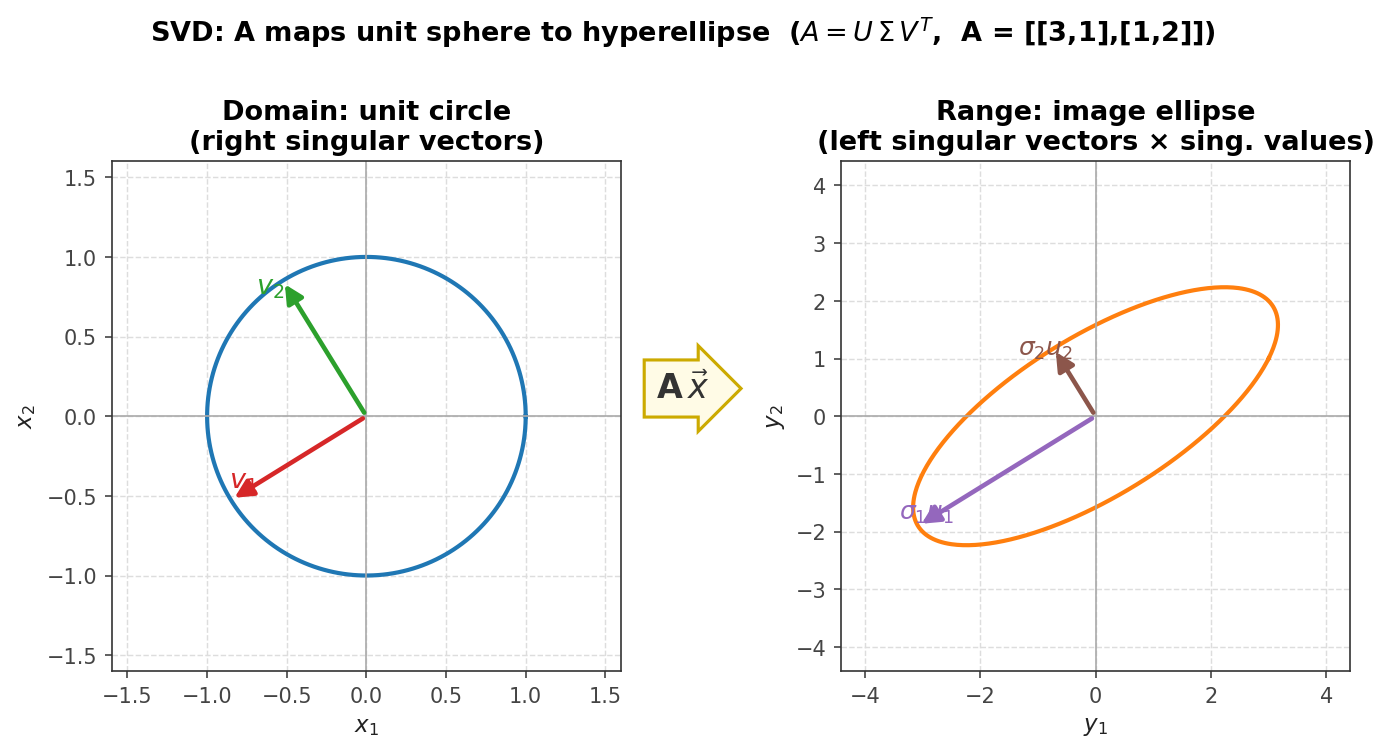

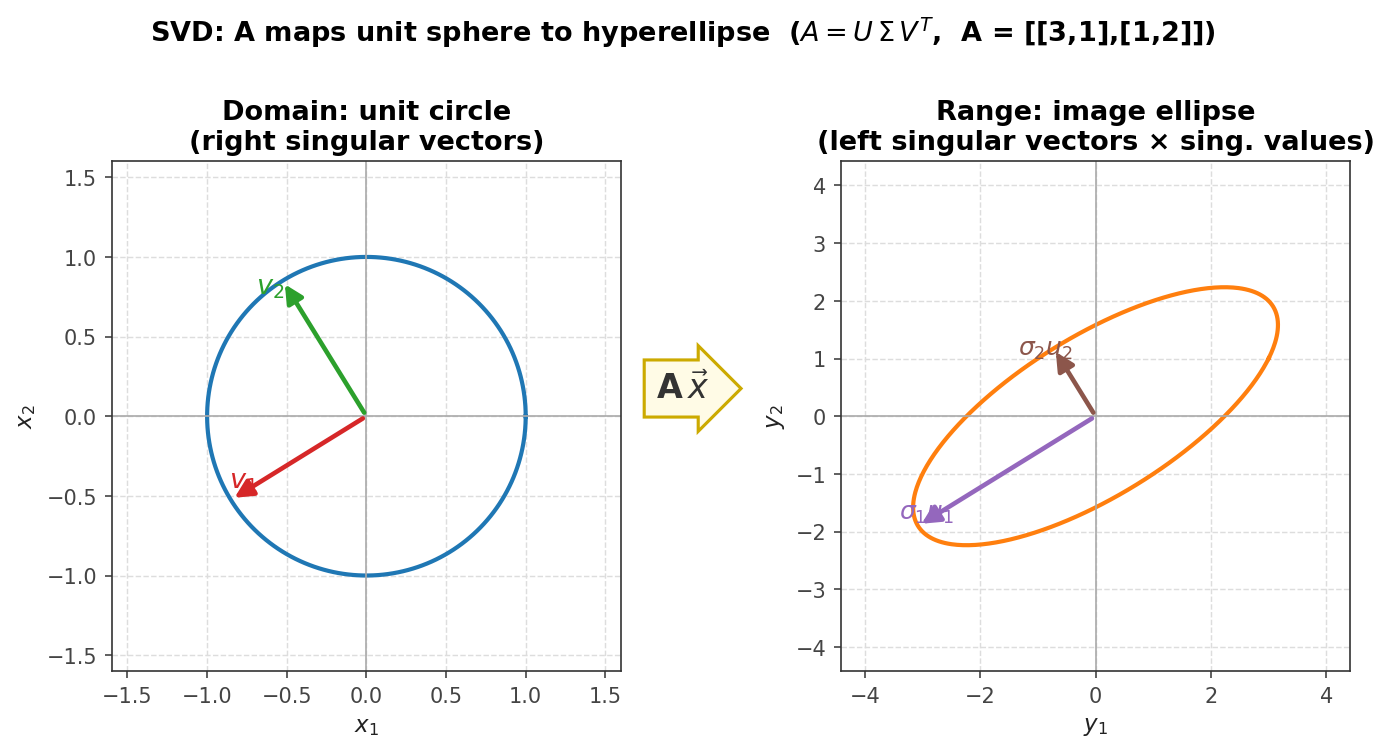

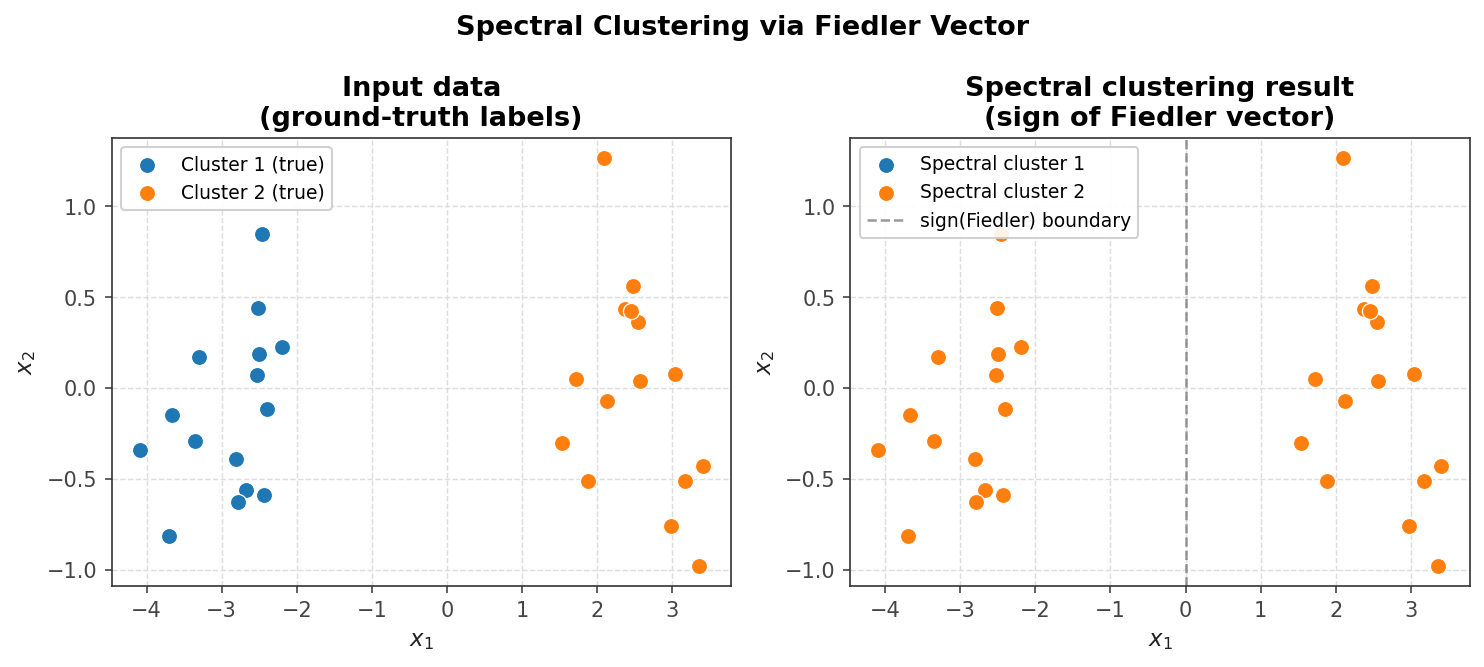

Computational linear algebra is the development of efficient and accurate algorithms for linear algebra problems. This course organizes its content around four main topics: solving linear systems of equations, solving least squares problems, solving eigenvalue problems, and performing singular value decompositions. Applications of these topics are found throughout engineering and the computational sciences, spanning finance, solid and fluid mechanics, machine learning, data mining, computer graphics, biology, climate modelling, optimization, differential equations, signal processing, image processing, medical imaging, computer vision, and search engines, among others. Practical applications explored specifically in this course include temperature modelling via finite-difference discretization of the heat equation, image denoising, spectral clustering for image segmentation, and principal component analysis for image compression.

1.1 What Is Computational Linear Algebra?

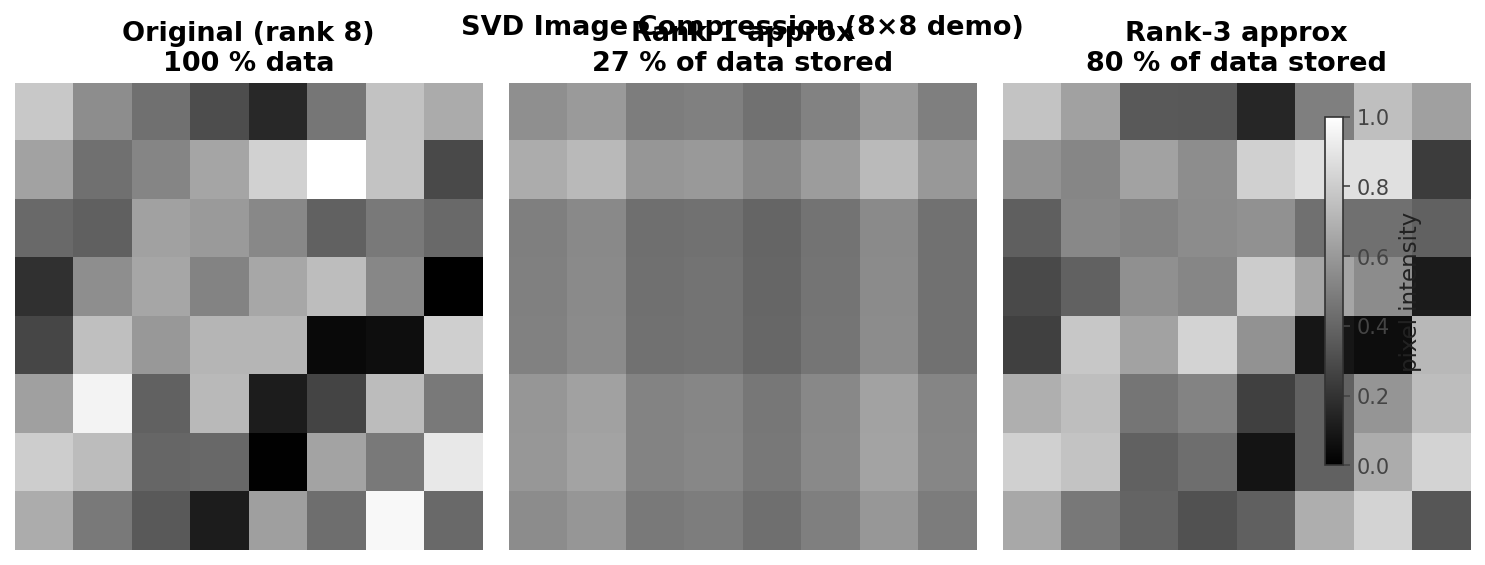

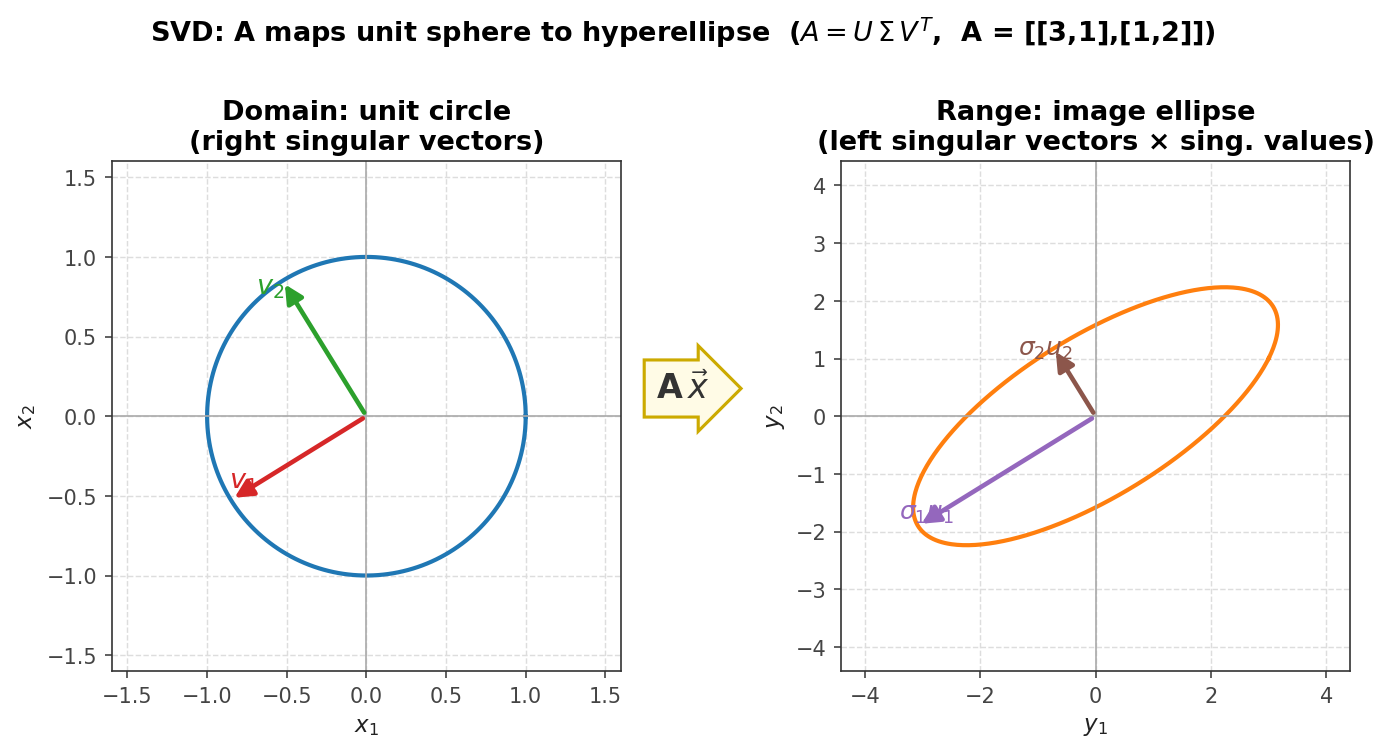

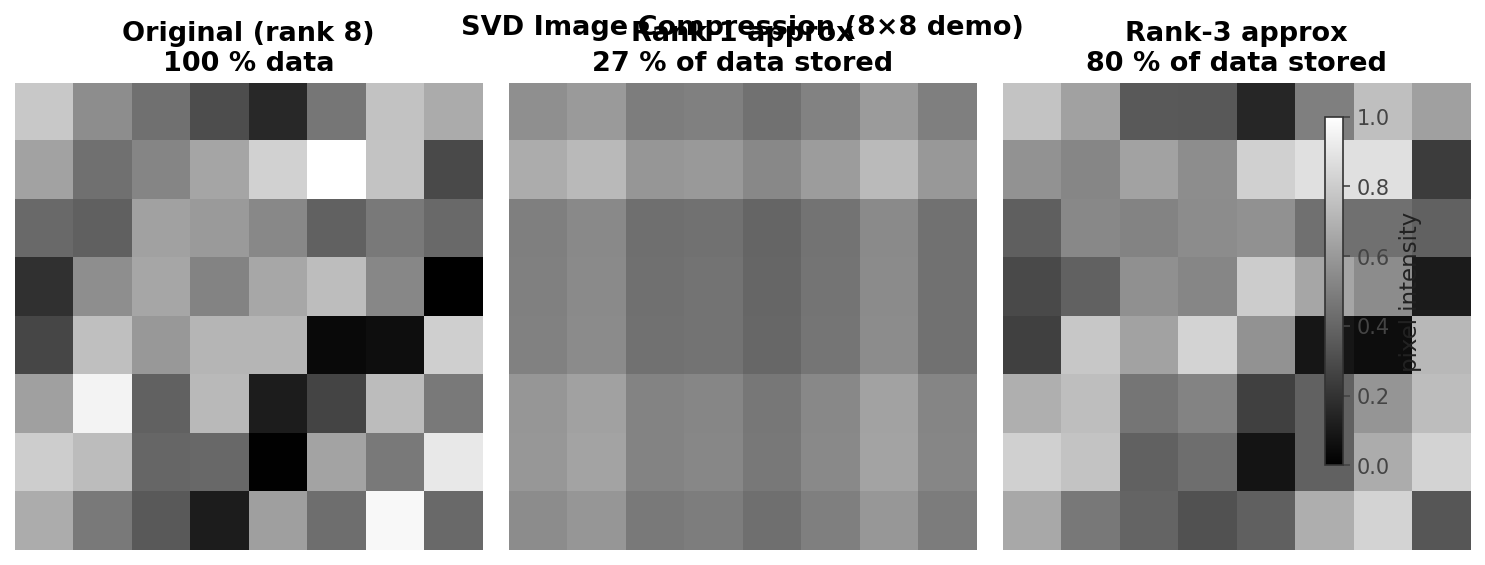

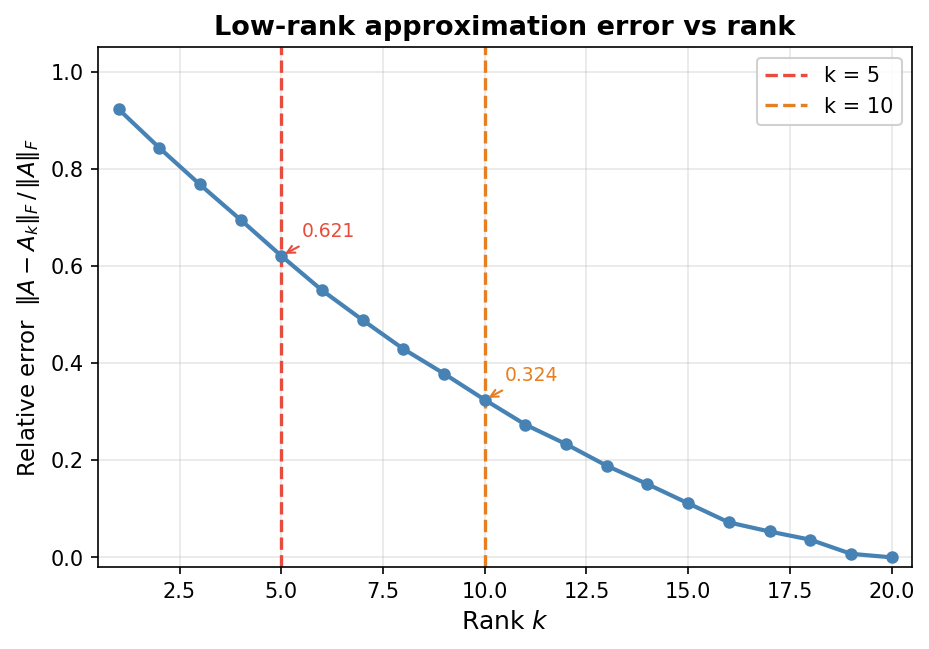

The central concern of computational linear algebra is not merely whether a mathematical solution exists, but how to find it efficiently and accurately. The four main problem classes provide the organizing structure for the entire course. Solving linear systems asks for the vector \( x \) satisfying \( Ax = b \) when \( A \in \mathbb{R}^{n \times n} \) is full-rank and \( b \in \mathbb{R}^n \). Least squares problems also seek a solution to \( Ax = b \), but now \( A \in \mathbb{R}^{m \times n} \) and \( b \in \mathbb{R}^m \); the matrix need not be full rank, and systems may be over- or under-determined. The goal is the “best possible” answer in a least-squares sense, familiar from data-fitting and regression. Eigenvalue problems ask for scalars \( \lambda \in \mathbb{R} \) and vectors \( v \in \mathbb{R}^n \) satisfying \( Av = \lambda v \), equivalently seeking the factorization \( A = Q\Lambda Q^{-1} \) where \( \Lambda \) is diagonal with eigenvalues and the columns of \( Q \) are eigenvectors. A prominent application is Google’s PageRank algorithm, which relies on finding a particular eigenvector. Finally, the singular value decomposition (SVD) factorizes a general matrix \( A \in \mathbb{R}^{m \times n} \) as \( A = U\Sigma V^T \), where \( U \) and \( V \) are orthogonal and \( \Sigma \) is diagonal with non-negative entries called singular values. A key application is the low-rank approximation of matrices: by retaining only a small subset of the rows and columns of \( U \), \( V \), and \( \Sigma \) one obtains a compressed representation of \( A \). As the retained rank increases, the approximation becomes indistinguishable from the original, as illustrated by image compression examples.

SVD low-rank image approximation at varying ranks

SVD low-rank image approximation at varying ranks

1.2 Key Themes

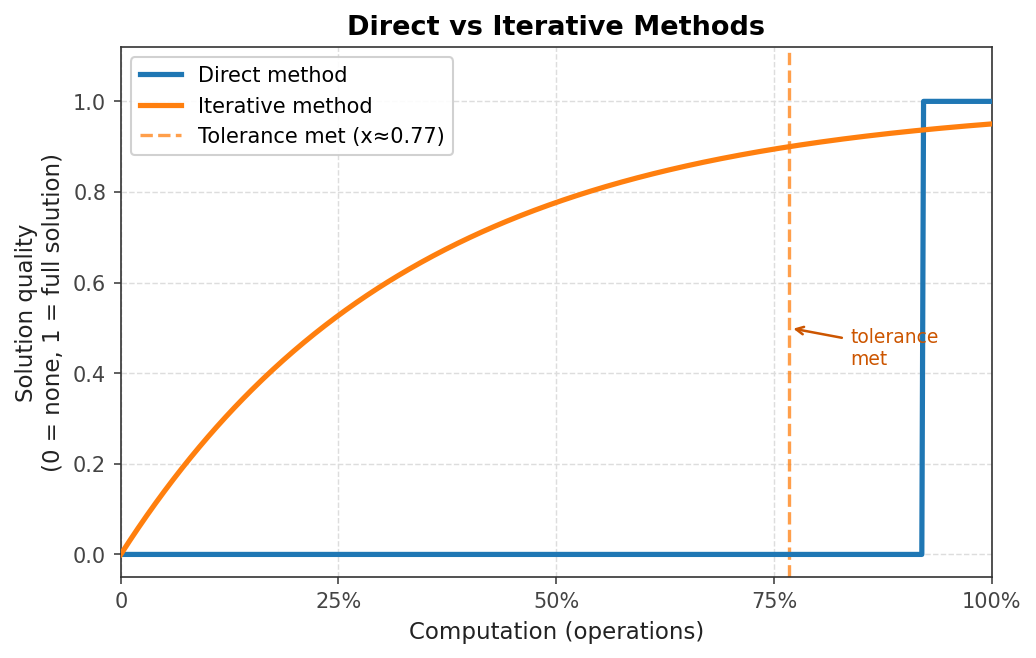

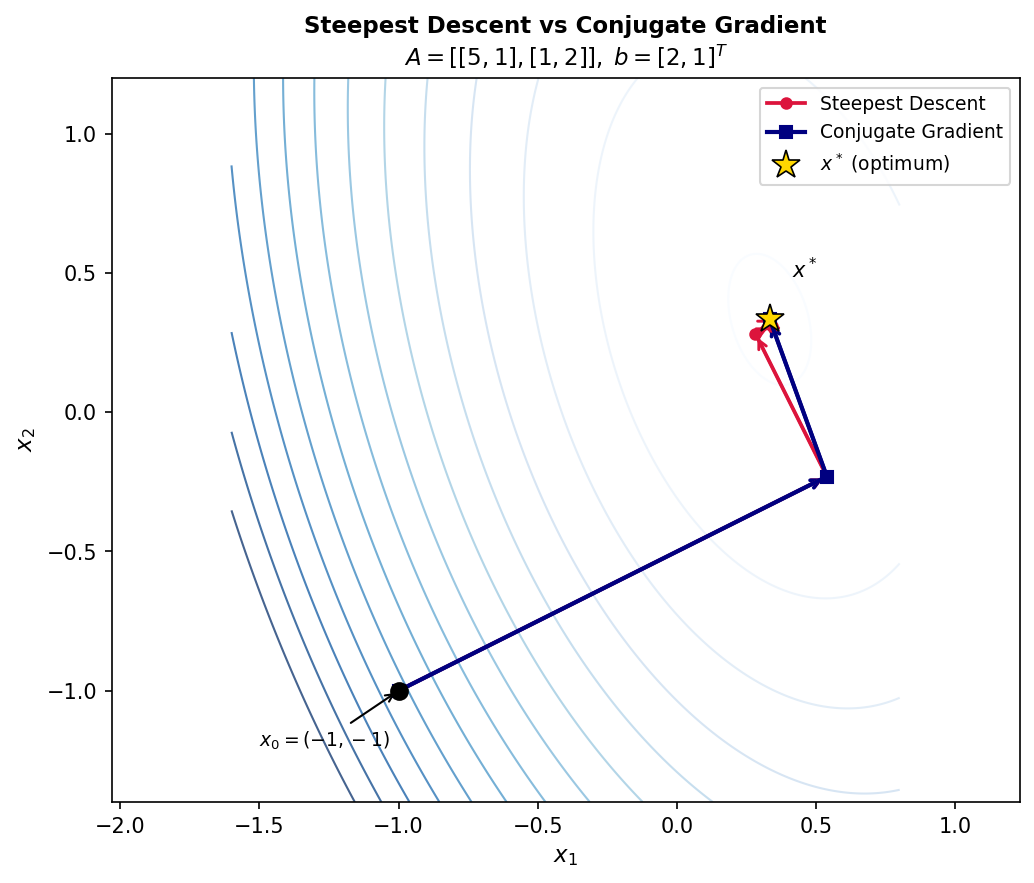

Several themes recur throughout the course and are worth identifying at the outset. The first is the distinction between direct methods and iterative methods. A direct algorithm performs a finite, predetermined sequence of operations and yields the exact solution (in exact arithmetic). Gaussian elimination is a canonical example. An iterative algorithm instead applies the same operations repeatedly to improve an approximate solution until it is “good enough.” Examples include Jacobi iteration, Gauss-Seidel iteration, conjugate gradient, and the PageRank algorithm.

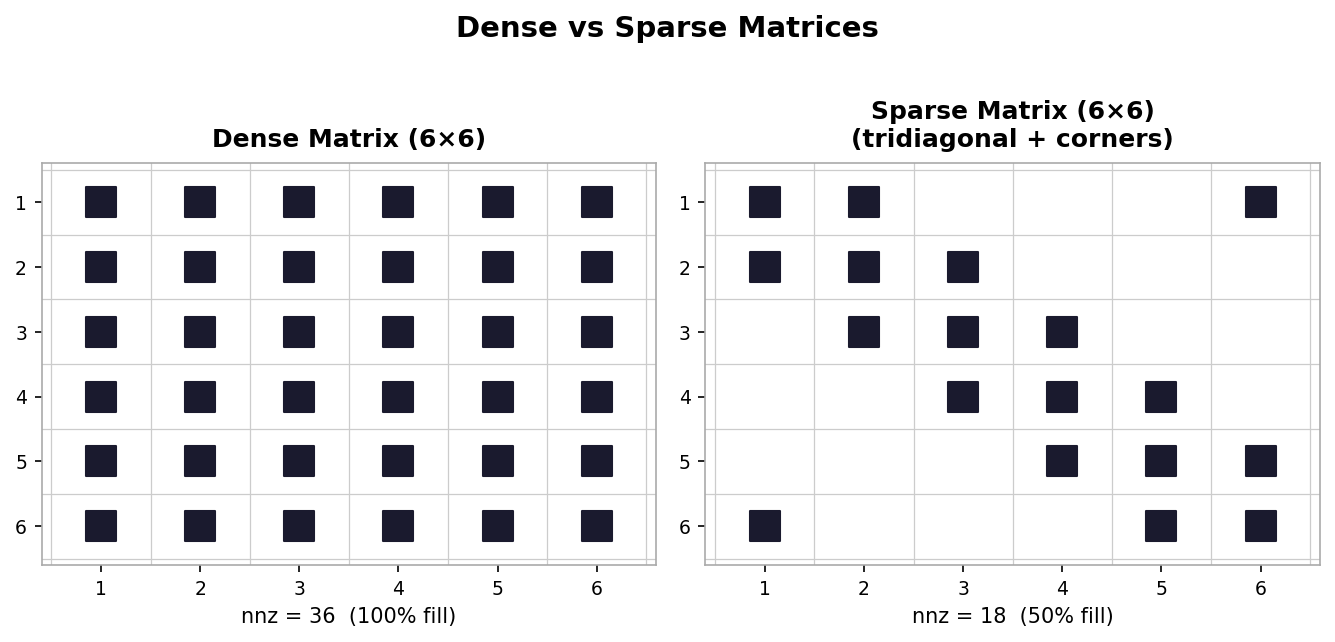

Matrix structure is a second persistent theme. Dense matrices have most or all entries non-zero; they require \( n \times n \) storage and are manipulated in the usual way. Sparse matrices have mostly zero entries, and their non-zero entries may exhibit patterns. Sparsity can be exploited to save both memory and computation, and recognizing or imposing structure is one of the most powerful tools available to practitioners.

Dense (left) vs. sparse tridiagonal (right) matrix nonzero patterns

Dense (left) vs. sparse tridiagonal (right) matrix nonzero patterns

Matrix factorization is a third central theme. Expressing a matrix \( A \) as a product of simpler matrices, \( A = BCD \), opens up questions about what factorizations exist, what properties they possess, and how those properties can be exploited in algorithm design.

The fourth theme is orthogonality. Vectors \( u, v \in \mathbb{R}^n \) are orthogonal if \( u^T v = 0 \). A square matrix \( Q \in \mathbb{R}^{n \times n} \) is orthogonal if \( Q^T = Q^{-1} \). Orthogonality arises repeatedly throughout many of the algorithms covered in this course.

1.3 Course Topics

The topic of solving linear systems tries to solve \( Ax = b \) for \( x \in \mathbb{R}^n \), where \( A \in \mathbb{R}^{n \times n} \) must be full-rank and \( b \in \mathbb{R}^n \). Although this problem is familiar from earlier courses, solving linear systems efficiently and accurately on large problems in floating-point arithmetic takes substantial effort. Least squares problems also aim to solve \( Ax = b \) but with \( A \in \mathbb{R}^{m \times n} \) and \( b \in \mathbb{R}^m \), seeking the best possible answer when the system may be over- or under-determined. Eigenvalue problems involve finding \( \lambda \) and \( v \) satisfying \( Av = \lambda v \), equivalent to the factorization \( A = Q\Lambda Q^{-1} \). The SVD factors a general \( A \in \mathbb{R}^{m \times n} \) as \( A = U\Sigma V^T \) with orthogonal \( U \) and \( V \) and diagonal non-negative \( \Sigma \), enabling low-rank approximation and many other applications.

Linear Algebra Basics

This lecture recaps foundational concepts from linear algebra that will be used throughout the course: vector spaces, bases, dimension, an important interpretation of matrix-vector multiplication, the range, column and row space, null space, rank, nullity, and matrix inverse. It also presents the Sherman-Morrison and Sherman-Morrison-Woodbury formulas for updating a matrix inverse. Much of this material parallels Lecture 1 of Trefethen and Bau, which is recommended as a supplemental read.

2.1 Vector Spaces, Bases, and Dimension

A vector space is a collection of vectors equipped with addition and scalar multiplication operators. An example is \( V = \{v_1, v_2, \ldots, v_k\} \) with \( v_i \in \mathbb{R}^2 \) and the usual operations of component-wise addition and scalar scaling.

A basis \( B \) for a vector space \( V \) is a set of vectors satisfying two conditions: the vectors in \( B \) are linearly independent, and every vector in \( V \) is a linear combination of vectors in \( B \) (i.e., \( B \) spans \( V \). A standard basis for \( \mathbb{R}^3 \) is the set of three unit vectors \( \{e_1, e_2, e_3\} \) with ones in the first, second, and third positions respectively.

The dimension of a vector space \( V \), written \( \dim(V) \), is the number of elements in any basis for \( V \); equivalently, it is the number of linearly independent vectors needed to span \( V \). It is important to note that the dimension of a vector space is not necessarily the same as the dimension of the vectors it contains. For example, the pair of three-dimensional vectors \( \{[1,0,0]^T,\,[0,1,0]^T\} \) spans only a two-dimensional subspace of \( \mathbb{R}^3 \) (the \( xy \)-plane), so \( \dim(V) = 2 \).

2.2 Interpretation of Matrix-Vector Multiplication

Let \( x \in \mathbb{R}^n \) and \( A \in \mathbb{R}^{m \times n} \). The familiar row-times-column interpretation of matrix-vector multiplication yields the product \( b = Ax \) with entries

\[ b_i = \sum_{j=1}^{n} a_{ij} x_j, \quad i = 1, \ldots, m. \]Here \( a_{ij} \) is the \( (i,j) \) entry of \( A \) and \( x_j \) is the \( j \)-th entry of \( x \). The map \( x \mapsto Ax \) is linear: \( A(x+y) = Ax + Ay \) and \( A(\alpha x) = \alpha Ax \) for any \( x, y \in \mathbb{R}^n \) and \( \alpha \in \mathbb{R} \). Conversely, any linear map from \( \mathbb{R}^n \) to \( \mathbb{R}^m \) can be written as multiplication by an \( m \times n \) matrix.

A more useful interpretation is as a linear combination of columns. If \( a_j \in \mathbb{R}^m \) denotes the \( j \)-th column of \( A \), then

\[ b = Ax = \sum_{j=1}^{n} x_j a_j. \]This says that \( b \) is a linear combination of the columns of \( A \) with coefficients given by the entries of \( x \). This column-combination view makes it immediately apparent that the range of \( A \) is identical to the column space of \( A \).

The range of \( A \in \mathbb{R}^{m \times n} \) is \( \text{range}(A) = \{y \in \mathbb{R}^m \mid y = Ax,\, x \in \mathbb{R}^n\} \). The column space of \( A \) is the space of all vectors expressible as linear combinations of its columns, and the row space is the analogous span of its rows.

2.3 Null Space, Nullity, and Rank

The null space (or kernel) of \( A \in \mathbb{R}^{m \times n} \) is

\[ \text{null}(A) = \{x \in \mathbb{R}^n \mid Ax = 0\}, \]the set of all vectors mapped to the zero vector. The zero vector itself always belongs to every null space; interest lies in non-trivial vectors. The dimension of the null space is called the nullity of \( A \), written \( \text{nullity}(A) \). The dimensions of the column and row spaces are both equal to the rank of \( A \), written \( \text{rank}(A) \) — a fundamental result says that column rank always equals row rank. The rank-nullity theorem then gives

\[ \text{rank}(A) + \text{nullity}(A) = n, \]where \( n \) is the number of columns of \( A \). An \( m \times n \) matrix \( A \) is full rank if \( \text{rank}(A) = \min(m, n) \). When \( A \) is full rank and \( m \geq n \), the map \( x \mapsto Ax \) is one-to-one: distinct inputs produce distinct outputs.

2.4 Matrix Inverse

A square matrix \( A \in \mathbb{R}^{n \times n} \) that is full rank is called invertible or nonsingular. Its inverse \( A^{-1} \) is the unique matrix satisfying \( AA^{-1} = A^{-1}A = I \). For a real square matrix, the following statements are all equivalent: \( A \) is invertible; \( \text{rank}(A) = n \); \( \text{range}(A) = \mathbb{R}^n \); \( \text{null}(A) = \{0\} \); \( A \) has no zero eigenvalues; \( A \) has no zero singular values; and \( \det(A) \neq 0 \).

Several useful matrix inverse identities hold for invertible matrices \( A \) and \( B \): \( (AB)^{-1} = B^{-1}A^{-1} \); \( (A^{-1})^T = (A^T)^{-1} = A^{-T} \); and \( B^{-1} = A^{-1} - B^{-1}(B-A)A^{-1} \). The last identity is verified by multiplying \( B \) on the left: \( B(A^{-1} - B^{-1}(B - A)A^{-1}) = BA^{-1} - (B - A)A^{-1} = BA^{-1} - BA^{-1} + I = I \).

Now suppose we have already computed \( A^{-1} \) and wish to update it after a rank-one modification \( A \to A + uv^T \), where \( u, v \in \mathbb{R}^n \). The Sherman-Morrison formula gives

\[ (A + uv^T)^{-1} = A^{-1} - \frac{A^{-1}uv^T A^{-1}}{1 + v^T A^{-1} u}. \]This allows updating \( A^{-1} \) without recomputation from scratch; the process is called a rank-one update since \( uv^T \) has rank one. Generalizing to a rank-\( k \) modification, with \( U, V \in \mathbb{R}^{n \times k} \), gives the Sherman-Morrison-Woodbury formula:

\[ (A + UV^T)^{-1} = A^{-1} - A^{-1}U(I + V^T A^{-1} U)^{-1} V^T A^{-1}. \]A rank-\( k \) modification to \( A \) therefore yields a rank-\( k \) correction to \( A^{-1} \). One can verify this formula by checking that multiplying by \( (A + UV^T) \) recovers the identity.

Solving Linear Systems

Many practical problems rely on solving systems of linear equations of the form \( Ax = b \), where \( A \in \mathbb{R}^{n \times n} \), \( b \in \mathbb{R}^n \), and \( x \in \mathbb{R}^n \) is the vector of unknowns. Even algorithms for nonlinear problems often require solving linear systems as substeps. This lecture discusses direct methods based on Gaussian elimination, interpreted through LU factorization and triangular solves, and derives the cost of these algorithms.

3.1 Linear Systems of Equations

To appreciate the scale of modern linear system solves, consider fluid animation. Simulating a fluid for one time-step at a modest resolution of \( 100 \times 100 \times 100 \) cells involves at least one million unknowns, so \( A \) has dimension at least \( 1{,}000{,}000 \times 1{,}000{,}000 \). Animating just ten seconds at 30 frames per second requires solving roughly 300 such problems. Efficient and accurate methods are therefore indispensable.

A key design principle is to avoid constructing \( A^{-1} \) directly: doing so is generally less efficient in terms of operation counts, less accurate due to accumulated round-off error, and more costly in terms of storage (especially for sparse \( A \).

3.2 Gaussian Elimination

Solving \( Ax = b \) via Gaussian elimination is interpreted in three steps. First, factor \( A \) into the product \( A = LU \). Second, perform a forward solve: solve \( Lz = b \) for the intermediate vector \( z \). Third, perform a backward solve: solve \( Ux = z \) for \( x \). Here \( L \) is lower triangular (zero entries above the main diagonal) and \( U \) is upper triangular (zero entries below the main diagonal). When equations in \( Ax = b \) must be rearranged to avoid zero pivots, one uses the permuted LU factorization \( PA = LU \), where \( P \) is a permutation matrix (the identity matrix with rows rearranged), and solves \( PAx = Pb \) instead.

LU Factorization. The algorithm proceeds one column at a time, subtracting appropriate multiples of each pivot row from the rows below it to introduce zeros in the sub-diagonal entries of that column. The multiplicative factors used to zero out entry \( a_{ik} \) are stored in place of those zeros and constitute the entries of \( L \). The resulting \( L \) is unit lower triangular (diagonal entries are all 1). The in-place pseudocode iterates over each column \( k = 1, \ldots, n \); for each row \( i > k \) it computes the multiplier \( \text{mult} = a_{ik}/a_{kk} \), stores it at position \( a_{ik} \) (where a zero would otherwise go), and subtracts \( \text{mult} \times \) the current pivot row from row \( i \).

Triangular Solves. Substituting \( A = LU \) into \( Ax = b \) gives \( L(Ux) = b \). Setting \( z = Ux \), one first solves \( Lz = b \) (forward substitution, working from top to bottom through the equations) and then \( Ux = z \) (backward substitution, working from bottom to top). These two solves are far more efficient than solving the original system because the triangular structure means each unknown is determined by one simple equation once its predecessors are known.

The forward substitution algorithm initializes \( z_i = b_i \) and subtracts the already-solved \( z_j \) values weighted by \( l_{ij} \) for \( j < i \). The backward substitution algorithm initializes \( x_i = z_i \), subtracts the already-solved \( x_j \) values weighted by \( u_{ij} \) for \( j > i \), and divides by \( u_{ii} \). Both algorithms assume non-zero diagonal entries; zero or near-zero pivot entries cause problems that are addressed through pivoting (discussed in Week 3 on stability of factorizations).

3.3 Cost of Gaussian Elimination

The cost of an algorithm is measured in flops (floating-point operations: adds, subtracts, multiplies, and divides). Note that “flops” here denotes a count, not a rate. Actual hardware timing depends on factors such as fused-multiply-add operations, but flop counting gives a reliable asymptotic picture.

For the LU factorization, the dominant cost comes from the innermost loop of the algorithm. Counting the multiplications and subtractions across all three nested loops and summing using standard identities for \( \sum k \) and \( \sum k^2 \) yields a total of

\[ \frac{2n^3}{3} + O(n^2) = O(n^3) \]flops for factorization. Each triangular solve costs \( n^2 + O(n) = O(n^2) \) flops, so the total cost of Gaussian elimination is

\[ \frac{2n^3}{3} + O(n^2) + 2(n^2 + O(n)) = O(n^3). \]The LU factorization dominates for large \( n \). This has an important practical consequence: once \( L \) and \( U \) are available, solving with a different right-hand side \( b \) costs only \( O(n^2) \) additional flops.

Constructing \( A^{-1} \). If the explicit inverse is genuinely needed, one can factor \( A = LU \) once and then solve \( LU v_k = e_k \) for each standard basis vector \( e_k \); the columns \( v_k \) assemble to give \( A^{-1} \). Each additional right-hand side costs only \( O(n^2) \) flops. Nonetheless, most numerical algorithms avoid constructing \( A^{-1} \) for the reasons outlined in Section 3.1.

Worked Example: LU Factorization and Solve

This example walks through solving \( Ax = b \) for a specific \( 3 \times 3 \) system by computing the LU factorization and then performing forward and backward substitution.

The first step is to factor \( A \) into its lower and upper triangular components \( L \) and \( U \). The process follows exactly the steps of Gaussian elimination. To zero out the entry in row 2, column 1, one replaces row 2 with row 2 minus 1 times row 1. This leaves row 1 unchanged and produces a zero in the \( (2,1) \) position. For example, if \( a_{21} = 1 \) and \( a_{11} = 1 \), the multiplier is 1 and the updated entries in row 2 become \( 1 - 1 = 0 \), \( -2 - 1 = -3 \), and \( 2 - 1 = 1 \). Row 3 remains unchanged after this first step.

In the next step, zeroing out position \( (3,1) \) requires replacing row 3 with row 3 minus 1 times row 1. Rows 1 and 2 remain unchanged. The result introduces a zero in position \( (3,1) \) and updates the remaining entries: \( 2 - 1 = 1 \) and \( -1 - 1 = -2 \).

The final elimination step zeros out position \( (3,2) \) by replacing row 3 with row 3 minus \( -\tfrac{1}{3} \) times row 2. The pivot row entry is \( -3 \), giving a multiplier of \( -\tfrac{1}{3} \). The new entry in position \( (3,3) \) becomes \( -2 - (-\tfrac{1}{3}) \cdot 1 = -2 + \tfrac{1}{3} = -\tfrac{5}{3} \). This final matrix is \( U \), the upper triangular factor.

The matrix \( L \) is built from the multiplicative factors used at each step. It is unit lower triangular, with ones on the diagonal. The three multipliers used were 1, 1, and \( -\tfrac{1}{3} \), placed in the positions they zeroed out.

With \( L \) and \( U \) in hand, the forward solve \( Lz = b \) begins at the top equation. The first equation gives \( z_1 = 0 \) immediately. The second equation gives \( z_1 + z_2 = 4 \), so \( z_2 = 4 \). The third equation gives \( z_1 - \tfrac{1}{3}z_2 + z_3 = 2 \), which becomes \( 0 - \tfrac{4}{3} + z_3 = 2 \) and so \( z_3 = \tfrac{6}{3} + \tfrac{4}{3} = \tfrac{10}{3} \).

The backward solve \( Ux = z \) starts at the bottom equation: \( -\tfrac{5}{3} x_3 = \tfrac{10}{3} \), giving \( x_3 = -2 \). The second equation from the bottom gives \( -3x_2 + x_3 = 4 \), so \( -3x_2 = 4 + 2 = 6 \) and \( x_2 = -2 \). The top equation gives \( x_1 + x_2 + x_3 = 0 \), so \( x_1 = -x_2 - x_3 = 2 + 2 = 4 \). The final solution is \( x = [4,\,-2,\,-2]^T \).

Worked Example: Cost of LU Factorization

This example derives the leading-order flop count for LU factorization by carefully summing operations across all three nested loops.

The innermost loop body performs two flops: one subtraction and one multiplication. Immediately outside the innermost loop, there is one division, contributing one additional flop per iteration of the middle loop. The total cost is the sum of all these flops over all iterations of all three loops.

Writing this as a triple sum, the cost equals \( \sum_{k=1}^{n} \sum_{i=k+1}^{n} \left(1 + \sum_{j=k+1}^{n} 2\right) \). Evaluating the innermost sum first, \( \sum_{j=k+1}^{n} 2 = 2(n-k) \), by the basic identity that summing the constant 1 from \( r \) to \( s \) gives \( s - r + 1 \). Combining with the division term yields the factor \( 1 + 2(n-k) = 1 + 2n - 2k \) per middle-loop iteration.

Summing over \( i \) from \( k+1 \) to \( n \) gives \( (n-k)(1 + 2n - 2k) \). Expanding the product yields terms of order constant, linear, and quadratic in \( k \). The final sum over \( k \) from 1 to \( n \) is evaluated using the known identities \( \sum_{k=1}^{n} k = \frac{n(n+1)}{2} \) and \( \sum_{k=1}^{n} k^2 = \frac{n(n+1)(2n+1)}{6} \). Substituting these and simplifying all terms produces the total cost

\[ \frac{2}{3}n^3 - \frac{1}{2}n^2 - \frac{1}{6}n = \frac{2n^3}{3} + O(n^2), \]confirming the \( O(n^3) \) scaling stated in the lecture notes. The leading coefficient \( \tfrac{2}{3} \) will be seen to be exactly twice the leading coefficient of Cholesky factorization, reflecting the symmetry savings exploited in that method.

Worked Example: Partial Pivoting

This example demonstrates solving \( Ax = b \) using LU factorization with partial pivoting. The process factors \( A \) such that \( PA = LU \), where \( P \) is a permutation matrix. The resulting system becomes \( PAx = Pb \), equivalently \( LUx = \tilde{b} \) where \( \tilde{b} = Pb \), and this is solved with the usual two triangular solves.

The first step is to factor \( A \). With partial pivoting, one examines the magnitude of entries in the first column to select the pivot. Suppose the third entry has the largest magnitude (for example, 2 is greater than 1), so rows 1 and 3 must be swapped. The first permutation matrix \( P_1 \) is the identity matrix with rows 1 and 3 swapped. After applying \( P_1 \), the permuted matrix \( P_1 A \) has the largest entry on the diagonal of the first column, making it a safe pivot.

Row subtractions then zero out the two entries below the pivot. Both rows 2 and 3 are updated by subtracting one-half of row 1 (the multiplier \( \tfrac{1}{2} \) follows from dividing each sub-diagonal entry by the pivot 2). This produces zeros in the first column and updates the remaining entries: for example, the \( (2,2) \) entry becomes \( -1 - \tfrac{1}{2}(3) = -\tfrac{5}{2} \) and the \( (2,3) \) entry becomes \( 1 - \tfrac{1}{2}(-1) = \tfrac{3}{2} \).

After the first elimination stage, the two candidate pivot entries in the second column are equal in magnitude, so no row swap is needed. The second permutation matrix \( P_2 \) is therefore just the identity. The final elimination step replaces row 3 with row 3 minus 1 times row 2, zeroing the \( (3,2) \) entry and updating \( (3,3) \) to \( \tfrac{5}{2} - \tfrac{3}{2} = 1 \). The resulting upper triangular matrix is \( U \).

The total permutation matrix is \( P = P_2 P_1 = P_1 \) since \( P_2 = I \), giving the matrix that swaps rows 1 and 3. The lower triangular factor \( L \) is unit lower triangular with the three multipliers \( \tfrac{1}{2} \), \( \tfrac{1}{2} \), and 1 placed in the appropriate sub-diagonal positions.

To solve for \( x \), one first permutes the right-hand side: \( \tilde{b} = Pb \), which swaps the first and third entries of \( b = [4,1,2]^T \) to give \( \tilde{b} = [2,1,4]^T \). The forward solve \( Lz = \tilde{b} \) then gives \( z = [4, -1, 1]^T \), and the backward solve \( Ux = z \) yields the final answer \( x = [1, 1, 1]^T \).

Special Linear Systems

This lecture addresses a central question: can \( Ax = b \) be solved more efficiently by exploiting properties of \( A \)? The answer is yes. The common matrix types considered in this course are symmetric, positive definite (PD), symmetric positive definite (SPD), banded, tridiagonal, and general sparse. This lecture covers the first three types, which lead to the \( LDL^T \) factorization for symmetric matrices and the Cholesky factorization for SPD matrices.

4.1 Symmetric Matrices

A symmetric matrix satisfies \( A = A^T \); symmetry requires the matrix to be square. A general LU-like factorization exists for any matrix possessing an LU factorization, as stated in Theorem 4.1: there exist unique unit lower triangular matrices \( L, M \) and a diagonal matrix \( D \) such that \( A = LDM^T \). The proof constructs \( D = \text{diag}(u_{11}, u_{22}, \ldots, u_{nn}) \) from the diagonal of \( U \) and sets \( M^T = D^{-1}U \), so that dividing rows of \( U \) by the corresponding diagonal entry makes the diagonal of \( M^T \) all ones, and \( M \) becomes unit lower triangular.

Unfortunately the flop count of \( LDM^T \) is essentially the same as a plain LU factorization. However, if \( A \) is symmetric, then Theorem 4.2 guarantees that \( M = L \), giving the \( LDL^T \) factorization \( A = LDL^T \). Since \( M = L \) there is no need to compute redundant entries; roughly half the cost of a plain LU factorization is saved.

The proof of \( M = L \) proceeds by considering the matrix \( M^{-1}AM^{-T} \), which is symmetric (as \( A \) is symmetric) and evaluates to \( M^{-1}L D \). The product \( M^{-1}L \) is lower triangular (product of two lower triangular matrices), so \( M^{-1}LD \) is lower triangular. But it is also symmetric, and the only lower triangular symmetric matrix is a diagonal matrix. Since both \( L \) and \( M \) are unit lower triangular, \( M^{-1}L \) is also unit triangular and diagonal, hence the identity, so \( M = L \).

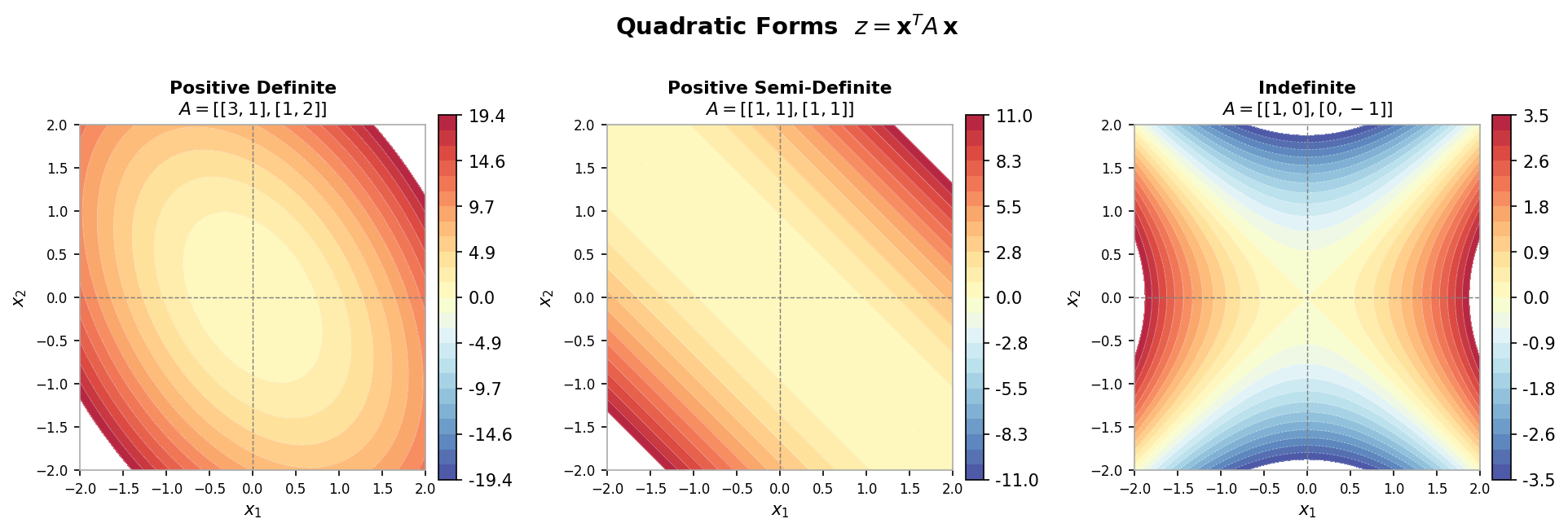

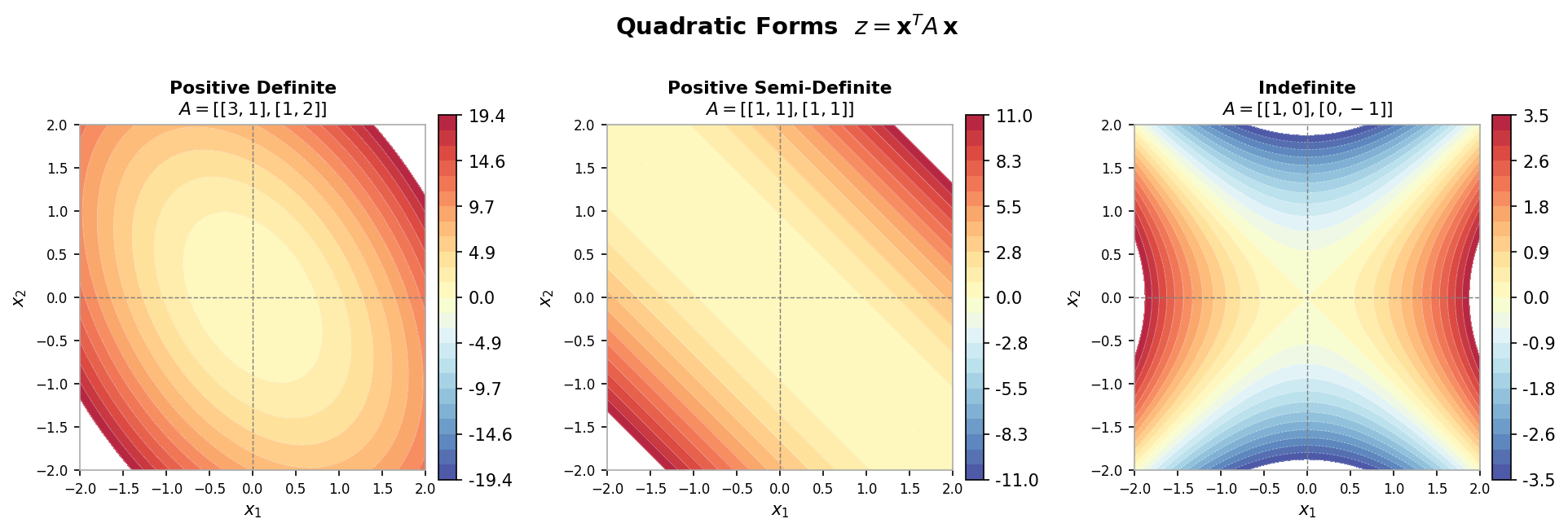

4.2 Positive Definiteness

A matrix \( A \in \mathbb{R}^{n \times n} \) is positive definite (PD) if \( x^T Ax > 0 \) for all \( x \in \mathbb{R}^n \), \( x \neq 0 \). This generalizes the notion of positivity for scalars: \( a \in \mathbb{R} \) is positive if \( xax > 0 \) for all \( x \neq 0 \). The function \( f(x) = x^T Ax \) is quadratic with \( A \) as its coefficient matrix; positive definiteness is equivalent to asking whether \( f \) is convex (a bowl shape with a unique minimum). Positive definiteness implies invertibility and strictly positive eigenvalues.

Contour plots of positive definite, positive semi-definite, and indefinite quadratic forms

Contour plots of positive definite, positive semi-definite, and indefinite quadratic forms

A fundamental result (Theorem 4.3) states that if \( A \in \mathbb{R}^{n \times n} \) is PD and \( X \in \mathbb{R}^{n \times k} \) has rank \( k \leq n \), then \( B = X^T AX \) is also PD. The proof sets \( x = Xz \) for any \( z \neq 0 \): since \( X \) has rank \( k \), its null space contains only the zero vector (by the rank-nullity theorem), so \( x = Xz \neq 0 \) whenever \( z \neq 0 \), and then \( z^T B z = (Xz)^T A (Xz) = x^T Ax > 0 \) since \( A \) is PD.

A principal submatrix is constructed by deleting rows and their corresponding columns from a matrix. Two corollaries follow from Theorem 4.3. First, if \( A \) is PD then all its principal submatrices are also PD (one can design identity-like matrices \( X \) to “pick out” any principal submatrix via \( X^T AX \). In particular, every diagonal entry of a PD matrix is strictly positive. Second, if \( A \) is PD, then the diagonal matrix \( D \) in the factorization \( A = LDM^T \) has strictly positive entries. This follows by taking \( X = L^{-T} \) and applying Corollary 4.1 to the principal submatrix argument.

4.3 Symmetric Positive Definite Matrices

A symmetric positive definite (SPD) matrix \( A \) satisfies both \( A = A^T \) and \( x^T Ax > 0 \) for all \( x \neq 0 \). Theorem 4.4 states that if \( A \) is SPD, there exists a unique lower triangular matrix \( G \) with positive diagonal entries such that \( A = GG^T \). This is the Cholesky factorization and \( G \) is the Cholesky factor.

The existence proof uses the \( LDL^T \) factorization guaranteed by symmetry: since \( A \) is PD, the diagonal entries of \( D \) are all positive. One can therefore define \( D^{1/2} = \text{diag}(\sqrt{d_1}, \ldots, \sqrt{d_n}) \) and set \( G = LD^{1/2} \), which is lower triangular with positive diagonal. Then \( GG^T = LD^{1/2}(LD^{1/2})^T = LD^{1/2}D^{1/2}L^T = LDL^T = A \).

Constructing the Cholesky Factor. The algorithm works recursively. Partition \( A \) as

\[ A = \begin{pmatrix} \alpha & v^T \\ v & B \end{pmatrix}, \]where \( \alpha = a_{11} \in \mathbb{R} \), \( v = a_{2:n,1} \in \mathbb{R}^{n-1} \), and \( B = a_{2:n,2:n} \in \mathbb{R}^{(n-1) \times (n-1)} \). The first column of \( G \) is determined by comparing block forms of \( GG^T \) with \( A \):

\[ g_{11} = \sqrt{\alpha}, \quad G_{21} = \frac{v}{\sqrt{\alpha}}, \quad G_{22}G_{22}^T = B - \frac{vv^T}{\alpha}. \]The algorithm then recursively applies Cholesky factorization to the Schur complement \( B - vv^T/\alpha \), which is itself SPD (one verifies this using Theorem 4.3 with the full-rank matrix \( X = [1, -v^T/\alpha;\, 0, I] \). The recursion eliminates one row and column at a time until the entire lower triangular factor \( G \) is assembled.

Cost of Cholesky Factorization. The in-place algorithm (Algorithm 4.1) exploits symmetry by working only on sub-diagonal entries, iterating over the diagonal entry, the column below it, and the lower-right block update. Focusing on the innermost doubly-nested loop (which contributes one subtraction and one multiplication per iteration), the total flop count is

\[ \sum_{k=1}^{n} \sum_{j=k+1}^{n} \sum_{i=j}^{n} 2 = \frac{n^3}{3} + O(n^2), \]which is exactly half the cost of LU factorization (\( \frac{2n^3}{3} + O(n^2) \) flops). The square roots and column divisions contribute only \( O(n^2) \) and \( O(n) \) terms respectively and are dominated by the lower-right block updates.

Worked Example: Cholesky Factorization

This example walks through computing the Cholesky factorization of a specific SPD matrix step by step, illustrating the recursive structure of the algorithm.

Consider a \( 3 \times 3 \) SPD matrix \( A \). The first step is to identify \( \alpha \), \( v \), and \( B \) in the block decomposition of \( A \). The top-left corner gives \( \alpha = 9 \), the column below it gives \( v = [3, 0]^T \), and the lower-right submatrix gives \( B = \begin{pmatrix} 5 & 2 \\ 2 & 17 \end{pmatrix} \).

Computing the first column of \( G \): the diagonal entry is \( \sqrt{\alpha} = \sqrt{9} = 3 \); the entries below the diagonal are \( v/\sqrt{\alpha} = [3/3, 0/3]^T = [1, 0]^T \). The Schur complement is \( B - vv^T/\alpha = \begin{pmatrix} 5 & 2 \\ 2 & 17 \end{pmatrix} - \frac{1}{9}\begin{pmatrix} 9 & 0 \\ 0 & 0 \end{pmatrix} = \begin{pmatrix} 4 & 2 \\ 2 & 17 \end{pmatrix} \).

After the first stage, the factorization has its first column of \( G \) as \( [3, 1, 0]^T \), and the algorithm now recurses on the \( 2 \times 2 \) Schur complement.

For the second recursion, the sub-matrix is \( \begin{pmatrix} 4 & 2 \\ 2 & 17 \end{pmatrix} \). Identifying the block components: \( \alpha = 4 \), \( v = [2] \), \( B = [17] \). The diagonal entry of the second column of \( G \) is \( \sqrt{4} = 2 \); the entry below is \( 2/2 = 1 \). The Schur complement is \( 17 - (1/4) \cdot 4 = 17 - 4 \cdot (4/4) = 17 - 4 = 16 \). Wait — more carefully: \( B - vv^T/\alpha = 17 - 2^2/4 = 17 - 1 = 16 \). The second column of \( G \) contributes \( [2, 1]^T \) in the appropriate positions.

The third and final recursion operates on the \( 1 \times 1 \) Schur complement \( [16] \). Its Cholesky factor is simply \( \sqrt{16} = 4 \), which becomes the third diagonal entry of \( G \).

Assembling all columns, the Cholesky factor is

\[ G = \begin{pmatrix} 3 & 0 & 0 \\ 1 & 2 & 0 \\ 0 & 1 & 4 \end{pmatrix}. \]One can verify that \( GG^T = A \).

Worked Example: Cost of Cholesky Factorization

This example derives the exact leading-order flop count for Cholesky factorization, confirming that it is half that of LU factorization.

The algorithm has three nested loops: the outermost over \( k = 1 \) to \( n \), a middle loop over \( j = k+1 \) to \( n \), and an innermost loop over \( i = j \) to \( n \). Inside the innermost loop there are two flops (one subtraction, one multiplication). The division outside the innermost loop contributes only an \( O(n^2) \) term, and the square root outside the middle loop contributes only an \( O(n) \) term.

Focusing on the dominant innermost loop, the total cost is

\[ 2 \sum_{k=1}^{n} \sum_{j=k+1}^{n} \sum_{i=j}^{n} 1. \]Evaluating the innermost sum using the formula \( \sum_{i=j}^{n} 1 = n - j + 1 \), then splitting the middle sum into constant and linear parts in \( j \), one applies the identities for summing 1 and for summing the index itself (using a dummy variable shift since the index does not start at 1). After carrying through the algebra, the outer sum over \( k \) is evaluated using the known identities for \( \sum k \) and \( \sum k^2 \). The final result is

\[ \frac{n^3}{3} - \frac{n}{3} = \frac{n^3}{3} + O(n), \]confirming that the leading-order cost is \( \frac{n^3}{3} \) flops — precisely half the \( \frac{2n^3}{3} \) flops required by LU factorization. This savings arises directly from exploiting the symmetry of an SPD matrix to avoid redundant computation on the upper triangle.

Special Linear Systems (Part 2)

This lecture continues the theme of exploiting matrix structure to solve \( Ax = b \) more efficiently. The first three matrix types — symmetric, positive definite, and symmetric positive definite — were treated in Lecture 4. This lecture covers the remaining three common types: banded matrices, tridiagonal matrices (a special case of banded), and general sparse matrices.

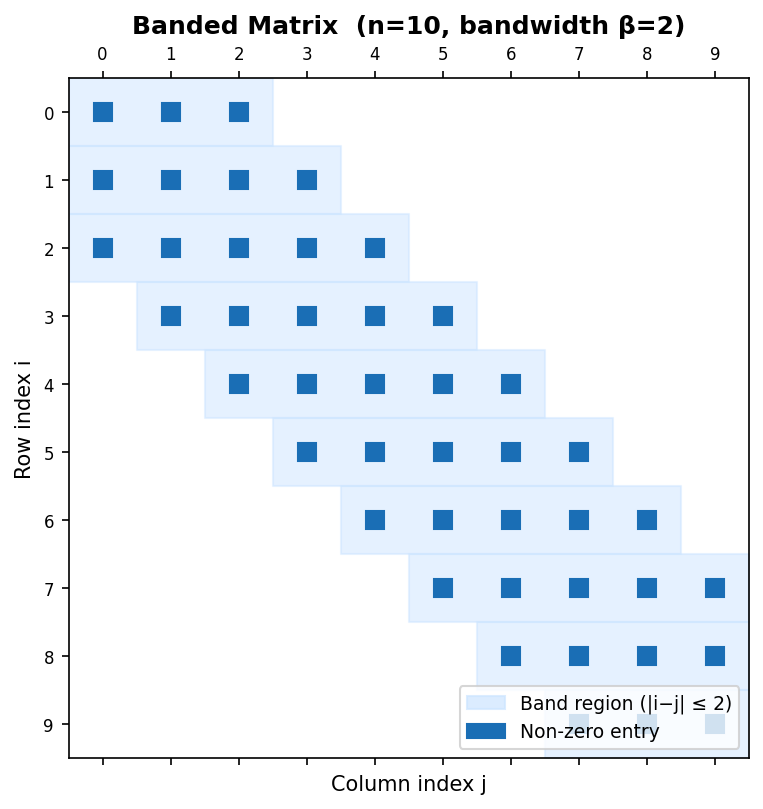

5.1 Banded Matrices

Banded matrices have non-zero entries only in “bands” adjacent to the main diagonal; all entries sufficiently far from the diagonal are zero. Formally, the matrix \( A = [a_{ij}] \) has upper bandwidth \( q \) if \( a_{ij} = 0 \) for \( j > i + q \), and lower bandwidth \( p \) if \( a_{ij} = 0 \) for \( i > j + p \). Common special cases include diagonal matrices (\( p = q = 0 \), upper triangular (\( p = 0 \), \( q = n-1 \), lower triangular (\( p = m-1 \), \( q = 0 \), tridiagonal (\( p = q = 1 \), upper and lower bidiagonal (\( (p,q) = (0,1) \) and \( (1,0) \), and upper and lower Hessenberg (\( (p,q) = (1, n-1) \) and \( (m-1, 1) \).

An important structural property of banded matrices is preserved under factorization: if \( A = LU \), \( A \) has upper bandwidth \( q \) and lower bandwidth \( p \), then \( U \) has upper bandwidth \( q \) and \( L \) has lower bandwidth \( p \). The same bandwidth preservation holds for the \( LDL^T \) and Cholesky \( GG^T \) factorizations.

Cost of Banded LU Factorization. The banded LU factorization algorithm (Algorithm 5.1) restricts its inner loops to the non-zero bands: for each pivot column \( k \), the row loop runs only from \( k+1 \) to \( \min(k+p, n) \) and the column loop only from \( k+1 \) to \( \min(k+q, n) \). When the bandwidths are small relative to \( n \) (i.e., \( n \gg p \) and \( n \gg q \), the cost is approximately \( 2npq \) flops, dramatically less than the \( \frac{2n^3}{3} \) flops of the naive full implementation that would touch the zero entries. For example, with \( n = 300 \), \( p = 2 \), \( q = 2 \): naive LU requires approximately 18,000,000 flops, while banded LU requires only approximately 2,400 flops. For tridiagonal matrices (\( p = q = 1 \), the flop count reduces to \( O(n) \).

Tridiagonal \( LDL^T \) Factorization. When a symmetric tridiagonal matrix \( A \) is factored as \( A = LDL^T \), the factors inherit the tridiagonal structure: \( L \) is unit lower bidiagonal with sub-diagonal entries \( e_1, \ldots, e_{n-1} \), and \( D = \text{diag}(d_1, \ldots, d_n) \). Expanding the product \( (LDL^T)_{kk} = e_{k-1}^2 d_{k-1} + d_k \) and \( (LDL^T)_{k,k-1} = e_{k-1} d_{k-1} \) yields explicit recurrence relations:

\[ d_1 = a_{11}, \quad \text{for } k = 2, \ldots, n: \quad e_{k-1} = \frac{a_{k,k-1}}{d_{k-1}}, \quad d_k = a_{kk} - e_{k-1} a_{k,k-1}. \]This algorithm requires only \( O(n) \) flops and \( O(n) \) storage, making it optimal for tridiagonal systems. Once \( L \) and \( D \) are available, solving \( LDL^T x = b \) via forward and backward substitution also costs only \( O(n) \) flops.

5.2 General Sparse Matrices

Patterns other than simple bands also arise frequently. General sparse matrices contain mostly zero entries but with non-zeros possibly scattered across the matrix beyond just diagonal bands. For many practical problems, the number of non-zeros per row is bounded by a small constant, so the total number of non-zeros is \( O(n) \); one naturally wants to store and operate on only the non-zero entries.

Various storage formats (data structures) exist for sparse matrices. The simplest approach uses a vector of \( (i, j, \text{value}) \) triplets, one per non-zero, but this is inefficient. A more common structure is Compressed Row Storage (CRS), also known as Compressed Sparse Row (CSR): it uses an array of non-zero values (val) of length equal to the number of non-zeros (nnz), an array of column indices (colInd) of length nnz, and an array of row-start pointers (rowPtr) of length equal to the number of rows.

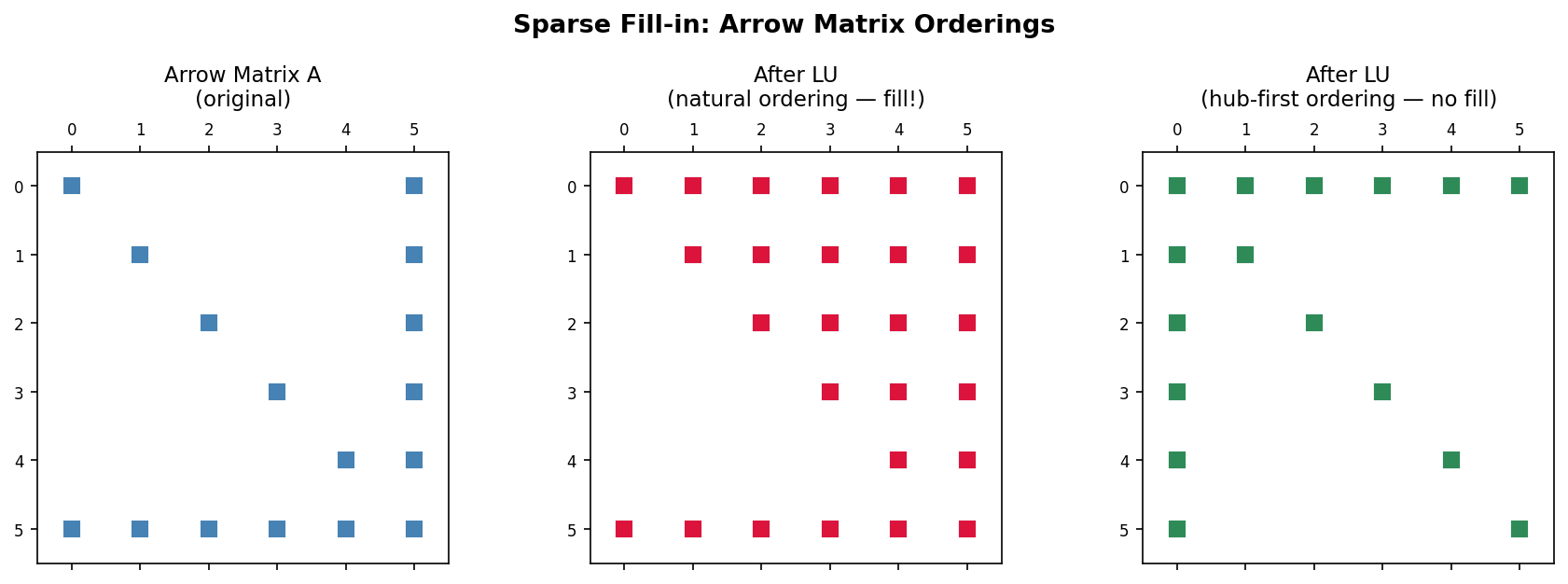

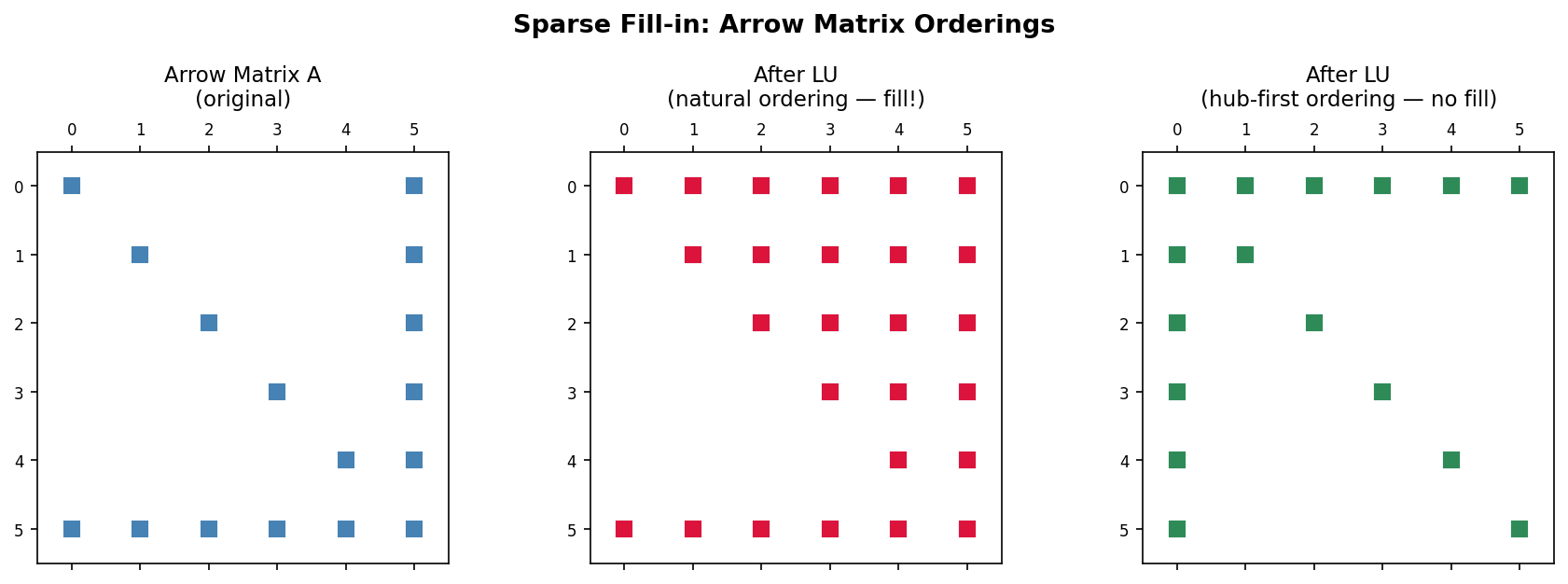

Fill-in During Factorization. For LU factorization of a sparse matrix, the key computational step is the row subtraction \( a_{ij} \leftarrow a_{ij} - a_{ik} a_{kj}/a_{kk} \). Since most entries are zero, algorithms skip operating on them. However, even if \( A \) is sparse, its factorization may not be, because row subtractions can turn zero entries into non-zeros. This phenomenon is called fill-in.

A classic example is the arrow matrix: a matrix with a dense first row, a dense first column, and diagonal entries elsewhere. The LU factors of such a matrix are fully dense in the corresponding triangles, meaning all potential fill-in positions become non-zero. The key intuition is that one must reorder the system of equations to avoid or minimize fill-in. A matrix reordering permutes the rows and columns to yield a matrix whose LU factorization suffers no (or minimal) fill-in. For the arrow matrix, if the dense row and column are moved to the last position rather than the first, the LU factors are again sparse. Matrix reorderings are discussed in detail starting in Lecture 8.

Finite Differences for Modelling Heat Conduction

This lecture covers an important application of solving linear systems: the numerical solution of partial differential equations (PDEs). PDEs involve multivariable functions and their partial derivatives and describe numerous physical phenomena including electromagnetism, fluid flow, sound propagation, financial problems, solid mechanics, and quantum mechanics. Finite difference, finite volume, and finite element methods discretize PDEs and produce sparse linear systems. This lecture introduces finite differences for the Poisson equation, which models heat conduction.

6.1 Heat Conduction

The heat distribution in a uniform material at equilibrium is modelled by the Poisson equation:

\[ -\left(\frac{\partial^2 T}{\partial x^2} + \frac{\partial^2 T}{\partial y^2} + \frac{\partial^2 T}{\partial z^2}\right) = f, \quad \text{equivalently} \quad -\Delta T = f, \]where \( x, y, z \) are spatial coordinates, \( T(x, y, z) \) is the temperature field, \( f(x, y, z) \) is a given heat source function, and \( \Delta = \frac{\partial^2}{\partial x^2} + \frac{\partial^2}{\partial y^2} + \frac{\partial^2}{\partial z^2} \) is the Laplacian operator.

1D Example. Consider the one-dimensional problem

\[ -\frac{\partial^2 T}{\partial x^2} = f \quad \text{on } (0, 1), \qquad T(0) = 0, \quad T(1) = 0. \]The second and third conditions are the boundary conditions, prescribing zero temperature at both ends. To find an approximate numerical solution, the approach is to first discretize the domain into finite subintervals and then approximate the spatial derivative with finite differences.

Discretizing the 1D domain means defining \( n+2 \) evenly spaced gridpoints \( 0 = x_0 < x_1 < x_2 < \cdots < x_n < x_{n+1} = 1 \). The grid spacing is \( h = x_i - x_{i-1} = \frac{1}{n+1} \). The numerical approximation of the exact solution at gridpoint \( x_i \) is denoted \( T_i \). Since the boundary conditions fix \( T_0 = 0 \) and \( T_{n+1} = 0 \), only the \( n \) interior values \( T_1, T_2, \ldots, T_n \) are unknowns. These are the active gridpoints.

The centered finite difference approximation of the second derivative is

\[ \frac{\partial^2 T}{\partial x^2}(x_i) \approx \frac{T_{i+1} - 2T_i + T_{i-1}}{h^2}. \]Applying this to the PDE at each active gridpoint gives one equation per interior gridpoint:

\[ -\frac{T_{i+1} - 2T_i + T_{i-1}}{h^2} = f_i, \quad i = 1, \ldots, n. \]Each equation relates \( T_i \) to its two neighbors \( T_{i-1} \) and \( T_{i+1} \). Assembling all \( n \) equations into matrix form yields

\[ \frac{1}{h^2} \begin{pmatrix} 2 & -1 & & & \\ -1 & 2 & -1 & & \\ & \ddots & \ddots & \ddots & \\ & & -1 & 2 & -1 \\ & & & -1 & 2 \end{pmatrix} \begin{pmatrix} T_1 \\ T_2 \\ \vdots \\ T_{n-1} \\ T_n \end{pmatrix} = \begin{pmatrix} f_1 \\ f_2 \\ \vdots \\ f_{n-1} \\ f_n \end{pmatrix}. \]The resulting matrix is symmetric and tridiagonal (a banded matrix with \( p = q = 1 \), and the system can be solved using the banded LU or Cholesky algorithms in \( O(n) \) flops.

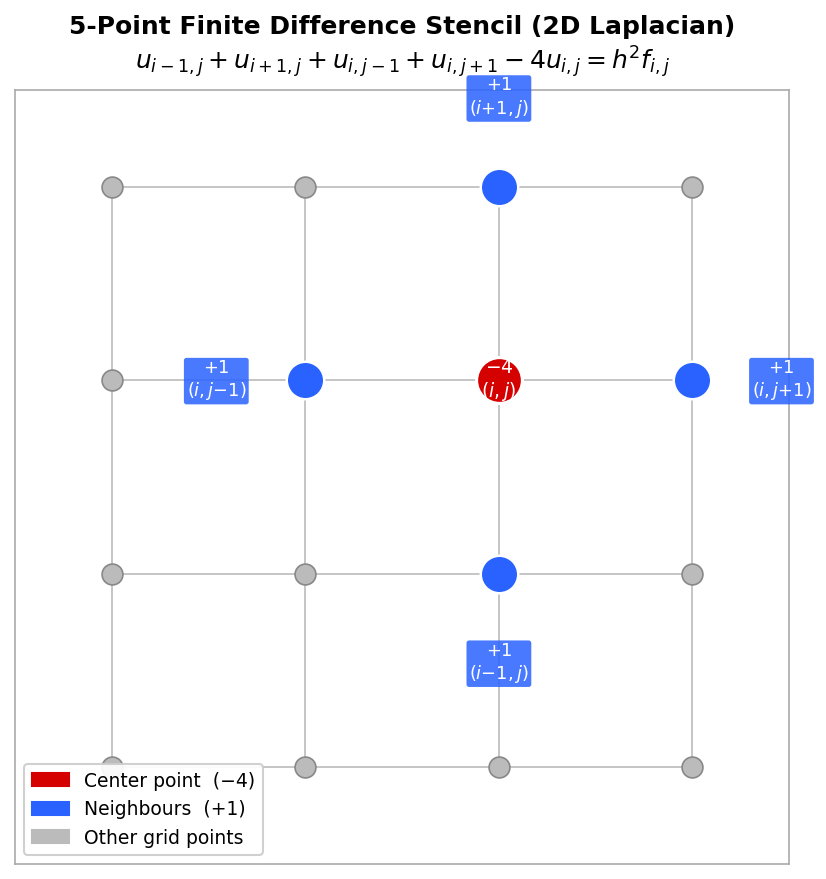

Heat Conduction in a 2D Plate. For a two-dimensional rectangular plate with zero temperature on all boundaries, the 2D Poisson equation at interior gridpoint \( (x_i, y_j) \) is discretized by approximating the second derivative in each axis separately and summing:

\[ \frac{4T_{i,j} - T_{i+1,j} - T_{i-1,j} - T_{i,j+1} - T_{i,j-1}}{h^2} = f_{i,j}. \]This is the discrete 2D Poisson equation. The finite difference stencil is a convenient visual representation centered at each gridpoint showing the weights of the five terms: \( 4/h^2 \) at the center, \( -1/h^2 \) at each of the four neighbors.

Five-point finite difference stencil with coefficients labelled

Five-point finite difference stencil with coefficients labelled

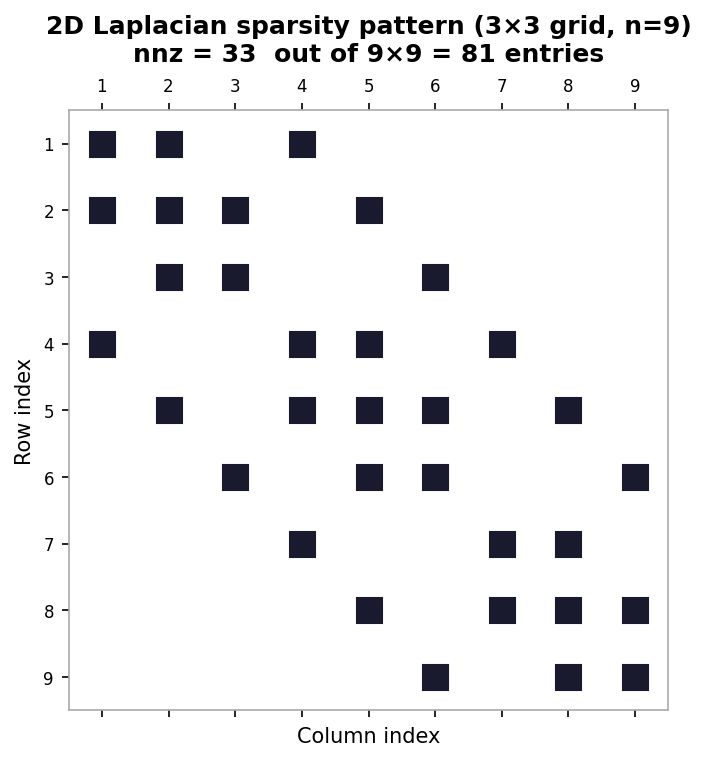

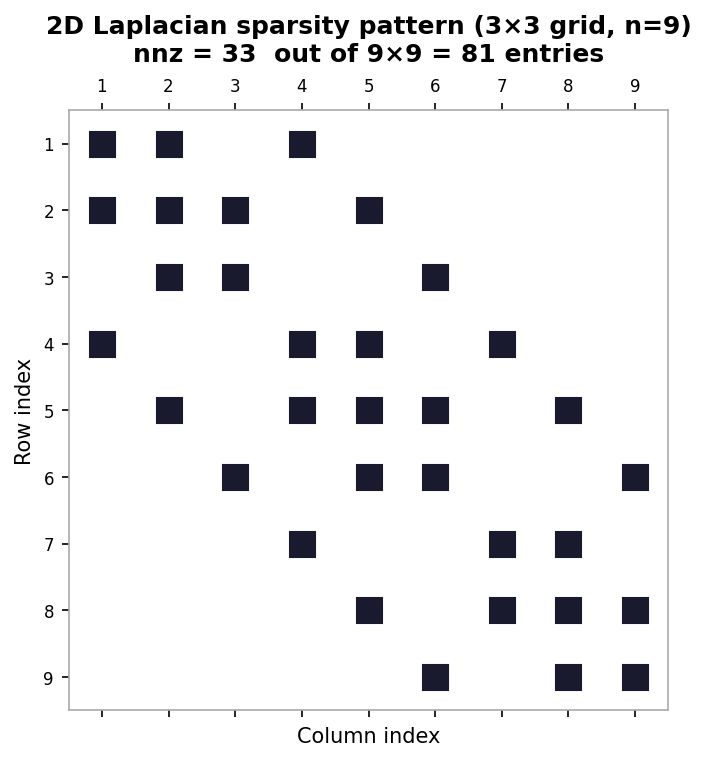

To form a matrix equation, the 2D indices \( (i,j) \) must be flattened to a 1D index. Natural rowwise ordering numbers gridpoints along the \( x \)-axis first and then along the \( y \)-axis: the mapping is \( k = i + (j-1) \times m \) for an \( m \times m \) grid of interior points. With this ordering and an \( m^2 \times m^2 \) system of equations, the resulting matrix has five bands:

- 1 main diagonal band (with entries 4),

- 2 adjacent off-diagonal bands (with entries \( -1 \),

- 2 further off-diagonal bands separated by \( m \) positions (with entries \( -1 \).

Sparsity pattern of the 2D Laplacian matrix for a 3×3 interior grid

Sparsity pattern of the 2D Laplacian matrix for a 3×3 interior grid

The matrix is symmetric, and its graph recovers the underlying 2D grid structure. Other types of PDE problems, discretizations, and geometries — such as triangular meshes for finite volume discretization of flow around an airfoil — give rise to different matrix structures and properties. This lecture focused on the equilibrium (steady-state) heat equation; the time-dependent heat equation gives rise to similar but time-evolving linear systems.

Worked Example: 1D Poisson Matrix Equation

This example derives the matrix form of the discrete 1D Poisson equation for a specific case with six gridpoints (\( x_0 \) to \( x_5 \), so that \( n+1 = 5 \) and the grid spacing is \( h = 1/5 \). The boundaries fix \( T_0 = 0 \) and \( T_5 = 0 \), leaving four active gridpoints \( T_1, T_2, T_3, T_4 \).

To build the matrix equation, one writes out the discrete equation for each active gridpoint. For \( i = 1 \), the discrete equation gives \( (-T_2 + 2T_1 - T_0)/h^2 = f_1 \); since \( T_0 = 0 \) the boundary term drops out. For \( i = 2 \), one gets \( (-T_3 + 2T_2 - T_1)/h^2 = f_2 \). For \( i = 3 \), one gets \( (-T_4 + 2T_3 - T_2)/h^2 = f_3 \). For \( i = 4 \) (the last active point), one gets \( (-T_5 + 2T_4 - T_3)/h^2 = f_4 \); since \( T_5 = 0 \) the boundary term drops out on this end as well.

Writing these four equations in matrix form, with \( 1/h^2 \) factored outside, gives a coefficient matrix with 2 on the main diagonal, \( -1 \) on the two adjacent off-diagonals, and zeros elsewhere. Examining the first row one sees the pattern \( [2, -1, 0, 0] \), the second row \( [-1, 2, -1, 0] \), the third \( [0, -1, 2, -1] \), and the fourth \( [0, 0, -1, 2] \). This is the familiar symmetric tridiagonal structure. The right-hand side vector is \( [f_1, f_2, f_3, f_4]^T \), the source values at the active gridpoints.

Worked Example: 2D Poisson Matrix Equation

This example illustrates building the 2D Poisson matrix equation and the index-flattening procedure for the specific case \( m = 4 \) (a \( 4 \times 4 \) grid of interior points, so \( m^2 = 16 \) unknowns), with zero boundary temperatures. The natural rowwise ordering flattens 2D indices \( (i, j) \) to 1D indices \( k \) using \( k = i + (j-1) \times m \).

As a first example, consider the equation for interior point \( T_{2,2} \) (\( i=2 \), \( j=2 \). The discrete Poisson equation gives \( (1/h^2)(4T_{2,2} - T_{3,2} - T_{1,2} - T_{2,3} - T_{2,1}) = f_{2,2} \). Flattening the indices: the center \( (2,2) \) maps to \( k = 2 + (2-1) \times 4 = 6 \); the neighbor \( (3,2) \) maps to \( k = 3 + 4 = 7 \); \( (1,2) \) maps to \( k = 1 + 4 = 5 \); \( (2,3) \) maps to \( k = 2 + 8 = 10 \); and \( (2,1) \) maps to \( k = 2 + 0 = 2 \). In the 1D-indexed system this corresponds to row 6: the entry \( (6,6) = 4 \) and entries \( (6,7) \), \( (6,5) \), \( (6,10) \), \( (6,2) \) are all \( -1 \).

As a second example, consider the equation for \( T_{2,4} \) (\( i=2 \), \( j=4 \). The discrete Poisson equation gives \( (1/h^2)(4T_{2,4} - T_{3,4} - T_{1,4} - T_{2,5} - T_{2,3}) = f_{2,4} \). Since \( m = 4 \), the point \( (2,5) \) lies on the boundary, so \( T_{2,5} = 0 \) and that term disappears. Flattening the remaining indices: center \( (2,4) \) maps to \( k = 2 + 3 \times 4 = 14 \); \( (3,4) \) maps to \( 3 + 12 = 15 \); \( (1,4) \) maps to \( 1 + 12 = 13 \); and \( (2,3) \) maps to \( 2 + 2 \times 4 = 10 \). Row 14 of the matrix therefore has a 4 on the diagonal and \( -1 \) entries in columns 15, 13, and 10 — only three off-diagonal entries instead of four, reflecting the missing boundary neighbor.

The general matrix from the 2D Poisson equation with natural ordering has blocks of tridiagonal structure along the main diagonal (from the \( x \)-direction coupling) and blocks of \( -1 \)s on the off-block-diagonals (from the \( y \)-direction coupling), resulting in a banded symmetric matrix with bandwidth \( m \).

Graph Structure of Matrices

This lecture considers the graph representation of (symmetric) matrices and examines how fill-in during factorization is related to the graph structure. Since fill-in increases both storage and computational costs, understanding and minimizing it is central to efficient sparse linear algebra. The graph perspective provides an intuitive framework for analyzing and designing matrix reordering algorithms.

7.1 Graph Structure of Matrices

Given a matrix \( A \), one can construct an associated directed graph \( G(A) \). The graph has one node \( i \) for each row \( i \) of \( A \), and a directed edge \( (i \to j) \) exists whenever the matrix entry \( a_{ij} \neq 0 \) (for \( i \neq j \). For symmetric matrices, edges are undirected: \( i \leftrightarrow j \). Formally, for a square matrix \( A \in \mathbb{R}^{n \times n} \), the associated graph \( G = (V, E) \) has nodes \( i \in V \) for all \( i \in [1, n] \) and edges \( (i, j) \in E \) for all \( i \neq j \) with \( a_{ij} \neq 0 \). Self-loops (diagonal entries) are excluded from the graph even when \( a_{ii} \neq 0 \).

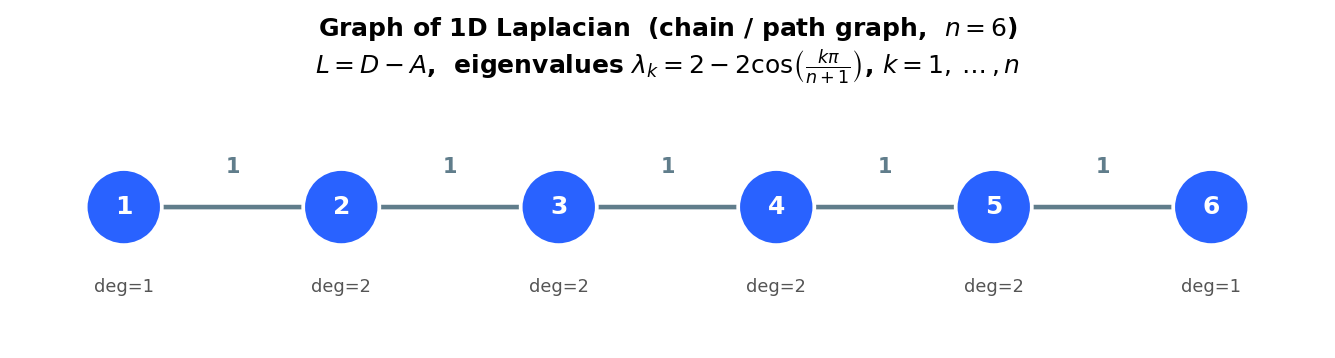

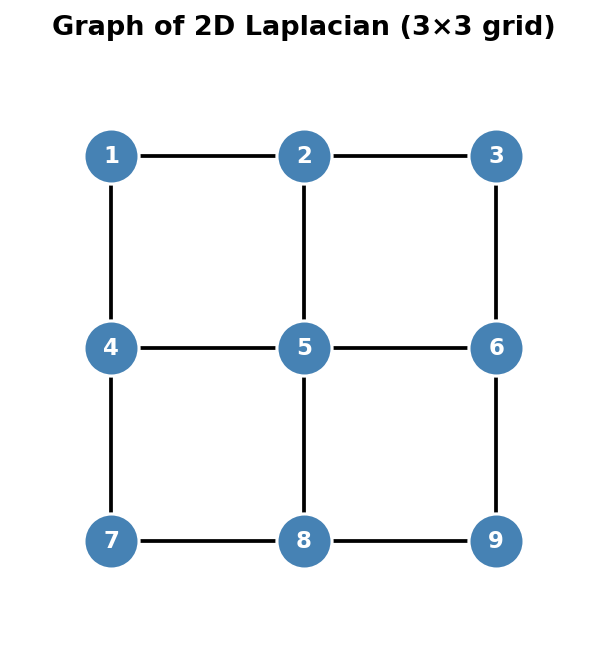

The graph structure frequently has a physical or geometric interpretation. The 1D Laplacian matrix, which is tridiagonal, corresponds to a graph in which nodes are connected consecutively in a chain. The 2D Laplacian matrix, arising from the discrete Poisson equation, gives a graph that directly recovers the underlying 2D grid: each interior node connects to its four neighbors (left, right, up, down). This geometric interpretation is extremely helpful for understanding why different orderings of the unknowns produce different sparsity patterns.

Graph of the 1D Laplacian: a chain of n nodes

Graph of the 1D Laplacian: a chain of n nodes

Graph of the 2D Laplacian: a 3×3 grid of 9 nodes

Graph of the 2D Laplacian: a 3×3 grid of 9 nodes

7.2 Fill-in During Factorization

Recall that factorization can destroy the nice sparsity of a matrix. The graph perspective reveals precisely when fill-in occurs. Consider the LU factorization of a matrix \( A \) and focus on the first elimination step: one adds multiples of row 1 to rows \( 2, 3, \ldots, n \) to zero out the entries in the first column. While introducing those zeros, new non-zeros may appear in positions that were previously zero — this is fill-in.

From the graph perspective, eliminating node \( i \) transforms the graph according to two rules: (1) node \( i \) and all its edges are deleted, and (2) new edges \( j \leftrightarrow k \) are added for every pair of nodes \( j, k \) that were both connected to node \( i \) in the original graph but were not already connected to each other. These new edges correspond exactly to the fill-in entries. In other words, eliminating node \( i \) “cliques up” its neighborhood: all neighbors of \( i \) become connected to each other.

The same fill-in pattern arises for Cholesky factorization. At the first step of Cholesky, one computes the Schur complement \( B - vv^T/\alpha \). If a pair of rows in \( v \) are both non-zero (i.e., both rows connect to node 1 in the graph), then the corresponding entry in \( B - vv^T/\alpha \) becomes non-zero — exactly the same fill-in as in LU factorization, because both methods delete the same node and connect its neighbors.

A dramatic illustration is the arrow matrix, which has a dense first row and column plus diagonal entries. Every off-diagonal node is connected to node 1 but not to any other off-diagonal node. When node 1 is eliminated, all other nodes become connected to each other (since they all share node 1 as a neighbor), producing a completely dense lower-right block. By contrast, if the arrow is reversed (the dense row and column are last rather than first), then the hub node is eliminated last, and no fill-in occurs at all because none of the other nodes need to be connected before the hub is removed.

Fill-in comparison: natural ordering (dense fill) vs. hub-first ordering (no fill) for an arrow matrix

Fill-in comparison: natural ordering (dense fill) vs. hub-first ordering (no fill) for an arrow matrix

Matrix Reordering

Lectures 5 and 7 showed that general sparse matrices may have dense LU factors due to fill-in. The canonical example is the arrow matrix, whose LU factorization fills in the entire lower-right triangular block. This lecture introduces matrix reorderings — strategies for renumbering the nodes of the graph in order to produce LU factors with little or no fill-in.

8.1 Matrix Reordering — Key Idea

The graph structure of a matrix expresses the underlying relationships among variables. Crucially, the ordering (numbering) of the nodes and variables impacts the layout of the matrix but does not change the underlying graph or the solution. Different matrices with the same graph can suffer vastly different amounts of fill-in during factorization, depending solely on how the nodes are numbered. The goal of matrix reordering is therefore to renumber the graph nodes to produce a matrix whose factorization minimizes fill-in.

Mathematically, reordering is expressed using permutation matrices: a permutation matrix is the identity matrix \( I \) with some rows (or columns) swapped. Permuting the rows of \( A \) is equivalent to multiplying on the left by a permutation matrix \( P \), giving \( PA \). Permuting the columns is equivalent to multiplying on the right by a permutation matrix \( Q \), giving \( AQ \). Both can be applied simultaneously to give \( PAQ \). In practice, permutation matrices are never stored or multiplied explicitly; implementations use index arrays instead.

If one permutes \( A \) to \( \tilde{A} = PAQ \), then to solve the original system \( Ax = b \) one must reorder entries of both \( x \) and \( b \) to match the changes to \( A \). The permuted system is

\[ PAQ(Q^T x) = Pb, \quad \text{i.e.,} \quad \tilde{A}\tilde{x} = \tilde{b}, \]where \( \tilde{x} = Q^T x \) and \( \tilde{b} = Pb \). After solving the permuted system for \( \tilde{x} \), the original solution is recovered by \( x = Q\tilde{x} \) (unpermuting).

Symmetric permutation is particularly important. A symmetric permutation replaces \( A \) with \( PAP^T \), permuting rows and columns in the same way (\( Q = P^T \). This is equivalent in graph terms to simply relabeling the nodes while leaving the edges unchanged, and it naturally preserves symmetry. To construct \( P \) from a reordering (a list of before-and-after node labels), one applies the desired row swaps to the identity matrix \( I \). For example, a reordering \( 1 \to 2,\; 2 \to 3,\; 3 \to 1,\; 4 \to 4 \) sends row 1 to row 2, row 2 to row 3, and row 3 to row 1, producing the permutation matrix with those row swaps applied to \( I \).

8.2 Example with Natural Ordering

As a concrete illustration, consider the 2D Laplacian matrix on a non-square domain with \( m_x \gg m_y \) (many more gridpoints in the \( x \)-direction than in the \( y \)-direction). With natural rowwise ordering (numbering along \( x \) first, then \( y \), the entries in row \( i \) of the matrix appear in columns \( i \) (diagonal), \( i-1 \) and \( i+1 \) (inner bands), and \( i-m_x \) and \( i+m_x \) (outer bands). Hence the bandwidth is \( m_x \).

By contrast, columnwise ordering (numbering along \( y \) first, then \( x \) produces outer bands of width \( m_y \), so the bandwidth is \( m_y \). Since \( m_x \gg m_y \), the columnwise ordering gives a much narrower bandwidth and therefore much less fill-in.

For banded matrix factorization, the bands of \( L \) and \( U \) have the same widths as the corresponding bands of \( A \). Fill-in can only occur in the region between the outermost bands. Recalling that banded Gaussian elimination costs approximately \( O(npq) \) flops for bandwidths \( p \) and \( q \), the total cost for bandwidth \( m \) is \( O(m^2 n) \). Rowwise ordering gives \( O(m_x^2 n) \) flops, while columnwise ordering gives only \( O(m_y^2 n) \) flops — a significant saving when \( m_x \gg m_y \).

Banded matrix (n=10, bandwidth β=2) with shaded band region

Banded matrix (n=10, bandwidth β=2) with shaded band region

For more general sparsity patterns, finding the truly optimal ordering is an NP-complete problem. There exist many practical ordering algorithms based on good heuristics, including envelope/level set methods, (Reverse) Cuthill-McKee, Markowitz ordering, and minimum degree ordering. These algorithms are examined in subsequent lectures.

Worked Example: Graph Laplacian

This example illustrates computing the graph Laplacian for a weighted undirected graph and connecting it to the definitions of degree, weighted adjacency, indicator vectors, and set volumes.

The non-symmetric graph Laplacian is defined as \( L = D - W \), where \( D \) is the degree matrix and \( W \) is the weighted adjacency matrix. The degree matrix \( D \) is diagonal with the degree of each node on the diagonal; for a weighted graph the degree of node \( i \) is the sum of the weights of all edges incident to it.

For a specific six-node graph with nodes \( v_1, \ldots, v_6 \), computing the degrees: \( v_1 \) connects to \( v_2 \), \( v_3 \), and \( v_4 \) with weights 1, 1, and 2, giving degree \( 1 + 1 + 2 = 4 \). Node \( v_2 \) connects to \( v_1 \) and \( v_4 \) with weights 1 and 3, giving degree \( 1 + 3 = 4 \). Node \( v_3 \) connects to \( v_1 \), \( v_4 \), and \( v_6 \) with weights 1, 4, and 2, giving degree \( 1 + 4 + 2 = 7 \). Node \( v_4 \) connects to \( v_1 \), \( v_2 \), \( v_3 \), and \( v_5 \) with weights 2, 3, 4, and 2, giving degree \( 2 + 3 + 4 + 2 = 11 \). Node \( v_5 \) connects only to \( v_4 \) with weight 2, giving degree 2. Node \( v_6 \) connects only to \( v_3 \) with weight 2, giving degree 2.

The weighted adjacency matrix \( W \) has one row per vertex, with the edge weight \( w_{ij} \) in position \( (i,j) \) when nodes \( i \) and \( j \) share an edge. Because the graph is undirected, \( W \) is symmetric. The row sum for each node equals its degree, which can be seen directly from the structure of \( W \). The Laplacian \( L = D - W \) then has entries

\[ L = \begin{pmatrix} 4 & -1 & -1 & -2 & 0 & 0 \\ -1 & 4 & 0 & -3 & 0 & 0 \\ -1 & 0 & 7 & -4 & 0 & -2 \\ -2 & -3 & -4 & 11 & -2 & 0 \\ 0 & 0 & 0 & -2 & 2 & 0 \\ 0 & 0 & -2 & 0 & 0 & 2 \end{pmatrix}. \]This graph Laplacian shares a key structural property with the finite difference discrete Laplacian: the diagonal entries are all positive, the off-diagonal entries are all non-positive, and each row sums to zero. For example, the first row: \( 4 - 1 - 1 - 2 = 0 \); in the finite difference stencil the analogous property was that the center coefficient 4 plus the four \( -1 \) neighbors sum to zero.

Finally, one can illustrate the definitions of indicator vectors, set volumes, and cut weights. Partition the six nodes into two subsets: \( A = \{v_1, v_2, v_6\} \) and \( B = \{v_3, v_4, v_5\} \). The indicator vector \( \mathbf{1}_A = [1, 1, 0, 0, 0, 1]^T \) has a 1 in position \( i \) if \( v_i \in A \) and 0 otherwise; similarly \( \mathbf{1}_B = [0, 0, 1, 1, 1, 0]^T \).

The cut weight \( W(A, B) \) is the sum of edge weights between the two sets: \( W(A,B) = \sum_{i \in A, j \in B} w_{ij} \). Enumerating all pairs: \( w_{13} + w_{14} + w_{15} + w_{23} + w_{24} + w_{25} + w_{63} + w_{64} + w_{65} \). Many of these are zero (no edge between the corresponding nodes): \( w_{15} = 0 \) (no edge between \( v_1 \) and \( v_5 \), \( w_{23} = 0 \), \( w_{25} = 0 \), \( w_{64} = 0 \), \( w_{65} = 0 \). The non-zero contributions are \( w_{13} = 1,\; w_{14} = 2,\; w_{24} = 3,\; w_{63} = 2 \), giving \( W(A,B) = 1 + 2 + 0 + 0 + 3 + 0 + 2 + 0 + 0 = 8 \).

The size of a set is just the number of vertices: \( |A| = 3 \) and \( |B| = 3 \). The volume of a set is the sum of degrees of its vertices: \( \text{vol}(A) = d_1 + d_2 + d_6 = 4 + 4 + 2 = 10 \) and \( \text{vol}(B) = d_3 + d_4 + d_5 = 7 + 11 + 2 = 20 \). These quantities — cut weight, size, and volume — are fundamental to spectral clustering and graph partitioning algorithms studied later in the course.

Envelope Reordering

Recall that finding the optimal reordering for a sparse matrix is NP-complete. In practice, reordering algorithms rely on good heuristics rather than an exhaustive search. This course covers four broad families of reordering methods: envelope and level-set methods, (Reverse) Cuthill-McKee, Markowitz, and Minimum Degree. The first two families are the subject of this lecture; the remaining two are treated in Lecture 10.

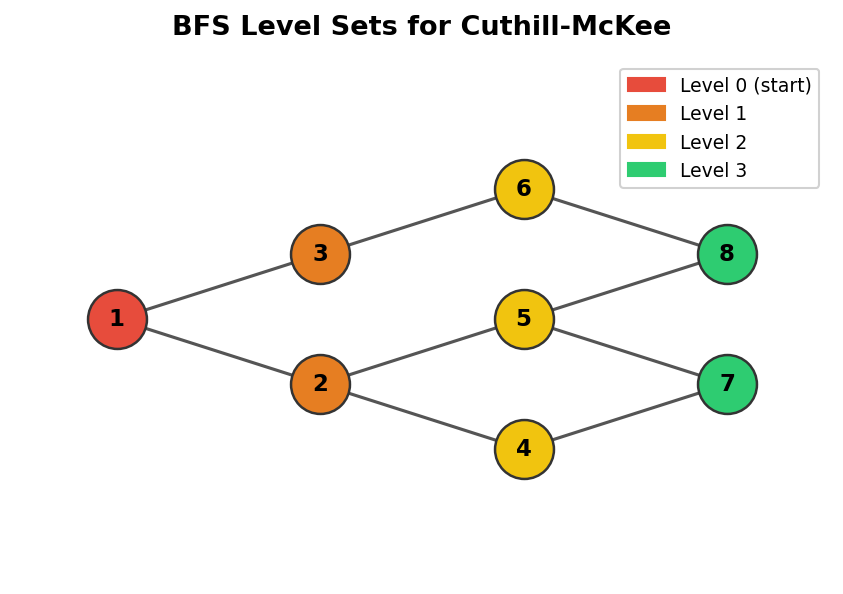

A central motivating observation is that, in practice, bandwidth may vary substantially between individual rows of a matrix. The envelope of a matrix is the smallest region around the diagonal where fill-in may occur. Because fill is confined to the envelope, a smaller envelope means less work during Gaussian elimination. The strategy is therefore to keep the envelope close to the diagonal, which translates into a graph-theoretic requirement: neighbouring nodes in the sparsity graph should receive numbers that are as close together as possible.

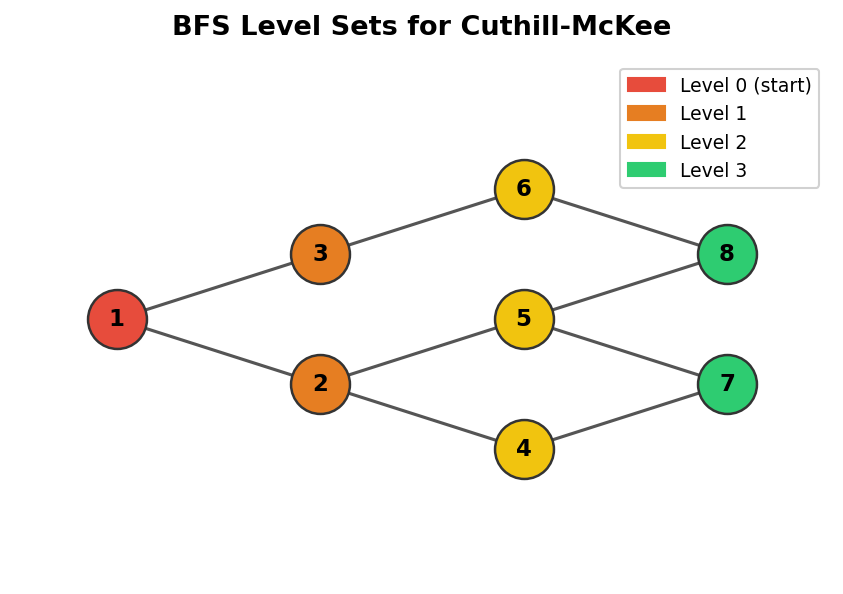

BFS level sets on a graph, used as the basis for the Cuthill-McKee algorithm

BFS level sets on a graph, used as the basis for the Cuthill-McKee algorithm

9.1 Level Sets

We assume throughout that the sparsity pattern of the matrix is symmetric, so the underlying graph representation is undirected. Envelope methods are grounded in the notion of graph level sets.

Graph level sets \(S_i\) are groups of nodes that lie at the same graph distance from a chosen starting node. Formally, \(S_1\) consists of the single starting node; \(S_2\) contains all graph neighbours of nodes in \(S_1\); and in general \(S_i\) is the set of all graph neighbours of nodes in \(S_{i-1}\) that have not appeared in any earlier level set. Envelope methods then number nodes by processing each level set in turn: first all nodes in \(S_1\), then all in \(S_2\), and so on.

The level-set ordering algorithm is essentially a breadth-first search (BFS) of the graph. Starting from a chosen node \(s\), the algorithm marks \(s\) and places it in \(S_1\). At each subsequent step it expands the frontier: for every unmarked node adjacent to any node in the current level set \(S_i\), that node is added to \(S_{i+1}\) and marked. The process continues until no new nodes can be reached. For disconnected graphs, the procedure is repeated on each connected component. Two open questions within this basic framework are: in what order should nodes within a level set be enumerated, and in what order should the neighbours of a node be visited?

9.2 Cuthill-McKee

The Cuthill-McKee (CM) algorithm provides a heuristic answer to the within-level-set ordering question by exploiting node degrees. The degree of a node \(v\), written \(\deg(v)\), is the number of adjacent nodes, equivalently the number of incident edges. The Cuthill-McKee heuristic is: when visiting a node during the BFS traversal, add its as-yet-unnumbered neighbours to the queue in increasing order of degree, breaking ties by some fixed rule such as alphabetical order or original node index.

Concretely, the CM algorithm begins by picking a starting node and assigning it number 1. All unnumbered neighbours of node 1 are then numbered in increasing degree order. For each of those neighbours, their own unnumbered neighbours are in turn ordered by degree and queued, and the process continues until every node has received a number.

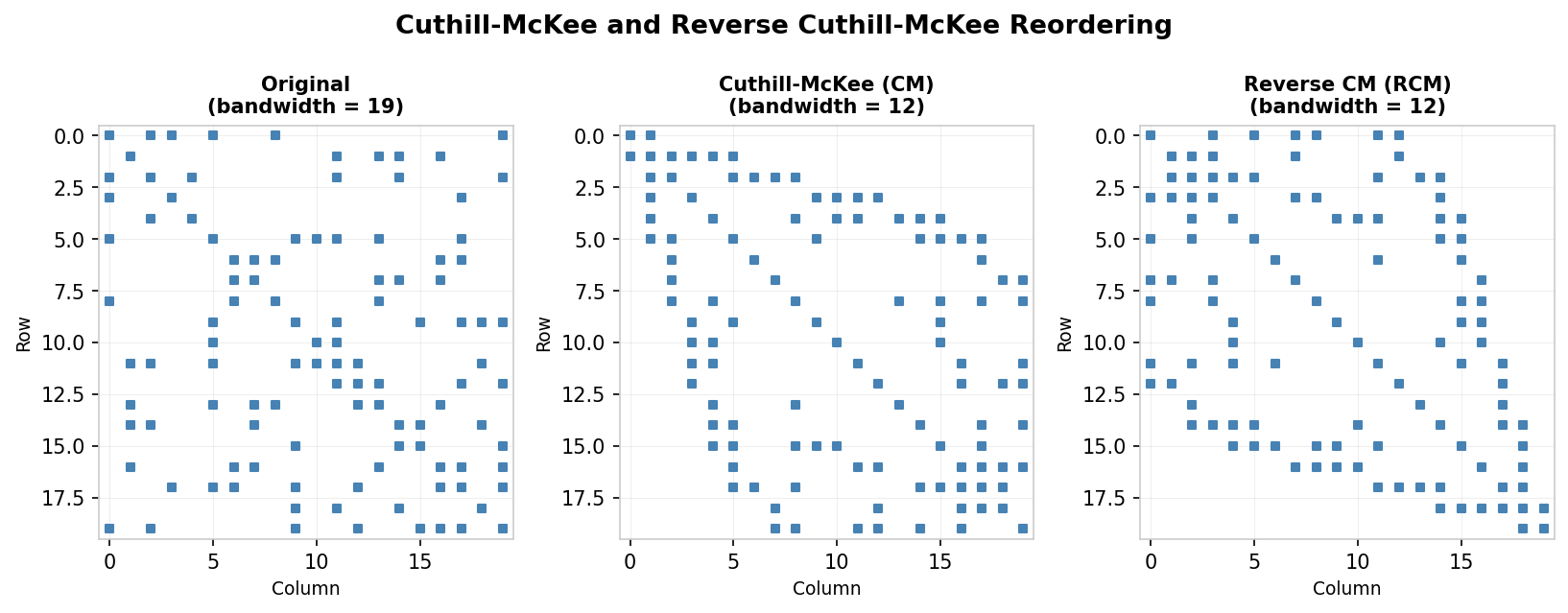

9.3 Reverse Cuthill-McKee

The Reverse Cuthill-McKee (RCM) algorithm is exactly what its name implies: compute the CM numbering, then reverse it. If there are \(n\) nodes, the RCM number of node \(i\) under CM becomes node \(n - i + 1\) under RCM. Although this reversal appears trivial, J. A. George observed in 1971 that it can substantially reduce the amount of fill-in for many graphs. RCM produces the same envelope bandwidth as CM, but the resulting sparsity patterns tend to resemble a lower-triangular “downward arrow” matrix rather than an upper-triangular “upward arrow” matrix, which is preferable for fill-reduction in practice.

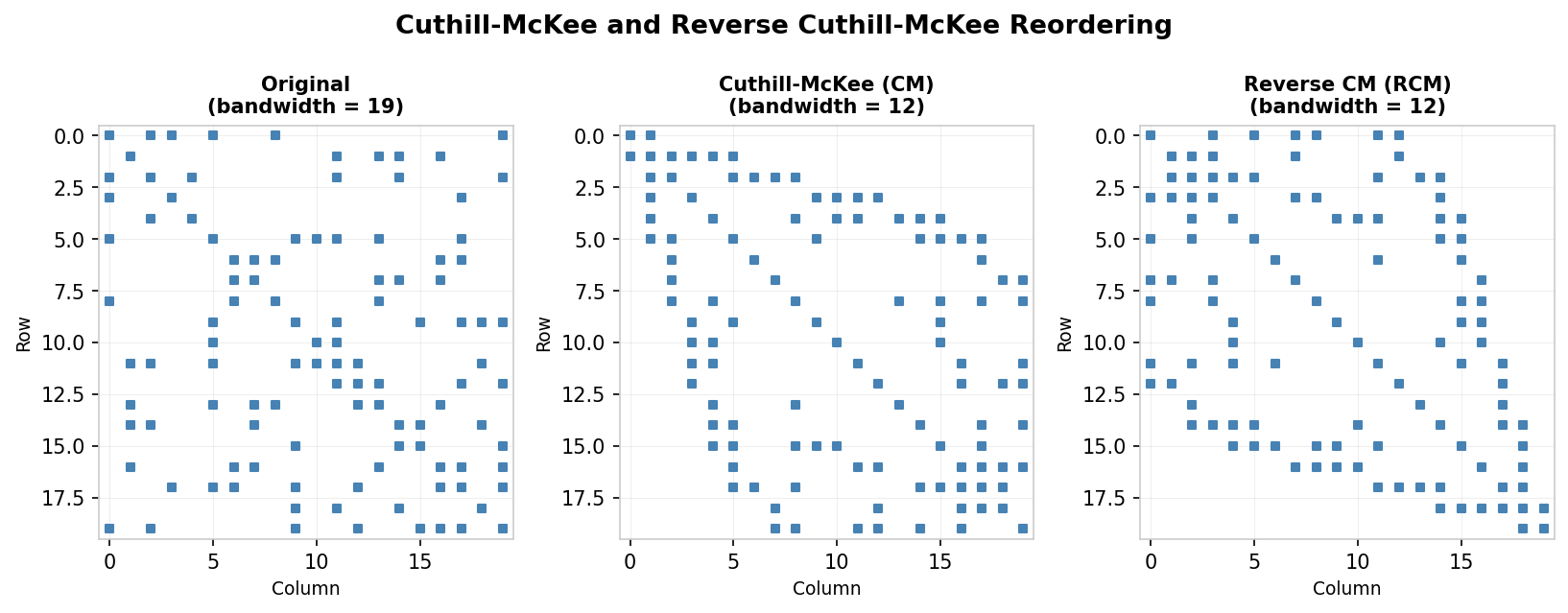

Effect of CM and RCM reordering on bandwidth of a sparse symmetric matrix

Effect of CM and RCM reordering on bandwidth of a sparse symmetric matrix

A concrete illustration applies the CM algorithm starting from node B in a small graph, breaking ties alphabetically. The CM ordering gives a matrix whose entries cluster near the diagonal, but the RCM reversal of that numbering produces an ordering with even less fill. In the example shown in the lecture notes, the original matrix has 30 positions within its envelope where fill can occur, CM reduces this to 20, and RCM further reduces it to 12.

CM/RCM reordering on an arrow matrix reduces fill-in dramatically

CM/RCM reordering on an arrow matrix reduces fill-in dramatically

The optimality of RCM on trees is a provable fact.

Theorem 9.1 (RCM is optimal on trees). On any tree, regardless of which starting node is chosen, the RCM ordering produces no fill during factorization.

The proof proceeds by showing that the first node in the RCM ordering must be a leaf (degree-1 node). Suppose for contradiction that the first node under RCM is a non-leaf \(u\), so \(u\) is connected to at least two nodes \(v\) and \(w\). Since the graph is a tree it contains no cycles, so the BFS from a starting node \(s\) can reach at most one of \(v, w\) before reaching \(u\). After reaching \(u\), the BFS must continue to the other child, so \(u\) cannot be the last node in the CM ordering, contradicting the assumption that its reversal is first in RCM. It follows that \(u\) must be a leaf. Removing the leaf \(u\) and rerunning CM on the remaining tree produces the same ordering (minus \(u\). Recursively, elimination always removes leaf nodes, never introducing new edges and hence never producing fill.

It is important to note that Theorem 9.1 does not extend to the plain CM ordering, and that RCM does not guarantee the globally optimal fill-reduction for general graphs. Determining the optimal ordering that minimises total fill-in is NP-complete [Yannakakis, 1981].

Worked Example: Cuthill-McKee and Reverse Cuthill-McKee

Consider a graph with nodes labelled A through J, and suppose we begin the Cuthill-McKee algorithm from node G, breaking ties alphabetically. The neighbours of G are H, E, B, and F. Their degrees are: H has degree 2, E has degree 3, and both B and F have degree 4. Within the level set of G, nodes are ordered by increasing degree, so the numbering proceeds: G receives 1, H receives 2, E receives 3, B receives 4, and F receives 5 (ties between B and F broken alphabetically).

We now expand from these four nodes. H’s neighbours are F and G, but both are already numbered, so H contributes nothing new. E’s neighbours include B (already numbered), G (already numbered), and C — so C is discovered here with degree 3, and receives number 6. B’s neighbours include C, E, G (all already numbered), and J, which has degree 1 and receives number 7. F’s neighbours include A (degree 2), D (degree 4), G, and H (both already numbered). Ordering A and D by increasing degree gives A number 8 and D number 9. Finally, D’s only unnumbered neighbour is I, which has degree 1 and receives number 10.

The complete CM ordering is therefore: G=1, H=2, E=3, B=4, F=5, C=6, J=7, A=8, D=9, I=10. Applying the reversal formula, the RCM ordering maps CM position \(i\) to RCM position \(n - i + 1\), so position 1 (G) becomes position 10, position 2 (H) becomes position 9, and so on.

In the original unnumbered matrix the envelope contains 30 positions where fill can occur. After applying CM this reduces to 20, and after applying RCM it reduces further to 12, illustrating the practical advantage of the reversal step.

Local Reorderings

The previous lecture established that the graph associated with a symmetric sparse matrix provides a powerful abstraction for understanding fill-in during factorization, and that envelope methods such as Reverse Cuthill-McKee can reduce fill by minimising the bandwidth. This lecture examines two complementary local strategies: Markowitz reordering and Minimum Degree reordering.

10.1 Markowitz Reordering

Markowitz reordering [Markowitz, 1957] is a local rule that greedily minimises fill-in for the current elimination step only, without attempting to optimise globally. After \(k\) steps of LU factorisation the matrix has been partially reduced to a remaining lower-right block \(A^{(k)}\). During one more step of LU, multiples of the pivot row are subtracted from rows below it, but only in columns where those rows have a nonzero entry. Fill-in does not occur in rows that already have a zero in the column to be eliminated.

For any candidate pivot entry \(a^{(k)}_{ij}\), let \(r^{(k)}_i\) denote the number of nonzeros in row \(i\) of \(A^{(k)}\) and \(c^{(k)}_j\) denote the number of nonzeros in column \(j\). The maximum possible fill-in introduced by using \(a^{(k)}_{ij}\) as the pivot is \((r^{(k)}_i - 1)(c^{(k)}_j - 1)\), which is called the Markowitz product. The Markowitz algorithm selects the pivot \(a^{(k)}_{pq}\) that minimises this product:

\[ (p, q) = \arg\min_{k \leq i,j} (r^{(k)}_i - 1)(c^{(k)}_j - 1). \]The minimising entry is then swapped into the pivot position by permuting rows and columns. This is only an approximation of the true fill, since some of the positions that could in principle be filled may already be nonzero before the step begins.

For symmetric matrices, since \(\arg\min_i r^{(k)}_i = \arg\min_j c^{(k)}_j\), it suffices to examine only diagonal entries \(a^{(k)}_{pp}\) and to select

\[ p = \arg\min_{k \leq i} (r^{(k)}_i - 1). \]Applying both a row and column swap symmetrically brings \(a^{(k)}_{pp}\) into the pivot position without breaking the symmetry of the matrix. This approach preserves diagonal dominance, which is important for stability, and corresponds directly to a node reordering in the graph representation.

10.2 Minimum Degree Reordering

The symmetric specialisation of Markowitz reordering leads naturally to the minimum degree algorithm. The quantity \(r^{(k)}_i - 1\) for a diagonal entry counts the number of off-diagonal nonzeros in row \(i\), which is precisely the degree of node \(i\) in the graph of \(A^{(k)}\). Minimising the Markowitz product for symmetric matrices is therefore equivalent to choosing the node with the smallest current degree as the next pivot.

Crucially, this graph-view decision can be computed before factorisation begins by renumbering nodes based on the elimination graph. Each step of Cholesky factorisation corresponds in the graph to: deleting the chosen node and all its edges, then connecting all its former neighbours together with new edges (those new edges represent fill). The minimum degree algorithm constructs this elimination graph iteratively, and at each step selects the node with the least current degree, removes it, adds the necessary fill edges, and relabels the graph.

When multiple nodes share the same minimum degree, tie-breaking strategies are needed. Common approaches include selecting the node with the smallest original index, pre-ordering with RCM and honouring that sequence, or using “multiple minimum degree” which simultaneously eliminates several non-interacting nodes of the same degree.

Comparison of minimum-degree reordering versus RCM

Comparison of minimum-degree reordering versus RCM

Minimum degree reordering is optimal on trees, because every elimination step in a tree removes a leaf node (degree 1) and never introduces new edges. However, it is still a local, greedy heuristic with no guarantee of global optimality for general graphs. A counterexample exists: consider a graph whose matrix envelope is already completely filled in. Factorisation can produce no additional fill. Yet minimum degree reordering would eliminate a low-degree interior node first, immediately introducing fill edges between its neighbours, degrading the structure.

Several improvements have been proposed to extend basic minimum degree. Supervariables (indistinguishable nodes) are groups of nodes with identical adjacency structure that can be eliminated simultaneously, reducing both computation and storage. Multiple elimination allows non-adjacent nodes of the same degree to be eliminated together safely. Approximate minimum degree (AMD) replaces exact degree updates with cheaper approximations, substantially reducing running time. A quotient graph representation provides a smarter data structure for tracking the evolving graph. In practice, Matlab’s symamd (symmetric approximate minimum degree) uses these ideas and often outperforms RCM, though it still provides no global optimality guarantee.

Stability of Factorizations

The story of this course up to this point has been: direct methods for solving linear systems rely on matrix factorisation; matrices often possess exploitable structure (sparsity, bandedness, symmetry, positive definiteness); reordering can reduce factorisation cost by limiting fill. This lecture turns to a different question — not how efficient the factorisation is, but how numerically reliable it is. A new use for row and column swaps emerges: not to reduce fill-in but to improve stability.

11.1 Conditioning versus Stability

How do small errors or perturbations to the matrix problem \(Ax = b\) affect the exact solution? The matrix condition number \(\kappa(A) = \|A\| \cdot \|A^{-1}\|\) provides a measure of this sensitivity, and satisfies \(\kappa \geq 1\). If the relative perturbations in \(A\) and \(b\) are both bounded by \(\delta\), then the relative change in the solution satisfies

\[ \frac{\|\Delta x\|}{\|x\|} \leq 2\kappa(A)\delta + O(\delta^2). \]Stability is a distinct property from conditioning. Conditioning describes the intrinsic sensitivity of the mathematical problem; stability describes how errors introduced during computation (such as rounding) propagate through the numerical algorithm. A highly stable algorithm cannot rescue a poorly conditioned problem, but an unstable algorithm can produce meaningless results even when the underlying problem is well-conditioned. For LU factorisation, the basic goal is to keep the magnitudes of entries in \(L\) and \(U\) under control — huge entries inflate rounding errors and may render the product \(LU\) far from the original \(A\).

11.2 Pivoting

Factorisation as described so far assumes that each diagonal entry encountered as a pivot, \(a^{(k-1)}_{kk}\), is nonzero at each step. Problems arise when a pivot is exactly zero (division by zero) or close to zero (large round-off amplification). Pivoting addresses these problems by permuting rows or columns to bring a larger-magnitude entry into the pivot position before each elimination step.

Complete pivoting searches the entire remaining submatrix \(A^{(k-1)}\) for the entry of largest magnitude and swaps both its row and its column to the pivot position. Partial pivoting is less expensive: it searches only within the current column for the largest-magnitude entry and swaps only rows, placing that entry into the pivot position. For most practical problems, partial pivoting is sufficient.

LU factorisation with partial pivoting satisfies

\[ \hat{L}\hat{U} = \hat{P}A + \Delta A, \qquad \frac{\|\Delta A\|}{\|A\|} = O(\rho \epsilon_{\text{machine}}), \]where \(\hat{P}\) is the computed permutation and \(\rho\) is the growth factor, approximately \(\rho \approx \|U\| / \|A\|\).

Partial pivoting can in principle fail: there exist contrived matrices for which successive elimination steps produce exponential growth in the entries of \(U\). In one classic family of examples the growth factor reaches \(\rho \approx 2^{n-1}\), causing \(\|U\|\) to dwarf \(\|A\|\). However, as Trefethen and Bau observe, “In fifty years of computing, no matrix problems that excite an explosive instability are known to have arisen under natural circumstances.” The cost of complete pivoting is therefore rarely justified, and partial pivoting yielding the factorisation \(PA = LU\) is standard practice.

There is also an important interaction between pivoting for stability and pivoting for sparsity: permuting for large pivots tends to destroy the sparsity pattern that reordering was designed to exploit. This trade-off must be managed carefully.

11.3 When Pivoting Is Unnecessary

For certain structured matrices, pivoting for stability is never required because the pivots are automatically positive throughout the factorisation.

Theorem 11.1. If \(A\) is symmetric positive definite (SPD), then during LU factorisation the pivot \(a^{(k-1)}_{kk} > 0\) for all \(k\).

The proof proceeds by induction on the matrix size. For a \(1 \times 1\) SPD matrix the single entry is a positive scalar. For \(n > 1\), write

\[ A = \begin{pmatrix} a_{11} & v^T \\ v & A_{22} \end{pmatrix}. \]Since \(A\) is SPD its leading principal submatrices are positive definite, so \(a_{11} > 0\). One step of LU using \(a_{11}\) as the pivot transforms \(A\) to a new Schur complement

\[ A^{(1)}_{22} = A_{22} - \frac{vv^T}{a_{11}}. \]One shows that \(A^{(1)}_{22}\) is itself SPD by constructing, for any nonzero \(x \in \mathbb{R}^{n-1}\), the vector \(y = (-x^T v / a_{11},\; x^T)^T \in \mathbb{R}^n\) and exploiting \(y^T A y > 0\). Repeating inductively, every subsequent pivot is positive.

Pivoting is also unnecessary for row diagonally dominant matrices, where \(|a_{kk}| > \sum_{j \neq k} |a_{kj}|\) for every row \(k\), and for column diagonally dominant matrices, where the analogous condition holds for each column. In both cases, the diagonal entry is guaranteed to dominate, ensuring well-conditioned pivots throughout.

Image Denoising

Digital images often contain random noise — small random errors — arising from sensors, capture conditions, or the stochastic nature of the measurement process. Synthetic images produced by ray-tracing also accumulate noise unless the simulation is run for an extremely long time; a practical alternative is to ray-trace briefly and then apply a mathematical denoising procedure to the result. The goal of image denoising is to recover, from a corrupted observation, a version of the image with the noise removed or substantially reduced.

12.1 Inverse Problems

Image denoising is an inverse problem: given observations, reconstruct the underlying source that generated them. Let \(u_0\) be the noisy observed image, \(u^*\) the unknown clean signal, and \(n\) the noise, so that \(u_0 = u^* + n\). We treat grayscale images as 2D scalar functions by writing \(u_{ij}\) for the pixel intensity at row \(i\), column \(j\). An estimate of the noise variance \(\|n\|_2^2 = \sigma^2\) is assumed known.

Two key assumptions make the inverse problem tractable: first, the noise is not too large, meaning the observation \(u_0\) is close to the true signal \(u^*\); second, the true signal has exploitable structure, typically smoothness. A PDE-based approach formulates the problem as an optimisation:

\[ \min_u \; \text{"fluctuation of pixel value"} \quad \text{subject to} \quad \|u - u_0\|_2^2 \approx \sigma^2. \]The objective seeks to minimise rapid changes in pixel colour (assumed to be noise), while the constraint ensures the deviation of the solution from the observation matches the expected noise level. Because infinitely many decompositions of \(u_0\) satisfy the constraint, the problem is ill-posed and a choice of regularisation is needed to single out a particular solution.

12.2 Regularisation Models

Rather than working with the constrained form, it is often more convenient to work with the penalised form