PHYS 359: Statistical Mechanics

Alan Jamison

Sources and References

Primary textbook — Schroeder, D. V. An Introduction to Thermal Physics. Addison-Wesley, 2000.

Supplementary texts — Pathria, R. K. and Beale, P. D. Statistical Mechanics, 4th ed. Academic Press, 2021; Kardar, M. Statistical Physics of Particles. Cambridge University Press, 2007; Reif, F. Fundamentals of Statistical and Thermal Physics. McGraw-Hill, 1965; Kittel, C. and Kroemer, H. Thermal Physics, 2nd ed. W. H. Freeman, 1980; Sethna, J. P. Statistical Mechanics: Entropy, Order Parameters, and Complexity, 2nd ed. Oxford University Press, 2021; Huang, K. Statistical Mechanics, 2nd ed. Wiley, 1987.

Online resources — Tong, D. Statistical Physics (Cambridge Part II Mathematical Tripos lecture notes); MIT OpenCourseWare 8.044 Statistical Physics I and 8.333 Statistical Mechanics I (Kardar); NIST Chemistry WebBook.

Part I: Foundations and the Three Ensembles

Chapter 1: The Statistical Programme

Why big is different

Every physics course you have encountered before statistical mechanics considers a narrow slice of nature: the electric field of a point charge, the trajectory of a projectile, the propagation of a wave through a uniform medium. You are trained to track individual particles and solve equations of motion for each of them. Statistical mechanics is radically different. Its subject is systems with so many degrees of freedom that tracking individuals is not only impractical but, more surprisingly, unnecessary. The three-body gravitational problem is already intractable in general; a three-body interaction in a fluid, similarly, admits no clean analytic solution. Yet when you have \(10^{23}\) molecules, the physics becomes simpler, not harder. That apparent paradox is the central message of this chapter.

The reason is that we change our question. Instead of asking “what is the position and momentum of molecule number 4,183,771?” we ask “what is the pressure of this gas? What is its temperature? How much heat will it absorb if we raise the temperature by ten degrees?” These macroscopic questions are statistical questions — answers that sum over the enormous multiplicity of microscopic configurations. The theory that does this systematically is statistical mechanics, and it has turned out to be one of the most broadly applicable frameworks in all of science, reaching from astrophysics (neutron stars) to condensed matter (superconductors) to biology (protein folding) and even economics (agent-based models of markets).

Equilibrium and the fundamental postulate

Before we can count anything, we need an assumption powerful enough to make the statistics well-defined. Statistical mechanics rests on a single foundational principle, stated here in the careful language of Schroeder:

In an isolated system at equilibrium, all accessible microstates are equally probable.

An equilibrium state is one in which all macroscopic variables — pressure, volume, temperature, magnetisation — have ceased to change with time. This does not mean that nothing is happening at the microscopic level. Gas molecules are still bouncing off walls; electrons are still hopping between orbitals. Equilibrium is a macroscopic concept, meaning that the aggregate statistics of these microscopic processes have stabilised. A gas in a cylinder with a fixed piston is in mechanical equilibrium: the average force the gas exerts on the piston equals the external pressure, even though individual molecules hit the piston at random times. A chemical system at equilibrium has fixed concentrations, even though individual molecules continue to react in both directions. A system in thermal equilibrium exchanges energy with its surroundings at the same rate in both directions, so the net energy flow is zero.

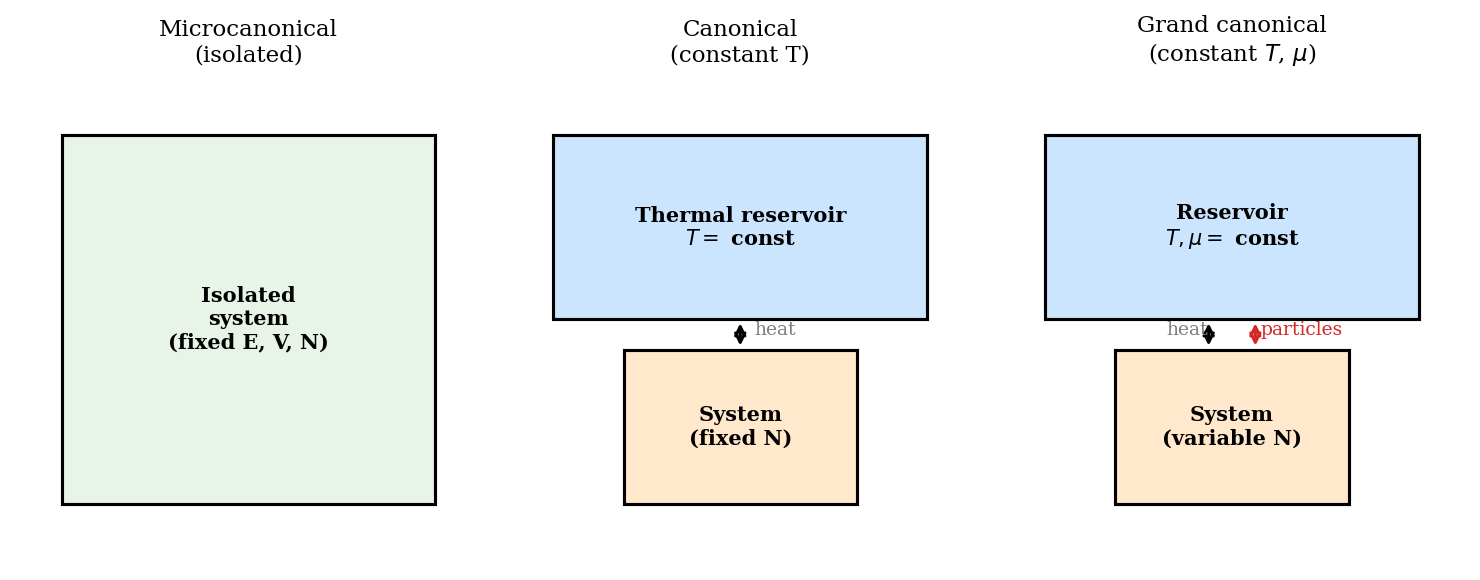

The word isolated is equally important. An isolated system cannot exchange energy or particles with anything outside itself. Its total energy \(E\), volume \(V\), and particle number \(N\) are all fixed. In practice we often relax one or more of these constraints — allowing energy exchange with a heat bath, or particle exchange with a particle reservoir — and that relaxation gives rise to the canonical and grand canonical ensembles we will develop in later chapters. But the fundamental postulate applies to the isolated case, and everything else is built from it.

Given equal probability of all microstates, the central task of statistical mechanics is counting: how many microstates \(\Omega\) are compatible with the observed macroscopic state? A state with more microstates will be overwhelmingly more likely to be observed than one with few microstates. This shifts the whole enterprise from dynamics to combinatorics.

Combinatorics: the mathematics of counting

Two counting techniques appear throughout this course, and you should have seen both in PHYS 358.

Combinations. When we have \(N\) distinguishable objects and wish to choose \(k\) of them (without regard to order), the number of ways to do so is the binomial coefficient

\[ \binom{N}{k} = \frac{N!}{k!\,(N-k)!}. \]The canonical physics example is a spin-\(\tfrac{1}{2}\) paramagnet: \(N\) independent magnetic dipoles, each pointing either up or down. If \(N_\uparrow\) of them point up, the number of distinct spin configurations with that value of \(N_\uparrow\) is \(\Omega = \binom{N}{N_\uparrow}\). Note the symmetry \(\binom{N}{k} = \binom{N}{N-k}\): the number of ways to have \(N_\uparrow\) up-spins equals the number of ways to have \(N - N_\uparrow\) down-spins, which is physically obvious — you are just relabelling up and down.

Ball-and-stick counting (stars and bars). The second technique addresses the question: in how many ways can \(q\) identical balls be distributed among \(N\) bins? Represent the \(N\) bins by the \(N-1\) dividers between them; then arrange the \(q\) balls and \(N-1\) sticks in a row. Any ordering of these \(q + N - 1\) objects corresponds to a valid distribution, and the number of such orderings is

\[ \Omega = \binom{q + N - 1}{q}. \]For an Einstein solid — \(N\) quantum harmonic oscillators sharing \(q\) units of vibrational energy — the oscillators are the bins and the energy quanta are the balls. The number of accessible microstates with total energy \(E = q\hbar\omega\) is therefore \(\Omega = \binom{N+q-1}{q}\). This simple formula encapsulates the entire statistical mechanics of the Einstein solid.
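Both counting formulae are easy to check for small systems. The following is a minimal sketch using Python's built-in `math.comb`; the function names are illustrative and not taken from any textbook.

```python
from math import comb


def paramagnet_multiplicity(n_spins: int, n_up: int) -> int:
    """Ways to choose which n_up of n_spins dipoles point up: C(N, N_up)."""
    return comb(n_spins, n_up)


def einstein_multiplicity(n_osc: int, q: int) -> int:
    """Stars and bars: ways to share q quanta among n_osc oscillators."""
    return comb(q + n_osc - 1, q)


if __name__ == "__main__":
    # Four spins, two up: six distinct configurations.
    print(paramagnet_multiplicity(4, 2))
    # Three oscillators sharing three quanta.
    print(einstein_multiplicity(3, 3))
    # Summing over all N_up recovers the 2^N total spin configurations.
    print(sum(paramagnet_multiplicity(10, k) for k in range(11)) == 2**10)
```

Python integers have arbitrary precision, so these functions stay exact for a few thousand particles, but they become impractical long before \(N \sim 10^{23}\).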

Stirling’s approximation and why statistical mechanics works

Almost every application of these counting formulae involves factorials of astronomically large numbers. If \(N \sim N_A \approx 6 \times 10^{23}\), computing \(N!\) directly is hopeless. Stirling’s approximation rescues us:

\[ N! \approx N^N e^{-N} \sqrt{2\pi N}, \qquad \ln N! \approx N\ln N - N + \tfrac{1}{2}\ln(2\pi N). \]For the sizes of \(N\) we encounter, the \(\tfrac{1}{2}\ln(2\pi N)\) term is negligible compared to \(N\ln N\), so the working approximation is simply \(\ln N! \approx N\ln N - N\).
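To get a feel for how good the approximation is, one can compare it with \(\ln N!\) evaluated through the log-gamma function. This is a small sketch with illustrative function names; the "full" version keeps the \(\tfrac{1}{2}\ln(2\pi N)\) term that comes from the \(\sqrt{2\pi N}\) prefactor.

```python
from math import lgamma, log, pi


def ln_factorial(n: int) -> float:
    """ln(n!) evaluated via the log-gamma function, ln Gamma(n + 1)."""
    return lgamma(n + 1)


def stirling_simple(n: int) -> float:
    """Working approximation: N ln N - N."""
    return n * log(n) - n


def stirling_full(n: int) -> float:
    """Approximation including the sqrt(2 pi N) prefactor."""
    return n * log(n) - n + 0.5 * log(2 * pi * n)


if __name__ == "__main__":
    for n in (10, 100, 10_000, 1_000_000):
        exact = ln_factorial(n)
        rel_simple = abs(stirling_simple(n) - exact) / exact
        rel_full = abs(stirling_full(n) - exact) / exact
        print(f"N={n:>9}: simple rel. error {rel_simple:.2e}, full {rel_full:.2e}")
```

The relative error of the simple form shrinks steadily with \(N\), which is why dropping the logarithmic correction is harmless at thermodynamic sizes.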

The derivation of Stirling’s formula is itself instructive. We begin with the gamma function representation of the factorial. By successive integration by parts, one can verify that

\[ N! = \Gamma(N+1) = \int_0^\infty t^N e^{-t}\,dt. \]The integrand \(t^N e^{-t}\) vanishes at both endpoints and has a single maximum. To find the maximum, write the integrand as \(e^{N\ln t - t}\) and differentiate the exponent with respect to \(t\), setting the result to zero:

\[ \frac{d}{dt}(N\ln t - t) = \frac{N}{t} - 1 = 0 \quad \Rightarrow \quad t^* = N. \]Now expand the exponent in a Taylor series about \(t^* = N\). Writing \(t = N + u\), the exponent becomes

\[ N\ln(N+u) - (N+u) = N\ln N + N\ln\!\left(1 + \frac{u}{N}\right) - N - u \approx N\ln N - N - \frac{u^2}{2N}, \]where we have used \(\ln(1 + u/N) \approx u/N - u^2/(2N^2)\) and collected terms. The integrand therefore has the Gaussian form

\[ e^{N\ln t - t} \approx e^{N\ln N - N}\, e^{-u^2/(2N)}. \]Substituting back into the integral (and extending the lower limit from \(-N\) to \(-\infty\), which is justified because the Gaussian is negligibly small well before reaching \(u = -N\)):

\[ N! \approx e^{N\ln N - N} \int_{-\infty}^\infty e^{-u^2/(2N)}\,du = e^{N\ln N - N}\sqrt{2\pi N}. \]Taking the logarithm reproduces the Stirling formula. The appearance of a Gaussian here is the first of many times we will encounter this pattern: the logarithm of a large combinatorial object has a sharp maximum, the region near the maximum is approximately parabolic, and the whole distribution is therefore approximately Gaussian.

To see why statistical mechanics works, consider two identical Einstein solids A and B in thermal contact, each with \(N/2\) oscillators (so \(N\) in total), sharing a fixed total of \(q\) energy quanta. In the high-temperature limit (\(q \gg N\)) one can show that the multiplicity of the combined system, as a function of the number of quanta on side A, \(q_A\), takes the Gaussian form

\[ \Omega(q_A) \approx \Omega_{\rm max} \exp\!\left[-\frac{2N}{q^2}\!\left(q_A - \frac{q}{2}\right)^2\right]. \]The peak, of height \(\Omega_{\rm max}\), occurs at \(q_A^* = q/2\) (equal energy on each side, i.e.\ thermal equilibrium). Comparing the exponent with \(-(q_A - q/2)^2/(2\sigma_{q_A}^2)\) gives \(\sigma_{q_A} = q/(2\sqrt{N})\), so the fractional width of the distribution is

\[ \frac{\sigma_{q_A}}{q_A^*} = \frac{1}{\sqrt{N}}. \]For \(N \sim 10^{23}\) this fraction is \(\sim 10^{-12}\): the distribution is so ferociously sharp that any macroscopic observation will find the system within a part in a trillion of equilibrium. Statistical mechanics is not an approximation in any practical sense — it becomes exact as \(N\to\infty\), which is the regime we always inhabit.
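The sharpness of the peak can be checked numerically for modest sizes. The sketch below assumes two identical solids with \(N/2\) oscillators each (so that \(N\) is the total), evaluates the exact multiplicities through log-gamma functions, and compares the standard deviation of \(q_A\) with the Gaussian estimate \(q/(2\sqrt{N})\). The function names are illustrative.

```python
from math import exp, lgamma, sqrt


def ln_omega(n_osc: int, q: int) -> float:
    """ln of the Einstein-solid multiplicity C(q + n_osc - 1, q)."""
    return lgamma(q + n_osc) - lgamma(q + 1) - lgamma(n_osc)


def energy_split_stats(n_total: int, q: int) -> tuple[float, float]:
    """Mean and standard deviation of q_A for two solids of n_total/2 oscillators."""
    n_each = n_total // 2
    ln_w = [ln_omega(n_each, qa) + ln_omega(n_each, q - qa) for qa in range(q + 1)]
    ln_max = max(ln_w)
    # Subtract the maximum before exponentiating to avoid overflow.
    weights = [exp(x - ln_max) for x in ln_w]
    norm = sum(weights)
    mean = sum(qa * w for qa, w in enumerate(weights)) / norm
    var = sum((qa - mean) ** 2 * w for qa, w in enumerate(weights)) / norm
    return mean, sqrt(var)


if __name__ == "__main__":
    n_total, q = 100, 2000  # q >> N, the high-temperature regime
    mean, sigma = energy_split_stats(n_total, q)
    print(f"mean q_A = {mean:.1f}, sigma = {sigma:.1f}")
    print(f"Gaussian estimate q / (2 sqrt(N)) = {q / (2 * sqrt(n_total)):.1f}")
```

For these small sizes the agreement is only approximate, since the Gaussian form assumes both \(N\) and \(q/N\) are large.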

Entropy and the statistical definition

Boltzmann’s great insight was that the thermodynamic entropy of a state is the logarithm of its multiplicity:

\[ \boxed{S = k_{\rm B}\ln\Omega,} \]where \(k_{\rm B} = 1.381 \times 10^{-23}\ \text{J K}^{-1}\) is Boltzmann’s constant. This single equation bridges the microscopic world of counting to the macroscopic world of thermodynamics. Because \(\Omega\) grows exponentially with the number of particles (a typical isolated solid has \(\Omega \sim e^N\)), taking the logarithm produces an extensive quantity — entropy doubles when you double the system, exactly as thermodynamics demands. The tendency of isolated systems to spontaneously evolve toward the highest-\(\Omega\) macrostate is nothing other than the second law of thermodynamics.

The historical significance of this formula is hard to overstate. Before Boltzmann, entropy was a purely macroscopic concept introduced by Clausius in 1865 as a measure of the “degradation” of energy — a quantity that always increased in spontaneous processes. Why entropy should increase, and what it fundamentally represented, was mysterious. Boltzmann’s identification \(S = k_{\rm B}\ln\Omega\) answered both questions simultaneously. Entropy increases because the system evolves toward more probable macrostates — this is not a law imposed from outside, but a consequence of the vastly larger number of high-entropy microstates. A system in a low-entropy state (e.g.\ a gas compressed into one corner of a box) will, if left to itself, spread into a high-entropy state (filling the entire box) simply because there are exponentially more microstates in the expanded configuration. The second law is a law of large numbers, not a fundamental prohibition.

Boltzmann’s equation is engraved on his tombstone in Vienna — a fitting monument to the idea that unites the atomic and thermodynamic pictures of nature. Interestingly, the formula as written (\(S = k\log W\), using Boltzmann’s original notation where \(W\) denotes the number of microstates) was actually first written in this compact form by Max Planck, not Boltzmann. Boltzmann introduced the logarithmic relationship and Planck coined the constant \(k\) that bears Boltzmann’s name.

Why entropy is extensive. This point deserves emphasis. For \(N\) independent spins, the total multiplicity is roughly \(\Omega \sim 2^N = e^{N\ln 2}\), so \(S = k_{\rm B} N\ln 2\), which is proportional to \(N\). The logarithm converts an exponential (which would make entropy an astronomically large and non-additive quantity) into something linear in system size. Two non-interacting systems A and B with multiplicities \(\Omega_A\) and \(\Omega_B\) have a combined multiplicity \(\Omega_A\Omega_B\) (since microstates factorise for independent systems), and therefore a combined entropy \(k_{\rm B}\ln(\Omega_A\Omega_B) = S_A + S_B\). Extensivity — the property that entropy is additive and scales with system size — is thus a direct consequence of the logarithm in Boltzmann’s definition. Without it, thermodynamics as we know it would be impossible: you could not meaningfully add the entropies of two subsystems.

Temperature from entropy: the statistical definition of T

The thermodynamic temperature has a sharp statistical interpretation that follows naturally from the entropy definition. Consider two systems A and B that can exchange energy through a thermal contact. The total energy is \(E = E_A + E_B = \text{const}\). The total entropy is \(S_{\rm tot} = S_A(E_A) + S_B(E_B)\). At equilibrium, \(S_{\rm tot}\) is maximised over the distribution of energy between A and B. Taking the derivative with respect to \(E_A\) and setting it to zero:

\[ \frac{\partial S_{\rm tot}}{\partial E_A} = \frac{\partial S_A}{\partial E_A} + \frac{\partial S_B}{\partial E_B}\frac{\partial E_B}{\partial E_A} = \frac{\partial S_A}{\partial E_A} - \frac{\partial S_B}{\partial E_B} = 0. \](We used \(\partial E_B/\partial E_A = -1\) since \(E_A + E_B\) is fixed.) Equilibrium therefore requires

\[ \frac{\partial S_A}{\partial E_A} = \frac{\partial S_B}{\partial E_B}. \]This is the condition that the two systems have the same temperature. It tells us that temperature is the quantity whose equality characterises thermal equilibrium, and that temperature must be a function of \(\partial S/\partial E\). The identification with the thermodynamic temperature is

\[ \boxed{\frac{1}{T} = \frac{\partial S}{\partial E}\bigg|_{V,N}.} \]This is not a definition to be memorised and applied by rote — it is a derivation of temperature from the more fundamental concept of entropy. Energy flows from high \(T\) to low \(T\) because doing so increases the total entropy: a hot body has a smaller \(\partial S/\partial E\) than a cold body, so transferring a small amount of energy \(\delta E\) from hot to cold decreases \(S_{\rm hot}\) by \(\delta E/T_{\rm hot}\) and increases \(S_{\rm cold}\) by \(\delta E/T_{\rm cold}\), with \(\delta E/T_{\rm cold} > \delta E/T_{\rm hot}\) giving a net increase in total entropy.

Two interacting Einstein solids: maximising entropy

To make the temperature condition concrete, let us apply it to two Einstein solids A and B with \(N_A\) and \(N_B\) oscillators and \(q_A\) and \(q_B\) energy quanta, subject to \(q_A + q_B = q = \text{const}\). When both the number of quanta and the number of oscillators are large (so that Stirling’s approximation applies and the \(-1\) can be dropped), the multiplicity of each solid is

\[ \Omega_A = \binom{q_A + N_A - 1}{q_A} \approx \frac{(q_A + N_A)^{q_A + N_A}}{q_A^{q_A} N_A^{N_A}}. \]The log-multiplicity is \(\ln\Omega_A \approx (q_A + N_A)\ln(q_A + N_A) - q_A\ln q_A - N_A\ln N_A\). To find the maximum of \(\ln\Omega_A + \ln\Omega_B\) subject to fixed total quanta, differentiate with respect to \(q_A\):

\[ \frac{\partial\ln\Omega_A}{\partial q_A} = \ln(q_A + N_A) - \ln q_A = \ln\!\left(1 + \frac{N_A}{q_A}\right). \]Setting \(\partial(\ln\Omega_A + \ln\Omega_B)/\partial q_A = 0\) (with \(\partial q_B/\partial q_A = -1\)):

\[ \ln\!\left(1 + \frac{N_A}{q_A}\right) = \ln\!\left(1 + \frac{N_B}{q_B}\right) \quad \Rightarrow \quad \frac{N_A}{q_A} = \frac{N_B}{q_B} \quad \Rightarrow \quad \frac{q_A}{N_A} = \frac{q_B}{N_B}. \]Equilibrium is achieved when the energy per oscillator is equal on both sides. Since the energy per oscillator is \(\hbar\omega q/N\), this is precisely the condition \(T_A = T_B\) — energy per mode is equipartitioned. Identifying \(1/T = \partial S/\partial E = k_{\rm B}\,\partial\ln\Omega/\partial E\) and using \(\partial E_A/\partial q_A = \hbar\omega\), one finds \(T = \hbar\omega/(k_{\rm B}\ln(1 + N_A/q_A))\), which gives the correct Einstein solid temperature. The argument shows explicitly, via Stirling’s approximation, that the maximum of the combined log-multiplicity occurs at equal energy per oscillator — a beautiful and direct derivation of thermal equilibrium from pure counting.
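As a numerical cross-check of this argument, one can scan all ways of splitting the quanta between two solids of different size and locate the maximum of the combined log-multiplicity directly. This is a sketch under the same assumptions as the text (both solids share one frequency \(\omega\)); the function names are illustrative, and temperature is reported in units of \(\hbar\omega/k_{\rm B}\).

```python
from math import lgamma, log


def ln_omega(n_osc: int, q: int) -> float:
    """ln of the exact Einstein-solid multiplicity C(q + n_osc - 1, q)."""
    return lgamma(q + n_osc) - lgamma(q + 1) - lgamma(n_osc)


def most_probable_split(n_a: int, n_b: int, q_total: int) -> int:
    """Value of q_A that maximises ln(Omega_A) + ln(Omega_B)."""
    return max(
        range(q_total + 1),
        key=lambda qa: ln_omega(n_a, qa) + ln_omega(n_b, q_total - qa),
    )


def reduced_temperature(n_osc: int, q: int) -> float:
    """k_B T / (hbar omega) = 1 / ln(1 + N/q), from the Stirling result."""
    return 1.0 / log(1.0 + n_osc / q)


if __name__ == "__main__":
    n_a, n_b, q_total = 300, 200, 1000
    q_a = most_probable_split(n_a, n_b, q_total)
    print(f"most probable q_A = {q_a}, q_A/N_A = {q_a / n_a:.3f}")
    print(f"q_B/N_B = {(q_total - q_a) / n_b:.3f}")
    print(reduced_temperature(n_a, q_a), reduced_temperature(n_b, q_total - q_a))
```

The exact maximum can sit a quantum or so away from the Stirling prediction \(q_A/N_A = q_B/N_B\), a finite-size effect that disappears as the system grows.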

Chapter 2: The Boltzmann Factor and the Canonical Ensemble

A small system in contact with a reservoir

Physical systems are almost never truly isolated. More commonly, a small system \(\mathcal{S}\) sits in contact with a large thermal reservoir \(\mathcal{R}\) — a heat bath so enormous that any energy exchanged with \(\mathcal{S}\) barely changes the reservoir’s temperature. The reservoir has fixed temperature \(T\); energy flows freely between system and reservoir, but the total energy \(E_{\rm tot} = E_s + E_r\) of the combined (isolated) system is fixed.

To find the probability that \(\mathcal{S}\) occupies a microstate with energy \(E_s\), we use the fundamental postulate on the composite system. The number of microstates available to the combined system when \(\mathcal{S}\) has energy \(E_s\) is

\[ \Omega_{\rm tot}(E_s) = 1 \times \Omega_r(E_{\rm tot} - E_s), \]where the 1 counts the single microstate of \(\mathcal{S}\) that we are specifying, and \(\Omega_r\) is the multiplicity of the reservoir. By the fundamental postulate, the probability of observing \(\mathcal{S}\) in that microstate is proportional to \(\Omega_{\rm tot}\). Taking the logarithm and Taylor-expanding around \(E_s = 0\) (valid because \(E_s \ll E_{\rm tot}\)):

\[ \ln\Omega_r(E_{\rm tot} - E_s) \approx \ln\Omega_r(E_{\rm tot}) - E_s \frac{\partial \ln\Omega_r}{\partial E_r}. \]The partial derivative \(\partial\ln\Omega_r/\partial E_r = (1/k_{\rm B})\partial S_r/\partial E_r\) is, from our temperature definition, equal to \(1/(k_{\rm B}T)\). Setting \(\beta \equiv 1/(k_{\rm B}T)\) and exponentiating, the probability is proportional to the Boltzmann factor \(e^{-\beta E_s}\); normalising gives the Boltzmann distribution:

\[ \boxed{P(s) = \frac{1}{Z} e^{-\beta E_s},} \]where the sum \(Z = \sum_s e^{-\beta E_s}\) over all microstates of \(\mathcal{S}\) ensures normalisation. This is the partition function — from the German Zustandssumme, “sum over states”. Its argument is \(\beta\), which encodes the temperature.

The logic is worth pausing over. The Boltzmann weight \(e^{-\beta E_s}\) does not say that high-energy states are forbidden; it says they are exponentially suppressed relative to low-energy ones. The suppression sharpens as the temperature decreases (large \(\beta\)): at \(T\to 0\) only the ground state is occupied; at \(T\to\infty\) all states become equally probable, recovering the microcanonical ensemble on the system alone.
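The two limits are easy to see numerically. The following sketch computes Boltzmann probabilities for an arbitrary list of microstate energies; the function name is illustrative, and energies and \(\beta\) are assumed to be given in consistent units so that \(\beta E_s\) is dimensionless.

```python
from math import exp


def boltzmann_probabilities(energies: list[float], beta: float) -> list[float]:
    """P(s) = exp(-beta E_s) / Z for each microstate energy in `energies`."""
    # Shifting by the lowest energy leaves the probabilities unchanged
    # and keeps the exponentials from overflowing at large beta.
    e_min = min(energies)
    weights = [exp(-beta * (e - e_min)) for e in energies]
    z = sum(weights)
    return [w / z for w in weights]


if __name__ == "__main__":
    levels = [0.0, 1.0, 2.0]
    for beta in (50.0, 1.0, 1e-3):
        probs = boltzmann_probabilities(levels, beta)
        print(beta, [round(p, 4) for p in probs])
```

At large \(\beta\) almost all the weight sits on the lowest level, while at small \(\beta\) the three probabilities approach one third each.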

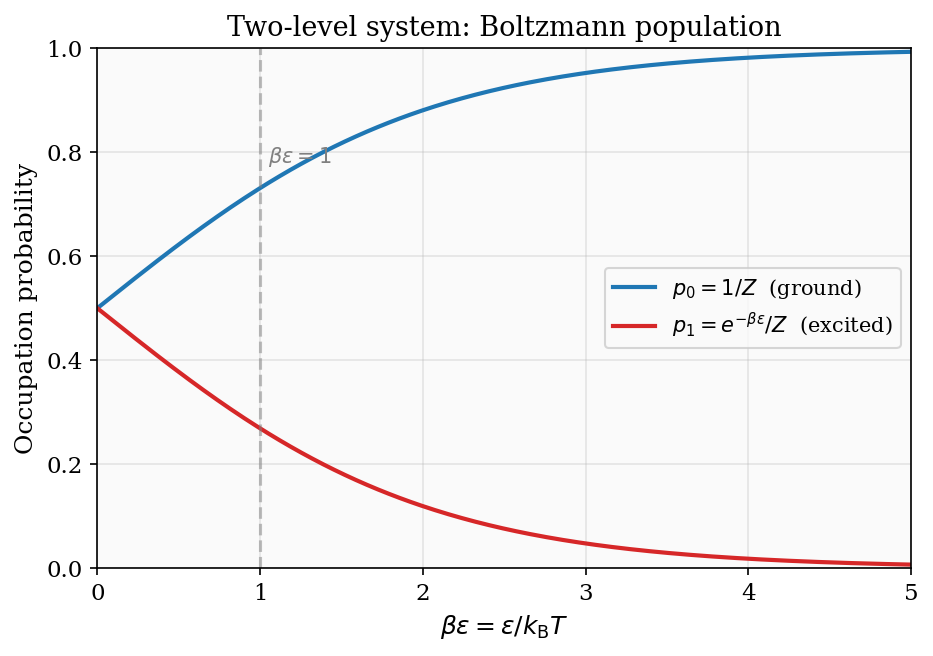

The physical meaning of \(\beta\)

The parameter \(\beta = 1/(k_{\rm B}T)\) is sometimes called the coldness or inverse temperature, and there are good reasons to regard it as the more natural variable for quantum statistics. Its physical interpretation is transparent: \(\beta\) measures how sharply the Boltzmann distribution is peaked at the ground state. At large \(\beta\) (low temperature), the factor \(e^{-\beta\varepsilon}\) falls steeply as a function of energy — even a small excitation energy \(\varepsilon\) produces an enormous suppression \(e^{-\beta\varepsilon}\) — so the system is almost certain to be found in the ground state. At small \(\beta\) (high temperature), the Boltzmann weights become nearly uniform: \(e^{-\beta\varepsilon} \approx 1 - \beta\varepsilon \approx 1\), and all states are nearly equally probable regardless of their energy. The crossover between these two regimes occurs when \(\beta\varepsilon \sim 1\), i.e.\ when the thermal energy \(k_{\rm B}T\) is comparable to the characteristic level spacing \(\varepsilon\). This is the condition for classical statistics to apply: when \(k_{\rm B}T\) greatly exceeds all relevant energy gaps, the system cannot resolve its discrete quantum structure and behaves classically.

Thinking in terms of \(\beta\) rather than \(T\) also clarifies the meaning of negative temperature, which we will encounter in Chapter 3: a system with \(\partial S/\partial E < 0\) has \(1/T < 0\), i.e.\ \(\beta < 0\). Such a system is hotter than any positive-temperature state, a fact that is much more naturally stated as “\(\beta\) has crossed zero” than as “\(T\) has jumped from \(+\infty\) to \(-\infty\).”

Figure 1: Occupation probabilities of a two-level system as a function of \(\beta\varepsilon = \varepsilon/k_{\rm B}T\). At low temperature the ground state dominates; at high temperature both levels approach equal occupation.

The partition function as a generating function

The partition function \(Z(\beta)\) is far more than a normalisation constant. All equilibrium properties of the system can be derived from it by differentiation.

Average energy. Since \(\langle E\rangle = \sum_s E_s P(s) = \sum_s E_s e^{-\beta E_s}/Z\), we recognise that \(\partial Z / \partial(-\beta) = \sum_s E_s e^{-\beta E_s}\). Therefore

\[ \langle E \rangle = -\frac{\partial \ln Z}{\partial \beta}. \]Entropy and Helmholtz free energy. From the thermodynamic identity \(F = -k_{\rm B}T\ln Z\), all other potentials follow by standard Legendre transforms. The entropy is \(S = -\partial F/\partial T\), the pressure is \(p = -\partial F/\partial V\), and the heat capacity at constant volume is

\[ C_V = \frac{\partial \langle E \rangle}{\partial T} = k_{\rm B}\beta^2\frac{\partial^2 \ln Z}{\partial\beta^2}. \]

Energy fluctuations and the variance

The heat capacity can be related to the fluctuations in energy in a compelling way. Consider

\[ \langle E^2\rangle = \frac{1}{Z}\sum_s E_s^2\, e^{-\beta E_s} = \frac{1}{Z}\frac{\partial^2 Z}{\partial\beta^2}. \]The variance in energy is \(\langle(\Delta E)^2\rangle = \langle E^2\rangle - \langle E\rangle^2\). After some algebra using the expressions above:

\[ \langle(\Delta E)^2\rangle = \frac{\partial^2\ln Z}{\partial\beta^2} = -\frac{\partial\langle E\rangle}{\partial\beta} = k_{\rm B}T^2\frac{\partial\langle E\rangle}{\partial T} = k_{\rm B}T^2 C_V. \]This is a profound result. The fluctuations in energy of a system in contact with a heat bath are directly proportional to its heat capacity. A large heat capacity means the system can absorb energy without changing temperature, which in turn means many different microstates have similar energies — precisely the condition for large fluctuations. The relative fluctuation is

\[ \frac{\sqrt{\langle(\Delta E)^2\rangle}}{\langle E\rangle} = \frac{\sqrt{k_{\rm B}T^2 C_V}}{\langle E\rangle}. \]For a monatomic ideal gas, \(C_V = \tfrac{3}{2}Nk_{\rm B}\) and \(\langle E\rangle = \tfrac{3}{2}Nk_{\rm B}T\), so the relative fluctuation is \(\sqrt{2/(3N)} \sim 1/\sqrt{N}\), of order \(10^{-12}\) for \(N \sim 10^{23}\). Thermal fluctuations are utterly negligible for macroscopic systems, which is another reason statistical mechanics predictions are effectively exact.
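The generating-function identities \(\langle E\rangle = -\partial\ln Z/\partial\beta\) and \(\langle(\Delta E)^2\rangle = \partial^2\ln Z/\partial\beta^2\) can be verified numerically for any small spectrum. The sketch below does this with central finite differences; the spectrum, the step size `h`, and the function names are arbitrary choices made for illustration.

```python
from math import exp, log


def ln_z(energies: list[float], beta: float) -> float:
    """ln of the partition function Z = sum_s exp(-beta E_s)."""
    return log(sum(exp(-beta * e) for e in energies))


def mean_and_variance(energies: list[float], beta: float) -> tuple[float, float]:
    """<E> and <E^2> - <E>^2 computed directly from the Boltzmann weights."""
    weights = [exp(-beta * e) for e in energies]
    z = sum(weights)
    mean = sum(e * w for e, w in zip(energies, weights)) / z
    mean_sq = sum(e * e * w for e, w in zip(energies, weights)) / z
    return mean, mean_sq - mean**2


def finite_difference_ln_z(energies: list[float], beta: float, h: float = 1e-4):
    """Central-difference estimates of d ln Z / d beta and d^2 ln Z / d beta^2."""
    f_minus = ln_z(energies, beta - h)
    f_zero = ln_z(energies, beta)
    f_plus = ln_z(energies, beta + h)
    first = (f_plus - f_minus) / (2 * h)
    second = (f_plus - 2 * f_zero + f_minus) / h**2
    return first, second


if __name__ == "__main__":
    spectrum = [0.0, 1.0, 1.0, 2.5]  # arbitrary energies, one degenerate pair
    beta = 0.7
    mean, var = mean_and_variance(spectrum, beta)
    d1, d2 = finite_difference_ln_z(spectrum, beta)
    print(f"<E> = {mean:.6f},  -d lnZ/d beta = {-d1:.6f}")
    print(f"var = {var:.6f},  d2 lnZ/d beta2 = {d2:.6f}")
```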

A worked example: the harmonic oscillator

The quantum harmonic oscillator with energy levels \(E_n = n\hbar\omega\) (measuring from the ground state, dropping the zero-point energy \(\hbar\omega/2\) for convenience) provides a clean illustration of the partition function machinery. The partition function is a geometric series:

\[ Z = \sum_{n=0}^\infty e^{-\beta n\hbar\omega} = \frac{1}{1 - e^{-\beta\hbar\omega}}. \]The average energy follows from differentiation:

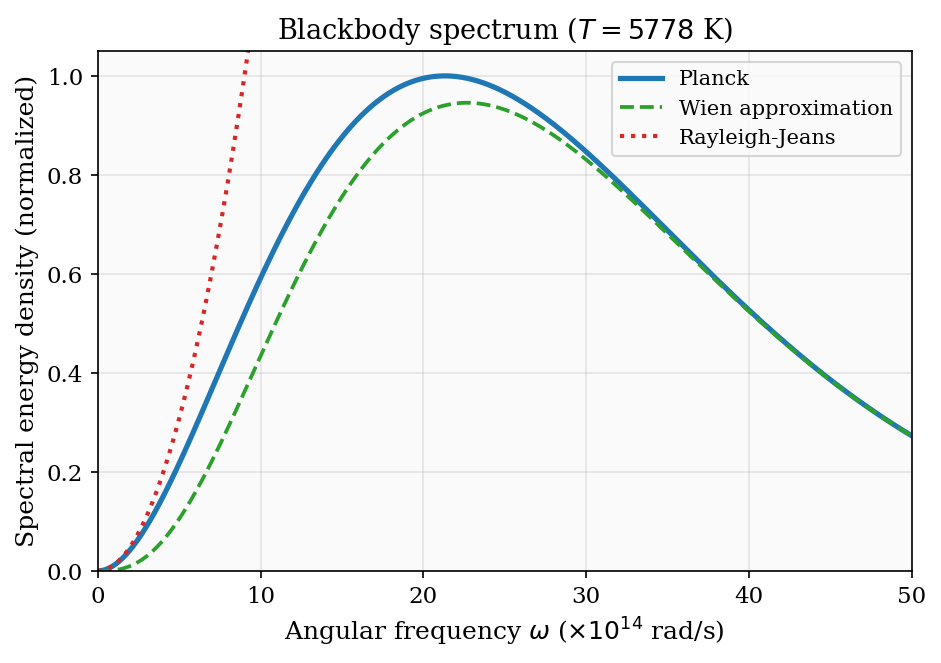

\[ \langle E\rangle = -\frac{\partial\ln Z}{\partial\beta} = \frac{\hbar\omega}{e^{\beta\hbar\omega} - 1}. \]This is the Planck function for a single mode, familiar from blackbody radiation. The heat capacity of a single oscillator is

\[ C = \frac{\partial\langle E\rangle}{\partial T} = k_{\rm B}\left(\frac{\hbar\omega}{k_{\rm B}T}\right)^2 \frac{e^{\hbar\omega/k_{\rm B}T}}{(e^{\hbar\omega/k_{\rm B}T}-1)^2}. \]Two limits are worth examining explicitly. At high temperature (\(k_{\rm B}T \gg \hbar\omega\), i.e.\ \(\beta\hbar\omega \ll 1\)): the exponential expands as \(e^{\beta\hbar\omega} \approx 1 + \beta\hbar\omega\), so \(\langle E\rangle \approx k_{\rm B}T\) and \(C \approx k_{\rm B}\). This is the classical equipartition result: each quadratic degree of freedom contributes \(\tfrac{1}{2}k_{\rm B}T\), and the oscillator has two of them (kinetic and potential energy), for a total of \(k_{\rm B}T\). At low temperature (\(k_{\rm B}T \ll \hbar\omega\)), the denominator \(e^{\beta\hbar\omega} - 1 \approx e^{\beta\hbar\omega}\) is exponentially large, so \(\langle E\rangle \approx \hbar\omega\, e^{-\hbar\omega/k_{\rm B}T} \to 0\) and \(C \approx k_{\rm B}(\hbar\omega/k_{\rm B}T)^2 e^{-\hbar\omega/k_{\rm B}T} \to 0\) exponentially. The oscillator is frozen out: it is almost certainly in its ground state, and the small probability of excitation to the first level decreases exponentially with decreasing temperature. This exponential quenching is the signature of an energy gap: modes with \(\hbar\omega \gg k_{\rm B}T\) are quantum-mechanically frozen and do not contribute to the heat capacity.
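Both limits can be read off numerically by working with the dimensionless variable \(x = \hbar\omega/k_{\rm B}T\). This is a minimal sketch with illustrative function names; it uses `math.expm1` to evaluate \(e^x - 1\) accurately at small \(x\), and is intended for moderate \(x\) (below roughly 700, where `exp` would overflow).

```python
from math import exp, expm1


def oscillator_energy(x: float) -> float:
    """<E> / (hbar omega) = 1 / (e^x - 1), with x = hbar omega / (k_B T)."""
    return 1.0 / expm1(x)


def oscillator_heat_capacity(x: float) -> float:
    """C / k_B = x^2 e^x / (e^x - 1)^2, with x = hbar omega / (k_B T)."""
    return x * x * exp(x) / expm1(x) ** 2


if __name__ == "__main__":
    for x in (0.01, 0.1, 1.0, 5.0, 20.0):
        energy_over_kt = x * oscillator_energy(x)  # <E> / (k_B T)
        print(f"x={x:>5}: <E>/k_BT = {energy_over_kt:.4f}, "
              f"C/k_B = {oscillator_heat_capacity(x):.3e}")
```

Small \(x\) reproduces \(\langle E\rangle \approx k_{\rm B}T\) and \(C \approx k_{\rm B}\), while large \(x\) shows the exponential freeze-out.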

The ensemble in which \(T\), \(N\), and \(V\) are all held fixed is the canonical ensemble. The probability distribution \(P(s) \propto e^{-\beta E_s}\) is the Boltzmann distribution, and \(Z\) is its partition function.

Equivalence of ensembles and thermodynamic limits

A subtlety that deserves mention: the microcanonical, canonical, and grand canonical ensembles are, in principle, different probability distributions over microstates — the first fixes \(E\), the second fixes \(T\), the third fixes both \(T\) and \(\mu\). For a finite system they give different predictions for thermodynamic quantities. However, in the thermodynamic limit (\(N\to\infty\), \(V\to\infty\) with \(N/V = \text{const}\)), all three ensembles give identical predictions for intensive quantities like pressure, temperature, and density. This is the equivalence of ensembles, which holds whenever thermodynamic fluctuations are negligible — i.e.\ whenever \(N\) is large. We have already seen this: the relative energy fluctuation in the canonical ensemble is \(\sim 1/\sqrt{N}\), which vanishes as \(N\to\infty\), so the canonical distribution is effectively a delta function around the average energy in the thermodynamic limit, equivalent to the microcanonical distribution at that energy.

Ensemble inequivalence does occur in systems with long-range interactions (gravitational systems, plasmas, certain spin models) where energy is not extensive. In such systems, the canonical ensemble may predict phase transitions that the microcanonical ensemble does not, or vice versa. But for the systems we consider in this course — short-range interactions, extensive energy — ensemble equivalence is a reliable theorem that frees us to choose whatever ensemble is most convenient for calculation.

Chapter 3: Working with the Partition Function

Degeneracy and thermal excitation

When multiple microstates share the same energy, we say those states are degenerate. For a state with energy \(E_n\) and degeneracy \(g_n\), the partition function takes the form \(Z = \sum_n g_n e^{-\beta E_n}\), and the probability of finding the system with energy \(E_n\) is \(P_n = g_n e^{-\beta E_n}/Z\).

As a concrete example, consider a hydrogen atom in thermal equilibrium at temperature \(T\). The energy levels are \(E_n = -13.6\ \text{eV}/n^2\) with degeneracy \(g_n = 2n^2\) (counting spin and orbital angular momentum). At room temperature \(k_{\rm B}T \approx 0.025\ \text{eV}\), which is much smaller than the excitation energy of the first excited state, \(E_2 - E_1 \approx 10.2\ \text{eV}\). The Boltzmann factor \(e^{-\beta(E_2-E_1)} \approx e^{-408}\) is so tiny that essentially all hydrogen atoms are in the ground state. Only near the surface of a star, where \(T \sim 10^4\ \text{K}\) and \(k_{\rm B}T \sim 1\ \text{eV}\), does the first excited state begin to be significantly populated. This explains the strength of the Balmer absorption lines in stellar spectra: the \(n=2\) level has just enough thermal population to absorb visible photons.
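The size of these numbers is easy to reproduce. The sketch below computes the population ratio of two hydrogen levels, including the degeneracy factor \(g_n = 2n^2\); it assumes \(k_{\rm B} \approx 8.617 \times 10^{-5}\ \text{eV K}^{-1}\), so the exponent at 300 K comes out slightly different from the rounded estimate in the text. The function names are illustrative.

```python
from math import exp

K_B_EV = 8.617333e-5  # Boltzmann constant in eV/K (assumed value)


def level_energy(n: int) -> float:
    """Hydrogen energy level in eV: E_n = -13.6 / n^2."""
    return -13.6 / n**2


def degeneracy(n: int) -> int:
    """Degeneracy g_n = 2 n^2 (orbital states times two spin states)."""
    return 2 * n * n


def population_ratio(n_upper: int, n_lower: int, temperature: float) -> float:
    """P(n_upper) / P(n_lower) = (g_u / g_l) exp(-(E_u - E_l) / k_B T)."""
    delta = level_energy(n_upper) - level_energy(n_lower)
    g_ratio = degeneracy(n_upper) / degeneracy(n_lower)
    return g_ratio * exp(-delta / (K_B_EV * temperature))


if __name__ == "__main__":
    for temperature in (300.0, 5800.0, 1.0e4):
        ratio = population_ratio(2, 1, temperature)
        print(f"T = {temperature:>7.0f} K: P(n=2)/P(n=1) = {ratio:.3e}")
```

Even at \(10^4\ \text{K}\) the ratio is small in absolute terms, but it is larger than the room-temperature value by more than a hundred orders of magnitude.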

The paramagnet revisited

The two-state paramagnet is the workhorse example of Chapter 2, and it pays to extract every physical quantity from its partition function. A spin-\(\tfrac{1}{2}\) dipole in field \(B\) (along \(\hat{z}\)) has energies \(E_\uparrow = -\mu B\) and \(E_\downarrow = +\mu B\), so

\[ Z = e^{\beta\mu B} + e^{-\beta\mu B} = 2\cosh(\beta\mu B). \]The average energy of a single dipole is

\[ \langle E \rangle = -\mu B\tanh(\beta\mu B), \]and the average component of the dipole along \(\hat{z}\) is \(\langle\mu_z\rangle = \mu\tanh(\beta\mu B)\). For \(N\) independent dipoles the total magnetisation is

\[ M = N\mu\tanh(\beta\mu B). \]Two limits illuminate the physics. At high temperature (\(k_{\rm B}T \gg \mu B\), i.e.\ \(\beta\mu B \to 0\)), \(\tanh x \approx x\) and \(M \approx N\mu^2 B / (k_{\rm B}T)\): the magnetisation is inversely proportional to temperature. This is Curie’s law, \(M \propto 1/T\), which can be understood qualitatively by noting that thermal fluctuations compete with the field in aligning the spins. At low temperature (\(\beta\mu B \to \infty\)), \(\tanh \to 1\) and \(M \to N\mu\): all spins align with the field. The transition between these regimes occurs on the scale \(k_{\rm B}T \sim \mu B\).
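A quick numerical comparison shows where Curie's law is a good approximation and where saturation sets in. In this sketch the magnetisation is expressed as the fraction \(M/(N\mu)\) and the control parameter is \(x = \mu B/(k_{\rm B}T)\); the function names are illustrative.

```python
from math import tanh


def magnetisation_fraction(x: float) -> float:
    """M / (N mu) = tanh(x), with x = mu B / (k_B T)."""
    return tanh(x)


def curie_fraction(x: float) -> float:
    """High-temperature (Curie law) approximation: M / (N mu) ~ x."""
    return x


if __name__ == "__main__":
    for x in (0.01, 0.1, 0.5, 1.0, 3.0, 10.0):
        exact = magnetisation_fraction(x)
        approx = curie_fraction(x)
        print(f"x={x:>5}: tanh = {exact:.6f}, Curie = {approx:.6f}")
```

The two agree closely for \(x \ll 1\) and part ways around \(x \sim 1\), consistent with the crossover scale \(k_{\rm B}T \sim \mu B\).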

Heat capacity and the Schottky anomaly

To find the heat capacity of the paramagnet, differentiate the total energy \(U = -N\mu B\tanh(\beta\mu B)\) with respect to \(T\). Using \(\partial\tanh(\beta\mu B)/\partial T = -(\mu B/k_{\rm B}T^2)\operatorname{sech}^2(\beta\mu B)\):

\[ C = \frac{\partial U}{\partial T} = Nk_{\rm B}(\beta\mu B)^2\operatorname{sech}^2(\beta\mu B). \]This heat capacity has a striking feature: it is not monotonic. As \(T\to 0\), \(\beta\mu B\to\infty\) and \(\operatorname{sech}^2(\beta\mu B)\to 0\) exponentially, so \(C\to 0\). As \(T\to\infty\), \(\beta\mu B\to 0\) and \((\beta\mu B)^2\operatorname{sech}^2(\beta\mu B)\to 0\) as \(\beta^2\to 0\), so \(C\to 0\) again. In between, \(C\) passes through a maximum — the Schottky anomaly — at a temperature \(k_{\rm B}T_{\rm peak}\approx 0.83\,\mu B\), where the thermal energy is comparable to the level splitting. The physical picture is clear: at low \(T\) the system is frozen in the ground state and cannot absorb heat; at high \(T\) the two levels are equally populated and further heating changes nothing; at intermediate \(T\) the system is actively transitioning between the two levels and absorbs heat efficiently. Any two-level system (atomic hyperfine levels, crystal-field split electronic levels, nuclear spin states) exhibits this anomalous peak in \(C(T)\), providing a direct spectroscopic tool for measuring energy splittings.
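The position of the Schottky peak can be located with a simple grid search over the reduced temperature \(t = k_{\rm B}T/(\mu B)\). This is a sketch; the search window and grid resolution are arbitrary choices, and the function names are illustrative.

```python
from math import cosh


def schottky_heat_capacity(t: float) -> float:
    """C / (N k_B) = x^2 sech^2(x) with x = 1/t and t = k_B T / (mu B)."""
    x = 1.0 / t
    return (x / cosh(x)) ** 2


def peak_temperature(lo: float = 0.1, hi: float = 5.0, steps: int = 100_000) -> float:
    """Reduced temperature t at which the heat capacity is largest (grid search)."""
    grid = (lo + (hi - lo) * i / steps for i in range(steps + 1))
    return max(grid, key=schottky_heat_capacity)


if __name__ == "__main__":
    t_peak = peak_temperature()
    print(f"k_B T_peak / (mu B) = {t_peak:.3f}")
    print(f"C_max / (N k_B)    = {schottky_heat_capacity(t_peak):.3f}")
```

The peak height is independent of \(\mu B\), so measuring where the maximum falls in temperature gives the level splitting directly.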

Negative temperature in two-level systems

Because the energy of a two-level spin system is bounded above (you cannot put more than \(N\mu B\) of energy into a paramagnet), it is possible to prepare states that are genuinely hotter than any state with positive temperature. If the population of the upper energy level exceeds that of the lower level, the system is in a population inversion. Below equal occupation, adding energy increases the disorder (it makes the two populations more nearly equal), so \(\partial S/\partial E > 0\). Once the populations cross equal occupation, adding more energy pushes still more spins into the upper level and makes the system more ordered, so \(\partial S/\partial E < 0\): an inverted population has negative temperature.

More precisely: the entropy of a two-level system with \(N_\uparrow\) spins up and \(N_\downarrow = N - N_\uparrow\) spins down is \(S = k_{\rm B}\ln\binom{N}{N_\uparrow}\). The energy is \(U = -(N_\uparrow - N_\downarrow)\mu B = (2N_\downarrow - N)\mu B\), so raising the energy means flipping spins from up (aligned with the field, energy \(-\mu B\)) to down (anti-aligned, energy \(+\mu B\)). As \(N_\downarrow\) increases from \(0\) to \(N/2\), \(S\) increases (more disorder) and so does \(U\), giving \(\partial S/\partial U > 0\) and positive temperature. As \(N_\downarrow\) increases from \(N/2\) to \(N\), \(S\) decreases (the system becomes more ordered again, now with most spins down) while \(U\) continues to increase, giving \(\partial S/\partial U < 0\) and thus \(T < 0\). The Boltzmann distribution still holds: a population inversion corresponds to a \(\beta < 0\) Boltzmann weight \(e^{-\beta E_s}\) that favours higher energies. A system at negative temperature is hotter than any positive-temperature system in the sense that energy flows from it to any positive-temperature body on thermal contact. The temperature sequence, ordered from cold to hot, is \(T = 0^+, \ldots, +\infty \equiv -\infty, \ldots, T = 0^-\).
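The sign change of \(\partial S/\partial U\) can be seen directly from the counting. The sketch below uses the convention \(E_\uparrow = -\mu B\), \(E_\downarrow = +\mu B\), so the energy in units of \(\mu B\) is \(2N_\downarrow - N\); it estimates \(\mu B/(k_{\rm B}T) = \partial(S/k_{\rm B})/\partial(U/\mu B)\) by a central finite difference. The function names are illustrative.

```python
from math import lgamma


def entropy(n: int, n_down: int) -> float:
    """S / k_B = ln C(n, n_down), evaluated with log-gamma functions."""
    return lgamma(n + 1) - lgamma(n_down + 1) - lgamma(n - n_down + 1)


def energy(n: int, n_down: int) -> int:
    """U / (mu B) = 2 n_down - n, since a down spin has energy +mu B."""
    return 2 * n_down - n


def reduced_beta(n: int, n_down: int) -> float:
    """Finite-difference estimate of mu B / (k_B T) = d(S/k_B) / d(U/mu B)."""
    ds = entropy(n, n_down + 1) - entropy(n, n_down - 1)
    du = energy(n, n_down + 1) - energy(n, n_down - 1)
    return ds / du


if __name__ == "__main__":
    n = 1000
    for n_down in (100, 250, 500, 750, 900):
        print(f"N_down={n_down:>4}: U/muB = {energy(n, n_down):>5}, "
              f"mu B/(k_B T) = {reduced_beta(n, n_down):+.4f}")
```

The estimate passes smoothly through zero at equal occupation, which is the statement that \(\beta\), rather than \(T\), is the continuous variable.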

A practically important technique for the low-temperature regime: write \(\tanh(\beta\mu B) = (1 - e^{-2\beta\mu B})/(1+e^{-2\beta\mu B}) \approx 1 - 2e^{-2\beta\mu B}\) for large \(\beta\mu B\). The leading correction to full saturation is exponentially small, a signature of the energy gap \(2\mu B\) separating the ground state from the first excited state.

The rotational partition function

Diatomic molecules such as nitrogen, oxygen, and hydrogen have rotational degrees of freedom that become thermally accessible well below room temperature for all but the lightest molecules. A rigid rotor has energy levels

\[ E_J = \varepsilon_{\rm rot}\, J(J+1), \qquad J = 0, 1, 2, \ldots, \]with degeneracy \(2J+1\), where \(\varepsilon_{\rm rot} = \hbar^2/(2I)\) and \(I\) is the moment of inertia. The partition function is

\[ Z_{\rm rot} = \sum_{J=0}^\infty (2J+1)\,e^{-\beta\varepsilon_{\rm rot}J(J+1)}. \]This sum does not have a closed form. However, in the high-temperature limit (\(\beta\varepsilon_{\rm rot} \ll 1\)) the levels are densely packed relative to \(k_{\rm B}T\), and the sum can be replaced by an integral. With the substitution \(u = J(J+1)\), \(du = (2J+1)\,dJ\):

\[ Z_{\rm rot} \approx \int_0^\infty (2J+1)\,e^{-\beta\varepsilon_{\rm rot}J(J+1)}\,dJ = \frac{1}{\beta\varepsilon_{\rm rot}} = \frac{k_{\rm B}T}{\varepsilon_{\rm rot}}. \]The average rotational energy follows immediately:

\[ \langle E_{\rm rot}\rangle = -\frac{\partial\ln Z_{\rm rot}}{\partial\beta} = k_{\rm B}T. \]This is a preview of the equipartition theorem: each quadratic degree of freedom (here the two rotational angles of a diatomic molecule) contributes \(\tfrac{1}{2}k_{\rm B}T\) to the average energy.

The low-temperature limit. At low temperature (\(\beta\varepsilon_{\rm rot} \gg 1\)), only the lowest few terms in \(Z_{\rm rot}\) matter. Retaining \(J = 0\) and \(J = 1\):

\[ Z_{\rm rot} \approx 1 + 3e^{-2\beta\varepsilon_{\rm rot}}, \]since the \(J=1\) level has degeneracy \(2(1)+1 = 3\) and energy \(\varepsilon_{\rm rot}\cdot 1\cdot 2 = 2\varepsilon_{\rm rot}\). The average energy is approximately \(\langle E_{\rm rot}\rangle \approx 6\varepsilon_{\rm rot}e^{-2\beta\varepsilon_{\rm rot}}/(1 + 3e^{-2\beta\varepsilon_{\rm rot}})\), which is exponentially suppressed. The rotational heat capacity is similarly suppressed, approaching zero exponentially as \(T\to 0\). The crossover temperature is set by \(\varepsilon_{\rm rot}/k_{\rm B}\), which takes different values for different molecules:

| Molecule | \(\varepsilon_{\rm rot}/k_{\rm B}\) (K) |
|---|---|
| H\(_2\) | 85.4 |
| N\(_2\) | 2.88 |
| O\(_2\) | 2.08 |

For nitrogen and oxygen, \(\varepsilon_{\rm rot}/k_{\rm B}\) is only a few kelvin, well below room temperature, so rotation is fully excited at ambient conditions and contributes \(k_{\rm B}T\) to the energy and \(k_{\rm B}\) to the heat capacity per molecule. For hydrogen, \(\varepsilon_{\rm rot}/k_{\rm B} = 85.4\ \text{K}\) means that at room temperature \(\beta\varepsilon_{\rm rot} \approx 0.29\) and the high-temperature approximation is marginal — this is why the rotational heat capacity of H\(_2\) is not fully activated at room temperature.
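How good the high-temperature approximation is for each molecule can be checked by summing the series directly. The sketch below compares the truncated sum with \(k_{\rm B}T/\varepsilon_{\rm rot}\) at 300 K, using the characteristic temperatures tabulated above. It ignores the nuclear-spin symmetry restrictions on \(J\) for homonuclear molecules, and the cutoff `j_max` is an arbitrary choice that is far larger than needed.

```python
import math

def z_rot(t_over_theta, j_max=2000):
    """Direct sum over J of (2J+1) exp(-J(J+1) theta/T), with theta = eps_rot/k_B."""
    x = 1.0 / t_over_theta
    return sum((2 * J + 1) * math.exp(-x * J * (J + 1)) for J in range(j_max + 1))

theta = {"H2": 85.4, "N2": 2.88, "O2": 2.08}   # kelvin
T = 300.0
for name, th in theta.items():
    exact = z_rot(T / th)
    classical = T / th
    print(f"{name}: sum = {exact:.3f}, T/theta = {classical:.3f}, "
          f"ratio = {exact / classical:.4f}")
```

For the heavier molecules the two agree to a fraction of a percent, while for hydrogen the sum exceeds the integral by roughly ten percent, which is the sense in which the approximation is marginal.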

A subtle complication arises for homonuclear diatomics (H\(_2\), N\(_2\), O\(_2\)), where the two identical nuclei impose exchange-symmetry constraints on the wave function. For hydrogen in particular, the nuclear spin state (ortho or para hydrogen) selects either even or odd values of \(J\), effectively halving the number of accessible states at low temperature.

The Einstein solid from the partition function

For a collection of \(N\) independent quantum harmonic oscillators, each at frequency \(\omega\), the partition function factorises as \(Z_N = Z_1^N\) (distinguishable oscillators on a lattice). The average energy is simply \(N\) times the single-oscillator result derived above:

\[ \langle E\rangle = \frac{N\hbar\omega}{e^{\beta\hbar\omega}-1}, \]and the heat capacity is

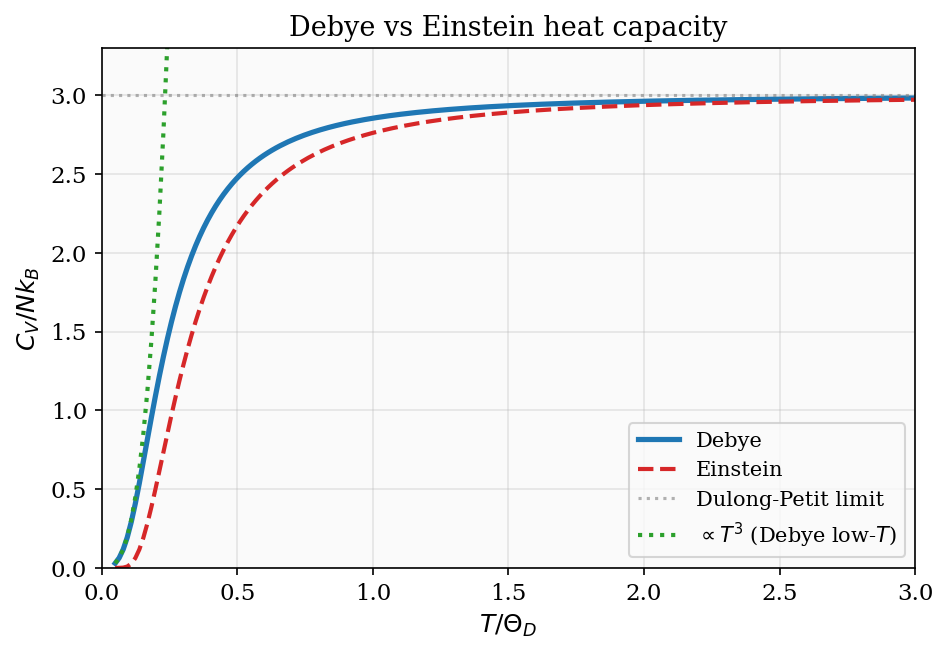

\[ C_V = Nk_{\rm B}\left(\frac{\hbar\omega}{k_{\rm B}T}\right)^2\frac{e^{\hbar\omega/k_{\rm B}T}}{(e^{\hbar\omega/k_{\rm B}T}-1)^2}. \]At high temperature this approaches \(k_{\rm B}\) per oscillator, so \(C_V\to Nk_{\rm B}\); for a 3D solid with \(3N\) modes, \(C_V\to 3Nk_{\rm B}\), which is the Dulong–Petit law, confirmed experimentally for many materials at sufficiently high temperature. At low temperature the heat capacity is exponentially suppressed: \(C_V \propto (\hbar\omega/k_{\rm B}T)^2 e^{-\hbar\omega/k_{\rm B}T}\to 0\). The Einstein model’s prediction of exponential low-\(T\) quenching is qualitatively correct (it captures the freezing-out of quantum modes) but quantitatively wrong (real solids show a power-law \(T^3\) rather than exponential decay), because a real solid has a spectrum of vibrational frequencies, not a single frequency. The Debye model, treated in Chapter 11, fixes this.
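Both limits of the Einstein heat capacity are easy to confirm numerically. The sketch below evaluates \(C_V/(Nk_{\rm B})\) as a function of \(T/\theta_{\rm E}\), where \(\theta_{\rm E} = \hbar\omega/k_{\rm B}\) is a shorthand introduced here, and prints it next to the exponential low-temperature form.

```python
import math

def einstein_cv(t_over_theta_e):
    """C_V per oscillator in units of k_B, with theta_E = hbar*omega/k_B."""
    x = 1.0 / t_over_theta_e
    # expm1 keeps the denominator accurate when x is small (high temperature)
    return x * x * math.exp(x) / math.expm1(x) ** 2

for t in (0.05, 0.1, 0.5, 1.0, 5.0, 50.0):
    low_t = (1.0 / t) ** 2 * math.exp(-1.0 / t)   # exponential low-T form
    print(f"T/theta_E = {t:5.2f}: C_V/(N k_B) = {einstein_cv(t):.6f}, "
          f"low-T form = {low_t:.6f}")
```

The two columns coincide for \(T \ll \theta_{\rm E}\), and the first column saturates at 1 for \(T \gg \theta_{\rm E}\).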

Composite systems and factorisation

If a system decomposes into independent subsystems A and B, with energies \(E_A\) and \(E_B\), the total energy is \(E = E_A + E_B\) and the microstates factorise. The partition function factorises too:

\[ Z = \sum_{s_A, s_B} e^{-\beta(E_{s_A} + E_{s_B})} = Z_A \cdot Z_B. \]Taking logarithms: \(\ln Z = \ln Z_A + \ln Z_B\), so the free energy \(F = -k_{\rm B}T\ln Z\) is additive. This is why extensive thermodynamic quantities (energy, entropy, free energy) are additive for non-interacting subsystems.
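The factorisation can be verified by brute force for small systems. In the sketch below the two level lists and the value of \(\beta\) are arbitrary illustrative numbers; the joint partition function is computed by enumerating every pair of microstates and compared with the product \(Z_A Z_B\).

```python
import itertools
import math

def partition_function(energies, beta):
    """Sum of Boltzmann factors over an explicit list of microstate energies."""
    return sum(math.exp(-beta * e) for e in energies)

beta = 0.7
levels_a = [0.0, 1.0, 2.5]          # illustrative energies for subsystem A
levels_b = [0.0, 0.4, 0.4, 3.0]     # subsystem B, with one doubly degenerate level

# Enumerate every joint microstate (s_A, s_B) with E = E_A + E_B
joint = [ea + eb for ea, eb in itertools.product(levels_a, levels_b)]
z_joint = partition_function(joint, beta)
z_product = partition_function(levels_a, beta) * partition_function(levels_b, beta)
print(z_joint, z_product)
```

The agreement holds only because the joint energy contains no interaction term coupling \(s_A\) and \(s_B\).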

The factorisation property has a profound implication for the partition function of a complex molecule. Any system whose Hamiltonian can be written as a sum of independent terms \(H = H_1 + H_2 + \ldots\) has a partition function that factorises as \(Z = Z_1 Z_2\cdots\). This means we can compute the thermal properties of each part separately and combine them by addition of free energies. For a diatomic gas, the translational, rotational, vibrational, and electronic degrees of freedom all satisfy this condition (to a good approximation), and their separate partition functions, computed in Chapters 2 and 3, immediately give the full thermodynamics of the molecule. The factorisation is the partition function’s reflection of the extensivity of thermodynamics.

Chapter 4: Continuous Degrees of Freedom and the Maxwell–Boltzmann Distribution

Phase space and classical partition functions

Discrete energy levels are the quantum-mechanical default, but at sufficiently high temperatures the level spacings become negligible compared to \(k_{\rm B}T\) and we can treat position and momentum as continuous variables. The classical partition function for a single particle moving in three dimensions is

\[ Z_1 = \frac{1}{h^3}\int d^3x\,d^3p\; e^{-\beta p^2/(2m)} = \frac{V}{\lambda_{\rm dB}^3}, \]where \(\lambda_{\rm dB} = h/\sqrt{2\pi m k_{\rm B}T}\) is the thermal de Broglie wavelength. The factor of \(h^3\) in the denominator is Planck’s constant cubed: it sets the volume of one cell in phase space, which makes \(Z_1\) dimensionless and ensures that the classical expression matches the high-temperature limit of the quantum result. The thermal de Broglie wavelength sets the length scale below which quantum effects become important: when the typical inter-particle separation \((V/N)^{1/3}\) is much larger than \(\lambda_{\rm dB}\), the gas behaves classically.

The three-dimensional momentum integral

Let us evaluate the momentum integral explicitly, since this Gaussian integral reappears throughout the course. Write the three-dimensional integral as a product over Cartesian components:

\[ \int d^3p\; e^{-\beta p^2/(2m)} = \left(\int_{-\infty}^{\infty} e^{-\beta p_x^2/(2m)}\,dp_x\right)^3. \]Each one-dimensional Gaussian integral evaluates to \(\sqrt{2\pi m/\beta} = \sqrt{2\pi mk_{\rm B}T}\) using the standard result \(\int_{-\infty}^\infty e^{-ax^2}\,dx = \sqrt{\pi/a}\). Therefore

\[ \int d^3p\; e^{-\beta p^2/(2m)} = (2\pi mk_{\rm B}T)^{3/2}. \]Combining with the spatial integral \(\int d^3x = V\) and the phase-space prefactor \(1/h^3\):

\[ Z_1 = \frac{V(2\pi mk_{\rm B}T)^{3/2}}{h^3} = \frac{V}{\lambda_{\rm dB}^3}, \qquad \lambda_{\rm dB} = \frac{h}{\sqrt{2\pi mk_{\rm B}T}}. \]This is the fundamental result for the single-particle translational partition function of a classical particle.
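To get a feel for the magnitudes, the sketch below evaluates \(\lambda_{\rm dB}\) and \(Z_1\) for a nitrogen molecule at 300 K in a one-litre container. The choice of gas, temperature, and volume is ours, made purely for illustration, and the molecular mass is approximated as 28 atomic mass units.

```python
import math

H = 6.62607015e-34      # Planck constant, J s
KB = 1.380649e-23       # Boltzmann constant, J/K
AMU = 1.66053907e-27    # atomic mass unit, kg

def thermal_wavelength(mass_kg, temperature_k):
    """Thermal de Broglie wavelength h / sqrt(2 pi m k_B T), in metres."""
    return H / math.sqrt(2.0 * math.pi * mass_kg * KB * temperature_k)

def z1_translational(volume_m3, mass_kg, temperature_k):
    """Single-particle translational partition function V / lambda^3."""
    return volume_m3 / thermal_wavelength(mass_kg, temperature_k) ** 3

m_n2 = 28.0 * AMU
lam = thermal_wavelength(m_n2, 300.0)
z1 = z1_translational(1e-3, m_n2, 300.0)
print(f"lambda_dB(N2, 300 K) = {lam * 1e10:.3f} angstrom")
print(f"Z_1 for V = 1 litre  = {z1:.3e}")
```

The wavelength is a fraction of an ångström, far below the typical molecular spacing in a gas at ambient conditions, and \(Z_1\) is correspondingly enormous.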

The equipartition theorem

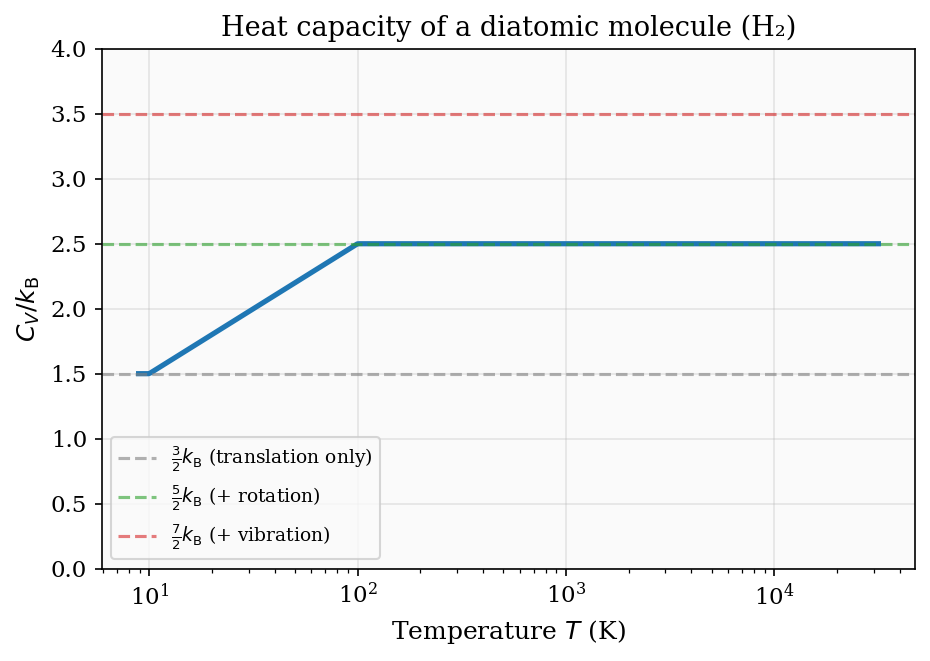

For any quadratic degree of freedom — any term of the form \(\tfrac{1}{2}a x^2\) in the Hamiltonian, whether \(x\) is a momentum component or a displacement — the contribution to the average energy is exactly \(\tfrac{1}{2}k_{\rm B}T\). Formally, this follows from the Gaussian integral: the Boltzmann weight \(e^{-\beta a x^2/2}\) gives \(\langle\tfrac{1}{2}ax^2\rangle = \tfrac{1}{2}k_{\rm B}T\). Counting degrees of freedom then immediately predicts heat capacities:

| System | Degrees of freedom | \(C_V / Nk_{\rm B}\) |
|---|---|---|
| Monatomic ideal gas | 3 translational | \(\tfrac{3}{2}\) |
| Diatomic (high \(T\)) | 3 trans + 2 rot | \(\tfrac{5}{2}\) |
| Diatomic (very high \(T\)) | 3 trans + 2 rot + 2 vib | \(\tfrac{7}{2}\) |
| 3D harmonic solid | 3 kin + 3 pot | 3 (Dulong–Petit) |

The activation of rotational and vibrational modes at increasing temperatures is visible in the measured heat capacity of hydrogen gas, rising from \(\tfrac{3}{2}\) at cryogenic temperatures through \(\tfrac{5}{2}\) at room temperature toward \(\tfrac{7}{2}\) above \(\sim 3000\ \text{K}\).

Figure 4: Schematic heat capacity of a diatomic molecule. The plateaus at \(\tfrac{3}{2}\), \(\tfrac{5}{2}\), and \(\tfrac{7}{2}\) correspond to translational, rotational, and vibrational degrees of freedom becoming active as temperature rises.

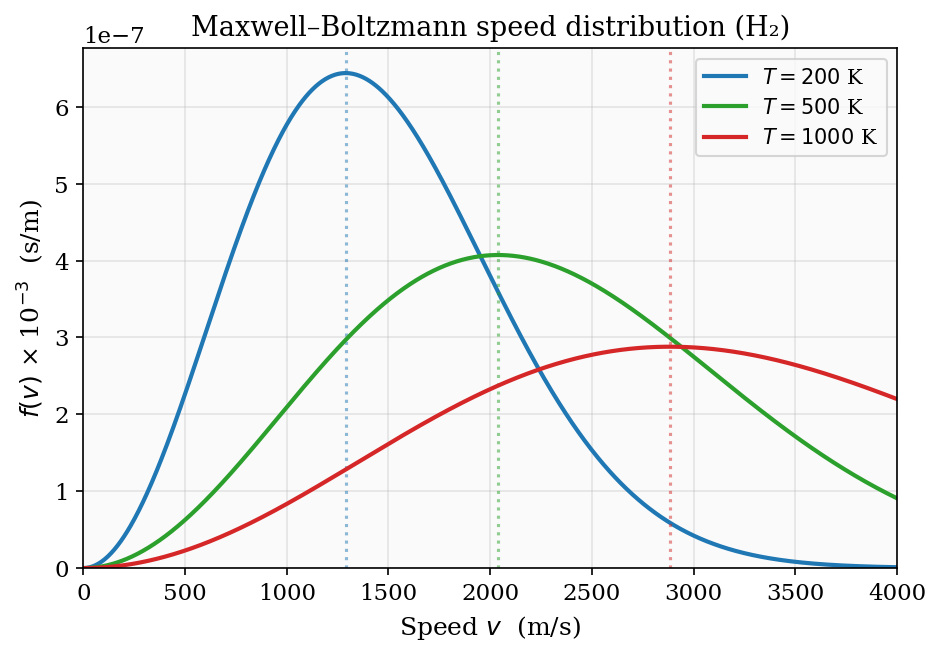

The Maxwell–Boltzmann speed distribution

The Boltzmann factor applied to a gas molecule with velocity \(\mathbf{v}\) gives a probability density proportional to \(e^{-mv^2/2k_{\rm B}T}\) in velocity space. Converting to a distribution over speed \(v = |\mathbf{v}|\) by integrating over the spherical shell of radius \(v\) in 3D velocity space:

\[ f(v) = 4\pi \left(\frac{m}{2\pi k_{\rm B}T}\right)^{3/2} v^2\, e^{-mv^2/2k_{\rm B}T}. \]The factor \(v^2\) (surface area of the sphere) and the exponential (Boltzmann weight) together create a distribution with a well-defined most-probable speed \(v_p = \sqrt{2k_{\rm B}T/m}\), a mean speed \(\langle v\rangle = \sqrt{8k_{\rm B}T/\pi m}\), and an rms speed \(\sqrt{\langle v^2\rangle} = \sqrt{3k_{\rm B}T/m}\).

Deriving the mean and rms speeds from Gaussian integrals. The relevant integrals are of the form \(\int_0^\infty v^n e^{-v^2/v_0^2}\,dv\), where \(v_0 = \sqrt{2k_{\rm B}T/m}\). For the normalisation, \(n=2\): \(\int_0^\infty v^2 e^{-v^2/v_0^2}\,dv = \sqrt{\pi}v_0^3/4\). The mean speed uses \(n=3\): \(\int_0^\infty v^3 e^{-v^2/v_0^2}\,dv = v_0^4/2\). The mean-square speed uses \(n=4\): \(\int_0^\infty v^4 e^{-v^2/v_0^2}\,dv = 3\sqrt{\pi}v_0^5/8\). Combining these with the normalisation factor \(4\pi(m/2\pi k_{\rm B}T)^{3/2} = 4/(\sqrt{\pi}v_0^3)\):

\[ \langle v\rangle = \frac{4}{\sqrt{\pi}v_0^3}\cdot\frac{v_0^4}{2} = \frac{2v_0}{\sqrt{\pi}} = \sqrt{\frac{8k_{\rm B}T}{\pi m}}, \]\[ \langle v^2\rangle = \frac{4}{\sqrt{\pi}v_0^3}\cdot\frac{3\sqrt{\pi}v_0^5}{8} = \frac{3v_0^2}{2} = \frac{3k_{\rm B}T}{m}, \]so the rms speed is \(v_{\rm rms} = \sqrt{3k_{\rm B}T/m}\). All three characteristic speeds grow as \(\sqrt{T}\) and decrease as \(1/\sqrt{m}\) — heavier molecules move slower at the same temperature, which underlies the phenomenon of effusion.
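The Gaussian integrals above can be cross-checked by direct numerical integration. The sketch below works in units where \(v_0 = 1\) and uses a simple midpoint rule; the step count and the cutoff at \(12\,v_0\) are arbitrary choices that are more than sufficient for this integrand.

```python
import math

def mb_moment(n, v0=1.0, steps=20000, v_max_factor=12.0):
    """<v^n> for the Maxwell-Boltzmann speed distribution, by the midpoint rule.

    Speeds are measured in units where v0 = sqrt(2 k_B T / m).
    """
    norm = 4.0 / (math.sqrt(math.pi) * v0 ** 3)
    dv = v_max_factor * v0 / steps
    total = 0.0
    for i in range(steps):
        v = (i + 0.5) * dv
        total += v ** (n + 2) * math.exp(-(v / v0) ** 2)
    return norm * total * dv

mean_v = mb_moment(1)
rms_v = math.sqrt(mb_moment(2))
print(f"normalisation = {mb_moment(0):.6f}")
print(f"<v>/v0   = {mean_v:.6f}  (2/sqrt(pi) = {2 / math.sqrt(math.pi):.6f})")
print(f"v_rms/v0 = {rms_v:.6f}  (sqrt(3/2)  = {math.sqrt(1.5):.6f})")
```

Since \(v_p = v_0\), the printed ratios also give the ordering \(v_p < \langle v\rangle < v_{\rm rms}\).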

Figure 3: The Maxwell–Boltzmann speed distribution for H\(_2\) at 200 K, 500 K, and 1000 K. The peak shifts to higher speeds and the distribution broadens as temperature increases; dotted vertical lines mark the most-probable speed \(v_p\).

Effusion and Graham’s law

Effusion is the process by which gas molecules escape through a small hole in a container wall — small enough that the equilibrium Maxwell–Boltzmann distribution is not disturbed. The flux of molecules hitting a unit area of wall per unit time is

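\[ \Phi = n\int_{v_z > 0} v_z\, f(\mathbf{v})\,d^3v, \]where \(f(\mathbf{v})\) is the normalised Maxwell–Boltzmann velocity distribution, \(n\) is the number density, and the integral is over all velocities with positive \(z\)-component (moving toward the wall). Because \(f(\mathbf{v})\) is a product of independent Gaussians in \(v_x\), \(v_y\), and \(v_z\), the \(v_x\) and \(v_y\) integrals each give unity, leaving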

\[ \Phi = n\int_0^\infty v_z\left(\frac{m}{2\pi k_{\rm B}T}\right)^{1/2}e^{-mv_z^2/2k_{\rm B}T}\,dv_z = n\sqrt{\frac{k_{\rm B}T}{2\pi m}} = \frac{n\langle v\rangle}{4}, \]where \(n = N/V\) is the number density. The result \(\Phi = n\langle v\rangle/4\) is the standard effusion formula. Since \(\langle v\rangle \propto 1/\sqrt{m}\), the effusion rate is inversely proportional to the square root of the molecular mass — this is Graham’s law of effusion. Lighter gases escape through a pinhole faster; historically this was used to separate uranium isotopes (\(^{235}\)UF\(_6\) vs \(^{238}\)UF\(_6\)) by repeated effusion through porous barriers.
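Graham's law makes the difficulty of isotope separation quantitative. The sketch below computes the ratio of effusive fluxes for the two uranium hexafluoride species, using integer mass numbers as approximate molar masses, and then estimates how many ideal stages would be needed to raise the isotope ratio tenfold. The stage count assumes each stage achieves the full single-stage factor, which is an idealisation; the target gain of 10 is an arbitrary example.

```python
import math

def effusion_rate_ratio(mass_light, mass_heavy):
    """Flux ratio (light over heavy) at equal density and temperature."""
    return math.sqrt(mass_heavy / mass_light)

# Approximate molar masses in g/mol (integer mass numbers; fluorine taken as 19)
m_235uf6 = 235 + 6 * 19
m_238uf6 = 238 + 6 * 19

alpha = effusion_rate_ratio(m_235uf6, m_238uf6)
print(f"single-stage enrichment factor = {alpha:.5f}")

# Idealised number of stages to multiply the 235/238 abundance ratio by target_gain
target_gain = 10.0
stages = math.log(target_gain) / math.log(alpha)
print(f"ideal stages for a {target_gain:.0f}x gain = {stages:.0f}")
```

Because the single-stage factor differs from unity by less than half a percent, hundreds of stages are required even in this idealised estimate.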

The barometric formula

The Boltzmann factor applies to any form of energy, not just kinetic energy. A molecule at height \(z\) in Earth’s atmosphere has gravitational potential energy \(U = mgz\). The probability of finding a molecule at height \(z\) is therefore proportional to \(e^{-mgz/k_{\rm B}T}\), and since the number density \(n(z)\) is proportional to this probability:

\[ n(z) = n_0\, e^{-mgz/k_{\rm B}T} = n_0\, e^{-z/H}, \]where the scale height is \(H = k_{\rm B}T/(mg)\). This is the barometric formula, also called the law of atmospheres. For nitrogen (the dominant constituent of Earth’s atmosphere, with \(m \approx 4.65\times 10^{-26}\ \text{kg}\)) at \(T = 250\ \text{K}\):

\[ H = \frac{k_{\rm B}T}{mg} = \frac{(1.38\times 10^{-23})(250)}{(4.65\times 10^{-26})(9.8)} \approx 7.6\ \text{km}. \]The atmospheric pressure falls to \(1/e \approx 37\%\) of its sea-level value at \(z \approx 7.6\ \text{km}\), consistent with the observation that Mount Everest (8.8 km) has roughly one-third of sea-level atmospheric pressure. The exponential dependence on \(mgz/k_{\rm B}T\) is a direct consequence of the Boltzmann factor — the atmosphere is a system in thermal equilibrium (approximately) with a spatially varying external potential.
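The arithmetic for the scale height, and the comparison with Everest, can be reproduced in a few lines. The sketch below uses the same nitrogen mass and temperature as the text; the function names are illustrative.

```python
import math

KB = 1.380649e-23   # Boltzmann constant, J/K
G = 9.8             # gravitational acceleration, m/s^2

def scale_height(mass_kg, temperature_k):
    """Isothermal scale height k_B T / (m g), in metres."""
    return KB * temperature_k / (mass_kg * G)

def density_fraction(z_m, mass_kg, temperature_k):
    """n(z)/n_0 from the barometric formula."""
    return math.exp(-z_m / scale_height(mass_kg, temperature_k))

m_n2 = 4.65e-26     # kg
H = scale_height(m_n2, 250.0)
everest = density_fraction(8800.0, m_n2, 250.0)
print(f"H = {H / 1e3:.2f} km")
print(f"n(8.8 km)/n_0 = {everest:.3f}")
```

At fixed temperature the pressure ratio equals the density ratio, so the second number is also the predicted fraction of sea-level pressure at the summit.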

It is worth noting that the barometric formula is consistent with hydrostatic equilibrium. The pressure gradient required to support the weight of the gas is \(dp/dz = -\rho g = -nmg\). The ideal gas law \(p = nk_{\rm B}T\) (at constant \(T\)) gives \(dp = k_{\rm B}T\,dn\), so \(k_{\rm B}T\,dn/dz = -nmg\), or \(dn/n = -mg\,dz/(k_{\rm B}T)\). Integrating recovers the barometric formula. The Boltzmann statistical argument and the fluid mechanical hydrostatic argument are two perspectives on the same result, connected by the thermodynamic equation of state.

Isothermal versus adiabatic atmospheres. The barometric formula assumes a constant temperature \(T\), which is an approximation. In reality, the temperature of the atmosphere decreases with altitude at an average rate of about \(6.5\ \text{K/km}\) in the troposphere. This observed lapse rate is somewhat smaller than the dry adiabatic value \(g/c_p \approx 9.8\ \text{K/km}\), the threshold beyond which a rising, adiabatically expanding air parcel becomes buoyantly unstable; latent heat released by condensing water vapour accounts for much of the difference. At higher altitudes (stratosphere and above), the temperature profile is more complex. The barometric formula with a constant \(T\) is a good approximation for order-of-magnitude estimates and for understanding the basic physics; a more accurate treatment requires solving the coupled equations of hydrostatics, thermodynamics, and radiative transfer.

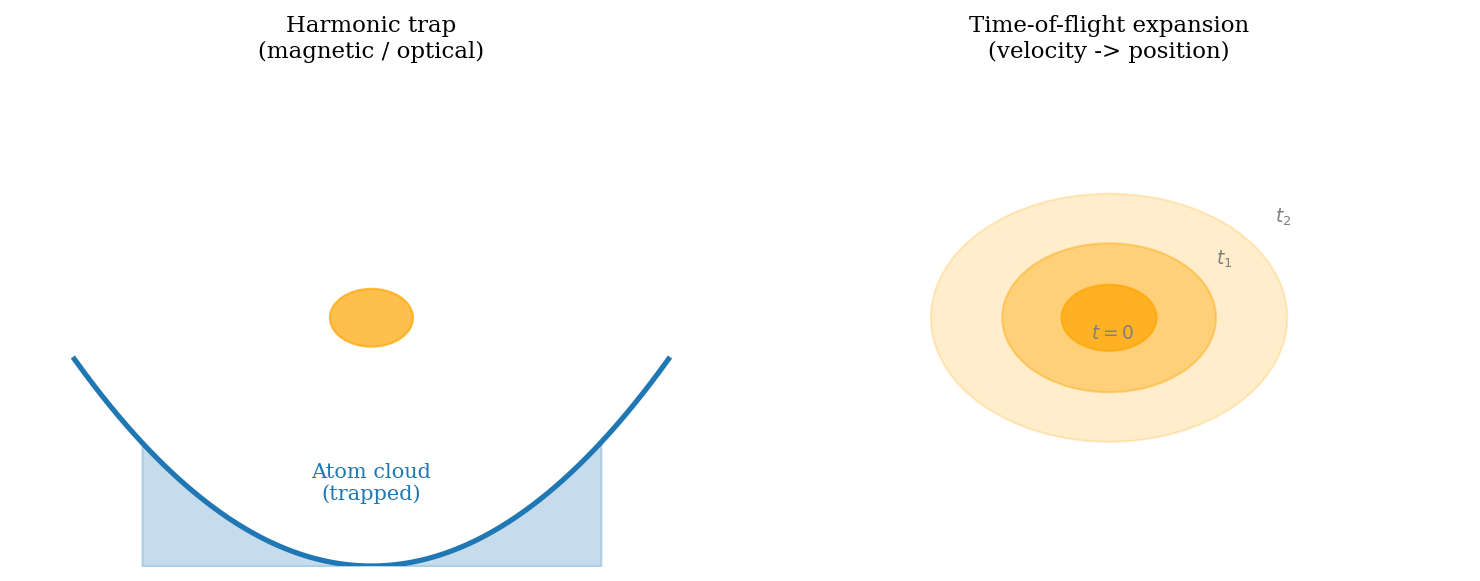

The derivation also illustrates a general principle: in the local density approximation (LDA), a system in an external potential \(U(\mathbf{r})\) has a local chemical potential \(\mu_{\rm local}(\mathbf{r}) = \mu - U(\mathbf{r})\), and the local density is whatever density gives the right \(\mu_{\rm local}\). This generalisation will be important when we study trapped atomic gases in Chapter 14.

Chapter 5: The Grand Canonical Ensemble

Chemical potential and particle exchange

The canonical ensemble holds \(N\) fixed. Many physical situations involve particle exchange: a metal in contact with an electron reservoir, a gas in contact with a larger gas, a protein that can bind or release ligand molecules. The relevant ensemble allows both energy and particle number to fluctuate.

The chemical potential \(\mu\) is the thermodynamic variable conjugate to particle number, defined by

\[ \mu = \left(\frac{\partial U}{\partial N}\right)_{S,V} = \left(\frac{\partial F}{\partial N}\right)_{T,V}. \]Just as temperature drives energy to flow from high-\(T\) to low-\(T\) regions until equilibrium is reached, chemical potential drives particles to flow from high-\(\mu\) to low-\(\mu\) regions until the chemical potentials equalise. This is diffusive equilibrium.

Diffusive equilibrium from entropy maximisation

The condition for diffusive equilibrium follows from the same entropy-maximisation argument we used for thermal equilibrium. Consider two systems with particle numbers \(N_1\) and \(N_2\), with \(N_1 + N_2 = N_{\rm tot}\) fixed, in contact at the same temperature \(T\). The total entropy is \(S_{\rm tot}(N_1) = S_1(N_1) + S_2(N_{\rm tot} - N_1)\). Maximising over \(N_1\):

\[ \frac{\partial S_{\rm tot}}{\partial N_1} = \frac{\partial S_1}{\partial N_1} - \frac{\partial S_2}{\partial N_2} = 0 \quad \Rightarrow \quad \frac{\partial S_1}{\partial N_1}\bigg|_{E,V} = \frac{\partial S_2}{\partial N_2}\bigg|_{E,V}. \]The thermodynamic identity \(dU = T\,dS - p\,dV + \mu\,dN\) gives \(\partial S/\partial N|_{E,V} = -\mu/T\), so the equilibrium condition is \(-\mu_1/T = -\mu_2/T\), i.e.\ \(\mu_1 = \mu_2\). Particles flow from high \(\mu\) to low \(\mu\), exactly as heat flows from high \(T\) to low \(T\).

By extending the Boltzmann argument — now treating a system in contact with a reservoir of both energy and particles — one finds that the probability of a microstate \(s\) with energy \(E_s\) and particle number \(N_s\) is

\[ P(s) = \frac{1}{\mathcal{Z}}\,e^{-\beta(E_s - \mu N_s)}, \]where the grand partition function (also called the grand sum) is

\[ \mathcal{Z} = \sum_{s} e^{-\beta(E_s - \mu N_s)} = \sum_{N=0}^\infty e^{\beta\mu N}\,Z_N, \]with \(Z_N\) the \(N\)-particle canonical partition function. The average particle number is \(\langle N\rangle = k_{\rm B}T\,\partial\ln\mathcal{Z}/\partial\mu\), and the average energy is \(\langle E\rangle = -\partial\ln\mathcal{Z}/\partial\beta + \mu\langle N\rangle\).
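The relation \(\langle N\rangle = k_{\rm B}T\,\partial\ln\mathcal{Z}/\partial\mu\) can be tested on a case where the sum over \(N\) is known in closed form. The sketch below assumes the ideal-gas form \(Z_N = Z_1^N/N!\) (justified in Chapter 6), for which the series sums to \(\ln\mathcal{Z} = e^{\beta\mu}Z_1\). The numerical values of \(Z_1\) and \(\beta\mu\) are arbitrary, and the truncation `n_max` is an illustrative choice.

```python
import math

def ln_grand_z(beta_mu, z1, n_max=200):
    """ln of sum_N exp(beta*mu*N) Z_1^N / N!, summed term by term in log space."""
    log_terms = [N * (beta_mu + math.log(z1)) - math.lgamma(N + 1)
                 for N in range(n_max + 1)]
    m = max(log_terms)
    return m + math.log(sum(math.exp(t - m) for t in log_terms))

z1 = 50.0          # illustrative single-particle partition function
beta_mu = -1.0     # illustrative value of mu/(k_B T)
h = 1e-5

# At fixed beta, k_B T d/d(mu) is the same as d/d(beta*mu)
mean_n = (ln_grand_z(beta_mu + h, z1) - ln_grand_z(beta_mu - h, z1)) / (2 * h)
closed_form = math.exp(beta_mu) * z1
print(f"<N> from the derivative      = {mean_n:.6f}")
print(f"closed form exp(beta mu) Z_1 = {closed_form:.6f}")
```

The two numbers agree, and for this model \(\ln\mathcal{Z}\) itself equals \(\langle N\rangle\).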

Figure 2: The three ensembles. In the microcanonical (left) the system is isolated; in the canonical (centre) it can exchange heat with a reservoir at temperature \(T\); in the grand canonical (right) it exchanges both heat and particles with a reservoir at temperature \(T\) and chemical potential \(\mu\).

The grand potential

The thermodynamic potential naturally associated with the grand canonical ensemble is the grand potential:

\[ \Phi = -k_{\rm B}T\ln\mathcal{Z} = U - TS - \mu\langle N\rangle. \]All thermodynamic quantities follow from derivatives of \(\Phi\). The entropy is

\[ S = -\frac{\partial\Phi}{\partial T}\bigg|_{\mu,V}, \]the mean particle number is

\[ \langle N\rangle = -\frac{\partial\Phi}{\partial\mu}\bigg|_{T,V}, \]and the pressure is

\[ p = -\frac{\partial\Phi}{\partial V}\bigg|_{T,\mu}. \]For a classical ideal gas, the canonical partition function is \(Z_N = Z_1^N/N!\) (derived in Chapter 6), so the sum over \(N\) is an exponential series, \(\ln\mathcal{Z} = e^{\beta\mu}Z_1\), and \(\Phi = -k_{\rm B}T\,e^{\beta\mu}Z_1\). The derivatives above then reproduce the ideal gas law and the Sackur–Tetrode entropy. The grand potential plays the same role for the grand canonical ensemble that the Helmholtz free energy \(F\) plays for the canonical ensemble: it is the appropriate free energy to minimise when \(T\) and \(\mu\) (rather than \(T\) and \(N\)) are the natural variables.

A biological application: haemoglobin

The grand canonical ensemble provides a beautiful model of how haemoglobin carries oxygen in the blood. Treat each of the four binding sites on a haemoglobin molecule as an independent two-state system: either empty (energy \(0\), zero particles) or occupied by an O\(_2\) molecule (energy \(-\varepsilon < 0\), one particle). The grand partition function for a single site is

\[ \mathcal{Z}_{\rm site} = 1 + e^{\beta(\mu + \varepsilon)}, \]and the average occupation (fraction of the time the site is occupied by oxygen) is

\[ \langle n\rangle = \frac{e^{\beta(\mu+\varepsilon)}}{1 + e^{\beta(\mu+\varepsilon)}} = \frac{1}{1 + e^{-\beta(\mu+\varepsilon)}}. \]This is a Fermi–Dirac-like distribution, even though we are dealing with a classical binding problem; the underlying reason is that the site is either empty or singly occupied (occupation restricted to 0 or 1), which is the same restriction that gives fermions their statistics.

In the blood, \(\mu\) is determined by the partial pressure of oxygen: higher O\(_2\) pressure \(\Rightarrow\) larger \(\mu\) (by the ideal gas relation \(\mu = k_{\rm B}T\ln(P/P_0)\)). In the lungs, O\(_2\) pressure is high, \(\mu\) is large, and \(\langle n\rangle\) is close to 1 — the haemoglobin loads up with oxygen. In peripheral tissues where O\(_2\) is consumed, the pressure is lower, \(\mu\) drops, and \(\langle n\rangle\) decreases — oxygen is released. The operating point in the lungs corresponds roughly to \(\langle n\rangle \approx 0.95\) while in resting tissue the operating point is \(\langle n\rangle \approx 0.7\), releasing about a quarter of the oxygen with each pass. Under exercise, the tissue O\(_2\) partial pressure drops further and \(\langle n\rangle\) falls to \(0.3\)–\(0.5\), releasing much more oxygen per cycle.

Real haemoglobin has cooperative binding among the four sites — binding one O\(_2\) makes it easier for the subsequent sites to bind, giving a sigmoidal loading curve that is steeper than the single-site prediction. The Hill equation \(\langle n\rangle = p^h/(K^h + p^h)\) with Hill coefficient \(h \approx 2.8\) for haemoglobin captures this cooperativity phenomenologically; the single-site grand canonical result is recovered for \(h = 1\). The cooperative binding sharpens the loading/unloading switch, making haemoglobin a much more efficient oxygen transporter than a non-cooperative binder would be.
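The link between the single-site result and the Hill equation can be made explicit. With \(\mu = k_{\rm B}T\ln(p/P_0)\), the site occupancy becomes \(p/(K + p)\) with \(K = P_0 e^{-\beta\varepsilon}\), which is the Hill form with \(h = 1\). The sketch below checks this numerically and prints the \(h = 2.8\) curve alongside; the values of \(k_{\rm B}T\), \(\varepsilon\), and \(P_0\) are arbitrary illustrative units, not physiological data.

```python
import math

def site_occupancy(mu, eps, kT):
    """Mean occupation of one independent site: 1 / (1 + exp(-(mu + eps)/kT))."""
    return 1.0 / (1.0 + math.exp(-(mu + eps) / kT))

def hill(p, K, h):
    """Hill form; h = 1 reproduces the independent-site curve."""
    return p ** h / (K ** h + p ** h)

kT, eps, p0 = 1.0, 2.0, 1.0            # arbitrary illustrative units
K = p0 * math.exp(-eps / kT)           # half-saturation pressure of a single site
for p in (0.25 * K, K, 4.0 * K):
    mu = kT * math.log(p / p0)
    print(f"p/K = {p / K:4.2f}: site = {site_occupancy(mu, eps, kT):.3f}, "
          f"Hill h=1 = {hill(p, K, 1.0):.3f}, Hill h=2.8 = {hill(p, K, 2.8):.3f}")
```

All three curves pass through one half at \(p = K\); the cooperative curve is lower below \(K\) and higher above it, which is the sharper switching described in the text.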

Chapter 6: The Classical Ideal Gas Re-derived

The quantum mechanical gas in a box

A structureless particle of mass \(m\) in a cubic box of side \(L\) has energy levels determined by the requirement that the wave function vanishes at the walls. For a particle in a box with standing-wave boundary conditions, the allowed wave vectors are \(k_i = n_i\pi/L\) with \(n_i = 1, 2, 3, \ldots\), giving energy levels

\[ E_{\mathbf{n}} = \frac{\hbar^2\pi^2}{2mL^2}\left(n_x^2 + n_y^2 + n_z^2\right) = \frac{h^2}{8mL^2}\left(n_x^2 + n_y^2 + n_z^2\right). \]For a macroscopic box at room temperature, the ground-state energy \(h^2/8mL^2\) is of order \(10^{-38}\ \text{J}\) — many orders of magnitude below \(k_{\rm B}T \sim 10^{-21}\ \text{J}\). The levels are so densely packed that the single-particle partition function is well approximated by an integral over continuous \(\mathbf{n}\), or equivalently over continuous momentum. Switching to periodic boundary conditions (which give \(k_i = 2\pi n_i/L\) with \(n_i \in \mathbb{Z}\)) and converting the sum over lattice points in \(\mathbf{k}\)-space to an integral using the density of states \(V/(2\pi)^3\) per unit \(k^3\)-volume:

\[ Z_1 = \frac{V}{(2\pi)^3}\int d^3k\; e^{-\beta\hbar^2 k^2/(2m)} = \frac{V}{h^3}\int d^3p\; e^{-\beta p^2/(2m)} = V\left(\frac{2\pi m k_{\rm B}T}{h^2}\right)^{3/2} = \frac{V}{\lambda_{\rm dB}^3}. \]This shows how the discrete quantum sum over states in a box becomes, in the classical limit, precisely the phase-space integral with the Planck cell volume \(h^3\). The quantum derivation thus justifies the classical phase-space prescription — there is no ambiguity about factors of \(h\).

Indistinguishability and the Gibbs paradox

For distinguishable particles the \(N\)-particle partition function would be \(Z_1^N\). But gas molecules are indistinguishable: swapping two identical molecules does not produce a new microstate. Naively using \(Z_N = Z_1^N\) for an ideal gas leads to a profound inconsistency known as the Gibbs paradox. Consider two portions of the same ideal gas: \(N/2\) molecules in volume \(V/2\) on the left, and \(N/2\) molecules of the same gas in volume \(V/2\) on the right. When the partition is removed, a classical calculation with \(Z_N = Z_1^N\) gives an entropy of mixing

\[ \Delta S_{\rm mixing} = Nk_{\rm B}\ln 2 > 0, \]arising because each molecule now has twice the volume available. But physically, mixing two portions of the same gas should produce no entropy change — there is no way to tell whether a molecule in the combined system came from the left or right. The Gibbs paradox is an artificial entropy of mixing that arises from treating identical particles as distinguishable.

The resolution is to divide by \(N!\), accounting for the fact that permuting the \(N\) molecules does not produce a distinct microstate:

\[ Z_N = \frac{Z_1^N}{N!}. \]With this correction, the entropy of mixing identical gases is exactly zero, as it must be. The division by \(N!\) is not a semiclassical patch — it is the correct classical limit of the quantum treatment of identical bosons or fermions when the phase-space density \(N\lambda_{\rm dB}^3/V \ll 1\). In the quantum treatment, identical bosons (fermions) have symmetric (antisymmetric) wave functions, and in the dilute limit the symmetrisation produces exactly the \(N!\) suppression of the partition function.
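The effect of the \(N!\) can be seen by evaluating the entropy change on removing the partition with and without it. The sketch below uses the entropies that follow from \(F = -k_{\rm B}T\ln Z_N\) with \(Z_1 = V/\lambda_{\rm dB}^3\) and Stirling's approximation; the values of \(N\) and \(V\) are arbitrary, and lengths are measured in units of \(\lambda_{\rm dB}\).

```python
import math

def entropy_distinguishable(N, V, lam=1.0):
    """S/k_B from Z_N = Z_1^N: N [ln(V/lam^3) + 3/2]."""
    return N * (math.log(V / lam ** 3) + 1.5)

def entropy_indistinguishable(N, V, lam=1.0):
    """S/k_B from Z_N = Z_1^N / N! with Stirling: N [ln(V/(N lam^3)) + 5/2]."""
    return N * (math.log(V / (N * lam ** 3)) + 2.5)

N, V = 1.0e6, 1.0e9   # illustrative values in units where lam = 1
for label, S in (("Z_1^N", entropy_distinguishable),
                 ("Z_1^N / N!", entropy_indistinguishable)):
    before = 2.0 * S(N / 2, V / 2)   # two separate halves
    after = S(N, V)                  # partition removed
    print(f"{label:>10}: Delta S / (N k_B) = {(after - before) / N:.6f}")
print(f"ln 2 = {math.log(2):.6f}")
```

Without the \(N!\) the entropy per particle jumps by \(\ln 2\); with it the change vanishes because the entropy depends on \(V\) only through the ratio \(V/N\).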

The Sackur-Tetrode entropy and the ideal gas law

From \(F = -k_{\rm B}T\ln Z_N\) we extract all ideal gas properties. Using Stirling’s approximation \(\ln N! \approx N\ln N - N\):

\[ F = Nk_{\rm B}T\left[\ln\!\left(\frac{N\lambda_{\rm dB}^3}{V}\right) - 1\right]. \]The pressure is \(p = -\partial F/\partial V = Nk_{\rm B}T/V\), recovering the ideal gas law \(pV = Nk_{\rm B}T\) from a microscopic derivation. The entropy is

\[ S = Nk_{\rm B}\left[\ln\!\left(\frac{V}{N\lambda_{\rm dB}^3}\right) + \frac{5}{2}\right]. \]This is the Sackur–Tetrode equation, derived independently by Sackur and Tetrode in 1911. It is remarkable for two reasons. First, it contains Planck’s constant \(h\) (through \(\lambda_{\rm dB}\)), so even the classical entropy of a gas knows about quantum mechanics. Second, it is extensive: if you double \(N\) and \(V\), the entropy doubles — the \(\ln(V/N)\) factor ensures this.

Numerical check for helium. At standard temperature and pressure (\(T = 273.15\ \text{K}\), \(p = 101.325\ \text{kPa}\)), the number density of helium (mass \(m = 4 \times 1.66\times 10^{-27}\ \text{kg}\)) is \(n = p/(k_{\rm B}T) \approx 2.69\times 10^{25}\ \text{m}^{-3}\). The thermal de Broglie wavelength is \(\lambda_{\rm dB} = h/\sqrt{2\pi mk_{\rm B}T} \approx 0.53\ \text{\AA}\). The Sackur–Tetrode formula gives

\[ \frac{S}{Nk_{\rm B}} = \ln\!\left(\frac{n^{-1}}{\lambda_{\rm dB}^3}\right) + \frac{5}{2} \approx \ln\!\left(\frac{(3.7\times 10^{-26})}{(1.5\times 10^{-31})}\right) + 2.5 \approx \ln(2.5\times 10^5) + 2.5 \approx 12.4 + 2.5 = 14.9. \]The tabulated standard entropy of helium, \(126.2\ \text{J}\,\text{mol}^{-1}\,\text{K}^{-1}\) at \(298.15\ \text{K}\) and 1 bar, corresponds to \(S/(Nk_{\rm B}) \approx 15.2\). Shifting to \(273.15\ \text{K}\) at constant pressure lowers this by \(\tfrac{5}{2}\ln(298.15/273.15) \approx 0.22\), to about \(14.9\), so the Sackur–Tetrode prediction agrees with experiment to within rounding. The fact that this formula, derived purely from quantum mechanics and statistical mechanics, predicts the absolute entropy of a gas (not merely entropy differences) to such accuracy is a triumph.

The chemical potential of the ideal gas

The chemical potential follows from \(\mu = \partial F/\partial N\):

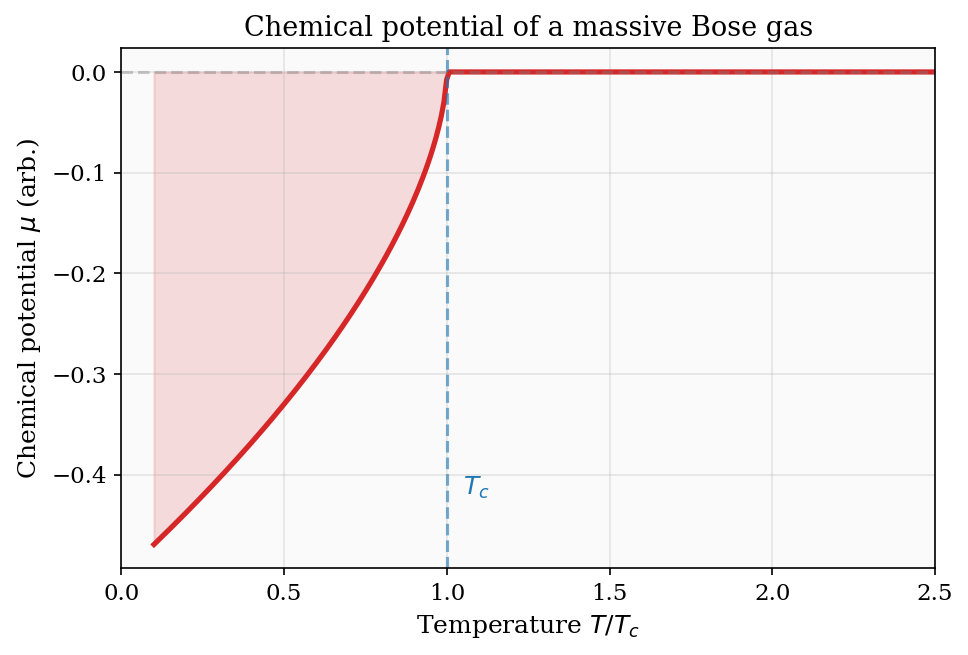

\[ \mu = k_{\rm B}T\ln\!\left(\frac{N\lambda_{\rm dB}^3}{V}\right). \]This connects Chapters 5 and 6 directly. For a dilute classical gas, \(N\lambda_{\rm dB}^3/V \ll 1\) and \(\mu\) is large and negative: adding a particle at fixed \(T\) and \(V\) lowers the free energy, because in a sparse gas the entropy gained outweighs the energy added. As the gas is compressed (larger \(N/V\)) or cooled (larger \(\lambda_{\rm dB}\)), \(\mu\) rises toward zero. When \(\mu\) reaches zero, the phase-space density \(N\lambda_{\rm dB}^3/V \approx 1\) and quantum effects become dominant; this signals the onset of quantum degeneracy, the subject of Part II. For bosons, \(\mu = 0\) is the threshold for Bose–Einstein condensation; for fermions, \(\mu = 0\) signals that the Fermi energy is comparable to \(k_{\rm B}T\).
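A quick evaluation shows how negative \(\mu\) is under everyday conditions. The sketch below uses nitrogen at 300 K and 1 atm, an example chosen here for illustration, and includes only the translational contribution, so the internal (rotational, vibrational) partition functions are ignored.

```python
import math

H = 6.62607015e-34      # Planck constant, J s
KB = 1.380649e-23       # Boltzmann constant, J/K
AMU = 1.66053907e-27    # atomic mass unit, kg
EV = 1.602176634e-19    # joules per electronvolt

def mu_over_kT(number_density, mass_kg, temperature_k):
    """Translational chemical potential in units of k_B T: ln(n lambda^3)."""
    lam = H / math.sqrt(2.0 * math.pi * mass_kg * KB * temperature_k)
    return math.log(number_density * lam ** 3)

T = 300.0
p = 101325.0
n = p / (KB * T)                       # ideal-gas number density
x = mu_over_kT(n, 28.0 * AMU, T)
print(f"n lambda^3 = {math.exp(x):.3e}, mu/(k_B T) = {x:.2f}, "
      f"mu = {x * KB * T / EV:.3f} eV")
```

Doubling the density at fixed temperature raises \(\mu\) by exactly \(k_{\rm B}T\ln 2\), which is the logarithmic dependence on \(N/V\) in the formula.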

The internal partition function

For diatomic and polyatomic molecules, the single-particle partition function factorises into contributions from each independent degree of freedom:

\[ Z_1 = Z_{\rm trans}\, Z_{\rm rot}\, Z_{\rm vib}\, Z_{\rm el}. \]This factorisation holds because the total energy decomposes as \(E = E_{\rm trans} + E_{\rm rot} + E_{\rm vib} + E_{\rm el}\) (to the extent that the modes are independent, i.e.\ Born–Oppenheimer approximation and rigid rotor approximation). The Helmholtz free energy and entropy then simply add the contributions from each factor. This additivity — a consequence of the logarithm in \(F = -k_{\rm B}T\ln Z\) — makes the calculation of thermodynamic quantities for complex molecules systematic: compute each partition function independently and sum the resulting free energies.

The electronic partition function \(Z_{\rm el}\) is typically just the ground-state degeneracy at low temperature (since the first electronic excitation energy is usually \(\gg k_{\rm B}T\) at chemically relevant temperatures). The vibrational partition function is the harmonic oscillator result derived in Chapter 2. The rotational partition function is the rigid rotor result from Chapter 3. Together, they account for all the observed heat capacities of diatomic molecules as a function of temperature.

The factorisation also explains the law of mass action for chemical reactions. The equilibrium constant \(K_{\rm eq}\) of a gas-phase reaction is determined by \(\Delta F = -k_{\rm B}T\ln K_{\rm eq}\), where \(\Delta F\) is the difference in Helmholtz free energies of products and reactants. Since \(F = -k_{\rm B}T\ln Z_{\rm trans} - k_{\rm B}T\ln Z_{\rm rot} - k_{\rm B}T\ln Z_{\rm vib} - k_{\rm B}T\ln Z_{\rm el}\), each mode contributes additively and independently to \(\Delta F\). This separation underlies the success of transition state theory: the rate of a chemical reaction can be computed from the partition functions of reactants and the transition state complex without knowing the detailed trajectory of the reaction. Statistical mechanics thus provides the microscopic foundation for chemical kinetics.

Part II: Quantum Statistics and Applications

Chapter 7: Bosons, Fermions, and the Three Distributions

Identical particles and exchange symmetry

Classical statistical mechanics treats all particles as distinguishable, even when they are nominally identical. Quantum mechanics forbids this. The many-body wave function of \(N\) identical particles must be either symmetric under any transposition of two particles (for bosons) or antisymmetric (for fermions). Which symmetry applies is determined by the particle's spin: integer-spin particles are bosons (photons, helium-4, \(^{87}\)Rb atoms), while half-integer-spin particles are fermions (electrons, protons, neutrons, \(^6\)Li atoms).

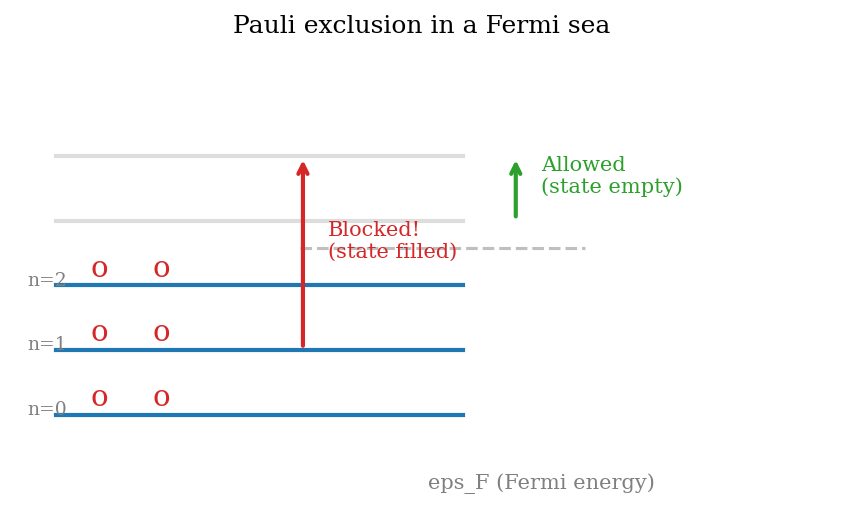

The consequences of exchange symmetry are dramatic. For fermions, antisymmetry implies that no two particles can occupy the same single-particle state — the Pauli exclusion principle. For bosons, symmetry implies that multiple particles favour the same state (bunching). These tendencies persist even in the complete absence of any direct interaction between the particles, and they dominate the thermodynamics of quantum gases at low temperatures or high densities.

The relevant dimensionless parameter is the phase-space density: \(\Phi = N\lambda_{\rm dB}^3/V\). When \(\Phi \ll 1\) (dilute, high temperature), particles rarely occupy the same state and classical Boltzmann statistics apply. When \(\Phi \gtrsim 1\), quantum statistics become essential. To evaluate the importance of quantum effects in a few systems: for electrons in copper at room temperature, \(n \approx 8.5\times 10^{28}\ \text{m}^{-3}\) and \(\lambda_{\rm dB} \approx 4.3\ \text{nm}\), giving \(n\lambda_{\rm dB}^3 \approx 7\times 10^{3}\gg 1\), so copper electrons are deeply quantum. For liquid \(^4\)He at 4 K, \(n \approx 2.2\times 10^{28}\ \text{m}^{-3}\) and \(\lambda_{\rm dB} \approx 0.44\ \text{nm}\), giving \(n\lambda_{\rm dB}^3 \approx 1.9 > 1\), so helium-4 is on the verge of quantum degeneracy, consistent with its superfluid transition at 2.17 K. For hydrogen gas at STP, \(n \approx 2.7\times 10^{25}\ \text{m}^{-3}\) and \(\lambda_{\rm dB} \approx 0.7\ \text{\AA}\), giving \(n\lambda_{\rm dB}^3 \approx 10^{-5} \ll 1\), so hydrogen at STP is classical.

Single-mode grand partition functions: bosons

The grand canonical ensemble provides the cleanest derivation of the quantum distribution functions. Consider a single energy level \(\varepsilon\). For a mode that can hold any number of bosons, the occupation number \(n\) can be any non-negative integer. The grand partition function is a geometric series:

\[ \mathcal{Z}_{\rm BE} = \sum_{n=0}^\infty e^{-\beta(\varepsilon-\mu)n} = \frac{1}{1 - e^{-\beta(\varepsilon-\mu)}}, \]valid as long as \(\mu < \varepsilon\) (so the ratio in the geometric series has magnitude less than 1). Taking the logarithm: \(\ln\mathcal{Z}_{\rm BE} = -\ln(1 - e^{-\beta(\varepsilon-\mu)})\). The mean occupation is obtained by differentiating with respect to \(\beta\varepsilon\) at fixed \(\beta\mu\):

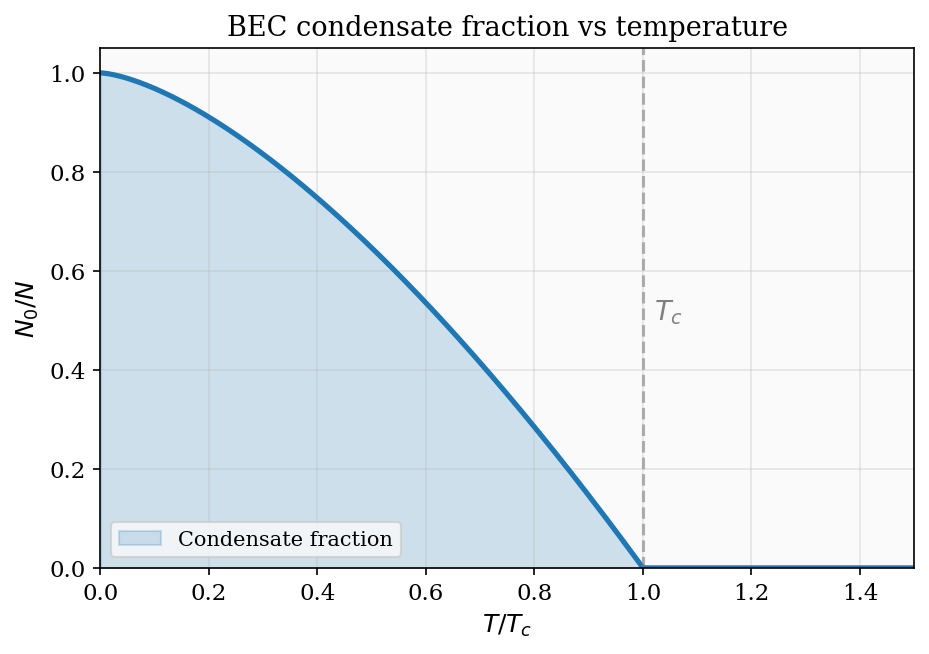

\[ \langle n_{\rm BE}\rangle = -\frac{\partial\ln\mathcal{Z}_{\rm BE}}{\partial(\beta\varepsilon)} = \frac{e^{-\beta(\varepsilon-\mu)}}{1 - e^{-\beta(\varepsilon-\mu)}} = \frac{1}{e^{\beta(\varepsilon-\mu)} - 1}. \]This is the Bose–Einstein distribution. As \(\mu\to\varepsilon^-\), the mean occupation \(\langle n_{\rm BE}\rangle\to\infty\): the mode can hold arbitrarily many bosons and will become macroscopically occupied when \(\mu\) approaches the mode energy. This divergence is the mathematical signature of Bose–Einstein condensation.
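As a numerical sanity check, the geometric series and the mean occupation can be summed directly and compared with the closed forms. The sketch below is illustrative only: the function names and the truncation `n_max` are assumptions, and the variable `x` stands for \(\beta(\varepsilon-\mu)\), which must be positive.

```python
import math


def bose_sums(x, n_max=2000):
    """Truncated grand partition function and mean occupation of one bosonic mode.

    x = beta * (epsilon - mu) must be positive for the series to converge.
    """
    weights = [math.exp(-x * n) for n in range(n_max + 1)]
    z = sum(weights)
    mean_n = sum(n * w for n, w in enumerate(weights)) / z
    return z, mean_n


def bose_einstein(x):
    """Closed-form Bose-Einstein occupation 1 / (e^x - 1)."""
    return 1.0 / math.expm1(x)


for x in (2.0, 0.5, 0.05):
    z, mean_n = bose_sums(x)
    z_closed = 1.0 / (1.0 - math.exp(-x))
    print(f"x = {x}: Z = {z:.6f} (closed form {z_closed:.6f}), "
          f"<n> = {mean_n:.6f} (closed form {bose_einstein(x):.6f})")
```

Decreasing `x` towards zero mimics \(\mu\to\varepsilon^-\): the occupation grows without bound, and the truncation `n_max` must be raised accordingly for the direct sum to keep up.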

Single-mode grand partition functions: fermions

For a fermionic mode, the occupation number is restricted to \(n \in \{0, 1\}\) by the Pauli exclusion principle. The grand partition function has just two terms:

\[ \mathcal{Z}_{\rm FD} = \sum_{n=0}^{1} e^{-\beta(\varepsilon-\mu)n} = 1 + e^{-\beta(\varepsilon-\mu)}. \]Taking the logarithm and differentiating with respect to \(\beta\varepsilon\):

\[ \langle n_{\rm FD}\rangle = -\frac{\partial\ln\mathcal{Z}_{\rm FD}}{\partial(\beta\varepsilon)} = \frac{e^{-\beta(\varepsilon-\mu)}}{1 + e^{-\beta(\varepsilon-\mu)}} = \frac{1}{e^{\beta(\varepsilon-\mu)} + 1}. \]This is the Fermi–Dirac distribution. It is bounded between 0 and 1, as required for a mode that can hold at most one fermion. At \(T = 0\), it is a step function: \(\langle n_{\rm FD}\rangle = 1\) for \(\varepsilon < \mu\) and \(\langle n_{\rm FD}\rangle = 0\) for \(\varepsilon > \mu\). At finite temperature the step is smeared over a width \(\sim k_{\rm B}T\).

In both cases, taking the classical limit \(e^{\beta(\varepsilon-\mu)} \gg 1\) (low density or high temperature, so that \(\varepsilon - \mu \gg k_{\rm B}T\) for every mode) gives

\[ \langle n\rangle \approx e^{-\beta(\varepsilon-\mu)} = e^{\beta\mu}\,e^{-\beta\varepsilon}, \]the Maxwell–Boltzmann distribution: the \(\pm 1\) in the denominator is negligible for both statistics.

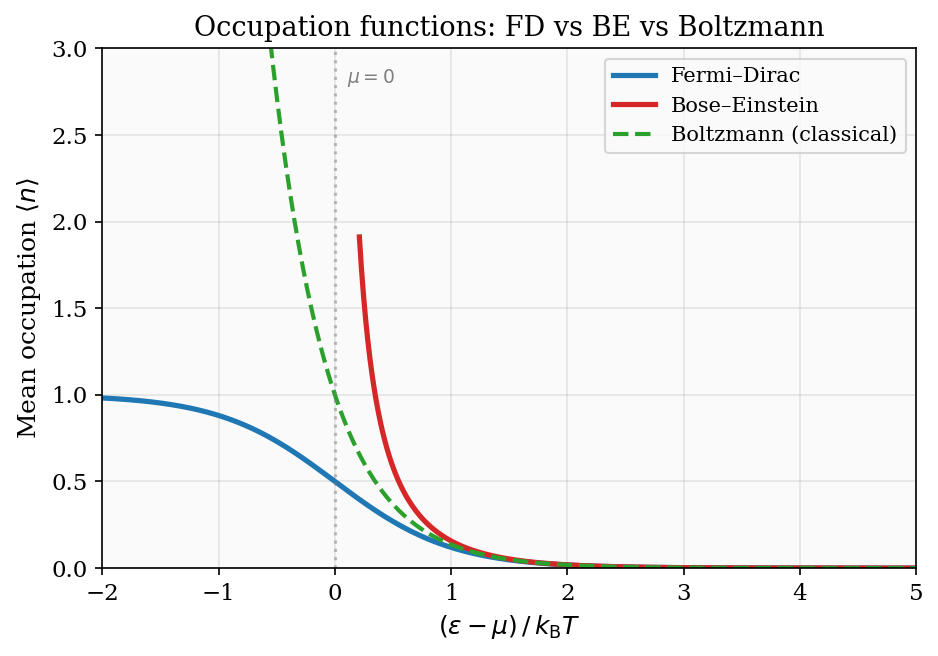

Figure 5: The three occupation functions plotted versus \((\varepsilon-\mu)/k_{\rm B}T\). The Fermi–Dirac distribution is bounded between 0 and 1; the Bose–Einstein distribution diverges as \(\varepsilon\to\mu^+\); both converge to the Maxwell–Boltzmann exponential for \(\varepsilon-\mu\gg k_{\rm B}T\).
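The convergence of the three occupation functions can also be tabulated numerically. The following is a small sketch in which `x` denotes \((\varepsilon-\mu)/k_{\rm B}T\); the function names are illustrative, and the Bose–Einstein function is only evaluated for `x > 0`.

```python
import math


def fermi_dirac(x):
    """Fermi-Dirac occupation 1 / (e^x + 1)."""
    return 1.0 / (math.exp(x) + 1.0)


def bose_einstein(x):
    """Bose-Einstein occupation 1 / (e^x - 1); requires x > 0."""
    return 1.0 / math.expm1(x)


def maxwell_boltzmann(x):
    """Maxwell-Boltzmann occupation e^(-x)."""
    return math.exp(-x)


print("    x        FD        MB        BE")
for x in (0.1, 1.0, 3.0, 6.0):
    print(f"{x:5.1f}  {fermi_dirac(x):.6f}  {maxwell_boltzmann(x):.6f}  "
          f"{bose_einstein(x):.6f}")
```

For every positive `x` the ordering is Fermi–Dirac below Maxwell–Boltzmann below Bose–Einstein, and the relative spread between them shrinks as \(e^{-x}\).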

Bosons wanting to bunch: exchange forces without a force

A striking quantum effect is that bosons attract each other statistically — they are more likely to be found in the same state than classical probability would predict — while fermions repel. This “exchange force” has no classical counterpart and arises purely from symmetrisation of the wave function.

To see this concretely, consider two non-interacting particles, each of which can occupy one of two single-particle states, \(|1\rangle\) or \(|2\rangle\). The three cases (classical, bosonic, fermionic) give very different probabilities for finding both particles in the same state when every allowed two-particle state is equally likely.

For classical distinguishable particles, the four configurations \((1,1)\), \((1,2)\), \((2,1)\), \((2,2)\) are each equally probable with probability \(1/4\). The probability of both particles being in the same state is \(2/4 = 1/2\).

For identical bosons, the distinct quantum states are \(|1\rangle|1\rangle\), \((|1\rangle|2\rangle + |2\rangle|1\rangle)/\sqrt{2}\), and \(|2\rangle|2\rangle\) — three states, each equally probable by the fundamental postulate. The probability of both particles in the same state is \(2/3 > 1/2\): bosons are more likely to be found together.

For identical fermions, only the antisymmetric combination \((|1\rangle|2\rangle - |2\rangle|1\rangle)/\sqrt{2}\) exists — a single state. Both particles are in the same state with probability \(0\): fermions cannot share a state. The Pauli exclusion principle is not a separate postulate — it is a consequence of antisymmetry.

The three probabilities — \(2/3\), \(1/2\), \(0\) — summarise the “exchange forces” for bosons, classical particles, and fermions respectively. These statistical correlations are pure quantum effects, present even for non-interacting particles, and they lead to dramatically different thermodynamic behaviours at low temperatures.
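The counting argument extends to any number of single-particle states and is easy to check by enumeration. The sketch below assumes, as in the text, that every allowed two-particle state is equally likely; the function name and the string labels for the statistics are illustrative.

```python
from fractions import Fraction
from itertools import combinations, combinations_with_replacement, product


def same_state_probability(num_states, statistics):
    """Probability that two particles share a single-particle state.

    Assumes all allowed two-particle states are equally probable.
    Requires num_states >= 2 so that at least one fermionic state exists.
    """
    labels = range(1, num_states + 1)
    if statistics == "classical":
        # Distinguishable particles: ordered pairs (a, b).
        configs = list(product(labels, repeat=2))
    elif statistics == "boson":
        # Symmetric states: unordered pairs, repetition allowed.
        configs = list(combinations_with_replacement(labels, 2))
    elif statistics == "fermion":
        # Antisymmetric states: unordered pairs, no repetition.
        configs = list(combinations(labels, 2))
    else:
        raise ValueError(f"unknown statistics: {statistics}")
    together = sum(1 for a, b in configs if a == b)
    return Fraction(together, len(configs))


for kind in ("boson", "classical", "fermion"):
    print(kind, same_state_probability(2, kind))
```

With \(M\) single-particle states the same enumeration gives \(2/(M+1)\) for bosons and \(1/M\) for classical particles, so the bosonic enhancement persists for any \(M\).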

The non-degenerate limit: recovering Maxwell–Boltzmann

In the classical limit of high temperature and low density (\(n\lambda_{\rm dB}^3 \ll 1\)), both quantum distributions reduce to the Maxwell–Boltzmann result. We can see this from the grand canonical viewpoint. In this limit, the mean occupation of every mode is much less than 1: \(\langle n\rangle \ll 1\), which means \(e^{\beta(\varepsilon-\mu)} \gg 1\). The grand partition function for each mode then reduces to \(\mathcal{Z}_{\rm mode} \approx 1 + e^{-\beta(\varepsilon-\mu)}\) for both bosons and fermions: the fermionic sum has only these two terms, and the bosonic terms with \(n \geq 2\) are negligible. The total grand partition function is the product of these single-mode factors, and the mean occupation of a mode with energy \(\varepsilon\) is

\[ \langle n\rangle \approx e^{-\beta(\varepsilon-\mu)} = e^{\beta\mu}\, e^{-\beta\varepsilon}. \]This is the Boltzmann factor \(e^{-\beta\varepsilon}\) weighted by the fugacity \(e^{\beta\mu}\). The condition \(\langle N\rangle = \sum_\varepsilon \langle n_\varepsilon\rangle = e^{\beta\mu}Z_1\), with \(Z_1 = \sum_\varepsilon e^{-\beta\varepsilon}\) the single-particle partition function, then fixes \(\mu = k_{\rm B}T\ln(N\lambda_{\rm dB}^3/V)\), exactly the ideal gas chemical potential from Chapter 6. The grand canonical approach thus provides a unified derivation of both quantum statistics and their common classical limit.
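The step from the normalisation condition to the chemical potential can be written out explicitly. This is a sketch that assumes the single-particle partition function of a structureless particle in a box, \(Z_1 = V/\lambda_{\rm dB}^3\), with no spin or internal degeneracy factor:

\[ N = e^{\beta\mu} Z_1 = e^{\beta\mu}\,\frac{V}{\lambda_{\rm dB}^3} \quad\Longrightarrow\quad e^{\beta\mu} = \frac{N\lambda_{\rm dB}^3}{V} \quad\Longrightarrow\quad \mu = k_{\rm B}T\,\ln\!\left(\frac{N\lambda_{\rm dB}^3}{V}\right). \]

Since \(N\lambda_{\rm dB}^3/V \ll 1\) in this regime, the logarithm is large and negative, so \(\mu\) lies many \(k_{\rm B}T\) below every single-particle energy. That is precisely the condition \(e^{\beta(\varepsilon-\mu)} \gg 1\) assumed at the outset, so the approximation is self-consistent.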

The condition for quantum degeneracy can now be stated precisely. Define the phase-space occupancy \(\eta = n\lambda_{\rm dB}^3\), the same quantity as the phase-space density \(\Phi\) introduced earlier. When \(\eta \ll 1\) the classical (Maxwell–Boltzmann) regime applies; when \(\eta \gtrsim 1\) quantum statistics are essential. For an ideal Bose gas, the threshold \(\eta \approx 2.612\) marks the BEC transition; for fermions, \(\eta \gtrsim 1\) marks the onset of Fermi degeneracy. For the three systems considered earlier: copper electrons have \(\eta \sim 7\times 10^{3}\) at room temperature, using the thermal wavelength of about 4.3 nm for an electron at 300 K (deeply degenerate Fermi gas); liquid \(^4\)He has \(\eta \approx 3\) at its normal boiling point (bosonic degeneracy, consistent with the superfluid transition); hydrogen gas at STP has \(\eta \approx 10^{-5}\) (classical). The phase-space occupancy \(\eta\) is the single most important dimensionless parameter in quantum statistical mechanics.

Chapter 8: Degenerate Fermi Gases and the Density of States

The Fermi sea at zero temperature

When temperature goes to zero with the particle number held fixed, the Fermi–Dirac distribution approaches a step function: every state with energy below the Fermi energy \(\varepsilon_{\rm F}\) is occupied (\(\langle n\rangle = 1\)) and every state above is empty (\(\langle n\rangle = 0\)). The Fermi energy is simply the chemical potential at \(T = 0\), determined by the requirement that the total number of particles equals the integral of the density of states over all occupied states.

The filled states form a Fermi sea in energy space. Unlike a classical gas, where all particles would settle into the ground state at \(T = 0\), fermions are forced by the Pauli exclusion principle to fill states one by one, all the way up to \(\varepsilon_{\rm F}\). The gas therefore retains a large kinetic energy even at absolute zero, and the resulting degeneracy pressure is responsible for the stability of metals, the rigidity of white dwarf stars, and the incompressibility of atomic nuclei.

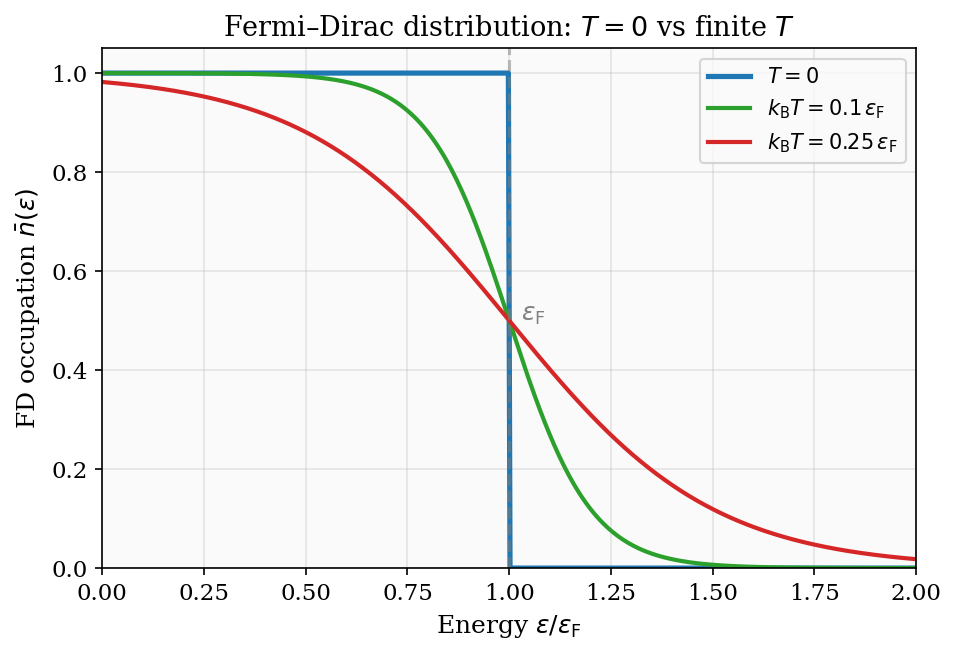

Figure 6: The Fermi–Dirac distribution at \(T=0\) (sharp step), \(k_{\rm B}T = 0.1\,\varepsilon_{\rm F}\), and \(k_{\rm B}T = 0.25\,\varepsilon_{\rm F}\). Thermal excitations smear the step over a window of width \(\sim k_{\rm B}T\) around the Fermi energy.

Density of states for a three-dimensional Fermi gas

To compute the Fermi energy and ground-state energy, we need the density of states \(g(\varepsilon)\), defined so that \(g(\varepsilon)\,d\varepsilon\) is the number of single-particle states with energy in \([\varepsilon, \varepsilon+d\varepsilon]\). For a free particle in a cubic box of side \(L\) and volume \(V = L^3\), the energy is \(\varepsilon = \hbar^2 k^2/2m\). We begin by counting states in \(k\)-space. Using periodic boundary conditions, the allowed \(\mathbf{k}\)-vectors form a cubic lattice with spacing \(2\pi/L\) in each direction, so each state occupies a volume \((2\pi/L)^3\) and the density of states in \(\mathbf{k}\)-space is \(V/(2\pi)^3\). The total number of states with wavevector magnitude less than \(k\), including the factor of 2 for electron spin, is

\[ N_{\rm states}(k) = 2\cdot\frac{V}{(2\pi)^3}\cdot\frac{4\pi k^3}{3} = \frac{V k^3}{3\pi^2}. \]The density of states in energy is obtained by differentiating with respect to \(\varepsilon\) and using \(k = \sqrt{2m\varepsilon}/\hbar\), so \(dk/d\varepsilon = \sqrt{m/(2\hbar^2\varepsilon)}\):

\[ g(\varepsilon) = \frac{dN_{\rm states}}{d\varepsilon} = \frac{V k^2}{\pi^2}\frac{dk}{d\varepsilon} = \frac{V}{2\pi^2}\left(\frac{2m}{\hbar^2}\right)^{3/2}\sqrt{\varepsilon} \propto \varepsilon^{1/2}. \]This \(\varepsilon^{1/2}\) density of states is the fundamental input to all Fermi gas calculations. Its form is entirely a consequence of three-dimensional geometry and the quadratic dispersion relation.

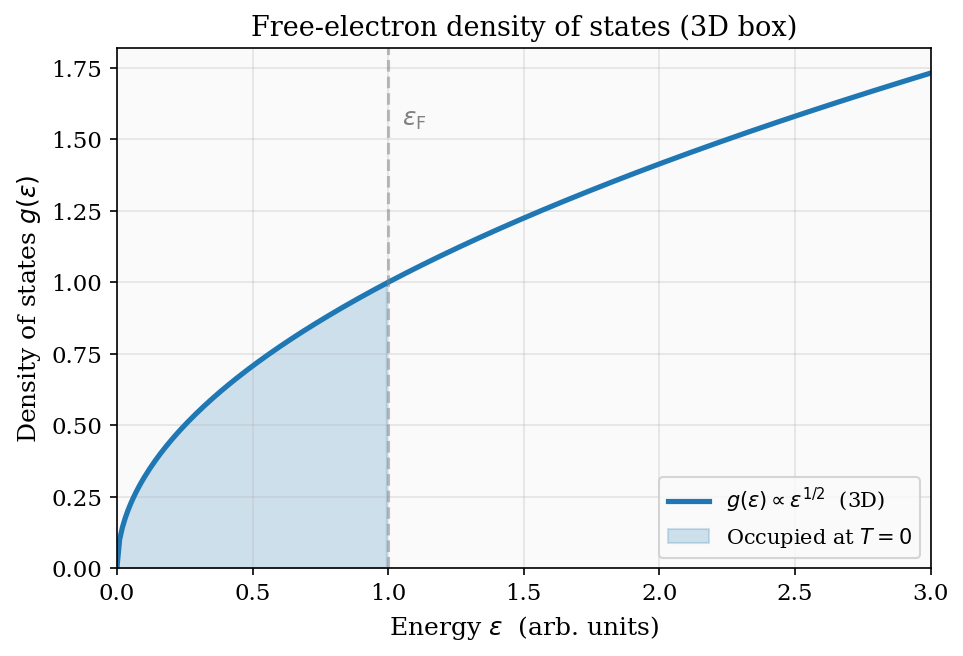

Figure 7: The free-electron density of states \(g(\varepsilon) \propto \varepsilon^{1/2}\) for a 3D box. The shaded region is occupied at \(T=0\); the Fermi energy \(\varepsilon_{\rm F}\) is the sharp cutoff.
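The continuum count \(N_{\rm states}(k) = Vk^3/3\pi^2\) can be compared with a brute-force enumeration of the allowed wavevectors. The sketch below is illustrative: it measures \(k\) in units of \(2\pi/L\) through a dimensionless `radius`, and the function names are assumptions made for this example.

```python
import math


def count_states(radius, spin_degeneracy=2):
    """Count states with |k| < radius * (2*pi/L) by direct enumeration.

    Allowed wavevectors are k = (2*pi/L) * (nx, ny, nz) with integer components.
    """
    n_max = int(math.floor(radius))
    r2 = radius * radius
    lattice_points = 0
    for nx in range(-n_max, n_max + 1):
        for ny in range(-n_max, n_max + 1):
            for nz in range(-n_max, n_max + 1):
                if nx * nx + ny * ny + nz * nz < r2:
                    lattice_points += 1
    return spin_degeneracy * lattice_points


def states_formula(radius, box_length=1.0):
    """Continuum result V k^3 / (3 pi^2); the spin factor of 2 is built in."""
    k = 2.0 * math.pi * radius / box_length
    volume = box_length ** 3
    return volume * k ** 3 / (3.0 * math.pi ** 2)


for radius in (5.5, 10.5, 20.5):
    counted = count_states(radius)
    smooth = states_formula(radius)
    print(f"radius = {radius}: counted {counted}, formula {smooth:.0f}, "
          f"ratio {counted / smooth:.4f}")
```

The ratio approaches 1 as the sphere grows, because the discreteness of the lattice only matters in a thin shell near the surface. For a macroscopic sample the number of states is so large that the continuum formula is effectively exact.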

Setting \(\int_0^{\varepsilon_{\rm F}} g(\varepsilon)\,d\varepsilon = N\) determines the Fermi energy:

\[ \varepsilon_{\rm F} = \frac{\hbar^2}{2m}\left(3\pi^2\,\frac{N}{V}\right)^{2/3}. \]

The Fermi energy of copper

For electrons in copper, the valence electron density is \(N/V \approx 8.5 \times 10^{28}\ \text{m}^{-3}\) (one conduction electron per copper atom, density \(8900\ \text{kg/m}^3\), atomic mass 63.5 u). Substituting \(m = m_e = 9.11\times 10^{-31}\ \text{kg}\) and \(\hbar = 1.055\times 10^{-34}\ \text{J s}\):

\[ \varepsilon_{\rm F} = \frac{(1.055\times 10^{-34})^2}{2(9.11\times 10^{-31})}\left(3\pi^2\times 8.5\times 10^{28}\right)^{2/3}\ \text{J} \approx 1.13\times 10^{-18}\ \text{J} \approx 7.0\ \text{eV}. \]The Fermi temperature is \(T_{\rm F} = \varepsilon_{\rm F}/k_{\rm B} \approx 81{,}000\ \text{K}\). Room temperature is 300 K, a factor of 270 smaller than \(T_{\rm F}\). Copper’s conduction electrons are therefore profoundly quantum-mechanical at room temperature, with \(k_{\rm B}T/\varepsilon_{\rm F} \approx 0.004\). The classical equipartition prediction that each electron contributes \(\tfrac{3}{2}k_{\rm B}\) to the heat capacity is wildly wrong: the actual contribution is roughly a hundred times smaller, because the Pauli exclusion principle allows only the fraction \(\sim k_{\rm B}T/\varepsilon_{\rm F}\) of electrons near the Fermi energy to be thermally excited, as we quantify in Chapter 9.
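The copper numbers are straightforward to reproduce. The sketch below simply evaluates the free-electron formula for \(\varepsilon_{\rm F}\) with the electron density quoted in the text; the function and variable names are illustrative.

```python
import math

HBAR = 1.054571817e-34   # reduced Planck constant, J s
M_E = 9.1093837e-31      # electron mass, kg
K_B = 1.380649e-23       # Boltzmann constant, J/K
EV = 1.602176634e-19     # joules per electronvolt


def fermi_energy(number_density, mass=M_E):
    """Free-particle Fermi energy (hbar^2 / 2m) (3 pi^2 n)^(2/3), in joules."""
    return HBAR ** 2 / (2.0 * mass) * (3.0 * math.pi ** 2 * number_density) ** (2.0 / 3.0)


n_cu = 8.5e28                      # conduction electrons per m^3 (value from the text)
eps_f = fermi_energy(n_cu)         # joules
t_f = eps_f / K_B                  # Fermi temperature, kelvin

print(f"Fermi energy: {eps_f:.3e} J = {eps_f / EV:.2f} eV")
print(f"Fermi temperature: {t_f:.3e} K")
print(f"k_B T / eps_F at 300 K: {300.0 / t_f:.4f}")
```

Because \(\varepsilon_{\rm F}\propto n^{2/3}\), a 10% uncertainty in the electron density changes the Fermi energy by only about 7%, so the conclusion \(T \ll T_{\rm F}\) is robust.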

Ground-state energy and degeneracy pressure

The total ground-state energy of the Fermi gas is obtained by integrating \(\varepsilon g(\varepsilon)\) up to \(\varepsilon_{\rm F}\):

\[ E_0 = \int_0^{\varepsilon_{\rm F}} \varepsilon\, g(\varepsilon)\,d\varepsilon = \frac{V}{2\pi^2}\left(\frac{2m}{\hbar^2}\right)^{3/2}\int_0^{\varepsilon_{\rm F}}\varepsilon^{3/2}\,d\varepsilon = \frac{V}{2\pi^2}\left(\frac{2m}{\hbar^2}\right)^{3/2}\frac{2\varepsilon_{\rm F}^{5/2}}{5} = \frac{3}{5}N\varepsilon_{\rm F}. \]The average energy per electron is \(E_0/N = \tfrac{3}{5}\varepsilon_{\rm F} \approx 4.2\ \text{eV}\) for copper — much larger than the thermal energy \(k_{\rm B}T \approx 0.026\ \text{eV}\) at room temperature.

The corresponding zero-temperature degeneracy pressure follows from thermodynamics: for a non-relativistic ideal gas in three dimensions, classical or quantum, \(PV = \frac{2}{3}E\), so

\[ P_0 = \frac{2E_0}{3V} = \frac{2}{5}\frac{N}{V}\varepsilon_{\rm F}. \]For copper, \(P_0 \approx 3.8\times 10^{10}\ \text{Pa} \approx 380{,}000\ \text{atm}\). This enormous pressure exists even at absolute zero and is entirely a quantum effect: the Pauli exclusion principle prevents the Fermi sea from collapsing. The same mechanism, at far higher densities, supports white dwarf stars against gravitational collapse.
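Both results of this section can be checked numerically: the ratio \(E_0/N\varepsilon_{\rm F} = 3/5\) by integrating over the \(\varepsilon^{1/2}\) density of states, and the copper pressure by direct substitution. This is a rough sketch with illustrative names; the midpoint-rule integration works in units where \(\varepsilon_{\rm F} = 1\), so the prefactor of \(g(\varepsilon)\) cancels.

```python
import math

HBAR = 1.054571817e-34   # reduced Planck constant, J s
M_E = 9.1093837e-31      # electron mass, kg
EV = 1.602176634e-19     # joules per electronvolt
ATM = 101325.0           # pascals per standard atmosphere


def fermi_energy(number_density, mass=M_E):
    """Free-particle Fermi energy in joules."""
    return HBAR ** 2 / (2.0 * mass) * (3.0 * math.pi ** 2 * number_density) ** (2.0 / 3.0)


def mean_energy_ratio(steps=100_000):
    """Midpoint-rule estimate of E_0 / (N eps_F) for g(eps) proportional to sqrt(eps)."""
    h = 1.0 / steps
    energy_integral = 0.0
    number_integral = 0.0
    for i in range(steps):
        eps = (i + 0.5) * h            # energy in units of eps_F
        g = math.sqrt(eps)             # density of states, prefactor dropped
        energy_integral += eps * g * h
        number_integral += g * h
    return energy_integral / number_integral


def degeneracy_pressure(number_density, mass=M_E):
    """Zero-temperature pressure P_0 = (2/5) n eps_F, in pascals."""
    return 0.4 * number_density * fermi_energy(number_density, mass)


n_cu = 8.5e28
print(f"E_0 / (N eps_F) = {mean_energy_ratio():.6f}")
print(f"E_0 / N for Cu = {0.6 * fermi_energy(n_cu) / EV:.2f} eV")
p0 = degeneracy_pressure(n_cu)
print(f"P_0 for Cu = {p0:.2e} Pa = {p0 / ATM:.2e} atm")
```

Since \(\varepsilon_{\rm F}\propto n^{2/3}\), the pressure scales as \(P_0\propto n^{5/3}\), the non-relativistic scaling that the white dwarf discussion compares against.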

White dwarf degeneracy pressure. A typical white dwarf has density \(\rho \sim 10^6\ \text{g/cm}^3 = 10^9\ \text{kg/m}^3\). Taking the composition to be primarily carbon-12 (6 protons + 6 neutrons + 6 electrons per atom, so one electron for every two nucleons), the electron number density is \(n_e \approx (6/12)\rho/m_p \approx (10^9)/(2\times 1.67\times 10^{-27}) \approx 3\times 10^{35}\ \text{m}^{-3}\). The non-relativistic formula gives a Fermi energy \(\varepsilon_{\rm F} \approx (\hbar^2/2m_e)(3\pi^2 n_e)^{2/3} \approx 0.16\ \text{MeV}\), which is a sizeable fraction of \(m_e c^2 = 0.511\ \text{MeV}\): electrons in a white dwarf are already mildly relativistic, and increasingly so at higher density. The degeneracy pressure in the ultra-relativistic limit scales as \(P_0 \propto n_e^{4/3}\) rather than \(n_e^{5/3}\), and this softer scaling means that above a critical mass (\(\approx 1.4\ M_\odot\), the Chandrasekhar limit), the degeneracy pressure cannot balance gravity and the star collapses to a neutron star. The Chandrasekhar limit is a direct consequence of the relativistic softening of the Fermi degeneracy pressure.
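The white dwarf estimate can be sketched in the same way. The code below assumes the density and carbon composition quoted above and additionally compares the non-relativistic Fermi energy with the exact relativistic kinetic energy at the Fermi momentum \(p_{\rm F} = \hbar(3\pi^2 n_e)^{1/3}\); that comparison and all names in the code are illustrative additions, not part of the original estimate.

```python
import math

HBAR = 1.054571817e-34   # reduced Planck constant, J s
M_E = 9.1093837e-31      # electron mass, kg
M_P = 1.67262192e-27     # proton mass, kg
C = 2.99792458e8         # speed of light, m/s
MEV = 1.602176634e-13    # joules per MeV

rho = 1.0e9                        # mass density in kg/m^3 (value from the text)
electrons_per_nucleon = 0.5        # carbon-12: 6 electrons per 12 nucleons

n_e = electrons_per_nucleon * rho / M_P
p_f = HBAR * (3.0 * math.pi ** 2 * n_e) ** (1.0 / 3.0)   # Fermi momentum

rest_energy = M_E * C ** 2
eps_nonrel = p_f ** 2 / (2.0 * M_E)                      # non-relativistic Fermi energy
eps_rel = math.hypot(p_f * C, rest_energy) - rest_energy # relativistic kinetic energy
x_f = p_f / (M_E * C)                                    # relativity parameter

print(f"n_e = {n_e:.2e} m^-3")
print(f"non-relativistic eps_F = {eps_nonrel / MEV:.3f} MeV")
print(f"relativistic kinetic eps_F = {eps_rel / MEV:.3f} MeV")
print(f"p_F / (m_e c) = {x_f:.2f}")
```

A value of \(p_{\rm F}/m_e c\) of order unity signals that neither the non-relativistic nor the ultra-relativistic limit is accurate at this density, which is why the crossover between the \(n_e^{5/3}\) and \(n_e^{4/3}\) scalings matters for white dwarf structure.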

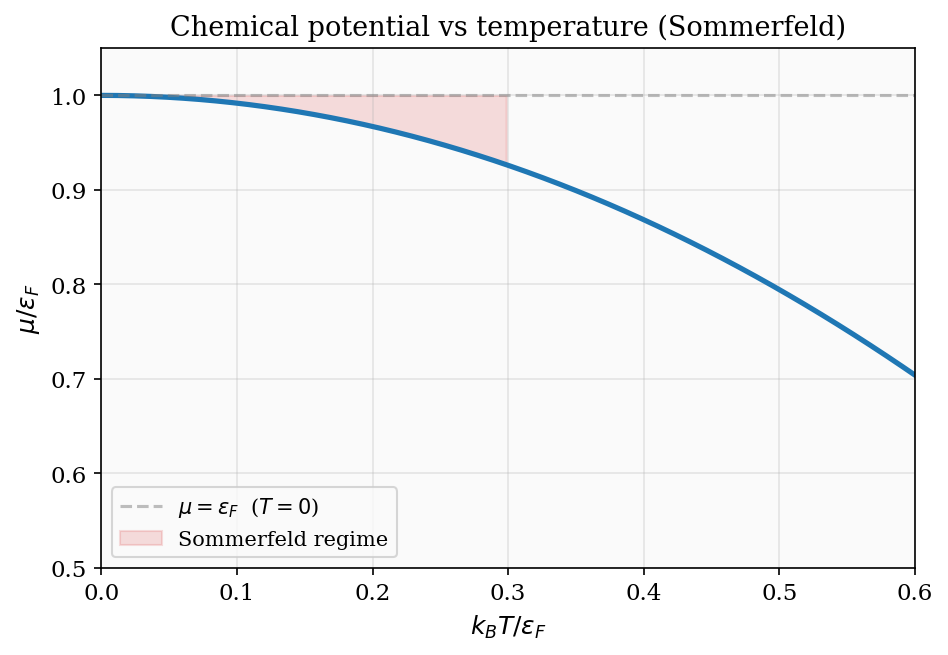

Chapter 9: The Sommerfeld Expansion and Electrons in Metals

Low-temperature corrections to Fermi integrals