CS 476/676: Computational Finance

CS476/676 Staff

Estimated study time: 5 hr 54 min

Table of contents

Course Introduction and Motivation

Why This Course? What Is It About?

CS476/676 Computational Finance is a course that teaches basic computational methods for solving interesting and important problems in finance using computers — a domain commonly referred to as FinTech (financial technology). The course sits at the intersection of rigorous mathematics, algorithmic thinking, and real-world financial markets, and it is offered within the Computer Science department for reasons that are both practical and intellectual.

Why Is Computational Finance Offered in CS?

A natural question is why a subject that sounds like it belongs in an economics or finance faculty is housed in Computer Science. The answer has two parts. First, the financial industry has an immense and growing need for CS talent: the algorithms, software systems, and data pipelines that underpin modern banking, trading, and risk management are built and maintained by computer scientists and software engineers. Second, the career data reflects this reality — many CS graduates find themselves working in the financial industry, whether in quantitative trading, risk technology, data science, or software development for financial platforms.

CS Relevance: Voices from Industry

The demand for technically trained computer scientists in finance is not merely an academic observation; it is articulated loudly by senior leaders at the world’s largest financial institutions.

“Everything we do is underpinned by math and a lot of software.” — Goldman Sachs CFO Marty Chavez, speaking to a Harvard audience in January 2017.

This remark captures the essence of modern investment banking: every trade, every risk calculation, every client interaction is mediated by software systems built on mathematical foundations.

“We’re in the technology business. Our product happens to be banking, but largely that’s delivered through technology.” — Nova Scotia Bank CEO Brian Porter, to The Globe and Mail, 2016.

Porter’s framing is striking — he does not describe his institution as a bank that uses technology, but as a technology company whose product is banking. The distinction signals how thoroughly software has become the core delivery mechanism of financial services.

“If I have a choice to choose somebody who has experience in technical or delivery experience or data mining, I would rather pick up that expertise and teach them banking than taking somebody from another bank who is no further ahead than we are.” — Jeff Henderson, Executive VP and Chief Information Officer at TD, to The Financial Post, 2017.

Henderson’s statement is perhaps the most directly relevant to CS students: banks would rather hire a strong computer scientist and train them in finance than hire a finance person with weak technical skills. This is a compelling argument for why a computational finance course belongs in CS.

Beyond individual hiring preferences, banks and financial institutions are making structural investments in technical talent near universities. Examples in the Waterloo region include Borealis (the RBC-funded AI research institute), the CIBC data lab, Manulife, and Sun Life, all of which have established research partnerships or offices near the University of Waterloo. The broader trend is clear: many skilled technical professionals now work in the financial industry, and this flow will only accelerate.

Background Assumed

The course assumes students have a working knowledge of programming (implementation of algorithms in code), calculus and linear algebra (derivatives, integrals, matrix operations), and statistics (probability distributions, expectation, variance, the normal distribution, conditional expectation). Some mathematical maturity is expected — the ability to follow and construct rigorous arguments — but crucially, no prior finance background is assumed. All financial concepts will be introduced from first principles.

Students are encouraged to review the following before proceeding: basic statistics and probability, expectation, conditional expectation, variance, the normal distribution, calculus, and linear algebra.

What Is This Course About? Risk and Its Management

The intellectual heart of the course is the concept of risk — uncertainty in financial markets that critically affects the operations of financial institutions, companies, universities, individuals, and societies at large. Stock and commodity prices do not move smoothly; they exhibit mountains and valleys in zigzag, Brownian-motion-like movements that make future values inherently unpredictable. Risk management — the systematic analysis, measurement, and reduction of financial uncertainty — is a major agenda of both government and financial institutions, and financial markets have a significant impact on society as a whole.

Two fundamental questions motivate the field: Can uncertainty be analyzed and measured? Can risk be reduced, and if so, how? The answer to both is yes, and mathematics and computing play a key role. Specifically, mathematical and computational methods underpin investment strategy analysis, asset valuation, risk management, and data-driven pattern recognition.

Derivative Markets and Their Importance

This course focuses on financial instruments in a derivative market, which is one of the largest financial markets in the world. Derivative instruments have complex, nonlinear risk profiles that make them particularly challenging — and particularly rewarding — to analyze. Importantly, the models and computational methods developed in this course go beyond the derivative markets and apply broadly to quantitative finance.

Financial derivatives are major news, topics of social discussion, and even the subject of popular films such as The Big Short and Margin Call. CBS’s 60 Minutes described derivatives as “too important to ignore and too complex to explain.” Warren Buffett famously called financial derivatives “weapons of mass destruction.” These characterizations underscore why rigorous computational methods for understanding derivatives are so valuable: the stakes are high.

Course Outline: Main Topics

The course covers the following major topics, progressing from financial definitions through mathematical theory and into practical computation.

The first topic is basic financial derivatives: definitions and uses. Students will learn what options, futures, and other derivatives are, and why they are used in practice. The second topic is no-arbitrage pricing theory: a financial theory based on the principle of trading without risk-free profit opportunities. This principle, which will be developed carefully, is the foundation for all rigorous option pricing.

The third major topic is stochastic asset price models, which come in two flavors: discrete time and discrete state models based on the binomial lattice, and continuous-time models based on Brownian motion. These models capture the random movement of asset prices over time.

On the mathematical side, the course introduces stochastic differential equations (SDEs), which describe how asset prices evolve in continuous time under randomness. Students will learn the tools of Ito’s calculus, the branch of mathematics that extends ordinary calculus to functions of stochastic processes. The course also covers partial differential equations (PDEs), which arise naturally from the no-arbitrage pricing arguments, and optimization theory, which underlies portfolio construction and model calibration.

On the computational side, the course covers binomial lattice option valuation, Monte Carlo simulations for estimating expected payoffs, techniques for analyzing dynamic trading strategies, dynamic programming for solving multi-period decision problems, numerical methods for solving PDEs, portfolio optimization, model calibration (fitting model parameters to market data), and data-driven optimization.

A Brief History of Options

History of Options

The history of financial options stretches back more than two millennia, long predating the formal mathematical machinery that would eventually be developed to price them. Understanding this history illuminates why options exist and what economic purposes they serve.

Thales of Miletus and the Olive Harvest (332 BC)

The earliest recorded option-like transaction is attributed to Thales of Miletus, an ancient Greek philosopher, astronomer, and mathematician. Around 332 BC, Thales used his knowledge of star positions — a sophisticated observational tool in antiquity — to predict that the coming olive harvest would be exceptionally large. Reasoning from this astronomical forecast, he anticipated that demand for olive presses (the equipment used to extract olive oil) would be very high at harvest time.

To profit from his prediction, Thales paid the owners of olive presses a fee in advance in order to secure the right to use the presses during the harvest period. The contract was agreed at time \(t = 0\), before the harvest, for a price that reflected the present uncertainty about demand. The underlying price in this transaction was the market rental price of an olive press at harvest time \(T\). The diagram of this relationship places Thales on one side, the press owner on the other, with the contract specifying the olive press price \(S\) at harvest time \(T\) linking the two parties.

Thales was the holder of the contract — he paid for the right, and he could choose to exercise it or not. The press owner was the writer — having accepted payment, they had the obligation to make the presses available if Thales chose to use them. When the harvest indeed proved large and rental prices rose, Thales exercised his right, renting the presses at the pre-agreed lower price and subletting them at the prevailing high market price, earning a profit. If the harvest had been poor and rental prices had fallen below his pre-agreed price, Thales would simply have walked away, losing only his initial payment. This is precisely the asymmetric payoff structure that defines a modern financial option.

Tulip Bulb Mania in 17th Century Holland

The next landmark in option history is considerably more dramatic. In the 1600s, tulips became prized possessions in Holland, with rare varieties commanding extraordinary prices. The tulip market attracted participants ranging from professional flower growers and wholesalers to ordinary citizens speculating on price movements, and the resulting price spiral is widely regarded as possibly the first financial bubble in recorded history.

Within this feverish market, growers and wholesalers used complex option contracts to reduce their risk exposure to the tulip bulb price at the harvest time \(T\). The contracts worked in two directions. Tulip bulb growers bought contracts that allowed them to sell at today’s price, protecting them against the risk that the price would fall by harvest time — these were put-like contracts. Tulip wholesalers bought contracts that allowed them to buy at today’s price, protecting them against the risk that the price would rise — these were call-like contracts. In both cases, the underlying asset \(S_t\) was the tulip price. The diagram of this relationship shows the holder on one side and the writer on the other, with the contract specifying the right to buy or sell tulip at harvest time \(T\).

Beyond these hedging uses, ordinary people began using options speculatively, betting on tulip prices continuing to rise. Options provided leverage: a small upfront payment could secure the right to profit from a much larger movement in the underlying price. However, leverage cuts both ways. For a call option, if the underlying price falls below the strike price at expiry, the option’s value is zero — a 100% loss on the premium paid. When the tulip market bubble burst, those who had bought call options lost everything they had invested in them, and the Dutch economy went into recession. The episode stands as a stark early illustration of how derivative instruments amplify both gains and losses.

Derivative Trading: A Risky Business

The aftermath of tulip mania shaped public and regulatory attitudes toward derivative trading for generations. Derivative trading acquired a bad reputation — it was seen as inherently speculative and socially harmful. Because derivative trading was unregulated for a long time and had demonstrated its capacity for economic disruption, option trading was actually made illegal from 1733 to 1860, a ban lasting nearly 130 years. This regulatory episode underscores that derivatives have always attracted controversy: their potential for amplifying risk is real, and the question of how to measure and manage that risk is precisely what this course is about.

Listed Options Markets

The rehabilitation of option trading as a legitimate financial activity happened gradually. In the late 19th century, Russel Sage, an American financier, traded calls and puts over-the-counter — that is, through private bilateral agreements rather than on a formal exchange. Sage was the first to identify empirically that option value is related to the price of the underlying asset and to the prevailing interest rate, foreshadowing the formal theory that would come decades later.

The modern era of listed options markets began in 1973 with the opening of the Chicago Board of Options Exchange (CBOE), the first formal exchange dedicated to standardized option contracts. The existence of a centralized exchange made contracts more transparent, more liquid, and more accessible to a wide range of participants.

Also in 1973, the Black-Scholes option pricing methodology was published, providing for the first time a rigorous mathematical formula for determining the fair value of a European option. This pricing theory helped participants determine fair values and dramatically increased market liquidity by giving buyers and sellers a common, theoretically grounded framework for negotiation.

The Quest for an Option Pricing Formula

The intellectual history of option pricing is a story of gradual convergence on a rigorous solution. We know at expiry \(T\) that \(V_T = \text{payoff}(S_T)\). We know the current price \(S_0\). The question is: what is the fair value \(V_0\) today?

The solution approach is to determine the relationship between the underlying price \(S\) and the option value \(V\) at any time \(t\) — that is, to find the function \(V(S, t)\). Once this function is known, today’s fair value is simply \(V(S_0, 0)\).

The key contributors to this quest, in historical sequence, were as follows. First, Louis Bachelier submitted a seminal doctoral thesis at the University of Paris in 1900, in which he modeled stock prices as a random walk and derived an early call pricing formula — arguably the founding document of mathematical finance. Second, Paul Samuelson (Nobel laureate in economics) rediscovered Bachelier’s thesis around 1950 and refined the model, introducing the lognormal model for asset prices. Third, Ed Thorp derived the correct option pricing formula in 1965, though without providing a formal proof of why it was correct. Finally, the seminal works of Fischer Black, Myron Scholes, and Robert Merton in 1970 provided the complete, rigorous derivation using no-arbitrage arguments and stochastic calculus. Black-Scholes and Merton received the Nobel Prize in Economics in 1997 (Black had died in 1995).

In this course we will focus on stock options with expiry \(T \leq 1\) (at most one year), and we will reasonably ignore randomness in the interest rate. Before discussing option pricing, we need some basic definitions.

A stock is a share in the ownership of a company. A dividend is a payment made to shareholders out of the company’s profits. An important subtlety is that when a stock pays a dividend to its shareholders, the holder of an option on that stock receives nothing — the option holder does not own the stock, only the right to buy or sell it. For this reason, an option is said to be dividend protected: its payoff function is defined purely in terms of the stock price, not the dividend stream.

Financial Options — Definitions, Payoffs, and the Pricing Problem

Financial Options: The Basic Definition

A financial option (also called a financial derivative) is a financial contract stipulated today at time \(t = 0\). The defining feature of such a contract is that its value at the future expiry date \(T\) is determined exactly by the market price of an underlying asset at \(T\). The contract is entered into between two parties: the holder, who buys the option and enters a long position, and the writer, who sells the option and enters a short position. This relationship is often depicted with a diagram showing the holder on the left connected to the writer on the right, with the contract — specifying the underlying price process \(S_t\), the expiry \(T\), and the payoff function \(\text{payoff}(S_T)\) — in the middle.

The Underlying Asset

The underlying asset \(S_t\) can be any of a wide variety of financial instruments: a stock (a share in a company), a commodity (such as oil, gold, or agricultural products), a market index (such as the S&P 500), an interest rate or bond, or an exchange rate (the price of one currency in terms of another). In each case, the underlying evolves randomly over time. We let \(S_t\), or equivalently \(S(t)\), denote the underlying price at time \(t\). This quantity is a stochastic process — a mathematical object that assigns a random value to each point in time. Intuitively, a stochastic process is a time-indexed family of random variables; at each time \(t\), \(S_t\) is uncertain until that moment arrives and the market price is observed.

The Insurance Interpretation

Knowing how the contract’s future value relates to the underlying asset allows the option to be used as a form of financial insurance. The holder has bought protection against an adverse movement in the underlying price; the question is how much that protection is worth today. Equivalently, the writer has sold that protection and taken on the corresponding risk (uncertainty); the question is how much compensation the writer should receive today for bearing that risk. The diagram of the cash flow shows a premium \(V_0\) flowing from holder to writer at time \(t = 0\), and a payoff \(V_T = \text{payoff}(S_T)\) flowing from writer to holder at time \(T\).

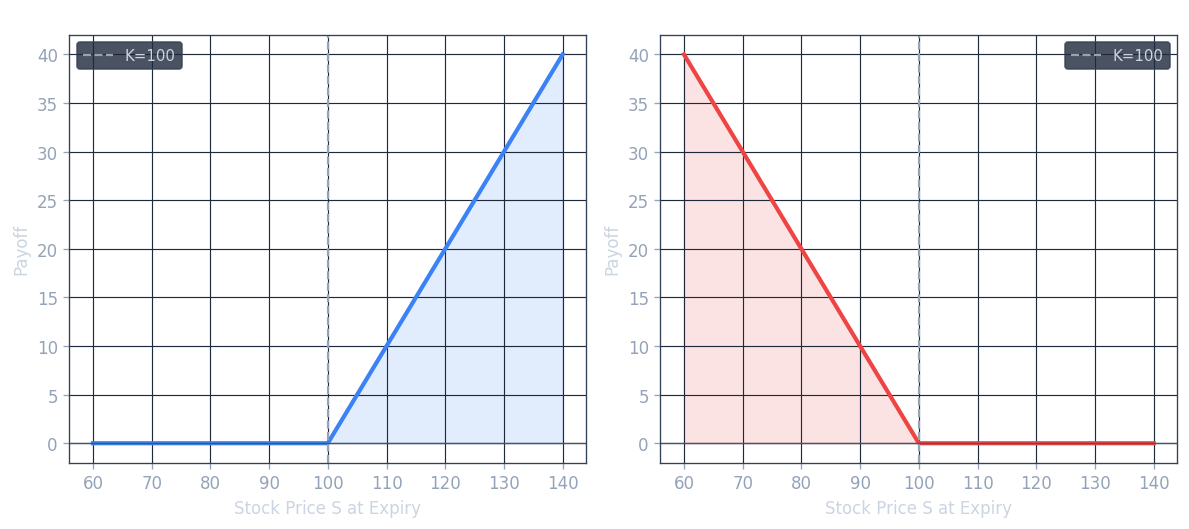

European Calls and Puts

The two most fundamental types of option are the call and the put, each available in European and American varieties.

A European call option is the right to buy the underlying asset at a pre-specified strike price \(K\). Crucially, this right can only be exercised at the expiry date \(T\) — not before. There is an important asymmetry: the holder has the option to exercise but no obligation to do so, while the writer has the obligation to sell at \(K\) if the holder exercises.

A European put option is the right to sell the underlying asset at the strike price \(K\), again exercisable only at expiry \(T\).

An American put option is the right to sell the underlying asset at the strike price \(K\), with the right exercisable at any time from the present up to and including the expiry \(T\). The ability to exercise early makes American options harder to price than their European counterparts.

We let \(V(S(t), t)\), or \(V_t\), denote the option value at time \(t\).

Payoff Functions

The central question of option pricing is: what is the fair value \(V_0\) of the option today? And relatedly: how should a writer hedge the risk they have taken on? Before addressing pricing, we first determine the payoff functions — the value \(V_T = \text{payoff}(S_T)\) at expiry — for calls and puts.

Call Payoff at Expiry

At expiry \(T\), the holder of a call option compares the market price \(S_T\) against the strike price \(K\). If \(S_T \leq K\), the holder can buy the underlying more cheaply on the open market than by exercising the option, so it is rational not to exercise, and the call expires worthless with \(V_T = 0\). If \(S_T \geq K\), the holder exercises the call, receiving the underlying worth \(S_T\) and paying only \(K\), for a net gain of \(S_T - K\). Therefore:

\[ V_T = \text{payoff}(S_T) = \max(S_T - K,\, 0) = \begin{cases} S_T - K & \text{if } S_T \geq K \\ 0 & \text{otherwise} \end{cases} \]The call payoff diagram is a “hockey stick” shape: it is flat at zero for all \(S_T \leq K\), and then rises linearly with slope 1 for \(S_T > K\), with the kink occurring exactly at the strike price \(K\).

Put Payoff at Expiry

For a put option, the analysis is symmetric. If \(S_T \leq K\), the holder can sell the underlying at the strike price \(K\) — which is above the market price — and gains \(K - S_T\). If \(S_T \geq K\), it is not rational to exercise (the holder could sell at a higher price on the open market), so the put expires worthless. Therefore:

\[ V_T = \text{payoff}(S_T) = \max(K - S_T,\, 0) = \begin{cases} K - S_T & \text{if } S_T \leq K \\ 0 & \text{otherwise} \end{cases} \]The put payoff diagram is also a hockey stick, but mirrored: it is zero for \(S_T \geq K\) and rises linearly (with slope -1 in \(S_T\) as \(S_T\) falls below \(K\).

Exotic Payoffs: The Straddle

Many other payoff functions have been constructed beyond simple calls and puts. A straddle is a combination of a call and a put with the same strike and expiry:

\[ \text{payoff}(S_T) = \max(S_T - K, 0) + \max(K - S_T, 0) \]The straddle’s payoff diagram has a V-shape centered at \(K\): it pays \(|S_T - K|\) regardless of which direction the underlying moves. This makes a straddle a bet on volatility rather than on direction — the holder profits if the underlying moves significantly in either direction from \(K\).

Concrete Examples

To make these definitions concrete, consider two tulip-market examples from the historical context. A bulb wholesaler can purchase a call option to have the right to buy tulip bulbs at a fixed price of $0.50 per dozen in three months — this protects the wholesaler against the price rising above $0.50. A bulb grower can purchase a put option to have the right to sell tulip bulbs at a fixed price of $1.00 per dozen in three months — this protects the grower against the price falling below $1.00. A speculative bet on the underlying price can be placed by trading either the underlying itself \(S_t\) or the option value \(V_t\).

The Leverage Effect

It is important to appreciate that an option is more risky relative to the underlying — this is the leverage effect. The relative move in the underlying is typically much smaller than the relative move in the option value:

\[ \left| \frac{S_T - S_0}{S_0} \right| \ll \left| \frac{V_T - V_0}{V_0} \right| \]In the extreme case where the option expires out of the money (the payoff is zero), the holder suffers a 100% loss: they paid \(V_0\) as a premium and receive nothing at expiry, so \(\frac{V_T - V_0}{V_0} = -100\%\). A comparable move in the underlying would typically not cause anywhere near a 100% loss in the stock itself. This asymmetry in leverage is exactly what made tulip call options so dangerous for ordinary speculators when the bubble burst.

What Do We Know About the Pricing Problem?

Summarizing what is known at the time of pricing: we know at expiry that \(V_T = \text{payoff}(S_T)\), and we know the current stock price \(S_0\). What we do not know is how \(S_0\) will evolve to \(S_T\) — the future path is uncertain. The question is: what is the fair value \(V_0\) today?

Stock, Dividends, and Dividend Protection

Throughout this course we focus on stock options with expiry \(T \leq 1\) year, and we will ignore randomness in the interest rate (a simplification that is reasonable for short-dated options). A stock is a share in the ownership of a company, entitling the holder to a fraction of future profits. A dividend is a payment made to shareholders from those profits. When a stock pays a dividend, the holder of an option on the stock receives nothing — the option is said to be dividend protected, meaning its payoff and value are defined solely in terms of the stock price, not the dividend.

The One-Period Binomial Model and No-Arbitrage Pricing

Outline

Lecture 3 introduces the simplest possible model for option pricing: the one-period binomial model. This model is deliberately stripped down to its essence — one time step, two possible outcomes — but it contains all the conceptual ingredients of the much more general theory. Along the way, the lecture introduces the riskless asset (bond with constant interest rate), the no-arbitrage principle, the mathematical characterization of arbitrage strategies via portfolios, and the famous put-call parity relationship.

The One-Period Binomial Case

Consider a stock currently priced at \(S_0 = 20\). Over one period (to time \(T = 1\), the stock can move to exactly one of two values: it goes up to \(S_T = 22\) with probability \(p = 0.1\), or it goes down to \(S_T = 18\) with probability \(1 - p = 0.9\). This is depicted as a tree diagram with a single node at 20 branching upward to 22 and downward to 18.

Now consider a European call option on this stock with strike price \(K = 21\) and expiry \(T = 1\). The question is: what do we know about the option value at expiry?

Applying the call payoff formula: if the stock goes up to 22, then \(S_T = 22 \geq K = 21\), so the payoff is \(22 - 21 = 1\). If the stock goes down to 18, then \(S_T = 18 < K = 21\), so the option expires worthless and the payoff is 0. The call tree therefore shows a node with unknown value “?” at time 0, branching upward to payoff = 1 and downward to payoff = 0.

Why a Simple Expected Value Is Not the Answer

A first attempt at pricing might be to discount the expected payoff under the real-world probability:

\[ \text{naive estimate} = 0.1 \times 1 + 0.9 \times 0 = \$0.10 \]But this raises an immediate objection: what about the time value of money? A dollar paid at time \(T = 1\) is not worth a dollar today, because money deposited today grows at the risk-free interest rate \(r\). If \(r = 0.05\), then perhaps the fair value is \(e^{-0.05} \times 0.10\)? This time-value-of-money correction is necessary but, as we will see, it is not sufficient. The real-world probability \(p\) is not the right probability to use for pricing at all.

The Riskless Asset and Continuous Compounding

Before we can determine the fair option value, we need to establish the mathematics of the riskless asset — a cash account or bond that grows at a known, deterministic interest rate.

The risk-free interest rate \(r \geq 0\) is the continuously compounded rate at which a cash account grows with certainty. Lending (depositing) money to a bank is equivalent to buying a bond from the bank; borrowing money from a bank is equivalent to selling a bond.

Let \(\beta(t)\) denote the value at time \(t\) of a riskless bond. The bond grows at rate \(r\) continuously, so:

\[ \frac{d\beta(\tau)}{\beta(\tau)} = r \, d\tau \]Integrating both sides from \(t\) to \(T\):

\[ \int_t^T \frac{d\beta(\tau)}{\beta(\tau)} = \int_t^T r \, d\tau \]\[ \log(\beta(T)) - \log(\beta(t)) = r(T - t) \]This gives us two useful conventions. For discounting (finding the present value of a future amount): if \(\beta(T) = 1\), then \(\beta(t) = e^{-r(T-t)}\). For compounding (finding the future value of a present amount): if \(\beta(t) = 1\), then \(\beta(T) = e^{r(T-t)}\).

Returning to the binomial example with \(r = 0.05\): even if we simply discount the expected payoff, we get \(e^{-0.05} \times 0.10\). But as the next section shows, this is still not the correct fair value, because it uses the wrong probability.

Determining the Fair Value by Trading: No-Arbitrage Pricing

Determining the fair option value requires thinking carefully about trading in the financial market — which consists of the bond, the stock, and the option itself. The key concept is that of arbitrage.

An arbitrage is a trading opportunity that generates a guaranteed (no-risk) profit greater than what one could earn by depositing money in the bank at the risk-free rate \(r \geq 0\). More colloquially, it is a “free lunch” — a strategy that costs nothing (or less than nothing) today but delivers a guaranteed positive profit in the future. In an efficient market, arbitrage opportunities cannot persist because rational traders would immediately exploit them, driving prices back to levels that eliminate the opportunity.

Definition: The fair value of a financial instrument is the price that does not lead to arbitrage.

Arbitrage can occur only momentarily in a real market, because as soon as it appears, traders exploit it and competition eliminates it. The no-arbitrage principle gives us a powerful tool: under no arbitrage, if two instruments have the same value at every future state of the world, they must have the same price today. This is the foundation for pricing derivatives.

Portfolios and the Mathematical Characterization of Arbitrage

To make the no-arbitrage argument precise, we represent a trading strategy as a portfolio — a collection of positions in the available instruments. As a concrete example, consider a portfolio consisting of one share of stock (a long position) and a borrowing of $100 (selling a bond):

\[ \Pi_0 = 1 \cdot S_0 - 100 \quad \text{or equivalently} \quad \Pi_0 = \{S_0,\, -100\} \]At time \(t\), the value of this portfolio is:

\[ \Pi_t = S_t - 100 e^{rt} \]The bond position grows at the risk-free rate, while the stock position fluctuates randomly.

Mathematically, an arbitrage strategy is characterized by either of the following two conditions:

A portfolio with an initial value \(\Pi_0 = 0\) (costs nothing to set up today) but with \(\Pi_T > 0\) always (always delivers a strictly positive profit at expiry, regardless of what the market does).

A portfolio with \(\Pi_0 < 0\) (the investor actually receives cash today by setting it up) but with \(\Pi_T \geq 0\) for sure (never requires any payment at expiry).

Both conditions represent something for nothing: free money with no risk. The no-arbitrage principle asserts that no such portfolio can exist in an efficiently functioning market.

Put-Call Parity

One of the most elegant consequences of the no-arbitrage principle is put-call parity, a relationship that must hold between the prices of European calls and puts on the same underlying with the same strike and expiry.

Theorem (Put-Call Parity): Assume the stock \(S_t\) does not pay dividends, the interest rate \(r \geq 0\) is constant, and there is no arbitrage. Then for any time \(0 \leq t \leq T\), the European call price \(C_t\) and European put price \(P_t\), both with strike \(K\) and expiry \(T\) on the same underlying, satisfy:

\[ C_t = P_t + S_t - K e^{-r(T-t)} \]Proof: At expiry \(T\), we know the payoff of each instrument:

\[ C_T = \max(S_T - K, 0) \]\[ P_T = \max(K - S_T, 0) \]Subtracting:

\[ C_T - P_T = \max(S_T - K, 0) - \max(K - S_T, 0) \equiv S_T - K \]This identity holds for every possible value of \(S_T\): when \(S_T > K\) both terms give \(S_T - K\), and when \(S_T \leq K\) both terms give \(S_T - K\). So the portfolio “long call, short put” has the same value at expiry as a portfolio “long stock, short bond with face value \(K\).” By the no-arbitrage principle, these two portfolios must have the same value at every time \(t \leq T\):

\[ C_t - P_t = S_t - K e^{-r(T-t)} \]which gives the stated result. \(\square\)

Put-call parity is a model-free result: it does not depend on any assumptions about how \(S_t\) evolves over time, only on the no-arbitrage principle and the known payoff structure of calls and puts. It provides an important consistency check: if market prices of calls and puts violate put-call parity, an arbitrage opportunity exists and can be explicitly constructed.

Option Replication and Risk-Neutral Valuation

Overview

Lecture 4 develops the theoretical foundations of option pricing within the one-period binomial model. The three central themes are option replication and hedging, the computation of a fair option value through no-arbitrage arguments, and the powerful concept of risk-neutral valuation.

The One-Period Binomial Model

Setup and Assumptions

The one-period binomial model provides the simplest tractable framework for derivative pricing. At the current time \(t\), the stock price is \(S_t > 0\). Over a time interval of length \(\Delta t > 0\), the stock price moves to one of exactly two possible values. If the market goes up, the stock reaches

\[ S_{t+1}^u = u S_t \]and if the market goes down, the stock reaches

\[ S_{t+1}^d = d S_t \]where \(u\) is called the up ratio and \(d\) the down ratio. The up move occurs with real-world probability \(p > 0\) and the down move with probability \(1 - p\).

The model operates under four standing assumptions. First, the current stock price is strictly positive: \(S_t > 0\). Second, the time step satisfies \(\Delta t > 0\). Third, the parameters \(u\) and \(d\) are given and satisfy \(0 < d < u\), ensuring that the up move genuinely exceeds the down move and that prices remain positive. Fourth, and most critically, no arbitrage is assumed: there is no way to construct a portfolio that starts at zero cost and yields a non-negative payoff with positive probability of strictly positive profit.

The Bond

Alongside the risky stock, the model includes a risk-free bond (or money market account). The bond has present value \(e^{-r \Delta t}\) at time \(t\) and matures to 1 at time \(t+1\) in both the up and down states. Here \(r\) denotes the continuously compounded risk-free interest rate. In other words, investing \(e^{-r \Delta t}\) today in the bond yields exactly 1 unit of currency at the end of the period, regardless of which state of the world is realized.

The Option

We wish to price an option — a derivative whose value at time \(t+1\) depends on the stock price. Let \(V_{t+1}^u\) denote the option value if the stock went up and \(V_{t+1}^d\) the option value if it went down. These are taken as given: at the expiry date \(T\), they equal the corresponding option payoffs (for example, \(\max(S_T - K, 0)\) for a call). The question is: what is the fair price \(V_t\) today?

Option Replication and the Replicating Portfolio

Constructing the Replicating Portfolio

The replication approach works by constructing a portfolio of stock and bond that exactly mimics the option’s payoff in every future state. At time \(t\), form a portfolio consisting of \(\delta_t\) units of stock and \(\eta_t\) units of bond. The bond position has present value \(\eta_t \beta_t = \eta_t e^{-r \Delta t}\). We require this portfolio to replicate the option in both states, which gives the replication equation:

\[ \begin{bmatrix} 1 \\ 1 \end{bmatrix} \eta_t + \begin{bmatrix} u S_t \\ d S_t \end{bmatrix} \delta_t = \begin{bmatrix} V_{t+1}^u \\ V_{t+1}^d \end{bmatrix} \]The left column from the bond contributes 1 in each state, while the stock contributes \(u S_t\) in the up state and \(d S_t\) in the down state. The right-hand side is the option value at \(t+1\). Importantly, this is a 2-by-2 linear system in the two unknowns \(\delta_t\) and \(\eta_t\), and because \(u \neq d\), the solution is unique. The existence of a unique replicating portfolio is what makes the binomial model complete.

Once the replicating portfolio \(\{\delta_t S_t, \eta_t \beta_t\}\) is found, the no-arbitrage principle immediately forces:

\[ V_t = \delta_t S_t + \eta_t \beta_t = \delta_t S_t + \eta_t e^{-r \Delta t} \]If the market price of the option differed from this quantity, one could buy the cheaper and sell the more expensive, locking in a riskless profit — an arbitrage.

The Hedged Portfolio and Irrelevance of Real-World Probability

The existence of a replicating portfolio has a striking consequence: the portfolio \(\{V_t, -\delta_t S_t\}\), consisting of a long option position and a short stock position, is risk-free. Its value does not depend on whether the up or down move occurs. This is the foundation of delta hedging: by holding \(\delta_t\) shares of stock against the option, all market risk is eliminated.

A critical observation follows: the real-world probability \(p\) is entirely irrelevant to the fair value of the option. The replication equations depend only on \(u\), \(d\), \(S_t\), and the future option values; the actual probability of an up move plays no role whatsoever. This is a deep and non-intuitive result.

A Worked Example

Setting Up the Example

Consider a stock with current price \(S_0 = 20\). In one period the stock can rise to \(S_T^u = 22 = 1.1 S_0\) (so \(u = 1.1\) or fall to \(S_T^d = 18 = 0.9 S_0\) (so \(d = 0.9\). Assume the risk-free rate is \(r = 0\). We wish to price a European call option with strike \(K = 21\).

The call payoffs at expiry are: in the up state, \(C_T^u = \max(22 - 21, 0) = 1\); in the down state, \(C_T^d = \max(18 - 21, 0) = 0\).

Solving for the Replicating Portfolio

The replication equations become:

\[ 22\delta + 1 \cdot \eta = 1 \]\[ 18\delta + 1 \cdot \eta = 0 \]Subtracting the second from the first gives \(4\delta = 1\), so

\[ \delta = \frac{C_T^u - C_T^d}{(u - d)S_0} = \frac{1 - 0}{(1.1 - 0.9) \times 20} = 0.25 \]Substituting back, \(\eta = -18 \times 0.25 = -4.5\). A negative \(\eta\) means the bond position is short — equivalently, one borrows cash. The fair option value is therefore

\[ C_0 = \delta S_0 + \eta \beta_0 = 0.25 \times 20 - 4.5 = 0.5 \]Verifying No-Arbitrage: What If the Market Price Differs?

Suppose the market price of the call is 0.95, greater than the fair value of 0.5. An arbitrageur would sell the call for 0.95, buy 0.25 shares of stock (costing \(0.25 \times 20 = 5\), and borrow 4.5 in cash. The net portfolio value at time zero is

\[ \Pi_0 = -0.95 + 0.25 \times 20 - 4.5 = -0.45 \]This is a net receipt of 0.45 (the portfolio costs negative, meaning cash is received). At time \(T\), the total position value is

\[ -C_T + 0.25 S_T - 4.5 \equiv 0 \]in both states, because \(\delta = 0.25\) and \(\eta = -4.5\) satisfy the replication equations. The portfolio costs nothing at expiry but generated income of 0.45 at inception — a pure arbitrage. The reader is invited to similarly find an arbitrage when the market price is set to 0.10 (the naively computed expected payoff under the real-world probability, assuming equal up and down probabilities), which also differs from the fair value of 0.5.

Risk-Neutral Valuation

The No-Arbitrage Constraint on Parameters

The no-arbitrage assumption places an important constraint on the model parameters. If \(e^{r \Delta t} > u\), then the risk-free bond dominates the stock in every state, and one could profit by selling stock and investing in bonds. Conversely, if \(e^{r \Delta t} < d\), the stock dominates the bond in every state, enabling the reverse arbitrage. Therefore, no arbitrage implies

\[ d \leq e^{r \Delta t} \leq u \]Deriving the Risk-Neutral Probability

Rather than working directly with the replicating portfolio, we can introduce an elegant dual representation. Consider finding parameters \(\psi^u\) and \(\psi^d\) (called state prices or Arrow-Debreu prices) that satisfy a 2-by-2 linear system expressing that the bond and stock are correctly priced as discounted expectations:

\[ \begin{bmatrix} 1 \\ u S_t \end{bmatrix} \psi^u + \begin{bmatrix} 1 \\ d S_t \end{bmatrix} \psi^d = \begin{bmatrix} e^{-r \Delta t} \\ S_t \end{bmatrix} \]The unique solution is \(\psi^u = e^{-r \Delta t} q^*\) and \(\psi^d = e^{-r \Delta t}(1 - q^*)\), where

\[ q^* = \frac{e^{r \Delta t} - d}{u - d}, \qquad 0 \leq q^* \leq 1 \]The quantity \(q^*\) is the risk-neutral probability (also written \(q^*\) under the Q measure). The no-arbitrage condition \(d \leq e^{r \Delta t} \leq u\) guarantees that \(q^*\) lies in \([0,1]\), making it a genuine probability.

Interpretation: The Q Measure

From the second equation of the state-price system, we obtain the remarkable relation

\[ S_t = e^{-r \Delta t} \left( q^* u S_t + (1 - q^*) d S_t \right) = e^{-r \Delta t} \mathbf{E}^Q(S_{t+1}) \]and from the first equation,

\[ \beta_t = e^{-r \Delta t} \left( q^* \cdot 1 + (1-q^*) \cdot 1 \right) = e^{-r \Delta t} \mathbf{E}^Q(\beta_{t+1}) \]where \(\mathbf{E}^Q(\cdot)\) denotes expectation using \(q^*\) as the probability of the up state. Under the Q measure, every asset — bond, stock, and any derivative — earns the risk-free rate of return \(r\) in expectation.

The Risk-Neutral Pricing Formula

Let \(\{\delta_t S_t, \eta_t \beta_t\}\) be the replicating portfolio. Using the expressions for \(S_t\) and \(\beta_t\) above, the option value can be expanded as

\[ V_t = \delta_t S_t + \eta_t \beta_t = \delta_t e^{-r \Delta t}(q^* u S_t + (1-q^*) d S_t) + \eta_t e^{-r \Delta t}(q^* \cdot 1 + (1-q^*) \cdot 1) \]Rearranging and using the replication equations yields

\[ V_t = e^{-r \Delta t} q^* V_{t+1}^u + e^{-r \Delta t}(1 - q^*) V_{t+1}^d = e^{-r \Delta t} \mathbf{E}^Q(V_{t+1}) \]This is the risk-neutral pricing formula: the option price today is the discounted expected payoff under the risk-neutral measure Q. More precisely, under Q the expected rate of return of every traded asset equals the risk-free rate \(r\):

\[ \beta_t = e^{-r \Delta t} \beta_{t+1} \quad \text{(rate of return } = r\text{)} \]\[ S_t = e^{-r \Delta t} \mathbf{E}^Q(S_{t+1}) \quad \text{(expected rate of return is } r \text{ under Q)} \]\[ V_t = e^{-r \Delta t} \mathbf{E}^Q(V_{t+1}) \quad \text{(expected rate of return is } r \text{ under Q)} \]This result is deeper than it may first appear: it holds not just in the one-period model but extends to multi-period models and, in the limit, to continuous-time finance. Furthermore, the converse is also true: if such a probability Q exists with \(0 \leq q^* \leq 1\), then there is no arbitrage. The existence of no-arbitrage and the existence of a risk-neutral probability measure are equivalent.

Revisiting the Example Under Risk-Neutral Valuation

Return to the call with \(K = 21\), \(S_0 = 20\), \(u = 1.1\), \(d = 0.9\), and \(r = 0\). The risk-neutral probability is

\[ q^* = \frac{e^{r \Delta t} - d}{u - d} = \frac{1 - 0.9}{1.1 - 0.9} = \frac{0.1}{0.2} = 0.5 \]The option value is then

\[ C_0 = e^{-r \Delta t}(q^* C_T^u + (1 - q^*) C_T^d) = 1 \times (0.5 \times 1 + 0.5 \times 0) = 0.5 \]This matches exactly the value obtained by direct replication, confirming the equivalence of both approaches. The real-world probability \(p\) is nowhere needed.

The Multi-Period Binomial Lattice

Overview

The one-period binomial model, while conceptually complete, is too crude for practical option pricing: it models the entire life of the option as a single coin flip. The remedy is to apply the one-period model repeatedly on small sub-intervals, producing a multi-period binomial lattice. This lecture introduces the lattice structure, the logarithmic return model that motivates parameter choices, the random walk interpretation of log prices, and the complete backward induction algorithm for pricing European options.

Time Partition and Notation

Partitioning the Time Horizon

Let the option have expiry \(T\). We divide the interval \([0, T]\) into \(N\) equal sub-intervals by introducing time points

\[ t_0 = 0 < t_1 < \cdots < t_n < \cdots < t_N = T \]where \(t_n = n \Delta t\) and \(\Delta t = T/N\). As \(N\) increases, \(\Delta t\) shrinks and the lattice provides a finer approximation to the continuous-time dynamics of the stock.

Notation for Node Prices

Rather than using \(S_t\) to emphasize continuous time, within the lattice it is more convenient to write \(S_n = S(t_n)\) for the stock price at time \(t_n\). When both superscripts and subscripts are used simultaneously, the superscript denotes the time step (the column of the lattice) and the subscript denotes the node index (the row). Specifically, \(S_j^n\) denotes the \(j\)-th price at time \(t_n\), where \(j = 0, 1, \ldots, n\), giving a total of \(n+1\) distinct price levels at time step \(n\). The initial condition is \(S_0^0 = S_0\).

The Multiplicative Price Model

Transition Rules

From node \(S_j^n\) at time \(t_n\), the price can move to one of two successor nodes at time \(t_{n+1}\):

\[ S_{j+1}^{n+1} = u S_j^n \quad \text{(up move, probability } p\text{)} \]\[ S_j^{n+1} = d S_j^n \quad \text{(down move, probability } 1-p\text{)} \]The key structural property is that an up followed by a down move, or a down followed by an up move, leads to the same node \(S_1^2 = ud S_0\). If we additionally assume \(d = 1/u\), this recombination property is exact, producing a non-drifting recombining tree. In a two-period lattice, for example, there are five nodes: \(S_0^0 = S_0\) at step 0; \(S_1^1 = u S_0\) and \(S_0^1 = d S_0\) at step 1; and \(S_2^2 = u^2 S_0\), \(S_1^2 = ud S_0\), \(S_0^2 = d^2 S_0\) at step 2. The recombination means the number of nodes at step \(n\) is only \(n+1\) rather than \(2^n\), a crucial computational savings.

More generally, the price at node \(j\) at time step \(N\) (expiry) can be written in closed form as

\[ S_j^N = u^j d^{N-j} S_0 \]Given a binomial lattice satisfying the no-arbitrage condition \(d < e^{r \Delta t} < u\), any European option can be priced by backward induction through the lattice.

The Additive Log-Price Model and Random Walk

Logarithmic Returns

In finance it is common and natural to work with logarithmic returns rather than price levels. Define \(X_n = \log(S_n)\). The log return in the \((n+1)\)-th period is

\[ \Delta X_n = \log\left(\frac{S_{n+1}}{S_n}\right) \]The simple return \((S_{n+1} - S_n)/S_n\) is approximately equal to the log return when returns are small, since \(\log(1 + x) \approx x\) for small \(x\). Thus

\[ \frac{S_{n+1} - S_n}{S_n} \approx \log\left(\frac{S_{n+1}}{S_n}\right) \]The log price evolves additively:

\[ X_{n+1} = \log(S_{n+1}) = X_n + \Delta X_n \]The Binomial Log-Return Distribution

In the binomial model, the log return in each period takes one of two values:

\[ \Delta X_n = \begin{cases} +\Delta h & \text{with probability } p \\ -\Delta h & \text{with probability } 1 - p \end{cases} \]where \(\Delta h\) is a given positive constant. This is equivalent to the multiplicative model with \(u = e^{\Delta h}\) and \(d = e^{-\Delta h} = 1/u\), so the symmetric condition \(d = 1/u\) corresponds exactly to the up and down log returns being \(+\Delta h\) and \(-\Delta h\) respectively.

The cumulative log return over the first \(n\) periods is the sum \(\sum_{k=0}^{n-1} \Delta X_k\), and the log price at time \(t_n\) is

\[ X_n = X_0 + \sum_{k=0}^{n-1} \Delta X_k \]This is precisely the position of a random walker starting at \(X_0\) (or at 0 if \(X_0 = 0\) who at each step moves up by \(\Delta h\) with probability \(p\) and down by \(\Delta h\) with probability \(1-p\). This additive model for the log price is far easier to analyze than the multiplicative model for the price itself.

Properties of the Discrete Random Walk

The discrete random walk formed by the log returns has three fundamental properties. First, each increment \(\Delta X_n\) has the same distribution for any \(n\), taking value \(+\Delta h\) with probability \(p\) and \(-\Delta h\) with probability \(1-p\). Second, the increments are independent: for \(n \neq n'\),

\[ \mathbf{E}(\Delta X_n \Delta X_{n'}) = \mathbf{E}(\Delta X_n)\mathbf{E}(\Delta X_{n'}) \]This means the next price move is independent of all past moves — the process is Markovian (a random walk). Third, the statistical moments are determined by \(p\) and \(\Delta h\):

\[ \mathbf{E}(\Delta X_n) = p\Delta h - (1-p)\Delta h = (2p-1)\Delta h =: \alpha_0 \]\[ \mathbf{E}(\Delta X_n^2) = p(\Delta h)^2 + (1-p)(\Delta h)^2 = (\Delta h)^2 \]\[ \mathbf{var}(\Delta X_n) = (\Delta h)^2 - (2p-1)^2(\Delta h)^2 =: \sigma_0^2 \]Convergence to the Normal Distribution via the Central Limit Theorem

By the Central Limit Theorem, as \(N \to \infty\) with the time step \(\Delta t = T/N\) shrinking, the normalized cumulative log return converges in distribution to a standard normal:

\[ \text{prob}\left(\frac{\sum_{n=0}^{N-1} \Delta X_n - N\alpha_0}{\sigma_0 \sqrt{N}} \leq x\right) \to F_{\text{normal}}(x) \]where \(F_{\text{normal}}(x)\) is the cumulative distribution function (CDF) of the standard normal:

\[ F_{\text{normal}}(x) = \frac{1}{\sqrt{2\pi}} \int_{-\infty}^{x} e^{-s^2/2} \, ds \]This convergence result is the continuous-time limit underpinning the Black-Scholes formula.

Constructing the Binomial Lattice: Choosing u, d, p

Matching the Statistical Moments of the Underlying

To use the binomial lattice as a model for a real stock, we need to choose the parameters \(u\), \(d\), and \(p\) so that the lattice correctly captures the statistical properties of the stock’s returns. Assume the underlying stock has two key annual statistics: the average log return per year \(\alpha\) and the volatility (standard deviation of the annual log return) \(\sigma\), so the variance of the annual log return is \(\sigma^2\).

Over a small interval \(\Delta t\), the expected log return is \(\alpha \Delta t\) and its variance is \(\sigma^2 \Delta t\). We choose the binomial parameters to match these two statistics simultaneously. Taking \(u = e^{\Delta h}\) and \(d = 1/u = e^{-\Delta h}\), the matching conditions become:

\[ \mathbf{E}(\Delta X_n) = p \Delta h - (1-p)\Delta h = \mathbf{E}\left[\log(S_{t+\Delta t}/S_t)\right] = \alpha \Delta t \]\[ \mathbf{var}(\Delta X_n) = (\Delta h)^2 - (2p-1)^2(\Delta h)^2 = \mathbf{var}\left[\log(S_{t+\Delta t}/S_t)\right] = \sigma^2 \Delta t \]Since we have two equations and three unknowns (\(u\), \(d\), \(p\), there is a one-parameter family of solutions. One additional constraint is needed to pin down a unique choice.

The CRR Parameterization

A widely used choice, due to Cox, Ross, and Rubinstein (1979) and known as the CRR parameterization, is:

\[ u = e^{\sigma \sqrt{\Delta t}}, \qquad d = \frac{1}{u} = e^{-\sigma \sqrt{\Delta t}}, \qquad p = \frac{1}{2} + \frac{1}{2} \frac{\alpha}{\sigma} \sqrt{\Delta t} \]Here \(\Delta h = \sigma \sqrt{\Delta t}\), meaning the step size in the log-price random walk scales as the square root of the time step. Several important observations follow from this construction.

First, the parameters \(u\) and \(d\) depend only on the volatility \(\sigma\), not on the drift \(\alpha\). The expected log return \(\alpha\) enters only through the real-world probability \(p\). But since we already know from Lecture 4 that \(p\) is irrelevant to option pricing, the drift \(\alpha\) plays no role in determining option values — option value depends on volatility only, not the expected rate of return.

Second, the CRR choice produces a recombining tree, since \(ud = 1\). This is illustrated by a two-period CRR lattice, where the five nodes take values \(S_0 e^{2\sigma\sqrt{\Delta t}}\), \(S_0\), and \(S_0 e^{-2\sigma\sqrt{\Delta t}}\) at the terminal step — the middle node \(S_0\) is reached by either an up-then-down or a down-then-up path.

Third, there are in principle many other valid parameterizations beyond CRR, since we have two moment-matching equations and three free parameters. However, one can also directly set up the lattice under the no-arbitrage (risk-neutral) measure, in which the expected rate of return is \(r\) and the probability is \(q^*\), rather than the real-world measure with drift \(\alpha\) and probability \(p\). Both approaches yield the same option prices.

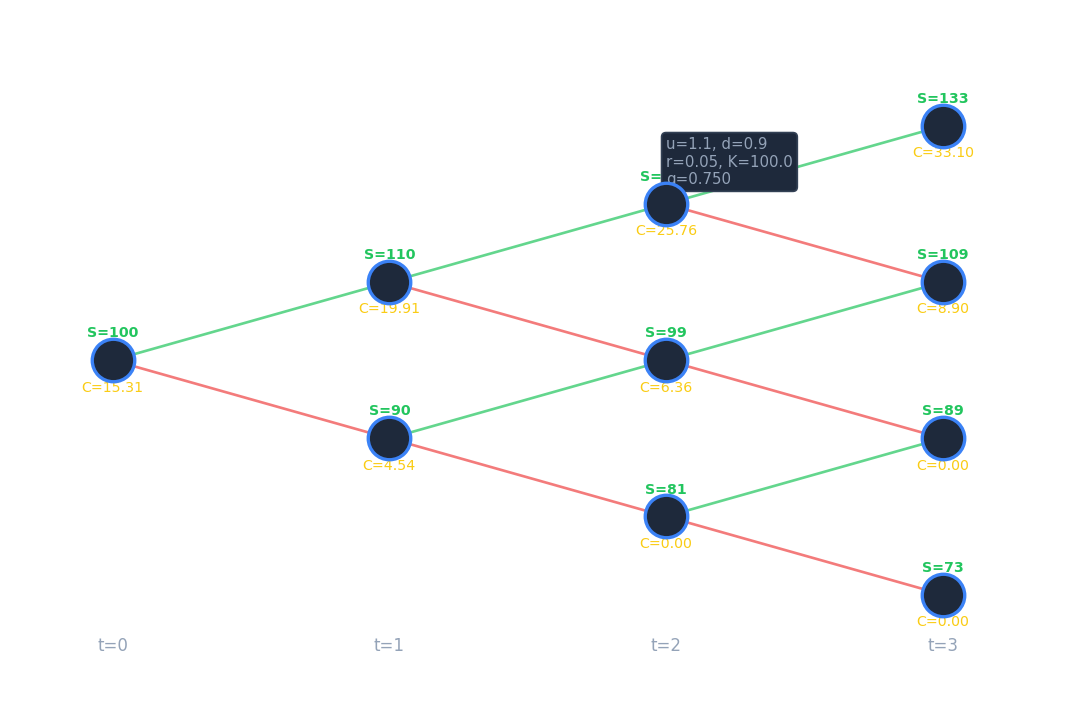

Pricing European Options on the Binomial Lattice

The Four-Step Algorithm

Pricing a European option with expiry \(T\) on an \(N\)-period lattice proceeds in four steps.

Step 1: Choose parameters. Select \(u\), \(d\), and \(p\) to match the log-return statistics of the underlying. For the CRR choice, set \(\log(u) = \Delta h = \sigma \sqrt{\Delta t}\). Ensure \(\Delta t\) is sufficiently small that the no-arbitrage condition

\[ e^{-\sigma\sqrt{\Delta t}} \leq e^{r \Delta t} \leq e^{\sigma \sqrt{\Delta t}} \]is satisfied. For the CRR lattice with \(\Delta t\) small, this condition holds automatically.

Step 2: Build the lattice of stock prices. Using \(u\) and \(d\), populate the lattice via the recurrence \(S_{j+1}^{n+1} = u S_j^n\) and \(S_j^{n+1} = d S_j^n\). The closed-form terminal prices are \(S_j^N = u^j d^{N-j} S_0\) for \(j = 0, 1, \ldots, N\).

Step 3: Compute terminal option values. At the expiry \(T = t_N\), set the option values to the payoff:

\[ V_j^N = \text{payoff}(S_j^N), \qquad 0 \leq j \leq N \]For a European call this is \(\max(S_j^N - K, 0)\); for a put, \(\max(K - S_j^N, 0)\).

Step 4: Roll back through the lattice (backward induction). Working from \(n = N-1\) back to \(n = 0\), apply the one-step risk-neutral pricing formula at each node:

\[ V_j^n = e^{-r \Delta t} \left( q^* V_{j+1}^{n+1} + (1 - q^*) V_j^{n+1} \right), \qquad j = 0, 1, \ldots, n \]where the risk-neutral probability is

\[ q^* = \frac{e^{r \Delta t} - d}{u - d} \]After completing the backward sweep, \(V_0^0\) is the fair option price at time 0. An example MATLAB implementation of this algorithm (the “TreeSlow” code) is discussed in Section 5.5 of the course notes.

American Options, Dividends, Convergence, and the Black-Scholes Formula

Overview

Lecture 6 extends the binomial lattice framework in three directions: handling dividends paid by the underlying stock, pricing American options with their early-exercise feature, and analyzing the convergence of binomial lattice prices to the exact continuous-time limit known as the Black-Scholes formula.

European Option Pricing with Dividends

The Dividend Model

Many stocks pay dividends during the life of an option, and these payments affect the stock price and hence the option value. We model a single dividend payment occurring at a known future time \(t_d\) with \(0 \leq t_d \leq T\). Two time-point notations are useful: \(t_d^-\) denotes the instant immediately before the dividend payment, and \(t_d^+\) denotes the instant immediately after.

The dividend amount is \(D = \rho S(t_d^-)\), a fixed fraction \(\rho\) of the stock price just before the ex-dividend instant. Such a proportional dividend is the standard assumption in the binomial lattice framework.

Effect of the Dividend on the Stock Price

By the no-arbitrage principle, the stock price must drop by exactly the dividend amount at the dividend date. If it did not, one could buy the stock just before \(t_d\), collect the dividend, and sell immediately after, generating a riskless profit. Therefore:

\[ S(t_d^+) = S(t_d^-) - D \]However, this drop in the stock price does not affect the option holder or writer, because the option is assumed to be dividend protected by contract. The option’s value is a continuous function of time at \(t_d\):

\[ V\left(S(t_d^-), t_d^-\right) = V\left(S(t_d^+), t_d^+\right) = V\left(S(t_d^-) - D, t_d^+\right) \]This relationship is key: it says that the option value computed using the pre-dividend stock price equals the option value computed using the post-dividend (lower) stock price at the same instant. This continuity condition is used in the backward induction to handle the jump in the underlying.

Backward Induction Algorithm with Dividends

The pricing algorithm is modified as follows. Roll back through the lattice from \(n = N-1\) to \(n = 0\). At each interior step, apply the standard one-step discounting:

\[ V_j^n = e^{-\Delta t} \left( q^* V_{j+1}^{n+1} + (1 - q^*) V_j^{n+1} \right) \]When the backward sweep reaches the time step \(t_n\) that is closest to the dividend date \(t_d\), an additional adjustment is applied. The option values \(V_j^n\) at time \(t_n^-\) are computed using the continuity condition (equation (1) above) and interpolation. The interpolation is necessary because \(S(t_d^-) - D\) will generally not coincide with any of the lattice node prices \(S_j^n\), so the option value at \(S(t_d^-) - D\) must be interpolated from neighboring node values.

Pricing American Options

The Early Exercise Problem

An American option differs from its European counterpart in that the holder may exercise it at any time up to and including expiry. Formally, the holder may exercise at any of the discrete times \(t_n\), \(n = 0, 1, \ldots, N\). At each node the holder faces a decision: exercise now and receive \(\text{payoff}(S_j^n)\), or continue to hold and receive the continuation value. The holder will rationally choose whichever is larger.

At expiry \(T = t_N\), the holder exercises if and only if the payoff is positive, so \(V_j^N = \text{payoff}(S_j^N)\) as before. The optimal exercise strategy must be optimal at every node, given that optimal decisions will be made at all subsequent nodes. This Bellman’s principle of dynamic programming means the problem can be decomposed into a sequence of single-step decisions solved in backward order.

Backward Induction with Early Exercise

The algorithm for pricing an American option is:

Terminal condition: At expiry, \(V_j^N = \text{payoff}(S_j^N)\) for \(j = 0, 1, \ldots, N\).

Backward recursion: For \(n = N-1, N-2, \ldots, 0\) and for each \(j = 0, 1, \ldots, n\), first compute the continuation value (the value of holding the option for one more step):

\[ (V_j^n)^* = e^{-r \Delta t} \mathbf{E}^Q(V^{n+1} | S_j^n) = e^{-r \Delta t} \left( q^* V_{j+1}^{n+1} + (1-q^*) V_j^{n+1} \right) \]Then compare with the immediate exercise value to get the American option value at this node:

\[ V_j^n = \max\left( (V_j^n)^*, \text{payoff}(S_j^n) \right), \qquad j = 0, 1, \ldots, n \]The optimal exercise strategy is to exercise immediately at node \((t_n, S_j^n)\) whenever the continuation value is less than the intrinsic (immediate exercise) value. After completing the backward sweep, \(V_0^0\) gives the fair American option price today.

The only algorithmic change from European pricing is the replacement of the direct assignment \(V_j^n = (V_j^n)^*\) with the max operation. This single modification correctly accounts for the early exercise premium that American options carry relative to European options.

Computational Costs

The backward induction algorithm over an \(N\)-period lattice has a total floating point operation count of \(O(N^2)\), since there are on the order of \(N^2/2\) nodes in the lattice. For time efficiency, the inner loop over node indices \(j\) can be vectorized in MATLAB or NumPy, replacing the explicit inner for-loop with array operations (see Section 2.5 of the course notes for a vectorized implementation). For space efficiency, if only the price \(V_0^0\) at time zero is needed (and not the full lattice of option values), the backward sweep can be carried out using \(O(N)\) storage by overwriting a one-dimensional array of length \(N+1\) at each step.

Convergence of the Binomial Lattice

Setting and Result

As \(N \to \infty\) (equivalently, \(\Delta t \to 0\), the binomial lattice option price converges to the exact continuous-time price. By the Central Limit Theorem, \(\log S_T - \log S_0\) converges to a normal distribution with mean \(\alpha T\) and variance \(\sigma^2 T\), which is the log-normal stock price model of Black and Scholes. It can be shown rigorously that the binomial lattice European option value functions converge to the Black-Scholes formulae.

Convergence Rate

Let \(V_0^{\text{exact}} = V(S_0, 0)\) denote the exact Black-Scholes price, and let \(V_0^{\text{tree}}(\Delta t)\) denote the binomial lattice price at time 0 with step size \(\Delta t = T/N\). PDE theory (discussed later in the course) shows that if the strike price falls exactly on a binomial lattice node, the error is linear in \(\Delta t\):

\[ V_0^{\text{tree}}(\Delta t) = V_0^{\text{exact}} + \text{const} \cdot \Delta t + \text{small } o(\Delta t) \]Otherwise, when the strike does not align with a node, the convergence may be erratic and smoothing of the payoff is required (see Section 5.4 of the course notes for techniques to restore clean convergence).

Diagnosing Convergence Rate Computationally

If the convergence is linear in \(\Delta t\), then halving \(\Delta t\) should halve the error, and the ratio

\[ \lim_{\Delta t \to 0} \frac{V_0^{\text{tree}}(\Delta t / 2) - V_0^{\text{tree}}(\Delta t)}{V_0^{\text{tree}}(\Delta t / 4) - V_0^{\text{tree}}(\Delta t / 2)} \]should approach 2. If the convergence is quadratic in \(\Delta t\), meaning

\[ V_0^{\text{tree}}(\Delta t) = V_0^{\text{exact}} + \alpha (\Delta t)^2 + o((\Delta t)^2) \]for some constant \(\alpha\) independent of \(\Delta t\), then the same ratio would approach 4. This provides a practical computational test for verifying the order of convergence of any numerical method.

The Black-Scholes Formula

Derivation via the Central Limit Theorem

The continuous-time limit of the binomial lattice is the Black-Scholes model, in which the log price \(\log S_t\) follows a Brownian motion with drift. The Central Limit Theorem implies that \(\log S_t - \log S_0\) has a normal distribution with mean \(\alpha t\) and variance \(\sigma^2 t\). The binomial lattice option value converges to the Black-Scholes formula as \(N \to \infty\).

The Black-Scholes Formula for European Calls

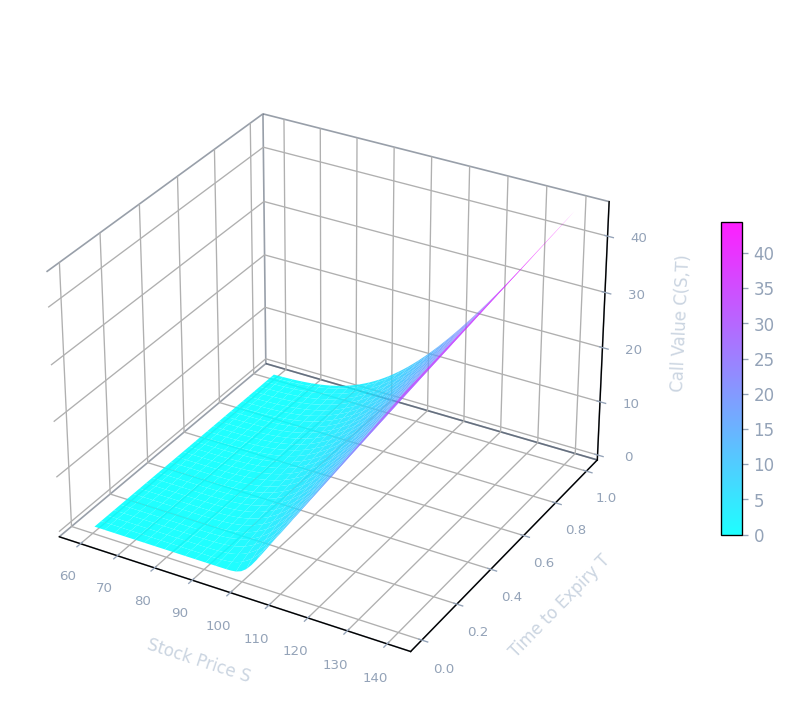

For \(0 \leq t \leq T\), the Black-Scholes price of a European call option with current stock price \(S\), strike \(K\), risk-free rate \(r\), volatility \(\sigma\), and time to expiry \(T - t\) is

\[ C(S, t) = S \mathcal{N}_{cdf}(d_1) - K e^{-r(T-t)} \mathcal{N}_{cdf}(d_2) \]where \(\mathcal{N}_{cdf}(d)\) is the cumulative distribution function of the standard normal:

\[ \mathcal{N}_{cdf}(d) = \frac{1}{\sqrt{2\pi}} \int_{-\infty}^{d} e^{-\frac{1}{2} y^2} \, dy \]and the arguments \(d_1\) and \(d_2\) are given by

\[ d_1 = \frac{\log(S/K) + (r + 0.5\sigma^2)(T-t)}{\sigma \sqrt{T-t}} \]\[ d_2 = \frac{\log(S/K) + (r - 0.5\sigma^2)(T-t)}{\sigma \sqrt{T-t}} \]Note that \(d_2 = d_1 - \sigma\sqrt{T-t}\). Here \(T - t\) is the time to expiry. The formula makes clear that the option price depends on the current stock price \(S\), the strike \(K\), the risk-free rate \(r\), the volatility \(\sigma\), and the time to expiry \(T - t\).

Key Properties of the Black-Scholes Formula

Two properties of the formula deserve special emphasis. First, consistent with the binomial model analysis, the option value depends only on the volatility \(\sigma\), not on the average rate of return \(\alpha\). The drift of the stock under the real-world measure is entirely irrelevant to the option price. Second, unlike the European option, there is no closed-form analytic formula for American option pricing in the Black-Scholes framework. American options must be priced numerically — for instance using the binomial lattice backward induction algorithm with the early-exercise modification — or by solving a partial differential equation with a free boundary.

Put-Call Parity and European Puts

The Black-Scholes formula for a European put can be derived either by direct computation or, more elegantly, from put-call parity. Put-call parity states that for European options on the same underlying with the same strike and expiry:

\[ C(S, t) - P(S, t) = S - K e^{-r(T-t)} \]Rearranging and using the identity \(\mathcal{N}_{cdf}(-x) = 1 - \mathcal{N}_{cdf}(x)\) yields the European put price:

\[ P(S, t) = K e^{-r(T-t)} \mathcal{N}_{cdf}(-d_2) - S \mathcal{N}_{cdf}(-d_1) \]The Black-Scholes formula represents the culmination of the binomial lattice approach: it is the analytic closed-form that the numerical lattice converges to as the number of time steps grows without bound, and it provides a benchmark against which computational methods can be validated.

Stochastic Calculus and the Black-Scholes Model

Standard Brownian Motion

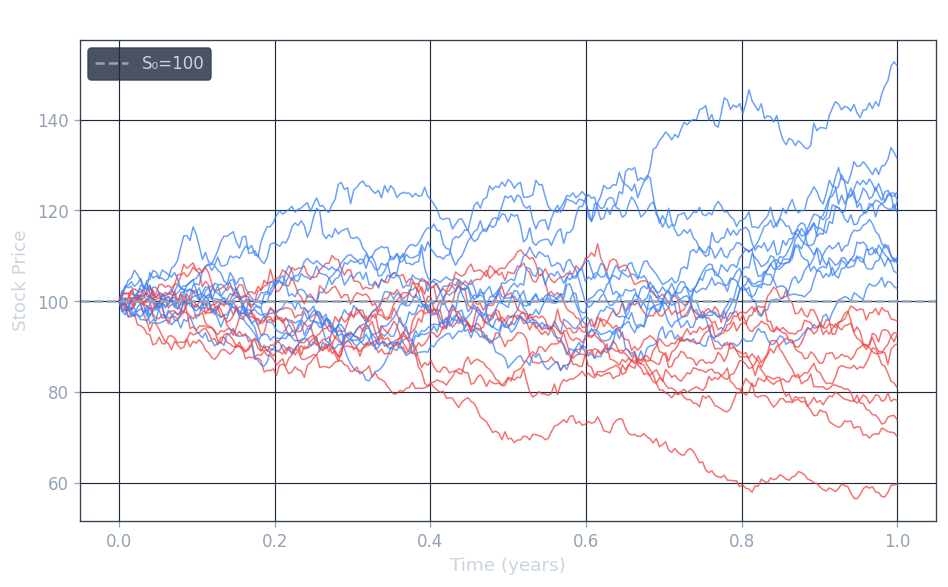

From Discrete Random Walk to Continuous Process

The mathematical foundation of modern option pricing theory rests on the concept of standard Brownian motion, also called the Wiener process. To understand where this object comes from, it is helpful to begin with a discrete model and examine what happens as the time step shrinks to zero.

Consider a special binomial lattice, a discrete random walk starting at \( X_0 = 0 \). At each step, the increment \( \Delta X_n \) is a symmetric coin flip scaled by the square root of the time step:

\[ \Delta X_n = \begin{cases} \sqrt{\Delta t} & \text{with probability } p = 0.5 \\ -\sqrt{\Delta t} & \text{with probability } 0.5 \end{cases} \]The choice to scale by \( \sqrt{\Delta t} \) rather than \( \Delta t \) is deliberate and essential: it ensures that the variance of the cumulative sum grows linearly with time, which is the signature property of Brownian motion. Now we take the continuous-time limit. As \( \Delta t \to 0 \), the discrete time step becomes the differential \( dt \), and the increment \( \Delta X_t \) becomes the infinitesimal \( dX_t \). The limit of this random walk is a continuous process, which is called standard Brownian motion and is conventionally denoted \( Z_t \).

Formal Properties

A standard Brownian motion \( Z_t \) is defined by three fundamental properties.

First, initialization: \( Z(0) = 0 \). The process starts at the origin.

Second, normally distributed increments: for all \( t \geq 0 \) and \( \Delta t > 0 \), the increment \( Z(t + \Delta t) - Z(t) \) is normally distributed with mean zero and variance \( \Delta t \):

\[ Z(t + \Delta t) - Z(t) \sim \mathcal{N}(0, \Delta t) \]This says that over any time interval of length \( \Delta t \), the change in the Brownian motion is a zero-mean Gaussian random variable whose variance equals the length of the interval.

Third, independent increments: for any \( 0 \leq t_1 < t_2 \leq t_3 < t_4 \), the increments \( Z(t_2) - Z(t_1) \) and \( Z(t_4) - Z(t_3) \) are independent random variables. Non-overlapping increments carry no information about one another.

Two important consequences follow from these definitions. Taking \( t_1 = 0 \) in the second property, one sees that \( Z_t \sim \mathcal{N}(0, t) \): the process \( Z_t \) at any time \( t \) has a normal distribution with mean zero and variance \( t \). The independent-increments property is also known as the Markovian property: the future evolution of the process depends only on the current state, not on the full history. This is consistent with the efficient market hypothesis, which asserts that all past price information is already reflected in the current price.

More Properties: The Infinitesimal Increment

It is instructive to examine the structure of an infinitesimal increment of Brownian motion more carefully. Since \( \Delta Z_t = Z(t + \Delta t) - Z(t) \sim \mathcal{N}(0, \Delta t) \), we can write this increment as

\[ \Delta Z_t = \phi_t \sqrt{\Delta t} \quad \text{or equivalently} \quad dZ_t = \phi_t \sqrt{dt} \]where \( \phi_t \sim \mathcal{N}(0,1) \) is an independent standard normal random variable at each time \( t \). Equivalently, \( dZ_t / dt = \phi_t / \sqrt{dt} \), which diverges as \( dt \to 0 \). This captures a fundamental regularity result: the standard Brownian motion \( Z_t = Z_0 + \int_0^t dZ_s \) is continuous but not differentiable. Its sample paths are nowhere smooth in the classical sense.

The moments of the standard normal \( \phi_t \) are:

\[ \mathbf{E}(\phi_t) = 0, \quad \mathbf{E}(\phi_t^2) = 1, \quad \mathbf{E}(\phi_t^3) = 0, \quad \mathbf{E}(\phi_t^4) = 3 \]From these one computes the mean and variance of the squared increment \( (\Delta Z_t)^2 \):

\[ \mathbf{E}((\Delta Z_t)^2) = \mathbf{E}(\Delta t \cdot \phi_t^2) = \Delta t \cdot \mathbf{E}(\phi_t^2) = \Delta t \]\[ \mathbf{var}((\Delta Z_t)^2) = \mathbf{E}((\Delta Z_t)^4) - (\mathbf{E}((\Delta Z_t)^2))^2 = (\Delta t)^2 \mathbf{E}(\phi_t^4) - (\Delta t)^2 = O((\Delta t)^2) \]A crucial observation: the variance of \( (\Delta Z_t)^2 \) goes to zero quadratically in \( \Delta t \). This means that as \( \Delta t \to 0 \), the squared increment \( (dZ_t)^2 \) becomes deterministic, concentrating on its expected value. We can therefore write the fundamental identity of stochastic calculus:

\[ (dZ_t)^2 = dt \]This non-classical rule, where a stochastic differential squared equals a deterministic differential, is at the heart of Itô’s Lemma.

Summary of Important Properties of \( Z_t \)

To summarize the key operational facts: if \( \phi_t \sim \mathcal{N}(0,1) \), then

\[ \Delta Z_t = \phi_t \sqrt{\Delta t}, \quad dZ_t = \phi_t \sqrt{dt}, \quad (dZ_t)^2 = dt \]These identities will be used repeatedly in deriving Itô’s Lemma and solving the Black-Scholes SDE.

Itô’s Process and the Stochastic Integral

Itô’s Process

A general Itô’s process \( X_t \) is a solution to the stochastic differential equation (SDE):

\[ dX_t = a \cdot dt + b \cdot dZ_t \]where \( a \) and \( b \) can be constants or functions of \( (X_t, t) \). The term \( a \cdot dt \) is the deterministic trend component, governing the expected direction of movement, while \( b \cdot dZ_t \) is the random fluctuation component, encoding the uncertainty driven by the standard Brownian motion.

The Stochastic (Itô) Integral

Before turning to Itô’s Lemma, we need to define precisely what \( \int_0^T b(t) \, dZ_t \) means. In classical deterministic calculus, the integral \( \int_0^T f(t_n) \, dt \) is defined as the limit of Riemann sums, and the choice of evaluation point within each sub-interval (left endpoint, right endpoint, midpoint) does not matter in the limit because the integrand is smooth. Formally:

\[ \int_0^T f(t_n) \, dt = \lim_{N \to +\infty} \sum_{n=0}^{N-1} f(t_n) \Delta t = \lim_{N \to +\infty} \sum_{n=0}^{N-1} f(t_{n+1}) \Delta t \]where \( t_n = n \Delta t \) and \( \Delta t = T/N \). For stochastic integrals, the situation is fundamentally different because the integrand \( b(t) \) and the integrator \( Z_t \) are both random and correlated, so the choice of evaluation point matters crucially in the limit.

The Itô integral is defined by evaluating the integrand at the left endpoint of each sub-interval:

\[ \int_0^T b(t) \, dZ_t = \lim_{N \to +\infty} \left( \sum_{n=0}^{N-1} b(t_n)(Z(t_{n+1}) - Z(t_n)) \right) \]where the limit is taken in the mean square sense, meaning:

\[ \lim_{N \to +\infty} \mathbf{E}\left( \left( \sum_{k=0}^{N-1} b(t_{n+1})(Z(t_{n+1}) - Z(t_n)) - \int_0^T b(t) \, dZ_t \right)^2 \right) = 0 \]Two notes are important here. First, the partial sum \( \sum_{n=0}^{N-1} b(t_n)(Z(t_{n+1}) - Z(t_n)) \) is a random variable, and so is the limiting Itô integral \( \int_0^T b(t) \, dZ_t \). Second, the left-endpoint convention is financially meaningful: it says that the trading position \( b(t_n) \) is determined using only information available up to time \( t_n \), before seeing the Brownian increment \( Z(t_{n+1}) - Z(t_n) \). Using the right-endpoint (which would yield a different answer) would correspond to using future information, which is not admissible in finance.

Solution for Constant Coefficients

As an analogy, recall that the bond price satisfies the deterministic ODE \( d\beta(\tau) = r \beta \, d\tau \), or equivalently \( d\log(\beta(\tau)) = r \, d\tau \). Integrating gives \( \beta(t) = \beta(0) e^{rt} \).

For the SDE \( dX_t = a \, dt + b \, dZ_t \) with constants \( a, b \), integrating directly gives:

\[ X(t) = X(0) + \int_0^t (a \, d\tau + b \, dZ(\tau)) = X(0) + a \cdot t + b \cdot Z_t \]The solution is simply the initial value plus a linear trend plus a Brownian motion term scaled by \( b \). This is the simplest Itô process, sometimes called arithmetic Brownian motion.

Geometric Brownian Motion and the Black-Scholes Model

The GBM SDE

The Black-Scholes model for the stock price \( S_t \) is the Geometric Brownian Motion (GBM) model, a stochastic differential equation of the form:

\[ \frac{dS_t}{S_t} = \mu \, dt + \sigma \, dZ_t \]or equivalently,

\[ dS_t = \mu S_t \, dt + \sigma S_t \, dZ_t \]Here \( \mu \) is the drift (expected instantaneous rate of return) and \( \sigma \) is the volatility (standard deviation of returns per unit time), both treated as constants. This is an Itô process with \( a(S_t, t) = \mu S_t \) and \( b(S_t, t) = \sigma S_t \). The left-hand side \( dS_t / S_t \) is the instantaneous relative return, so the model says the return consists of a deterministic drift \( \mu \, dt \) and a random fluctuation \( \sigma \, dZ_t \). The central question is: how do we solve this SDE for \( S_t \)?

We cannot solve it the same way as the constant-coefficient case, because the coefficients depend on \( S_t \) itself. The correct tool is Itô’s Lemma.

Itô’s Lemma

Statement

Itô’s Lemma is the stochastic analogue of the chain rule. It tells us how a smooth function of an Itô process evolves over time. Suppose that \( S_t \) satisfies the general SDE:

\[ dS_t = a(S_t, t) \, dt + b(S_t, t) \, dZ_t \]Let \( G(S, t) : \mathbb{R}^2 \to \mathbb{R} \) be a smooth (twice continuously differentiable) function. Then \( Y_t = G(S_t, t) \) is itself an Itô process, and it satisfies:

\[ dY_t = \left[ \frac{\partial G}{\partial t} + a(S_t, t) \frac{\partial G}{\partial S} + \frac{1}{2} b(S_t, t)^2 \frac{\partial^2 G}{\partial S^2} \right] dt + b(S_t, t) \frac{\partial G}{\partial S} \, dZ_t \]For the Black-Scholes model specifically, \( a(S_t, t) = \mu S_t \) and \( b(S_t, t) = \sigma S_t \), so the formula specializes to the well-known form involving the option Greeks: a theta term, a delta term, and a gamma term.

Proof (Derivation)

The key step in understanding Itô’s Lemma is to perform a Taylor expansion of \( G \) and keep track of which terms survive in the limit \( \Delta t \to 0 \), using the special stochastic rules.

For notational simplicity, write \( a \equiv a(S_t, t) \) and \( b \equiv b(S_t, t) \). By Taylor expansion:

\[ \Delta Y_t = G(S_t + \Delta S_t, t + \Delta t) - G(S_t, t) = \frac{\partial G}{\partial S} \Delta S_t + \frac{\partial G}{\partial t} \Delta t + \frac{1}{2} \frac{\partial^2 G}{\partial S^2} (\Delta S_t)^2 + \frac{\partial^2 G}{\partial S \partial t} (\Delta S_t)(\Delta t) + O((\Delta t)^2) \]Now, since \( dS_t = a \, dt + b \, dZ_t \) and \( dZ_t = O(\sqrt{dt}) \), the cross term satisfies \( \Delta S_t \Delta t = O((\Delta t)^{3/2}) \), which is negligible. After discarding this cross term, we have:

\[ \Delta Y_t \approx \frac{\partial G}{\partial S} \Delta S_t + \frac{\partial G}{\partial t} \Delta t + \frac{1}{2} \frac{\partial^2 G}{\partial S^2} (\Delta S_t)^2 + O((\Delta t)^{3/2}) \]The crucial computation is \( (dS_t)^2 \):

\[ (dS_t)^2 = (a \, dt + b \, dZ_t)^2 = a^2 (dt)^2 + b^2 (dZ_t)^2 + 2ab \, dt \, dZ_t = b^2 \, dt \]where we used the fundamental identity \( (dZ_t)^2 = dt \), together with \( (dt)^2 = 0 \) and \( dt \, dZ_t = 0 \) (both vanish faster than \( dt \) as \( \Delta t \to 0 \). Taking the limit \( \Delta t \to 0 \):

\[ dY_t = \frac{\partial G}{\partial t} dt + \frac{\partial G}{\partial S} dS_t + \frac{1}{2} \frac{\partial^2 G}{\partial S^2} b^2 \, dt \]Substituting \( dS_t = a \, dt + b \, dZ_t \) and collecting terms:

\[ dY_t = \left[ \frac{\partial G}{\partial t} + a(S_t, t) \frac{\partial G}{\partial S} + \frac{1}{2} b(S_t, t)^2 \frac{\partial^2 G}{\partial S^2} \right] dt + b(S_t, t) \frac{\partial G}{\partial S} \, dZ_t \]This is Itô’s Lemma. The extra term \( \frac{1}{2} b^2 \partial^2 G / \partial S^2 \) — the Itô correction term — has no analogue in ordinary calculus. It arises precisely because Brownian motion has non-zero quadratic variation, captured by \( (dZ_t)^2 = dt \). In classical calculus, all second-order terms vanish; in stochastic calculus, the second-order Brownian term survives.

Explicit Solution of the Black-Scholes SDE

Applying Itô’s Lemma to \( \log S_t \)

We now apply Itô’s Lemma to solve the GBM SDE. Consider the function \( G(S, t) = \log(S) \). Its partial derivatives are:

\[ \frac{\partial G}{\partial t} = 0, \quad \frac{\partial G}{\partial S} = \frac{1}{S}, \quad \frac{\partial^2 G}{\partial S^2} = -\frac{1}{S^2} \]For the Black-Scholes model, recall \( a(S_t, t) = \mu S_t \) and \( b(S_t, t) = \sigma S_t \). Substituting into Itô’s Lemma:

\[ d \log(S_t) = \left[ 0 + \mu S_t \cdot \frac{1}{S_t} + \frac{1}{2} (\sigma S_t)^2 \cdot \left(-\frac{1}{S_t^2}\right) \right] dt + \sigma S_t \cdot \frac{1}{S_t} \, dZ_t \]\[ d \log(S_t) = \left( \mu - \frac{1}{2} \sigma^2 \right) dt + \sigma \, dZ_t \]This is a remarkable simplification: the SDE for \( \log(S_t) \) has constant coefficients, so we can integrate it directly. Integrating from \( 0 \) to \( t \):

\[ \log(S_t) - \log(S_0) = \int_0^t \left(\mu - \frac{1}{2}\sigma^2\right) ds + \int_0^t \sigma \, dZ_s = \left(\mu - \frac{1}{2}\sigma^2\right) t + \sigma(Z_t - Z_0) \]Exponentiating both sides yields the explicit solution of the Black-Scholes model:

\[ S_t = S_0 \, e^{(\mu - \frac{1}{2}\sigma^2)t + \sigma Z_t} \]Interpretation and the Lognormal Distribution

This explicit formula has several important consequences. First, assuming \( S_0 > 0 \), the stock price \( S_t \) is always strictly positive, since it is the exponential of a real number. This is one of the key advantages of GBM over arithmetic Brownian motion, which can take negative values and is therefore unsuitable as a model for stock prices.

Second, \( S_t \) has a lognormal distribution. Since \( Z_t \sim \mathcal{N}(0, t) \), the exponent \( (\mu - \frac{1}{2}\sigma^2)t + \sigma Z_t \) is normally distributed with mean \( (\mu - \frac{1}{2}\sigma^2)t \) and variance \( \sigma^2 t \). Therefore \( \log(S_t/S_0) \) is Gaussian, making \( S_t \) lognormally distributed.