AMATH 455/655: Control Theory

Jun Liu

Estimated study time: 5 hr 1 min

Table of contents

Introduction and State-Space Models

Introduction and State-Space Models

A Minimal Set of Notation

Before entering the substance of the course, it is useful to fix notation. We denote by \(\mathbb{R}\) the set of real numbers, and by \(\mathbb{R}^n\) the \(n\)-dimensional Euclidean space. For a vector \(x \in \mathbb{R}^n\), the Euclidean norm (also called the \(L^2\) norm or 2-norm) is defined by

\[\|x\| = \sqrt{\sum_{i=1}^{n} x_i^2}.\]We write \(\mathbb{R}^{n \times m}\) for the space of real matrices with \(n\) rows and \(m\) columns, and \(\mathbb{C}\) for the set of complex numbers, where the imaginary unit is denoted \(j\). The time derivative of a function \(x\) of time is written \(x'\); alternative notations include \(\frac{dx}{dt}\) and \(\dot{x}\). Transposes of a vector \(x\) and a matrix \(A\) are written \(x^T\) and \(A^T\), respectively.

Introduction

Feedback is ubiquitous in nature and engineering. It is a fundamental principle that underpins the effects of influence and dependence throughout both biological and technological systems. It is perhaps not an exaggeration to say that living organisms would not exist without feedback, and that most if not all advanced technologies must rely on feedback to achieve their intended functionalities. Control theory can be seen as the systematic mathematical study of feedback.

To make this study mathematically precise, we employ mathematical models. The models introduced in this course are the so-called state-space models, which are systems of ordinary differential equations equipped with inputs and outputs. Like ordinary differential equations, they may be classified as linear or nonlinear, and as time-varying or time-invariant.

Linear Time-Varying (LTV) Systems

A control system of the form

\[\begin{align} x'(t) &= A(t)x(t) + B(t)u(t), \tag{1.1a} \\ y(t) &= C(t)x(t) + D(t)u(t), \tag{1.1b} \end{align}\]is called a linear time-varying (LTV) system. Here \(x(t) \in \mathbb{R}^n\), \(u(t) \in \mathbb{R}^k\), and \(y(t) \in \mathbb{R}^m\) are called the state, input, and output of the system, respectively. The coefficient matrices \(A(t) \in \mathbb{R}^{n \times n}\), \(B(t) \in \mathbb{R}^{n \times k}\), \(C(t) \in \mathbb{R}^{m \times n}\), and \(D(t) \in \mathbb{R}^{m \times k}\) are time-varying. The integer \(n\) is called the dimension or order of the state space.

Linear Time-Invariant (LTI) Systems

A special and especially important case arises when all coefficient matrices are constant. An linear time-invariant (LTI) system has the form

\[\begin{align} x'(t) &= Ax(t) + Bu(t), \tag{1.2a} \\ y(t) &= Cx(t) + Du(t), \tag{1.2b} \end{align}\]where \(A\), \(B\), \(C\), \(D\) are constant matrices of appropriate dimensions. We often write the system in the compact form

\[\begin{align} x' &= Ax + Bu, \tag{1.3a} \\ y &= Cx + Du, \tag{1.3b} \end{align}\]or simply denote it by the quadruple \((A, B, C, D)\). LTI systems will be our primary focus throughout the course.

Nonlinear Systems

More generally, a continuous-time control system can be described by a system of ordinary differential equations with inputs and outputs of the form

\[\begin{align} x' &= f(x, u), \tag{1.4a} \\ y &= h(x, u), \tag{1.4b} \end{align}\]where \(x \in \mathbb{R}^n\) is the state, \(u \in \mathbb{R}^k\) the input, \(y \in \mathbb{R}^m\) the output, and

\[f \colon \mathbb{R}^n \times \mathbb{R}^k \to \mathbb{R}^n, \qquad h \colon \mathbb{R}^n \times \mathbb{R}^k \to \mathbb{R}^m\]are potentially nonlinear functions defining the state equation (1.4a) and the output equation (1.4b), respectively. We refer to system (1.4) as a nonlinear system. We typically assume that both \(f\) and \(h\) are sufficiently smooth, for instance continuously differentiable with respect to both variables. By input, state, and output signals we mean functions \(u(t)\), \(x(t)\), and \(y(t)\) satisfying equations (1.4a) and (1.4b).

Linearization around a Trajectory

A general nonlinear system can be difficult to analyze directly. A standard technique is linearization, which aims to approximate the behavior of the nonlinear system (1.4) in some neighborhood of a given solution. Let \((\bar{x}(t), \bar{u}(t))\) be a solution to (1.4), meaning this pair of functions satisfies the state equation. The linearization of (1.4) around the trajectory \((\bar{x}(t), \bar{u}(t))\) is the LTV system

\[\begin{aligned} x' &= A(t)x + B(t)u, \\ y &= C(t)x + D(t)u, \end{aligned}\]where the coefficient matrices are the Jacobians of \(f\) and \(h\) evaluated along the trajectory:

\[A(t) = \left.\frac{\partial f}{\partial x}\right|_{\substack{x=\bar{x}(t)\\ u=\bar{u}(t)}}, \qquad B(t) = \left.\frac{\partial f}{\partial u}\right|_{\substack{x=\bar{x}(t)\\ u=\bar{u}(t)}},\]\[C(t) = \left.\frac{\partial h}{\partial x}\right|_{\substack{x=\bar{x}(t)\\ u=\bar{u}(t)}}, \qquad D(t) = \left.\frac{\partial h}{\partial u}\right|_{\substack{x=\bar{x}(t)\\ u=\bar{u}(t)}}.\]Here \(\frac{\partial f}{\partial x}\) denotes the Jacobian matrix of \(f\) with respect to \(x\), defined entry-wise by

\[\frac{\partial f}{\partial x} = \left(\frac{\partial f_i}{\partial x_j}\right) = \begin{bmatrix} \frac{\partial f_1}{\partial x_1} & \cdots & \frac{\partial f_1}{\partial x_n} \\ \vdots & \ddots & \vdots \\ \frac{\partial f_n}{\partial x_1} & \cdots & \frac{\partial f_n}{\partial x_n} \end{bmatrix}.\]The Jacobians \(\frac{\partial f}{\partial u}\), \(\frac{\partial h}{\partial x}\), and \(\frac{\partial h}{\partial u}\) are similarly defined.

Linearization around an Equilibrium Point

A particularly important special case of the above is linearization around an equilibrium point. A pair of vectors \((x^*, u^*) \in \mathbb{R}^n \times \mathbb{R}^k\) is said to be an equilibrium point (EP) of the system (1.4) if \(f(x^*, u^*) = 0\). At an equilibrium, the state does not change if the input is held fixed at \(u^*\). The linearization of (1.4) around the equilibrium \((x^*, u^*)\) yields the LTI system

\[\begin{aligned} x' &= Ax + Bu, \\ y &= Cx + Du, \end{aligned}\]where the constant matrices are

\[A = \left.\frac{\partial f}{\partial x}\right|_{\substack{x=x^*\\ u=u^*}}, \qquad B = \left.\frac{\partial f}{\partial u}\right|_{\substack{x=x^*\\ u=u^*}}, \qquad C = \left.\frac{\partial h}{\partial x}\right|_{\substack{x=x^*\\ u=u^*}}, \qquad D = \left.\frac{\partial h}{\partial u}\right|_{\substack{x=x^*\\ u=u^*}}.\]A large body of control theory is concerned with the analysis and design of controllers for stabilizing an otherwise unstable equilibrium — a classical example being the task of balancing an inverted pendulum with one’s hand. Because an LTI system accurately captures the local behavior of a nonlinear system near an equilibrium point, the study of LTI systems is of central importance, and this course will primarily focus on them.

Some Terminology

A control system of the form (1.2), (1.3), or (1.4) is called single input (SI) if \(k = 1\), that is, if \(u \in \mathbb{R}\), and multiple input (MI) if \(k > 1\). Similarly, it is single output (SO) if \(m = 1\) and multiple output (MO) if \(m > 1\). Using this terminology, a system with a single input and single output is called a SISO system, while a system with multiple inputs and multiple outputs is called a MIMO system.

Matrix Exponential and Solutions to LTI Systems

The Matrix Exponential

For a square matrix \(A \in \mathbb{R}^{n \times n}\), the matrix exponential of \(A\) is defined by the power series

\[e^A = \sum_{k=0}^{\infty} \frac{A^k}{k!}.\]It can be shown that this infinite series is well defined and converges element-wise to a fixed matrix. The matrix exponential is the key tool for solving LTI systems explicitly.

Proposition 2.1 (Properties of Matrix Exponential). Let \(A \in \mathbb{R}^{n \times n}\). The following properties hold.

(1) \(e^0 = I\), where \(0\) and \(I\) are the \(n \times n\) zero and identity matrices, respectively.

(2) \(\frac{d}{dt} e^{At} = A e^{At} = e^{At} A\) for all \(t \in \mathbb{R}\).

(3) If \(P^{-1}AP = B\) for some matrices \(P\) and \(B\), then \(e^A = P e^B P^{-1}\).

(4) If \(AB = BA\), then \(e^A B = B e^A\).

(5) If \(AB = BA\), then \(e^A e^B = e^B e^A\).

(6) \(e^{A(t_1 + t_2)} = e^{At_1} e^{At_2} = e^{At_2} e^{At_1}\) for all \(t_1, t_2 \in \mathbb{R}\).

(7) \([e^{At}]^{-1} = e^{-At}\) for all \(t \in \mathbb{R}\).

Proof. Items (1), (2), (3), and (4) can be verified directly using the definition. We verify item (2) as an illustration. Differentiating term by term,

\[\frac{d}{dt} e^{At} = \frac{d}{dt} \sum_{k=0}^{\infty} \frac{t^k A^k}{k!} = \sum_{k=0}^{\infty} \frac{t^{k-1}}{(k-1)!} A^k = A \sum_{k=0}^{\infty} \frac{t^k A^k}{k!} = \left(\sum_{k=0}^{\infty} \frac{t^k A^k}{k!}\right) A = Ae^{At} = e^{At}A.\]Item (5) can be proved using Theorem 2.2 below (left as an exercise). Items (6) and (7) follow from (5) together with (1) and (2). \(\square\)

The Fundamental Theorem for LTI Systems

The preceding properties of the matrix exponential allow us to solve the unforced LTI system exactly.

Theorem 2.2 (Fundamental Theorem for LTI Systems). The unique solution of

\[x' = Ax, \qquad x(0) = x_0,\]is given by

\[x(t) = e^{At} x_0, \qquad t \in \mathbb{R}.\]Proof. We first verify that \(x(t) = e^{At}x_0\) is indeed a solution. By Proposition 2.1(2),

\[x'(t) = Ae^{At}x_0 = Ax(t),\]and by Proposition 2.1(1), \(x(0) = e^0 x_0 = I x_0 = x_0\). To prove uniqueness, suppose \(y(t)\) is any other solution defined on some interval \(I\) containing \(0\), satisfying \(y'(t) = Ay(t)\) and \(y(0) = x_0\). Define

\[z(t) = e^{-At} y(t).\]By Proposition 2.1(1) and (2), we have \(z(0) = y(0) = x_0\) and

\[z'(t) = -Ae^{-At}y(t) + e^{-At}Ay(t) = -e^{-At}Ay(t) + e^{-At}Ay(t) = 0, \qquad t \in I.\]It follows that \(z(t) = x_0\) is constant, and therefore \(y(t) = e^{At}x_0\) for all \(t \in I\). \(\square\)

Solutions to LTI Systems with Input

When a control input is present, the solution is given by the variation of constants (or Duhamel) formula.

Corollary 2.3 (Solutions to LTI Systems). Given a control input \(u \colon [0,\infty) \to \mathbb{R}^k\), the unique solution to the LTI system

\[x' = Ax + Bu, \qquad y = Cx + Du\]with initial condition \(x(0) = x_0 \in \mathbb{R}^n\) is given by

\[x(t) = e^{At}x_0 + \int_0^t e^{A(t-\tau)} Bu(\tau)\,d\tau, \tag{2.1a}\]\[y(t) = Ce^{At}x_0 + \int_0^t Ce^{A(t-\tau)}Bu(\tau)\,d\tau + Du(t). \tag{2.1b}\]Proof. One can directly verify that (2.1a) satisfies the state equation and initial condition, and then invoke the uniqueness argument from the proof of Theorem 2.2. Alternatively, (2.1a) can be derived directly from Theorem 2.2 by the method of variation of constants. The output formula (2.1b) then follows by substituting (2.1a) into the output equation. \(\square\)

The formula (2.1a) has a natural interpretation: the first term \(e^{At}x_0\) is the free response, describing how the initial state evolves under the unforced dynamics, while the integral term is the forced response (or convolution), capturing the effect of the input accumulated over time. In particular, the quantity \(Ce^{At}\) in (2.1b) is the impulse response of the output due to the initial state, and the kernel \(Ce^{A(t-\tau)}B\) is the system’s impulse response matrix.

Computing Matrix Exponentials

Given \(A \in \mathbb{R}^{n \times n}\), how does one compute \(e^{At}\) in practice? For numerical work, one uses a computer algebra system. For example, the following MATLAB script computes \(e^{At}\) symbolically for \(A = \begin{bmatrix} 1 & 1 \\ 0 & 1 \end{bmatrix}\):

A = [1 1; 0 1];

syms t;

Q = expm(A*t);

The result is

\[e^{At} = \begin{bmatrix} e^t & te^t \\ 0 & e^t \end{bmatrix}.\]How to compute matrix exponentials analytically is an important theoretical question. A general and systematic approach uses the Jordan normal form. Every square matrix \(A\) is similar to a block diagonal matrix

\[J = \begin{bmatrix} J_1 & 0 & \cdots & 0 \\ 0 & J_2 & \ddots & \vdots \\ \vdots & \ddots & \ddots & 0 \\ 0 & \cdots & 0 & J_k \end{bmatrix},\]where each Jordan block \(J_i\) is a \(k_i \times k_i\) upper-bidiagonal matrix of the form

\[J_i = \begin{bmatrix} \lambda_i & 1 & 0 & \cdots & 0 \\ 0 & \lambda_i & \ddots & \ddots & \vdots \\ \vdots & \ddots & \ddots & \ddots & 0 \\ 0 & \ddots & \ddots & \lambda_i & 1 \\ 0 & 0 & \cdots & 0 & \lambda_i \end{bmatrix}_{k_i \times k_i},\]and \(\lambda_i\) is a (possibly complex) eigenvalue of \(A\). The exponential of the full Jordan form is block diagonal:

\[e^{Jt} = \begin{bmatrix} e^{J_1 t} & 0 & \cdots & 0 \\ 0 & e^{J_2 t} & \ddots & \vdots \\ \vdots & \ddots & \ddots & 0 \\ 0 & \cdots & 0 & e^{J_p t} \end{bmatrix},\]and the exponential of a single Jordan block is given explicitly by

\[e^{J_i t} = e^{\lambda_i t} \begin{bmatrix} 1 & t & \frac{t^2}{2!} & \cdots & \frac{t^{k_i-1}}{(k_i-1)!} \\ 0 & 1 & \ddots & \ddots & \vdots \\ \vdots & \ddots & \ddots & \ddots & \frac{t^2}{2!} \\ 0 & \ddots & \ddots & 1 & t \\ 0 & 0 & \cdots & 0 & 1 \end{bmatrix}.\]The procedure for computing \(e^{At}\) is thus as follows. First, find an invertible matrix \(P\) such that \(P^{-1}AP = J\) is in Jordan normal form. Second, compute \(e^{Jt}\) using the block formula above. Third, use Proposition 2.1(3) to obtain \(e^{At} = P e^{Jt} P^{-1}\).

Structure of the Matrix Exponential

One important consequence of the Jordan normal form computation is the following structural result.

Corollary 2.4. Each entry of \(e^{At}\) is a linear combination of terms of the form

\[t^k e^{\alpha t} \cos \beta t \qquad \text{or} \qquad t^k e^{\alpha t} \sin \beta t,\]where \(\lambda = \alpha + j\beta\) is an eigenvalue of \(A\) and \(k\) is a non-negative integer. Consequently, if all eigenvalues of \(A\) have strictly negative real parts, then \(e^{At} \to 0\) as \(t \to \infty\) (entry-wise).

This corollary has profound implications for stability: the long-term behavior of the free response \(x(t) = e^{At}x_0\) is entirely governed by the eigenvalues of \(A\). If every eigenvalue has negative real part, the state decays to zero regardless of the initial condition; if any eigenvalue has positive real part, there exist initial conditions for which the state grows without bound.

Controllability

Controllability

The Controllability Question

Consider the LTI system

\[x' = Ax + Bu, \qquad x \in \mathbb{R}^n, \quad u \in \mathbb{R}^k,\]which we denote simply by \((A, B)\), omitting the output equation for now. From Lecture 2, we know that given a control input \(u(t)\) and an initial condition \(x_0\), the unique solution is

\[x(t) = e^{At}x_0 + \int_0^t e^{A(t-\tau)}Bu(\tau)\,d\tau.\]Controllability asks a fundamental question about the capabilities of this system: given any initial state \(x_0\) and any desired final state \(x_1\), can we always find a control input \(u(t)\) that steers the system from \(x_0\) to \(x_1\) in finite time? The answer depends on the structure of the matrices \(A\) and \(B\), and characterizing exactly when such steering is possible is the central problem of this lecture.

Definition 3.1. The LTI system \((A, B)\) is said to be controllable if for any initial state \(x_0 \in \mathbb{R}^n\), any final state \(x_1 \in \mathbb{R}^n\), and any time \(t_1 > 0\), there exists an input \(u \colon [0, t_1] \to \mathbb{R}^k\) such that the solution satisfies \(x(0) = x_0\) and \(x(t_1) = x_1\).

The Main Controllability Theorem

The following theorem provides four equivalent characterizations of controllability, each offering a different perspective and practical utility.

Theorem 3.2 (Controllability). The following statements are equivalent:

(1) The LTI system \((A, B)\) is controllable.

(2) The controllability Gramian

\[W(t) = \int_0^t e^{A\tau} BB^T e^{A^T \tau}\,d\tau\]is positive definite for all \(t > 0\).

(3) (Kalman’s rank condition) The controllability matrix

\[\mathcal{C}(A,B) = \begin{bmatrix} B & AB & \cdots & A^{n-1}B \end{bmatrix}\]has rank \(n\) (i.e., full row rank).

(4) (Popov-Belevitch-Hautus test) The matrix

\[\begin{bmatrix} A - \lambda I & B \end{bmatrix}\]has rank \(n\) for every \(\lambda \in \mathbb{C}\).

Proof. We prove the equivalences in the order \((2) \Rightarrow (1) \Rightarrow (2) \Rightarrow (3) \Leftrightarrow (2)\), leaving the PBH test to Lecture 4.

\((2) \Rightarrow (1)\): Suppose \(W(t)\) is positive definite for all \(t > 0\). For any \(x_0, x_1 \in \mathbb{R}^n\) and \(t_1 > 0\), define the control input

\[u(t) = -B^T e^{A^T(t_1-t)} W^{-1}(t_1) \left[e^{At_1}x_0 - x_1\right].\]Substituting into the solution formula and recalling the definition of \(W(t_1)\),

\[\begin{aligned} x(t_1) &= e^{At_1}x_0 + \int_0^{t_1} e^{A(t_1-\tau)} B \left[-B^T e^{A^T(t_1-\tau)} W^{-1}(t_1)(e^{At_1}x_0 - x_1)\right] d\tau \\ &= e^{At_1}x_0 - \left[\int_0^{t_1} e^{A(t_1-\tau)} BB^T e^{A^T(t_1-\tau)}\,d\tau\right] W^{-1}(t_1)(e^{At_1}x_0 - x_1) \\ &= e^{At_1}x_0 - W(t_1)W^{-1}(t_1)(e^{At_1}x_0 - x_1) = x_1. \end{aligned}\]Hence \((A,B)\) is controllable.

\((1) \Rightarrow (2)\): Suppose that \(W(t_1)\) is not positive definite for some \(t_1 > 0\). Since \(W(t_1)\) is positive semi-definite by definition, there exists a nonzero vector \(v \in \mathbb{R}^n\) such that

\[v^T W(t_1) v = \int_0^{t_1} \|v^T e^{A\tau} B\|^2\,d\tau = 0,\]which implies \(v^T e^{A\tau} B = 0\) for all \(\tau \in [0, t_1]\). Because \((A,B)\) is controllable, there exists an input \(u(\cdot)\) on \([0, t_1]\) steering \(x(0) = e^{-At_1}v\) to \(x(t_1) = 0\), which means

\[0 = e^{At_1}(e^{-At_1}v) + \int_0^{t_1} e^{A(t_1-\tau)}Bu(\tau)\,d\tau = v + \int_0^{t_1} e^{A(t_1-\tau)}Bu(\tau)\,d\tau.\]Left-multiplying by \(v^T\) and using \(v^T e^{A\tau}B = 0\),

\[0 = v^T v + \int_0^{t_1} v^T e^{A(t_1-\tau)}Bu(\tau)\,d\tau = \|v\|^2,\]so \(v = 0\), a contradiction. Therefore \(W(t)\) is positive definite for all \(t > 0\).

\((2) \Rightarrow (3)\): We require the following lemma.

Lemma 3.3. Let \(A \in \mathbb{R}^{n \times n}\). There exist scalar functions \(\alpha_0(t), \alpha_1(t), \ldots, \alpha_{n-1}(t)\) such that

\[e^{At} = \sum_{i=0}^{n-1} \alpha_i(t) A^i, \qquad \forall t \in \mathbb{R}.\]Proof of Lemma. By the Cayley-Hamilton theorem, \(A\) satisfies its own characteristic polynomial:

\[A^n + a_1 A^{n-1} + a_2 A^{n-2} + \cdots + a_{n-1}A + a_n I = 0,\]where \(P(\lambda) = \lambda^n + a_1 \lambda^{n-1} + \cdots + a_{n-1}\lambda + a_n\) is the characteristic polynomial of \(A\). It follows that every power \(A^k\) for \(k \geq n\) can be written as a linear combination of \(I, A, A^2, \ldots, A^{n-1}\). Writing \(A^k = \sum_{i=0}^{n-1} b_i(k) A^i\), we obtain

\[e^{At} = \sum_{k=0}^{\infty} \frac{t^k}{k!} A^k = \sum_{k=0}^{\infty} \frac{t^k}{k!} \sum_{i=0}^{n-1} b_i(k) A^i = \sum_{i=0}^{n-1} \left(\sum_{k=0}^{\infty} \frac{t^k}{k!} b_i(k)\right) A^i = \sum_{i=0}^{n-1} \alpha_i(t) A^i,\]where we define \(\alpha_i(t) = \sum_{k=0}^{\infty} \frac{t^k}{k!} b_i(k)\). \(\square\)

Returning to \((2) \Rightarrow (3)\): suppose that \(\mathrm{rank}\,[B\ AB\ \cdots\ A^{n-1}B] < n\). Then there exists a nonzero \(v \in \mathbb{R}^n\) such that \(v^T [B\ AB\ \cdots\ A^{n-1}B] = 0\), which means \(v^T A^i B = 0\) for all \(i = 0, 1, \ldots, n-1\). By Lemma 3.3,

\[v^T e^{At} B = \sum_{i=0}^{n-1} \alpha_i(t)\, v^T A^i B = 0, \qquad \forall t \in \mathbb{R}.\]Hence \(v^T e^{At} BB^T e^{A^Tt} v = 0\) for all \(t\), so

\[v^T W(t) v = \int_0^t v^T e^{A\tau} BB^T e^{A^T\tau} v\,d\tau = 0\]for all \(t\). This shows \(W(t)\) is not positive definite for any \(t\).

\((3) \Rightarrow (2)\): Suppose \(W(t)\) is not positive definite for some \(t > 0\). As shown in the proof of \((1) \Rightarrow (2)\), there exists a nonzero \(v\) with \(v^T e^{A\tau} B = 0\) for all \(\tau \in [0, t]\). Differentiating repeatedly with respect to \(\tau\) and evaluating at \(\tau = 0\),

\[v^T A^i B = 0, \qquad \forall i = 0, 1, 2, \ldots,\]and in particular \(v^T [B\ AB\ \cdots\ A^{n-1}B] = 0\), so the controllability matrix has rank less than \(n\). The proof of the PBH test (statement (4)) is deferred to Lecture 4. \(\square\)

The PBH Test in Practice

Remark 3.4. The Popov-Belevitch-Hautus test is commonly referred to as the PBH test. To apply it, one need only check \(\mathrm{rank}[A - \lambda I\ \ B] = n\) for eigenvalues \(\lambda\) of \(A\), because for any \(\lambda\) that is not an eigenvalue of \(A\), the matrix \(A - \lambda I\) is already invertible and hence has rank \(n\) on its own.

Examples

Example 3.5. Consider the LTI system

\[x' = \begin{bmatrix} 0 & 1 \\ 1 & 0 \end{bmatrix} x + \begin{bmatrix} 1 \\ 1 \end{bmatrix} u.\]The controllability matrix is

\[\mathcal{C}(A,B) = \begin{bmatrix} B & AB \end{bmatrix} = \begin{bmatrix} 1 & 1 \\ 1 & 1 \end{bmatrix},\]which has rank 1. Hence \((A, B)\) is not controllable. We can confirm this with the PBH test. The eigenvalues of \(A\) are \(\lambda = -1\) and \(\lambda = 1\). For \(\lambda = -1\),

\[\mathrm{rank}[A - \lambda I \quad B] = \mathrm{rank}\begin{bmatrix} 1 & 1 & 1 \\ 1 & 1 & 1 \end{bmatrix} = 1 < 2.\]The matrix fails to have full rank, confirming that \((A,B)\) is not controllable.

Example 3.6 (Coupled cart-spring system). A coupled cart-spring system consists of two masses \(m_1 = 1\) and \(m_2 = 1/2\) connected by a spring with constant \(k = 1\). The equations of motion are

\[m_1 \ddot{y}_1 = u_1 + k(y_2 - y_1), \qquad m_2 \ddot{y}_2 = u_2 + k(y_1 - y_2).\]Introducing the state vector \(x = (x_1, x_2, x_3, x_4)^T = (y_1, \dot{y}_1, y_2, \dot{y}_2)^T\), the system takes the form \(x' = Ax + Bu\) with

\[A = \begin{bmatrix} 0 & 1 & 0 & 0 \\ -1 & 0 & 1 & 0 \\ 0 & 0 & 0 & 1 \\ 2 & 0 & -2 & 0 \end{bmatrix}, \qquad B = \begin{bmatrix} 0 & 0 \\ 1 & 0 \\ 0 & 0 \\ 0 & 2 \end{bmatrix}.\]The controllability matrix \(\mathcal{C}(A,B) = [B\ AB\ A^2B\ A^3B]\) is the \(4 \times 8\) matrix

\[\mathcal{C}(A,B) = \begin{bmatrix} 0 & 0 & 1 & 0 & 0 & 0 & -1 & 2 \\ 1 & 0 & 0 & 0 & -1 & 2 & 0 & 0 \\ 0 & 0 & 0 & 2 & 0 & 0 & 2 & -4 \\ 0 & 2 & 0 & 0 & 2 & -4 & 0 & 0 \end{bmatrix}.\]This matrix has rank 4, so \((A,B)\) is controllable. When both carts are independently actuated, the system can be steered to any desired configuration.

Example 3.7. Consider the same cart-spring system but with only a single input \(u\) applied to both carts simultaneously (Figure 3.2). The question of whether this single-input system remains controllable is an important practical one — can a single actuator still achieve full control? The analysis follows the same procedure, and the answer depends on the specific structure of \(B\) in that configuration.

Controllability (continued)

Controllability under State Transformation

Before proving the PBH test, we establish that controllability is a property intrinsic to the system and not an artifact of the particular choice of state coordinates. Consider the state transformation \(z = Px\), where \(P \in \mathbb{R}^{n \times n}\) is a non-singular matrix. Differentiating and substituting the state equation,

\[z' = Px' = PAx + PBu = PAP^{-1}z + PBu.\]We obtain the transformed LTI system \((PAP^{-1}, PB)\).

Theorem 4.8 (Controllability is invariant under state transformation). Let \(P \in \mathbb{R}^{n \times n}\) be non-singular. Then \((A, B)\) is controllable if and only if \((PAP^{-1}, PB)\) is controllable.

Proof. Observe that

\[\begin{aligned} \mathcal{C}(PAP^{-1}, PB) &= \begin{bmatrix} PB & PAP^{-1} \cdot PB & \cdots & (PAP^{-1})^{n-1} PB \end{bmatrix} \\ &= \begin{bmatrix} PB & PAB & \cdots & PA^{n-1}B \end{bmatrix} \\ &= P\begin{bmatrix} B & AB & \cdots & A^{n-1}B \end{bmatrix} = P\,\mathcal{C}(A,B). \end{aligned}\]Since \(P\) is non-singular, left-multiplying by \(P\) does not change the rank. The conclusion follows from Kalman’s rank condition. \(\square\)

Remark 4.9. The above proof also shows that \(\mathcal{C}(A,B)\) and \(\mathcal{C}(PAP^{-1}, PB)\) have the same rank for any non-singular \(P\).

Controllable Decomposition

When \((A,B)\) is not controllable, the state space can be decomposed into a part that is reachable by the input and a part that evolves freely and cannot be influenced. This decomposition is formalized as follows.

Suppose \((A,B)\) is not controllable, so the controllability matrix \(\mathcal{C}(A,B) = [B\ AB\ \cdots\ A^{n-1}B]\) has rank \(n_1 < n\). Let \(v_1, v_2, \ldots, v_{n_1}\) be \(n_1\) linearly independent columns of \(\mathcal{C}(A,B)\), and choose additional vectors \(v_{n_1+1}, \ldots, v_n\) so that \(P^{-1} = [v_1\ v_2\ \cdots\ v_n]\) is invertible. Introduce the state transformation \(z = Px\).

A key structural observation is that the image of the controllability matrix is invariant under \(A\): that is, \(A \cdot \mathrm{Im}(\mathcal{C}(A,B)) \subseteq \mathrm{Im}(\mathcal{C}(A,B))\). This follows from the Cayley-Hamilton theorem, since every column of \(A^n B\) lies in the span of the columns of \([B\ AB\ \cdots\ A^{n-1}B]\). Consequently, in the new coordinates, \(PAP^{-1}\) and \(PB\) take the block forms

\[PAP^{-1} = \begin{pmatrix} A_c & A_{12} \\ 0 & A_u \end{pmatrix}, \qquad PB = \begin{pmatrix} B_c \\ 0 \end{pmatrix}, \tag{4.1, 4.2}\]where \(A_c \in \mathbb{R}^{n_1 \times n_1}\) and \(B_c \in \mathbb{R}^{n_1 \times k}\). Writing the new state as \(z = (z_1^T, z_2^T)^T\) with \(z_1 \in \mathbb{R}^{n_1}\) and \(z_2 \in \mathbb{R}^{n-n_1}\), the system in the new coordinates becomes

\[\begin{align} z_1' &= A_c z_1 + A_{12} z_2 + B_c u, \tag{4.3a} \\ z_2' &= A_u z_2. \tag{4.3b} \end{align}\]The subsystem (4.3b) evolves autonomously, entirely independent of the input \(u\). No matter what control is applied, \(z_2\) cannot be influenced. The subsystem (4.3a) is driven by the input, and the pair \((A_c, B_c)\) is called the controllable part of \((A,B)\).

We claim that \((A_c, B_c)\) is itself controllable. To see this, compute the controllability matrix of \((PAP^{-1}, PB)\) using the block structure:

\[\mathcal{C}(PAP^{-1}, PB) = \begin{bmatrix} B_c & A_c B_c & A_c^2 B_c & \cdots & A_c^{n-1}B_c \\ 0 & 0 & 0 & \cdots & 0 \end{bmatrix}.\]This matrix clearly has the same rank as \(\mathcal{C}(A_c, B_c) = [B_c\ A_c B_c\ \cdots\ A_c^{n_1-1}B_c]\). By Remark 4.9, the rank of \(\mathcal{C}(PAP^{-1}, PB)\) equals the rank of \(\mathcal{C}(A,B)\), which is \(n_1\). Therefore \(\mathcal{C}(A_c, B_c)\) has rank \(n_1\), which is full row rank for a system of dimension \(n_1\). By Kalman’s rank condition, \((A_c, B_c)\) is controllable.

Theorem 4.10 (Controllable Decomposition). If \((A,B)\) is not controllable, then there exists a non-singular matrix \(P\) such that

\[PAP^{-1} = \begin{bmatrix} A_c & A_{12} \\ 0 & A_u \end{bmatrix}, \qquad PB = \begin{bmatrix} B_c \\ 0 \end{bmatrix},\]where \((A_c, B_c)\) is controllable (provided \(n_1 > 0\).

Example of Controllable Decomposition

Example 4.11. Consider the LTI system

\[x' = \begin{bmatrix} 1 & 1 & 0 \\ 0 & 1 & 0 \\ 0 & 1 & 1 \end{bmatrix} x + \begin{bmatrix} 0 & 1 \\ 1 & 0 \\ 0 & 1 \end{bmatrix} u.\]The controllability matrix is

\[\mathcal{C}(A,B) = \begin{bmatrix} B & AB & A^2B \end{bmatrix} = \begin{bmatrix} 0 & 1 & 1 & 1 & 2 & 1 \\ 1 & 0 & 1 & 0 & 1 & 0 \\ 0 & 1 & 1 & 1 & 2 & 1 \end{bmatrix},\]which has rank 2. Hence \((A,B)\) is not controllable. To find the controllable decomposition, pick two linearly independent columns of \(\mathcal{C}(A,B)\) spanning its column space and one additional vector:

\[v_1 = \begin{bmatrix} 0 \\ 1 \\ 0 \end{bmatrix}, \quad v_2 = \begin{bmatrix} 1 \\ 0 \\ 1 \end{bmatrix}, \quad v_3 = \begin{bmatrix} 1 \\ 0 \\ 0 \end{bmatrix}.\]Then

\[P^{-1} = \begin{bmatrix} 0 & 1 & 1 \\ 1 & 0 & 0 \\ 0 & 1 & 0 \end{bmatrix} \implies P = \begin{bmatrix} 0 & 1 & 0 \\ 0 & 0 & 1 \\ 1 & 0 & -1 \end{bmatrix}.\]Computing the transformed matrices,

\[PAP^{-1} = \begin{bmatrix} 1 & 0 & 0 \\ 1 & 1 & 0 \\ 0 & 0 & 1 \end{bmatrix}, \qquad PB = \begin{bmatrix} 1 & 0 \\ 0 & 1 \\ 0 & 0 \end{bmatrix}.\]The controllable part is \((A_c, B_c) = \left(\begin{bmatrix}1 & 0 \\ 1 & 1\end{bmatrix}, \begin{bmatrix}1 & 0 \\ 0 & 1\end{bmatrix}\right)\), and it is straightforward to verify that this pair is indeed controllable by checking that its controllability matrix has rank 2.

Proof of the PBH Test

We can now prove the PBH test, restated here for completeness.

Theorem 4.12 (PBH Test). Let \(A \in \mathbb{R}^{n \times n}\) and \(B \in \mathbb{R}^{n \times k}\). The pair \((A,B)\) is controllable if and only if

\[\mathrm{rank}\begin{bmatrix} A - \lambda I & B \end{bmatrix} = n \qquad \text{for all } \lambda \in \mathbb{C}.\]Proof. We prove both directions.

(\(\Rightarrow\) contrapositive): Suppose \([A - \lambda I\ \ B]\) does not have full rank for some \(\lambda \in \mathbb{C}\). Then there exists a nonzero complex vector \(v\) such that

\[v^T [A - \lambda I \quad B] = \begin{bmatrix} v^T A - \lambda v^T & v^T B \end{bmatrix} = 0,\]so \(v^T A = \lambda v^T\) (meaning \(v\) is a left eigenvector of \(A\) with eigenvalue \(\lambda\) and \(v^T B = 0\). It follows that

\[v^T A^i B = \lambda^i v^T B = 0, \qquad i = 0, 1, \ldots, n-1,\]so \(v^T [B\ AB\ \cdots\ A^{n-1}B] = 0\). The controllability matrix fails to have full row rank, so \((A,B)\) is not controllable by Kalman’s rank condition.

(\(\Leftarrow\) contrapositive): Now suppose \((A,B)\) is not controllable. By the Controllable Decomposition Theorem (Theorem 4.10), there exists a non-singular \(P\) such that

\[PAP^{-1} = \begin{bmatrix} A_c & A_{12} \\ 0 & A_u \end{bmatrix}, \qquad PB = \begin{bmatrix} B_c \\ 0 \end{bmatrix}.\]Let \(\lambda\) be an eigenvalue of \(A_u\) and let \(v^T\) be the corresponding left eigenvector, so \(v^T A_u = \lambda v^T\). Then

\[\begin{bmatrix} 0 & v^T \end{bmatrix} \begin{bmatrix} PAP^{-1} - \lambda I & PB \end{bmatrix} = \begin{bmatrix} 0 & v^T \end{bmatrix} \begin{bmatrix} A_c - \lambda I_{n_1} & A_{12} & B_c \\ 0 & A_u - \lambda I_{n-n_1} & 0 \end{bmatrix} = \begin{bmatrix} 0 & v^T A_u - \lambda v^T & 0 \end{bmatrix} = 0.\]Setting \(w = [0\ v^T] P\), we have \(w \neq 0\) and

\[w [A - \lambda I \quad B] = \begin{bmatrix} 0 & v^T \end{bmatrix} P [A - \lambda I \quad B] = \begin{bmatrix} 0 & v^T \end{bmatrix} [PAP^{-1} - \lambda I \quad PB] P_{\mathrm{aug}} = 0,\]where the last step uses the block calculation above (right-multiplying \([PAP^{-1} - \lambda I\quad PB]\) by \(P\) in the state part). Hence \(\mathrm{rank}[A - \lambda I\ \ B] < n\). The proof is complete. \(\square\)

The PBH test has an elegant interpretation: the system \((A,B)\) is uncontrollable if and only if there exists a left eigenvector of \(A\) that is orthogonal to the range of \(B\). In other words, if the input cannot excite some eigendirection of the dynamics, that mode is forever inaccessible to control.

Observability and Transfer Functions

Observability

The preceding lectures established the theory of controllability, which concerns whether a system’s state can be driven to any desired value by choosing an appropriate input. The complementary concept, observability, addresses the dual question: can the internal state of the system be reconstructed from external measurements? This is of central practical importance, since the state \( x(t) \) is often not directly accessible to measurement; only the output \( y(t) \) is observed.

Consider the LTI system \( (A, B, C, D) \), that is,

\[\begin{aligned} x'(t) &= Ax(t) + Bu(t) \\ y(t) &= Cx(t) + Du(t), \end{aligned}\]where \( x(t) \in \mathbb{R}^n \), \( u(t) \in \mathbb{R}^k \), and \( y(t) \in \mathbb{R}^m \).

Definition 5.1 (Observability). The LTI system is said to be observable if, for any \( t_1 > 0 \), one can uniquely determine \( x(0) \) from the input \( u : [0, t_1] \to \mathbb{R}^k \) and the output \( y : [0, t_1] \to \mathbb{R}^m \).

To understand what this definition entails, recall the variation-of-constants formula for the output:

\[ y(t) = Ce^{At}x(0) + \int_0^t Ce^{A(t-\tau)}Bu(\tau)\,d\tau + Du(t). \]Rearranging, we isolate the term involving the unknown initial condition:

\[ Ce^{At}x(0) = y(t) - \int_0^t Ce^{A(t-\tau)}Bu(\tau)\,d\tau - Du(t). \]The right-hand side is a known signal whenever \( u(\cdot) \) and \( y(\cdot) \) are known. Hence the observability problem reduces to determining \( x(0) \) from the signal \( Ce^{At}x(0) \) on the interval \( [0, t_1] \). This is entirely equivalent to observability of the autonomous system

\[\begin{aligned} x'(t) &= Ax(t) \\ y(t) &= Cx(t), \end{aligned}\]and therefore observability of \( (A, B, C, D) \) depends only on the pair \( (A, C) \). We accordingly say that the pair \( (A, C) \) is observable.

The Observability Gramian

The first characterization of observability uses an integral criterion analogous to the controllability Gramian.

Theorem 5.2. The pair \( (A, C) \) is observable if and only if the observability Gramian

\[ W_o(t) = \int_0^t e^{A^T\tau} C^T C e^{A\tau}\,d\tau \]is positive definite for all \( t > 0 \).

Proof. We first prove the sufficiency direction. Suppose \( W_o(t) \) is positive definite for all \( t > 0 \). Left-multiplying both sides of \( Ce^{A\tau}x(0) = y(\tau) \) by \( e^{A^T\tau}C^T \) and integrating over \( [0, t] \) gives

\[ \int_0^t e^{A^T\tau}C^T C e^{A\tau}x(0)\,d\tau = \int_0^t e^{A^T\tau}C^T y(\tau)\,d\tau, \]that is,

\[ W_o(t)\,x(0) = \int_0^t e^{A^T\tau}C^T y(\tau)\,d\tau. \]Since \( W_o(t) \) is positive definite (hence invertible), we can uniquely recover the initial condition as

\[ x(0) = W_o^{-1}(t)\int_0^t e^{A^T\tau}C^T y(\tau)\,d\tau. \]This establishes observability.

For the necessity direction, suppose that \( W_o(t_1) \) is not positive definite for some \( t_1 > 0 \). Then there exists a nonzero vector \( v \neq 0 \) such that \( v^T W_o(t_1)v = 0 \), which implies

\[ \int_0^{t_1} v^T e^{A^T\tau}C^T C e^{A\tau}v\,d\tau = \int_0^{t_1} \|Ce^{A\tau}v\|^2\,d\tau = 0. \]It follows that \( Ce^{At}v = 0 \) for all \( t \in [0, t_1] \). Now consider two initial conditions \( x(0) = 0 \) and \( x(0) = v \). Both produce the identical output \( y(t) = Ce^{At}x(0) = 0 \) for \( t \in [0, t_1] \). Since two distinct initial conditions produce indistinguishable outputs, \( x(0) \) cannot be uniquely determined and the pair \( (A,C) \) is not observable. \( \square \)

The observability Gramian is structurally parallel to the controllability Gramian

\[ W_c(t) = \int_0^t e^{A\tau}BB^T e^{A^T\tau}\,d\tau, \]and the analogy between the two runs deeper than mere structural resemblance, as the next theorem reveals.

Duality of Observability and Controllability

Theorem 5.3 (Duality). The pair \( (A, C) \) is observable if and only if the pair \( (A^T, C^T) \) is controllable.

Proof. By Theorem 5.2, \( (A, C) \) is observable if and only if

\[ \int_0^t e^{A^T\tau}C^T C e^{A\tau}\,d\tau \]is positive definite for all \( t > 0 \). Comparing with the controllability Gramian applied to the pair \( (A^T, C^T) \), namely

\[ \int_0^t e^{A^T\tau}(C^T)(C^T)^T e^{(A^T)^T\tau}\,d\tau = \int_0^t e^{A^T\tau}C^T C e^{A\tau}\,d\tau, \]the condition for observability of \( (A, C) \) is exactly the condition for controllability of \( (A^T, C^T) \). \( \square \)

This elegant duality allows the rich theory of controllability to be immediately translated into results about observability, simply by transposing the relevant matrices.

Equivalent Conditions for Observability

Theorem 5.4 (Observability — equivalent conditions). The following statements are equivalent:

The pair \( (A, C) \) is observable.

The observability Gramian \( W_o(t) = \int_0^t e^{A^T\tau}C^T Ce^{A\tau}\,d\tau \) is positive definite for all \( t > 0 \).

(Kalman’s rank condition) The observability matrix

has rank \( n \), i.e., full column rank.

- (PBH test) The matrix

has rank \( n \) for every \( \lambda \in \mathbb{C} \).

The proof of the equivalences follows by duality from the analogous controllability theorem: applying the controllability rank and PBH conditions to the transposed pair \( (A^T, C^T) \) and translating back yields conditions (3) and (4) above.

Example 5.5. Consider

\[ A = \begin{bmatrix} 1 & 0 & 0 \\ 0 & 0 & 0 \\ 0 & 0 & -1 \end{bmatrix}, \quad C = [c_1 \; c_2 \; c_3]. \]We ask: for which values of \( c_1, c_2, c_3 \) is \( (A, C) \) observable? The observability matrix is

\[ \mathcal{O}(A, C) = \begin{bmatrix} C \\ CA \\ CA^2 \end{bmatrix} = \begin{bmatrix} c_1 & c_2 & c_3 \\ c_1 & 0 & -c_3 \\ c_1 & 0 & c_3 \end{bmatrix}. \]For this \( 3 \times 3 \) matrix to have rank 3, its determinant must be nonzero. Expanding along the second column gives

\[ \det(\mathcal{O}(A,C)) = -c_2 \det\begin{bmatrix} c_1 & -c_3 \\ c_1 & c_3 \end{bmatrix} = -c_2(2c_1 c_3) = -2c_1 c_2 c_3. \]Hence \( (A, C) \) is observable if and only if \( c_1 c_2 c_3 \neq 0 \); in other words, all three output coefficients must be nonzero.

Observable Decomposition

The duality between observability and controllability also produces an analog to the controllable decomposition. Suppose that \( (A, C) \) is not observable, so that the observability matrix

\[ \mathcal{O}(A, C) = \begin{bmatrix} C \\ CA \\ \vdots \\ CA^{n-1} \end{bmatrix} \]has rank \( n_1 < n \). Let \( v_1, \ldots, v_{n_1} \) be \( n_1 \) linearly independent rows of \( \mathcal{O}(A, C) \) and choose additional vectors \( v_{n_1+1}, \ldots, v_n \) so that the matrix

\[ P = \begin{bmatrix} v_1 \\ v_2 \\ \vdots \\ v_n \end{bmatrix} \]is invertible. Under the state transformation \( z = Px \), the LTI system \( (A, B, C, D) \) becomes

\[\begin{align} z'(t) &= PAP^{-1}z(t) + PBu(t) \\ y(t) &= CP^{-1}z(t) + Du(t), \end{aligned}\tag{5.1}\]where the transformed system matrix has the block structure

\[ PAP^{-1} = \begin{pmatrix} A_o & 0 \\ A_{21} & A_u \end{pmatrix} \tag{5.2} \]with the blocks partitioned according to dimensions \( n_1 \) and \( n - n_1 \), and the transformed output matrix takes the form

\[ CP^{-1} = (C_o \;\; 0). \tag{5.3} \]The pair \( (A_o, C_o) \) is called the observable part of the system. By duality, \( (A_o, C_o) \) is indeed observable whenever \( n_1 > 0 \). The crucial structural feature is that the unobservable states (those corresponding to the zero block in \( CP^{-1} \) do not appear in the output at all; no measurement can reveal their values.

Example 5.6. Consider

\[ A = \begin{bmatrix} 1 & 0 & 0 \\ 0 & 0 & 0 \\ 0 & 0 & -1 \end{bmatrix}, \quad C = [1 \; 0 \; 1]. \]The observability matrix is

\[ \mathcal{O}(A, C) = \begin{bmatrix} 1 & 0 & 1 \\ 1 & 0 & -1 \\ 1 & 0 & 1 \end{bmatrix}, \]which has rank 2 (since row 3 equals row 1). We choose \( v_1 = [1\;0\;1] \), \( v_2 = [1\;0\;-1] \) (two linearly independent rows) and augment with \( v_3 = [0\;1\;0] \) to obtain the invertible matrix

\[ P = \begin{bmatrix} 1 & 0 & 1 \\ 1 & 0 & -1 \\ 0 & 1 & 0 \end{bmatrix}, \quad P^{-1} = \begin{bmatrix} \tfrac{1}{2} & \tfrac{1}{2} & 0 \\ 0 & 0 & 1 \\ \tfrac{1}{2} & -\tfrac{1}{2} & 0 \end{bmatrix}. \]Computing the transformed matrices gives

\[ PAP^{-1} = \begin{bmatrix} 0 & 1 & 0 \\ 1 & 0 & 0 \\ 0 & 0 & 0 \end{bmatrix}, \qquad CP^{-1} = [1\;0\;0]. \]The observable part is therefore

\[ (A_o, C_o) = \left(\begin{bmatrix} 0 & 1 \\ 1 & 0 \end{bmatrix},\; [1\;0]\right). \]One can verify directly that this two-dimensional pair is observable.

Transfer Functions

The preceding lectures developed state-space methods for analyzing LTI systems, working entirely in the time domain. A complementary approach operates in the frequency domain, representing signals and systems in terms of their frequency content rather than their time-domain trajectories. The central tool for frequency-domain analysis is the transfer function, which we develop rigorously via the Laplace transform.

Laplace Transforms

Consider a signal \( x : [0, \infty) \to \mathbb{R}^n \). The Laplace transform of \( x \) is defined by

\[ \hat{x}(s) = \mathcal{L}[x(t)] = \int_0^\infty x(t)e^{-st}\,dt, \]where \( s \) is a complex variable. The Laplace transform thus maps a time-domain signal to a function of the complex variable \( s \). Several standard pairs are frequently used:

\[\begin{align} \mathcal{L}[t^k] &= \frac{k!}{s^{k+1}}, \quad k = 0, 1, 2, \ldots \\ \mathcal{L}[e^{at}] &= \frac{1}{s-a} \\ \mathcal{L}[\sin(\omega t)] &= \frac{\omega}{s^2 + \omega^2} \\ \mathcal{L}[\cos(\omega t)] &= \frac{s}{s^2 + \omega^2}. \end{align}\]The transform of derivatives, which is the key property for analyzing differential equations, is given by

\[\begin{aligned} \mathcal{L}[x'(t)] &= s\hat{x}(s) - x(0) \\ \mathcal{L}[x''(t)] &= s^2\hat{x}(s) - s\,x(0) - x'(0). \end{aligned}\]The appearance of the initial condition \( x(0) \) in the first formula is crucial: it separates the effects of the initial state from the effects of the input signal.

Laplace Transform of the LTI System

Consider the LTI system \( (A, B, C, D) \):

\[\begin{aligned} x'(t) &= Ax(t) + Bu(t) \\ y(t) &= Cx(t) + Du(t). \end{aligned}\]Taking the Laplace transform of both equations and applying the derivative rule yields

\[\begin{aligned} s\hat{x}(s) - x(0) &= A\hat{x}(s) + B\hat{u}(s) \\ \hat{y}(s) &= C\hat{x}(s) + D\hat{u}(s). \end{aligned}\]Solving the first equation for \( \hat{x}(s) \) gives

\[ \hat{x}(s) = (sI - A)^{-1}x(0) + (sI - A)^{-1}B\hat{u}(s). \]Substituting into the output equation:

\[ \hat{y}(s) = \underbrace{C(sI-A)^{-1}x(0)}_{\text{zero-input response}} + \underbrace{[C(sI-A)^{-1}B + D]\hat{u}(s)}_{\text{zero-state response}}. \]The zero-state response captures the input-output relationship when the system starts from rest. Setting \( x(0) = 0 \) gives

\[ \hat{y}(s) = \underbrace{[C(sI - A)^{-1}B + D]}_{\text{transfer function}}\hat{u}(s). \]Transfer Functions

Definition 6.7 (Transfer function). The transfer function (matrix) of the LTI system \( (A, B, C, D) \) is defined by

\[ G(s) = C(sI - A)^{-1}B + D. \]Using this notation, the zero-state input-output relation in the Laplace domain is simply

\[ \hat{y}(s) = G(s)\hat{u}(s). \]To compute \( G(s) \) explicitly, one applies Cramer’s rule for matrix inversion:

\[ G(s) = C\,\frac{\text{adj}(sI - A)}{\det(sI - A)}\,B + D, \]where \( \text{adj}(\cdot) \) denotes the adjugate matrix. Since \( \det(sI - A) \) is a polynomial in \( s \) and each entry of \( \text{adj}(sI - A) \) is also a polynomial in \( s \), every entry of \( G(s) \) is a rational function of \( s \).

Example 6.8. Consider the system

\[ x' = \begin{bmatrix} 0 & 1 \\ 1 & 0 \end{bmatrix}x + \begin{bmatrix} 0 \\ 1 \end{bmatrix}u, \qquad y = [1 \; 0]x. \]Computing \( (sI - A)^{-1} \):

\[ G(s) = [1\;0]\begin{bmatrix} s & -1 \\ -1 & s \end{bmatrix}^{-1}\begin{bmatrix} 0 \\ 1 \end{bmatrix} = [1\;0]\,\frac{1}{s^2-1}\begin{bmatrix} s & 1 \\ 1 & s \end{bmatrix}\begin{bmatrix} 0 \\ 1 \end{bmatrix} = \frac{1}{s^2 - 1}. \]Example 6.9. Consider the \( n \)th-order scalar differential equation

\[ x^{(n)}(t) + b_{n-1}x^{(n-1)}(t) + \cdots + b_0 x(t) = u(t), \]with output

\[ y(t) = a_0 x(t) + a_1 x'(t) + \cdots + a_{n-1}x^{(n-1)}(t). \]Taking the Laplace transform with zero initial conditions gives \( (s^n + b_{n-1}s^{n-1} + \cdots + b_0)\hat{x}(s) = \hat{u}(s) \), and consequently

\[ \hat{y}(s) = (a_0 + a_1 s + \cdots + a_{n-1}s^{n-1})\hat{x}(s) = \frac{a_{n-1}s^{n-1} + \cdots + a_1 s + a_0}{s^n + b_{n-1}s^{n-1} + \cdots + b_0}\,\hat{u}(s). \]Hence the transfer function of this higher-order scalar system is

\[ G(s) = \frac{a_{n-1}s^{n-1} + \cdots + a_1 s + a_0}{s^n + b_{n-1}s^{n-1} + \cdots + b_0}. \]This example shows explicitly that the transfer function of a scalar system described by a linear ODE with constant coefficients is a rational function whose denominator degree equals the order of the equation.

Impulse Response

The Laplace transform connects elegantly to a time-domain representation of the input-output map. Taking the inverse Laplace transform of \( \hat{y}(s) = G(s)\hat{u}(s) \) gives

\[ y(t) = (g * u)(t), \]where \( g(t) = \mathcal{L}^{-1}[G(s)] \) and \( * \) denotes convolution. The function \( g(t) \) is the impulse response of the system.

To understand its physical meaning, consider the approximate impulse given by the pulse

\[ P_\varepsilon(t) = \begin{cases} 0 & t < 0 \\ \tfrac{1}{\varepsilon} & 0 \leq t < \varepsilon \\ 0 & t \geq \varepsilon, \end{cases} \]which has duration \( \varepsilon \), amplitude \( 1/\varepsilon \), and unit area. The Dirac delta function \( \delta(t) \) is defined as the limiting distribution \( \delta(t) = \lim_{\varepsilon \to 0} P_\varepsilon(t) \), a generalized function of zero duration, infinite amplitude, and unit area.

Definition 6.10 (Impulse response). The impulse response \( g(t) \) of the system \( (A, B, C, D) \) is the output corresponding to an impulse input with zero initial condition:

\[ g(t) = C\int_0^t e^{A(t-\tau)}B\,\delta(\tau)\,d\tau + D\delta(t) = Ce^{At}B + D\delta(t). \]The general output can then be interpreted as a superposition of impulse responses scaled by the input values:

\[\begin{aligned} y(t) &= C\int_0^t e^{A(t-\tau)}Bu(\tau)\,d\tau + Du(t) \\ &= \int_0^t g(t-\tau)u(\tau)\,d\tau \\ &= (g * u)(t). \end{aligned}\]Since \( \hat{y}(s) = G(s)\hat{u}(s) \), it follows that \( \mathcal{L}[g(t)] = G(s) \): the transfer function is precisely the Laplace transform of the impulse response.

Realizations of Transfer Functions

We have seen how to compute \( G(s) \) from a state-space model \( (A, B, C, D) \). The converse question — finding a state-space model for a prescribed transfer function — is equally important.

Definition 6.11 (Realization). An LTI system \( (A, B, C, D) \) is said to be a realization of a transfer function \( G(s) \) if

\[ C(sI - A)^{-1}B + D = G(s). \]Example 6.12 (Realizability of proper transfer functions). From Example 6.9, the nth-order system

\[ x^{(n)}(t) + b_{n-1}x^{(n-1)}(t) + \cdots + b_0 x(t) = u(t), \quad y(t) = a_0 x(t) + a_1 x'(t) + \cdots + a_{n-1}x^{(n-1)}(t) \]is a realization of

\[ G(s) = \frac{a_{n-1}s^{n-1} + \cdots + a_1 s + a_0}{s^n + b_{n-1}s^{n-1} + \cdots + b_0}. \]A natural question is whether the realization of a given transfer function is unique. One immediate source of non-uniqueness is a state transformation: any invertible change of coordinates in state space preserves the transfer function.

Proposition 6.13 (Invariance under state transformation). If \( (A, B, C, D) \) is a realization of \( G(s) \), then so is \( (PAP^{-1}, PB, CP^{-1}, D) \) for any invertible matrix \( P \).

Proof. The result follows directly from the computation

\[ (CP^{-1})(sI - PAP^{-1})^{-1}(PB) + D = CP^{-1}(P(sI-A)P^{-1})^{-1}PB + D = C(sI-A)^{-1}B + D = G(s). \quad \square \]In other words, transfer functions are invariant under state transformation. The question of whether realization is unique modulo state transformation — that is, whether every two realizations of the same transfer function are related by an invertible state transformation — leads naturally to the concept of minimality developed in the next lecture.

Realizations and Frequency Response

Realizations of Transfer Functions

In the previous lecture we introduced the notion of a realization and observed that any proper rational function arises as the transfer function of some LTI system. This lecture develops that idea systematically. We characterize precisely which transfer functions are realizable, explain a canonical construction that produces a realization from any proper rational function, and then address the fundamental question of uniqueness: when are two realizations related by a state transformation?

Proper Rational Functions and Realizability

Definition 7.1. A rational function is a ratio of two polynomials, \( r(s) = p(s)/q(s) \). It is called strictly proper if \( \deg(p) < \deg(q) \), and proper if \( \deg(p) \leq \deg(q) \). A rational function matrix is (strictly) proper if every entry is (strictly) proper.

Theorem 7.2 (Realizability). A transfer function \( G(s) \) is realizable (i.e., it is the transfer function of some LTI system) if and only if \( G(s) \) is proper.

Proof. We first show necessity. If \( G(s) = C(sI-A)^{-1}B + D \), then

\[ G(s) = C\,\frac{\text{adj}(sI - A)}{\det(sI - A)}\,B + D. \]Since each entry of \( \text{adj}(sI - A) \) is a polynomial of degree at most \( n-1 \) while \( \det(sI - A) \) is a polynomial of degree \( n \), the term \( C(sI-A)^{-1}B \) is strictly proper, and hence \( G(s) \) is proper.

For sufficiency, we construct realizations explicitly. Every proper \( G(s) \) can be decomposed as \( G(s) = G_{sp}(s) + D \), where \( \lim_{s \to \infty} G(s) = D \) and \( G_{sp}(s) \) is strictly proper.

SISO case. A general proper SISO transfer function takes the form

\[ G(s) = \frac{a_{n-1}s^{n-1} + a_{n-2}s^{n-2} + \cdots + a_0}{s^n + b_{n-1}s^{n-1} + \cdots + b_1 s + b_0} + D. \]One verifies directly that the nth-order system

\[\begin{cases} x^{(n)}(t) + b_{n-1}x^{(n-1)}(t) + b_{n-2}x^{(n-2)}(t) + \cdots + b_0 x(t) = u(t) \\ y(t) = a_0 x(t) + a_1 x'(t) + \cdots + a_{n-1}x^{(n-1)}(t) + Du(t) \end{cases}\]realizes \( G(s) \). Writing this as a first-order system with state vector \( x = [x', x'', \ldots, x^{(n-1)}]^T \) yields the companion form matrices:

\[ A = \begin{bmatrix} 0 & 1 & 0 & \cdots & 0 \\ 0 & 0 & 1 & \cdots & 0 \\ \vdots & \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & 0 & \cdots & 1 \\ -b_0 & -b_1 & -b_2 & \cdots & -b_{n-1} \end{bmatrix}, \quad B = \begin{bmatrix} 0 \\ 0 \\ \vdots \\ 0 \\ 1 \end{bmatrix}, \quad C = [a_0 \; a_1 \; \cdots \; a_{n-1}]. \]MIMO case. A general proper MIMO transfer function of dimension \( m \times k \) can be written as \( G(s) = G_{sp}(s) + D \), where

\[ G_{sp}(s) = \frac{A_{r-1}s^{r-1} + A_{r-2}s^{r-2} + \cdots + A_1 s + A_0}{s^r + b_{r-1}s^{r-1} + \cdots + b_1 s + b_0} \]with scalar coefficients \( b_0, \ldots, b_{r-1} \) and \( m \times k \) matrix coefficients \( A_0, \ldots, A_{r-1} \). A realization \( (A, B, C, D) \) is given by the block companion form

\[ A = \begin{bmatrix} 0 & I & 0 & \cdots & 0 \\ 0 & 0 & I & \cdots & 0 \\ \vdots & \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & 0 & \cdots & I \\ -b_0 I & -b_1 I & -b_2 I & \cdots & -b_{r-1}I \end{bmatrix}, \quad B = \begin{bmatrix} 0 \\ 0 \\ \vdots \\ 0 \\ I \end{bmatrix}, \quad C = [A_0 \; A_1 \; \cdots \; A_{r-1}], \]where \( 0 \) and \( I \) are \( k \times k \) matrices, \( A \) is \( rk \times rk \), \( B \) is \( rk \times k \), and \( C \) is \( m \times rk \). \( \square \)

Remark 7.3. The realizations constructed in the proof above are called controllable canonical realizations. It can be verified that they are indeed controllable.

Remark 7.4. Since any proper transfer function decomposes as \( G(s) = G_{sp}(s) + D \), we have

\[ \lim_{s \to \infty} G(s) = \lim_{s \to \infty} G_{sp}(s) + D = D. \]This gives a direct method to read off the \( D \) matrix: it is the limit of the transfer function as \( s \to \infty \).

Example 7.5. Consider the strictly proper SISO transfer function

\[ G(s) = \frac{s}{s^2 + s + 1}. \]Here \( D = \lim_{s \to \infty} G(s) = 0 \). The controllable canonical realization is

\[ A = \begin{bmatrix} 0 & 1 \\ -1 & -1 \end{bmatrix}, \quad B = \begin{bmatrix} 0 \\ 1 \end{bmatrix}, \quad C = [0 \; 1], \quad D = 0. \]For the improper transfer function \( G(s) = (s+1)^2/(s^2 + s + 1) = s/(s^2+s+1) + 1 \), we extract \( D = 1 \) and use the same state matrices with \( D = 1 \).

Example 7.6. Find a realization for the MIMO transfer function

\[ G(s) = \begin{bmatrix} \dfrac{s}{s+1} \\[6pt] \dfrac{1}{s+2} \end{bmatrix}. \]We compute \( D = \lim_{s \to \infty} G(s) = [1, \; 0]^T \) and write the strictly proper part

\[ G_{sp}(s) = G(s) - D = \begin{bmatrix} -\tfrac{1}{s+1} \\[4pt] \tfrac{1}{s+2} \end{bmatrix} = \frac{1}{(s+1)(s+2)}\begin{bmatrix} -(s+2) \\ (s+1) \end{bmatrix} = \frac{1}{s^2 + 3s + 2}\left(\begin{bmatrix}-1 \\ 1\end{bmatrix}s + \begin{bmatrix}-2 \\ 1\end{bmatrix}\right). \]Identifying \( b_1 = 3 \), \( b_0 = 2 \), \( A_1 = [-1, \; 1]^T \), \( A_0 = [-2, \; 1]^T \), the controllable canonical realization (with \( m=2 \), \( k=1 \) is

\[ A = \begin{bmatrix} 0 & 1 \\ -2 & -3 \end{bmatrix}, \quad B = \begin{bmatrix} 0 \\ 1 \end{bmatrix}, \quad C = \begin{bmatrix} -2 & -1 \\ 1 & 1 \end{bmatrix}, \quad D = \begin{bmatrix} 1 \\ 0 \end{bmatrix}. \]Example 7.7. Consider

\[ G(s) = \begin{bmatrix} \dfrac{-2}{s+1} & \dfrac{1}{s+1} \end{bmatrix}. \]Here \( D = \lim_{s \to \infty} G(s) = [2 \; 1] \) and the strictly proper part factors as

\[ G_{sp}(s) = G(s) - D = \frac{1}{s+1}[-2 \;\; 1]. \]The controllable canonical realization is

\[ A = -I_{2\times 2} = \begin{bmatrix} -1 & 0 \\ 0 & -1 \end{bmatrix}, \quad B = I_{2\times 2} = \begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix}, \quad C = [-2 \;\; 1], \quad D = [2 \;\; 1]. \]Controllable and Observable Realizations

The controllable canonical realizations may not be the most compact realizations one can find. If a realization is not observable, one can apply observable decomposition to obtain a lower-dimensional system with the same transfer function.

Theorem 7.8. The controllable decomposition \( (A_c, B_c, C_c, D) \) and the observable decomposition \( (A_o, B_o, C_o, D) \) of an LTI system \( (A, B, C, D) \) have the same transfer function as \( (A, B, C, D) \).

Proof. Recall that the controllable decomposition takes the form

\[ (P_1 AP_1^{-1},\, P_1 B,\, CP_1^{-1},\, D) = \left(\begin{bmatrix} A_c & A_{12} \\ 0 & A_u \end{bmatrix},\; \begin{bmatrix} B_c \\ 0 \end{bmatrix},\; [C_c \;\; C_u],\; D\right) \]and the observable decomposition takes the form

\[ (P_2 AP_2^{-1},\, P_2 B,\, CP_2^{-1},\, D) = \left(\begin{bmatrix} A_o & 0 \\ A_{21} & A_u \end{bmatrix},\; \begin{bmatrix} B_o \\ B_u \end{bmatrix},\; [C_o \;\; 0],\; D\right). \]For the controllable decomposition, the block-triangular structure of \( sI - P_1 AP_1^{-1} \) implies

\[ (sI - P_1 AP_1^{-1})^{-1} = \begin{pmatrix} (sI - A_c)^{-1} & * \\ 0 & (sI - A_u)^{-1} \end{pmatrix}, \]and multiplying out gives

\[ [C_c \;\; C_u](sI - P_1AP_1^{-1})^{-1}\begin{bmatrix} B_c \\ 0 \end{bmatrix} + D = C_c(sI - A_c)^{-1}B_c + D = G(s). \]The observable decomposition case is analogous. \( \square \)

By Theorem 7.8, if a system is not controllable or not observable, one can always find a lower-dimensional system realizing the same transfer function through decomposition. This process terminates when a realization that is simultaneously controllable and observable is found, motivating the central concept below.

Minimal Realization

Definition 7.9 (Minimal realization). A realization of \( G(s) \) is called minimal if no other realization of \( G(s) \) has smaller state dimension.

The following theorem is the cornerstone result on minimality: it characterizes minimal realizations by a simple structural property and establishes their uniqueness up to state transformation.

Theorem 7.10. Let \( G(s) \) be a transfer function. Then:

A realization of \( G(s) \) is minimal if and only if it is both controllable and observable.

If \( (A, B, C, D) \) and \( (A_1, B_1, C_1, D_1) \) are both minimal realizations of \( G(s) \), then there exists a non-singular matrix \( P \) such that

Proof of part (1). For the direction “minimal implies controllable and observable”: suppose \( (A, B, C, D) \) is minimal but fails to be controllable or observable. By Theorem 7.8, we could then apply controllable or observable decomposition to obtain a strictly lower-dimensional realization of \( G(s) \), contradicting minimality.

For the converse, suppose \( (A, B, C, D) \) is controllable and observable of order \( n \), but is not minimal, so there exists another realization \( (A_1, B_1, C_1, D_1) \) of order \( n_1 < n \). Let \( \mathcal{C} \) and \( \mathcal{O} \) be the controllability and observability matrices of \( (A, B, C, D) \). Since \( \text{rank}\,\mathcal{O} = \text{rank}\,\mathcal{C} = n \), one shows that \( \text{rank}(\mathcal{O}\mathcal{C}) = n \).

The key tool is the following lemma.

Lemma 7.11. Two systems \( (A, B, C, D) \) and \( (A_1, B_1, C_1, D_1) \) share the transfer function \( G(s) \) if and only if \( D = D_1 \) and \( CA^i B = C_1 A_1^i B_1 \) for all \( i = 0, 1, 2, \ldots \)

Proof of Lemma 7.11. Using the matrix exponential series, the transfer function expands as

\[ G(s) = C(sI - A)^{-1}B + D = C\,\mathcal{L}[e^{At}]B + D = C\,\mathcal{L}\left[\sum_{k=0}^\infty \frac{t^k A^k}{k!}\right]B + D = \sum_{k=0}^\infty CA^k B\,s^{-(k+1)} + D. \]By uniqueness of the Laurent expansion in \( s^{-1} \), two transfer functions are identical if and only if all coefficients match: \( D_1 = D \) and \( CA^k B = C_1 A_1^k B_1 \) for all \( k \geq 0 \). \( \square \)

Returning to the proof of Theorem 7.10 part (1): by Lemma 7.11, \( \mathcal{O}\mathcal{C} = \mathcal{O}_1 \mathcal{C}_1 \), so

\[ \text{rank}(\mathcal{O}\mathcal{C}) = \text{rank}(\mathcal{O}_1 \mathcal{C}_1) \leq \min(\text{rank}\,\mathcal{O}_1, \text{rank}\,\mathcal{C}_1) \leq n_1 < n, \]contradicting \( \text{rank}(\mathcal{O}\mathcal{C}) = n \). \( \square \)

Proof of part (2). From Lemma 7.11 and the condition that both systems realize \( G(s) \), we have \( \mathcal{O}\mathcal{C} = \mathcal{O}_1\mathcal{C}_1 \). We use the following algebraic fact.

Lemma 7.12. A full column rank matrix \( M \) has a left inverse \( (M^T M)^{-1}M^T \), and a full row rank matrix \( N \) has a right inverse \( N^T(NN^T)^{-1} \).

Since \( \mathcal{C} \) has full row rank and \( \mathcal{O} \) has full column rank, we define

\[ P = \mathcal{C}_1\mathcal{C}^T(\mathcal{C}\mathcal{C}^T)^{-1}. \]One can verify that \( P \) is non-singular, with inverse \( P^{-1} = (\mathcal{O}^T\mathcal{O})^{-1}\mathcal{O}^T\mathcal{O}_1 \). Right-multiplying \( \mathcal{O}\mathcal{C} = \mathcal{O}_1\mathcal{C}_1 \) by the right inverse of \( \mathcal{C} \) gives

\[ \mathcal{O} = \mathcal{O}_1\mathcal{C}_1\mathcal{C}^T(\mathcal{C}\mathcal{C}^T)^{-1} = \mathcal{O}_1 P. \tag{7.1} \]From the definition of the observability matrix, equation (7.1) implies \( C = C_1 P \), i.e., \( CP^{-1} = C_1 \). Left-multiplying (7.1) by the left inverse of \( \mathcal{O}_1 \) yields \( P = (\mathcal{O}_1^T\mathcal{O}_1)^{-1}\mathcal{O}_1^T\mathcal{O} \), and using this together with \( \mathcal{O}\mathcal{C} = \mathcal{O}_1\mathcal{C}_1 \) gives \( P\mathcal{C} = \mathcal{C}_1 \), hence \( PB = B_1 \).

Finally, Lemma 7.11 gives \( \mathcal{O}A\mathcal{C} = \mathcal{O}_1 A_1 \mathcal{C}_1 \). Left-multiplying by the left inverse of \( \mathcal{O}_1 \) and right-multiplying by the right inverse of \( \mathcal{C} \) produces \( PA = A_1 P \), i.e., \( PAP^{-1} = A_1 \). \( \square \)

In summary, the minimal realization is uniquely determined by the transfer function up to an invertible state transformation. To find a minimal realization in practice, one constructs any realization (e.g., controllable canonical), then applies controllable and observable decomposition to reduce the state dimension until the result is both controllable and observable.

Example 7.13. Find a minimal realization for

\[ G(s) = \begin{bmatrix} \dfrac{-2}{s+1} & \dfrac{1}{s+1} \end{bmatrix}. \]We already found the controllable canonical realization (see Example 7.7):

\[ A = -I_{2\times 2}, \quad B = I_{2\times 2}, \quad C = [-2 \;\; 1], \quad D = [2 \;\; 1]. \]One verifies that this realization is controllable. Computing the observability matrix:

\[ \mathcal{O}(A, C) = \begin{bmatrix} C \\ CA \end{bmatrix} = \begin{bmatrix} -2 & 1 \\ 2 & -1 \end{bmatrix}, \]which has rank 1 since row 2 is \( -1 \) times row 1. The system is not observable, so we apply observable decomposition. Let

\[ P = \begin{bmatrix} -2 & 1 \\ 0 & 1 \end{bmatrix}, \quad P^{-1} = \begin{bmatrix} -\tfrac{1}{2} & \tfrac{1}{2} \\ 0 & 1 \end{bmatrix}. \]Computing:

\[ PAP^{-1} = \begin{bmatrix} -1 & 0 \\ 0 & -1 \end{bmatrix}, \quad PB = \begin{bmatrix} -2 & 1 \\ 0 & 1 \end{bmatrix}, \quad CP^{-1} = [1 \;\; 0]. \]The observable decomposition reveals that the observable part is

\[ A_o = -1, \quad B_o = [-2 \;\; 1], \quad C_o = 1, \quad D = [2 \;\; 1]. \]This one-dimensional realization is both controllable and observable, hence minimal. One can verify directly that \( C_o(sI - A_o)^{-1}B_o + D = \frac{1}{s+1}[-2\;\;1] + [2\;\;1] = G(s) \).

Frequency Response and Bode Plots

The time response of a control system describes how the output evolves in time for a given time-varying input. The frequency response is the complementary description: it characterizes how the steady-state output amplitude and phase depend on the frequency of a sinusoidal input. This frequency-domain perspective is indispensable for engineering analysis and design, because it provides an intuitive and graphically accessible summary of system behaviour across all frequencies simultaneously. We focus on SISO systems throughout.

Frequency Response

Consider an exponential input of the form

\[ u(t) = e^{st}, \quad s = \sigma + j\omega \in \mathbb{C}. \]Note that sinusoidal inputs are special cases; for instance, \( \cos(\omega t) = (e^{j\omega t} + e^{-j\omega t})/2 \). Starting from the variation-of-constants formula and assuming \( s \notin \lambda(A) \) (i.e., \( s \) is not an eigenvalue of \( A \), one computes the state trajectory:

\[\begin{aligned} x(t) &= e^{At}x(0) + \int_0^t e^{A(t-\tau)}Be^{s\tau}\,d\tau \\ &= e^{At}x(0) + e^{At}(sI - A)^{-1}\left[e^{(sI-A)t} - I\right]B \\ &= e^{At}[x(0) - (sI - A)^{-1}B] + (sI - A)^{-1}Be^{st}. \end{aligned}\]The output is then

\[ y(t) = \underbrace{Ce^{At}[x(0) - (sI-A)^{-1}B]}_{\text{transient response}} + \underbrace{[C(sI-A)^{-1}B + D]e^{st}}_{\text{steady-state response}}. \]Since all eigenvalues of \( A \) have negative real parts for a stable system, the transient term decays to zero as \( t \to \infty \). The steady-state response is then

\[ y_{ss}(t) = G(s)\,e^{st}, \]where \( G(s) \) is the transfer function. This elegant result shows that a stable LTI system transmits an exponential signal \( e^{st} \) with its amplitude and phase modified by \( G(s) \).

For the purely imaginary case \( s = j\omega \) (a sinusoidal input), we write

\[ G(j\omega) = M e^{j\phi}, \]where \( M = |G(j\omega)| \) is the gain and \( \phi = \angle G(j\omega) = \arctan\dfrac{\operatorname{Im} G(j\omega)}{\operatorname{Re} G(j\omega)} \) is the phase. The steady-state response becomes

\[ y_{ss}(t) = Me^{j(\omega t + \phi)} = M[\cos(\omega t + \phi) + j\sin(\omega t + \phi)]. \]By linearity, for a real sinusoidal input \( u(t) = \cos(\omega t) \), the steady-state output is

\[ y_{ss}(t) = M\cos(\omega t + \phi). \]If \( \phi > 0 \), the output leads the input; if \( \phi < 0 \), the output lags the input.

Definition 8.14 (Frequency response). The frequency response of the system is the transfer function evaluated on the imaginary axis,

\[ G(j\omega) = Me^{j\phi}, \]where \( M = |G(j\omega)| \) is the gain and \( \phi = \angle G(j\omega) \) is the phase. Since \( G(-j\omega) = \overline{G(j\omega)} \), it suffices to consider \( \omega \geq 0 \).

Example 8.15. Consider the first-order transfer function \( G(s) = \dfrac{1}{s+1} \). The frequency response is

\[ G(j\omega) = \frac{1}{j\omega + 1} = Me^{j\phi}, \quad M = |G(j\omega)| = \frac{1}{\sqrt{\omega^2 + 1}}, \quad \phi = \angle G(j\omega) = -\arctan(\omega). \]For \( \omega = 1 \), the gain is \( M = 1/\sqrt{2} \approx 0.707 \) and the phase lag is \( 45^\circ \). For \( \omega = 10 \), the gain is much smaller (\( M \approx 0.1 \) and the phase lag approaches \( 90^\circ \). This illustrates that higher-frequency inputs are attenuated more strongly, and that the output always lags the input for this system.

More generally, the frequency response provides comprehensive information about a system: a large gain \( |G(j\omega)| \) at a particular frequency \( \omega \) means the system amplifies sinusoidal inputs at that frequency, while a small gain means the system attenuates them.

Bode Plots

The frequency response can be measured experimentally by sweeping through a range of frequencies \( \omega = \omega_1, \omega_2, \ldots, \omega_N \), applying a sinusoid at each frequency, waiting for the transient to die out, and measuring the steady-state amplitude ratio and phase shift. The result can be displayed in several ways. Plotting the real and imaginary parts of \( G(j\omega) \) as a curve in the complex plane as \( \omega \) varies is called a Nyquist plot. Plotting both the gain \( |G(j\omega)| \) and the phase \( \angle G(j\omega) \) as functions of \( \omega \) separately is called a Bode plot.

Definition 8.16 (Bode plot). A Bode plot for the frequency response \( G(j\omega) \) consists of two curves: the gain curve (or magnitude plot), which shows \( |G(j\omega)| \) as a function of \( \omega \), and the phase curve (or phase plot), which shows \( \angle G(j\omega) \) as a function of \( \omega \).

Remark 8.17. In standard engineering practice, the Bode gain curve is plotted on a log/log scale (i.e., both axes are logarithmic) and the phase curve on a log/linear scale (logarithmic frequency axis, linear phase axis). The magnitude is expressed in decibels (dB):

\[ |G(j\omega)|_{\rm dB} = 20\log_{10}|G(j\omega)|. \]Bode plots are particularly powerful because of the following multiplicative property. For a transfer function \( G(s) = G_1(s)G_2(s)/G_3(s) \), we have

\[\begin{aligned} \log|G(j\omega)| &= \log|G_1(j\omega)| + \log|G_2(j\omega)| - \log|G_3(j\omega)| \\ \angle G(j\omega) &= \angle G_1(j\omega) + \angle G_2(j\omega) - \angle G_3(j\omega). \end{aligned}\]The Bode gain and phase of a composite transfer function are thus obtained by addition and subtraction of the individual component curves. Since any polynomial factors into terms of the form

\[ k, \quad s, \quad s + a, \quad s^2 + 2\zeta\omega_0 s + \omega_0^2, \]it suffices to understand the Bode plots of these four elementary building blocks and then combine them.

Example 8.18 (Constant gain). For \( G(s) = k \), the frequency response is the constant \( k \), giving

\[ \text{gain (dB)} = 20\log|k|, \qquad \angle k = \begin{cases} 0^\circ & k > 0 \\ 180^\circ & k < 0. \end{cases} \]The gain curve is a horizontal line at \( 20\log|k| \) dB and the phase curve is constant at \( 0^\circ \) or \( 180^\circ \).

Example 8.19 (Pure integrator/differentiator). For \( G(s) = s^k \) where \( k \) is an integer, evaluating at \( s = j\omega \) gives \( G(j\omega) = (j\omega)^k = \omega^k e^{jk\pi/2} \), so

\[ \text{gain (dB)} = 20\log|G(j\omega)| = 20k\log\omega, \qquad \text{phase (deg)} = \angle G(j\omega) = k \cdot 90^\circ. \]The gain curve is a straight line with slope \( 20k \) dB per decade on the log-log scale, passing through 0 dB at \( \omega = 1 \). The phase is a constant \( k \cdot 90^\circ \). For an integrator (\( k = -1 \), the gain falls at \( -20 \) dB/decade and the phase is a constant \( -90^\circ \).

Example 8.20 (First-order system). Consider the first-order transfer function

\[ G(s) = \frac{a}{s + a}. \]Evaluating at \( s = j\omega \):

\[ \text{gain (dB)} = 20\log a - 10\log(\omega^2 + a^2), \qquad \text{phase (deg)} = -\frac{180^\circ}{\pi}\arctan\frac{\omega}{a}. \]At low frequencies \( \omega \ll a \), the gain is approximately \( 20\log(a/a) = 0 \) dB (normalized) and the phase approaches \( 0^\circ \). At the corner frequency (or break frequency) \( \omega = a \), the gain is \( -3 \) dB and the phase is \( -45^\circ \). At high frequencies \( \omega \gg a \), the gain rolls off at \( -20 \) dB/decade and the phase asymptotically approaches \( -90^\circ \). The asymptotic Bode approximation models the gain curve as two straight-line segments: 0 dB for \( \omega < a \), and a line of slope \( -20 \) dB/decade for \( \omega > a \).

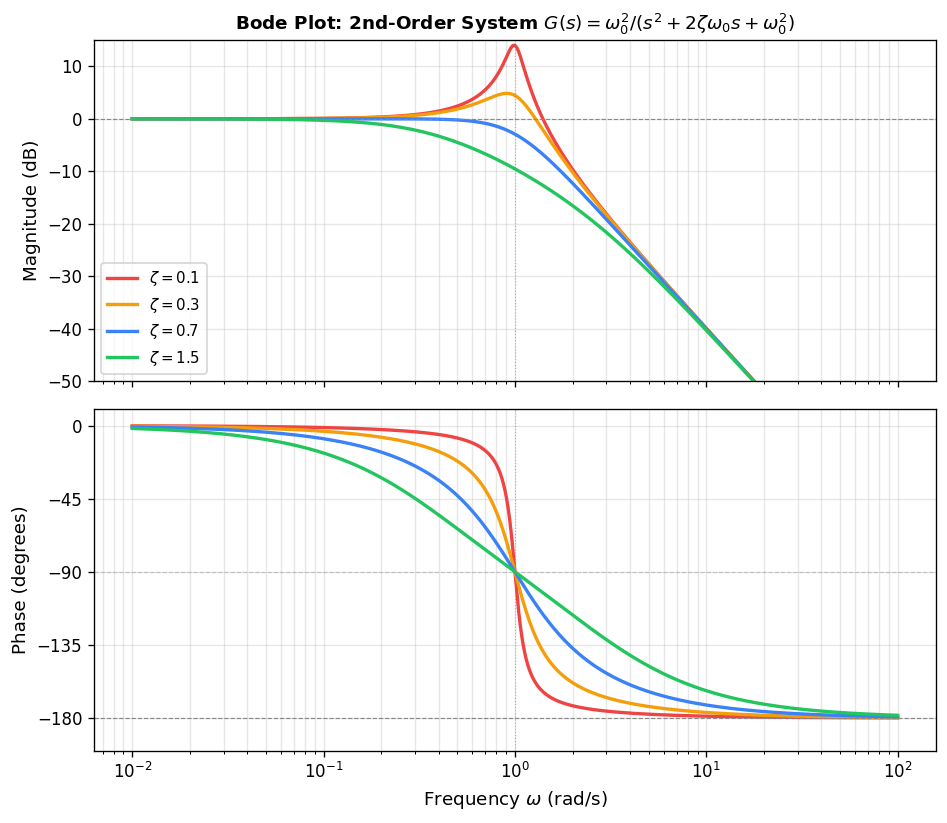

Example 8.21 (Second-order system). Consider the second-order transfer function

\[ G(s) = \frac{\omega_0^2}{s^2 + 2\zeta\omega_0 s + \omega_0^2}, \]where \( \omega_0 > 0 \) is the natural frequency and \( \zeta > 0 \) is the damping ratio. The gain and phase of the frequency response are

\[ \text{gain (dB)} = 40\log\omega_0 - 10\log\!\left(\omega^4 + 2\omega_0^2\omega^2(2\zeta^2 - 1) + \omega_0^4\right), \]\[ \text{phase (deg)} = -\frac{180^\circ}{\pi}\arctan\frac{2\zeta\omega_0\,\omega}{\omega_0^2 - \omega^2}. \]For \( \omega \ll \omega_0 \), the gain is approximately 0 dB and the phase approaches \( 0^\circ \). For \( \omega \gg \omega_0 \), the gain falls at \( -40 \) dB/decade and the phase approaches \( -180^\circ \). The transition near \( \omega = \omega_0 \) depends strongly on the damping ratio: for small \( \zeta \) (lightly damped systems), a sharp resonance peak appears in the gain curve near \( \omega_0 \), while large \( \zeta \) (heavily damped systems) produces a much smoother roll-off. The height of the resonance peak grows as \( \zeta \to 0 \), approaching infinity for an undamped system (which has poles on the imaginary axis at \( \pm j\omega_0 \). The higher the damping ratio, the flatter the Bode curves.

In MATLAB, for a defined transfer function object G, the command bode(G) generates the Bode plot automatically. For instance, to plot the Bode diagram for the second-order system with \( \omega_0 = 1 \) and \( \zeta = 0.1 \):

s = tf('s');

zeta = 0.1;

G = 1/(s^2 + 2*zeta*s + 1);

bode(G);

The resulting Bode plot shows the characteristic resonance peak in the gain curve near \( \omega = 1 \) rad/s and the transition in the phase curve from \( 0^\circ \) to \( -180^\circ \).

Poles, Zeros, and Internal Stability

Poles and Zeros

The input-output behavior of a linear control system is shaped, to a remarkable degree, by the locations of certain special points in the complex plane associated with its transfer function. These points — the poles and zeros — encode information about the system’s natural frequencies, resonances, and the signals it can block. In this lecture we define poles and zeros precisely, relate them to the underlying state-space realization, and examine their influence on the step response.

Poles and Zeros for a SISO Transfer Function

Definition 9.1 (Poles and zeros). Consider a SISO transfer function written in the coprime form

\[ G(s) = \frac{n(s)}{d(s)}, \]where \(n(s)\) and \(d(s)\) are coprime polynomials (i.e., their greatest common divisor is 1). The roots of the numerator \(n(s)\) are called the zeros of \(G(s)\), and the roots of the denominator \(d(s)\) are called the poles of \(G(s)\).

This definition parallels the notion of poles and zeros from complex analysis. Note that complex poles and zeros always appear in conjugate pairs when the coefficients of \(n(s)\) and \(d(s)\) are real.

Example 9.2. Consider

\[ G(s) = \frac{s+1}{s^2 + s + 1}. \]There is a single zero at \(s = -1\) and two poles at \(s = \frac{-1 \pm j\sqrt{3}}{2}\). Tools such as pzplot or pzmap in MATLAB render these graphically: poles are indicated by a cross (\(\times\) and zeros by a circle (\(\circ\).

Relationship with the Frequency Response

Any SISO transfer function can be factored as

\[ G(s) = k \frac{(s - z_1)(s - z_2)\cdots(s - z_r)}{(s - p_1)(s - p_2)\cdots(s - p_n)}, \]where \(z_1, \ldots, z_r\) are the zeros, \(p_1, \ldots, p_n\) are the poles, and \(k\) is a scalar gain. Evaluating on the imaginary axis at \(s = j\omega\) yields

\[ G(j\omega) = k \frac{(j\omega - z_1)(j\omega - z_2)\cdots(j\omega - z_r)}{(j\omega - p_1)(j\omega - p_2)\cdots(j\omega - p_n)}. \]Taking logarithms and arguments, we obtain

\[\begin{aligned} \log |G(j\omega)| &= \log k + \sum_{i=1}^{r} |j\omega - z_i| - \sum_{i=1}^{n} |j\omega - p_i|, \\ \angle G(j\omega) &= \sum_{i=1}^{r} \angle(j\omega - z_i) - \sum_{i=1}^{n} \angle(j\omega - p_i). \end{aligned}\]In other words, both the magnitude and phase of the frequency response are completely determined by the pole-zero locations up to an overall gain constant. The geometric interpretation — each term is the angle or distance from \(j\omega\) to a specific pole or zero in the complex plane — provides an intuitive way to reason about Bode plots without explicit computation.

Relationship with State-Space Realizations

Poles Versus Eigenvalues

Let \((A, B, C, D)\) be a state-space realization of \(G(s)\). Recall that

\[ G(s) = C(sI - A)^{-1}B + D = \frac{C\,\mathrm{adj}(sI - A)B + D\det(sI - A)}{\det(sI - A)} = \frac{n(s)}{d(s)}. \]It is possible for \(C\,\mathrm{adj}(sI-A)B + D\det(sI-A)\) and \(\det(sI-A)\) to share common factors, which must be cancelled to reach the coprime form. Nevertheless, every root of \(d(s)\) is always a root of \(\det(sI-A)\), the characteristic polynomial of \(A\). Hence every pole of \(G(s)\) is an eigenvalue of \(A\). The converse holds precisely when the realization is minimal.

Theorem 9.3. Let \((A, B, C, D)\) be a realization of a SISO transfer function \(G(s)\). Then every pole of \(G(s)\) is an eigenvalue of \(A\). Furthermore, the following statements are equivalent:

- All eigenvalues of \(A\) are poles of \(G(s)\).

- \((A, B, C, D)\) is a minimal realization of \(G(s)\).

- \(C\,\mathrm{adj}(sI-A)B\) and \(\det(sI-A)\) are coprime.

The proof follows from the observation that if \((A_1, B_1, C_1, D_1)\) is a minimal realization, then its denominator has strictly smaller degree than \(\det(sI-A)\) whenever \((A, B, C, D)\) is non-minimal, so \(\det(sI-A)\) and \(C\,\mathrm{adj}(sI-A)B\) cannot be coprime in that case.

Zeros Versus Invariant Zeros

Let \(u(t) = e^{st}u_0\) be an exponential input with \(u_0 \in \mathbb{R}\) and let \(s \neq \lambda(A)\) (i.e., \(s\) is not an eigenvalue of \(A\). A computation using the variation-of-parameters formula shows that if one chooses the initial condition \(x_0 = (sI - A)^{-1}Bu_0\), then

\[\begin{aligned} x(t) &= (sI - A)^{-1}B\,e^{st}u_0, \\ y(t) &= \bigl[C(sI-A)^{-1}B + D\bigr]e^{st}u_0 = G(s)\,e^{st}u_0. \end{aligned}\]If \(s\) is a zero of \(G(s)\), then \(y(t) = 0\) for all \(t \geq 0\): the system completely blocks the transmission of the exponential \(e^{st}\). Substituting \(x(t) = e^{st}x_0\) back into the state equations yields

\[ \begin{bmatrix} sI - A & -B \\ -C & -D \end{bmatrix} \begin{bmatrix} x_0 \\ u_0 \end{bmatrix} = 0, \]which has a nonzero solution only if the matrix on the left loses rank. This motivates the following definition.

Definition 9.4 (Invariant zeros). A complex number \(s\) is called an invariant zero of the state-space model \((A, B, C, D)\) if

\[ \mathrm{rank}\begin{bmatrix} sI - A & -B \\ -C & -D \end{bmatrix} < n + 1. \]Theorem 9.5. Let \((A, B, C, D)\) be a realization of a SISO transfer function \(G(s)\). Then every zero of \(G(s)\) is an invariant zero of \((A, B, C, D)\). Furthermore, the following statements are equivalent:

- All invariant zeros of \((A, B, C, D)\) are zeros of \(G(s)\).

- \((A, B, C, D)\) is a minimal realization of \(G(s)\).

- \(C\,\mathrm{adj}(sI-A)B\) and \(\det(sI-A)\) are coprime.

Proof. Let \(s\) be a zero of \(G(s)\), so that \(C\,\mathrm{adj}(sI-A)B + D\det(sI-A) = 0\). By Cramer’s rule one verifies that

\[ C\,\mathrm{adj}(sI-A)B + D\det(sI-A) = \det\begin{bmatrix} sI-A & -B \\ -C & -D \end{bmatrix}. \]Hence this determinant vanishes, meaning the matrix loses rank and \(s\) is an invariant zero. The remainder of the equivalences follows by an argument parallel to that for Theorem 9.3.

Proposition 9.6 (Transmission blocking). Consider a SISO system \((A, B, C, D)\) with transfer function \(G(s)\). Let \(s_0\) be an invariant zero of \((A, B, C, D)\) that is not an eigenvalue of \(A\). Then there exists a nonzero input of the form \(u(t) = e^{s_0 t}u_0\) and an initial condition \(x_0\) such that the corresponding output \(y(t) = 0\) for all \(t \geq 0\). In particular, the same conclusion holds for any zero of \(G(s)\).

Proof. Since \(s_0\) is an invariant zero, there exists a nonzero vector \((x_0, u_0) \in \mathbb{R}^{n+1}\) satisfying

\[ \begin{bmatrix} s_0 I - A & -B \\ -C & -D \end{bmatrix} \begin{bmatrix} x_0 \\ u_0 \end{bmatrix} = 0. \]Because \(s_0\) is not an eigenvalue of \(A\), we can write \(x_0 = (s_0 I - A)^{-1}Bu_0\); in particular \(u_0 \neq 0\). Setting \(x(t) = e^{s_0 t}x_0\) and \(u(t) = e^{s_0 t}u_0\), the condition \(s_0 x_0 = Ax_0 + Bu_0\) ensures \(x'(t) = Ax(t) + Bu(t)\), and the condition \(Cx_0 + Du_0 = 0\) gives \(y(t) = 0\) for all \(t \geq 0\).

Effects of Zeros and Poles on the Step Response

The unit step response is the output produced by the input \(u(t) = 1\) for \(t \geq 0\) and zero initial conditions. Its Laplace transform is \(\hat{u}(s) = 1/s\), so the step response in the frequency domain is \(\hat{y}(s) = G(s)/s\).

Example 9.7 (Step response of a first-order system). A general first-order strictly proper transfer function is

\[ G(s) = \frac{a_0}{s + b_0} = \frac{k}{\tau s + 1}, \]where \(k = a_0/b_0\) is the DC gain (also called the steady-state gain) and \(\tau = 1/b_0\) is the time constant. With a unit step input, \(\hat{y}(s) = k/(s(\tau s+1))\), and taking the inverse Laplace transform yields the step response

\[ y(t) = k\!\left[1 - e^{-t/\tau}\right], \quad t \geq 0. \]The system rises monotonically from zero to the steady state \(k\), reaching approximately \(63\%\) of its final value after one time constant.

Example 9.8 (Step response of a second-order system). Consider

\[ G(s) = k\frac{\omega_n^2}{s^2 + 2\zeta\omega_n s + \omega_n^2}, \]where \(k\) is the DC gain, \(\omega_n = \sqrt{b_0}\) is the natural frequency, and \(\zeta = b_1/(2\omega_n)\) is the damping ratio. In the underdamped case \(\zeta < 1\), the step response is

\[ y(t) = k\!\left[1 - \frac{1}{\beta}e^{-\zeta\omega_n t}\sin(\beta\omega_n t + \theta)\right], \]where \(\beta = \sqrt{1-\zeta^2}\) and \(\theta = \arctan(\beta/\zeta)\).

Adding a pole. Inserting an additional pole at location \(-a\) gives