PHYS 358: Thermal Physics

J. D. D. Martin

Estimated study time: 3 hr 51 min

Sources and References

Primary textbook — Schroeder, D. V. An Introduction to Thermal Physics. Addison-Wesley, 2000.

Supplementary texts — Callen, H. B. Thermodynamics and an Introduction to Thermostatistics, 2nd ed. Wiley, 1985; Kittel, C. and Kroemer, H. Thermal Physics, 2nd ed. W. H. Freeman, 1980; Blundell, S. J. and Blundell, K. M. Concepts in Thermal Physics, 2nd ed. Oxford University Press, 2010; Reif, F. Fundamentals of Statistical and Thermal Physics. McGraw-Hill, 1965; Fermi, E. Thermodynamics. Dover, 1956.

Online resources — Tong, D. Statistical Physics (Cambridge Part II Mathematical Tripos lecture notes, 2012); MIT OpenCourseWare 8.044 Statistical Physics I; NIST Chemistry WebBook (webbook.nist.gov) for fluid and thermochemical reference data.

Part I: Macroscopic Phenomenological Thermal Physics

Chapter 1: Temperature and Thermometry

The empirical notion of temperature

Every student enters a first course in thermal physics carrying an informal notion of temperature picked up from everyday life — the burning sensation of a hot pan, the chill of a winter morning, the body’s fever. Before we can do any serious physics, we need to sharpen that intuition into a measurement procedure, and ultimately into a mathematical definition. That formal definition will come much later, grounded in the statistical behaviour of many-particle systems. For now we adopt an operational stance: temperature is what a thermometer reads.

This may sound circular, but it is less evasive than it appears. The statement is grounded in a physical fact: when two bodies are brought into thermal contact for long enough, they reach thermal equilibrium, a condition in which no further spontaneous heat flow occurs. We say the two bodies have reached the same temperature. This is the content of what is sometimes called the zeroth law of thermodynamics: if body A is in thermal equilibrium with body C, and body B is also in equilibrium with C, then A and B are in equilibrium with each other. The zeroth law makes thermal equilibrium an equivalence relation and so justifies the use of a single number, the thermometric reading, to label each class of mutually equilibrated bodies.

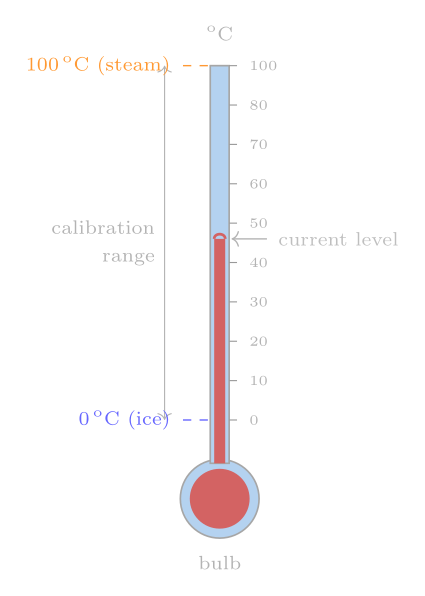

Liquid-in-glass thermometers

The most familiar thermometer exploits thermal expansion. A glass capillary contains a liquid — historically mercury, now more often red-dyed alcohol or mineral spirits — whose volume increases with temperature. As the liquid expands it rises in the capillary; by marking the position of the meniscus against a scale one obtains a temperature reading.

Constructing the scale requires fixed points: reproducible temperatures at which we all agree on the numerical value. Anders Celsius in 1742 chose the freezing point of water as 0 °C and the boiling point as 100 °C (his original scale was inverted, with boiling at 0 and freezing at 100 — later colleagues reversed it). Given those two anchor points, the simplest prescription is to divide the intervening column length into a hundred equal parts, assigning each one the label of one degree Celsius.

Here a subtle but important problem arises: the scale between the fixed points depends on which liquid is inside the thermometer. A mercury thermometer and an alcohol thermometer, both calibrated to agree at 0 °C and 100 °C, will disagree at intermediate temperatures because the thermal expansion coefficients of the two liquids vary with temperature in different ways. Measurements confirm this: an alcohol thermometer can read several degrees lower than mercury when both are immersed in a bath that mercury calls 50 °C. So the liquid-in-glass thermometer gives us an arbitrary, material-dependent scale. We need something better.

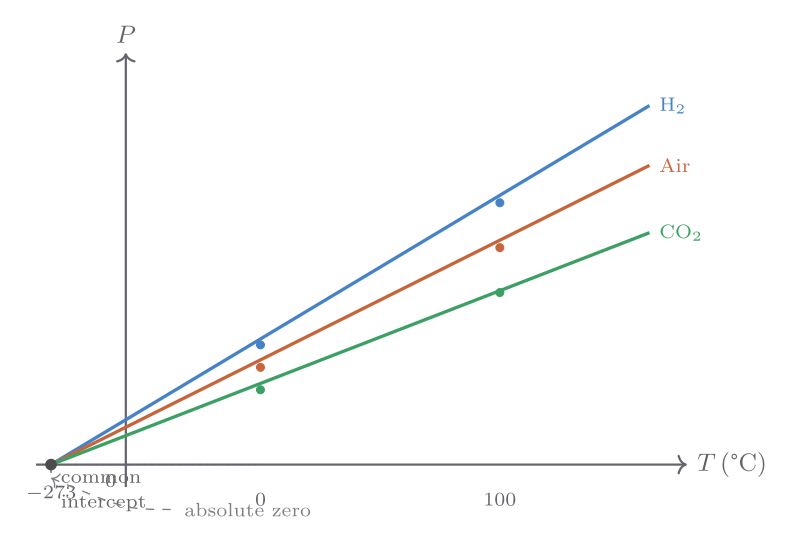

Constant-volume gas thermometers and absolute zero

A far more universal thermometer uses the pressure of a dilute gas confined to a fixed volume. The apparatus — a bulb containing gas connected via a manometer to a reservoir — can maintain constant volume by adjusting the height of a mercury column while reading the gas pressure. Early experimenters, following the work of Gay-Lussac and Charles, found that gases of different chemical identity (air, carbon dioxide, hydrogen) gave pressure-temperature curves that were very nearly identical when the gas was dilute enough, and that they all extrapolated to zero pressure at the same temperature, regardless of which gas was used.

Extrapolating the pressure–temperature straight lines to zero pressure yields a common intercept at approximately \(-273.15\) °C. This is not a coincidence — it marks the point at which a classical ideal gas would have zero kinetic energy, and it defines a natural lower bound to temperature. We call this temperature absolute zero.
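
The extrapolation itself is a two-point straight-line calculation. The sketch below is only an illustration: the two pressure readings are hypothetical values chosen to mimic a dilute gas at the ice and steam points, not measured data, and the function name is an assumption of this example.

```python
def zero_pressure_intercept(t1_c, p1, t2_c, p2):
    """Celsius temperature at which the straight line through
    (t1_c, p1) and (t2_c, p2) reaches zero pressure."""
    slope = (p2 - p1) / (t2_c - t1_c)  # pressure change per degree Celsius
    return t1_c - p1 / slope

# Hypothetical constant-volume readings in kPa (illustrative, not measured).
p_ice = 100.0      # gas pressure with the bulb at 0 degrees C
p_steam = 136.61   # gas pressure with the bulb at 100 degrees C

t_zero = zero_pressure_intercept(0.0, p_ice, 100.0, p_steam)
print(f"Extrapolated zero-pressure temperature: {t_zero:.2f} degrees C")
```

With these assumed readings the intercept lands close to \(-273.15\) °C; a real measurement would repeat the fit at several gas densities and take the dilute limit.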

Shifting the origin to absolute zero gives the Kelvin scale: \(T(\text{K}) = T(°\text{C}) + 273.15\). In terms of absolute temperature the ideal-gas-thermometer relationship becomes simply

\[ PV = Nk_{\mathrm{B}}T, \]where \(N\) is the number of molecules and \(k_{\mathrm{B}} \approx 1.381 \times 10^{-23}\ \text{J K}^{-1}\) is Boltzmann’s constant. Equivalently, writing \(n\) for the number of moles and \(R = N_{\mathrm{A}} k_{\mathrm{B}} \approx 8.314\ \text{J mol}^{-1}\ \text{K}^{-1}\) for the ideal gas constant,

\[ PV = nRT. \]All four quantities — Boltzmann’s constant, Avogadro’s number \(N_{\mathrm{A}} \approx 6.022 \times 10^{23}\ \text{mol}^{-1}\), the ideal gas constant, and absolute temperature itself — are intimately linked through these two equations. The ideal gas law will serve as our operational definition of temperature throughout Part I; in Part II we will derive it from microscopic first principles.
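
As a quick numerical check of how these constants fit together, the following sketch evaluates both forms of the ideal gas law for one litre of gas at 1 atm and 300 K. The sample conditions and the value 1 atm = 101325 Pa are assumptions of the example; the constants are the rounded values quoted above.

```python
K_B = 1.381e-23   # Boltzmann's constant, J/K (rounded value from the text)
N_A = 6.022e23    # Avogadro's number, 1/mol (rounded value from the text)
R = N_A * K_B     # ideal gas constant, J/(mol K)

def number_of_molecules(pressure_pa, volume_m3, temperature_k):
    """Solve PV = N k_B T for N."""
    return pressure_pa * volume_m3 / (K_B * temperature_k)

# Assumed sample: one litre of gas at 1 atm and 300 K.
n_molecules = number_of_molecules(101325.0, 1.0e-3, 300.0)
n_moles = n_molecules / N_A

print(f"R = {R:.3f} J/(mol K)")
print(f"N = {n_molecules:.3e} molecules, n = {n_moles:.4f} mol")
```

The product \(N_{\mathrm{A}} k_{\mathrm{B}}\) computed from the rounded constants differs from 8.314 only in the fourth significant figure.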

The International Temperature Scale (ITS-90), adopted by international agreement in 1990, achieves reproducibility to better than a millikelvin by specifying a network of fixed reference points (the triple point of water at 273.16 K, the freezing points of various metals) and interpolation procedures between them. The kelvin was formally redefined in 2019 in terms of a fixed numerical value of \(k_{\mathrm{B}}\), removing its dependence on the properties of any particular substance (previously the triple point of water).

A charming historical curiosity: Galileo thermometers exploit the temperature-dependence of fluid density rather than volume. Glass spheres of slightly different masses are sealed inside a liquid column; as temperature changes, the liquid’s density shifts and different spheres float or sink, with the lowest floating sphere indicating the temperature. The device is decorative but imprecise — a reminder that many of thermometry’s foundational concepts, including the notion of a fixed point, were worked out long before thermodynamics was a science.

Chapter 2: The Ideal Gas and the Microscopic Origin of Temperature

The ideal gas law revisited

The ideal gas law \(PV = Nk_{\mathrm{B}}T\) encodes a great deal of physics in a deceptively compact form. An ideal gas is one in which intermolecular interactions are negligible — the molecules move freely except during brief, elastic collisions. This is an excellent approximation for real gases at low densities and high temperatures, and it captures the essential behaviour that connects pressure, volume, temperature, and the amount of substance.

Before moving to a formal derivation, we should absorb what the law is saying. Pressure arises from molecular impacts on the container walls; doubling the number of molecules at fixed volume and temperature doubles the pressure. Doubling the absolute temperature at fixed volume doubles the pressure because the molecules move faster, striking the walls both harder and more often. Doubling the volume at fixed temperature and molecule number halves the pressure because molecules strike the walls less frequently. Each of these statements is embedded in the single equation \(PV = Nk_{\mathrm{B}}T\).

Kinetic derivation of pressure and temperature

Consider a single molecule of mass \(m\) bouncing elastically between the walls of a rectangular box of length \(L\) in the \(x\)-direction. Every time the molecule strikes the right wall, it reverses its \(x\)-component of velocity from \(+v_x\) to \(-v_x\), transferring momentum \(2mv_x\) to the wall. The time between successive strikes on the same wall is \(2L/v_x\), so the average force exerted on the wall by this molecule is

\[ \bar{F}_x = \frac{2mv_x}{2L/v_x} = \frac{mv_x^2}{L}. \]For \(N\) molecules with a distribution of velocities, the total pressure on the wall of area \(A = V/L\) is

\[ P = \frac{N m \langle v_x^2 \rangle}{V}, \]where angle brackets denote an average over molecules. By isotropy — no direction is preferred — \(\langle v_x^2 \rangle = \langle v_y^2 \rangle = \langle v_z^2 \rangle = \tfrac{1}{3}\langle v^2 \rangle\), so

\[ P = \frac{2N}{3V}\left\langle \frac{1}{2}mv^2 \right\rangle = \frac{2N}{3V}\langle \epsilon_{\mathrm{kin}} \rangle. \]Comparing with the ideal gas law \(PV = Nk_{\mathrm{B}}T\) gives immediately

\[ \langle \epsilon_{\mathrm{kin}} \rangle = \frac{3}{2}k_{\mathrm{B}}T. \]This is a profound result: temperature is a measure of the mean translational kinetic energy per molecule. The factor \(\tfrac{3}{2}\) arises from the three independent spatial degrees of freedom. The total internal energy of a monatomic ideal gas of \(N\) molecules — each with no internal structure to store energy — is therefore

\[ U = \frac{3}{2}Nk_{\mathrm{B}}T. \]Two significant assumptions underlie this derivation: the collisions are elastic (no energy is lost to the walls), and all of the molecule’s energy is translational kinetic energy. For monatomic gases such as helium, neon, and argon these assumptions hold remarkably well at ordinary temperatures. For diatomic or polyatomic molecules the picture is richer: the molecule can also rotate and vibrate, storing energy in ways that do not contribute to the pressure.
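
To get a feel for the magnitudes, the sketch below evaluates \(\langle \epsilon_{\mathrm{kin}} \rangle = \tfrac{3}{2}k_{\mathrm{B}}T\) and the corresponding root-mean-square speed \(v_\text{rms} = \sqrt{3k_{\mathrm{B}}T/m}\), which follows from \(\tfrac{1}{2}m\langle v^2 \rangle = \tfrac{3}{2}k_{\mathrm{B}}T\). The choice of helium, its mass of 4 atomic mass units, and the temperature of 300 K are assumptions made for illustration.

```python
import math

K_B = 1.381e-23              # Boltzmann's constant, J/K
ATOMIC_MASS_UNIT = 1.66e-27  # kg

def mean_kinetic_energy(temperature_k):
    """Mean translational kinetic energy per molecule, (3/2) k_B T."""
    return 1.5 * K_B * temperature_k

def rms_speed(temperature_k, mass_u):
    """Root-mean-square speed from (1/2) m <v^2> = (3/2) k_B T."""
    mass_kg = mass_u * ATOMIC_MASS_UNIT
    return math.sqrt(3.0 * K_B * temperature_k / mass_kg)

# Assumed example: helium (about 4 u) at 300 K.
energy = mean_kinetic_energy(300.0)
speed = rms_speed(300.0, 4.0)

print(f"<e_kin> = {energy:.3e} J per molecule")
print(f"v_rms(He, 300 K) = {speed:.0f} m/s")
```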

Joule’s free-expansion experiment and the energy of an ideal gas

James Prescott Joule established experimentally that the internal energy of a real gas depends almost entirely on temperature and not on volume. In his famous free-expansion experiment, gas from a pressurised vessel was allowed to expand into an evacuated vessel, the whole apparatus being immersed in a water bath. Because the gas expanded into vacuum, it did no work; and Joule detected no change in the water temperature, indicating no heat flow. Since \(\Delta U = Q + W = 0\), the internal energy of the gas remained constant even though its volume doubled. The temperature also remained constant. Together these observations mean that, for an ideal gas, \(U\) depends only on \(T\) (and the fixed amount of substance), not on \(P\) or \(V\) independently. This is consistent with the kinetic result \(U = \tfrac{3}{2}Nk_{\mathrm{B}}T\) for a monatomic gas: once \(T\) is fixed, \(U\) is fixed.

The equipartition theorem and its limits

A broader empirical regularity appears when the constant-volume heat capacities of various gases are measured as a function of temperature. Monatomic gases give \(C_V = \tfrac{3}{2}Nk_{\mathrm{B}}\), diatomic gases give roughly \(\tfrac{5}{2}Nk_{\mathrm{B}}\) near room temperature and \(\tfrac{7}{2}Nk_{\mathrm{B}}\) at high temperatures, and polyatomic molecules yield still larger values. The pattern suggests a rule: each quadratic degree of freedom — one kinetic or potential energy term proportional to a coordinate or velocity squared — contributes \(\tfrac{1}{2}k_{\mathrm{B}}T\) to the mean energy and \(\tfrac{1}{2}Nk_{\mathrm{B}}\) to the heat capacity.

This is the equipartition theorem of classical statistical mechanics. For a diatomic molecule that can translate in three directions and rotate about two axes, \(f = 5\) quadratic degrees of freedom give \(U = \tfrac{5}{2}Nk_{\mathrm{B}}T\) and \(C_V = \tfrac{5}{2}Nk_{\mathrm{B}}\). At high enough temperatures vibrational modes unlock, adding two more (one kinetic, one potential per mode), raising \(f\) to 7.

The equipartition theorem is a classical result and fails dramatically when quantum effects are important. The energy spacings of rotational and vibrational levels are discrete, and at temperatures where \(k_{\mathrm{B}}T\) is much smaller than those spacings the modes are “frozen out” and do not contribute. This explains why the heat capacity of hydrogen rises in steps as temperature increases: translational modes are always active, rotational modes switch on around 100 K, and vibrational modes require several thousand kelvin. Equipartition should therefore be regarded as a useful approximation valid in specific temperature ranges rather than an exact theorem. Its proper derivation and limits are the subject of a full statistical-mechanics course; here we use its results empirically, taking \(C_V = \tfrac{3}{2}Nk_{\mathrm{B}}\) for noble gases and \(C_V \approx \tfrac{5}{2}Nk_{\mathrm{B}}\) for air near room temperature.
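
The counting rule is simple enough to tabulate. This sketch converts a number of active quadratic degrees of freedom \(f\) into a molar heat capacity \(\tfrac{f}{2}R\); the function name and the labels are illustrative, and the classical formula applies only in temperature ranges where the listed modes are fully active.

```python
R = 8.314  # ideal gas constant, J/(mol K)

def molar_cv(f):
    """Classical equipartition estimate: each quadratic degree of
    freedom contributes R/2 to the molar heat capacity."""
    return 0.5 * f * R

cases = [
    ("monatomic (translation only)", 3),
    ("diatomic, rotation active", 5),
    ("diatomic, rotation and vibration active", 7),
]

for label, f in cases:
    print(f"{label}: f = {f}, C_V = {molar_cv(f):.2f} J/(mol K)")
```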

Chapter 3: The First Law, Work, and Heat Capacities

The first law of thermodynamics

Energy is conserved. For a thermodynamic system, energy can change in two ways: by heat flowing across its boundary, or by work being done on or by it. Writing \(Q\) for the heat flowing into the system and \(W\) for the work done on the system, the first law states

\[ \Delta U = Q + W. \]Several subtleties of notation deserve emphasis. Heat \(Q\) and work \(W\) are not properties of the system’s state — they describe processes, not states. There is no such thing as “the heat content” of a gas; there is only the heat that has flowed during a particular change. For this reason, writing the first law as \(\Delta U = \Delta Q + \Delta W\) is misleading, and the more careful notation with plain \(Q\) and \(W\) (understood as finite quantities associated with a process) is preferred. An infinitesimal version is often written \(dU = \delta Q + \delta W\), where the Greek \(\delta\) signals that \(\delta Q\) and \(\delta W\) are not exact differentials — they depend on the path taken, not just on the initial and final states.

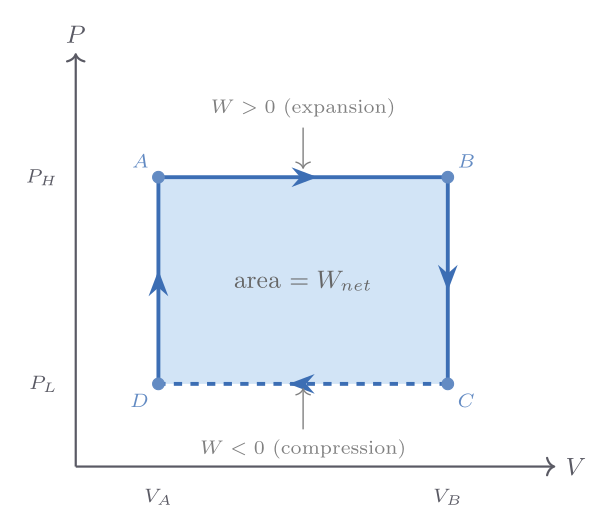

Expansion and compression work

When a gas expands quasi-statically against an external pressure, it does work on its surroundings. Quasi-static means the process passes through a continuous sequence of equilibrium states: the gas pressure remains well-defined throughout. Under this condition, the work done on the gas in a volume change \(dV\) is

\[ \delta W = -P\,dV, \]with the sign convention that compression (\(dV < 0\)) is positive work done on the gas. For a finite quasi-static process,

\[ W = -\int_{V_i}^{V_f} P\,dV. \]On a pressure-volume indicator diagram — a plot of \(P\) versus \(V\) — the work done by the gas in going from state \(i\) to state \(f\) is the area under the curve. A crucial lesson: this area, and hence \(W\), depends on the path taken in \(PV\)-space, not just on the endpoints.

The path-dependence of work becomes vivid when we compare three ways of taking an ideal gas from a state \((P_i, V_i)\) to a state \((P_i/2,\, 2V_i)\) — the same initial and final temperatures since \(T = PV/Nk_{\mathrm{B}}\) is unchanged. Along an isotherm the work done on the gas is \(W = -Nk_{\mathrm{B}}T\ln 2 \approx -0.69\,Nk_{\mathrm{B}}T\); along the path of constant volume followed by constant pressure it is \(-Nk_{\mathrm{B}}T/2\); and along constant pressure followed by constant volume it is \(-Nk_{\mathrm{B}}T\). The internal energy change \(\Delta U = 0\) in all three cases (since \(\Delta T = 0\) for an ideal gas), but \(Q = -W\) takes three different values. This concretely demonstrates that \(Q\) and \(W\) are properties of the process, not of the states.
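
The three values can be reproduced by integrating \(W = -\int P\,dV\) numerically along each path. The sketch below works in reduced units, an assumption of this example, in which \(P_i = V_i = 1\) so that \(Nk_{\mathrm{B}}T = 1\); it uses the trapezoid rule, which is exact on the straight segments and a close approximation on the finely sampled isotherm.

```python
import math

def work_on_gas(points):
    """W = -integral of P dV along a path given as (V, P) points,
    evaluated segment by segment with the trapezoid rule."""
    total = 0.0
    for (v0, p0), (v1, p1) in zip(points, points[1:]):
        total -= 0.5 * (p0 + p1) * (v1 - v0)
    return total

# Reduced units: P_i = V_i = 1, so N k_B T = 1 and the final state is (V=2, P=0.5).
n = 10_000
isotherm = [(1.0 + i / n, 1.0 / (1.0 + i / n)) for i in range(n + 1)]
isochore_then_isobar = [(1.0, 1.0), (1.0, 0.5), (2.0, 0.5)]
isobar_then_isochore = [(1.0, 1.0), (2.0, 1.0), (2.0, 0.5)]

w_isotherm = work_on_gas(isotherm)
w_isochore_first = work_on_gas(isochore_then_isobar)
w_isobar_first = work_on_gas(isobar_then_isochore)

print(f"isotherm:              W = {w_isotherm:.4f}  (-ln 2 = {-math.log(2):.4f})")
print(f"isochore, then isobar: W = {w_isochore_first:.4f}")
print(f"isobar, then isochore: W = {w_isobar_first:.4f}")
```

All three paths share the same endpoints, yet the work differs, and since \(\Delta U = 0\) the heat \(Q = -W\) differs correspondingly.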

Non-quasi-static processes — such as Joule’s free expansion into vacuum — cannot be represented as paths on an indicator diagram because the pressure is not well-defined during the process. Such processes are indicated by dotted lines connecting the well-defined initial and final states.

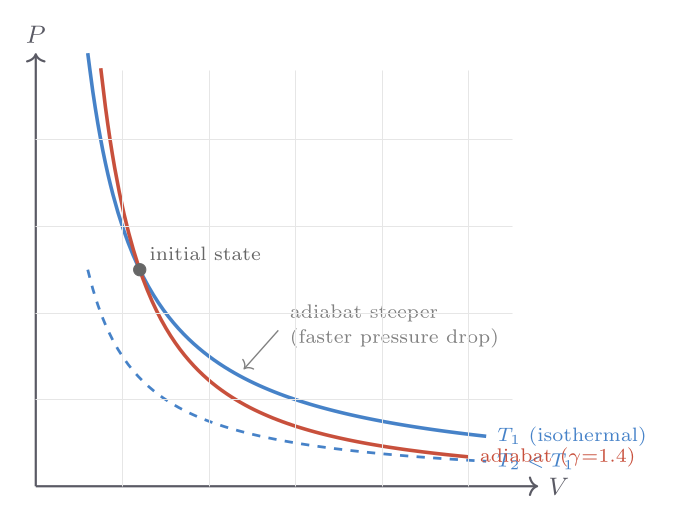

Isothermal and adiabatic processes

An isothermal process maintains constant temperature. For an ideal gas, \(PV = Nk_{\mathrm{B}}T = \text{const}\), so the indicator-diagram curve is a hyperbola \(P \propto V^{-1}\). Since \(\Delta U = 0\) for isothermal changes of an ideal gas, all heat flowing in exactly balances the work done by the gas: \(Q = -W = Nk_{\mathrm{B}}T\ln(V_f/V_i)\).

An adiabatic process allows no heat exchange: \(\delta Q = 0\). This can be achieved either by thermally insulating the system or by performing the process so rapidly that heat has no time to flow. When a gas is compressed adiabatically it heats up; when it expands it cools — phenomena familiar in the firing of a fire syringe (rapid compression of air ignites tinder) and in the cooling of rising air masses in meteorology.

For a quasi-static adiabatic process on an ideal gas with constant heat-capacity ratio \(\gamma = C_P/C_V\), the first law gives \(C_V\,dT = -P\,dV\), which together with the ideal gas law integrates to the adiabat relations:

\[ TV^{\gamma-1} = \text{const}, \qquad PV^{\gamma} = \text{const}. \]Since \(\gamma > 1\) always, adiabats are steeper than isotherms on a \(PV\) diagram: an expanding gas cools as it does work, so its pressure falls faster than it would at constant temperature. This steepness has a famous application: Newton computed the speed of sound assuming isothermal compression and obtained a value that was too low; Laplace’s correction using adiabatic compression (since sound waves oscillate too fast for heat to flow) gave the right result, \(v_s = \sqrt{\gamma P/\rho}\).
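
The integration step can be checked numerically. Combining \(C_V\,dT = -P\,dV\) with \(P = Nk_{\mathrm{B}}T/V\) and the ideal-gas identity \(Nk_{\mathrm{B}}/C_V = \gamma - 1\) gives \(dT/dV = -(\gamma-1)T/V\). The sketch below integrates this equation with a midpoint (second-order Runge-Kutta) scheme and compares the result with \(TV^{\gamma-1} = \text{const}\). The step count and the test case (a tenfold compression from 300 K with \(\gamma = 1.4\)) are choices made for this illustration.

```python
def adiabatic_temperature(t_initial, v_initial, v_final, gamma, steps=100_000):
    """Integrate dT/dV = -(gamma - 1) T / V from v_initial to v_final
    using the midpoint (RK2) method and return the final temperature."""
    dv = (v_final - v_initial) / steps
    t, v = t_initial, v_initial
    for _ in range(steps):
        slope_start = -(gamma - 1.0) * t / v
        t_mid = t + 0.5 * dv * slope_start
        v_mid = v + 0.5 * dv
        slope_mid = -(gamma - 1.0) * t_mid / v_mid
        t += dv * slope_mid
        v += dv
    return t

gamma = 1.4
t_numeric = adiabatic_temperature(300.0, 1.0, 0.1, gamma)
t_closed_form = 300.0 * (1.0 / 0.1) ** (gamma - 1.0)

print(f"numerical integration: {t_numeric:.2f} K")
print(f"T V^(gamma-1) = const: {t_closed_form:.2f} K")
```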

Internal energy and heat capacities

The heat capacity at constant volume is defined as the ratio of heat flow to temperature change under conditions of constant volume (so that no work is done):

\[ C_V = \left(\frac{\partial U}{\partial T}\right)_V. \]For a monatomic ideal gas, \(C_V = \tfrac{3}{2}Nk_{\mathrm{B}}\). For air (predominantly diatomic \(\mathrm{N_2}\) and \(\mathrm{O_2}\) near room temperature), \(C_V \approx \tfrac{5}{2}Nk_{\mathrm{B}}\).

The heat capacity at constant pressure \(C_P\) is more relevant for laboratory experiments on gases and for all work with condensed phases (solids and liquids), where constant volume is difficult to enforce. Under constant pressure, the first law gives

\[ Q = \Delta U + P\Delta V = \Delta H, \]where \(H \equiv U + PV\) is the enthalpy (discussed in Chapter 5). Hence

\[ C_P = \left(\frac{\partial H}{\partial T}\right)_P. \]For an ideal gas, Joule’s result that \(U\) depends only on \(T\) means \((\partial U/\partial T)_P = (\partial U/\partial T)_V = C_V\). The extra work term when the gas expands at constant pressure contributes \(P(\partial V/\partial T)_P = Nk_{\mathrm{B}}\), giving the Mayer relation:

\[ C_P - C_V = Nk_{\mathrm{B}} \quad \text{(ideal gas)}. \]This is not an approximation for an ideal gas; it is exact. For a real substance the general relation is \(C_P - C_V = TV\beta^2/\kappa_T\), where \(\beta = (1/V)(\partial V/\partial T)_P\) is the isobaric thermal expansivity and \(\kappa_T = -(1/V)(\partial V/\partial P)_T\) is the isothermal compressibility. For solids and liquids, \(\beta\) is small enough that \(\beta^2/\kappa_T\) remains small despite the small compressibility, so \(C_P \approx C_V\): the difference is typically a few percent of \(C_V\) near room temperature for crystalline solids. For gases, the Mayer correction is significant: for air, \(C_P/C_V = \gamma \approx 1.40\).

As a numerical example, for copper at 298 K: \(\beta = 5.0 \times 10^{-5}\ \text{K}^{-1}\), \(\kappa_T = 7.2 \times 10^{-12}\ \text{Pa}^{-1}\), molar volume \(V_m = 7.1 \times 10^{-6}\ \text{m}^3\text{mol}^{-1}\), and \(C_V = 22.6\ \text{J mol}^{-1}\text{K}^{-1}\). The correction is \(TV_m\beta^2/\kappa_T = 298 \times 7.1\times10^{-6} \times (5\times10^{-5})^2 / (7.2\times10^{-12}) \approx 0.73\ \text{J mol}^{-1}\text{K}^{-1}\), giving \(C_P \approx 23.3\ \text{J mol}^{-1}\text{K}^{-1}\). This is a 3.2% correction, and the result lies within about 5% of the tabulated value of \(24.4\ \text{J mol}^{-1}\text{K}^{-1}\).
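
The arithmetic can be verified directly. This sketch simply evaluates \(TV_m\beta^2/\kappa_T\) with the copper values quoted above and adds the result to \(C_V\); the variable names are illustrative.

```python
# Copper at 298 K, values as quoted in the text.
TEMPERATURE = 298.0   # K
MOLAR_VOLUME = 7.1e-6 # m^3/mol
BETA = 5.0e-5         # isobaric thermal expansivity, 1/K
KAPPA_T = 7.2e-12     # isothermal compressibility, 1/Pa
C_V = 22.6            # J/(mol K)

correction = TEMPERATURE * MOLAR_VOLUME * BETA**2 / KAPPA_T  # C_P - C_V
c_p = C_V + correction
relative = correction / C_V

print(f"C_P - C_V = {correction:.2f} J/(mol K)")
print(f"C_P       = {c_p:.1f} J/(mol K)")
print(f"correction relative to C_V: {100 * relative:.1f} %")
```

With these inputs the sum \(C_V + TV_m\beta^2/\kappa_T\) comes to about \(23.3\ \text{J mol}^{-1}\text{K}^{-1}\).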

The general relation \(C_P - C_V = TV\beta^2/\kappa_T\) has an important consequence: since \(\beta^2 \geq 0\) and \(\kappa_T \geq 0\) (matter is mechanically stable), we always have \(C_P \geq C_V\). This is a thermodynamic stability condition, not an accident.

Deriving \(C_P - C_V = Nk_{\mathrm{B}}\) for an ideal gas

The Mayer relation deserves a careful derivation, because the argument is frequently glossed over. Starting from the definition \(C_P - C_V = (\partial H/\partial T)_P - (\partial U/\partial T)_V\), substitute \(H = U + PV\):

\[ C_P - C_V = \left(\frac{\partial U}{\partial T}\right)_P + P\left(\frac{\partial V}{\partial T}\right)_P - \left(\frac{\partial U}{\partial T}\right)_V. \]The first and third terms are not identical because \(U\) is evaluated at constant \(P\) and constant \(V\) respectively. Using the chain rule for \(U(T, V)\) evaluated at constant \(P\):

\[ \left(\frac{\partial U}{\partial T}\right)_P = \left(\frac{\partial U}{\partial T}\right)_V + \left(\frac{\partial U}{\partial V}\right)_T \left(\frac{\partial V}{\partial T}\right)_P. \]Substituting and collecting terms:

\[ C_P - C_V = \left[\left(\frac{\partial U}{\partial V}\right)_T + P\right]\left(\frac{\partial V}{\partial T}\right)_P. \]For an ideal gas, Joule’s free-expansion experiment established \((\partial U/\partial V)_T = 0\). The remaining factor is \(P(\partial V/\partial T)_P\). With \(V = Nk_{\mathrm{B}}T/P\), differentiation gives \((\partial V/\partial T)_P = Nk_{\mathrm{B}}/P\), and therefore

\[ C_P - C_V = P \cdot \frac{Nk_{\mathrm{B}}}{P} = Nk_{\mathrm{B}}. \]For a non-ideal substance, the internal pressure \((\partial U/\partial V)_T\) is not zero; it quantifies how intermolecular attractions store potential energy when the substance is expanded. In Chapter 18 the identity \((\partial U/\partial V)_T = T(\partial P/\partial T)_V - P\), which follows from a Maxwell relation, will be used to express this internal pressure in terms of measurable \(P\)–\(V\)–\(T\) derivatives, yielding the general formula \(C_P - C_V = TV\beta^2/\kappa_T\).

The heat-capacity ratio \(\gamma\) and its physical meaning

The dimensionless ratio \(\gamma \equiv C_P/C_V\) appears in nearly every formula involving adiabatic processes. For monatomic ideal gases \(\gamma = 5/3 \approx 1.667\); for diatomic gases near room temperature \(\gamma = 7/5 = 1.4\); for polyatomic gases with many modes \(\gamma \to 1\) from above. The significance of \(\gamma\) is most transparent in the adiabatic relations. When an ideal gas is compressed adiabatically, the first law gives \(C_V\,dT = -P\,dV\). Using the ideal gas law to eliminate \(P\) and integrating yields \(TV^{\gamma-1} = \text{const}\). Rewriting in terms of pressure: since \(T = PV/(Nk_{\mathrm{B}})\), one obtains \(PV^\gamma = \text{const}\).

The ratio \(\gamma\) also controls the speed of sound in an ideal gas. Newton originally computed the sound speed as \(v_s = \sqrt{P/\rho}\), assuming isothermal compression. Laplace corrected this by noting that acoustic oscillations are so rapid that heat has no time to flow between compression and rarefaction zones — they are adiabatic. The corrected result is \(v_s = \sqrt{\gamma P/\rho} = \sqrt{\gamma k_{\mathrm{B}}T/m}\), where \(m\) is the molecular mass. For air at 293 K with \(\gamma = 1.40\) and \(m = 29 \times 1.66 \times 10^{-27}\ \text{kg}\), this gives \(v_s \approx 343\ \text{m s}^{-1}\), in excellent agreement with measurement.
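
The two estimates are easy to compare numerically. The sketch below evaluates \(v_s = \sqrt{\gamma k_{\mathrm{B}}T/m}\) for air with the numbers quoted above, and obtains Newton's isothermal value by setting \(\gamma = 1\), since \(\sqrt{P/\rho} = \sqrt{k_{\mathrm{B}}T/m}\) for an ideal gas. The function name is illustrative.

```python
import math

K_B = 1.381e-23              # Boltzmann's constant, J/K
ATOMIC_MASS_UNIT = 1.66e-27  # kg

def sound_speed(gamma, temperature_k, molecular_mass_u):
    """Ideal-gas sound speed sqrt(gamma k_B T / m)."""
    mass_kg = molecular_mass_u * ATOMIC_MASS_UNIT
    return math.sqrt(gamma * K_B * temperature_k / mass_kg)

v_laplace = sound_speed(1.40, 293.0, 29.0)  # adiabatic compression
v_newton = sound_speed(1.00, 293.0, 29.0)   # isothermal compression

print(f"Laplace (adiabatic):  {v_laplace:.0f} m/s")
print(f"Newton (isothermal):  {v_newton:.0f} m/s")
```

The isothermal estimate falls short of the adiabatic one by the factor \(\sqrt{\gamma}\).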

Worked example: isothermal and adiabatic processes

Isothermal expansion. One mole of an ideal diatomic gas (\(C_V = \tfrac{5}{2}R\), \(C_P = \tfrac{7}{2}R\), \(\gamma = 1.4\)) at \(T = 300\ \text{K}\) and \(P_i = 2\ \text{atm}\) expands isothermally to \(P_f = 1\ \text{atm}\). Since temperature is fixed, \(U\) does not change. The work done by the gas is \(W_\text{by} = nRT\ln(V_f/V_i) = nRT\ln(P_i/P_f) = (1)(8.314)(300)\ln 2 \approx 1730\ \text{J}\). All of this work is supplied by heat flowing in: \(Q = 1730\ \text{J}\).

Adiabatic compression. Now suppose the gas at \(T_i = 300\ \text{K}\), \(P_i = 1\ \text{atm}\) is compressed adiabatically by a factor \(V_i/V_f = 10\) (compression ratio \(r = 10\)). The final temperature follows from \(TV^{\gamma-1} = \text{const}\):

\[ T_f = T_i \left(\frac{V_i}{V_f}\right)^{\gamma-1} = 300 \times 10^{0.4} \approx 300 \times 2.512 \approx 754\ \text{K}. \]The temperature rises by 454 K purely from compression — no heat is added. The final pressure is \(P_f = P_i(V_i/V_f)^\gamma = 1 \times 10^{1.4} \approx 25.1\ \text{atm}\). The work done on the gas equals the increase in internal energy: \(W = \Delta U = nC_V(T_f - T_i) = (1)(\tfrac{5}{2} \times 8.314)(454) \approx 9430\ \text{J}\). This large quantity of work stored as internal energy is the principle behind diesel ignition: air compressed to a ratio of 15:1 reaches temperatures around 800–900 K, sufficient to ignite injected fuel without a spark.
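
Both parts of the worked example can be reproduced in a few lines. The sketch below uses the same inputs as above (one mole, \(\gamma = 1.4\), \(C_V = \tfrac{5}{2}R\), 300 K); the final line repeats the temperature calculation for a 15:1 compression ratio, assuming the same starting temperature of 300 K.

```python
import math

R = 8.314          # ideal gas constant, J/(mol K)
n = 1.0            # amount of gas, mol
gamma = 1.4        # heat-capacity ratio for a diatomic gas
c_v_molar = 2.5 * R
t_initial = 300.0  # K

# Isothermal expansion from 2 atm to 1 atm: work done BY the gas.
w_by_isothermal = n * R * t_initial * math.log(2.0 / 1.0)

# Adiabatic compression by a factor V_i / V_f = 10, starting at 1 atm.
ratio = 10.0
t_final = t_initial * ratio ** (gamma - 1.0)
p_final_atm = 1.0 * ratio ** gamma
w_on_adiabatic = n * c_v_molar * (t_final - t_initial)  # equals Delta U

# Same formula for a 15:1 compression ratio (assumed 300 K start).
t_diesel = t_initial * 15.0 ** (gamma - 1.0)

print(f"isothermal work by gas:  {w_by_isothermal:.0f} J")
print(f"adiabatic T_f:           {t_final:.0f} K")
print(f"adiabatic P_f:           {p_final_atm:.1f} atm")
print(f"adiabatic work on gas:   {w_on_adiabatic:.0f} J")
print(f"T_f at 15:1 compression: {t_diesel:.0f} K")
```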

Physical difference between \(C_P\) and \(C_V\) experiments

Measuring \(C_V\) directly requires a rigid container that holds the sample at fixed volume while it is heated. This is mechanically demanding for gases under pressure, and essentially impossible for a gas that must remain at low pressure while spanning a large temperature range. In practice, gas heat capacities are almost always measured at constant pressure (typically atmospheric), and \(C_V\) is obtained by subtraction using the Mayer relation. For solids and liquids, maintaining strict constant volume is so difficult that \(C_P\) is measured and the difference \(TV\beta^2/\kappa_T\) (typically 1–5 % of \(C_V\) for crystalline solids near room temperature) is subtracted to get \(C_V\). The general formula thus has direct experimental utility beyond its aesthetic elegance.

Chapter 4: Phase Transitions and Latent Heat

What is a phase?

Ordinary matter appears in distinct phases: solid, liquid, and gas (and less familiar phases such as plasma and superfluid). Within a given phase, macroscopic properties such as density and compressibility vary smoothly with temperature and pressure. At a phase transition, properties can change discontinuously — ice melts at a sharp temperature, and the density jumps from roughly \(917\ \text{kg/m}^3\) to \(1000\ \text{kg/m}^3\).

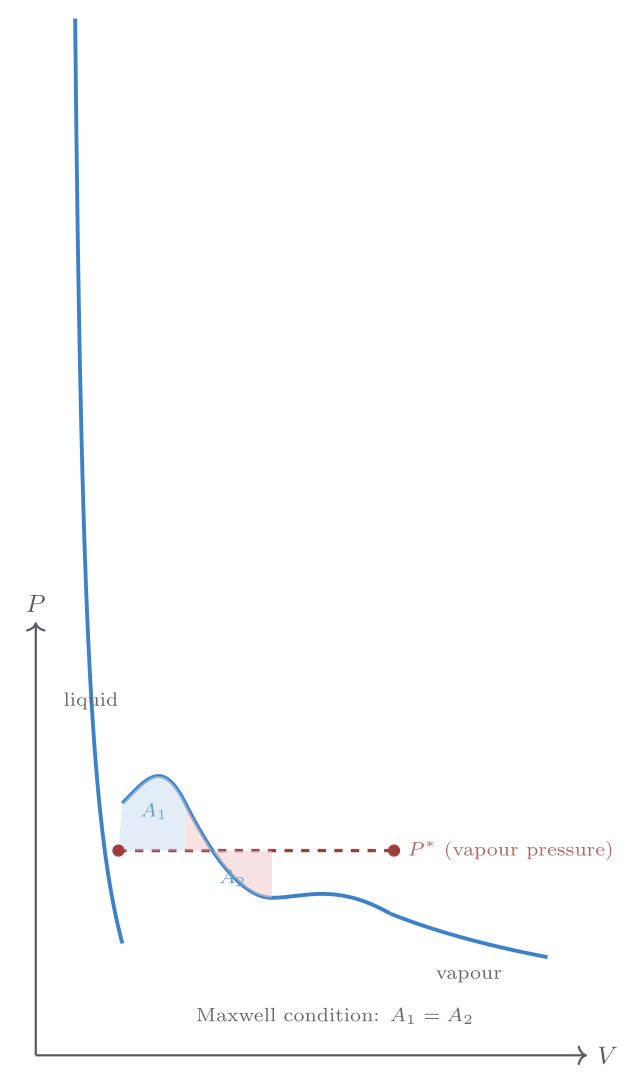

The clearest way to approach phase transitions is to start with the ideal gas law and ask where it breaks down. Consider the isotherms of real nitrogen (\(\mathrm{N_2}\)) on a \(P\)–\(V\) diagram. At high temperatures and large volumes, the ideal gas law \(P = Nk_{\mathrm{B}}T/V\) is an excellent description. As the temperature drops or the pressure rises, the actual pressure at a given volume falls below the ideal prediction — intermolecular attractions pull the molecules together slightly. At low enough temperatures, something dramatic occurs: as the gas is compressed at constant temperature, the pressure stops changing over a finite range of volumes. During this pressure plateau, liquid is forming from gas; the two phases coexist in equilibrium, and the volume decreases as more gas condenses into denser liquid. Only when all gas has condensed does the pressure rise steeply again.

This constant-pressure plateau — a first-order phase transition — disappears above the critical temperature \(T_c\). Above \(T_c\), no amount of pressure can force the fluid to condense discontinuously; there is a single fluid phase that connects smoothly between what we might call gas and liquid. Nitrogen has \(T_c \approx 126\ \text{K}\), \(P_c \approx 3.4\ \text{MPa}\). This means liquid nitrogen cannot exist above 126 K at any pressure — an important fact for cryogenic applications.

A gas can be continuously transformed into a liquid by skirting around the critical point in \(P\)–\(T\) space, never crossing the phase boundary. This reveals that liquids and gases are not fundamentally different phases — they are continuously connected. Solids, however, are fundamentally different: in the solid phase, atoms or molecules are fixed in their relative positions, and no continuous path in \(P\)–\(T\) space connects solid to fluid without crossing a phase boundary.

Latent heat

At a first-order phase transition such as melting or vaporisation, heat flows into (or out of) the system at constant temperature and pressure. This heat, the latent heat \(L\), is associated with the rearrangement of molecular bonds rather than a change in kinetic energy. The temperature remains constant during the transition because the added thermal energy is used to break bonds (increasing potential energy) rather than to increase the kinetic energy of molecules. This behaviour is fundamentally different from what the equipartition theorem would predict for a smooth, single-phase substance — it is a signature of the discrete, discontinuous nature of first-order phase transitions. For fusion (solid \(\to\) liquid) and vaporisation (liquid \(\to\) gas):

| Substance | \(L_\text{fus}\) (kJ/mol) | \(L_\text{vap}\) (kJ/mol) |
|---|---|---|
| Water | 6.01 | 40.7 |
| Nitrogen | 0.72 | 5.57 |
| Iron | 13.8 | 340 |
| Helium-4 | 0.021 | 0.083 |

Vaporisation latent heats are typically much larger than fusion latent heats, because converting a liquid to a gas requires breaking most intermolecular bonds, while melting only partially disorders the structure.

At constant pressure, the heat required to melt a mass \(m\) of a substance is \(Q = mL_\text{fus}\), where \(L_\text{fus}\) is the specific latent heat (per kilogram). Since this occurs at constant pressure, it equals the enthalpy change: \(\Delta H_\text{fus} = mL_\text{fus}\). The entropy change at a first-order transition is

\[ \Delta S = \frac{Q}{T_\text{transition}} = \frac{\Delta H}{T_\text{transition}}. \]For the melting of one mole of ice at 273 K, \(\Delta S = 6010/273 \approx 22\ \text{J mol}^{-1}\text{K}^{-1}\). Entropy increases on melting, as expected — the liquid has far more accessible microstates than the ordered crystal.
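
The same formula applies to any first-order transition once the latent heat and transition temperature are known. The sketch below evaluates \(\Delta S = L/T\) for the melting and boiling of water, and also tabulates the ratio \(L_\text{vap}/L_\text{fus}\) from the molar latent heats listed in the table above. The boiling temperature of 373 K and the dictionary layout are assumptions of this example.

```python
# Molar latent heats in kJ/mol, copied from the table: (fusion, vaporisation).
LATENT_HEATS = {
    "Water": (6.01, 40.7),
    "Nitrogen": (0.72, 5.57),
    "Iron": (13.8, 340.0),
    "Helium-4": (0.021, 0.083),
}

def transition_entropy(latent_kj_per_mol, temperature_k):
    """Molar entropy change L / T at a first-order transition, in J/(mol K)."""
    return 1000.0 * latent_kj_per_mol / temperature_k

ds_melt_water = transition_entropy(LATENT_HEATS["Water"][0], 273.0)
ds_boil_water = transition_entropy(LATENT_HEATS["Water"][1], 373.0)

# Ratio of vaporisation to fusion latent heat for each substance.
ratios = {name: l_vap / l_fus for name, (l_fus, l_vap) in LATENT_HEATS.items()}

print(f"melting of ice:   dS = {ds_melt_water:.1f} J/(mol K)")
print(f"boiling of water: dS = {ds_boil_water:.1f} J/(mol K)")
for name, ratio in ratios.items():
    print(f"{name}: L_vap / L_fus = {ratio:.1f}")
```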

A remarkable demonstration of thermal physics involves the fire syringe: a tightly fitting piston rapidly compresses a small volume of air. The adiabatic temperature rise (for a compression ratio of 10:1 with \(\gamma = 1.4\), from 300 K to about 754 K) is sufficient to ignite a piece of tinder placed inside. The device was known in Southeast Asia long before the invention of matches.

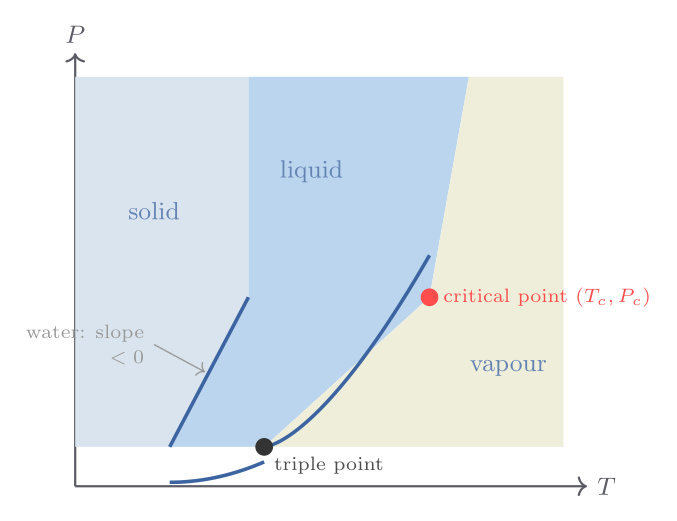

The phase diagram and the triple point

The two-dimensional \(P\)–\(T\) plane provides the most economical representation of the stable phases of a single-component substance. Each region of this phase diagram corresponds to a single stable phase; the boundaries between regions are the curves along which two phases coexist in equilibrium. Three such curves meet at a single invariant point — the triple point — where all three phases coexist simultaneously. For water, the triple point occurs at \(T_\text{tp} = 273.16\ \text{K}\) and \(P_\text{tp} = 611.7\ \text{Pa}\). It is used as the primary thermometric fixed point in the International Temperature Scale because, unlike the melting and boiling points at 1 atm, the triple point is independent of external pressure.

At the critical point \((T_c, P_c)\) the liquid–gas phase boundary terminates; beyond it there is no longer a discontinuous distinction between liquid and gas. For water, \(T_c = 647.1\ \text{K}\) and \(P_c = 218\ \text{atm}\); for carbon dioxide, \(T_c = 304.1\ \text{K}\) and \(P_c = 72.8\ \text{atm}\). The critical temperature of \(\mathrm{CO_2}\) is thus only just above room temperature, which makes it easy to observe critical opalescence and supercritical fluid behaviour in a laboratory.

The Clausius-Clapeyron equation — a preview

The slope of any phase boundary in the \(P\)–\(T\) plane is not arbitrary; it is governed by the thermodynamic condition \(\mu_\alpha(T,P) = \mu_\beta(T,P)\), which must hold along the entire boundary. Differentiating this condition with respect to \(T\) along the boundary, and using \(d\mu = -S_m\,dT + V_m\,dP\) (where \(S_m\) and \(V_m\) are molar entropy and volume), one obtains the Clausius-Clapeyron equation:

\[ \frac{dP}{dT}\bigg|_\text{coexistence} = \frac{\Delta S_m}{\Delta V_m} = \frac{L}{T\Delta V_m}, \]where \(L = T\Delta S_m\) is the molar latent heat and \(\Delta V_m = V_m^\beta - V_m^\alpha\) is the molar volume difference between the two phases. This equation is derived in full detail in Chapter 19; here it explains the qualitative features of phase diagrams.

Why water’s melting curve has a negative slope

For almost every pure substance, the solid phase is denser than the liquid at the melting point, so \(\Delta V_m = V_m^\text{liq} - V_m^\text{solid} > 0\). Since \(L > 0\) always (melting absorbs heat), the Clausius-Clapeyron slope is positive: raising the pressure raises the melting temperature. Water is the famous exception: ice at 0 °C has density \(917\ \text{kg m}^{-3}\) while liquid water has density \(1000\ \text{kg m}^{-3}\), so \(\Delta V_m < 0\) and \(dP/dT|_\text{melt} \approx -13.5\ \text{MPa K}^{-1}\). Increasing the pressure by 1 atm therefore lowers the melting point by only about 0.0075 K, far too small to explain ice skating, which would require a melting-point depression of several degrees. The anomaly arises because ice forms an open hydrogen-bonded network (the wurtzite-type crystal structure of hexagonal ice) that collapses to a denser arrangement upon melting, unlike close-packed crystals.
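The slope of the melting curve follows directly from the Clausius-Clapeyron equation and the two densities. The sketch below is illustrative only: the molar latent heat of fusion (6.01 kJ/mol) and the molar mass of water (18.015 g/mol) are assumed textbook values that are not quoted in the text above.

```python
ATM_PA = 101325.0               # one standard atmosphere in pascals
MOLAR_MASS_WATER = 18.015e-3    # kg/mol (assumed value)
LATENT_HEAT_FUSION = 6010.0     # J/mol (assumed value)


def melting_curve_slope(latent_heat, temperature_k, rho_solid, rho_liquid, molar_mass):
    """Clausius-Clapeyron slope dP/dT = L / (T * dV_m) for melting, in Pa/K."""
    # Molar volume change on melting: V_liquid - V_solid.
    delta_v_molar = molar_mass * (1.0 / rho_liquid - 1.0 / rho_solid)
    return latent_heat / (temperature_k * delta_v_molar)


slope = melting_curve_slope(LATENT_HEAT_FUSION, 273.15, 917.0, 1000.0, MOLAR_MASS_WATER)
shift_per_atm = ATM_PA / slope  # change in melting temperature per added atmosphere

print(f"dP/dT on the melting curve: {slope / 1e6:.1f} MPa/K")
print(f"Melting-point shift per atm: {shift_per_atm * 1e3:.1f} mK")
```

The negative sign of both results reflects \(\Delta V_m < 0\) for water; swapping the two densities gives the positive slope typical of ordinary substances.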

The phase diagram also shows that, below the triple-point pressure, liquid water is not a stable phase at any temperature: ice warmed at such a pressure sublimes directly to vapour. This is why freeze-drying works: food is frozen below the triple-point temperature and placed in a chamber held below the triple-point pressure, so that the ice sublimes without passing through the liquid phase, preserving texture and nutritional value.

More on latent heat physics

Latent heat reflects a fundamental competition between entropy and internal energy at the molecular level. At the melting point, the solid and liquid have equal Gibbs free energies: \(G_\text{solid} = G_\text{liq}\), meaning \(U_\text{solid} - TS_\text{solid} + PV_\text{solid} = U_\text{liq} - TS_\text{liq} + PV_\text{liq}\). Rearranging: \(L = T\Delta S = \Delta U + P\Delta V\). Most of the latent heat of fusion goes into breaking the rigid crystal lattice’s directional bonds (the \(\Delta U\) term), with a smaller contribution from the volume change \(P\Delta V\). For vaporisation, the dominant term is the intermolecular potential energy: bringing a molecule from the bulk liquid to the vapour requires breaking essentially all its close-range interactions, each of order \(\epsilon \sim k_{\mathrm{B}}T_\text{boil}\), with roughly \(z/2 \sim 5\) nearest neighbours. This gives a rough estimate \(L_\text{vap} \sim 5 N_A k_{\mathrm{B}} T_\text{boil}\), or about \(5RT_\text{boil}\) per mole. For water (\(T_\text{boil} = 373\ \text{K}\)), this gives \(L_\text{vap} \sim 5 \times 8.314 \times 373 \approx 15.5\ \text{kJ mol}^{-1}\), well below the actual value of \(40.7\ \text{kJ mol}^{-1}\) (by a factor of about 2.6), in part because water’s hydrogen bonds are unusually strong. The ratio \(L_\text{vap}/L_\text{fus}\) is typically 6–10 for most substances, reflecting the larger disruption of intermolecular order in vaporisation than in melting.

Chapter 5: Enthalpy and Chemical-Reaction Energetics

Definition and motivation

The enthalpy is the combination

\[ H \equiv U + PV, \]which might at first look like an unmotivated algebraic construction. Its utility becomes clear in constant-pressure processes. When a system at constant pressure \(P_0\) changes state, the heat flowing in is

\[ Q_P = \Delta U + P_0 \Delta V = \Delta U + \Delta(P_0 V) = \Delta H. \]So enthalpy change equals heat flow at constant pressure (provided no non-\(PV\) work is done). Since most laboratory chemistry and engineering occurs at atmospheric pressure, enthalpy is the natural thermodynamic potential for thermal processes in open vessels.

Enthalpy is a state function — it depends only on the current state of the system, not on how the system got there. This makes enthalpy changes additive and tabulated: to find the heat released in a complex reaction, one can combine tabulated enthalpy changes of simpler reactions. This is Hess’s law, a direct consequence of enthalpy being a state function.

Throttling and the Joule-Thomson process

A particularly elegant application of enthalpy arises in throttling (the Joule-Thomson process): a gas is forced through a porous plug or narrow valve from high pressure \(P_i\) to low pressure \(P_f\), with insulating walls so \(Q = 0\). The upstream gas does work \(P_i V_i\) on the sample as it is pushed in, and the sample does work \(P_f V_f\) on the downstream gas as it emerges, so the net work done on the gas is

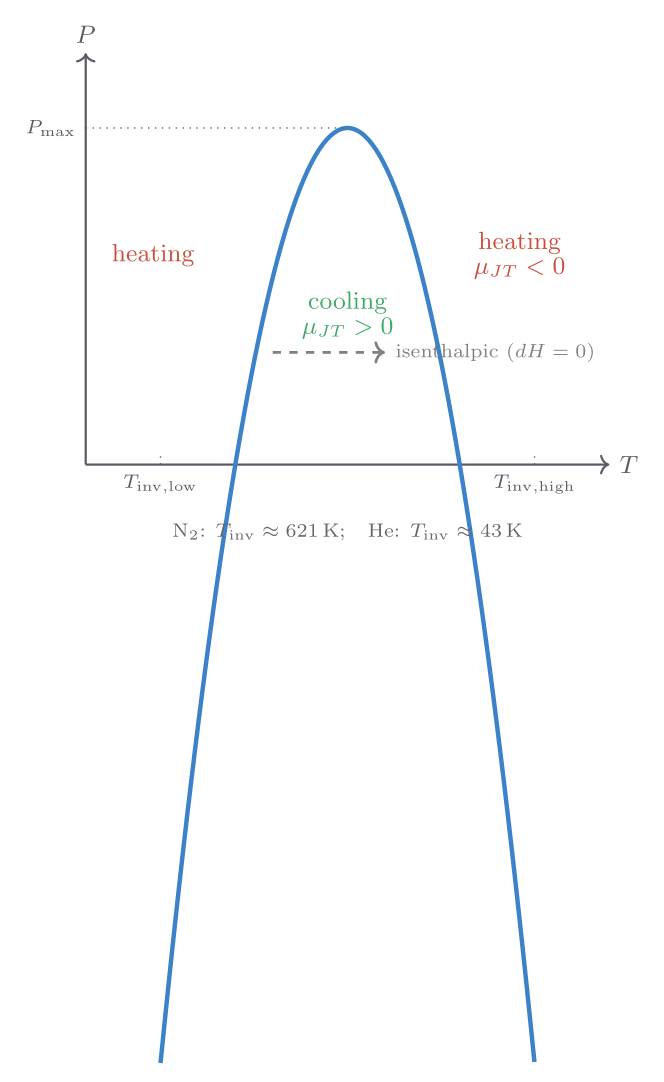

\[ W = P_i V_i - P_f V_f, \qquad \therefore\; \Delta U = P_i V_i - P_f V_f, \]which rearranges to \(U_f + P_f V_f = U_i + P_i V_i\), i.e., enthalpy is conserved in throttling: \(\Delta H = 0\). For an ideal gas, \(H = U + PV = U + Nk_{\mathrm{B}}T\) depends only on temperature, so \(\Delta H = 0\) implies \(\Delta T = 0\): throttling an ideal gas produces no temperature change. Real gases do cool or warm slightly because their internal energy has a weak volume-dependence. The Joule-Thomson coefficient

\[ \mu_{\mathrm{JT}} = \left(\frac{\partial T}{\partial P}\right)_H \]is positive (cooling on expansion) below an inversion temperature and negative above it. For most common gases at room temperature \(\mu_{\mathrm{JT}} > 0\), which is why throttling is used in refrigerators and liquefiers — the Linde process for liquefying air relies on it.

Enthalpies of formation and chemical reactions

The enthalpy of formation \(\Delta_f H^\circ\) of a substance is defined as the enthalpy change when one mole of the substance is produced from its elemental constituents in their standard states (298 K, 1 bar). By convention, elemental substances in their standard forms (H\(_2\)(g), O\(_2\)(g), Fe(s), etc.) have \(\Delta_f H^\circ = 0\).

For the formation of liquid water: \(\text{H}_2(\text{g}) + \tfrac{1}{2}\text{O}_2(\text{g}) \to \text{H}_2\text{O}(\ell)\), \(\Delta_f H^\circ = -286\ \text{kJ mol}^{-1}\). The negative sign means heat is released — this is an exothermic reaction. For any reaction, Hess’s law gives the total enthalpy change as the sum of formation enthalpies of products minus those of reactants:

\[ \Delta_r H^\circ = \sum_\text{products} \Delta_f H^\circ - \sum_\text{reactants} \Delta_f H^\circ. \]This \(\Delta_r H^\circ\) equals the heat released to the surroundings (at constant pressure, with no electrical or other non-\(PV\) work). When a battery is discharging, electrical work is also done, and the heat released is less than \(\Delta_r H^\circ\) — a point to keep in mind when comparing fuel combustion to electrochemical cells.

Why enthalpy is the correct variable for constant-pressure chemistry

Almost all laboratory chemistry takes place in open vessels exposed to atmospheric pressure. The experimenter typically cares only about the heat released or absorbed — not about the mechanical work done on the atmosphere when gas is produced or consumed. Enthalpy captures precisely this quantity: the heat flow at constant pressure, regardless of the volume change.

Consider the combustion of methane: \(\mathrm{CH_4(g) + 2\,O_2(g) \to CO_2(g) + 2\,H_2O(l)}\). The moles of gas decrease from 3 to 1. At 298 K and 1 bar, the volume decrease \(\Delta V = (1-3) \times RT/P = -2RT/P\) corresponds to a work done on the gas of \(P|\Delta V| = 2RT \approx 4.96\ \text{kJ mol}^{-1}\). The internal energy change is \(\Delta_r U^\circ = -890.3 + 4.96 \approx -885.3\ \text{kJ mol}^{-1}\), while the tabulated enthalpy change is \(\Delta_r H^\circ = -890.3\ \text{kJ mol}^{-1}\). For a calorimetric measurement in an open vessel, it is \(\Delta_r H^\circ\) that appears directly as the heat released; isolating \(\Delta_r U^\circ\) requires a rigid-walled bomb calorimeter. Both quantities are physically meaningful, but enthalpy is the one directly accessible in open-vessel experiments.
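The conversion between \(\Delta_r H^\circ\) and \(\Delta_r U^\circ\) is mechanical enough to put in a short helper. This sketch assumes ideal-gas behaviour and neglects the volume of condensed phases, so that \(\Delta(PV) = \Delta n_\text{gas} RT\); the function name is illustrative.

```python
R_GAS = 8.314  # molar gas constant, J/(mol K)


def internal_energy_change_kj(delta_h_kj, delta_n_gas, temperature_k=298.0):
    """Convert a reaction enthalpy to an internal-energy change (kJ/mol).

    Uses Delta U = Delta H - Delta(PV) with Delta(PV) = delta_n_gas * R * T,
    i.e. ideal gases and negligible condensed-phase volumes.
    """
    return delta_h_kj - delta_n_gas * R_GAS * temperature_k / 1000.0


# Methane combustion to liquid water: gas moles go from 3 to 1.
delta_u_methane = internal_energy_change_kj(-890.3, delta_n_gas=1 - 3)
print(f"Delta_r U = {delta_u_methane:.1f} kJ/mol")
```

For reactions with no net change in the number of gas moles the two quantities coincide within this approximation.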

Hess’s law as a consequence of enthalpy being a state function

The power of Hess’s law goes beyond simple additive bookkeeping. Because \(H\) is a state function, the enthalpy change for any chemical transformation is independent of the path taken — regardless of what intermediates the reaction passes through, what mechanisms are operative, or even whether the direct reaction is experimentally feasible. This means that reactions which are too fast, too slow, or too dangerous to study calorimetrically can have their enthalpies determined indirectly by combining the enthalpies of related measurable reactions.

A classic application: the enthalpy of formation of carbon monoxide, \(\text{C(s)} + \tfrac{1}{2}\text{O}_2(\text{g}) \to \text{CO(g)}\), is difficult to measure directly because carbon also burns to \(\text{CO}_2\). Using known values \(\Delta_f H^\circ[\text{CO}_2] = -393.5\ \text{kJ mol}^{-1}\) and \(\Delta_\text{comb} H^\circ[\text{CO}] = -283.0\ \text{kJ mol}^{-1}\) (combustion of CO to \(\text{CO}_2\)):

\[ \Delta_f H^\circ[\text{CO}] = \Delta_f H^\circ[\text{CO}_2] - \Delta_\text{comb} H^\circ[\text{CO}] = -393.5 - (-283.0) = -110.5\ \text{kJ mol}^{-1}. \]This value, inaccessible by direct experiment, is central to industrial chemistry — the water-gas shift reaction and Fischer-Tropsch synthesis both involve CO, and knowing \(\Delta_f H^\circ[\text{CO}]\) is essential for energy accounting.

Worked enthalpy calculation: combustion of octane

Octane (\(\mathrm{C_8H_{18}}\), the primary component of gasoline) combusts as \(\mathrm{C_8H_{18}(l) + \tfrac{25}{2}\,O_2(g) \to 8\,CO_2(g) + 9\,H_2O(l)}\). Using standard enthalpies of formation:

\[ \Delta_r H^\circ = [8 \times (-393.5) + 9 \times (-285.8)] - [(-250.1) + 0] = [-3148 - 2572.2] - [-250.1] \]\[ = -5720.2 + 250.1 = -5470.1\ \text{kJ mol}^{-1}. \]The molar mass of octane is \(114.2\ \text{g mol}^{-1}\), giving a specific energy of \(5470/114.2 \approx 47.9\ \text{kJ g}^{-1}\). This compares well with the tabulated lower heating value of gasoline (\(\approx 44\ \text{MJ kg}^{-1}\)), with the discrepancy reflecting the mixture composition and the fact that the “lower” heating value assumes water leaves as vapour rather than liquid. The enormous enthalpy change per mole — more than 5 MJ — reflects the very large number of C–H and C–C bonds being replaced by the stronger C=O bonds in \(\text{CO}_2\) and O–H bonds in water.
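The Hess's-law bookkeeping used in the octane example generalises to any reaction once the formation enthalpies are in a lookup table. The following is a minimal sketch using only the values quoted above; the dictionary layout and function name are illustrative choices.

```python
# Standard enthalpies of formation in kJ/mol, as quoted in the worked example.
FORMATION_ENTHALPY = {
    "CO2(g)": -393.5,
    "H2O(l)": -285.8,
    "C8H18(l)": -250.1,
    "O2(g)": 0.0,  # element in its standard state
}


def reaction_enthalpy(reactants, products, table=FORMATION_ENTHALPY):
    """Sum over products minus sum over reactants, weighted by stoichiometry."""
    def side_total(side):
        return sum(coeff * table[species] for species, coeff in side.items())
    return side_total(products) - side_total(reactants)


octane_combustion = reaction_enthalpy(
    reactants={"C8H18(l)": 1, "O2(g)": 12.5},
    products={"CO2(g)": 8, "H2O(l)": 9},
)
specific_energy = -octane_combustion / 114.2  # kJ per gram of octane

print(f"Delta_r H = {octane_combustion:.1f} kJ/mol")
print(f"Specific energy = {specific_energy:.1f} kJ/g")
```

Adding further species to the table is all that is needed to treat other reactions the same way.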

Chapter 6: Thermal Conduction and Fourier’s Law

Mechanisms of heat transport

Heat moves between regions of differing temperature by three mechanisms: conduction, in which energy is transferred by molecular collisions through a stationary medium; convection, in which a fluid carries heat by bulk motion; and radiation, in which electromagnetic waves transport energy without need of a material medium. In this chapter we focus on conduction; radiation is the subject of Part VI.

Fourier’s law

Fourier’s law of heat conduction (1822) states that the rate of heat flow per unit area through a medium is proportional to the local temperature gradient and directed down the gradient:

\[ \mathbf{j}_Q = -\kappa_\text{th}\,\nabla T, \]where \(\mathbf{j}_Q\) is the heat-flux vector (watts per square metre) and \(\kappa_\text{th}\) is the thermal conductivity of the material (W m\(^{-1}\) K\(^{-1}\)). In one dimension,

\[ \dot{Q} = -\kappa_\text{th} A \frac{dT}{dx}, \]where \(\dot{Q}\) is the heat current (watts) through cross-section \(A\). The minus sign ensures that heat flows from hot to cold: a positive temperature gradient in the \(x\)-direction drives a negative (leftward) heat flux.

The thermal conductivities of common materials span orders of magnitude:

| Material | \(\kappa_\text{th}\ (\text{W m}^{-1}\text{K}^{-1})\) |
|---|---|
| Diamond | 2000 |
| Copper | 400 |
| Aluminium | 237 |
| Glass | 0.8 |
| Water | 0.6 |
| Wood | 0.1 |
| Air | 0.026 |
| Aerogel | 0.015 |

The enormous range, from diamond (the best thermal conductor known, used in heat sinks for high-power electronics) to aerogel (comparable to, and in this table slightly below, the conductivity of the air it contains), reflects the wide variety of microscopic heat-transport mechanisms. In metals, conduction electrons carry most of the heat; in insulators, lattice vibrations (phonons) carry it; in porous materials, the entrapped gas dominates.

The contrast between water and air explains a practical hazard: falling into cold water at 5 °C is far more dangerous than standing in air at the same temperature, because water conducts heat away from the body roughly 25 times faster. In metals the conduction electrons, which have much longer mean free paths than phonons in most insulators, carry both heat and charge, so thermal and electrical transport are linked. The Wiedemann-Franz law states that the ratio of thermal conductivity to electrical conductivity is proportional to temperature: \(\kappa_\text{th}/\sigma_\text{el} = LT\), where \(L = \pi^2 k_{\mathrm{B}}^2/(3e^2) \approx 2.44 \times 10^{-8}\ \text{W}\Omega\text{K}^{-2}\) is the Lorenz number. Good electrical conductors (copper, silver) are therefore also excellent thermal conductors, which is why copper water pipes are hot to the touch near a heating element.
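The Wiedemann-Franz law can be tested against the table with a short calculation. In this sketch the electrical conductivity of copper (\(5.96 \times 10^7\ \text{S m}^{-1}\)) is an assumed handbook value, not a number from the text, and the function names are illustrative.

```python
import math

K_B = 1.380649e-23          # Boltzmann constant, J/K
E_CHARGE = 1.602176634e-19  # elementary charge, C
SIGMA_COPPER = 5.96e7       # electrical conductivity of copper, S/m (assumed value)


def lorenz_number():
    """Lorenz number L = pi^2 k_B^2 / (3 e^2), in W Ohm / K^2."""
    return math.pi ** 2 * K_B ** 2 / (3.0 * E_CHARGE ** 2)


def wiedemann_franz_conductivity(sigma_el, temperature_k):
    """Thermal conductivity predicted from electrical conductivity: kappa = L T sigma."""
    return lorenz_number() * temperature_k * sigma_el


lorenz = lorenz_number()
kappa_cu = wiedemann_franz_conductivity(SIGMA_COPPER, 300.0)

print(f"Lorenz number: {lorenz:.3e} W Ohm/K^2")
print(f"Predicted kappa for copper at 300 K: {kappa_cu:.0f} W/(m K)")
```

The prediction lands within roughly ten percent of the tabulated 400 W m\(^{-1}\) K\(^{-1}\), which is about as well as the free-electron picture can be expected to do.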

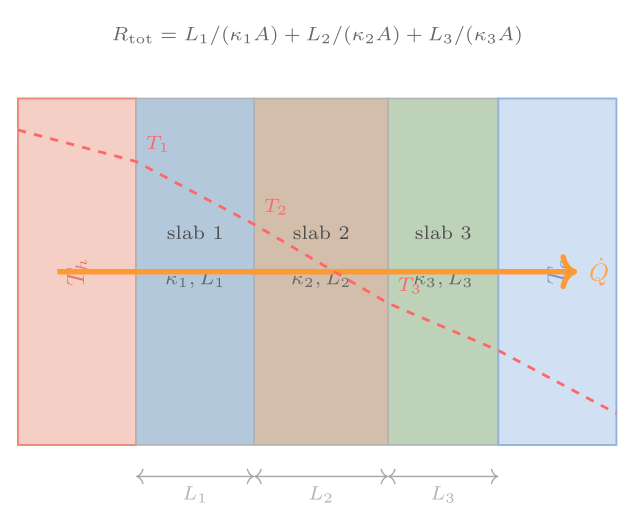

Heat conduction through composite slabs

In steady state, the heat flux \(\dot{Q}/A\) must be the same throughout a series of layers — otherwise energy would be accumulating somewhere. For a composite slab of \(n\) layers with thermal conductivities \(\kappa_i\) and thicknesses \(L_i\), equating the heat flux in each layer and summing gives

\[ \frac{\dot{Q}}{A} = \frac{T_\text{hot} - T_\text{cold}}{\displaystyle\sum_{i=1}^{n} \frac{L_i}{\kappa_i}} = \frac{\Delta T}{\sum_i R_i}, \]where the thermal resistance (R-value) of each layer is \(R_i = L_i/\kappa_i\). Resistances in series add — exactly analogously to electrical resistors in series. This analogy with electrical circuits is not coincidental: both Fourier’s law and Ohm’s law are linear transport laws of the same mathematical form.

A double-glazed window illustrates the power of this formula. A single 3.2 mm pane of glass (\(\kappa = 0.8\ \text{W m}^{-1}\text{K}^{-1}\)) has \(R = 4 \times 10^{-3}\ \text{m}^2\text{K W}^{-1}\); by conduction alone it passes roughly 5 kW through each square metre at a 20 K indoor-outdoor temperature difference. Adding two thin still-air layers (1 mm each, \(\kappa = 0.026\)), each contributing \(0.001/0.026 \approx 3.8 \times 10^{-2}\ \text{m}^2\text{K W}^{-1}\), raises the total R-value to about \(8 \times 10^{-2}\ \text{m}^2\text{K W}^{-1}\) and cuts the heat loss roughly twentyfold, to about 250 W per square metre. A proper double-glazed unit with a vacuum gap does far better still. R-values quoted on commercial insulation are just the ratios \(L/\kappa\) for the insulating product, directly comparable by simple addition.

Kinetic-theory underpinning of thermal conductivity

Fourier’s law is a macroscopic statement; its microscopic foundation is kinetic theory. In a dilute gas, molecules travel freely between collisions. Those coming from a hotter region carry more energy, on average, than those from a cooler region. The net energy flux turns out to be

\[ \kappa_\text{th} = \frac{1}{3}\,\bar{v}\,\lambda\,\frac{C_V}{V}, \]where \(\bar{v}\) is the mean molecular speed, \(\lambda\) the mean free path (average distance between collisions), and \(C_V/V\) the heat capacity per unit volume. This expression, derived properly in Chapter 12, captures the key physics: better thermal conductors have faster molecules, longer free paths, or larger heat capacities per unit volume. The density enters twice: the mean free path is \(\lambda \sim 1/(n\sigma)\), where \(n\) is the number density and \(\sigma\) the collision cross-section, while \(C_V/V\) is proportional to \(n\). At fixed temperature, halving the pressure halves \(n\) and \(C_V/V\), doubles \(\lambda\), and leaves \(\kappa_\text{th}\) unchanged, a somewhat counterintuitive but experimentally verified result. Only at very low pressures, when \(\lambda\) becomes comparable to the container size, does \(\kappa_\text{th}\) finally start to decrease with pressure. This is why vacuum insulation works: at pressures below about 0.1 Pa (where \(\lambda\) exceeds the gap width in a vacuum panel), the gas thermal conductivity drops effectively to zero, and the only remaining heat transfer mechanisms are radiation across the evacuated gap and solid conduction through the thin support structure. Vacuum-insulated panels achieve effective thermal conductivities of \(0.004–0.006\ \text{W m}^{-1}\text{K}^{-1}\), roughly a factor of three below the aerogel value tabulated above and some 130 to 200 times lower than glass.
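The pressure independence of the gas conductivity can be seen numerically. The sketch below is an order-of-magnitude illustration only: it assumes a monatomic ideal gas (\(C_V/V = \tfrac{3}{2}nk_{\mathrm{B}}\)), the Maxwell mean speed \(\bar{v} = \sqrt{8k_{\mathrm{B}}T/\pi m}\), and a hypothetical collision cross-section of \(4 \times 10^{-19}\ \text{m}^2\) with an argon-like atomic mass.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K


def gas_thermal_conductivity(pressure_pa, temperature_k, mass_kg, cross_section_m2):
    """Kinetic-theory estimate kappa = (1/3) * v_mean * mean_free_path * C_V/V."""
    number_density = pressure_pa / (K_B * temperature_k)        # ideal gas law
    mean_speed = math.sqrt(8.0 * K_B * temperature_k / (math.pi * mass_kg))
    mean_free_path = 1.0 / (number_density * cross_section_m2)  # lambda ~ 1/(n sigma)
    heat_capacity_per_volume = 1.5 * number_density * K_B       # monatomic gas
    return mean_speed * mean_free_path * heat_capacity_per_volume / 3.0


ARGON_LIKE_MASS = 6.63e-26  # kg (assumed, roughly one argon atom)
CROSS_SECTION = 4.0e-19     # m^2 (hypothetical illustrative value)

kappa_1bar = gas_thermal_conductivity(1.0e5, 300.0, ARGON_LIKE_MASS, CROSS_SECTION)
kappa_half_bar = gas_thermal_conductivity(0.5e5, 300.0, ARGON_LIKE_MASS, CROSS_SECTION)

print(f"kappa at 1.0 bar: {kappa_1bar:.4e} W/(m K)")
print(f"kappa at 0.5 bar: {kappa_half_bar:.4e} W/(m K)")
```

The two printed values agree because the number density cancels between the mean free path and the heat capacity per unit volume. The formula stops applying once the mean free path is no longer small compared with the container.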

Multi-layer composite slabs and the R-value

The result for a composite slab of \(n\) layers deserves a more explicit derivation. In steady state, energy conservation requires the heat current \(\dot{Q}\) to be the same in every layer. For layer \(i\) with conductivity \(\kappa_i\), thickness \(L_i\), and cross-sectional area \(A\), Fourier’s law gives \(\dot{Q} = \kappa_i A\,\Delta T_i / L_i\), where \(\Delta T_i\) is the temperature drop across layer \(i\). Solving for each temperature drop: \(\Delta T_i = \dot{Q} L_i / (\kappa_i A)\). The total temperature difference across all layers is the sum:

\[ \Delta T_\text{total} = \sum_{i=1}^n \Delta T_i = \frac{\dot{Q}}{A}\sum_{i=1}^n \frac{L_i}{\kappa_i}. \]Inverting to get \(\dot{Q}\):

\[ \dot{Q} = A\,\frac{\Delta T_\text{total}}{\displaystyle\sum_{i=1}^n R_i}, \qquad R_i \equiv \frac{L_i}{\kappa_i}, \]where \(R_i\) has units of \(\text{m}^2\text{ K W}^{-1}\). In the building industry, thermal resistance is commonly quoted as the RSI value in SI units (or the R-value in imperial units, \(1\ \text{ft}^2\text{°F·hr/Btu} = 0.176\ \text{m}^2\text{K W}^{-1}\)). A well-insulated wall with fibreglass batts (\(L = 14\ \text{cm}\), \(\kappa \approx 0.045\ \text{W m}^{-1}\text{K}^{-1}\)) has \(R_\text{insul} \approx 3.1\ \text{m}^2\text{K W}^{-1}\) (RSI 3.1, approximately R-18 in North American convention). Adding two layers of 1.2 cm gypsum board (\(\kappa = 0.17\)) contributes \(2 \times 0.012/0.17 \approx 0.14\ \text{m}^2\text{K W}^{-1}\) — a minor correction showing that the insulation dominates the thermal resistance of a well-designed wall.
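The series-resistance formula translates into a few lines of code. This is a minimal sketch for the wall described above; the 20 K temperature difference used for the flux is an illustrative assumption, and the function names are not from the text.

```python
RSI_PER_IMPERIAL_R = 0.176  # m^2 K/W per (ft^2 F hr/Btu), as quoted above


def series_r_value(layers):
    """Total thermal resistance (m^2 K/W) of layers given as (thickness_m, kappa)."""
    return sum(thickness / kappa for thickness, kappa in layers)


def heat_flux(delta_t_k, layers):
    """Steady-state heat flux (W/m^2) through the layers for a given temperature drop."""
    return delta_t_k / series_r_value(layers)


# Gypsum board, fibreglass batt, gypsum board.
wall = [(0.012, 0.17), (0.14, 0.045), (0.012, 0.17)]

r_total = series_r_value(wall)
flux = heat_flux(20.0, wall)  # assumed 20 K indoor-outdoor difference

print(f"Total RSI: {r_total:.2f} m^2 K/W (about R-{r_total / RSI_PER_IMPERIAL_R:.0f})")
print(f"Heat flux at 20 K difference: {flux:.1f} W/m^2")
```

Because each layer is just a `(thickness, conductivity)` pair, trying a thicker batt or a different lining material is a one-line change.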

Convective and radiative limits

Fourier’s law governs heat transport by pure conduction. In practice, however, the surfaces of a solid are almost always in contact with a fluid, and heat transfer at the surface involves convection — the movement of the fluid itself. The effective convective heat flux is often written \(\dot{Q}/A = h_c(T_\text{surface} - T_\text{fluid})\), where \(h_c\) is the convective heat-transfer coefficient (W m\(^{-2}\) K\(^{-1}\)). For still air, \(h_c \approx 5\ \text{W m}^{-2}\text{K}^{-1}\); for a moderate wind or forced flow, \(h_c \approx 20\)–\(50\ \text{W m}^{-2}\text{K}^{-1}\). The resistance interpretation extends naturally: the convective resistance is \(R_\text{conv} = 1/h_c\), and it appears in series with the conductive resistances. A poorly insulated wall with \(R_\text{cond} = 0.05\ \text{m}^2\text{K W}^{-1}\) but with still-air convective resistances of \(2 \times 0.2 = 0.4\ \text{m}^2\text{K W}^{-1}\) on both surfaces has its heat loss dominated by the air-film resistances — a dramatic illustration that surface convection can be the limiting factor even when conduction through the wall seems very good.

Radiative heat transfer, governed by the Stefan-Boltzmann law, provides a third parallel pathway. For surfaces at temperatures \(T_1\) and \(T_2\) with emissivities near unity, the radiative flux is approximately \(\sigma(T_1^4 - T_2^4) \approx 4\sigma T_\text{avg}^3 (T_1 - T_2)\) for small temperature differences, giving an effective radiative transfer coefficient \(h_\text{rad} = 4\varepsilon\sigma T_\text{avg}^3\). At room temperature (\(T_\text{avg} = 300\ \text{K}\)) with \(\varepsilon = 0.9\), \(h_\text{rad} \approx 5.5\ \text{W m}^{-2}\text{K}^{-1}\) — comparable to natural convection. Thus radiation and convection are always present alongside conduction in real systems.
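How good is the linearised radiative coefficient? The sketch below compares it with the full fourth-power expression, assuming for simplicity a single effective emissivity multiplying the Stefan-Boltzmann flux; the 305 K and 295 K surface temperatures are illustrative.

```python
STEFAN_BOLTZMANN = 5.670374e-8  # W/(m^2 K^4)


def radiative_coefficient(emissivity, t_avg_k):
    """Linearised radiative transfer coefficient h_rad = 4 eps sigma T_avg^3."""
    return 4.0 * emissivity * STEFAN_BOLTZMANN * t_avg_k ** 3


def exact_radiative_flux(emissivity, t_hot_k, t_cold_k):
    """Net radiative flux eps sigma (T_hot^4 - T_cold^4), single effective emissivity."""
    return emissivity * STEFAN_BOLTZMANN * (t_hot_k ** 4 - t_cold_k ** 4)


h_rad = radiative_coefficient(0.9, 300.0)
linear_flux = h_rad * (305.0 - 295.0)
exact_flux = exact_radiative_flux(0.9, 305.0, 295.0)

print(f"h_rad = {h_rad:.2f} W/(m^2 K)")
print(f"linearised flux = {linear_flux:.2f} W/m^2, exact flux = {exact_flux:.2f} W/m^2")
```

For a 10 K difference around room temperature the two fluxes agree to better than a tenth of a percent, so treating radiation as one more linear conductance is well justified in building-physics estimates.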

Thermal diffusivity and the heat diffusion equation

Fourier’s law in differential form, combined with energy conservation, yields the heat equation. Consider a small volume element of material with density \(\rho\), specific heat capacity \(c_P\), and thermal conductivity \(\kappa_\text{th}\). The net heat flux into the element per unit volume is \(\kappa_\text{th}\nabla^2 T\), and this must equal the rate of energy storage: \(\rho c_P \partial T/\partial t\). Thus

\[ \frac{\partial T}{\partial t} = \alpha_\text{th}\,\nabla^2 T, \qquad \alpha_\text{th} \equiv \frac{\kappa_\text{th}}{\rho c_P}, \]where \(\alpha_\text{th}\) is the thermal diffusivity (m\(^2\text{ s}^{-1}\)). It measures how quickly a temperature disturbance propagates through a material. High conductivity promotes rapid spreading; high heat capacity (or high density) retards it because more energy must be redistributed for each degree of temperature change. For copper, \(\alpha_\text{th} = 400/(8960 \times 385) \approx 1.16 \times 10^{-4}\ \text{m}^2\text{s}^{-1}\), so a thermal disturbance spreads over a distance \(\sqrt{\alpha_\text{th} t}\) of about 1 cm in roughly 1 s. For soil, \(\alpha_\text{th} \approx 5 \times 10^{-7}\ \text{m}^2\text{s}^{-1}\), so the seasonal temperature cycle penetrates to a characteristic depth of \(\sqrt{\alpha_\text{th}/\omega} \approx \sqrt{(5\times10^{-7})/(2\times10^{-7})} \approx 1.6\ \text{m}\) (using annual frequency \(\omega \approx 2\pi/(3.15\times10^7)\ \text{s}^{-1}\)), which is why underground wine cellars below 2 m depth have nearly constant temperature year-round.
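Both numbers in this paragraph come from one-line formulas, sketched here. The function names are illustrative, and the characteristic depth follows the text's definition \(\sqrt{\alpha_\text{th}/\omega}\).

```python
import math


def thermal_diffusivity(kappa, density, specific_heat):
    """alpha = kappa / (rho * c_P), in m^2/s."""
    return kappa / (density * specific_heat)


def periodic_penetration_depth(alpha, period_s):
    """Characteristic depth sqrt(alpha / omega) for a surface oscillation of given period."""
    omega = 2.0 * math.pi / period_s
    return math.sqrt(alpha / omega)


alpha_copper = thermal_diffusivity(400.0, 8960.0, 385.0)
annual_depth_soil = periodic_penetration_depth(5.0e-7, 3.15e7)  # one year in seconds
daily_depth_soil = periodic_penetration_depth(5.0e-7, 86400.0)  # one day in seconds

print(f"Copper diffusivity: {alpha_copper:.3e} m^2/s")
print(f"Annual cycle depth in soil: {annual_depth_soil:.2f} m")
print(f"Daily cycle depth in soil: {daily_depth_soil:.3f} m")
```

The daily cycle reaches only a small fraction of the annual depth, since the depth scales as the square root of the period.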

Part II: Microscopic Foundations of Entropy

Chapter 7: The Einstein Solid Model and Multiplicity

From macroscopic to microscopic

Part I established the phenomenological laws of thermal physics using temperature as an operationally defined quantity. Now we ask a deeper question: what is temperature at the atomic level? To answer it, we need to count — to enumerate the microscopic arrangements compatible with a given macroscopic state. The central concept is multiplicity (also called the number of microstates), and the central tool is combinatorics.

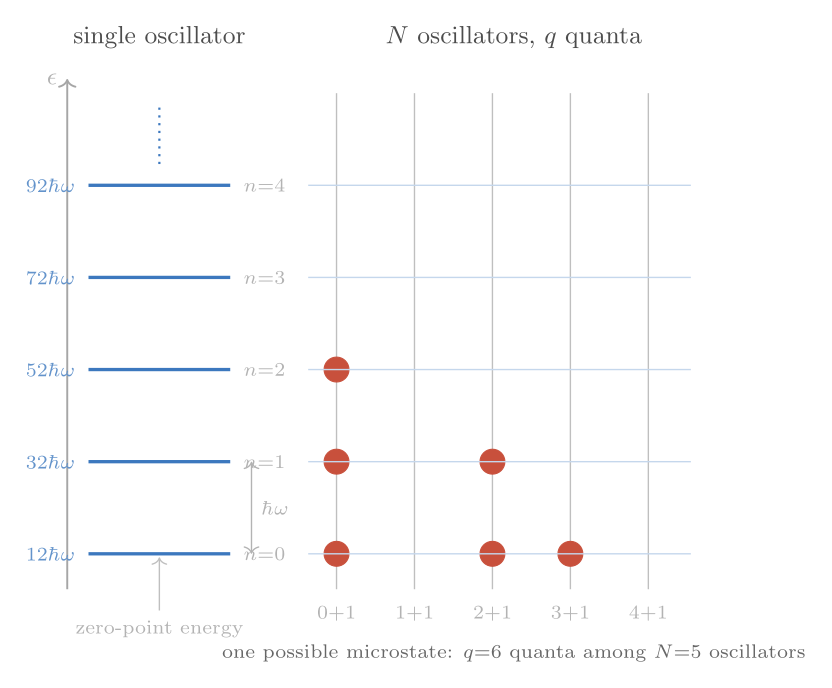

The Einstein solid (proposed by Albert Einstein in 1907) is our first and most important model system. It captures the essential physics of a crystal: \(N\) atoms, each vibrating about its equilibrium position. In quantum mechanics, a one-dimensional harmonic oscillator of angular frequency \(\omega\) has energy levels \(\epsilon_n = \hbar\omega(n + \tfrac{1}{2})\) for \(n = 0, 1, 2, \ldots\) Each atom in a three-dimensional crystal vibrates in three independent directions, so a solid of \(N\) atoms is modelled as \(3N\) quantum harmonic oscillators all sharing a common frequency \(\omega\).

Counting microstates: the stars-and-bars method

We drop the constant zero-point energy \(\tfrac{1}{2}\hbar\omega\) per oscillator (it is always present and never exchanged) and define the dimensionless energy parameter \(q = U/(\hbar\omega)\), the total number of energy quanta distributed among the oscillators. From here on, \(N\) denotes the number of oscillators (three per atom) rather than the number of atoms. A microstate specifies exactly how many quanta each oscillator holds; a macrostate specifies only the total \(q\) and \(N\). We want \(\Omega(N, q)\), the number of microstates corresponding to the macrostate \((N, q)\).

The counting problem maps onto a combinatorial one: in how many ways can \(q\) identical stars be arranged with \(N - 1\) identical bars in a row? Each arrangement corresponds to a distribution of quanta — the stars to the left of the first bar go to oscillator 1, those between the first and second bars go to oscillator 2, and so on. The total number of symbols is \(q + (N-1)\), and the number of distinct arrangements of \(q\) identical stars and \(N-1\) identical bars is the binomial coefficient:

\[ \Omega(N, q) = \binom{q + N - 1}{q} = \frac{(q + N - 1)!}{q!\,(N-1)!}. \]For the simplest case \(N = 3\), \(q = 2\): \(\Omega = \binom{4}{2} = 6\), agreeing with explicit enumeration. As both \(N\) and \(q\) become large — as they must for a macroscopic solid with \(N \sim 10^{23}\) — the factorials require Stirling’s approximation:

\[ \ln(n!) \approx n\ln n - n \qquad (n \gg 1), \]which leads to

\[ \ln\Omega \approx (q + N)\ln(q + N) - q\ln q - N\ln N. \]In the limit where each oscillator holds many quanta on average, \(q \gg N\), this simplifies further to \(\ln\Omega \approx N\ln(q/N) + N\), a result that will prove essential for extracting the temperature.
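The exact multiplicity and its Stirling approximation are easy to compare numerically. This is a minimal sketch using Python's standard library: `math.comb` gives the exact binomial coefficient, and `math.lgamma` gives \(\ln\Omega\) without forming astronomically large integers. The system size \(N = q = 10^4\) is an arbitrary illustrative choice.

```python
import math


def multiplicity(n_oscillators, q):
    """Exact Einstein-solid multiplicity: (q + N - 1 choose q)."""
    return math.comb(q + n_oscillators - 1, q)


def ln_multiplicity_exact(n_oscillators, q):
    """ln Omega from log-gamma functions, safe for large N and q."""
    return (math.lgamma(q + n_oscillators)
            - math.lgamma(q + 1)
            - math.lgamma(n_oscillators))


def ln_multiplicity_stirling(n_oscillators, q):
    """Stirling form: (q+N) ln(q+N) - q ln q - N ln N."""
    n = n_oscillators
    return (q + n) * math.log(q + n) - q * math.log(q) - n * math.log(n)


small_case = multiplicity(3, 2)
exact = ln_multiplicity_exact(10_000, 10_000)
approx = ln_multiplicity_stirling(10_000, 10_000)
relative_error = abs(approx - exact) / exact

print(f"Omega(3, 2) = {small_case}")
print(f"ln Omega exact = {exact:.1f}, Stirling = {approx:.1f}")
print(f"relative error = {relative_error:.1e}")
```

The discrepancy comes from terms of order \(\ln N\) that the approximation discards, so the relative error shrinks as the system grows.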

Chapter 8: Two Einstein Solids in Thermal Contact

The fundamental assumption

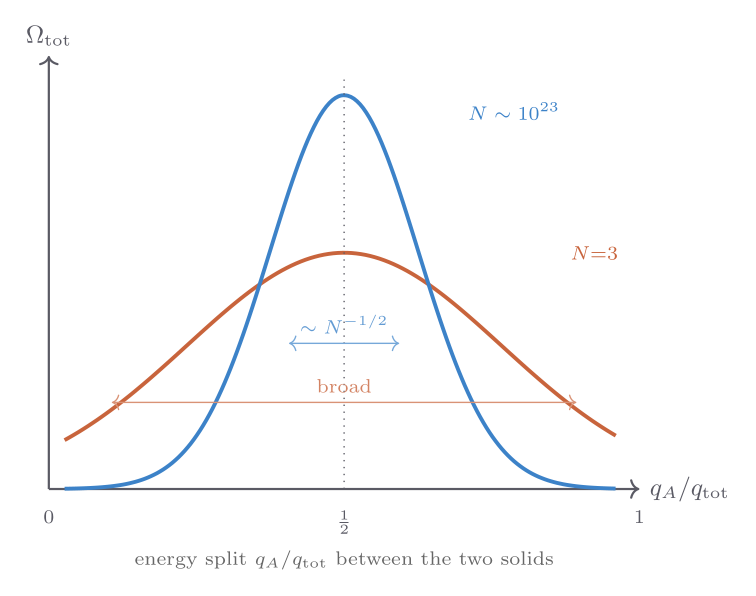

Two Einstein solids, A and B, with \(N_A\) and \(N_B\) oscillators respectively, are placed in thermal contact: they can exchange energy but the total \(q_{\text{tot}} = q_A + q_B\) is fixed. For any given split \(q_A\), the number of microstates of the combined system is

\[ \Omega_\text{tot}(q_A) = \Omega_A(N_A, q_A)\,\cdot\,\Omega_B(N_B, q_{\text{tot}} - q_A). \]The fundamental assumption of statistical mechanics is that all accessible microstates are equally probable. With this assumption, the probability of observing a particular macrostate \(q_A\) is proportional to \(\Omega_\text{tot}(q_A)\) — the most probable macrostate is the one with the most microstates.

Sharpness of the peak

For a small system (\(N_A = N_B = 3\), \(q_{\text{tot}} = 6\)), the function \(\Omega_\text{tot}(q_A)\) has a gentle maximum at equal sharing \(q_A = q_B = 3\), but the peak is only a few times the value at the extremes — there is an appreciable probability of one solid hogging nearly all the energy.
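The small-system case can be enumerated explicitly. The sketch below tabulates \(\Omega_\text{tot}(q_A)\) for \(N_A = N_B = 3\) and \(q_\text{tot} = 6\), and converts it to probabilities under the fundamental assumption; the function names are illustrative.

```python
from math import comb


def omega(n_oscillators, q):
    """Einstein-solid multiplicity (q + N - 1 choose q)."""
    return comb(q + n_oscillators - 1, q)


def total_multiplicities(n_a, n_b, q_total):
    """Omega_tot(q_A) = Omega_A(q_A) * Omega_B(q_total - q_A) for q_A = 0..q_total."""
    return [omega(n_a, q_a) * omega(n_b, q_total - q_a) for q_a in range(q_total + 1)]


table = total_multiplicities(3, 3, 6)
all_microstates = sum(table)
probabilities = [count / all_microstates for count in table]

for q_a, (count, prob) in enumerate(zip(table, probabilities)):
    print(f"q_A = {q_a}: Omega_tot = {count:3d}, probability = {prob:.3f}")
```

The extreme macrostates, with all six quanta in one solid, each carry about 6% of the probability, so they are far from negligible in a system this small.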

As \(N_A = N_B = N\) grows, the peak sharpens dramatically. Using Stirling’s approximation one can show that the relative width of the peak scales as \(N^{-1/2}\): for \(N = 10^{23}\), the relative fluctuation in \(q_A\) around its equilibrium value is of order \(10^{-12}\). This is the statistical basis of the second law of thermodynamics: the approach to the most probable macrostate is so overwhelmingly favoured that deviations are, for all practical purposes, impossible. What looks like an irreversible tendency (heat flowing from hot to cold, systems approaching equilibrium) is simply the system exploring its microstates and ending up in the overwhelmingly most probable macrostate.

The equal-sharing macrostate corresponds to thermal equilibrium. But why is equal energy per oscillator the most probable? The multiplicity of each solid grows with energy, but by a larger factor per added quantum when energy is scarce (low \(q\)) than when it is plentiful. Moving a quantum from the solid with more energy per oscillator to the one with less therefore multiplies the multiplicity of the receiving solid by more than it divides that of the donor, and the total multiplicity, being the product of the two, goes up. Energy keeps redistributing until neither transfer direction increases the total multiplicity. That condition defines equilibrium.

This argument makes vivid why thermal equilibrium always involves the maximisation of entropy rather than the minimisation of energy. Energy is not the relevant quantity to extremise when heat can flow freely; instead, the equilibrium condition is that the total entropy — the logarithm of the total number of accessible microstates — reaches its maximum. The tendency to maximise entropy is not an additional postulate of thermodynamics layered on top of mechanics; it is a consequence of the fundamental assumption of equal probability combined with the combinatorial fact that, for large systems, the overwhelmingly most probable macrostate has a multiplicity that dwarfs all others combined. This is why the second law, while statistical in origin, is effectively absolute for macroscopic systems: the probability of observing a spontaneous decrease in entropy over any realistic observation time is not merely small but stupendously, inconceivably small.

Chapter 9: Statistical Definition of Temperature; Two-State Systems

Temperature from entropy

The insight from Chapter 8 — that equilibrium is the state of maximum total multiplicity — motivates a precise definition. We define the entropy of a system by

\[ S = k_{\mathrm{B}}\ln\Omega, \]where \(k_{\mathrm{B}}\) is Boltzmann’s constant. The logarithm makes \(S\) additive (extensive): multiplicities of independent subsystems multiply, so their entropies add. The factor of \(k_{\mathrm{B}}\) gives \(S\) units of J/K, matching the classical thermodynamic entropy; it is the bridge between the counting of microscopic states and the macroscopic thermal quantities defined in Part I.

With this definition, the equilibrium condition — maximum total entropy — reads

\[ \frac{\partial S_A}{\partial U_A} = \frac{\partial S_B}{\partial U_B}. \]We define the temperature as the quantity that is equal between two systems in thermal equilibrium:

\[ \frac{1}{T} \equiv \left(\frac{\partial S}{\partial U}\right)_{V, N}. \]This is the microscopic definition of temperature. It coincides exactly with the ideal-gas-thermometer definition introduced in Part I — one can verify this by computing \(\partial S/\partial U\) for the Sackur-Tetrode entropy of an ideal gas (Chapter 10). A system with steeply rising \(\Omega(U)\) — one that gains many microstates for each unit of energy added — has low temperature. A system with slowly rising \(\Omega(U)\) has high temperature. If system A has higher temperature than B, meaning \(\partial S_A/\partial U_A < \partial S_B/\partial U_B\), then transferring energy from A to B increases the total entropy — the spontaneous direction of heat flow is from hot to cold, as expected.

For the Einstein solid in the large-\(q\) limit, \(S \approx Nk_{\mathrm{B}}\ln(q/N) + \text{const}\), and \(\partial S/\partial U = (Nk_{\mathrm{B}}/\hbar\omega)\cdot(1/q)\). Since \(q = U/(\hbar\omega)\), this is \(\partial S/\partial U = Nk_{\mathrm{B}}/U\). Setting this equal to \(1/T\) gives

\[ U = Nk_{\mathrm{B}}T, \]predicting heat capacity \(C_V = Nk_{\mathrm{B}}\). Because \(N\) counts oscillators, three per atom, this is \(3k_{\mathrm{B}}\) per atom, or \(3R\) per mole of atoms. This is the Dulong-Petit law, confirmed experimentally for most metals near room temperature. At low temperatures the quantum discreteness of the energy levels matters and the classical equipartition value overestimates the heat capacity. Einstein’s 1907 treatment correctly predicted the falling heat capacity, a landmark early success of quantum theory.

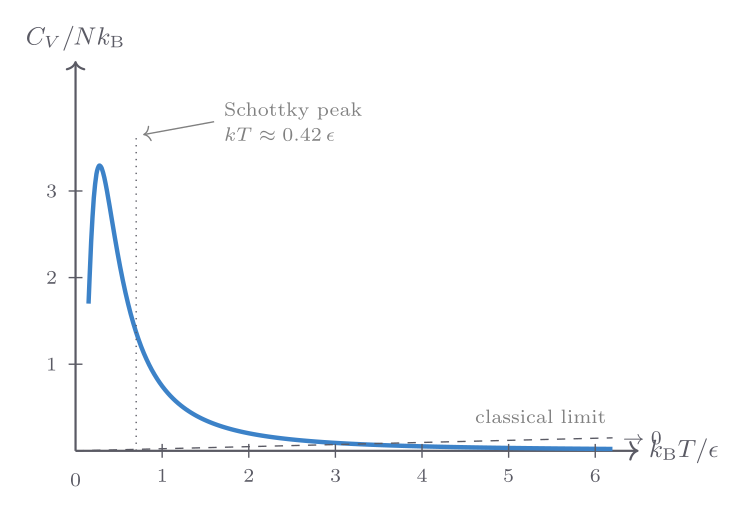

Two-state systems and the Schottky anomaly

A simpler model than the Einstein solid is a system of \(N\) non-interacting spin-\(\tfrac{1}{2}\) particles in a magnetic field, each of which can be in one of two states — spin up (energy \(-\mu B\)) or spin down (energy \(+\mu B\)). With \(N_\uparrow\) particles in the lower-energy state and \(N_\downarrow = N - N_\uparrow\) in the upper,

\[ \Omega = \binom{N}{N_\uparrow}, \qquad U = N_\downarrow \cdot 2\mu B - N\mu B. \]Applying the entropy definition and \(1/T = \partial S/\partial U\) yields

\[ C_V = Nk_{\mathrm{B}}\left(\frac{\epsilon}{k_{\mathrm{B}}T}\right)^2 \frac{e^{\epsilon/k_{\mathrm{B}}T}}{\left(e^{\epsilon/k_{\mathrm{B}}T} + 1\right)^2}, \]where \(\epsilon = 2\mu B\). This function — known as the Schottky anomaly — vanishes at both \(T \to 0\) (insufficient thermal energy to excite the upper state) and \(T \to \infty\) (both states equally populated, no further energy can be stored), with a broad maximum at \(T \approx 0.42\,\epsilon/k_{\mathrm{B}}\).
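The position of the Schottky maximum can be located numerically. This sketch works in reduced units, with temperature measured in \(\epsilon/k_{\mathrm{B}}\) and heat capacity in \(Nk_{\mathrm{B}}\), and uses a simple grid scan rather than a root finder; the grid spacing is an arbitrary illustrative choice.

```python
import math


def schottky_heat_capacity(t_reduced):
    """C_V / (N k_B) for a two-level system at reduced temperature t = k_B T / epsilon."""
    x = 1.0 / t_reduced  # x = epsilon / (k_B T)
    return x * x * math.exp(x) / (math.exp(x) + 1.0) ** 2


# Scan reduced temperatures from 0.05 to 3.0 in steps of 0.001.
grid = [0.05 + 0.001 * i for i in range(2951)]
t_peak = max(grid, key=schottky_heat_capacity)
c_peak = schottky_heat_capacity(t_peak)

print(f"Peak at k_B T / epsilon = {t_peak:.3f}")
print(f"Peak height C_V / (N k_B) = {c_peak:.3f}")
```

The peak height is a pure number, a little under half of \(k_{\mathrm{B}}\) per particle, independent of the level splitting. This is part of what makes the anomaly easy to recognise in experimental data.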

A Schottky anomaly in the specific heat of a material is a diagnostic signature of a two-level system. It appears in magnetic materials (where it is driven by the magnetic-field splitting of spin states), in glasses (where tunnelling two-level systems give a characteristic low-temperature heat capacity), and in some molecular crystals (where quantum rotational tunnelling provides the two-level structure).

The two-state system also illustrates negative absolute temperature, a subtle but real phenomenon. For a spin system, the entropy \(S(U)\) has a maximum at \(U = 0\) (equal populations, maximum disorder), so \(\partial S/\partial U = 0\) and \(1/T = 0\), corresponding to \(T = \pm\infty\). If extra energy is added beyond this point — achieved in magnetic systems by rapid field reversal — the population inversion makes \(\partial S/\partial U < 0\) and \(T < 0\). Negative-temperature states are “hotter” than any positive temperature: heat flows from a negative-temperature body to any body at finite positive temperature. They arise only in systems with a bounded energy spectrum (a maximum possible energy), which includes nuclear and electronic spin systems but not gases or phonons. The concept does not violate the laws of thermodynamics but does require a careful re-examination of the statistical foundation.

Chapter 10: The Monatomic Ideal Gas and the Sackur-Tetrode Equation

Why the ideal gas needs new tools

The Einstein solid lives in a space without volume: it has no concept of pressure. To generalise the microscopic framework to a gas, we must count states for particles that can move freely in a three-dimensional volume. This requires quantum mechanics — specifically, the quantisation of a particle in a box — and a careful treatment of the indistinguishability of identical particles.

Counting states in momentum space

A single particle of mass \(m\) confined to a cubical box of side \(L\) has allowed momenta

\[ \mathbf{p} = \frac{\pi\hbar}{L}(n_x, n_y, n_z), \qquad n_i = 1, 2, 3, \ldots \]Each allowed state occupies a “cell” of volume \((\pi\hbar/L)^3\) in the positive octant of momentum space. For \(N\) particles, the states with total energy less than \(U\) satisfy \(\sum_i |\mathbf{p}_i|^2 < 2mU\), so their number is proportional to the volume of a \(3N\)-dimensional hypersphere of radius \(\sqrt{2mU}\). Using the formula for the volume of a \(d\)-dimensional hypersphere,

\[ \mathcal{V}_d(R) = \frac{\pi^{d/2}}{\Gamma(d/2 + 1)}R^d, \]one obtains (for \(d = 3N\)) the number of states with energy less than \(U\). Differentiating gives the density of states, and multiplying by a small energy window \(\delta U\) gives \(\Omega\).
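The hypersphere formula is easy to sanity-check in low dimensions. This is a minimal sketch using only the standard library; the logarithmic variant is included on the assumption that \(d = 3N\) will be far too large for the volume itself to fit in a floating-point number.

```python
import math

def hypersphere_volume(d, radius):
    """Volume of a d-dimensional ball: pi^(d/2) / Gamma(d/2 + 1) * R^d."""
    return math.pi ** (d / 2) / math.gamma(d / 2 + 1) * radius ** d

def log_hypersphere_volume(d, radius):
    """Logarithm of the same volume; usable when d = 3N is very large."""
    return (d / 2) * math.log(math.pi) - math.lgamma(d / 2 + 1) + d * math.log(radius)

print(hypersphere_volume(2, 1.0))               # area of the unit disc
print(hypersphere_volume(3, 1.0))               # volume of the unit ball
print(log_hypersphere_volume(3 * 10**6, 2.0))   # d = 3N with N = 10^6
```

For \(d = 2\) and \(d = 3\) the function reduces to the familiar \(\pi R^2\) and \(\tfrac{4}{3}\pi R^3\).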

The crucial step, required to avoid the Gibbs paradox, is to divide by \(N!\) to account for the indistinguishability of identical particles. Without this factor, mixing two samples of the same gas at the same temperature and pressure would appear to increase the entropy, which is physically wrong (the entropy of mixing is zero for identical gases). The result seemed paradoxical to 19th-century physicists because classical mechanics assumes particles have identity; quantum mechanics resolves it by making identical particles truly indistinguishable.

The Sackur-Tetrode equation

After these steps, the entropy of a monatomic ideal gas of \(N\) atoms in volume \(V\) with total energy \(U\) is the Sackur-Tetrode equation (1912):

\[ S = Nk_{\mathrm{B}}\left[\ln\!\left(\frac{V}{N}\left(\frac{4\pi m U}{3Nh^2}\right)^{3/2}\right) + \frac{5}{2}\right]. \]This is a closed-form expression for the entropy of an ideal gas, obtained from the quantum state count and valid in the classical (high-temperature, low-density) limit. Several checks confirm its correctness.

Temperature recovery: computing \(1/T = (\partial S/\partial U)_V\) gives \(U = \tfrac{3}{2}Nk_{\mathrm{B}}T\), the familiar monatomic ideal gas energy.

Pressure recovery: computing \(P/T = (\partial S/\partial V)_U\) gives \(P = Nk_{\mathrm{B}}T/V\), the ideal gas law.

Heat capacity: \(C_V = (\partial U/\partial T)_V = \tfrac{3}{2}Nk_{\mathrm{B}}\), matching experiment for noble gases.

Entropy of mixing: computing the entropy change when two different gases, \(N\) atoms of each, mix at equal volumes and temperatures gives a positive value \(\Delta S = 2Nk_{\mathrm{B}}\ln 2\); computing the same for two samples of identical gas gives \(\Delta S = 0\), thanks to the \(N!\) factor. The Gibbs paradox is resolved.

Adiabatic processes: holding \(S\) constant in the Sackur-Tetrode equation while varying \(V\) recovers \(TV^{\gamma-1} = \text{const}\) with \(\gamma = 5/3\), consistent with the macroscopic adiabat relation derived in Chapter 3.
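The temperature and pressure checks above can also be run numerically. The sketch below assumes one mole of helium-4 at 300 K and 101325 Pa as an illustrative test case (these conditions are not taken from the text), evaluates the Sackur-Tetrode entropy, and recovers \(T\) and \(P\) from centred finite differences of \(S\).

```python
import math

K_B = 1.380649e-23    # Boltzmann constant, J/K
H = 6.62607015e-34    # Planck constant, J s
N_A = 6.02214076e23   # Avogadro constant, 1/mol

def sackur_tetrode(n, volume, energy, mass):
    """Entropy (J/K) of a monatomic ideal gas from the Sackur-Tetrode equation."""
    arg = (volume / n) * (4 * math.pi * mass * energy / (3 * n * H**2)) ** 1.5
    return n * K_B * (math.log(arg) + 2.5)

# Assumed test case: one mole of helium-4 at 300 K and 101325 Pa
mass = 4.002602 * 1.66053907e-27   # kg per atom
n, temp, pressure = N_A, 300.0, 101325.0
volume = n * K_B * temp / pressure
energy = 1.5 * n * K_B * temp

entropy = sackur_tetrode(n, volume, energy, mass)

# Centred finite differences for (dS/dU)_V = 1/T and (dS/dV)_U = P/T
du, dv = 1e-6 * energy, 1e-6 * volume
ds_du = (sackur_tetrode(n, volume, energy + du, mass)
         - sackur_tetrode(n, volume, energy - du, mass)) / (2 * du)
ds_dv = (sackur_tetrode(n, volume + dv, energy, mass)
         - sackur_tetrode(n, volume - dv, energy, mass)) / (2 * dv)

temp_recovered = 1.0 / ds_du
pressure_recovered = temp_recovered * ds_dv
print(f"S = {entropy:.1f} J/K, T = {temp_recovered:.2f} K, P = {pressure_recovered:.0f} Pa")
```

The entropy comes out at roughly 126 J/K per mole, and the recovered temperature and pressure should match the inputs to within the finite-difference error.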

The Sackur-Tetrode equation also shows that entropy decreases as the temperature falls. It does not, however, satisfy the third law, which asserts that \(S \to 0\) at absolute zero for a perfect crystal: since \(U \propto T\), the Sackur-Tetrode entropy diverges logarithmically to \(-\infty\) as \(T \to 0\). This unphysical divergence signals the breakdown of the classical (high-temperature) approximation: quantum corrections become essential at low temperatures, and a proper treatment requires Bose-Einstein or Fermi-Dirac statistics (Chapter 23).

Explicit derivation: the hypersphere volume substitution

The derivation of the Sackur-Tetrode equation requires counting the number of quantum states with total energy at most \(U\) for \(N\) non-interacting particles in a cubical box of side \(L\). Each particle \(i\) has three quantum numbers \((n_{ix}, n_{iy}, n_{iz})\), each a positive integer, with single-particle energy \(\epsilon_i = (\hbar^2\pi^2/2mL^2)(n_{ix}^2 + n_{iy}^2 + n_{iz}^2)\). The total energy constraint is

\[ \sum_{i=1}^N (n_{ix}^2 + n_{iy}^2 + n_{iz}^2) \leq \frac{2mUL^2}{\hbar^2\pi^2} \equiv R^2, \]which is the interior of a \(3N\)-dimensional hypersphere of radius \(R = L\sqrt{2mU}/(\hbar\pi)\) in the \(3N\)-dimensional space of positive integers. Since only the positive-integer octant is physical, the number of lattice points inside this region is approximately \(2^{-3N}\) of the total hypersphere volume (one out of \(2^{3N}\) equal orthants), plus a small correction from surface states that is negligible for large \(N\). The volume of the \(d = 3N\) dimensional hypersphere of radius \(R\) is

\[ \mathcal{V}_{3N}(R) = \frac{\pi^{3N/2}}{\Gamma(3N/2 + 1)}\,R^{3N}. \]Substituting \(R = L(2mU)^{1/2}/(\hbar\pi)\) and using \(V = L^3\), one obtains the number of states \(\Phi(N, V, U)\) with energy at most \(U\). The multiplicity \(\Omega\) for a thin energy shell of width \(\delta U\) is \(\Omega = (d\Phi/dU)\delta U\). After dividing by \(N!\) for indistinguishability and applying Stirling’s approximation to both \(N!\) and \(\Gamma(3N/2 + 1) = (3N/2)!\), the entropy \(S = k_{\mathrm{B}}\ln\Omega\) simplifies to the Sackur-Tetrode form. The factor \(\delta U\) contributes only an additive term to \(\ln\Omega\) that is negligible compared with the terms of order \(N\), and it drops out when derivatives of \(S\) are taken to extract physical predictions.
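The effect of Stirling's approximation can be gauged by evaluating the state count with exact log-gamma functions. The sketch below makes two assumptions beyond the text: it uses \(\ln\Phi\) in place of \(\ln\Omega\) (the two differ only by terms of order \(\ln N\)), and it takes helium-4 at 300 K and 101325 Pa as an illustrative test case. With \(\hbar = h/2\pi\), the positive-orthant volume divided by \(N!\) reduces to \(\Phi = V^N (2\pi m U/h^2)^{3N/2} / [N!\,\Gamma(3N/2+1)]\).

```python
import math

K_B = 1.380649e-23   # J/K
H = 6.62607015e-34   # J s

def ln_states_exact(n, volume, energy, mass):
    """ln Phi: positive-orthant hypersphere volume divided by N!, using log-gamma."""
    return (n * math.log(volume)
            + 1.5 * n * math.log(2 * math.pi * mass * energy / H**2)
            - math.lgamma(n + 1)           # ln N!
            - math.lgamma(1.5 * n + 1))    # ln Gamma(3N/2 + 1)

def ln_states_sackur_tetrode(n, volume, energy, mass):
    """S / k_B from the Sackur-Tetrode equation (Stirling's approximation applied)."""
    arg = (volume / n) * (4 * math.pi * mass * energy / (3 * n * H**2)) ** 1.5
    return n * (math.log(arg) + 2.5)

# Assumed test case: helium-4 atoms at 300 K and 101325 Pa
mass = 4.002602 * 1.66053907e-27
for n in (1e2, 1e4, 1e6):
    volume = n * K_B * 300.0 / 101325.0
    energy = 1.5 * n * K_B * 300.0
    exact = ln_states_exact(n, volume, energy, mass)
    approx = ln_states_sackur_tetrode(n, volume, energy, mass)
    print(f"N = {n:.0e}  relative difference = {abs(exact - approx) / approx:.2e}")
```

The relative difference shrinks roughly as \(\ln N / N\), which is why the corrections are irrelevant for macroscopic \(N\).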

Comparison with the Einstein solid and the Gibbs factor

The Sackur-Tetrode entropy differs from that of the Einstein solid in a fundamental structural way. For the Einstein solid, the multiplicity \(\Omega(N, q) = \binom{q+N-1}{q}\) is purely combinatorial and depends only on the number of quanta and oscillators; volume plays no role. The ideal gas entropy depends explicitly on volume through \(\ln(V/N)\), reflecting the fact that the gas can sample a larger region of phase space when confined to a larger volume. This is why the ideal gas law \(P = Nk_{\mathrm{B}}T/V\) follows from \(P/T = (\partial S/\partial V)_U\): pressure is a direct consequence of the entropy’s logarithmic volume dependence.

The \(1/N!\) Gibbs factor is quantitatively important. Without it, the entropy would be \(S_\text{wrong} = Nk_{\mathrm{B}}\ln V + \ldots\) rather than \(Nk_{\mathrm{B}}\ln(V/N) + \ldots\). The wrong formula gives \(S_\text{wrong} \propto N\ln V\), which is not extensive: doubling \(N\) and \(V\) simultaneously turns the volume term into \(2Nk_{\mathrm{B}}\ln(2V) = 2Nk_{\mathrm{B}}\ln V + 2Nk_{\mathrm{B}}\ln 2\), so the entropy becomes \(2S_\text{wrong} + 2Nk_{\mathrm{B}}\ln 2\) rather than \(2S_\text{wrong}\). Only with the \(1/N!\) does the entropy scale correctly as an extensive quantity. The \(N!\) factor changes the volume dependence of the logarithm from \(\ln V^N\) to \(\ln(V^N/N!)\), and via Stirling’s approximation, \(\ln(V^N/N!) \approx N\ln(V/N) + N\), the crucial \(N\) appears in the denominator of the logarithm’s argument, ensuring extensivity. Physically, this factor reflects the fact that quantum mechanics forbids assigning persistent identities to identical particles: a configuration of \(N\) ideal gas atoms is fully described by the occupation numbers of single-particle states, not by labelling which atom is in which state.
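The extensivity argument can be illustrated numerically. The sketch below works in units of \(k_{\mathrm{B}}\) and, as a simplifying assumption, sets the constant \(4\pi m/3h^2\) to 1, since it does not affect the scaling test; the values of \(N\), \(V\), and \(U\) are arbitrary.

```python
import math

def entropy_correct(n, volume, energy):
    """S/k_B with the 1/N! factor; the constant 4*pi*m/(3*h^2) is set to 1 for illustration."""
    return n * (math.log(volume / n) + 1.5 * math.log(energy / n) + 2.5)

def entropy_without_gibbs(n, volume, energy):
    """S/k_B if the division by N! is omitted, i.e. ln(N!) is added back."""
    return entropy_correct(n, volume, energy) + math.lgamma(n + 1)

n, volume, energy = 1.0e6, 3.0e6, 5.0e6   # arbitrary illustrative values

for label, s in (("with 1/N!", entropy_correct), ("without 1/N!", entropy_without_gibbs)):
    excess = s(2 * n, 2 * volume, 2 * energy) - 2 * s(n, volume, energy)
    print(f"{label}: S(2N,2V,2U) - 2S(N,V,U) = {excess:.1f} k_B")

print(f"2 N ln 2 = {2 * n * math.log(2):.1f}")
```

With the Gibbs factor the excess vanishes; without it the excess is close to \(2N\ln 2\) in units of \(k_{\mathrm{B}}\), differing only by a term of order \(\ln N\) from the exact factorials.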

Chapter 11: The Boltzmann Distribution and the Canonical Partition Function

Beyond fixed energy: contact with a reservoir

The microcanonical ensemble (fixed \(U\), \(V\), \(N\)) underlying the Einstein solid analysis is elegant but restrictive. Most real systems are in contact with a heat reservoir — a large body at fixed temperature \(T\) with which they can exchange energy. The relevant ensemble is the canonical ensemble, where \(T\), \(V\), and \(N\) are fixed rather than \(U\).

Consider a small system \(s\) in contact with a large reservoir \(r\) at temperature \(T\). The total energy \(U_\text{tot} = \epsilon_s + U_r\) is conserved. By the fundamental assumption, the probability that the system is in a specific microstate with energy \(\epsilon_s\) is proportional to the multiplicity of the reservoir when it has energy \(U_\text{tot} - \epsilon_s\):

\[ P(\epsilon_s) \propto \Omega_r(U_\text{tot} - \epsilon_s) = e^{S_r(U_\text{tot} - \epsilon_s)/k_{\mathrm{B}}}. \]Expanding the reservoir entropy to first order in \(\epsilon_s\) (valid because the reservoir is large):

\[ S_r(U_\text{tot} - \epsilon_s) \approx S_r(U_\text{tot}) - \frac{\epsilon_s}{T}, \]giving the Boltzmann distribution:

\[ \boxed{P(\epsilon_s) = \frac{e^{-\epsilon_s/k_{\mathrm{B}}T}}{Z}, \qquad Z \equiv \sum_j e^{-\epsilon_j/k_{\mathrm{B}}T}.} \]The sum \(Z\) over all microstates of the system is the partition function. The factor \(e^{-\epsilon/k_{\mathrm{B}}T}\) is the Boltzmann factor; higher-energy states are exponentially less probable.
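As an illustration of the boxed result, the sketch below computes Boltzmann probabilities for a short list of microstate energies. The three-level system and its 0.025 eV spacing are hypothetical values chosen only for the example.

```python
import math

K_B = 1.380649e-23     # J/K
EV = 1.602176634e-19   # joules per electronvolt

def boltzmann_probabilities(energies, temperature):
    """Return (probabilities, Z) for microstate energies in joules at temperature T."""
    beta = 1.0 / (K_B * temperature)
    e_min = min(energies)
    # Shifting by the lowest energy leaves the probabilities unchanged and avoids underflow
    weights = [math.exp(-beta * (e - e_min)) for e in energies]
    z_shifted = sum(weights)
    probs = [w / z_shifted for w in weights]
    z = z_shifted * math.exp(-beta * e_min)
    return probs, z

# Hypothetical three-level system with spacings of 0.025 eV, evaluated at 300 K
levels = [0.0, 0.025 * EV, 0.050 * EV]
probs, z = boltzmann_probabilities(levels, 300.0)
print([round(p, 3) for p in probs], round(z, 3))
```

The probabilities sum to one by construction, and the ratio of any two depends only on the energy difference between the states, as the Boltzmann factor requires.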

The partition function and thermodynamics

The partition function encodes all thermodynamic information about the system. The mean energy is

\[ \langle U \rangle = -\frac{\partial \ln Z}{\partial \beta}, \qquad \beta \equiv \frac{1}{k_{\mathrm{B}}T}. \]The Helmholtz free energy is \(F = -k_{\mathrm{B}}T\ln Z\), and all other thermodynamic quantities follow by standard differentiation:

\[ S = -\left(\frac{\partial F}{\partial T}\right)_V, \quad P = -\left(\frac{\partial F}{\partial V}\right)_T, \quad \mu = \left(\frac{\partial F}{\partial N}\right)_{T,V}. \]For a monatomic ideal gas, the partition function factorises over particles: \(Z = z^N/N!\) where \(z = V/\lambda_\text{th}^3\) is the single-particle partition function, and \(\lambda_\text{th} = h/\sqrt{2\pi mk_{\mathrm{B}}T}\) is the thermal de Broglie wavelength. Computing \(F = -k_{\mathrm{B}}T\ln Z\) and then \(S = -(\partial F/\partial T)_V\) recovers the Sackur-Tetrode equation — a satisfying consistency check.
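The consistency check can be written out in a few lines. This is a sketch that assumes Stirling's approximation \(\ln N! \approx N\ln N - N\) and the result \(U = \tfrac{3}{2}Nk_{\mathrm{B}}T\); it uses only symbols already defined above.

\[ \ln Z = N\ln\frac{V}{\lambda_\text{th}^3} - \ln N! \approx N\left[\ln\frac{V}{N\lambda_\text{th}^3} + 1\right], \qquad F = -Nk_{\mathrm{B}}T\left[\ln\frac{V}{N\lambda_\text{th}^3} + 1\right]. \]

Because \(\lambda_\text{th} \propto T^{-1/2}\), we have \(\partial\ln(\lambda_\text{th}^{-3})/\partial T = 3/(2T)\), so

\[ S = -\left(\frac{\partial F}{\partial T}\right)_{V,N} = Nk_{\mathrm{B}}\left[\ln\frac{V}{N\lambda_\text{th}^3} + 1\right] + \frac{3}{2}Nk_{\mathrm{B}} = Nk_{\mathrm{B}}\left[\ln\frac{V}{N\lambda_\text{th}^3} + \frac{5}{2}\right]. \]

Substituting \(\lambda_\text{th}^{-3} = (2\pi mk_{\mathrm{B}}T/h^2)^{3/2}\) with \(k_{\mathrm{B}}T = 2U/3N\) gives \(2\pi mk_{\mathrm{B}}T/h^2 = 4\pi mU/(3Nh^2)\), which reproduces the Sackur-Tetrode form term by term.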

The classical regime corresponds to \(V/N \gg \lambda_\text{th}^3\): the mean volume per particle is much larger than the cube of the thermal de Broglie wavelength. When this condition fails, quantum statistics become essential and the classical partition function breaks down.

Mathematical framework of the partition function

The partition function \(Z(\beta, V, N) = \sum_j e^{-\beta\epsilon_j}\) encodes all thermodynamic information about a system in the canonical ensemble. The connection to the Helmholtz free energy \(F = -k_{\mathrm{B}}T\ln Z\) is the master relation from which everything else follows by differentiation.

The mean energy is obtained by differentiating with respect to \(\beta\):

\[ \langle U \rangle = -\frac{\partial \ln Z}{\partial \beta} = k_{\mathrm{B}}T^2\frac{\partial \ln Z}{\partial T}. \]The entropy is \(S = -(\partial F/\partial T)_V\):

\[ S = k_{\mathrm{B}}\ln Z + \frac{\langle U \rangle}{T} = k_{\mathrm{B}}\left(\ln Z - \beta\frac{\partial \ln Z}{\partial \beta}\right). \]The heat capacity follows as \(C_V = (\partial\langle U\rangle/\partial T)_V = k_{\mathrm{B}}\beta^2\,\partial^2\ln Z/\partial\beta^2\). It is also the energy variance divided by \(k_{\mathrm{B}}T^2\): \(C_V = (\langle U^2\rangle - \langle U\rangle^2)/(k_{\mathrm{B}}T^2)\). This fluctuation-response relation is a special case of the fluctuation-dissipation theorem.
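The fluctuation-response relation can be tested on any small set of levels. The sketch below assumes units with \(k_{\mathrm{B}} = 1\) and an arbitrary four-level spectrum, and compares the variance formula for \(C_V\) with a centred finite difference of \(\langle U\rangle\) with respect to \(T\).

```python
import math

def canonical_averages(energies, beta):
    """Return (ln Z, <E>, <E^2>) for a list of microstate energies."""
    weights = [math.exp(-beta * e) for e in energies]
    z = sum(weights)
    mean_e = sum(e * w for e, w in zip(energies, weights)) / z
    mean_e2 = sum(e * e * w for e, w in zip(energies, weights)) / z
    return math.log(z), mean_e, mean_e2

# Units with k_B = 1; the four levels are arbitrary illustrative values
levels = [0.0, 1.0, 1.5, 3.0]
temp = 0.8

_, mean_e, mean_e2 = canonical_averages(levels, 1.0 / temp)
c_from_variance = (mean_e2 - mean_e**2) / temp**2

# Independent estimate: centred finite difference of <E> with respect to T
dt = 1e-5
_, e_plus, _ = canonical_averages(levels, 1.0 / (temp + dt))
_, e_minus, _ = canonical_averages(levels, 1.0 / (temp - dt))
c_from_derivative = (e_plus - e_minus) / (2 * dt)

print(c_from_variance, c_from_derivative)
```

The two estimates should agree to within the finite-difference error, whatever spectrum is supplied.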

Worked example: two-level system

Consider a single quantum system with two levels: ground state at energy 0 and excited state at energy \(\epsilon\). The partition function is \(Z = 1 + e^{-\beta\epsilon}\). The mean energy is

\[ \langle U \rangle = -\frac{\partial\ln Z}{\partial\beta} = \frac{\epsilon\,e^{-\beta\epsilon}}{1 + e^{-\beta\epsilon}} = \frac{\epsilon}{e^{\beta\epsilon} + 1}. \]The heat capacity is

\[ C = k_{\mathrm{B}}\left(\frac{\epsilon}{k_{\mathrm{B}}T}\right)^2\frac{e^{\epsilon/k_{\mathrm{B}}T}}{(e^{\epsilon/k_{\mathrm{B}}T} + 1)^2}, \]which is the Schottky anomaly encountered in Chapter 9. The entropy is

\[ S = k_{\mathrm{B}}\ln(1 + e^{-\beta\epsilon}) + \frac{\epsilon}{T(e^{\beta\epsilon} + 1)}. \]At high temperatures \((k_{\mathrm{B}}T \gg \epsilon)\), both levels are equally populated and \(S \to k_{\mathrm{B}}\ln 2\) — the maximum entropy for a two-level system. For \(N\) independent such systems, all quantities scale by \(N\): \(\langle U\rangle_N = N\langle U\rangle\), \(C_N = NC\), \(S_N = NS\).
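The limiting behaviour of the two-level results can be tabulated directly. This is a small sketch in reduced units, assuming \(t = k_{\mathrm{B}}T/\epsilon\), with energy in units of \(\epsilon\) and heat capacity and entropy in units of \(k_{\mathrm{B}}\); the sample temperatures are arbitrary.

```python
import math

def two_level(t):
    """Return (U/epsilon, C/k_B, S/k_B) for one two-level system at t = k_B T / epsilon."""
    x = 1.0 / t
    boltz = math.exp(-x)                      # Boltzmann factor of the excited state
    u = boltz / (1.0 + boltz)                 # equals 1 / (e^x + 1)
    c = x * x * boltz / (1.0 + boltz) ** 2    # equals x^2 e^x / (e^x + 1)^2
    s = math.log(1.0 + boltz) + x * u         # ln Z + U/T in reduced units
    return u, c, s

for t in (0.05, 0.42, 1.0, 100.0):
    u, c, s = two_level(t)
    print(f"t = {t:6.2f}  U/eps = {u:.4f}  C/k_B = {c:.4f}  S/k_B = {s:.4f}")
```

At the lowest temperature all three quantities are close to zero; at the highest, the energy approaches \(\epsilon/2\) and the entropy approaches \(\ln 2\), while the heat capacity falls back towards zero.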

Worked example: harmonic oscillator from the partition function

For a quantum harmonic oscillator with energy levels \(\epsilon_n = \hbar\omega(n + 1/2)\), the partition function is \(Z = e^{-\beta\hbar\omega/2}\sum_{n=0}^\infty e^{-n\beta\hbar\omega} = e^{-\beta\hbar\omega/2}/(1 - e^{-\beta\hbar\omega})\). Taking the logarithm: \(\ln Z = -\beta\hbar\omega/2 - \ln(1 - e^{-\beta\hbar\omega})\). Applying \(\langle U \rangle = -\partial\ln Z/\partial\beta\) gives the mean energy:

\[ \langle U \rangle = \frac{\hbar\omega}{2} + \frac{\hbar\omega}{e^{\hbar\omega/k_{\mathrm{B}}T} - 1}. \]The first term is the zero-point energy (present even at \(T = 0\)); the second is the thermal excitation. At high temperatures, \(e^x - 1 \approx x\) gives a thermal term of approximately \(k_{\mathrm{B}}T\); the constant zero-point energy does not contribute to the derivative, so \(C_V = k_{\mathrm{B}}\) per oscillator, consistent with classical equipartition. For an Einstein solid of \(N\) atoms (\(3N\) oscillators, three per atom), the high-temperature limit \(C_V = 3Nk_{\mathrm{B}}\) is the Dulong-Petit law.
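The approach to the equipartition value can be seen numerically. The sketch below assumes reduced units \(t = k_{\mathrm{B}}T/\hbar\omega\) and uses the heat capacity obtained by differentiating the mean energy above with respect to \(T\), namely \(C_V/k_{\mathrm{B}} = x^2 e^x/(e^x - 1)^2\) with \(x = \hbar\omega/k_{\mathrm{B}}T\).

```python
import math

def oscillator_energy(t):
    """Mean energy in units of hbar*omega at reduced temperature t = k_B T / (hbar omega)."""
    return 0.5 + 1.0 / math.expm1(1.0 / t)

def oscillator_heat_capacity(t):
    """C_V / k_B per oscillator: x^2 e^x / (e^x - 1)^2 with x = hbar omega / (k_B T)."""
    x = 1.0 / t
    return x * x * math.exp(x) / math.expm1(x) ** 2

for t in (0.1, 0.5, 1.0, 5.0, 50.0):
    print(f"t = {t:5.1f}  <U> = {oscillator_energy(t):8.4f}  "
          f"C_V/k_B = {oscillator_heat_capacity(t):.4f}")
```

`math.expm1` is used so that \(e^x - 1\) stays accurate when \(x\) is small, which is exactly the high-temperature regime of interest. Multiplying the per-oscillator value by \(3N\) gives the Einstein-solid heat capacity.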

The partition-function route to thermodynamics is superior to the microcanonical route (direct counting) when the energies are complicated or continuous. For systems with many types of excitation — electronic, vibrational, rotational, translational — one can often write \(Z = Z_\text{trans} \times Z_\text{rot} \times Z_\text{vib} \times Z_\text{elec}\) because the energies are approximately additive, and the free energy is then the sum of the free energies of each mode: \(F = F_\text{trans} + F_\text{rot} + \ldots\).
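The factorisation for additive energies can be confirmed by brute-force enumeration. In the sketch below the two level schemes are hypothetical and labelled "rotational" and "vibrational" only for illustration; units with \(k_{\mathrm{B}} = 1\) are assumed.

```python
import math
from itertools import product

def partition_function(energies, beta):
    """Sum of Boltzmann factors over the listed microstate energies."""
    return sum(math.exp(-beta * e) for e in energies)

# Hypothetical level schemes for two independent kinds of excitation (k_B = 1)
rotational = [0.0, 0.2, 0.6, 1.2]
vibrational = [0.0, 2.0, 4.0]
beta = 1.0 / 1.5

# Additive energies: every joint microstate has energy e_rot + e_vib
joint = [e_r + e_v for e_r, e_v in product(rotational, vibrational)]

z_joint = partition_function(joint, beta)
z_product = partition_function(rotational, beta) * partition_function(vibrational, beta)

f_joint = -math.log(z_joint) / beta
f_sum = -(math.log(partition_function(rotational, beta))
          + math.log(partition_function(vibrational, beta))) / beta
print(z_joint, z_product, f_joint, f_sum)
```

The joint sum over all twelve product states equals the product of the two separate sums, and the free energies add accordingly.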

Application: the Einstein solid from the partition function

For a single quantum harmonic oscillator with energies measured from the ground state, \(Z = \sum_{n=0}^\infty e^{-n\beta\hbar\omega} = 1/(1 - e^{-\beta\hbar\omega})\). Restoring the zero-point energy \(\hbar\omega/2\), the mean energy is

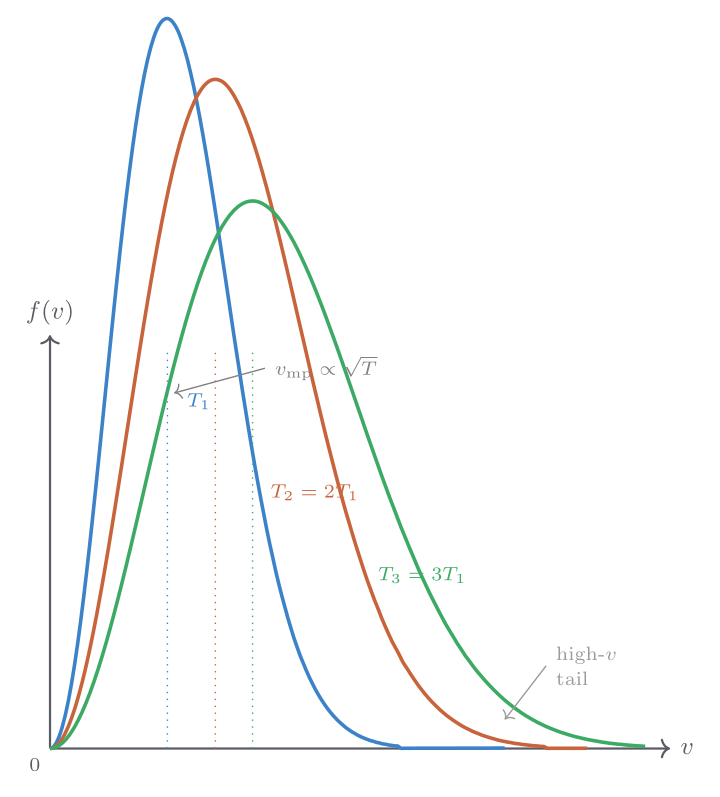

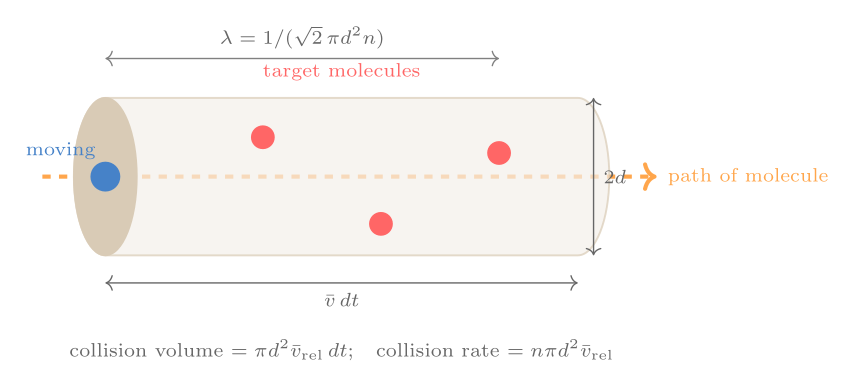

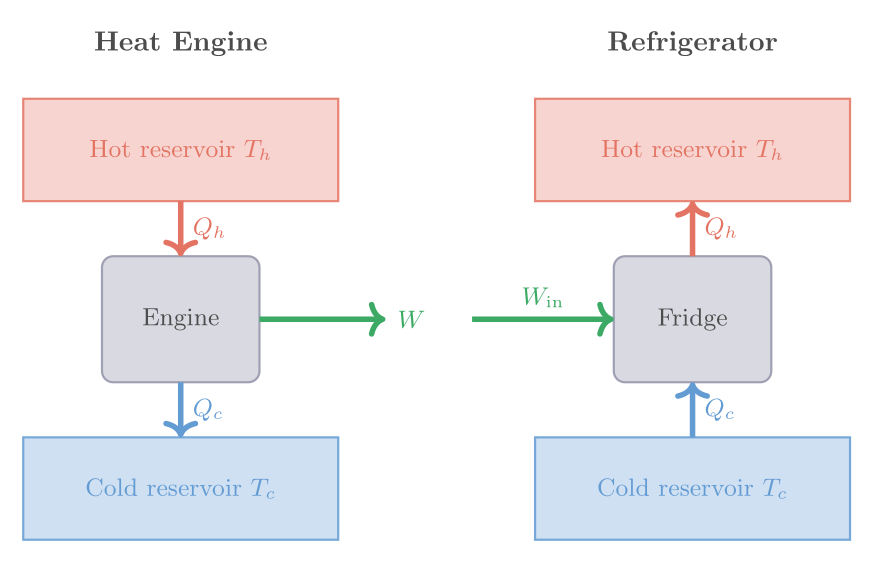

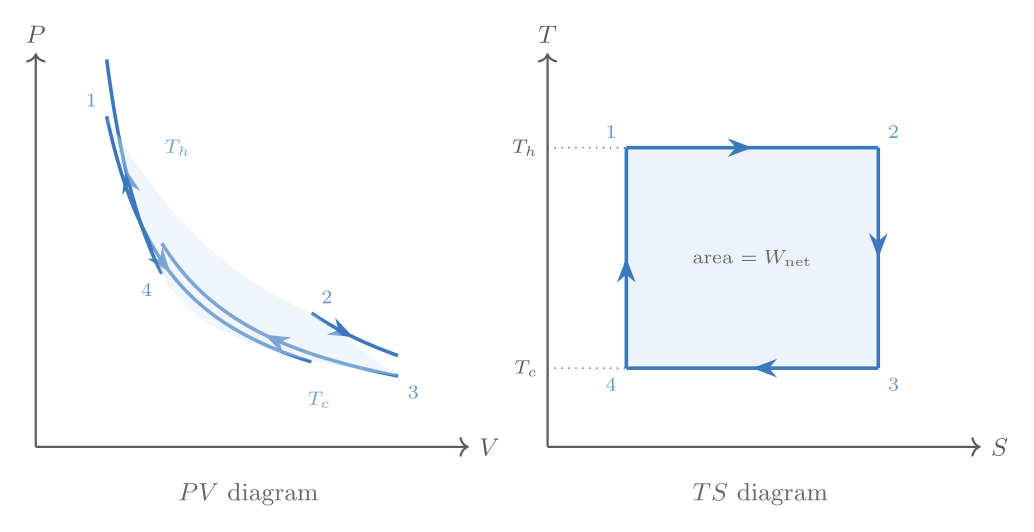

\[ \langle \epsilon \rangle = \frac{\hbar\omega}{e^{\hbar\omega/k_{\mathrm{B}}T} - 1} + \frac{\hbar\omega}{2}. \]For \(N\) oscillators in the Einstein solid, \(\langle U \rangle = N\langle \epsilon \rangle - N\hbar\omega/2\), and the heat capacity is