CS 762: Graph-Theoretic Algorithms

Therese Biedl

Estimated study time: 2 hr 30 min

Table of contents

These notes are based on Felix Zhou’s course notes. Additional explanations, visualizations, and examples have been added for clarity.

Introduction

The central theme of CS 762 is structural graph theory in service of algorithms. Many classical problems — graph coloring, independent set, dominating set — are NP-hard in general. But for graphs belonging to special classes, efficient algorithms frequently exist. Prof. Biedl’s course asks three organizing questions:

- What graph problems become easier when the input belongs to a class \(\mathcal{C}\)?

- How easy is it to recognize membership in \(\mathcal{C}\)?

- What are the structural properties and equivalent characterizations of \(\mathcal{C}\)?

Graph conventions. All graphs are finite, simple, and undirected unless stated otherwise. We write \(n = |V|\) and \(m = |E|\). Graphs are stored as adjacency (incidence) lists: vertex or edge insertion costs \(O(1)\), edge deletion \(O(1)\) given a pointer, vertex deletion \(\Theta(\deg v)\).

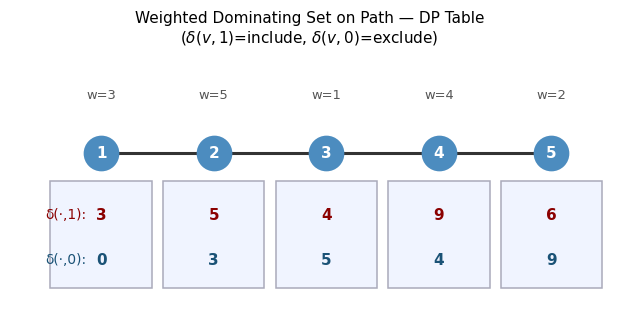

Warm-up: Weighted Dominating Set on Paths. Even before studying special graph classes, dynamic programming can exploit path structure. Define:

\[ \delta(v_i, 1) = \text{weight of best dominating set in } \{v_1,\ldots,v_i\} \text{ containing } v_i \]\[ \delta(v_i, 0) = \text{weight of best dominating set in } \{v_1,\ldots,v_i\} \text{ NOT containing } v_i \]The key insight: if \(v_i \notin D\) then \(v_{i-1}\) must be in \(D\) to dominate \(v_i\). If \(v_i \in D\) then \(v_{i-1}\) is already dominated and we may freely include or exclude it. This yields a linear-time solution — the pattern of “conditioning on the separator between solved and unsolved” recurs throughout the course.

The DP table \(\delta(\cdot,1)\) and \(\delta(\cdot,0)\) for a weighted path graph. Optimal solution is obtained bottom-up.

The DP table \(\delta(\cdot,1)\) and \(\delta(\cdot,0)\) for a weighted path graph. Optimal solution is obtained bottom-up.

Chapter 1: Planar Graphs

Planar graphs form the gateway into structural graph theory. They are among the oldest and most thoroughly understood graph classes, and their study motivates virtually every technique encountered later in the course: embeddings, dual constructions, separator theorems, and forbidden minor characterizations all make their first appearance here. The goal of this chapter is not merely to catalog properties of planar graphs, but to understand why those properties hold and how they can be exploited algorithmically.

1.1 Definitions and Embeddings

A planar graph is one that can be drawn in the plane without edge crossings. Making this precise requires separating the drawing (an analytic object) from the combinatorial information it encodes.

To work rigorously with planarity, we need to make the notion of a “drawing” mathematically precise. The following definition captures the essential requirement: edges are continuous curves, and distinct edge interiors never meet.

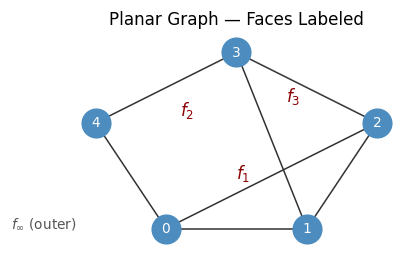

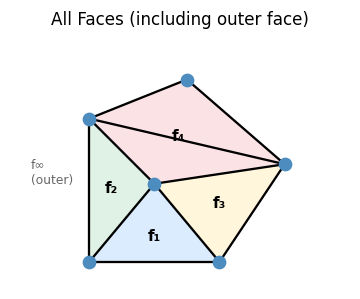

A planar drawing carves the plane into regions. These regions — the faces — are the fundamental objects of planar graph theory, and counting them correctly is what gives Euler’s formula its power.

The existence of the unique unbounded outer face is a topological fact: removing a compact graph from the plane leaves exactly one unbounded connected component.

A planar graph drawn without crossings. Interior faces \(f_1, f_2, f_3\) and the outer face \(f_\infty\) are labeled.

A planar graph drawn without crossings. Interior faces \(f_1, f_2, f_3\) and the outer face \(f_\infty\) are labeled.

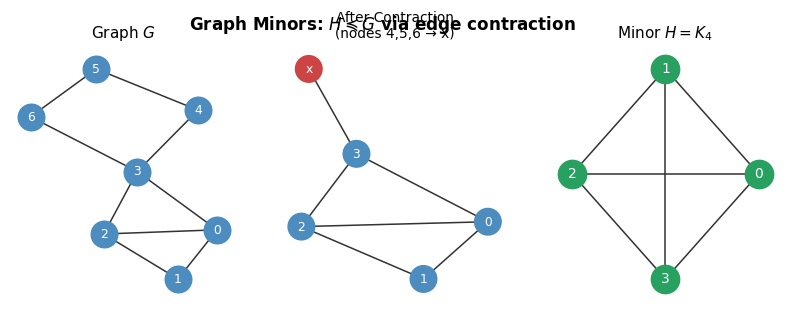

This closure under minors will become central in Chapter 6: it means that planarity is a minor-closed property, and by the Robertson–Seymour theorem this forces a finite forbidden minor characterization — which turns out to be exactly \(\{K_5, K_{3,3}\}\).

The combinatorial essence of a drawing is captured by the cyclic order of edges at each vertex. Two very different drawings can carry the same combinatorial information, so we want a discrete object that captures exactly this information.

A rotation system is a purely combinatorial object — a finite collection of cyclic orderings — with no reference to coordinates. Together with a choice of outer face, it completely encodes the planar embedding.

The combinatorial embedding is remarkably powerful: once we know the cyclic edge orders at every vertex, we can recover all faces of the drawing by following the “next edge at the far endpoint” rule, without ever consulting a geometric picture.

Every face — interior and exterior — can be identified from the rotation system alone.

Every face — interior and exterior — can be identified from the rotation system alone.

The re-rooting lemma tells us that the designation of an “outer face” is a matter of convention rather than a deep structural distinction: every face of a connected plane graph can serve as the outer face. This flexibility is frequently exploited — for instance, in the dual graph construction and in the max-flow algorithm of Section 1.4.

1.2 Euler’s Formula

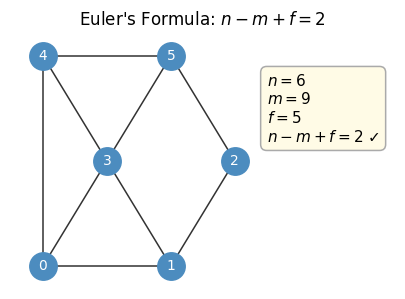

With the basic definitions in hand, we can now derive the most celebrated and useful theorem about planar graphs. Euler’s formula relates the three fundamental counts of a plane graph — vertices, edges, and faces — in a beautifully simple linear relation. That this relation is constant (equal to 2) for every connected plane graph is striking; it says that the topology of the drawing imposes an iron constraint on the combinatorics.

The proof strategy is characteristic of planar graph arguments: induct by reducing faces one at a time, maintaining the formula at each step. The base case — a tree, with exactly one face — is the anchor.

Example: \(n=6\), \(m=9\), \(f=5\) gives \(6 - 9 + 5 = 2\). ✓

Example: \(n=6\), \(m=9\), \(f=5\) gives \(6 - 9 + 5 = 2\). ✓

Euler’s formula immediately implies a tight upper bound on the number of edges in a planar graph. This edge bound is the workhorse of almost every planarity argument.

The proof uses a double-counting argument on the “face-edge incidence” — a technique that recurs throughout the course. An immediate consequence of Euler’s formula is that the number of faces is a topological invariant of the graph — any two planar drawings have the same face count.

Corollary 2.4.1.1. Let \(G\) be planar. Any planar drawing of \(G\) has the same number of faces.

The two bounds (\(m \leq 3n-6\) and \(m \leq 2n-4\)) immediately settle the non-planarity of the two forbidden minors.

Corollary 2.4.1.3. Every simple planar graph has a vertex of degree at most 5.

Lemma 2.4.2. Every simple planar triangle-free graph with at least 3 vertices has at most \(2n - 4\) edges.

The non-planarity of \(K_5\) and \(K_{3,3}\) is more than a curiosity: Kuratowski’s theorem asserts that every non-planar graph contains one of them as a subdivision. In this sense \(K_5\) and \(K_{3,3}\) are the complete obstruction theory for planarity.

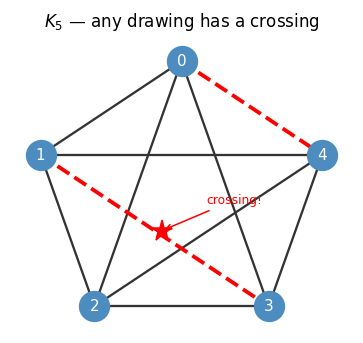

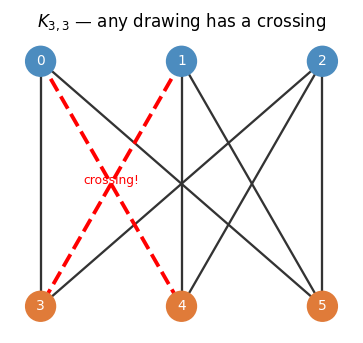

\(K_5\): any planar drawing must have at least one crossing (red dashed edges). The red star marks the crossing point.

\(K_5\): any planar drawing must have at least one crossing (red dashed edges). The red star marks the crossing point.

\(K_{3,3}\): the complete bipartite graph on \(3+3\) vertices cannot be embedded in the plane without a crossing.

\(K_{3,3}\): the complete bipartite graph on \(3+3\) vertices cannot be embedded in the plane without a crossing.

Kuratowski’s theorem is one of the great gems of combinatorics: it reduces the potentially uncountable family of non-planar graphs to just two obstructions. Wagner’s formulation replaces topological minors with graph minors, and both versions play roles in the course.

1.3 Algorithmic Consequences

The edge bound \(m \leq 3n-6\) is not only a non-planarity certificate; it is the source of several linear or near-linear-time algorithms. Every planar subgraph must satisfy the same bound, so every subgraph of a planar graph has minimum degree at most 5. This minimum-degree guarantee is the key to efficient greedy algorithms.

Definition 2.4.1 (Minimum-Degree Order). A vertex order \(v_1, \ldots, v_n\) such that \(v_i\) has minimum degree in \(G[v_1, \ldots, v_n]\).

Lemma 2.4.4. Every planar graph has a minimum-degree order \(v_1, \ldots, v_n\) such that each \(v_i\) has at most 5 predecessors. It can be found in linear time.

Minimum-degree order. Since every planar subgraph also has minimum degree \(\leq 5\) (from the edge bound), we can build a vertex order \(v_1, \ldots, v_n\) where each \(v_i\) has at most 5 predecessors in \(O(n)\) time.

Six-coloring. Greedy coloring along the minimum-degree order uses at most 6 colors. A more careful argument gives a linear-time 5-coloring algorithm.

The Four Color Theorem required a computer-assisted proof and remains one of the most computationally demanding results in mathematics. In contrast, the 5-colorability of planar graphs admits a clean inductive proof using the minimum-degree order — an instructive instance of how structural bounds yield algorithmic dividends.

Theorem 2.4.6. Any planar graph can be stored using \(O(n)\) space so that adjacency testing is \(O(1)\).

Adjacency testing. Storing predecessors in the minimum-degree order allows \(O(1)\) adjacency testing using \(O(n)\) space (since each vertex has \(\leq 5\) predecessors).

Maximum clique. Using \(O(1)\) adjacency testing, we can find maximum cliques in planar graphs by checking each vertex’s constant-size predecessor set.

Dijkstra’s algorithm. Since \(m \in O(n)\) for planar graphs, binary heaps suffice and Fibonacci heaps are unnecessary: shortest paths run in \(O(n \log n)\).

1.4 Dual Graphs

One of the most powerful ideas in planar graph theory is the construction of the dual graph, which interchanges the roles of vertices and faces. Historically this duality underpins Kirchhoff’s laws for electrical networks and, in modern form, gives the clean connection between minimum cuts and shortest paths in planar graphs.

The dual is itself a plane graph (embed each dual vertex inside its corresponding face, and route dual edges across primal edges). Crucially, taking the dual twice recovers the original graph.

Lemma 2.3.1. Let \(G\) be a connected plane graph. Then \((G^*)^* \cong G\).

Lemma 2.3.2. The dual graph of any connected plane graph \(G\) can be computed in \(O(m + n)\) time.

Definition 2.3.2 (Polygon Mesh). A way to approximate surfaces by small polygons. For genus-0 surfaces the polygon mesh is a planar graph. A triangular mesh is one where every face is a triangle; converting it to a quadrangular mesh is equivalent to finding a perfect matching in the dual.

This involution — dualizing twice yields the original — is what makes planar duality such a clean and useful tool. It converts cut problems in the primal to cycle problems in the dual, and vice versa.

Application — polygon mesh conversion. Given a triangular mesh (a triangulated planar graph), converting it to a quadrangular mesh means finding a perfect matching in the dual graph — a classical task solvable in linear time.

Application — maximum flow via shortest paths. For an undirected flow network that is \(st\)-planar (both \(s\) and \(t\) lie on the outer face), the minimum \(st\)-cut corresponds to a shortest path in the dual. We first set up the formal definitions of flow networks.

Definition 3.2.1 (Flow Network). A simple graph \(G\) with two distinct vertices \(s, t\) and a capacity function \(c : E \to \mathbb{R}^+\). The network is undirected if \(c(i,j) = c(j,i)\) for all \(ij \in E\).

Definition 3.2.2 (Network Flow). A function \(x : V \times V \to \mathbb{R}^+\) such that: (i) \(x(ij) > 0 \Rightarrow ij \in E\); (ii) \(x(ij) - x(ji) \leq c(ij)\) (capacity constraint); (iii) \(x(\delta(v)) = x(\delta(\bar v))\) for all \(v \neq s, t\) (balance constraint).

Definition 3.2.3 (Flow Value). The value of a flow \(x\) is \(x(\delta(s)) - x(\delta(\bar s))\), the net flow out of \(s\).

Definition 3.2.4 (st-Cut). A partition \((S, \bar S)\) of the vertices with \(s \in S\) and \(t \notin S\).

Definition 3.2.5 (Cut Value). The value of a cut \((S, \bar S)\) is \(x(\delta(S))\), the total capacity of edges crossing from \(S\) to \(\bar S\).

Lemma 3.2.1. If \(x\) is a flow and \((S, \bar S)\) is an \(st\)-cut, then \(x(\delta(s)) - x(\delta(\bar s)) \leq x(\delta(S))\).

Theorem 3.2.2 (Maximum-Flow Minimum-Cut). For any network, the value of the maximum \(st\)-flow equals the value of the minimum \(st\)-cut.

For planar graphs, a minimum cut corresponds to a cycle in the dual. More precisely:

Lemma 3.2.3. A minimum cut in an undirected planar network \((G, s, t)\) corresponds to a shortest-weight cycle in the dual graph \(G^*\) that separates \(s\) and \(t\).

For st-planar graphs, this is made algorithmic via the dual network:

Definition 3.2.6 (Dual Network for st-Planar Graph). Add an edge \(st\) in the primal to obtain \(G'\). Take the dual \((G')^*\) and delete the edge between the two faces \(s^*, t^*\) incident to \(st\). For any other dual edge \(e^*\), set \(w(e^*) = c(e)\).

Definition 2.2.2 (st-Planar). A graph is st-planar if it has a planar embedding in which both \(s\) and \(t\) lie on the outer face.

Lemma 3.2.4. The length of the shortest \(s^*t^*\)-path in the dual network equals the value of maximum flow in the primal network.

Theorem 3.2.5. Maximum flow in an undirected st-planar graph can be computed in \(O(n)\) time.

The key construction: add a temporary edge \(st\) on the outer face, form \(G'\), take its dual \((G')^*\), remove the dual edge between the two faces incident to \(st\), and set dual edge weights equal to primal capacities. A shortest \(s^*t^*\)-path in this network yields both the maximum flow value and a minimum cut. This result is a striking demonstration of planar duality at work: a flow problem in the primal becomes a shortest-path problem in the dual, and linear-time algorithms that would be impossible in general graphs become accessible.

1.5 NP-Hard Problems in Planar Graphs

Not everything becomes easy on planar graphs. Several fundamental problems remain NP-hard even when the input is planar, and understanding why — through carefully constructed reductions — reveals the limits of structural restrictions. The two main proof techniques exploit either the ability to simulate crossings with planar gadgets, or the fact that a simpler NP-hard problem (Planar 3-SAT) is already stated on a planar object.

Several problems remain NP-hard even restricted to planar graphs. The proof techniques are:

- Crossing gadget: reduce from the general (non-planar) version using a gadget that simulates a crossing while preserving planarity.

- Planarity-preserving reduction: start from an NP-hard problem already stated on planar graphs (e.g., Planar 3-SAT) and show the reduction preserves planarity.

These hardness results are important calibration points: they tell us that planarity alone is not a sufficient structural restriction for tractability. To get polynomial-time algorithms for hard problems, we need additional structural parameters — which is precisely the motivation for treewidth and the separator hierarchy developed in Chapters 3 and 4.

The crossing gadget argument works as follows. For 3-coloring, a crossing gadget is a planar subgraph on four boundary vertices \(a, a', b, b'\) such that any 3-coloring forces \(c(a) = c(a')\) and \(c(b) = c(b')\), while both same-color and different-color assignments are achievable.

Lemma 3.1.1. In any 3-coloring of the crossing gadget, \(c(a) = c(a')\) and \(c(b) = c(b')\). Moreover, there is a 3-coloring where \(c(a) = c(b)\) and one where \(c(a) \neq c(b)\).

Using this gadget, any graph \(G\) can be made planar while preserving 3-colorability.

Proposition 3.1.2. \(G\) is 3-colorable if and only if the planarized graph \(G_1\) (with crossings replaced by gadgets) is 3-colorable.

Planar 3-SAT is central: given a 3-SAT formula, build a graph with one vertex per variable, one per clause, edges for (literal, clause) containment, and a cycle over variables. If this SAT graph is planar, the instance is Planar 3-SAT.

Definition 3.1.1 (3-SAT Graph). Given an instance of 3-SAT, the corresponding SAT-graph has: one vertex for every variable \(x_i\); one vertex for every clause \(c_j\); an edge \(x_i c_j\) iff \(c_j\) contains \(x_i\) or \(\bar x_i\); and a variable cycle \(x_1, x_2, \ldots, x_n, x_1\).

Theorem 3.1.3. 3-SAT reduces to Planar 3-SAT, so Planar 3-SAT is NP-hard.

The reduction makes the SAT graph planar by rerouting the variable cycle and using crossing gadgets. The key reductions for other problems follow:

Theorem 3.1.4. 3-SAT reduces polynomially to Independent Set: given a 3-SAT instance with \(N\) variables and \(M\) clauses, construct a graph \(G'\) with a clause-triangle per clause and a variable-cycle per variable. Then the 3-SAT instance is satisfiable iff \(G'\) has an independent set of size \(M + \sum_i \deg(x_i)\).

Proposition 3.1.5. Applying the same reduction to Planar 3-SAT yields an instance of Independent Set whose graph is planar. Thus Independent Set is NP-hard in planar graphs.

Corollary 3.1.5.1. Independent Set is NP-hard even in planar graphs with maximum degree 3.

Chapter 2: Graph Drawing and Embeddings

Chapter 1 established the basic theory of planarity. This chapter turns to finer questions about how graphs are embedded: how to search a planar graph in a way that respects the embedding, how to test planarity algorithmically, and how to produce aesthetically and mathematically canonical drawings. These questions have both theoretical and practical significance — canonical orderings and straight-line drawings underlie modern graph visualization tools.

2.1 Right-First Search

Ordinary DFS ignores the geometry of a planar embedding and treats all outgoing edges symmetrically. In a plane graph, there is a canonical way to break ties: use the cyclic edge order at each vertex. Right-First Search is a DFS that breaks ties using the embedding: at each vertex, edges are explored in counter-clockwise order with the incoming edge last.

RightFirstSearch(G, v₀, e₀):

discovered[v] = None for all v

def recurse(v, e):

discovered[v] = e # arrived via e

for eᵢ in edges at v in CCW order, with e last:

v, w = eᵢ

if discovered[w] is None:

recurse(w, eᵢ)

recurse(v₀, e₀)

The key insight is that always taking the “rightmost” option at each step produces paths with a global optimality property. To make this precise, we need to define what “rightmost” means globally.

The right-most path is the one that “hugs” the rightmost part of the plane as tightly as possible. The following lemma says that greedy right-first choices globally produce this extremal path.

This lemma is the engine behind the Menger algorithm for st-planar graphs: right-most paths in an st-planar graph are “as far apart as possible,” so finding them greedily and removing used vertices produces a maximum collection of vertex-disjoint paths.

Menger’s problem in \(st\)-planar graphs. Given an \(st\)-planar directed graph, find the maximum number of vertex-disjoint \(st\)-paths. Algorithm:

- Temporarily insert edge \(ts\) on the outer face.

- Run right-first search from \(s\) relative to \(ts\).

- If \(t\) is reached, output the path, remove used vertices (excluding \(s, t\)), repeat.

Since each call only visits previously unvisited edges, total time is \(O(m + n)\). The right-most lemma guarantees optimality.

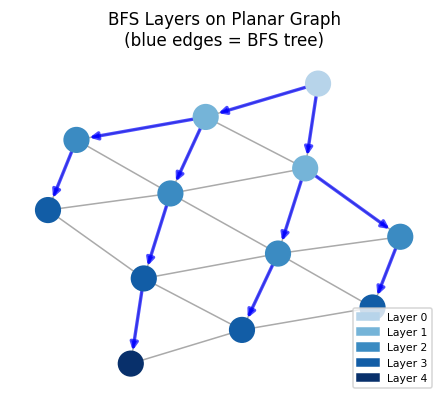

BFS layering reveals structure exploitable by algorithms like Baker’s PTAS. Blue edges form the BFS tree; node shading encodes distance from the root.

BFS layering reveals structure exploitable by algorithms like Baker’s PTAS. Blue edges form the BFS tree; node shading encodes distance from the root.

2.2 Planarity Testing

A graph is planar if and only if a planar embedding exists. Recognizing planarity and constructing the embedding can both be done in linear time. This is a remarkable algorithmic achievement: planarity is a global topological property, yet it can be certified by a local, incremental DFS procedure.

The key idea is to build the embedding one DFS subtree at a time. At each step, we maintain the invariant that the partial embedding is a valid “bush form.”

The validity condition ensures that the “pending” edges (those connecting the current partial subgraph to the rest of the graph) can all be routed out through a single face, leaving room for the rest of the embedding to be appended.

Lemma 4.1.1. Let \(G\) be planar and \((V', \bar{V}')\) be a cut for which \(G[V \setminus V']\) is connected. Then \(G[V']\) has a planar embedding where all endpoints of cut edges lie on the outer face.

The Haeupler–Tarjan algorithm runs DFS and, at each retreat from a vertex \(w\) to parent \(v\), checks whether the bush form of \(D(w)\) (descendants of \(w\)) can accommodate the edges from \(v\) into \(D(w)\) consecutively on the outer face. Two auxiliary lemmas drive the correctness argument.

Lemma 4.2.1. If \(G\) is planar, then for any tree edge \(vw\) with \(v\) the parent, the bush form \(B(D(w))\) has a valid embedding where the leaves of cut-edges are consecutive on the outer face.

Lemma 4.2.2. PQ-trees can be implemented such that a reduction for constraint \(I\) takes \(O(|I|)\) amortized time.

Lemma 4.2.3. Let \(X\) be a finite set and \(\mathcal{I}\) a finite set of consecutive constraints. We can test whether there is a permutation of \(X\) such that for every \(I \in \mathcal{I}\) the elements are consecutive in \(O(\sum_{I \in \mathcal{I}} |I|)\) time.

Proposition 4.2.4. At the time we retreat from \(w\) to its parent \(v\), if \(G\) is planar then a reduction of the PQ-tree \(PQ(w,t)\) with respect to constraint \(I := S(v)\) is successful.

This is tracked using PQ-trees — a data structure specifically designed for the problem of maintaining consecutive orderings under constraints.

PQ-trees encode a potentially exponential family of permutations in a polynomial-sized structure, and reductions are cheap precisely because the planarity constraints imposed at each DFS step are local. This amortized efficiency is what enables the linear overall runtime.

This is optimal: any algorithm must at least read the entire input graph, which takes \(\Omega(m)\) time.

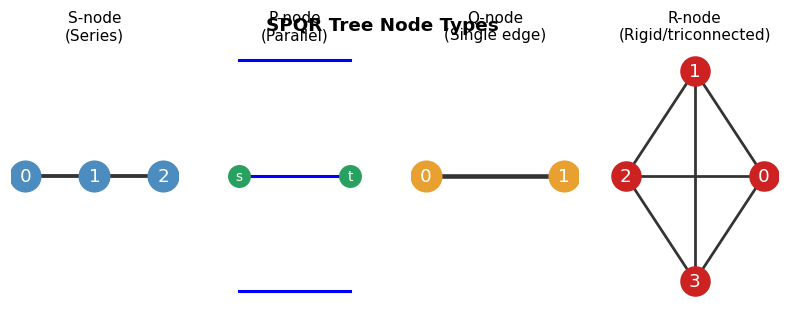

The four SPQR-tree node types encode biconnected components: S (series), P (parallel), Q (single edge), R (rigid/triconnected). The SPQR tree gives a compact representation of all planar embeddings of a biconnected graph.

The four SPQR-tree node types encode biconnected components: S (series), P (parallel), Q (single edge), R (rigid/triconnected). The SPQR tree gives a compact representation of all planar embeddings of a biconnected graph.

2.3 Triangulated Graphs and Canonical Orderings

To produce straight-line and grid drawings of planar graphs, we need a special vertex ordering that allows an inductive construction of the drawing. Triangulated graphs — those where every face is a triangle — are the natural starting point, since every planar graph can be triangulated by adding edges. The canonical ordering is the key algorithmic tool.

Triangulated planar graphs are the “densest” planar graphs possible — adding any edge would create a crossing. Three useful characterizations hold simultaneously:

Lemma 5.1.1. The following are equivalent for a simple planar graph with \(n \geq 3\): (1) \(G\) is triangulated; (2) \(G\) is maximal planar; (3) \(G\) has exactly \(3n - 6\) edges.

Definition 5.1.1 (Maximal Planar Graph). A simple planar graph to which no edge can be added while preserving planarity.

Lemma 5.1.2. Any triangulated graph with \(n \geq 3\) has exactly \(2n - 4\) faces.

Lemma 5.1.3. Any simple triangulated graph is 3-connected.

Lemma 5.1.4. Any simple planar graph can be made triangulated by adding edges.

The triangulated disk is the building block for inductive constructions:

Definition 5.2.1 (Inner Triangulated Graph). A set of points and straight-line edges such that all interior faces are triangles.

Definition 5.2.2 (Triangulated Disk). A planar graph with a planar drawing that is inner triangulated and whose outer face is a simple cycle.

Lemma 5.2.1. Any triangulated disk \(G\) is 2-connected. Furthermore, any separating pair \(\{v, w\}\) has both \(v, w\) on the outer face connected by a chord.

The canonical ordering is designed so that each new vertex \(v_k\) “sits on top of” a consecutive arc of the current outer face, making an inductive placement of vertices geometrically consistent.

Lemma 5.3.1. Let \(G\) be a triangulated graph with canonical order \(v_1, \ldots, v_n\). For each \(3 \leq k \leq n-1\), the predecessors of \(v_k\) on the outer face of \(G_{k-1}\) form a consecutive path.

Lemma 5.3.2. Let \(G\) be a triangulated disk with outer-face edge \(ab\). Then \(G\) has a vertex order \(v_1 = a, v_2 = b, v_3, \ldots, v_n\) such that for \(i \geq 3\), vertex \(v_i\) has at least two predecessors on the outer face of \(G[v_1, \ldots, v_i]\) and at least one successor.

Theorem 5.3.3. Let \(G\) be a triangulated planar graph with outer face \(\{a, b, t\}\). There is a canonical order of \(G\) starting \(v_1 = a\), \(v_2 = b\), \(v_n = t\).

Lemma 5.3.4. A canonical order of a triangulated planar graph can be found in linear time.

The existence of a canonical ordering is not obvious: it requires showing that there always exists a vertex whose removal leaves the graph in the right inductive state. The proof uses the structure of 2-connected triangulated disks.

Key property (maintained inductively): \(G_k = G[v_1, \ldots, v_k]\) is a triangulated disk — 2-connected, all inner faces are triangles, the outer face is a simple cycle. The predecessors of \(v_{k+1}\) form a consecutive interval on the outer face of \(G_k\).

Schnyder Wood. The canonical ordering induces a partition of the edges of a triangulated graph into 3 spanning forests (actually spanning trees, after accounting for vertex indices).

Theorem 5.3.5. The edge set of any triangulated simple graph can be partitioned into 3 edge-disjoint trees.

Definition 5.3.2 (Schnyder Wood). The three trees obtained from a canonical order as above.

Definition 5.3.3 (Arboricity). The minimum number of forests needed to cover all edges of a graph \(G\).

Corollary 5.3.5.1. Every planar graph has arboricity at most 3.

The arboricity bound is sharp: the icosahedron has arboricity exactly 3. It also implies that any planar graph can be 3-colored as a “forest” rather than a graph — a useful structural decomposition.

Straight-line embedding (Tutte / Canonical Order). The canonical ordering constructively proves that planar graphs do not need curved edges in their drawings — every planar graph has a drawing where all edges are straight line segments.

The proof strategy — place each new vertex in the interior of the triangle formed by its outer-face neighbors — is an inductive geometric argument enabled entirely by the canonical ordering.

Visibility representation. A bar visibility representation maps each vertex to a horizontal segment and each edge to a vertical segment connecting its endpoints. The canonical ordering implies every triangulated graph has a strong visibility representation, with coordinates in \([1, 3n-6] \times [1, n]\).

Definition 5.3.4 (Bar Visibility Representation). Each vertex is a horizontal line segment. Each edge is a vertical line segment connecting the bars of its endpoints and intersecting no other bars.

Definition 5.3.5 (Strong Visibility Representation). A bar visibility representation where edges between two vertices exist if and only if there is some vertical line intersecting both horizontal vertex segments and nothing else.

Definition 5.3.6 (Weak Visibility Representation). A bar visibility representation where the strong model rule does not necessarily apply.

Theorem 5.3.6. Every triangulated graph has a strong visibility representation.

Corollary 5.3.6.1. Every planar graph has a weak visibility representation. (Proof: triangulate and apply the theorem.)

Corollary 5.3.6.2. Any planar graph has a weak visibility representation for which all endpoints are grid points in a grid of size \(O(n) \times O(n)\).

Theorem 5.3.7. Every planar graph is the minor of a \(k \times k\)-grid for \(k \leq 3n\).

Theorem 5.3.8. Every simple planar graph has a straight-line embedding.

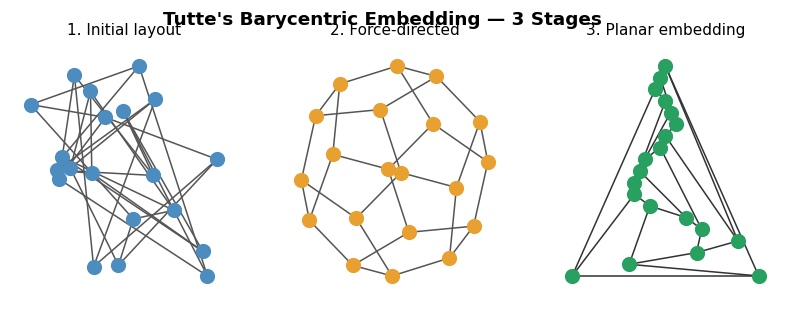

Three stages of Tutte’s barycentric embedding on the dodecahedron: (1) arbitrary initial placement, (2) force-directed layout, (3) final planar embedding.

Three stages of Tutte’s barycentric embedding on the dodecahedron: (1) arbitrary initial placement, (2) force-directed layout, (3) final planar embedding.

Chapter 2.5: Graph Classes Beyond Planar

Planar graphs are the starting point of structural graph theory, but they are far from the end of the story. Once we have a clean characterization of one class — planar graphs — the natural question is: what lies on either side? Are there useful sub-classes of planar graphs, and are there larger classes that capture some but not all of the planarity structure?

This chapter charts a hierarchy of graph classes organized by how “planar-like” they are. On the more restrictive side, we have outerplanar graphs (all vertices on the outer face), series-parallel graphs (built by series and parallel combinations), and Apollonian networks (built by iterating triangle subdivision). Each restriction imposes stronger structural constraints and enables more efficient algorithms. On the less restrictive side, we have near-planar graphs — those with bounded crossing number, bounded skew number, or bounded local crossing number — and 3D graphs, which can always be drawn in three dimensions without any crossings.

The deeper purpose of this survey is to calibrate our intuition for treewidth. Outerplanar graphs have treewidth at most 2; series-parallel graphs are precisely the partial 2-trees; Apollonian networks are 3-trees. Each sub-class of planar graphs sits at a specific treewidth level in the hierarchy we will make precise in Chapter 4. Understanding this hierarchy — and which problems are tractable at each level — is the conceptual scaffold for the entire second half of the course.

2.5.1 Graphs in 3D

Surprisingly, the restriction to the plane is what causes crossing problems. In three dimensions, there is so much “room” that any graph whatsoever can be drawn without crossings using only straight edges. The proof uses the moment curve — a one-dimensional algebraic variety in \(\mathbb{R}^3\) with the property that no four of its points are coplanar.

Theorem 6.1.1. Any graph can be drawn straight-line without crossings in 3D.

The proof places vertices on the moment curve \(\{(x, x^2, x^3) : x \in \mathbb{R}^+\}\). Any potential crossing would require 4 coplanar points on the moment curve, but Vandermonde determinants show no 3 distinct points on the moment curve are collinear, so no 4 are coplanar.

Definition 6.1.1 (Knot-Less Graph). A graph that can be drawn in 3D such that any cycle is homeomorphic to the unit circle.

Definition 6.1.2 (Link-Less Graph). A graph that can be drawn in 3D such that no two disjoint cycles form curves that are linked.

2.5.2 Near-Planar Graphs

If we must work in the plane but cannot avoid all crossings, the natural question is how few crossings are unavoidable. The crossing number of a graph is the minimum over all drawings. Allowing even one crossing dramatically expands the class — graphs of crossing number 1 include many non-planar graphs — but crossing number remains NP-hard to compute. Weaker variants (bounded skew number, bounded local crossings) give tractable recognition problems and often preserve enough planar structure for algorithmic purposes.

Definition 6.1.3 (Bounded Crossing Number). A graph \(G\) has crossing number \(k\) if there is a drawing of \(G\) in the plane with exactly \(k\) crossings.

Definition 6.1.4 (Bounded Skew Number). A graph has skew number \(k\) if there exists a drawing of \(G\) in the plane with at most \(k\) edges removed to make it planar, and \(k\) is minimal.

Definition 6.1.5 (\(k\)-Planar Graphs). Graphs that can be drawn such that any edge crosses at most \(k\) other edges.

2.5.3 Outer-Planar Graphs

Outer-planar graphs are the simplest proper sub-class of planar graphs. The requirement that every vertex lies on the outer face is a strong restriction: it means the graph is “one-dimensional” in a sense — you can always see all vertices from the outside. This makes many hard problems tractable. For instance, any maximal outer-planar graph is a triangulated disk whose dual is a tree, so problems that require global reasoning on a general planar graph reduce to tree problems on outer-planar graphs.

The key algorithmic leverage is that outer-planar graphs have treewidth at most 2: their structure is captured by a tree of triangles (the interior dual), and the DP from Chapter 4 runs in \(O(n)\). This connection — outerplanar equals partial 2-tree — will be made precise in Section 4.4.

Definition 6.2.1 (Outer-Planar). A graph with a planar embedding such that all vertices lie on the outer face.

Lemma 6.2.1. A graph \(G\) is outer-planar if and only if adding a universal vertex \(u\) (adjacent to all others) yields a planar graph.

Corollary 6.2.1.1. Outer-planarity testing can be done in \(O(n)\) time.

Theorem 6.2.2. Any maximal outer-planar graph \(G\) with \(n \geq 3\) vertices satisfies: (1) every interior face is a triangle; (2) the outer face is a simple cycle (Hamiltonian); (3) \(G\) is a triangulated disk; (4) \(G\) has exactly \(n - 2\) interior faces; (5) \(G\) has exactly \(2n - 3\) edges.

Lemma 6.2.3. Let \(G\) be a maximal outer-planar graph. Then \(G^* = \{v^*\} \cup T\) where \(v^*\) is the outer-face vertex and \(T\) is a tree with maximum degree 3.

Lemma 6.2.4. Every maximal outer-planar graph has a vertex ordering \(v_1, \ldots, v_n\) such that \(v_1 v_2\) is an outer-face edge and each \(v_i\) (\(i \geq 3\)) has exactly two predecessors that are adjacent.

The vertex order from Lemma 6.2.4 admits an easy linear-time 3-coloring by greedy on this order.

Definition 6.2.2 (\(k\)-Outer-Planar Graphs). Graphs that can be embedded in the plane such that all vertices are removed after removing the outer face \(k\) times (onion peeling).

Theorem 6.2.5. Let \(G\) be a planar graph with a planar grid-drawing in a \(w \times h\) grid. Then the outer-planarity of \(G\) is at most \(\min\!\left(\lceil w/2 \rceil, \lceil h/2 \rceil\right)\).

2.5.4 Series-Parallel Graphs

Series-parallel graphs generalize the circuits of electrical engineering. Any resistor network built from series and parallel combinations — no Wheatstone bridge, no \(Y\)-\(\Delta\) configuration — is a series-parallel graph. The recursive construction makes many optimization problems (shortest paths with constraints, maximum flow, network reliability) solvable in linear time by a simple bottom-up computation on the SP-tree. Series-parallel graphs sit at exactly treewidth 2 in the hierarchy: they are the partial 2-trees, strictly more general than outerplanar graphs but still far more tractable than general planar graphs.

Definition 6.2.3 (2-Terminal SP-Graph). A graph with two terminals \(s, t\) built inductively: base case is a single edge \(st\); series combination identifies \(t_1\) with \(s_2\); parallel combination identifies both \(s_1 = s_2\) and \(t_1 = t_2\).

Lemma 6.2.6. Let \(G\) be a 2-terminal SP-graph with edge \(vw\). Both (1) duplicating \(vw\) and (2) subdividing \(vw\) with a new vertex yield 2-terminal SP-graphs.

Lemma 6.2.7. Let \(G\) be a 2-terminal SP-graph with \(n \geq 3\) and terminals \(s, t\). Then: (1) \(G\) has at most \(2n - 3\) edges; (2) \(G\) is either a single edge or contains a vertex of degree 2; (3) \(G\) is planar with terminals on the outer face; (4) \(G\) has a 3-coloring where the terminals receive different colors; (5) \(G\) has an acyclic edge orientation with \(s\) the only source and \(t\) the only target.

Definition 6.2.4 (Series-Parallel Graph). A graph that is a spanning subgraph of some 2-terminal series-parallel graph.

2.5.5 Apollonian Networks

Apollonian networks sit at treewidth 3 in the hierarchy. They arise naturally in geometry — the Apollonian gasket of classical circle-packing is their continuous analog — and they serve as the canonical example of 3-trees. Every Apollonian network is triangulated, planar, and has a clean recursive structure: at each step, a new vertex is inserted into a triangular face and connected to all three triangle vertices. This inductive definition gives a perfect elimination order almost by construction.

Definition 6.2.5 (Apollonian Network). A triangulated plane graph obtained as follows: base case is a triangle; inductive step inserts a new vertex inside a triangular inner face and connects it to all three triangle vertices.

Lemma 6.2.8. Any Apollonian network on \(n \geq 4\) vertices has a vertex order \(v_1, \ldots, v_n\) where \(v_1, v_2, v_3\) form a triangle and for \(i \geq 4\), vertex \(v_i\) has exactly three predecessors that form a triangle. Hence every Apollonian network is a 3-tree.

Chapter 3: Separators and Decompositions

The previous chapters developed the rich structure of planar graphs and their embeddings. We now turn to one of the most powerful consequences of planarity for algorithm design: the existence of small separators. A separator is a small set of vertices whose removal splits the graph into balanced pieces. Small separators enable divide-and-conquer algorithms with sub-quadratic running times for problems that are otherwise expensive, and they lead directly to the concept of treewidth explored in Chapter 4.

3.1 Separators

The central question is: how cheaply can we split a planar graph into balanced pieces? “Cheaply” means using few vertices as the separator. If a separator has \(s\) vertices, then to solve a problem by divide-and-conquer we pay at most \(O(s \cdot T(n/2) + s \cdot C)\) for the boundary work, where \(C\) is the per-separator-vertex cost. For the recursion to beat \(O(n^2)\), we need \(s = o(n)\). The Lipton–Tarjan theorem gives \(s = O(\sqrt{n})\), which is enough to beat quadratic time for a wide range of problems.

To measure “balance,” we allow vertices to have weights and ask that no piece of the divided graph is too heavy. This generality is important for the recursive application of the separator theorem.

A \(\frac{1}{2}\)-separator is the strongest case: every piece has at most half the total weight. Separators of size \(O(\sqrt{n})\) in planar graphs — the Lipton–Tarjan theorem — are what make the divide-and-conquer algorithms of Section 3.2 possible.

Definition 15.1.2 (Uniform Weights). The weight function \(w(v) = 1\) for all \(v \in V\).

Lemma 15.2.1. The \(a \times b\)-grid has a \(\frac{1}{2}\)-separator of size \(\min(a, b)\).

Lemma 15.2.2. The \(k \times k\)-grid does NOT have a \(\frac{1}{2}\)-separator of size \(\frac{1}{2}k\), even for uniform weights.

Lemma 15.2.3. The 3-tree obtained from \(K_4\) by inserting 3 weight-1 vertices into each face does not have an \(\alpha\)-separator of size 3 or less for \(\alpha < \frac{3}{4}\).

Proposition 15.3.1. In any tree, the vertex returned by the maximum-weighted-child walk is a \(\frac{1}{2}\)-separator.

Lemma 15.3.2. Every tree with \(n\) nodes has \(\mathrm{pw}(T) \leq \log_2 n\).

Theorem 15.4.1. Every partial \(k\)-tree \(G\) has a weighted \(\frac{1}{2}\)-separator of size at most \(k + 1\).

Lemma 15.4.2. The \(k \times k\)-grid has treewidth at least \(k/2\).

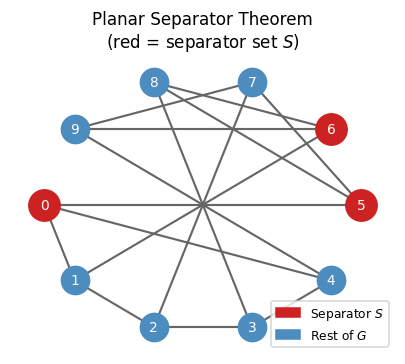

The planar separator theorem guarantees a small vertex cut (red) dividing the graph into balanced parts. Here the Petersen graph is shown with a small separator highlighted.

The planar separator theorem guarantees a small vertex cut (red) dividing the graph into balanced parts. Here the Petersen graph is shown with a small separator highlighted.

Separators in trees. Any tree has a \(\frac{1}{2}\)-separator of size 1: pick the root \(r\), walk to the heaviest child repeatedly until no child’s subtree exceeds \(\frac{1}{2} w(V)\). The current vertex is the separator.

The proof strategy is elegant: the onion peeling structure of planar graphs (Section 6.1) allows us to sparsify the graph to something of bounded treewidth, on which small separators are guaranteed. The optimal \(k = \Theta(\sqrt{n})\) balances the two separator contributions.

3.2 Divide-and-Conquer with Separators

The Planar Separator Theorem is not just a structural curiosity — it is a powerful algorithmic tool. For problems whose running time scales superlinearly with the number of vertices, a balanced separator lets us split the work, solve independently, and combine. The recursion tree has depth \(O(\log n)\) and at each level, the separator vertices require special attention.

Planar separators enable divide-and-conquer algorithms with sub-quadratic running times:

Shortest directed cycle. Use the \(\frac{1}{2}\)-separator recursively. At each level, run Dijkstra from each separator vertex. Recursion: \(T(n) = \sum T(n_i) + O(\sqrt{n} \cdot n \log n)\), yielding \(T(n) \in O(n^{3/2} \log n)\).

Global minimum cut. By duality, a minimum cut in an undirected planar graph corresponds to a shortest cycle in the dual. The same divide-and-conquer approach applies.

Maximum matching. Build the matching recursively in each flap, then augment along paths passing through each separator vertex. Total time: \(O(n^{3/2} \log n)\).

3.3 Separator Hierarchies and Pathwidth

We have used separators as a computational device. Now we show that the recursive separator structure carries deep information about the graph’s intrinsic complexity: it gives an explicit upper bound on pathwidth, and through pathwidth, on treewidth. This is the bridge that takes us from the “divide-and-conquer” world of separator algorithms into the “bag decomposition” world of treewidth.

The recursive application of the separator theorem produces a hierarchical decomposition of the graph that is more than just a computational convenience — it encodes the graph’s structure in a form that directly gives bounds on pathwidth and treewidth. This connection is the bridge between the separator world and the decomposition world of Chapter 4.

The hierarchy records the full recursive structure: at each level, the separator vertices are the “interface” between the two halves. Collecting these interfaces along a root-to-leaf path gives a sequence of bags that forms a path decomposition.

Definition 15.7.1 (\(\alpha\)-Hierarchy). An \(\alpha\)-separator hierarchy of a weighted graph \(G\) is a rooted tree where each node \(i\) stores a separator \(S(i) \neq \emptyset\) such that: (i) each \(v \in V\) is stored at exactly one node; (ii) for every internal node \(i\), \(S(i)\) is an \(\alpha\)-separator of \(G(i)\) (the graph at \(i\) and descendants); (iii) children of \(i\) correspond to flaps of \(G(i) - S(i)\).

Proposition 15.7.1. In an \(\alpha\)-hierarchy with uniform weights, any node \(i\) at depth \(d\) has \(n(i) \leq n \alpha^d\) and the hierarchy height is at most \(\log_{1/\alpha} n\).

Proposition 15.7.2. The path decomposition obtained from a separator hierarchy (by letting each leaf’s bag be the union of all ancestor separators, arranged left-to-right) is a valid path decomposition of \(G\).

From a hierarchy, one extracts a path decomposition by letting each leaf’s bag be the union of all ancestor separators. This yields:

Theorem 15.7.3. Any induced-closed graph class \(\mathcal{G}\) with \(\alpha\)-separators of size \(\sigma(n)\) has pathwidth at most \(\sigma(n) \cdot \log_{1/\alpha} n\).

Corollary 15.7.3.1. Every partial \(k\)-tree has pathwidth at most \((k+1) \log n\).

Lemma 15.7.4. If an induced-closed graph class \(\mathcal{G}\) has \(\alpha\)-separators of size \(O(\sqrt{n})\), then all graphs in \(\mathcal{G}\) have pathwidth \(O(\sqrt{n})\).

Corollary 15.7.4.1. Any planar graph has \(\mathrm{tw}(G) \leq \mathrm{pw}(G) \in O(\sqrt{n})\).

Theorem 15.8.1 (Generalized Separator). If an induced-closed graph class \(\mathcal{G}\) has \(\alpha\)-separators of size \(O(\sqrt{n})\), then for any \(\epsilon > 0\), it also has \(\epsilon\)-separators of size \(O(\sqrt{n/\epsilon})\).

The tightness of the \(k \times k\)-grid example shows that the separator theorem is essentially sharp for planar graphs: neither the separator size nor the treewidth bound can be improved to \(o(\sqrt{n})\) in general. This motivates the quest for subclasses with smaller treewidth, addressed in the next chapter.

Chapter 4: Tree Decompositions and Treewidth

This is the heart of the course. Tree decompositions generalize interval graph representations and allow dynamic programming to be lifted from trees to any graph with small “tree-like” structure. The concept of treewidth — the minimum width of any tree decomposition — emerges as the master parameter of the whole theory: small treewidth enables efficient algorithms for virtually all natural graph problems, while large treewidth (specifically, containing a large grid minor) signals computational hardness.

4.1 Interval Graphs and Chordal Graphs

Before defining tree decompositions in full generality, it is instructive to study two families where the decomposition structure is already visible: interval graphs and chordal graphs. These classes admit polynomial-time algorithms for problems that are NP-hard in general, and understanding why sets up the machinery for bounded-treewidth graphs.

Here is a useful mental model. Think of Gaussian elimination on a sparse symmetric matrix: when you eliminate a variable, you fill in edges between its remaining neighbors. If those neighbors already form a clique, no new edges are created — the matrix stays sparse. A graph where every vertex can be eliminated in this “fill-free” order is precisely a chordal graph, and the elimination order is its perfect elimination order. This connection is not merely analogical: Gaussian elimination on the adjacency matrix of a chordal graph runs without any fill-in, which is why chordal graphs are the key to efficient sparse linear solvers. The algorithm we study — Maximum Cardinality Search — is essentially the graph-theoretic version of choosing the best pivot at each elimination step.

Definition 7.1.1 (Intersection Graph of Intervals). Given a set of intervals, define a graph with one vertex \(v\) per interval \(I_v\) and an edge \(vw\) iff \(I_v \cap I_w \neq \emptyset\).

Definition 7.1.2 (Interval Graph). \(G\) is an interval graph if it is the intersection graph of some set of intervals.

Interval graphs model scheduling conflicts, molecular sequence overlaps, and a host of other practical problems where “overlap” is the fundamental relation. Their structure is essentially one-dimensional, which is why they are so tractable.

Proposition 7.1.1. A cycle of length \(k \geq 4\) cannot be an interval graph.

Definition 7.1.3 (Induced-Hereditary). A graph class closed under taking induced subgraphs. Interval graphs are induced-hereditary.

Definition 7.1.4 (Hereditary). A graph class closed under taking subgraphs. Interval graphs are NOT hereditary.

Definition 7.2.1 (Chordal Graph). A graph \(G\) is chordal if it does not contain an induced \(k\)-cycle for any \(k \geq 4\).

Chordality is the abstract algebraic generalization of interval graph structure: a graph is chordal when it has no “long” induced cycles, i.e., when every cycle can be “shortcut” by a chord.

Every interval graph is chordal. Every tree is chordal.

The key algorithmic handle on chordal graphs is the perfect elimination order (PEO): an ordering in which each vertex, together with its earlier neighbors, forms a clique. The PEO is the sequence in which vertices can be “safely eliminated” — removed from the graph without disturbing the clique structure of their neighborhood. Think of building up the graph in reverse: each new vertex \(v_i\) arrives and finds that all of its already-placed neighbors are mutually adjacent, forming a clique. This is exactly the condition that makes greedy coloring optimal and that allows the tree decomposition to be read off from the order.

Definition 7.3.1 (Perfect Elimination Order). A vertex order \(v_1, \ldots, v_n\) such that \(\mathrm{pred}(v_i) = \{v_j : j < i,\, v_j v_i \in E\}\) forms a clique for all \(i\).

The PEO is precisely the order in which vertices can be eliminated — removed without destroying the clique structure of their neighborhood. This turns out to characterize chordality exactly.

Theorem 7.3.1. If \(G\) has a perfect elimination order, then \(G\) is chordal. The converse also holds: \(G\) is chordal if and only if it has a perfect elimination order.

This equivalence is the key to understanding chordal graphs algorithmically: we can recognize them, solve optimization problems on them, and build their tree decompositions, all via the PEO.

Definition 8.1.1 (Simplicial Vertex). A vertex \(v \in V(G)\) whose neighbours form a clique in \(G\).

Lemma 8.1.1. A graph \(G\) has a p.e.o. if and only if every induced subgraph of \(G\) has a simplicial vertex.

Maximum Cardinality Search (MCS). Repeatedly pick the vertex with the most already-chosen neighbors (breaking ties arbitrarily). This runs in \(O(m + n)\) and produces a PEO of any chordal graph.

Lemma 8.1.2. Let \(C = c_0, \ldots, c_{k-1}\) be a cycle in a chordal graph. Then for any \(0 \leq i < k\), either \(c_{i-1}c_{i+1}\) is an edge, or \(c_i\) is incident to a chord of \(C\).

Lemma 8.1.3. Assume \(v_1, \ldots, v_n\) was the output of MCS. Let \(C\) be a component of \(G \setminus \{v_1, \ldots, v_i\}\) for some \(1 \leq i < n\). Then \(N_i(C)\) is a clique.

Theorem 8.1.4. Let \(G\) be a chordal graph. Then the order \(v_1, \ldots, v_n\) returned by MCS is a perfect elimination order, regardless of tie-breaking.

Theorem 8.2.1. Chordal graphs can be recognized in linear time.

Lemma 8.3.1. Let \(G\) have a p.e.o. \(v_1, \ldots, v_n\). Then any clique \(C\) is a subset of \(\{v_i\} \cup \mathrm{pred}(v_i)\) for some \(i\). If \(C\) is a maximal clique, then \(C = \{v_i\} \cup \mathrm{pred}(v_i)\).

Theorem 8.3.2. \(G\) is an interval graph if and only if its maximal cliques can be ordered consecutively: there exist \(C_1, \ldots, C_k\) such that if \(v \in C_i \cap C_j\) then \(v \in C_h\) for all \(i < h < j\).

Proposition 8.3.3. Fix a p.e.o. and set \(C_i := \{v_i\} \cup \mathrm{pred}(v_i)\). Then \(C_i\) is not a maximal clique if and only if there exists \(j > i\) such that \(v_i\) is the last predecessor of \(v_j\) and \(\mathrm{indeg}(v_j) = \mathrm{indeg}(v_i) + 1\).

Theorem 8.3.4. Testing whether a graph is an interval graph can be done in linear time.

The PQ-tree reappears here: the consecutive ordering of cliques in an interval graph is precisely the kind of constraint that PQ-trees were designed to encode and check efficiently.

Theorem 7.4.1. The greedy algorithm on a perfect elimination order yields the optimal coloring: \(\chi(G) = \omega(G)\).

Corollary 7.4.1.1. For a graph with p.e.o. \(v_1, \ldots, v_n\), \(\omega(G) = \chi(G) = \max_i\{\mathrm{indeg}(v_i) + 1\}\).

Definition 7.4.1 (Clique Cover). A partition of \(V(G)\) such that each class forms a clique in \(G\).

Theorem 7.4.2. The greedy algorithm on a p.e.o. \(v_1, \ldots, v_n\) (processed in reverse) yields a maximum independent set.

Theorem 7.4.3. Dominating Set is NP-hard in chordal graphs.

Definition 7.5.1 (Intersection Graph). A graph \(G\) is an intersection graph if there is a universe \(X\) such that every vertex \(v\) corresponds to a set \(S_v \subseteq X\), and edge \(uv\) exists iff \(S_u \cap S_v \neq \emptyset\).

Theorem 7.5.1. Every graph is an intersection graph. (Take \(X = E\) and \(S_v = \{e = vw : e \in E\}\).)

Definition 7.5.2 (Induced-\(\mathcal{H}\)-Free Graph). Let \(\mathcal{H}\) be a collection of graphs. The class of induced-\(\mathcal{H}\)-free graphs consists of those whose induced subgraphs never belong to \(\mathcal{H}\).

Definition 7.5.3 (Perfect Graph). A graph \(G\) is perfect if \(\omega(H) = \chi(H)\) for every induced subgraph \(H\) of \(G\).

Theorem 7.5.2 (Strong Perfect Graph Theorem). A graph is perfect if and only if it does not have an odd hole or an odd anti-hole as an induced subgraph.

Polynomial algorithms in chordal graphs. The PEO does for chordal graphs what the minimum-degree ordering did for planar graphs: it provides a vertex ordering that makes greedy algorithms optimal. Because each vertex’s predecessor set is a clique, there is no “wasted” coloring and no “hidden” interference that greedy might miss.

Using a PEO:

- Coloring: Greedy on the PEO gives the optimal \(\chi(G) = \omega(G)\) coloring.

- Maximum independent set: Greedy in reverse PEO (the marked-set argument) gives an optimal solution.

- Maximum clique: \(\omega(G) = \max_i(\text{indeg}(v_i) + 1)\).

Note: Dominating Set is NP-hard in chordal graphs (reduce from Vertex Cover in general graphs by subdividing every edge and adding all clique edges between original vertices). This shows that even well-structured graph classes can retain NP-hard problems — treewidth, not chordality, is the right parameter for universal tractability.

4.2 Strong Tree Decompositions

The connection between interval graphs and path decompositions, and between chordal graphs and tree decompositions, can be made precise via strong decompositions.

Let us pause to consolidate what the perfect elimination order tells us. For a chordal graph, the set \(\{v_i\} \cup \text{pred}(v_i)\) — vertex \(v_i\) together with its earlier neighbors — is a clique, and these cliques can be organized into a tree structure where adjacent bags share a large overlap. This is the strong tree decomposition: the tree whose nodes are the maximal cliques and whose edges connect cliques that differ by exactly one vertex. The “strong” refers to the biconditional in condition (iii): every pair sharing a bag is adjacent, and conversely. This is only possible for chordal graphs. For general graphs, we must relax this to the weak tree decomposition — every edge is covered by a bag, but two vertices sharing a bag need not be adjacent. That relaxation is the key to making the theory work for arbitrary graphs.

Definition 9.1.1 (Strong Path Decomposition). A strong path decomposition \(\mathcal{P}\) of \(G\) consists of a path \(P\) with nodes \(I\) and an assignment \(X : I \to 2^V\) such that: (i) every vertex of \(G\) belongs to at least one bag; (ii) for every \(v\), the bags containing \(v\) are consecutive along \(P\); (iii) \(vw \in E\) if and only if some bag \(X_i\) contains both \(v\) and \(w\).

Corollary 9.1.0.1. A graph \(G\) is an interval graph if and only if it has a strong path decomposition.

Definition 9.2.2 (Intersection Graph of Subtrees). Given a tree \(T\) and subtrees \(T_1, \ldots, T_n\), define a graph with vertices \(v_1, \ldots, v_n\) and edge \(v_i v_j\) iff \(T_i \cap T_j \neq \emptyset\).

Proposition 9.2.1. A graph is an intersection graph of subtrees of a tree if and only if it has a strong tree decomposition.

Lemma 9.2.2. If \(G\) has a strong tree decomposition, then \(G\) has a perfect elimination order. Furthermore, for each \(j\), there exists a bag containing \(\{v_j\} \cup \mathrm{pred}(v_j)\).

Corollary 9.2.2.1. Let \(T\) be a strong tree decomposition of \(G\). Then for any clique \(C\), some bag of \(T\) contains all vertices of \(C\).

Lemma 9.2.3. If \(G\) has a p.e.o. \(v_1, \ldots, v_n\), then it has a strong tree decomposition where every bag has the form \(\{v_i\} \cup \mathrm{pred}(v_i)\) for some \(i\).

Theorem 9.2.4. A graph \(G\) is chordal if and only if it has a strong tree decomposition \(T\). Furthermore, \(\omega(G) \leq k + 1\) if and only if all bags of \(T\) have size at most \(k + 1\).

The perfect elimination order of a chordal graph encodes a tree-like structure: each vertex’s predecessor set is a clique, and these cliques can be organized into a tree. This is the strong tree decomposition — a tree whose bags are the maximal cliques, with edges representing shared elements. It is the prototype for the more general tree decomposition.

Definition 9.2.1 (Strong Tree Decomposition). A strong tree decomposition \(T\) of \(G\) consists of a tree \(T\) with nodes \(I\) and bags \(X : I \to 2^V\) such that: (i) every vertex belongs to at least one bag; (ii) for every \(v\), the bags containing \(v\) are connected (connectivity condition); (iii) \(vw \in E\) if and only if some bag \(X_i\) contains both \(v\) and \(w\).

Condition (iii) is the “if and only if” form: every edge is represented in some bag, and every pair of vertices sharing a bag is adjacent. This biconditional makes the decomposition exact — the tree completely encodes the graph structure.

The proof is constructive in both directions: given a chordal graph we build its strong tree decomposition via the PEO, and given any strong tree decomposition we recover a PEO by traversal. The two structures are in perfect correspondence.

4.3 Tree Decomposition and Treewidth

The strong tree decomposition requires the “if and only if” edge condition — every pair sharing a bag must be adjacent. For general (non-chordal) graphs, this is impossible. The solution is to relax to the weak tree decomposition, which only requires that edges are covered by bags, not that all pairs sharing a bag are adjacent. This relaxation is what allows tree decompositions to capture graphs far beyond the chordal class.

Before the definition, let us build intuition for what a tree decomposition is. Picture the graph \(G\) spread out on a table. A tree decomposition finds a way to “cover” \(G\) with overlapping clusters — the bags — arranged in a tree. Each vertex of \(G\) appears in one or more bags, and the bags containing any fixed vertex form a connected subtree (the connectivity condition). Every edge of \(G\) is “witnessed” by at least one bag that contains both its endpoints.

The tree structure means that if you take any bag \(X_i\) and remove it, the host tree splits into subtrees, and the corresponding subgraphs of \(G\) on each side can only interact through \(X_i\) itself. This is the fundamental separation property: \(X_i\) is a separator between the two sides. The width of a decomposition is one less than the size of the largest bag; we subtract one so that trees themselves (which have bags of size 2, one vertex per edge) get treewidth 1. Treewidth 0 means the graph is a collection of isolated vertices; treewidth 1 means a forest; treewidth 2 means outerplanar or series-parallel; treewidth \(k\) means “as complex as a \(k\)-tree but no more.”

The treewidth of a graph is the minimum width over all possible tree decompositions. Finding the optimal decomposition is NP-hard in general — but the parameter itself, once computed or approximated, unlocks efficient algorithms for an enormous range of problems.

The weaker condition means that a tree decomposition is not unique — every graph admits many tree decompositions of varying widths. We seek the decomposition of minimum width.

Definition 10.3.1 (Tree Decomposition). A (weak) tree decomposition of \(G\) is a strong tree decomposition of some supergraph of \(G\). Equivalently, a tree \(T\) with bags \(X_i \subseteq V\) such that: (i) every vertex appears in at least one bag; (ii) for every \(v\), the bags containing \(v\) form a connected subtree; (iii) if \(vw \in E\) then some bag contains \(\{v, w\}\).

Definition 10.3.2 (Width). The width of a tree decomposition is \(\max_i |X_i| - 1\). The treewidth \(\mathrm{tw}(G)\) is the minimum width over all tree decompositions of \(G\).

The shift to \(|X_i| - 1\) (rather than \(|X_i|\)) is a normalization convention that makes trees have treewidth 1 (every bag has size 2 = 1 + 1) and cliques have treewidth \(n-1\). Treewidth measures “how far from a tree the graph is,” with trees at one extreme and dense graphs at the other.

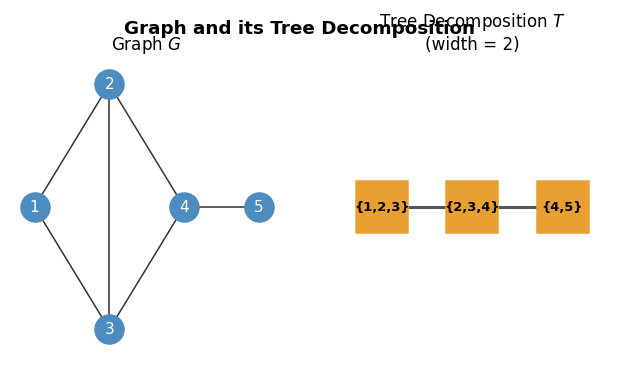

A graph \(G\) and a tree decomposition of width 2. Each bag covers a clique in the supergraph.

A graph \(G\) and a tree decomposition of width 2. Each bag covers a clique in the supergraph.

The following theorem collects the most important equivalent characterizations of bounded treewidth, connecting the decomposition definition to a concrete graph-theoretic construction.

This equivalence is the foundation of efficient DP on bounded-treewidth graphs: characterization 1 gives a concrete inductive construction, characterization 2 connects to the PEO theory of Section 4.1, and characterization 3 is the algorithmic handle.

Theorem 10.3.1. The following are equivalent for a graph \(G\) with \(n \geq k + 1\): (1) \(G\) is a partial \(k\)-tree; (2) \(G\) is a subgraph of a chordal graph \(H\) with \(\omega(H) = k + 1\); (3) \(\mathrm{tw}(G) \leq k\).

Proposition 10.3.2. For any tree decomposition \(T\) of \(G\), any clique \(C\) of \(G\) is contained in some bag of \(T\). In particular, \(\omega(G) \leq \mathrm{tw}(G) + 1\).

Proposition 10.3.3. If \(\mathrm{tw}(G) \leq k\) then for any subgraph \(G'\) of \(G\), \(\mathrm{tw}(G') \leq k\).

Lemma 10.3.4. If \(G\) has treewidth at most \(k\) and \(H\) is obtained by contracting an edge \(vw\) in \(G\), then \(\mathrm{tw}(H) \leq k\).

Corollary 10.3.4.1. Graphs of treewidth at most \(k\) are closed under taking minors.

Corollary 10.3.4.2. Let \(H\) be a connected subgraph of \(G\). In any tree decomposition \(T\) of \(G\), the set of bags containing a vertex from \(H\) is connected.

Proposition 10.3.5. Any graph containing \(K_{k+1}\) as a minor has treewidth at least \(k\).

Lemma 10.3.6. The treewidth of the \(k \times k\)-grid is exactly \(k\) for \(k \geq 2\).

Corollary 10.3.6.1. If a graph \(G\) contains a \(k \times k\)-grid as a minor then \(\mathrm{tw}(G) \geq k\).

Lemma 10.3.7. Every simple 2-terminal SP-graph has treewidth at most 2.

Corollary 10.3.7.1. SP-graphs are the same as partial 2-trees.

Lemma 10.3.8. If \(G\) is a simple SP-graph, then any simple minor \(G'\) of \(G\) has a vertex of degree at most 2.

Property (b) — that treewidth is minor-monotone — is why treewidth fits so naturally into the Robertson–Seymour theory of Chapter 6. Property (d) calibrates our intuition: the \(k \times k\)-grid is the “most tree-unlike” planar graph of its size, and treewidth exactly captures this.

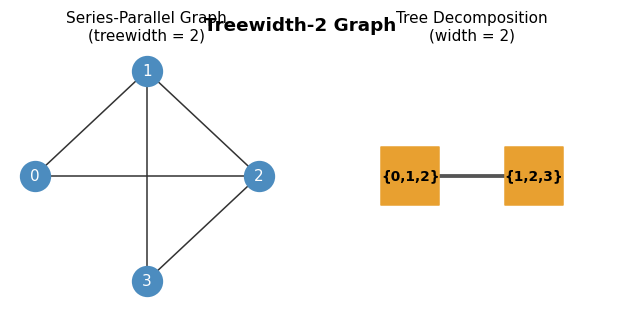

A series-parallel (treewidth-2) graph and its width-2 tree decomposition. Bags of size 3 correspond to the clique-number \(\omega = 3 = k+1\).

A series-parallel (treewidth-2) graph and its width-2 tree decomposition. Bags of size 3 correspond to the clique-number \(\omega = 3 = k+1\).

4.4 k-Trees and Partial k-Trees

Treewidth is defined as a minimum over all possible tree decompositions — an existential definition that does not immediately suggest what graphs of treewidth exactly \(k\) look like. The \(k\)-tree provides the answer: it is the canonical graph of treewidth \(k\), obtained by the simplest possible inductive construction, and every graph of treewidth \(\leq k\) is a subgraph (partial \(k\)-tree) of one.

To make the concept of bounded treewidth concrete, it helps to have an explicit recursive construction. A \(k\)-tree is built by starting with a \(k\)-clique and repeatedly adding vertices, each connected to an existing \(k\)-clique. This is the simplest graph of treewidth exactly \(k\).

A \(k\)-tree is the “maximally dense” graph of treewidth \(k\): we cannot add any vertex without either raising treewidth above \(k\) or creating a duplicate. A partial \(k\)-tree is simply any subgraph of a \(k\)-tree — equivalently, any graph of treewidth at most \(k\). The partial 1-trees are forests. The partial 2-trees are exactly the series-parallel graphs. The partial 3-trees include all planar graphs of treewidth 3, and the Apollonian networks (defined in Chapter 2.5) are exactly the 3-trees. This concrete characterization via the inductive construction is what makes it easy to build tree decompositions explicitly: the PEO of the host \(k\)-tree provides the decomposition for any partial \(k\)-tree subgraph.

Lemma 10.1.1. The following are equivalent for a graph with \(n \geq 2\): (1) \(G\) is a tree; (2) \(G\) has a p.e.o. \(v_1, \ldots, v_n\) with \(\mathrm{indeg}(v_i) = 1\) for \(i > 1\); (3) \(G\) is chordal with \(\omega(G) = 2\) and \(n - 1\) edges.

The inductive construction is transparent: each new vertex is connected to exactly \(k\) existing vertices forming a clique, so the structure grows “uniformly” in a tree-like fashion.

Theorem 10.1.2. Let \(G\) have \(n \geq k + 1\). Then \(G\) is a \(k\)-tree if and only if: (i) \(G\) is chordal; (ii) \(\omega(G) = k + 1\); (iii) \(m = kn - \binom{k}{2} - \frac{k}{2}\).

Lemma 10.1.3. Every 2-tree is a 2-terminal SP-graph.

The \(k\)-trees are exactly the chordal graphs with \(\omega = k+1\) and the exact edge count \(m = kn - \binom{k}{2} - \frac{k}{2}\). Partial \(k\)-trees (spanning subgraphs of \(k\)-trees) are exactly the graphs of treewidth \(\leq k\).

Definition 10.2.1 (Partial \(k\)-Tree). A spanning subgraph of a \(k\)-tree.

Lemma 10.2.1. Any chordal graph \(G\) with \(\omega(G) \leq k + 1\) is a partial \(k\)-tree.

Corollary 10.2.1.1. If \(G\) is a subgraph of a \(k\)-tree \(H\), then \(G\) is a partial \(k\)-tree.

Planar instances:

- Trees = partial 1-trees.

- Maximal outer-planar graphs = 2-trees; outer-planar graphs = partial 2-trees = SP-graphs.

- Apollonian networks = 3-trees; partial 3-trees include all planar graphs of low treewidth.

4.5 Series-Parallel Graphs

Series-parallel graphs are a particularly clean family of graphs with treewidth at most 2. They are defined by a recursive construction that mimics the series and parallel combinations of electrical circuits — a connection that is more than coincidental, since SP-graphs are exactly the graphs for which Kirchhoff’s equations can be solved by simple elimination.

The recursive structure is captured by the SP-tree, which makes many algorithms on SP-graphs straightforward bottom-up computations.

The SP-tree is a binary tree encoding the construction. SP-graphs are exactly the partial 2-trees. Recognition runs in linear time by repeatedly contracting degree-2 vertices or removing parallel edges.

The proof is constructive: the SP-tree directly encodes a tree decomposition. This is a paradigm that recurs in more general settings — an explicit recursive construction of the graph gives an explicit tree decomposition.

4.6 Branchwidth and Pathwidth

Having established treewidth as the central parameter, it is natural to ask whether it is the right parameter for every application. The answer is nuanced. For most theoretical purposes — FPT algorithms, Courcelle’s theorem, the Robertson–Seymour theory — treewidth is ideal. But for specific algorithmic tasks, particularly on planar graphs, two variants offer advantages: branchwidth (computable in polynomial time for planar graphs) and pathwidth (relevant for linear-arrangement problems and graph searching games).

Treewidth is not the only width parameter that captures tree-like structure. Two important variants — branchwidth and pathwidth — offer different trade-offs and are useful in different algorithmic contexts.

Think of the three parameters as measuring “tree-likeness” in different ways. Treewidth puts vertices in the bags and asks that the host tree have a connected subtree for each vertex. Branchwidth puts edges at the leaves of a binary tree and measures how many vertices are “shared” when you cut any arc. Pathwidth is the most restrictive: the host tree must be a path, meaning the bags are arranged in a linear sequence. A graph can have small treewidth but large pathwidth if its tree structure fans out in many directions — for instance, a balanced binary tree has treewidth 1 (it is a tree!) but pathwidth \(\Theta(\log n)\).

The three parameters are related by inequalities: \(\text{tw}(G) \leq \text{pw}(G)\) always, and \(\text{bw}(G)\) is within a factor of \(3/2\) of \(\text{tw}(G)\). But their computational complexity differs sharply. All three are NP-hard to compute exactly on general graphs. However, branchwidth of planar graphs can be computed exactly in \(O(n^2)\) time via the sphere-cut decomposition — a remarkable exception that makes branchwidth the preferred parameter for exact planar graph algorithms.

Pathwidth has an elegant alternative characterization as the vertex-separation number — the number of vertices one must “remember” when processing the graph left to right in the best linear arrangement. This connects pathwidth to graph searching (the “cop number” for node searching) and to register allocation in compilers, where the pathwidth of a program’s dependency graph bounds the number of registers needed.

The branch decomposition is defined in two equivalent ways:

Definition 11.1.1 (e-Separation). A recursively defined partition of the edges: if \(G\) has more than one edge, partition \(E\) into non-empty sets \(E_1, E_2\), then recursively partition each.

Definition 11.1.2 (Rooted Branch Decomposition). A rooted binary tree describing an e-separation: edges of \(G\) at its leaves and an interior node for each recursive partition.

Definition 11.1.3 (Width of Branch Decomposition). For any link/arc \(a\) of \(T\), the separator is the set of vertices incident to edges in both subtrees of \(T - a\). The width is the largest separator size over all links.

Definition 11.1.4 (Branch-Width). The branch-width \(\mathrm{bw}(G)\) is the smallest width over all branch decompositions.

Definition 11.1.5 (Branch Decomposition). An unrooted binary tree \(T\) with maximum degree 3 in which the edges of \(G\) are mapped to distinct leaves.

Proposition 11.1.1. A graph has a rooted branch decomposition of width \(w\) if and only if it has an (unrooted) branch decomposition of width \(w\).

Lemma 11.1.2. If \(G\) has branchwidth at most \(k\), then any minor \(H\) of \(G\) also has branchwidth at most \(k\).

Corollary 11.1.2.1. Every SP-graph has branchwidth at most 2.

Branchwidth and treewidth measure essentially the same thing, as confirmed by the following quantitative relationship.

The two parameters are within a constant factor of each other, so for most purposes they are interchangeable. However, branchwidth has a decisive computational advantage for planar graphs: it can be computed exactly in polynomial time.

Theorem 11.2.2. For any graph \(G\): \(\mathrm{bw}(G) \leq \mathrm{tw}(G) + 1\).

Definition 11.3.1 (Sphere-Cut Decomposition). A branch decomposition of a planar graph \(G\) such that for every separator \(\sigma_a\) of a link \(a\), there is a noose (closed Jordan curve) of \(G\) containing exactly the vertices of \(\sigma_a\).

Theorem 11.3.1. If a planar graph has branchwidth \(k\), then it also has a sphere-cut decomposition of width \(k\).

Theorem 11.3.2. The branchwidth of a planar graph can be computed in \(O(n^2)\) time.

Definition 11.3.2 (Co-Tree). Given a spanning tree \(T\) in a connected planar graph \(G\), the co-tree \(T^*\) is the subgraph of \(G^*\) formed by the duals of edges in \(E \setminus E(T)\).

Lemma 11.3.3. Let \(G\) be a connected planar graph with spanning tree \(T\). Then the co-tree \(T^*\) is a spanning tree of \(G^*\).

Lemma 11.3.4. Let \(G\) be a triangulated planar graph with a spanning tree \(T\) of height \(R\). Then \(\mathrm{bw}(G) \leq 2R + 1\).

Corollary 11.3.4.1. If \(G\) is planar with a spanning tree \(T\) of height \(R\), then \(\mathrm{bw}(G) \leq 2R + 1\).

Corollary 11.3.4.2. Let \(G\) be a planar graph with a spanning tree of height \(R\). Then \(\mathrm{tw}(G) \leq 3R\).

Branchwidth of planar graphs is computable in \(O(n^2)\) time (and the optimal branch decomposition is sphere-cut). This gives a \(\frac{3}{2}\)-approximation for treewidth of planar graphs.

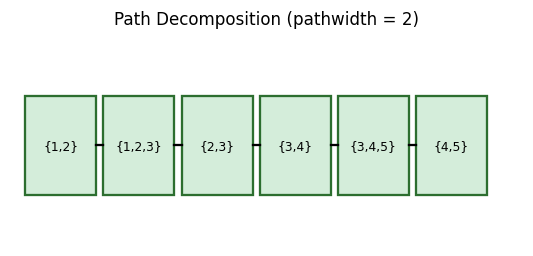

Definition 12.1.1 (Path Decomposition). A tree decomposition \(T\) for which the host tree is a path.

Definition 12.1.2 (Pathwidth). The pathwidth \(\mathrm{pw}(G)\) of a graph \(G\) is the smallest \(k\) such that \(G\) has a path decomposition of width \(k\).

Theorem 12.1.1. A graph \(G\) has pathwidth at most \(k\) if and only if \(G\) is a spanning subgraph of an interval graph \(G'\) with \(\omega(G') \leq k + 1\).

Pathwidth is a stronger condition than treewidth: \(\text{tw}(G) \leq \text{pw}(G)\) always, and the gap can be logarithmic. But for linear-time algorithms and certain graph searching problems, pathwidth is the natural parameter.

Theorem 12.2.1. A tree has pathwidth at most \(k > 0\) if and only if there is a path \(P\) in \(T\) such that all subtrees of \(T - P\) have pathwidth at most \(k - 1\).

Definition 12.2.1 (\(k\)-Caterpillar). A 0-caterpillar is a single vertex. A \(k\)-caterpillar (\(k > 0\)) is a tree \(T\) with a path \(P\) (spine) such that every component of \(T - P\) is an \(\ell\)-caterpillar for some \(\ell < k\).

Corollary 12.2.1.1. For any tree \(T\), the pathwidth \(\mathrm{pw}(T)\) equals the smallest \(k\) for which \(T\) is a \(k\)-caterpillar.

Lemma 12.2.2. Any tree with \(n\) nodes has \(\mathrm{pw}(T) \leq \log_2 n\).

The following linear-arrangement definitions are the key to proving the pathwidth equivalences.

Definition 12.3.1 (Cut-Edges). For a linear arrangement \(v_1, \ldots, v_n\), \(\mathrm{Cut}[i-1:i]\) is the set of edges in the cut induced by \(\{v_1, \ldots, v_{i-1}\}\).

Definition 12.3.2 (Separation-Vertices of the Cut). \(\mathrm{Vs}[i-1:i]\) are the vertices in \(\{v_1, \ldots, v_{i-1}\}\) that have incident edges in \(\mathrm{Cut}[i-1:i]\).

Definition 12.3.3 (Cutwidth). \(G\) has cutwidth at most \(k\) if there is a linear arrangement with \(|\mathrm{Cut}[i-1:i]| \leq k\) for all \(i\).

Definition 12.3.4 (Vertex-Separation Number). \(G\) has vertex-separation number at most \(k\) if there is a linear arrangement with \(|\mathrm{Vs}[i-1:i]| \leq k\) for all \(i\).

A path decomposition: bags are arranged linearly, each consecutive pair differs by at most one vertex (in a “nice” decomposition).

A path decomposition: bags are arranged linearly, each consecutive pair differs by at most one vertex (in a “nice” decomposition).

The equivalence between pathwidth and interval graph containment mirrors the equivalence between treewidth and chordal graph containment. In both cases, the “forbidden subgraph” perspective and the “decomposition” perspective give the same parameter.

Theorem 12.4.1 (Pathwidth Equivalences). The following are equivalent: (1) \(\mathrm{pw}(G) \leq k\); (2) \(G\) has a path decomposition with all bags of size \(\leq k+1\); (3) \(G\) is a subgraph of an interval graph \(H\) with \(\omega(H) \leq k+1\); (4) \(G\) has an interval representation where at most \(k+1\) intervals intersect at any point; (5) \(G\) has vertex-separation number at most \(k\).

Definition 12.5.1 (Line Graph). The line graph \(L(G)\) has vertex \(v_e\) for each \(e \in E(G)\) and an edge \((v_e, v_{e'})\) iff \(e, e'\) share an endpoint.

Lemma 12.5.1. For any graph \(G\): \(\mathrm{pw}(L(G)) \leq \mathrm{cutwidth}(G) + \lfloor \Delta/2 \rfloor - 1\).

Lemma 12.5.2. For any connected graph \(G\) with \(n \geq 3\): \(\mathrm{cutwidth}(G) \leq \mathrm{pw}(L(G))\).

Corollary 12.5.2.1. For any graph \(G\) with \(\Delta(G) \leq 3\): \(\mathrm{cutwidth}(G) = \mathrm{pw}(L(G))\).

Definition 12.6.1 (Weighted Cutwidth). For a graph \(G\) with positive edge-weights \(w : E \to \mathbb{R}^+\), the weighted cutwidth is at most \(k\) if there is a linear arrangement with \(\sum_{e \in \mathrm{Cut}[i-1:i]} w(e) \leq k\) for all cuts.

Theorem 12.6.1. Computing the weighted cutwidth of a graph is NP-hard, even in trees.

Definition 12.6.2 (Strongly NP-Hard). A weighted decision problem is strongly NP-hard when it remains NP-hard even if all weights are polynomial in the input size.

Theorem 12.6.2. Computing the weighted cutwidth is strongly NP-hard, even in trees.

Corollary 12.6.2.1. Computing the cutwidth is NP-hard even in a simple series-parallel graph of pathwidth 3.

Theorem 12.6.3. Computing the cutwidth is NP-hard even in planar graphs with maximum degree 3.

Corollary 12.6.3.1. Computing the pathwidth is NP-hard even in planar graphs with maximum degree 4.

Pathwidth of trees. Any tree has pathwidth at most \(\log_2 n\). A tree is a \(k\)-caterpillar iff its pathwidth is exactly \(k\); this gives a recursive characterization computable in linear time.

Computing width parameters is NP-hard: treewidth, pathwidth, and cutwidth are all NP-hard to compute exactly, even in restricted graph families. However, computing branchwidth of planar graphs is polynomial. This computational gap between exact treewidth computation (NP-hard) and the FPT algorithms that use treewidth as a parameter motivates the approximation algorithm discussed in Section 6.2.

Chapter 5: Fixed-Parameter Tractability

Treewidth was introduced in Chapter 4 as a structural parameter measuring the “tree-likeness” of a graph. This chapter cashes in on that investment: graphs of small treewidth admit efficient dynamic programming algorithms for a wide range of hard combinatorial problems. The key insight is that a tree decomposition breaks a problem into subproblems that interact only through small “interfaces” — the bags — and DP over the decomposition solves the original problem in time exponential only in the treewidth, not in \(n\).

5.1 Dynamic Programming in Partial \(k\)-Trees