CS 466: Algorithm Design and Analysis

Lap Chi Lau

Estimated study time: 4 hr 41 min

The course develops three families of techniques for algorithm design: probabilistic methods, spectral and linear-algebraic methods, and continuous optimization. A recurring theme is the maximum flow problem, which serves as a unifying thread: each family of techniques offers a different and progressively more powerful approach to this fundamental problem.

Prerequisites. A first course in algorithms (CS 341), a first course in probability (random variables, expectation, variance), and a first course in linear algebra (rank, determinant, eigenvalues, orthonormal bases).

References.

- Mitzenmacher and Upfal, Probability and Computing

- Spielman, Notes on Spectral Graph Theory

- Williamson and Shmoys, The Design of Approximation Algorithms

Chapter 1: Randomized Algorithms and Probability

In a first algorithms course, one learns combinatorial techniques — divide and conquer, greedy algorithms, dynamic programming, local search — and uses them to design deterministic algorithms that always return optimal solutions. These techniques are elegant and insightful, but they have two significant limitations. First, most combinatorial problems are NP-complete, and we do not expect efficient algorithms that solve them optimally. Second, even for problems in P, the fastest deterministic algorithms are often complicated and difficult to implement in practice.

Randomized algorithms offer a way forward. By introducing carefully controlled randomness into algorithm design, we can obtain simpler, faster algorithms for problems already solvable in polynomial time, approximation algorithms for NP-hard problems, and sometimes achieve feats impossible for deterministic algorithms — such as sublinear-time algorithms that do not even read the entire input.

There are more than thirty years of active research in randomized algorithms, and probabilistic ideas have become indispensable in modern algorithm design and analysis. In Part I, we develop the foundational probabilistic tools — concentration inequalities, the probabilistic method, and algebraic techniques — and apply them to graph sparsification, data streaming, hashing, network coding, and parallel matching.

Section 1.1: Randomized Minimum Cut

1.1 Maximum Flow and Minimum Cut: A Motivating Problem

We begin the course with a problem that will reappear throughout: the relationship between maximum flow and minimum cut. Given an unweighted undirected graph \( G = (V, E) \) and two specified vertices \( s \) and \( t \), the minimum \( s \)-\( t \) cut problem asks us to remove a minimum number of edges to disconnect \( s \) from \( t \). The maximum \( s \)-\( t \) flow problem asks for a maximum set of edge-disjoint paths from \( s \) to \( t \).

It is not difficult to see that the maximum \( s \)-\( t \) flow value is at most the minimum \( s \)-\( t \) cut value: any path from \( s \) to \( t \) must use at least one edge from any cut separating them. The celebrated max-flow min-cut theorem proves that these optimal values are always equal.

One way to prove this is via the augmenting path algorithm, which also provides an \( O(|V| \cdot |E|) \)-time algorithm for both problems. With additional combinatorial ideas (blocking flows, binary length functions) and advanced data structures (dynamic trees), Goldberg and Rao gave an \( O(\min\{|E|^{3/2},\; |E| \cdot |V|^{2/3}\}) \)-time algorithm. This algorithm is deterministic and extends to the weighted case and directed graphs. For thirty years, no one knew how to improve this result.

In this course, we will attack this problem from three different angles:

Graph sparsification (Part I): Using random sampling or spectral methods, any graph can be approximated by a sparse graph preserving all cut values. This yields faster approximation algorithms for minimum \( s \)-\( t \) cut in dense graphs.

Linear programming (Part III): The maximum flow problem can be formulated as a linear program. We will prove that there is always an optimal integral solution and derive the max-flow min-cut theorem from LP duality. We will also learn how to round a fractional solution to an integral one in nearly linear time using random walks.

Spectral techniques and continuous optimization (Parts II–III): Graph sparsification and combinatorial ideas combine to give a nearly linear time algorithm for computing electrical flow. Using the multiplicative weight update method, this electrical flow solver yields a fast approximation algorithm for maximum flow — the first breakthrough in an exciting line of research that eventually improved the long-standing Goldberg–Rao result.

1.2 The Global Minimum Cut Problem

Problem. In the minimum cut (or global minimum cut) problem, we are given an unweighted undirected graph \( G = (V, E) \), and the objective is to find a minimum-cardinality subset \( F \subseteq E \) such that \( G - F \) is disconnected.

A simple observation is that a minimum cut is also a minimum \( s \)-\( t \) cut for some pair \( s, t \). One can therefore compute the minimum \( s \)-\( t \) cut for all pairs, requiring \( n^2 \) calls to a minimum \( s \)-\( t \) cut subroutine. A better observation is that it suffices to fix an arbitrary vertex \( s \) and compute the minimum \( s \)-\( t \) cut for all possible \( t \), reducing the number of calls to \( n - 1 \).

For a long time, the global minimum cut problem seemed inherently harder than the \( s \)-\( t \) version. Then Matula (1987) gave a clever algorithm running in \( O(|V| \cdot |E|) \) time, using a graph search to identify edges that can be contracted.

Karger came up with the idea of random contraction and improved the runtime to \( \tilde{O}(|V|^2) \), which is faster than computing a single minimum \( s \)-\( t \) cut. Eventually, he gave a near-linear time \( \tilde{O}(|E|) \) algorithm, which is remarkable. Here the \( \tilde{O} \) notation hides polylogarithmic factors.

1.3 Karger’s Contraction Algorithm

Karger’s algorithm is strikingly simple:

While there are more than two vertices in the graph:

- Pick a uniformly random edge.

- Contract the two endpoints into a single vertex (removing self-loops but keeping parallel edges).

Output the edges between the two remaining vertices.

By “contracting” an edge \( (u, v) \), we mean identifying \( u \) and \( v \) as a single vertex, placing both on the same side of any future cut.
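The loop above can be sketched in a few lines of Python. This is a minimal illustration rather than an optimized implementation: the function name `karger_contract`, the union-find representation of merged vertices, and the small test graph are all choices made here, and the graph is assumed to be connected with vertices labelled `0..n-1`.

```python
import random

def karger_contract(n, edges, rng):
    """One run of random contraction on vertices 0..n-1.

    `edges` is a list of (u, v) pairs (parallel edges allowed); the graph is
    assumed connected. Returns the original edges crossing the final split.
    """
    parent = list(range(n))  # union-find: each merged vertex is a tree

    def find(v):
        while parent[v] != v:
            parent[v] = parent[parent[v]]  # path halving
            v = parent[v]
        return v

    live = list(edges)
    remaining = n
    while remaining > 2:
        # Discard self-loops so the pick is uniform over surviving edges.
        live = [(u, v) for (u, v) in live if find(u) != find(v)]
        u, v = rng.choice(live)
        parent[find(u)] = find(v)  # contract the chosen edge
        remaining -= 1
    return [(u, v) for (u, v) in edges if find(u) != find(v)]


# Two triangles joined by the single bridge (2, 3): the minimum cut has one edge.
edges = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]
rng = random.Random(0)
best = min((karger_contract(6, edges, rng) for _ in range(200)), key=len)
print(len(best), best)
```

Filtering self-loops in every round costs \( O(|E|) \) per contraction, which keeps the sketch short at the price of speed; a single run always returns some cut, and only the smallest over many runs is likely to be a minimum cut.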

Each vertex in an intermediate graph represents a subset of vertices in the original graph. Consequently, each cut in an intermediate graph corresponds to a cut in the original graph, and the minimum cut value in the intermediate graph is at least as large as in the original.

Theorem 1.1. The probability that the algorithm outputs a minimum cut is at least \( \frac{2}{n(n-1)} \), where \( n = |V| \).

Proof. Let \( F \) be a minimum cut with \( k = |F| \) edges. If we never contract an edge of \( F \), the algorithm succeeds. What is the probability that an edge of \( F \) is contracted in the \( i \)-th iteration?

By the observation above, the minimum cut in the \( i \)-th iteration is still at least \( k \). This implies that every vertex has degree at least \( k \) (otherwise we could disconnect a single vertex by removing fewer than \( k \) edges). Therefore, the number of edges in the \( i \)-th iteration is at least \( (n - i + 1) \cdot k / 2 \).

Since we pick a random edge to contract, the probability of picking an edge in \( F \) is at most

\[ \frac{k}{(n - i + 1) \cdot k / 2} = \frac{2}{n - i + 1}. \]

The probability that \( F \) survives until the end is at least

\[ \prod_{i=1}^{n-2} \left(1 - \frac{2}{n - i + 1}\right) = \frac{n-2}{n} \cdot \frac{n-3}{n-1} \cdot \frac{n-4}{n-2} \cdots \frac{2}{4} \cdot \frac{1}{3} = \frac{2}{n(n-1)}. \qquad \square \]

1.4 Boosting Success Probability

A natural approach is to repeat the algorithm independently many times and return the smallest cut found. If we repeat \( t \) times, the failure probability is at most

\[ \left(1 - \frac{2}{n(n-1)}\right)^t. \]

Using the inequality \( 1 - x \leq e^{-x} \) (which follows from the Taylor expansion of \( e^{-x} \)), this is at most \( e^{-2t/(n(n-1))} \). Setting \( t = 10 \cdot \binom{n}{2} \) makes the exponent equal to \( -10 \), so the failure probability is at most \( e^{-10} < 2^{-14} \).

Running time. A single execution can be implemented in \( O(n^2) \) time (an exercise in data structures), giving a total time complexity of \( O(n^4) \).
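To make the repetition count concrete, the following sketch computes how many independent runs are enough for a target failure probability, using only the \( \frac{2}{n(n-1)} \) bound of Theorem 1.1 and the inequality \( 1 - x \leq e^{-x} \). The function names and the example values are illustrative assumptions.

```python
import math

def success_lower_bound(n):
    """Per-run success probability guaranteed by Theorem 1.1."""
    return 2.0 / (n * (n - 1))

def repetitions_needed(n, delta):
    """A number of runs t with exp(-t * 2/(n(n-1))) <= delta."""
    return math.ceil(math.log(1.0 / delta) / success_lower_bound(n))

def failure_upper_bound(n, t):
    """Upper bound (1 - 2/(n(n-1)))^t on the chance that all t runs fail."""
    return (1.0 - success_lower_bound(n)) ** t

n, delta = 50, 1e-6
t = repetitions_needed(n, delta)
print(t, failure_upper_bound(n, t))
```

The count grows like \( \binom{n}{2} \ln(1/\delta) \), which is where the \( O(n^2) \) repetitions in the running-time estimate come from.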

Improving the running time. The key observation is that the failure probability is only large near the end of an execution — in the early iterations, the survival probability per step is very high. This suggests repeating only the later iterations while sharing the early ones. This leads to the Karger–Stein algorithm (1993), which achieves \( \tilde{O}(n^2) \) running time by branching into independent repetitions after contracting down to \( n / \sqrt{2} \) vertices. See Motwani–Raghavan, Section 10.2, for details.

1.5 Combinatorial Consequences

An elegant corollary of Theorem 1.1 is that any undirected graph has at most \( \binom{n}{2} = O(n^2) \) minimum cuts. This follows because each minimum cut is output with probability at least \( \frac{2}{n(n-1)} \), and the events that two different minimum cuts survive are disjoint (the algorithm can output at most one cut), so these probabilities sum to at most 1. This is a nontrivial structural statement that is surprisingly difficult to prove by other means.

The algorithm can also be extended to find a minimum \( k \)-cut (partitioning the vertices into \( k \) nonempty groups while minimizing the number of crossing edges) in \( n^{O(k)} \) time. Note that the minimum \( k \)-cut problem is NP-hard when \( k \) is part of the input.

1.6 Probability Review

Before proceeding, we recall the essential probability concepts used throughout the course.

The sample space \( \Omega \) is the set of all possible outcomes. An event \( E \subseteq \Omega \) has probability \( \Pr(E) = \sum_{s \in E} \Pr(s) \). The axioms require \( 0 \leq \Pr(E) \leq 1 \), \( \Pr(\Omega) = 1 \), and countable additivity for disjoint events.

The union bound states \( \Pr\left(\bigcup_i E_i\right) \leq \sum_i \Pr(E_i) \). The inclusion-exclusion principle gives the exact probability by alternating sums of intersection probabilities.

A random variable \( X \) is a function from \( \Omega \) to \( \mathbb{R} \). Two random variables are independent if \( \Pr(X = x \cap Y = y) = \Pr(X = x) \cdot \Pr(Y = y) \) for all \( x, y \).

The expectation \( E[X] = \sum_i i \cdot \Pr(X = i) \) is linear: \( E\left[\sum_i X_i\right] = \sum_i E[X_i] \), even for dependent variables. This linearity of expectation is one of the most powerful tools in probabilistic analysis and will be used repeatedly.

Section 1.2: Concentration Inequalities

Tail inequalities, also called concentration inequalities, bound the probability that a random variable deviates far from its expected value. They allow us to show that randomized algorithms behave as expected with high probability — almost deterministically. These are fundamental tools that we will use throughout the course.

2.1 Markov’s Inequality

The simplest tail inequality requires only the expected value.

Theorem 2.1 (Markov’s Inequality). Let \( X \) be a non-negative random variable. Then for any \( a > 0 \),

\[ \Pr(X \geq a) \leq \frac{E[X]}{a}. \]

Proof. \( E[X] = \sum_i i \cdot \Pr(X = i) \geq \sum_{i \geq a} i \cdot \Pr(X = i) \geq a \cdot \Pr(X \geq a) \). \( \square \)

Example (Quicksort). The expected runtime of randomized quicksort is \( 2n \ln n \). Markov’s inequality tells us that the runtime exceeds \( 2cn \ln n \) with probability at most \( 1/c \).

Example (Coin flips). If we flip \( n \) fair coins, the expected number of heads is \( n/2 \). Markov’s inequality gives \( \Pr(\text{heads} \geq 3n/4) \leq 2/3 \) — a rather weak bound that we will soon improve dramatically.

Markov’s inequality is most useful when we have no information beyond the expected value, or when the random variable is too complicated to analyze further. It is tight: consider a random variable that equals \( a \) with probability \( \mu/a \) and 0 otherwise.

2.2 Chebyshev’s Inequality

To obtain sharper bounds, we use the variance \( \text{Var}[X] = E[(X - E[X])^2] = E[X^2] - E[X]^2 \), which measures how spread out a distribution is. The standard deviation is \( \sigma(X) = \sqrt{\text{Var}[X]} \).

Two useful facts (whose proofs are exercises):

- \( \text{Var}[X + Y] = \text{Var}[X] + \text{Var}[Y] + 2\text{Cov}(X, Y) \).

- If \( X \) and \( Y \) are independent, then \( \text{Var}[X + Y] = \text{Var}[X] + \text{Var}[Y] \).

Theorem 2.2 (Chebyshev’s Inequality). For any random variable \( X \) and any \( a > 0 \),

\[ \Pr(|X - E[X]| \geq a) \leq \frac{\text{Var}(X)}{a^2}. \]

Proof. Apply Markov’s inequality to the non-negative random variable \( (X - E[X])^2 \):

\[ \Pr(|X - E[X]| \geq a) = \Pr\left((X - E[X])^2 \geq a^2\right) \leq \frac{E[(X - E[X])^2]}{a^2} = \frac{\text{Var}(X)}{a^2}. \qquad \square \]

Example (Coin flips revisited). For \( n \) independent fair coin flips with \( X = \) number of heads, each indicator \( X_i \) has variance \( p(1-p) = 1/4 \). By independence, \( \text{Var}(X) = n/4 \). Chebyshev gives

\[ \Pr\left(X \geq \frac{3n}{4}\right) \leq \Pr\left(|X - n/2| \geq \frac{n}{4}\right) \leq \frac{n/4}{(n/4)^2} = \frac{4}{n}. \]

This is already much better than Markov’s bound of \( 2/3 \), and it improves with \( n \).

2.3 Chernoff Bounds

When \( X \) is a sum of independent random variables, we can obtain exponentially small tail bounds. The key idea is to apply Markov’s inequality not to \( X \) itself, but to the moment generating function \( e^{tX} \):

\[ \Pr(X \geq a) = \Pr(e^{tX} \geq e^{ta}) \leq \frac{E[e^{tX}]}{e^{ta}} \quad \text{for any } t > 0. \]

There are two reasons this approach is powerful:

The function \( M_X(t) = E[e^{tX}] \) encodes all moments of \( X \) (it is the moment generating function), so Markov’s inequality applied to \( e^{tX} \) exploits all moment information simultaneously.

If \( X = X_1 + X_2 \) with \( X_1, X_2 \) independent, then \( E[e^{tX}] = E[e^{tX_1}] \cdot E[e^{tX_2}] \), so the moment generating function factorizes — making it easy to compute for sums of independent variables.

Heterogeneous Coin Flips

Let \( X_1, \ldots, X_n \) be independent random variables with \( X_i = 1 \) with probability \( p_i \) and \( X_i = 0 \) otherwise. Let \( X = \sum_{i=1}^n X_i \) and \( \mu = E[X] = \sum_{i=1}^n p_i \).

Then

\[ E[e^{tX}] = \prod_{i=1}^n E[e^{tX_i}] = \prod_{i=1}^n \left(1 + p_i(e^t - 1)\right) \leq \prod_{i=1}^n e^{p_i(e^t - 1)} = e^{\mu(e^t - 1)}, \]

where we used \( 1 + x \leq e^x \).

Theorem 2.3 (Chernoff Bounds). In the heterogeneous coin-flipping setting:

(i) For any \( \delta > 0 \),

\[ \Pr(X \geq (1 + \delta)\mu) \leq \left(\frac{e^\delta}{(1+\delta)^{1+\delta}}\right)^\mu. \]

(ii) For \( 0 < \delta \leq 1 \),

\[ \Pr(X \geq (1 + \delta)\mu) \leq e^{-\mu \delta^2 / 3}. \]

(iii) For \( R \geq 6\mu \),

\[ \Pr(X \geq R) \leq 2^{-R}. \]

Proof of (i). By the calculation above, \( \Pr(X \geq (1+\delta)\mu) \leq e^{\mu(e^t - 1)} / e^{t(1+\delta)\mu} \) for every \( t > 0 \). Minimizing over \( t \) by elementary calculus gives \( t = \ln(1+\delta) \), yielding the bound. \( \square \)
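The calculus step in the proof of the first bound can be written out as follows. The name \( g \) is introduced only for this derivation; it is the logarithm of the right-hand side as a function of \( t \).

\[ g(t) = \mu(e^t - 1) - t(1+\delta)\mu, \qquad g'(t) = \mu e^t - (1+\delta)\mu. \]

Setting \( g'(t) = 0 \) gives \( e^t = 1 + \delta \), that is, \( t = \ln(1+\delta) > 0 \); since \( g''(t) = \mu e^t > 0 \), this is the minimizer. Substituting,

\[ g(\ln(1+\delta)) = \mu\delta - (1+\delta)\mu \ln(1+\delta), \qquad e^{g(\ln(1+\delta))} = \left(\frac{e^\delta}{(1+\delta)^{1+\delta}}\right)^\mu. \]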

Part (ii) follows from the inequality \( e^\delta / (1+\delta)^{1+\delta} \leq e^{-\delta^2/3} \) for \( \delta \in (0, 1) \), verified by analyzing the function \( f(\delta) = \delta - (1+\delta)\ln(1+\delta) + \delta^2/3 \).

Similar bounds hold for the lower tail, by taking \( t < 0 \) in the moment generating function argument. Combining the two tails gives, for \( 0 < \delta < 1 \),

\[ \Pr(|X - \mu| \geq \delta \mu) \leq 2e^{-\mu \delta^2 / 3}. \]

![Chernoff vs Markov tail bound comparison: P[X > (1+δ)μ] vs δ for μ=10 (log scale)](/pics/cs466/chernoff-tail.png)

The Coin-Flip Example Completed

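For \( n \) fair coin flips, \( \mu = n/2 \). Setting \( \delta = \sqrt{60/n} \) (with \( n > 60 \), so that \( \delta < 1 \)) gives \( \Pr(|X - n/2| \geq \sqrt{15n}) \leq 2e^{-10} \). More generally, setting \( \delta = \sqrt{6 \ln n / n} \) gives \( \Pr(|X - n/2| \geq \sqrt{1.5\, n \ln n}) \leq 2/n \), so with high probability the number of heads lies within \( O(\sqrt{n \log n}) \) of its expectation. This \( \sqrt{n \log n} \) scale appears repeatedly in the analysis of randomized algorithms.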

For the specific bound on \( 3n/4 \) heads: Chernoff gives \( \Pr(X \geq 3n/4) \leq e^{-n/24} \), which is exponentially small in \( n \). Compare this with Markov’s \( 2/3 \) and Chebyshev’s \( 4/n \).
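The three bounds on \( \Pr(X \geq 3n/4) \) can be compared numerically against the exact binomial tail. The sketch below is only a sanity check of the formulas quoted in this section; the function names and the choice \( n = 100 \) are illustrative.

```python
import math

def exact_upper_tail(n, threshold):
    """Pr(X >= threshold) for X ~ Binomial(n, 1/2), computed exactly."""
    k0 = math.ceil(threshold)
    return sum(math.comb(n, k) for k in range(k0, n + 1)) / 2 ** n

def coin_flip_bounds(n):
    """Bounds on Pr(X >= 3n/4) for n fair coin flips."""
    mu = n / 2
    delta = 0.5  # 3n/4 = (1 + 1/2) * mu
    return {
        "markov": mu / (3 * n / 4),                  # E[X] / a
        "chebyshev": (n / 4) / (n / 4) ** 2,         # Var(X) / a^2 with a = n/4
        "chernoff": math.exp(-mu * delta ** 2 / 3),  # e^{-n/24}
        "exact": exact_upper_tail(n, 3 * n / 4),
    }

for name, value in coin_flip_bounds(100).items():
    print(f"{name:10s} {value:.3e}")
```

For small \( n \) the ordering can differ (Chebyshev's \( 4/n \) is below \( e^{-n/24} \) at \( n = 24 \), for example); the exponential bound takes over as \( n \) grows.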

Extensions

Hoeffding’s extension. The same Chernoff bounds hold when each \( X_i \) takes values in \( [0, 1] \) (not just \( \{0, 1\} \)). This follows because \( e^{tx} \) is convex, so \( E[e^{tX_i}] \leq 1 + p_i(e^t - 1) \) by the chord inequality.

Negatively correlated variables. Chernoff bounds also apply when the variables are negatively correlated, because \( E[e^{t(X_1 + X_2)}] \leq E[e^{tX_1}] \cdot E[e^{tX_2}] \) still holds. This is useful, for example, in analyzing random spanning trees, where the events that two edges appear are negatively correlated.

2.4 Probability Amplification

Concentration inequalities provide a systematic way to boost the success probability of randomized algorithms.

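One-sided error. Suppose a randomized algorithm is always correct when it answers NO, and whenever NO is the correct answer it says NO with probability at least \( p \). We run it up to \( k \) times, stopping as soon as it says NO, and answer YES only if all \( k \) runs say YES. The output is wrong only if NO is correct and all \( k \) runs miss it, which happens with probability at most \( (1-p)^k \). For constant \( p \), repeating \( O(\log n) \) times drives the failure probability to \( O(1/n) \).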

Two-sided error. If the algorithm gives the correct answer with probability 0.6, we repeat \( k \) times and output the majority answer. By the Chernoff bound, the majority is wrong with probability at most \( e^{-k/120} \). Again, \( k = O(\log n) \) suffices for failure probability \( O(1/n) \).
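The majority-vote bound can be checked against the exact binomial probability. In this sketch ties (possible when \( k \) is even) are counted as failures, and the function names are illustrative; the bound \( e^{-k/120} \) is the one quoted above.

```python
import math

def majority_error(k, p_correct=0.6):
    """Exact probability that at most k/2 of k independent runs are correct."""
    q = 1.0 - p_correct
    return sum(
        math.comb(k, j) * p_correct ** j * q ** (k - j)
        for j in range(0, k // 2 + 1)
    )

def chernoff_majority_bound(k):
    """The Chernoff estimate e^{-k/120} for success probability 0.6."""
    return math.exp(-k / 120)

for k in (1, 11, 101, 301):
    print(k, majority_error(k), chernoff_majority_bound(k))
```

The exact error is well below the Chernoff estimate, which is expected: the bound is convenient for analysis, not tight.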

This \( O(\log n) \) amplification cost is another recurring quantity throughout the course.

Section 1.3: Applications of Tail Inequalities

We now apply concentration inequalities to two beautiful and important problems: graph sparsification and dimension reduction. Both demonstrate a recurring theme — random sampling preserves structure surprisingly well.

3.1 Graph Sparsification

Given an undirected graph \( G = (V, E) \) with edge weights \( w(e) \), we want a sparse graph \( H \) that approximates the cut structure of \( G \).

Definition 3.1 (\( \varepsilon \)-cut sparsifier). We say \( H = (V, F) \) is an \( \varepsilon \)-cut sparsifier of \( G = (V, E) \) if for all \( S \subseteq V \),

\[ (1 - \varepsilon) \cdot w(\delta_G(S)) \leq w(\delta_H(S)) \leq (1 + \varepsilon) \cdot w(\delta_G(S)), \]

where \( \delta_G(S) \) denotes the set of edges crossing the cut \( (S, V \setminus S) \).

Note that \( G \) and \( H \) share the same vertex set but may have different edge sets and weights. The question is: how few edges can \( H \) have while still preserving all \( 2^n \) cut values?

Uniform Sampling under Large Minimum Cut

We start with a simple but instructive result that assumes the input graph is unweighted with minimum cut value \( c = \Omega(\log n) \).

Algorithm. Set a sampling probability \( p \). For every edge \( e \in E(G) \), independently include \( e \) in \( H \) with probability \( p \), assigning it weight \( 1/p \).

The idea is to set expectations right: we sample a \( p \)-fraction of edges and scale their weights by \( 1/p \), so that the expected weight of each cut in \( H \) equals its weight in \( G \). The challenge is ensuring that all cuts are simultaneously well-approximated.
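A minimal sketch of the sampling step, assuming an unweighted graph given as a list of vertex pairs. The helper names `sample_sparsifier` and `cut_weight`, the complete graph on 100 vertices, and the value \( p = 0.5 \) are all illustrative choices, not parameters taken from the theorem below.

```python
import random

def sample_sparsifier(edges, p, rng):
    """Keep each edge independently with probability p, reweighted to 1/p."""
    return [(u, v, 1.0 / p) for (u, v) in edges if rng.random() < p]

def cut_weight(weighted_edges, side):
    """Total weight of edges with exactly one endpoint in the set `side`."""
    return sum(w for (u, v, w) in weighted_edges if (u in side) != (v in side))

n = 100
edges = [(u, v) for u in range(n) for v in range(u + 1, n)]  # complete graph
rng = random.Random(1)
p = 0.5
H = sample_sparsifier(edges, p, rng)

side = set(range(n // 2))
original = cut_weight([(u, v, 1.0) for (u, v) in edges], side)  # 50 * 50 edges
sampled = cut_weight(H, side)
print(len(edges), len(H), original, sampled)
```

Checking one cut, as here, only illustrates the expectation argument; the point of the analysis is that all cuts are preserved at once.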

Theorem 3.2 (Karger). Set \( p = \frac{15 \ln n}{\varepsilon^2 c} \). Then \( H \) is an \( \varepsilon \)-cut sparsifier of \( G \) with \( O(p \cdot |E|) \) edges, with probability at least \( 1 - 4/n \).

Proof. Fix a subset \( S \subseteq V \) whose cut has \( k \geq c \) edges. The number of sampled edges crossing \( S \) is a sum of independent Bernoulli variables with mean \( pk \). By the Chernoff bound,

\[ \Pr\left(|\delta_H(S)| \text{ deviates from } pk \text{ by more than } \varepsilon pk\right) \leq 2e^{-\varepsilon^2 pk / 3} = 2n^{-5k/c}. \]

Since \( k \geq c \), the violation probability for any single cut is at most \( 2n^{-5} \). But there are \( 2^n \) subsets, so a naive union bound fails. The key insight uses the combinatorial structure of cuts from Section 1.1:

Lemma 3.3. The number of cuts with at most \( \alpha c \) edges is at most \( n^{2\alpha} \).

Grouping cuts by size and applying a refined union bound: by Lemma 3.3 there are at most \( n^{4\alpha} \) cuts with between \( \alpha c \) and \( 2\alpha c \) edges, and each is violated with probability at most \( 2n^{-5\alpha} \). Summing over \( \alpha = 1, 2, 4, \ldots \),

\[ \Pr(\text{some cut is violated}) \leq \sum_{\alpha = 1, 2, 4, \ldots} n^{4\alpha} \cdot 2n^{-5\alpha} = \sum_{\alpha = 1, 2, 4, \ldots} 2n^{-\alpha} \leq \frac{4}{n}. \qquad \square \]

Applications. For essentially complete graphs (\( c = \Omega(n) \)), this gives an \( \varepsilon \)-cut sparsifier with only \( O(n \log n / \varepsilon^2) \) edges, a dramatic reduction from \( \Omega(n^2) \) edges. To approximate the minimum \( s \)-\( t \) cut, sparsify first, then run an exact algorithm on the sparse graph.

Improvement by Benczur and Karger. Without the minimum-cut assumption, uniform sampling fails (e.g., a bridge edge might not be sampled). Benczur and Karger designed a non-uniform sampling algorithm where each edge is sampled with probability inversely proportional to the “strong connectivity” of its endpoints, yielding an \( \varepsilon \)-cut sparsifier with \( O(n \log n / \varepsilon^2) \) edges for any graph, constructible in near-linear time.

Near-Linear Time Minimum Cut (Sketch)

An amazing application of graph sparsification is Karger’s near-linear time algorithm for minimum cuts. The idea uses a classical result: if \( G \) is \( c \)-edge-connected, then \( G \) contains at least \( \lfloor c/2 \rfloor \) edge-disjoint spanning trees. At least one of these trees crosses a minimum cut at most twice. Finding a minimum cut that removes at most two edges from a given tree can be solved by a sophisticated dynamic programming algorithm in \( \tilde{O}(m) \) time.

The catch is that finding \( c \) edge-disjoint spanning trees takes \( O(mc) \) time, which is too slow. The solution: sparsify the graph so the minimum cut value drops to \( O(\log n) \) while preserving all cuts approximately. Now we only need \( O(\log n) \) spanning trees, and the total complexity is \( \tilde{O}(m) \).

3.2 Dimension Reduction: The Johnson–Lindenstrauss Lemma

Given \( n \) points in high-dimensional Euclidean space, can we project them to a much lower-dimensional space while approximately preserving all pairwise distances? Surprisingly, yes.

Theorem 3.4 (Johnson–Lindenstrauss Lemma). For any \( \varepsilon \in (0, 1/2) \) and any set of \( n \) points \( X = \{x_1, \ldots, x_n\} \) in \( \mathbb{R}^d \), there exists a map \( A: \mathbb{R}^d \to \mathbb{R}^k \) for \( k = O(\log n / \varepsilon^2) \) such that

\[ (1 - \varepsilon) \|x_i - x_j\|_2^2 \leq \|A(x_i) - A(x_j)\|_2^2 \leq (1 + \varepsilon) \|x_i - x_j\|_2^2 \]

for all pairs \( i, j \).

The construction is beautifully simple: let \( M \) be a \( k \times d \) matrix whose entries are independent standard Gaussians \( N(0, 1) \), and define \( A(x) = \frac{1}{\sqrt{k}} M x \).

Since \( A \) is linear, preserving distances reduces to preserving norms: it suffices to show that \( \|A(x)\|_2^2 \approx \|x\|_2^2 \) for any unit vector \( x \).
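The construction is short enough to try directly. The sketch below uses only the standard library; the dimensions \( d = 300 \), \( k = 200 \), the eight random test points, and the helper names are illustrative assumptions, chosen so the example runs quickly rather than to match the constants in the theorem.

```python
import math
import random

def jl_matrix(k, d, rng):
    """k x d matrix of independent N(0, 1) entries."""
    return [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(k)]

def project(M, x):
    """A(x) = M x / sqrt(k)."""
    k = len(M)
    return [sum(m * xi for m, xi in zip(row, x)) / math.sqrt(k) for row in M]

def sq_dist(x, y):
    """Squared Euclidean distance."""
    return sum((a - b) ** 2 for a, b in zip(x, y))

rng = random.Random(0)
d, k = 300, 200
points = [[rng.uniform(-1.0, 1.0) for _ in range(d)] for _ in range(8)]
M = jl_matrix(k, d, rng)
images = [project(M, x) for x in points]

# Ratio of projected to original squared distance, for every pair of points.
ratios = [
    sq_dist(images[i], images[j]) / sq_dist(points[i], points[j])
    for i in range(len(points))
    for j in range(i + 1, len(points))
]
print(min(ratios), max(ratios))
```

Each ratio is distributed as a chi-squared variable with \( k \) degrees of freedom divided by \( k \), so its spread shrinks like \( \sqrt{2/k} \) as \( k \) grows.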

Proof sketch. Consider the special case \( x = e_1 = (1, 0, \ldots, 0) \). Then \( Me_1 \) is the first column of \( M \), a vector of \( k \) independent standard Gaussians. Its squared norm is \( \sum_{i=1}^k M_{i,1}^2 \), which has expectation \( k \). After scaling by \( 1/k \), the expected squared norm of \( A(e_1) \) is 1.

For a general unit vector \( x \), each coordinate of \( Mx \) is a linear combination of Gaussians, which is itself Gaussian with mean 0 and variance \( \|x\|_2^2 = 1 \). So the argument reduces to the special case.

The concentration bound requires a Chernoff-type inequality for chi-squared random variables (sums of squared Gaussians): \( \Pr(\|Ax\|_2^2 \geq 1 + \varepsilon) \leq e^{-k\varepsilon^2/8} \). Setting \( k = O(\log n / \varepsilon^2) \) and applying the union bound over all \( \binom{n}{2} \) pairs completes the proof.

Applications. The JL lemma enables approximate nearest-neighbor search in \( O(n \log n) \) time (down from \( O(n^2) \) with a linear scan) and approximate matrix multiplication in \( O(n^2 \log n) \) time. Note that it applies specifically to Euclidean (\( \ell_2 \)) distances.

The same result holds when \( M \) is a random \( \pm 1 \) matrix (Achlioptas), which is simpler to implement. This connection to random sign matrices will reappear when we study data streaming.

Section 1.4: Balls and Bins

The balls-and-bins model is one of the most fundamental random processes in computer science. We throw \( m \) balls independently and uniformly at random into \( n \) bins, and study the resulting distribution. This simple model underlies the analysis of hashing, load balancing, and many other applications.

4.1 Expected Number of Balls in a Bin

Let \( B_{i,j} \) be the indicator that ball \( j \) lands in bin \( i \). Then

\[ E[\text{balls in bin } i] = \sum_{j=1}^m E[B_{i,j}] = \sum_{j=1}^m \frac{1}{n} = \frac{m}{n}. \]

When \( m = n \), the expected load per bin is 1. But do we expect most bins to have about one ball?

4.2 Expected Number of Empty Bins

Let \( Y_i \) be the indicator that bin \( i \) is empty. Then

\[ E[Y_i] = \Pr(\text{bin } i \text{ is empty}) = \left(1 - \frac{1}{n}\right)^m \approx e^{-m/n}, \]

using \( 1 - x \approx e^{-x} \) for small \( x \). By linearity of expectation,

\[ E[\text{empty bins}] = n \left(1 - \frac{1}{n}\right)^m \approx n \cdot e^{-m/n}. \]

When \( m = n \), roughly \( n/e \approx 0.37n \) bins are expected to be empty, more than a third! This random variable is in fact concentrated around its mean, confirming that we reliably see about 37% empty bins.
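A quick simulation makes the 37% figure tangible. The sketch compares the exact expectation \( n(1 - 1/n)^m \), the approximation \( n e^{-m/n} \), and one random experiment; the function names, the seed, and \( n = m = 10000 \) are illustrative.

```python
import math
import random

def expected_empty_bins(m, n):
    """Exact expectation n * (1 - 1/n)^m."""
    return n * (1.0 - 1.0 / n) ** m

def simulate_empty_bins(m, n, rng):
    """Throw m balls into n bins uniformly at random; count empty bins."""
    occupied = {rng.randrange(n) for _ in range(m)}
    return n - len(occupied)

n = m = 10_000
rng = random.Random(0)
observed = simulate_empty_bins(m, n, rng)
print(expected_empty_bins(m, n), n * math.exp(-m / n), observed)
```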

4.3 The Birthday Paradox

For what \( m \) do we expect a collision — two balls in the same bin? The probability of no collision in the first \( m \) balls is

\[ \left(1 - \frac{1}{n}\right)\left(1 - \frac{2}{n}\right) \cdots \left(1 - \frac{m-1}{n}\right) \leq e^{-m(m-1)/(2n)} \approx e^{-m^2/(2n)}. \]

This drops below \( 1/2 \) when \( m \) reaches roughly \( \sqrt{2n \ln 2} \). For \( n = 365 \) (the classical birthday problem), this gives \( m \geq 23 \), very close to the exact answer.

The key intuition: there are \( \binom{m}{2} \) pairs of potential collisions, so we expect a collision when \( m^2 \approx n \), i.e., \( m \approx \sqrt{n} \). This square-root threshold appears throughout hashing, cryptographic attacks, and number-theoretic algorithms.
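The product formula for the no-collision probability is easy to evaluate exactly, which gives a direct check of the square-root estimate. The function names below are illustrative.

```python
import math

def no_collision_probability(m, n):
    """Exact Pr(no two of m balls share a bin) with n bins."""
    prob = 1.0
    for i in range(1, m):
        prob *= 1.0 - i / n
    return prob

def smallest_m_with_collision_likely(n):
    """Smallest m for which a collision has probability at least 1/2."""
    m = 1
    while no_collision_probability(m, n) > 0.5:
        m += 1
    return m

n = 365
estimate = math.sqrt(2 * n * math.log(2))
print(smallest_m_with_collision_likely(n), estimate)
```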

4.4 Maximum Load

When \( m = n \), what is the maximum number of balls in any bin?

The probability that a specific bin has at least \( k \) balls is at most \( \binom{n}{k} (1/n)^k \leq (e/k)^k \). By the union bound over all \( n \) bins,

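\[ \Pr(\text{max load} \geq k) \leq n \cdot \left(\frac{e}{k}\right)^k = e^{\ln n + k - k \ln k}. \]

This is small when \( k \ln k - k \) exceeds \( \ln n \) by a comfortable margin, which holds for \( k = \frac{3 \ln n}{\ln \ln n} \): for sufficiently large \( n \) the bound is then at most \( 1/n \).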

Theorem 4.1. When \( m = n \) balls are thrown into \( n \) bins, the maximum load is \( O(\ln n / \ln \ln n) \) with high probability.

This bound is tight — heuristic arguments via Poisson approximation confirm that the maximum load is \( \Theta(\ln n / \ln \ln n) \). The quantity \( O(\log n / \log \log n) \) appears in hashing and approximation algorithms (it is still the best known approximation ratio for congestion minimization).

4.5 The Coupon Collector Problem

How many balls must we throw before every bin contains at least one ball? This is the coupon collector problem.

Let \( X \) be the total number of balls needed, and let \( X_i \) be the number thrown while exactly \( i \) bins remain empty. Each \( X_i \) is a geometric random variable with parameter \( i/n \) (the probability that the next ball hits an empty bin). Therefore,

\[ E[X] = \sum_{i=1}^n E[X_i] = \sum_{i=1}^n \frac{n}{i} = n \cdot H_n = n \ln n + \Theta(n), \]

where \( H_n = \sum_{i=1}^n 1/i \) is the \( n \)-th harmonic number.

The \( n \ln n \) threshold is sharp: after throwing \( n \ln n + cn \) balls, the probability that some bin is empty is approximately \( 1 - e^{-e^{-c}} \). For large positive \( c \), this is near zero; for large negative \( c \), near one. This sharp threshold phenomenon is characteristic of the coupon collector problem.
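The expectation \( n H_n \) can be compared with a direct simulation of the process. The sketch below uses illustrative names, a fixed seed, and a small \( n = 50 \) so that it runs quickly.

```python
import random

def expected_coupon_draws(n):
    """n * H_n, the expected number of balls until no bin is empty."""
    return n * sum(1.0 / i for i in range(1, n + 1))

def simulate_coupon_draws(n, rng):
    """Throw balls uniformly at random until every one of n bins is hit."""
    seen = set()
    draws = 0
    while len(seen) < n:
        seen.add(rng.randrange(n))
        draws += 1
    return draws

n, trials = 50, 2000
rng = random.Random(0)
average = sum(simulate_coupon_draws(n, rng) for _ in range(trials)) / trials
print(expected_coupon_draws(n), average)
```

A single run fluctuates on the order of \( n \) around the mean, which is why the example averages over many trials.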

The \( n \ln n \) quantity serves as a lower bound for several other problems, including the cover time of random walks on complete graphs and the number of edges needed for random-sampling graph sparsifiers.

4.6 Poisson Approximation

A technical difficulty in balls-and-bins analysis is that the bin loads are not independent. The Poisson approximation technique sidesteps this by replacing the \( (X_1, \ldots, X_n) \) vector (actual bin loads) with independent Poisson random variables \( (Y_1, \ldots, Y_n) \), each with mean \( m/n \).

Two key facts make this work:

- Conditioned on \( \sum_i Y_i = m \), the distribution of \( (Y_1, \ldots, Y_n) \) equals that of \( (X_1, \ldots, X_n) \).

- \( \Pr(\sum_i Y_i = m) \geq 1/(e\sqrt{m}) \).

If an event \( \mathcal{E} \) has probability \( p \) in the Poisson model, then \( \Pr_X(\mathcal{E}) \leq e\sqrt{m} \cdot p \) in the real model. For events with exponentially small probability, this overhead is negligible.

4.7 The Power of Two Choices

The standard balls-and-bins model gives maximum load \( \Theta(\ln n / \ln \ln n) \). What if, for each ball, we sample two random bins and place the ball in the less loaded one?

Theorem 4.2. With the power of two choices, the maximum load when \( n \) balls are thrown into \( n \) bins is \( O(\log \log n) \) with high probability.

This exponential improvement from \( \Theta(\log n / \log \log n) \) to \( O(\log \log n) \) is remarkable.

Proof sketch. Define \( \beta_i \) as the number of bins with load at least \( i \). A ball reaches height \( i+1 \) only if both chosen bins have load at least \( i \), which happens with probability at most \( (\beta_i / n)^2 \). This gives the recurrence \( \beta_{i+1} \leq 2\beta_i^2 / n \).

Starting from \( \beta_4 \leq n/4 \) (at most \( n/4 \) bins can hold four or more of the \( n \) balls), induction gives \( \beta_{i+4} \leq n / 2^{2^i} \). After \( O(\log \log n) \) steps, \( \beta_i \) drops to \( O(\log n) \), and from there a simple argument shows no more growth. Chernoff bounds are used at each inductive step to handle the concentration.

The bound is tight: one can show that the maximum load is \( \Theta(\log \log n) \) with high probability.
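The gap between one and two choices shows up clearly even in a small simulation. This sketch uses illustrative names, a fixed seed, and \( n = 20000 \); ties between equally loaded candidate bins go to the first one sampled, which is one of several reasonable conventions.

```python
import random

def max_load(n, choices, rng):
    """Throw n balls into n bins; each ball samples `choices` bins uniformly
    at random and goes to the least loaded of them."""
    loads = [0] * n
    for _ in range(n):
        candidates = [rng.randrange(n) for _ in range(choices)]
        target = min(candidates, key=loads.__getitem__)
        loads[target] += 1
    return max(loads)

n = 20_000
rng = random.Random(0)
one_choice = max_load(n, 1, rng)
two_choices = max_load(n, 2, rng)
print(one_choice, two_choices)
```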

Section 1.5: Hashing

Hashing is not only fundamental for efficient data structures but also plays a key role in data streaming algorithms and derandomization. The central concept in this section is \( k \)-wise independence, a weaker form of randomness that is cheap to generate yet powerful enough for many applications.

5.1 Hash Functions and the Space Problem

The goal of hashing is to store \( n \) elements from a large universe \( U = \{0, 1, \ldots, M-1\} \) in a table of size \( O(n) \) while supporting \( O(1) \)-time lookups.

A hash table consists of a table \( T \) with \( n \) cells and a hash function \( h: U \to \{0, 1, \ldots, n-1\} \). We want \( h \) to spread elements evenly, but by the pigeonhole principle, collisions (\( x \neq y \) with \( h(x) = h(y) \)) are unavoidable without knowing the elements in advance.

If we could use a truly random function \( h: U \to \{0, \ldots, n-1\} \), the analysis reduces to balls and bins: the expected load per bin is 1, and the maximum load is \( \Theta(\log n / \log \log n) \) with chain hashing (storing collisions in linked lists). Using the power of two choices with two random hash functions \( h_1, h_2 \), the maximum search time drops to \( O(\log \log n) \).

But a truly random function requires \( M \log n \) bits to store — more than the universe itself! We need hash functions that are succinct (stored in \( O(1) \) words), fast to evaluate, yet still provide useful randomness guarantees.

5.2 \( k \)-Wise Independence

Definition 5.1. Random variables \( X_1, \ldots, X_n \) are \( k \)-wise independent if for any subset \( I \subseteq [n] \) with \( |I| \leq k \) and any values \( x_i \),

\[ \Pr\left(\bigcap_{i \in I} (X_i = x_i)\right) = \prod_{i \in I} \Pr(X_i = x_i). \]

The special case \( k = 2 \) is called pairwise independence.

The power of \( k \)-wise independence is that it can be generated from very few truly random bits:

Construction 1 (XOR). Given \( b \) random bits \( X_1, \ldots, X_b \), define \( Y_S = \bigoplus_{i \in S} X_i \) for each nonempty subset \( S \subseteq \{1, \ldots, b\} \). This produces \( 2^b - 1 \) pairwise independent random bits from only \( b \) truly random bits.

Pairwise independence follows from the principle of deferred decisions: for any two subsets \( S_1 \neq S_2 \), there exists some index \( i \) in their symmetric difference. Fixing all bits except \( X_i \), the value \( Y_{S_1} \) is still equally likely to be 0 or 1 regardless of \( Y_{S_2} \).
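For small \( b \), the XOR construction can be checked exhaustively. The sketch below is an illustration only: the function names `xor_bits` and `is_pairwise_independent` are hypothetical, and subsets are represented as tuples of 0-based indices. It enumerates all \( 2^b \) seeds and confirms that every pair \( (Y_{S_1}, Y_{S_2}) \) takes each of its four values with probability exactly \( 1/4 \).

```python
from itertools import combinations, product

def xor_bits(seed_bits):
    """Return {S: Y_S} for every nonempty subset S of seed-bit indices (0-based)."""
    b = len(seed_bits)
    out = {}
    for size in range(1, b + 1):
        for subset in combinations(range(b), size):
            y = 0
            for i in subset:
                y ^= seed_bits[i]
            out[subset] = y
    return out

def is_pairwise_independent(b):
    """Exhaustively check pairwise independence over all 2^b equally likely seeds."""
    seeds = list(product([0, 1], repeat=b))
    tables = [xor_bits(s) for s in seeds]
    subsets = list(tables[0].keys())
    for s1, s2 in combinations(subsets, 2):
        counts = {}
        for t in tables:
            key = (t[s1], t[s2])
            counts[key] = counts.get(key, 0) + 1
        # Each of the four outcomes must occur in exactly a quarter of the seeds.
        for outcome in product([0, 1], repeat=2):
            if counts.get(outcome, 0) * 4 != len(seeds):
                return False
    return True
```

The derived bits are not 3-wise independent: for instance \( Y_{\{1\}} \oplus Y_{\{2\}} \oplus Y_{\{1,2\}} = 0 \) for every seed.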

Construction 2 (Random Lines over Finite Fields). Given independent uniform \( X_1, X_2 \in \{0, \ldots, p-1\} \) (with \( p \) prime), define \( Y_i = (X_1 + i \cdot X_2) \bmod p \) for \( i = 0, \ldots, p-1 \). This produces \( p \) pairwise independent variables from 2 random values.

Pairwise independence follows because for distinct \( i, j \), the system \( X_1 + iX_2 \equiv a \pmod{p} \), \( X_1 + jX_2 \equiv b \pmod{p} \) has a unique solution (since \( p \) is prime), so \( \Pr(Y_i = a, Y_j = b) = 1/p^2 \).

Intuitively, this construction generates a random line over a finite field: knowing one point reveals nothing about another, but knowing two points determines the entire line.

5.3 Chebyshev’s Inequality for Pairwise Independent Variables

We cannot apply Chernoff bounds with only pairwise independence. However, Chebyshev’s inequality still works, because for pairwise independent variables,

\[ \text{Var}\left[\sum_{i=1}^n X_i\right] = \sum_{i=1}^n \text{Var}[X_i], \]

since pairwise independence implies \( \text{Cov}(X_i, X_j) = 0 \) for all \( i \neq j \). This will be critical for data streaming algorithms in the next section.

5.4 Universal Hash Families

Definition 5.2. A family \( \mathcal{H} \) of hash functions from universe \( U \) to \( V = \{0, \ldots, n-1\} \) is \( k \)-universal if for any \( k \) distinct elements \( x_1, \ldots, x_k \in U \) and a uniformly random \( h \in \mathcal{H} \),

\[ \Pr(h(x_1) = h(x_2) = \cdots = h(x_k)) \leq \frac{1}{n^{k-1}}. \]

It is strongly \( k \)-universal if for any values \( y_1, \ldots, y_k \),

\[ \Pr(h(x_1) = y_1, \ldots, h(x_k) = y_k) = \frac{1}{n^k}. \]

A strongly \( k \)-universal family is equivalent to saying that the outputs \( h(0), h(1), \ldots \) are \( k \)-wise independent when \( h \) is chosen uniformly.

The Linear Construction

For the case \( |U| = |V| = p \) (a prime), define \( h_{a,b}(x) = (ax + b) \bmod p \) and \( \mathcal{H} = \{h_{a,b} : 0 \leq a, b \leq p-1\} \).

Claim. \( \mathcal{H} \) is strongly 2-universal.

Proof. For distinct \( x_1, x_2 \) and any \( y_1, y_2 \), the conditions \( ax_1 + b \equiv y_1 \) and \( ax_2 + b \equiv y_2 \pmod{p} \) form a system of two linear equations in two unknowns with a unique solution. Thus exactly one pair \( (a, b) \) out of \( p^2 \) satisfies both conditions, giving probability \( 1/p^2 \). \( \square \)

For a larger universe \( U = \{0, \ldots, p^k - 1\} \), interpret each element as a vector in \( \mathbb{F}_p^k \) and define \( h_{\vec{a}, b}(\vec{u}) = (\sum_{i=0}^{k-1} a_i u_i + b) \bmod p \). The same argument shows this family is strongly 2-universal.

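For general table sizes, \( h_{a,b}(x) = ((ax + b) \bmod p) \bmod n \) with \( a \neq 0 \) is 2-universal (though no longer strongly so). The restriction \( a \neq 0 \) matters: with \( a = 0 \) every element maps to the same cell.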

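For \( k \)-universal families: use degree-\( (k-1) \) polynomials \( h_{\vec{a}}(x) = (a_{k-1}x^{k-1} + \cdots + a_1 x + a_0) \bmod p \bmod n \). By polynomial interpolation, the values at any \( k \) distinct inputs are independent and uniform over \( \{0, \ldots, p-1\} \) before the final reduction mod \( n \); the reduction keeps them independent and nearly uniform when \( p \gg n \).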
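The interpolation argument can be checked by brute force on a tiny field. In this sketch the names `poly_eval`, `draw_poly_hash`, and `hits_every_tuple_once` are hypothetical helpers, not part of the notes. It verifies that, before the reduction mod \( n \), the \( p^k \) coefficient vectors map any \( k \) distinct inputs onto every value tuple in \( \{0, \ldots, p-1\}^k \) exactly once.

```python
import random
from itertools import product

def poly_eval(coeffs, x, p):
    """Evaluate a_0 + a_1 x + ... + a_{k-1} x^{k-1} mod p by Horner's rule."""
    acc = 0
    for a in reversed(coeffs):
        acc = (acc * x + a) % p
    return acc

def draw_poly_hash(k, p, n, rng=random):
    """Draw h(x) = (random polynomial of degree < k, mod p) mod n."""
    coeffs = [rng.randrange(p) for _ in range(k)]  # a_0, ..., a_{k-1}
    return lambda x: poly_eval(coeffs, x, p) % n

def hits_every_tuple_once(k, p, xs):
    """Check the interpolation claim for k distinct points xs in {0, ..., p-1}.

    There are p^k coefficient vectors and p^k possible value tuples, so the
    map is a bijection exactly when all resulting tuples are distinct.
    """
    seen = set()
    for coeffs in product(range(p), repeat=k):
        seen.add(tuple(poly_eval(coeffs, x, p) for x in xs))
    return len(seen) == p ** k
```

Evaluating one hash value costs \( k \) multiplications, which is the evaluation-time cost of higher independence.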

5.5 Performance of 2-Universal Hashing

Expected search time. With \( m \) elements in an \( n \)-cell table and a 2-universal hash function, the expected number of items at the location of any element \( x \) is at most \( 1 + (m-1)/n \) if \( x \in S \) and \( m/n \) if \( x \notin S \). When \( m = n \), the expected search time is \( O(1) \).

Maximum load. The maximum load guarantee is weaker: letting \( X \) count the number of collision pairs, we have \( E[X] \leq \binom{m}{2}/n \leq m^2/(2n) \), so by Markov’s inequality \( X < m^2/n \) with probability at least \( 1/2 \). A bin of load \( Y \) contributes \( \binom{Y}{2} \geq (Y-1)^2/2 \) collision pairs, so with probability at least \( 1/2 \) the maximum load is at most \( 1 + m \sqrt{2/n} \). For \( m = n \), this gives \( O(\sqrt{n}) \), much worse than the \( O(\log n / \log \log n) \) of fully random hashing.

To recover the \( O(\log n / \log \log n) \) bound, one would need an \( \Omega(\log n / \log \log n) \)-universal family, but then the evaluation time itself becomes \( \Omega(\log n / \log \log n) \), a poor tradeoff.

5.6 Perfect Hashing

When the set \( S \) is static (no insertions or deletions), we can do much better.

Definition 5.3. A hash function is perfect for a set \( S \) if it maps all elements of \( S \) to distinct locations, enabling \( O(1) \) worst-case search time.

Lemma 5.4. If \( h \) is random from a 2-universal family mapping \( m \) items into \( n \geq m^2 \) bins, then \( h \) is perfect with probability at least \( 1/2 \).

This follows from the collision-pair analysis: \( E[\text{collisions}] \leq m^2/(2n) \leq 1/2 \), so by Markov, there are no collisions with probability at least \( 1/2 \). But using \( \Omega(m^2) \) space is wasteful.

Theorem 5.5 (Two-Level Perfect Hashing). There exists a perfect hashing scheme for \( m \) items using \( O(m) \) space, with \( O(1) \) worst-case search time.

Construction. The first-level hash function maps \( m \) items into \( m \) bins. If a bin contains \( c_i \) items, build a second-level hash table for that bin with \( c_i^2 \) cells; by Lemma 5.4, a random function from a 2-universal family is collision-free on that bin with probability at least \( 1/2 \), so a perfect one is found after a few trials. The total space is

\[ m + \sum_{i=1}^m c_i^2 = m + 2\sum_{i=1}^m \binom{c_i}{2} + \sum_{i=1}^m c_i \leq m + 2m + m = 4m, \]

provided the number of collision pairs in the first level is at most \( m \). This holds with probability at least \( 1/2 \) when the first-level hash function is drawn from a 2-universal family: the expected number of collision pairs is at most \( \binom{m}{2}/m < m/2 \), so Markov’s inequality applies.

The hash functions are found by random trials: on average, at most 2 trials per level. Storing all \( O(m) \) hash functions requires \( O(m) \) additional space (each needs only the pair \( (a, b) \)). Overall, this achieves the same performance as a direct-address table over the entire universe, using only \( O(m) \) space.
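The two-level construction can be sketched as follows. This is a minimal illustration under stated assumptions: keys are distinct nonnegative integers below the prime `P` (chosen here as \( 2^{31} - 1 \)), the key set is nonempty, and the names `draw_hash`, `build_two_level`, and `lookup` are hypothetical.

```python
import random

P = 2_147_483_647  # the prime 2^31 - 1; keys are assumed to lie in [0, P)

def draw_hash(n, rng):
    """Draw h(x) = ((a*x + b) mod P) mod n with a != 0 (a 2-universal family)."""
    a, b = rng.randrange(1, P), rng.randrange(P)
    return lambda x: ((a * x + b) % P) % n

def build_two_level(keys, rng=None):
    """Build a static two-level table for a nonempty list of distinct keys."""
    rng = rng or random.Random()
    m = len(keys)
    # Level 1: retry until there are at most m collision pairs.
    while True:
        h1 = draw_hash(m, rng)
        buckets = [[] for _ in range(m)]
        for x in keys:
            buckets[h1(x)].append(x)
        pairs = sum(len(b) * (len(b) - 1) // 2 for b in buckets)
        if pairs <= m:
            break
    # Level 2: a bucket with c items gets c^2 cells; retry until collision-free.
    second = []
    for bucket in buckets:
        size = len(bucket) ** 2
        while True:
            h2 = draw_hash(size, rng) if size else None
            table = [None] * size
            ok = True
            for x in bucket:
                j = h2(x)
                if table[j] is not None:
                    ok = False
                    break
                table[j] = x
            if ok:
                break
        second.append((h2, table))
    return h1, second

def lookup(structure, x):
    """Worst-case O(1) membership test: two hash evaluations, one comparison."""
    h1, second = structure
    h2, table = second[h1(x)]
    return bool(table) and table[h2(x)] == x
```

Because the first level is accepted only when it has at most \( m \) collision pairs, the second-level tables together hold at most \( 3m \) cells.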

Further reading. In practice, simpler schemes like tabulation hashing (using table lookups and XOR) are not even 4-wise independent but still achieve \( O(\log n / \log \log n) \) maximum load. Cuckoo hashing achieves \( O(1) \) worst-case lookup with \( O(n) \) space. The theory of \( k \)-wise independence also plays a central role in derandomization, where one searches over the \( O(n^k) \)-sized sample space to turn randomized algorithms into deterministic ones.

Section 1.6: Data Streaming

In many applications involving massive datasets, the data is too large to store or even to read more than once. Yet we can design sublinear-space algorithms that compute useful statistics in a single pass over the data. Randomness is crucial in this setting: it can be proved that deterministic algorithms cannot guarantee anything nontrivial for these problems.

The data streaming model allows the algorithm to read the data in one sequential pass while maintaining only very limited working memory. A canonical motivating example is network monitoring: routers need good traffic statistics but cannot afford to store all packets.

6.1 Heavy Hitters

Given a data stream \( X_1, X_2, \ldots, X_T \), where each \( X_t = (i_t, c_t) \) consists of an item ID \( i_t \) and a weight \( c_t \), let \( Q = \sum_t c_t \) be the total count and \( \text{Count}(i, T) = \sum_{t: i_t = i} c_t \) the total weight for ID \( i \). Given a threshold \( q \), item \( i \) is a heavy hitter if \( \text{Count}(i, T) \geq q \).

We design an algorithm with the following guarantees: (1) every heavy hitter is reported, and (2) any item with \( \text{Count}(i, T) \leq q - \varepsilon Q \) is reported with probability at most \( \delta \).

Algorithm. Maintain \( k \) independent hash functions \( h_1, \ldots, h_k \), each mapping the universe to \( \{1, \ldots, \ell\} \), chosen from a 2-universal family. Maintain \( k \cdot \ell \) counters \( C_{a,j} \), initially zero. For each data item \( (i_t, c_t) \), increment \( C_{a, h_a(i_t)} \) by \( c_t \) for each \( 1 \leq a \leq k \). Report item \( i \) if \( \min_{a} C_{a, h_a(i)} \geq q \).

Analysis. A heavy hitter is always reported, since its own contributions already push all its counters above \( q \). For a non-heavy-hitter \( i \) with \( \text{Count}(i, T) \leq q - \varepsilon Q \), let \( Z_a \) denote the contribution of other items to counter \( C_{a, h_a(i)} \). By the 2-universal property, \( E[Z_a] \leq Q / \ell \). By Markov’s inequality, \( \Pr(Z_a \geq \varepsilon Q) \leq 1/(\varepsilon \ell) \). Since the hash functions are independent, \( \Pr(\min_a Z_a \geq \varepsilon Q) \leq (1/(\varepsilon \ell))^k \).

Setting \( \ell = e/\varepsilon \) makes each row fail with probability at most \( 1/e \), and \( k = \ln(1/\delta) \) then gives false-positive probability at most \( e^{-k} = \delta \), using only \( O(\frac{1}{\varepsilon} \ln \frac{1}{\delta}) \) counters, a constant for constant \( \varepsilon \) and \( \delta \).
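A minimal sketch of the counter structure follows, assuming integer item IDs below a fixed prime and nonnegative weights; the class name `HeavyHitterSketch` and its methods are hypothetical. It uses \( \ell = \lceil e/\varepsilon \rceil \) columns so that Markov's inequality bounds each row's failure probability by \( 1/e \), and \( k = \lceil \ln(1/\delta) \rceil \) rows.

```python
import math
import random

class HeavyHitterSketch:
    """k rows of ell counters; each row has its own 2-universal hash function."""

    def __init__(self, eps, delta, rng=None, prime=2_147_483_647):
        rng = rng or random.Random()
        self.width = math.ceil(math.e / eps)                   # ell
        self.depth = max(1, math.ceil(math.log(1 / delta)))    # k
        self.prime = prime                                     # item IDs assumed in [0, prime)
        self.params = [(rng.randrange(1, prime), rng.randrange(prime))
                       for _ in range(self.depth)]
        self.counters = [[0] * self.width for _ in range(self.depth)]
        self.total = 0                                         # Q, the total weight

    def _cell(self, row, item):
        a, b = self.params[row]
        return ((a * item + b) % self.prime) % self.width

    def update(self, item, weight=1):
        """Process one stream element (item, weight)."""
        self.total += weight
        for row in range(self.depth):
            self.counters[row][self._cell(row, item)] += weight

    def estimate(self, item):
        """Minimum over rows; never below the true count when weights are >= 0."""
        return min(self.counters[row][self._cell(row, item)]
                   for row in range(self.depth))

    def is_reported(self, item, q):
        return self.estimate(item) >= q
```

Since every counter only overestimates, a true heavy hitter is never missed; the randomness affects false positives only.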

6.2 Estimating Distinct Elements

Given a stream \( a_1, a_2, \ldots, a_n \) where each \( a_i \in \{1, \ldots, m\} \), estimate the number \( D \) of distinct elements using only \( O(\log m) \) space.

Algorithm. Choose a random hash function \( h \) from a strongly 2-universal family mapping \( \{1, \ldots, m\} \to \{1, \ldots, m^3\} \). Maintain the \( t \) smallest hash values seen so far (using a heap). After all data is read, let \( T \) be the \( t \)-th smallest hash value and return \( Y = t \cdot m^3 / T \).

The intuition is that if \( D \) distinct elements have their hash values spread uniformly in \( \{1, \ldots, m^3\} \), the \( t \)-th smallest should be approximately \( t \cdot m^3 / D \), so \( D \approx t \cdot m^3 / T \).

Theorem 6.1. Setting \( t = O(1/\varepsilon^2) \) gives \( (1 - \varepsilon)D \leq Y \leq (1 + \varepsilon)D \) with constant probability.

The proof uses Chebyshev’s inequality, relying on the fact that the hash values of distinct elements are pairwise independent (from the strongly 2-universal family), so the variance of the count of small hash values is manageable. The success probability can be boosted to \( 1 - \delta \) by running \( O(\log(1/\delta)) \) parallel copies and taking the median.

Space. The total space is \( O(\frac{1}{\varepsilon^2} \log \frac{1}{\delta} \log m) \) bits. Kane, Nelson, and Woodruff later gave an optimal algorithm using \( O(1/\varepsilon^2 + \log m) \) space with \( O(1) \) update time.
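The estimator can be sketched in a few lines. This version makes simplifying assumptions that differ from the algorithm above: it hashes into \( \{1, \ldots, P\} \) for the fixed prime \( P = 2^{61} - 1 \) instead of \( \{1, \ldots, m^3\} \), uses the linear family \( (ax + b) \bmod P \), and returns the exact count when fewer than \( t \) distinct hash values are seen. The function name `estimate_distinct` is hypothetical.

```python
import heapq
import random

P = (1 << 61) - 1  # a Mersenne prime; stream items are assumed to be integers in [0, P)

def estimate_distinct(stream, t, rng=None):
    """Keep the t smallest distinct hash values and return t * P / (t-th smallest)."""
    rng = rng or random.Random()
    a, b = rng.randrange(P), rng.randrange(P)
    heap = []     # max-heap via negation: -heap[0] is the largest kept value
    kept = set()  # the same values, for O(1) duplicate detection
    for x in stream:
        v = (a * x + b) % P + 1          # hash value in {1, ..., P}
        if v in kept:
            continue
        if len(heap) < t:
            heapq.heappush(heap, -v)
            kept.add(v)
        elif v < -heap[0]:
            evicted = -heapq.heappushpop(heap, -v)  # drops the current largest
            kept.discard(evicted)
            kept.add(v)
    if len(heap) < t:
        return len(heap)                 # fewer than t distinct hash values seen
    return t * P / (-heap[0])
```

The working memory is \( t \) hash values plus the two hash coefficients, independent of the stream length.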

6.3 Frequency Moments

For a stream \( a_1, \ldots, a_n \) over universe \( \{1, \ldots, m\} \), let \( x_i \) be the number of occurrences of item \( i \). The \( p \)-th frequency moment is \( F_p = \sum_{i=1}^m x_i^p \). When \( p = 0 \) this is the distinct-elements problem; \( p = 1 \) is trivial (just the stream length). We focus on \( p = 2 \).

Algorithm (AMS Sketch). Choose random signs \( r_1, \ldots, r_m \in \{+1, -1\} \) with equal probability. Maintain \( Y = \sum_{i=1}^m r_i x_i \) (updated incrementally as items arrive). Return \( Y^2 \).

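Analysis. Since \( E[r_i r_j] = \begin{cases} 1 & \text{if } i = j \\ 0 & \text{if } i \neq j \end{cases} \), we get \( E[Y^2] = \sum_{i=1}^m x_i^2 = F_2 \). Computing the fourth moment shows \( \text{Var}[Y^2] \leq 2(E[Y^2])^2 \), so the standard deviation of \( Y^2 \) is at most \( \sqrt{2} \) times its mean. By Chebyshev’s inequality, a single copy of \( Y^2 \) exceeds a constant multiple of \( F_2 \) only with small constant probability; a two-sided guarantee requires averaging several copies to reduce the variance.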

For a \( (1 \pm \varepsilon) \)-approximation, average \( O(1/\varepsilon^2) \) independent copies. The total space is \( O(1/\varepsilon^2) \) numbers.

A crucial observation is that the analysis only requires the random signs to be 4-wise independent, so that the calculations of \( E[r_i r_j] \) and \( E[r_i r_j r_k r_\ell] \) remain valid. Since \( m \) values of 4-wise independent bits can be generated from only \( O(\log m) \) random bits, the extra storage is just \( O(\log m) \) bits.
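For a tiny universe, the expectation and variance claims can be verified exactly by enumerating every sign vector. The sketch below assumes items are labeled \( 0, \ldots, m-1 \) and uses fully independent signs rather than a 4-wise independent generator; the function names are hypothetical.

```python
from itertools import product

def ams_estimate(stream, signs):
    """One AMS estimator: maintain Y = sum_i r_i x_i incrementally, return Y^2."""
    y = 0
    for item in stream:
        y += signs[item]   # each arrival of item i adds r_i to Y
    return y * y

def f2(stream):
    """Exact second frequency moment, for comparison."""
    counts = {}
    for item in stream:
        counts[item] = counts.get(item, 0) + 1
    return sum(c * c for c in counts.values())

def exact_mean_and_variance(stream, m):
    """Mean and variance of Y^2 over all 2^m equally likely sign vectors."""
    vals = [ams_estimate(stream, signs) for signs in product([1, -1], repeat=m)]
    mean = sum(vals) / len(vals)
    var = sum((v - mean) ** 2 for v in vals) / len(vals)
    return mean, var
```

On the stream `[0, 1, 1, 2, 2, 2, 3]` the frequencies are \( (1, 2, 3, 1) \), so \( F_2 = 15 \); the enumeration returns mean exactly 15 and a variance below \( 2 F_2^2 = 450 \).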

This sketching technique is closely related to dimension reduction and is one of the most important ideas in data streaming.

For higher moments: estimating \( F_p \) for \( p > 2 \) requires \( \Theta(n^{1 - 2/p} \cdot \text{polylog}(n)) \) space, while for \( 0 \leq p \leq 2 \), \( O(\text{polylog}(m)) \) space suffices.

Section 1.7: Algebraic Techniques

Simple algebraic ideas — fingerprinting via polynomials and the Schwartz–Zippel lemma — have surprisingly powerful applications in algorithm design, from verifying computations to designing fast parallel algorithms for matching.

7.1 String Equality and Fingerprinting

Problem. Alice holds a binary string \( a_1 \ldots a_n \) and Bob holds \( b_1 \ldots b_n \). They want to check equality using as few communicated bits as possible.

Deterministically, \( \Omega(n) \) bits are necessary (a result from communication complexity). But a randomized protocol needs only \( O(\log n) \) bits:

Algorithm. Interpret the strings as polynomials \( A(x) = \sum_{i=1}^n a_i x^i \) and \( B(x) = \sum_{i=1}^n b_i x^i \) over a finite field \( \mathbb{F} \) with \( |\mathbb{F}| \approx 1000n \). Alice picks a random \( r \in \mathbb{F} \), sends \( r \) and \( A(r) \) to Bob. Bob returns “consistent” if \( A(r) = B(r) \) and “inconsistent” otherwise.

If the strings are equal, \( A \equiv B \) and the algorithm always says “consistent.” If they differ, \( (A - B)(x) \) is a nonzero polynomial of degree at most \( n \) with at most \( n \) roots, so \( \Pr(\text{error}) \leq n / |\mathbb{F}| \approx 0.001 \). Using a field of size at least \( n^2 \) gives error probability at most \( 1/n \) while still sending only \( O(\log n) \) bits.
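The protocol can be sketched over a prime field \( \mathbb{F}_p \). The prime \( p = 10007 \) in the usage below and the function names are illustrative assumptions. The helper `count_false_positives` enumerates every \( r \) to confirm that at most \( n \) choices fool Bob.

```python
import random

def poly_eval(bits, r, p):
    """Evaluate sum_{i=1}^n bits[i-1] * r^i mod p by Horner's rule."""
    acc = 0
    for bit in reversed(bits):
        acc = (acc + bit) * r % p
    return acc

def alice_message(a_bits, p, rng):
    """Alice picks r uniformly from F_p and sends (r, A(r))."""
    r = rng.randrange(p)
    return r, poly_eval(a_bits, r, p)

def bob_check(b_bits, message, p):
    """Bob answers 'consistent' (True) exactly when B(r) equals the fingerprint."""
    r, fingerprint = message
    return poly_eval(b_bits, r, p) == fingerprint

def count_false_positives(a_bits, b_bits, p):
    """Number of r in F_p on which two strings have equal fingerprints."""
    return sum(poly_eval(a_bits, r, p) == poly_eval(b_bits, r, p)
               for r in range(p))
```

Alice's message consists of two field elements, about \( 2 \log_2 p \) bits in total.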

7.2 The Schwartz–Zippel Lemma

Lemma 7.1 (Schwartz–Zippel). Let \( Q(x_1, \ldots, x_n) \) be a nonzero multivariate polynomial of total degree \( d \) over a field \( \mathbb{F} \). For any finite set \( S \subseteq \mathbb{F} \) and independently uniform random \( r_1, \ldots, r_n \in S \),

\[ \Pr[Q(r_1, \ldots, r_n) = 0] \leq \frac{d}{|S|}. \]

Proof. By induction on \( n \). The base case \( n = 1 \) is the fact that a nonzero univariate polynomial of degree \( d \) has at most \( d \) roots. For the inductive step, write \( Q = \sum_{i=0}^k x_1^i Q_i(x_2, \ldots, x_n) \), where \( k \) is the largest exponent of \( x_1 \) appearing in \( Q \), so that \( Q_k \not\equiv 0 \) and \( Q_k \) has total degree at most \( d - k \). By induction, \( \Pr(Q_k(r_2, \ldots, r_n) = 0) \leq (d-k)/|S| \). Conditioned on \( Q_k(r_2, \ldots, r_n) \neq 0 \), the polynomial \( Q(x_1, r_2, \ldots, r_n) \) is a nonzero univariate polynomial of degree \( k \) in \( x_1 \), so \( \Pr(Q(r_1, \ldots, r_n) = 0 \mid Q_k \neq 0) \leq k/|S| \). Combining, \( \Pr(Q = 0) \leq \Pr(Q_k = 0) + \Pr(Q = 0 \mid Q_k \neq 0) \leq (d-k)/|S| + k/|S| = d/|S| \). \( \square \)
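On a small field the bound can be checked by counting zeros directly. The helper `count_zeros` and the two example polynomials are illustrative choices, with \( S = \mathbb{F}_7 \).

```python
from itertools import product

def count_zeros(poly, num_vars, s_values, p):
    """Count points of S^n on which the polynomial vanishes modulo p."""
    return sum(poly(*point) % p == 0
               for point in product(s_values, repeat=num_vars))

def q_mixed(x, y, z):
    """Total degree 3: x*y*z + x^2 - y."""
    return x * y * z + x * x - y

def q_tight(x, y):
    """Total degree 3, depends on x only: x(x-1)(x-2)."""
    return x * (x - 1) * (x - 2)
```

The lemma predicts at most \( d \cdot |S|^{n-1} \) zeros among the \( |S|^n \) points. The second polynomial attains this bound exactly (21 of 49 points), so the bound cannot be improved in general.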

7.3 Randomized Matching via Determinants

Bipartite matching. Given a bipartite graph \( G = (U, V; E) \), define the \( n \times n \) matrix \( A \) with \( A_{ij} = x_{ij} \) if \( u_i v_j \in E \) and 0 otherwise.

Theorem 7.2 (Edmonds). \( G \) has a perfect matching if and only if \( \det(A) \not\equiv 0 \).

This follows because each nonzero term in the determinant expansion \( \det(A) = \sum_\sigma \text{sign}(\sigma) \prod_i A_{i,\sigma(i)} \) corresponds to a permutation \( \sigma \) defining a perfect matching \( \{u_i v_{\sigma(i)}\} \). Since each term uses a different subset of variables, nonzero terms cannot cancel.

Randomized algorithm. Choose a prime \( p \geq 100n \), substitute independent random values from \( \{0, \ldots, p-1\} \) for each variable, and compute the numerical determinant by Gaussian elimination in \( O(n^3) \) time (or \( O(n^\omega) \) with fast matrix multiplication, \( \omega \approx 2.37 \)). By Schwartz–Zippel, the error probability is at most \( n/p \leq 1/100 \).
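The randomized test can be sketched as below, assuming vertices on each side are numbered \( 0, \ldots, n-1 \) and using the fixed prime \( 2^{61} - 1 \) (far larger than the \( 100n \) required above). The names `det_mod_p` and `has_perfect_matching` are hypothetical.

```python
import random

P = (1 << 61) - 1  # a Mersenne prime, used as the field size

def det_mod_p(matrix, p):
    """Determinant over F_p by Gaussian elimination, O(n^3) field operations."""
    a = [row[:] for row in matrix]
    n = len(a)
    det = 1
    for col in range(n):
        pivot = next((r for r in range(col, n) if a[r][col] % p), None)
        if pivot is None:
            return 0                      # no pivot in this column: singular
        if pivot != col:
            a[col], a[pivot] = a[pivot], a[col]
            det = -det                    # a row swap flips the sign
        det = det * a[col][col] % p
        inv = pow(a[col][col], p - 2, p)  # inverse via Fermat's little theorem
        for r in range(col + 1, n):
            factor = a[r][col] * inv % p
            if factor:
                for c in range(col, n):
                    a[r][c] = (a[r][c] - factor * a[col][c]) % p
    return det % p

def has_perfect_matching(n, edges, rng=None, p=P):
    """One-sided test: True is always correct; False errs with probability <= n/p."""
    rng = rng or random.Random()
    a = [[0] * n for _ in range(n)]
    for i, j in edges:                    # edge u_i v_j gets a random field element
        a[i][j] = rng.randrange(p)
    return det_mod_p(a, p) != 0
```

The error is one-sided because a graph without a perfect matching has an identically zero determinant, so every substitution evaluates to 0.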

General graphs. For non-bipartite graphs, replace the matrix above with the skew-symmetric Tutte matrix: for each edge \( e = ij \) with \( i < j \), set \( A_{ij} = x_e \) and \( A_{ji} = -x_e \), and set all other entries to 0. Tutte’s theorem states that \( G \) has a perfect matching if and only if \( \det(A) \not\equiv 0 \).

7.4 Parallel Algorithms and the Isolation Lemma

The algebraic approach is especially powerful for parallel computation, because determinants can be computed in \( O(\log^2 n) \) time using \( O(n^{4.2}) \) processors. This immediately gives an efficient parallel algorithm for deciding whether a perfect matching exists. But finding one is harder: different processors must coordinate to select the same matching.

Lemma 7.3 (Isolation Lemma). Given a set system on \( n \) elements, assign each element an independent random weight from \( \{1, \ldots, 2n\} \). With probability at least \( 1/2 \), there is a unique minimum-weight set.

This is counterintuitive: a set system may have \( 2^n \) sets, yet a random weighting isolates a unique minimum with constant probability. The proof shows that an element \( v \) is “ambiguous” (could be in or out of the minimum-weight set) with probability at most \( 1/(2n) \), and by the union bound, all elements are unambiguous with probability at least \( 1/2 \).

Application to matching. Assign random weights from \( \{1, \ldots, 2m\} \) to edges and substitute \( x_e = 2^{w(e)} \). With probability \( \geq 1/2 \), there is a unique minimum-weight perfect matching, and its weight \( W \) can be read off from the highest power of 2 dividing \( \det(A) \): this power is \( 2^W \) for the bipartite matrix of Section 7.3 and \( 2^{2W} \) for the Tutte matrix, whose determinant is the square of its Pfaffian. Each edge \( e = uv \) belongs to this matching if and only if the minimum-weight perfect matching in \( G - \{u, v\} \) has weight \( W - w(e) \). All these checks can be done in parallel.

Open problems. Finding a deterministic parallel algorithm for matching remains a major open question. Recent progress on derandomizing the isolation lemma has led to algorithms using \( n^{O(\log n)} \) processors but not yet polynomial parallelism.

Section 1.8: Network Coding

Network coding is a revolutionary approach to data transmission in networks. In traditional networking, intermediate nodes simply store and forward data: they act as switching nodes that relay packets without modification. Network coding breaks this paradigm: intermediate nodes are permitted to perform algebraic operations on the data they receive, combining incoming messages before forwarding. This seemingly small change can dramatically increase throughput for multicasting problems, and the analysis beautifully applies the Schwartz–Zippel lemma from Section 1.7.

8.1 The Multicasting Problem

Given a directed acyclic graph \( G = (V, E) \), a source vertex \( s \), and a set of receivers \( \{t_1, \ldots, t_\ell\} \subseteq V \), the multicasting problem asks how quickly \( s \) can send data to all receivers simultaneously. Each directed edge can carry one unit of data per time step, and the objective is to maximize the transmission rate.

In traditional store-and-forward networks, the analysis reduces to a tree-packing problem. A rate of \( k \) requires \( k \) edge-disjoint trees, each connecting \( s \) to all receivers. This is computationally hard to optimize, and worse, it can be far from optimal. The critical insight is that even when there are \( k \) edge-disjoint paths from \( s \) to each individual receiver \( t_i \), there may be far fewer edge-disjoint trees spanning \( s \) and all receivers simultaneously.

The butterfly network is the canonical example illustrating this gap. Consider a source \( s \) with two messages \( x_1, x_2 \), two receivers \( t_1, t_2 \), and a central bottleneck edge that both receivers depend on. With traditional routing, the bottleneck edge can carry at most one unit of data per step, limiting throughput to 1. But there are two edge-disjoint paths to each individual receiver. Network coding resolves this: the bottleneck node sends \( x_1 \oplus x_2 \) (the XOR of both messages). Receiver \( t_1 \), having received \( x_1 \) via a direct path, can recover \( x_2 = x_1 \oplus (x_1 \oplus x_2) \). Similarly, receiver \( t_2 \), having received \( x_2 \), recovers \( x_1 \). Both receivers decode both messages in one time step — achieving rate 2, matching the min-cut.
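The decoding step in the butterfly example is a one-line XOR identity. The function name `butterfly` is hypothetical; messages may be single bits or integers treated as bit strings.

```python
def butterfly(x1, x2):
    """Return what each receiver decodes when the bottleneck carries x1 XOR x2."""
    coded = x1 ^ x2               # sent once over the shared bottleneck edge
    t1 = (x1, x1 ^ coded)         # t1 gets x1 directly and recovers x2
    t2 = (x2 ^ coded, x2)         # t2 gets x2 directly and recovers x1
    return t1, t2
```

Both receivers end up with the pair \( (x_1, x_2) \), although the bottleneck edge carried only one unit of data.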

The butterfly itself exhibits only a constant-factor gap; on larger networks built on the same idea, the gap between tree-packing and network coding throughput can grow without bound.

8.2 The Max-Information-Flow Min-Cut Theorem

Network coding achieves the information-theoretic optimum.

Theorem 8.1 (Max-Information-Flow Min-Cut, Ahlswede–Cai–Li–Yeung 2000). If the source \( s \) has \( k \) edge-disjoint paths to each receiver \( t_i \), then using network coding, the source can send \( k \) units of data to all receivers simultaneously.

The optimality direction is clear: any \( s \)-\( t_i \) cut of size \( k \) limits throughput to \( k \) regardless of the coding scheme. The theorem states that this information-theoretic bound is always achievable. This is the deep and surprising direction: not only is the min-cut the right answer for each individual receiver, it is simultaneously achievable for all receivers at once, something entirely impossible without coding.

This result opened a new research area. The ratio of network-coding throughput to tree-packing throughput can grow without bound, so the improvement from allowing coding is not merely a constant factor but can be asymptotically unbounded.

8.3 Linear Network Coding

A further simplification makes the result practical.

Theorem 8.2 (Linear Coding Suffices). Linear network coding achieves the optimal multicasting rate.

In linear network coding, the data transmitted on each edge is always a linear combination of the incoming messages at that node, computed over a finite field \( \mathbb{F} \). Formally, if node \( v \) receives messages \( m_1, \ldots, m_\ell \) on its incoming edges, then on each outgoing edge \( e \) it sends \( \sum_{j=1}^{\ell} b_j^{(e)} m_j \) for some local encoding coefficients \( b_j^{(e)} \in \mathbb{F} \). The key simplification is that general non-linear operations are unnecessary.

By induction on the topological ordering, every edge in the network carries a linear combination of the original source messages \( x_1, \ldots, x_k \). Explicitly, edge \( e \) carries \( c_1 x_1 + c_2 x_2 + \cdots + c_k x_k \) for some coefficients \( c_1, \ldots, c_k \in \mathbb{F} \). The vector \( (c_1, \ldots, c_k) \) is called the global encoding vector of edge \( e \). Once all local encoding coefficients are fixed, all global encoding vectors are determined.

A receiver \( t \) with \( k \) incoming edges receives \( k \) messages. Arranging the global encoding vectors of these \( k \) edges as rows of a matrix \( C \), receiver \( t \) can recover \( (x_1, \ldots, x_k) \) uniquely by solving the linear system if and only if \( C \) is invertible, i.e., \( \det(C) \neq 0 \).

8.4 Randomized Distributed Algorithm

The key algorithmic question is how to choose the local encoding coefficients efficiently. Deterministic algorithms exist but require a central authority to inspect the entire network topology. The following randomized algorithm is far more practical.

Algorithm. Choose a prime field \( \mathbb{F} \) with \( |\mathbb{F}| \geq kn^3 \). The source sends \( x_i \) on its \( i \)-th outgoing edge. Process remaining vertices in topological order. At each vertex \( v \), for each outgoing edge \( e \), draw the local encoding coefficients \( b_1^{(e)}, \ldots, b_\ell^{(e)} \) independently and uniformly at random from \( \mathbb{F} \), and transmit the resulting linear combination.

This algorithm is fully decentralized: each node independently draws its own random coefficients with no knowledge of other nodes’ choices, and no central coordination. The total communication overhead is negligible (the field size is polynomial in \( n \) and \( k \)).

Analysis. The correctness argument ties directly back to the Schwartz–Zippel lemma.

Step 1: The degree bound. For vertex \( v_i \) in the topological ordering \( v_1 = s, v_2, \ldots, v_n \), each entry of the global encoding vector on any outgoing edge of \( v_i \) is a multivariate polynomial in the local encoding coefficients of total degree at most \( i - 1 \). The proof is by induction: the base case is that the \( j \)-th outgoing edge of the source carries the unit vector \( e_j \), which is a degree-0 polynomial. Each local encoding step adds at most 1 to the degree. Consequently, each entry of the receiver matrix \( C \) is a polynomial of total degree at most \( n - 1 \), and \( \det(C) \) is a polynomial of total degree at most \( kn \).

Step 2: The polynomial is nonzero. If the source has \( k \) edge-disjoint paths to receiver \( t \), we exhibit one assignment of local coefficients making \( \det(C) \neq 0 \). Use the \( k \) edge-disjoint paths to implement traditional store-and-forward: on the \( j \)-th path, forward message \( x_j \) unchanged (i.e., set local encoding coefficients to 0 or 1 to isolate each path). Then the global encoding vectors of the \( k \) incoming edges of \( t \) are exactly the \( k \) standard basis vectors \( e_1, \ldots, e_k \), so \( C = I \) and \( \det(C) = 1 \neq 0 \). Thus the polynomial \( \det(C) \) is not identically zero.

Step 3: Schwartz–Zippel. Since \( \det(C) \) is a nonzero polynomial of total degree at most \( kn \) in the local encoding coefficients, and we evaluate it at uniformly random points in \( \mathbb{F}^m \) (where \( m \) is the number of local coefficients), the Schwartz–Zippel lemma gives

\[ \Pr\bigl(\det(C) = 0\bigr) \leq \frac{kn}{|\mathbb{F}|} \leq \frac{kn}{kn^3} = \frac{1}{n^2}. \]

Taking a union bound over all \( \ell \leq n \) receivers, every receiver decodes correctly simultaneously with probability at least \( 1 - n \cdot (1/n^2) = 1 - 1/n \).
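The randomized algorithm can be traced on the butterfly network with \( k = 2 \). The edge list, vertex names, and helper names below are illustrative assumptions. Edges are listed so that every edge entering a vertex appears before the edges leaving it, which stands in for processing vertices in topological order.

```python
import random

# Butterfly network: s feeds a and b; c -> d is the shared bottleneck.
EDGES = [("s", "a"), ("s", "b"), ("a", "t1"), ("a", "c"),
         ("b", "t2"), ("b", "c"), ("c", "d"), ("d", "t1"), ("d", "t2")]

def global_vectors(k, p, coeff):
    """Global encoding vector of each edge; coeff(f, e) is the local coefficient
    applied to incoming edge f when forming the message on outgoing edge e."""
    vec = {}
    source_out = 0
    for e in EDGES:
        tail = e[0]
        if tail == "s":
            # The i-th outgoing edge of the source carries x_i (a unit vector).
            vec[e] = [1 if j == source_out else 0 for j in range(k)]
            source_out += 1
        else:
            incoming = [f for f in EDGES if f[1] == tail]
            vec[e] = [sum(coeff(f, e) * vec[f][j] for f in incoming) % p
                      for j in range(k)]
    return vec

def receiver_det(vec, t, p):
    """det(C) for a receiver with exactly two incoming edges (k = 2)."""
    (a, b), (c, d) = [vec[e] for e in EDGES if e[1] == t]
    return (a * d - b * c) % p

def random_coeffs(p, rng):
    """Independent uniform local coefficients, drawn on first use and cached."""
    cache = {}
    def coeff(f, e):
        if (f, e) not in cache:
            cache[(f, e)] = rng.randrange(p)
        return cache[(f, e)]
    return coeff
```

With all coefficients equal to 1 over \( \mathbb{F}_2 \), this reproduces the XOR scheme: the bottleneck edge carries the vector \( (1, 1) \) and both receiver determinants are nonzero.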

8.5 Efficient Encoding via Superconcentrators

One practical concern is computational efficiency. If a node has \( \ell \) incoming edges each carrying a \( d \)-dimensional vector (i.e., \( d \) parallel streams), the encoding work for each outgoing edge is \( O(d\ell) \) (one inner product), and for all outgoing edges together is \( O(d\ell^2) \). In dense graphs this can be expensive.

The key observation is that encoding is cheap when the in-degree is small. This motivates transforming the graph to have bounded in-degree without sacrificing connectivity.

A superconcentrator is a directed acyclic graph with \( n \) input vertices and \( n \) output vertices, \( O(n) \) total vertices and edges, and the property that for any \( k \) and any \( k \) input vertices \( S \) and \( k \) output vertices \( T \), there exist \( k \) vertex-disjoint paths from \( S \) to \( T \). Crucially, every vertex in a superconcentrator has constant in-degree and out-degree.

Given any high-degree vertex \( v \) in the original network, we can replace it by a superconcentrator of size \( O(\deg(v)) \). The resulting graph preserves all \( k \)-connectivity properties (since superconcentrators by definition support \( k \)-way connections), but now every vertex has constant in-degree. After this reduction, encoding at each vertex takes \( O(d) \) time for all its outgoing edges combined, and the total encoding work across all vertices is \( O(dn) \), which is optimal (one cannot avoid writing down the \( n \) output vectors).

The existence of superconcentrators with \( O(n) \) edges was proved by Valiant (1975), disproving his own earlier conjecture that they did not exist. The construction uses magical graphs — sparse bipartite expanders that are themselves constructed probabilistically (see Section 9.1).

This section illustrates a recurring theme: randomization makes an otherwise centralized and computationally-intensive task (optimal network coding) into a simple, distributed, and efficient one. The correctness proof directly applies the polynomial identity testing framework from Section 1.7, showing how algebraic techniques unify seemingly disparate algorithmic problems.

Section 1.9: Probabilistic Methods

The probabilistic method proves the existence of combinatorial objects by showing that a random construction succeeds with positive probability. This elegant technique, pioneered by Erdős, has become one of the most powerful tools in combinatorics. We study three variants: the first moment method, the second moment method, and the Lovász Local Lemma.

9.1 The First Moment Method

The simplest form: if \( E[X] < 1 \) for a non-negative integer-valued random variable \( X \), then \( \Pr(X = 0) > 0 \), so there exists an outcome with \( X = 0 \).

Ramsey Graphs

Can we 2-color the edges of the complete graph \( K_n \) so that there is no monochromatic clique of size \( k \)?

Theorem 9.1. If \( \binom{n}{k} \cdot 2^{1 - \binom{k}{2}} < 1 \), then such a coloring exists.

Proof. Color each edge uniformly at random. The probability that a fixed \( k \)-subset is monochromatic is \( 2 \cdot 2^{-\binom{k}{2}} \). By the union bound, the probability that some \( k \)-subset is monochromatic is at most \( \binom{n}{k} \cdot 2^{1 - \binom{k}{2}} < 1 \). Hence some coloring avoids all monochromatic \( K_k \). \( \square \)

This is satisfied for \( k \geq 2\log_2 n \), proving that a random 2-coloring of \( K_n \) has no monochromatic clique of size \( 2\log_2 n \) with high probability. Remarkably, no deterministic algorithm is known that constructs a coloring avoiding monochromatic cliques of size \( (1 + \varepsilon) \log_2 n \).
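The condition of Theorem 9.1 is easy to evaluate exactly with rational arithmetic. The helper names `union_bound` and `smallest_safe_k` below are hypothetical.

```python
from fractions import Fraction
from math import comb

def union_bound(n, k):
    """Exact value of C(n, k) * 2^(1 - C(k, 2))."""
    return Fraction(2 * comb(n, k), 2 ** comb(k, 2))

def smallest_safe_k(n):
    """Smallest k >= 2 for which the condition of Theorem 9.1 holds."""
    k = 2
    while union_bound(n, k) >= 1:
        k += 1
    return k
```

For \( n = 1000 \), where \( 2\log_2 n \approx 19.93 \), the bound at \( k = 20 \) is far below 1. In general, \( \binom{n}{k} \leq n^k / k! \) and \( n^k = 2^{k^2/2} \) at \( k = 2\log_2 n \), so the bound is at most \( 2^{1 + k/2} / k! \), which is less than 1 for \( k \geq 3 \).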

Magical Graphs and Superconcentrators

A magical graph is a sparse bipartite graph \( G = (U, W; E) \) where every subset \( S \subseteq U \) with \( |S| \leq |U|/2 \) has a perfect matching into \( W \). Such graphs exist with \( |W| = 3|U|/4 \) and constant degree \( d \geq 8 \), proved by the first moment method on random bipartite graphs with each left vertex connected to \( d \) random right vertices.

Magical graphs are the building blocks for superconcentrators: directed acyclic graphs with \( n \) inputs and \( n \) outputs such that any \( k \) inputs can be connected to any \( k \) outputs by vertex-disjoint paths. Valiant showed that superconcentrators with \( O(n) \) edges exist, using a recursive construction based on magical graphs (disproving his own conjecture that \( O(n) \) was impossible). These structures have applications in network coding, sorting networks, and complexity theory.

9.2 The Second Moment Method

The first moment method shows \( X = 0 \) with high probability when \( E[X] \to 0 \). To show \( X \geq 1 \) when \( E[X] \to \infty \), we need concentration:

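Theorem 9.2 (Second Moment Method). If \( X \) is an integer-valued random variable with \( E[X] > 0 \), then \( \Pr(X = 0) \leq \text{Var}[X] / E[X]^2 \).

This follows directly from Chebyshev’s inequality: \( X = 0 \) implies \( |X - E[X]| \geq E[X] \), and \( \Pr(|X - E[X]| \geq E[X]) \leq \text{Var}[X] / E[X]^2 \).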

Threshold Phenomena in Random Graphs

In the Erdős–Rényi model \( G(n, p) \), each edge appears independently with probability \( p \). Many graph properties exhibit threshold behavior: there exists a function \( f(n) \) such that the property almost surely fails when \( p \ll f(n) \) and almost surely holds when \( p \gg f(n) \).

Example (4-cliques). Let \( X \) count the number of 4-cliques. Then \( E[X] = \binom{n}{4} p^6 \). When \( p = o(n^{-2/3}) \), \( E[X] \to 0 \) and the first moment method gives \( \Pr(X = 0) \to 1 \). When \( p = \omega(n^{-2/3}) \), \( E[X] \to \infty \), and the second moment method (verifying \( \text{Var}[X] = o(E[X]^2) \)) shows \( \Pr(X \geq 1) \to 1 \). The threshold function is \( f(n) = n^{-2/3} \).

Example (Diameter 2). The property that \( G(n, p) \) has diameter at most 2 has a sharp threshold at \( p = \sqrt{2 \ln n / n} \). The proof defines a pair \( (i, j) \) as “bad” if there is no edge between them and no common neighbor. For \( p = \sqrt{c \ln n / n} \), the expected number of bad pairs is \( \sim n^{2-c}/2 \). When \( c > 2 \), this tends to 0 (first moment method). When \( c < 2 \), a careful second-moment calculation shows the number of bad pairs is positive with high probability.

Algorithmic gap. The second moment method shows \( G(n, 1/2) \) almost surely has a clique of size \( 2\log_2 n \), but the best known polynomial-time algorithm finds cliques of only \( \sim \log_2 n \). This gap is believed to be inherent and connects to average-case complexity.

9.3 The Lovász Local Lemma

When bad events are not independent but have limited dependencies, the Lovász Local Lemma provides a powerful existence tool.

Theorem 9.3 (Lovász Local Lemma). Let \( E_1, \ldots, E_n \) be events with \( \Pr(E_i) \leq p \) for all \( i \). If each event is mutually independent of all but at most \( d \) other events, and \( 4dp \leq 1 \), then

\[ \Pr\left(\bigcap_{i=1}^n \overline{E_i}\right) > 0. \]This can be interpreted as: if the union bound holds locally (in the dependency neighborhood of each event), then a good outcome exists. The proof proceeds by induction, showing that \( \Pr(E_k \mid \bigcap_{i \in S} \overline{E_i}) \leq 2p \) for all \( k \) and subsets \( S \) not containing \( k \).

Application: Satisfiability of Sparse \( k \)-SAT

Theorem 9.4. If each variable in a \( k \)-SAT formula appears in at most \( T = 2^k / (4k) \) clauses, then the formula is satisfiable.

Proof. Consider a random truth assignment. The probability that a specific clause is unsatisfied is \( p = 2^{-k} \). Each clause shares variables with at most \( d = kT \leq 2^{k-2} \) other clauses. Since \( 4dp \leq 4 \cdot 2^{k-2} \cdot 2^{-k} = 1 \), the Local Lemma guarantees a satisfying assignment. \( \square \)
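The condition used in this proof is easy to check mechanically for a concrete formula. The sketch below assumes clauses are lists of nonzero integers (`v` for a variable, `-v` for its negation) and that every clause has exactly \( k \) distinct variables; the name `lll_condition_holds` is hypothetical. It computes the true dependency degree \( d \) of the formula rather than the bound \( kT \).

```python
from fractions import Fraction

def lll_condition_holds(clauses: list[list[int]]) -> bool:
    """Check 4*d*p <= 1 for a k-CNF formula under a uniform random assignment.

    p = 2^-k is the probability that a fixed clause is violated, and d is the
    largest number of other clauses sharing a variable with any one clause.
    """
    k = len(clauses[0])
    var_sets = [frozenset(abs(lit) for lit in clause) for clause in clauses]
    assert all(len(vs) == k for vs in var_sets), "expected k distinct variables per clause"
    p = Fraction(1, 2 ** k)
    # Quadratic scan over clause pairs; fine for a small illustration.
    d = max(
        sum(1 for j, other in enumerate(var_sets) if j != i and vs & other)
        for i, vs in enumerate(var_sets)
    )
    return 4 * d * p <= 1
```

For example, the chain `[[1, 2, 3, 4], [4, 5, 6, 7], [7, 8, 9, 10]]` has \( k = 4 \) and \( d = 2 \), so \( 4dp = 1/2 \) and the condition holds.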

Application: Edge-Disjoint Paths

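Theorem 9.5. Suppose each of \( k \) source-sink pairs has a collection of \( L \) candidate paths, and each candidate path shares edges with at most \( C \) of the candidate paths of any other pair. If \( 8kC \leq L \), then one path can be chosen for each pair so that the chosen paths are edge-disjoint.

The proof picks one candidate path for each pair independently and uniformly at random and applies the Local Lemma to the bad events \( E_{i,j} \) that the paths chosen for pairs \( i \) and \( j \) share an edge. Each \( E_{i,j} \) has probability at most \( p = C/L \) and depends on fewer than \( d = 2k \) other events (those involving pair \( i \) or pair \( j \)), so \( 4dp \leq 8kC/L \leq 1 \).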

9.4 Making the Local Lemma Constructive

The original Local Lemma is non-constructive. For decades, efficient algorithms to find the guaranteed good outcomes were elusive. In 2009, Robin Moser gave a remarkably simple algorithm for \( k \)-SAT:

Start with a random assignment. Scan clauses in order. If clause \( C_i \) is unsatisfied, resample its variables randomly, and recursively fix any clauses that share variables with \( C_i \) and become unsatisfied.

Analysis. Moser’s brilliant insight was an incompressibility argument. On a formula with \( n \) variables and \( m \) clauses, if the algorithm performs \( t \) resampling steps, it uses \( n + tk \) random bits: \( n \) for the initial assignment and \( k \) per resampled clause. But the execution trace can be encoded in approximately \( n + t(\log_2 d + 2) \) bits, from which the original random bits can be fully recovered. If \( k > \log_2 d + 2 \) (equivalently, \( d < 2^{k-2} \)), this encoding is shorter than the original random string once \( t \) is large. Since random strings cannot be compressed, the algorithm must terminate quickly, specifically in \( O(m \log m) \) steps with high probability.
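The resampling procedure described above can be sketched in a few lines. This is an illustrative version, not Moser’s original presentation: the recursion is unrolled into an explicit stack, literals are encoded as nonzero integers (`v` true, `-v` false), and the names `violated`, `moser_fix`, and the safety cap `max_resamples` are assumptions of the sketch.

```python
import random

def violated(clause, assignment):
    """A clause is violated when every one of its literals is false."""
    return all(assignment[abs(lit)] != (lit > 0) for lit in clause)

def moser_fix(num_vars, clauses, seed=0, max_resamples=1_000_000):
    """Return (assignment, resample_count) for a CNF formula."""
    rng = random.Random(seed)
    assignment = {v: rng.random() < 0.5 for v in range(1, num_vars + 1)}
    var_sets = [{abs(lit) for lit in clause} for clause in clauses]
    # neighbours[i]: clauses sharing a variable with clause i (including i itself).
    neighbours = [
        [j for j, other in enumerate(var_sets) if vs & other]
        for vs in var_sets
    ]
    resamples = 0

    def resample(i):
        nonlocal resamples
        resamples += 1
        if resamples > max_resamples:
            raise RuntimeError("resample budget exhausted")
        for v in var_sets[i]:
            assignment[v] = rng.random() < 0.5

    # Scan clauses in order; fix each violated clause and its neighbourhood.
    for i, clause in enumerate(clauses):
        if not violated(clause, assignment):
            continue
        resample(i)
        stack = [i]
        while stack:
            top = stack[-1]
            bad = next(
                (j for j in neighbours[top] if violated(clauses[j], assignment)),
                None,
            )
            if bad is None:
                stack.pop()        # every clause near `top` is now satisfied
            else:
                resample(bad)      # plays the role of the recursive call on `bad`
                stack.append(bad)
    return assignment, resamples
```

A small usage example: `moser_fix(6, [[1, 2, 3], [-1, 4, 5], [-2, -4, 6], [3, -5, -6], [-3, 5, -6]])` returns an assignment under which no clause is violated. The termination guarantee from the analysis applies only when \( d < 2^{k-2} \); on denser formulas the loop may still finish, but nothing is promised.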

This breakthrough sparked extensive follow-up work, establishing the Local Lemma as not just a probabilistic existence tool but a powerful algorithmic technique.

Chapter 2: Spectral Methods and Random Walks

In the second part of the course, we learn how to use concepts from linear algebra — eigenvalues, eigenvectors, and the Laplacian matrix — to design and analyze algorithms. Eigenvalues have traditionally been useful for analyzing random walks and designing graph partitioning algorithms. Through the lens of random walks, we will discover the elegant perspective of viewing a graph as an electrical network, using concepts such as effective resistance for graph problems. Recently, this perspective has led to surprising breakthroughs in graph sparsification and solving linear equations, enabling faster algorithms for many combinatorial problems.

Section 2.1: Random Walks on Graphs

10.1 Overview and Motivation

A random walk on a graph is one of the most natural stochastic processes one can define: start at some vertex, and at each step move to a uniformly random neighbor of the current vertex. Despite its simplicity, the random walk encodes a surprising amount of information about the graph’s structure. The long-run behavior of the walk reflects global connectivity; the rate of convergence reflects how well-knit the graph is; and hitting times connect to the electrical resistance of the graph viewed as a physical network.

Random walks are fundamental in both theory and practice. They underlie the PageRank algorithm that powered early web search, the Markov Chain Monte Carlo (MCMC) methods used throughout statistics and machine learning, algorithms for approximately counting combinatorial structures, and even sublinear-time algorithms that achieve results impossible for deterministic methods. We begin with the basic mathematical questions, state the central theorem, and then explore two applications that exemplify the technique’s power.

Given a graph \( G = (V, E) \), the four central questions about random walks are:

- Stationary distribution: Does the walk converge to a limiting distribution over the vertices, and if so, what is it?

- Mixing time: How many steps are needed before the current distribution is close (in some measure) to the stationary distribution?

- Hitting time: Starting from vertex \( s \), what is the expected number of steps to first reach vertex \( t \)?

- Cover time: How many steps until every vertex has been visited at least once?

There are two main approaches to the first two questions (stationary distribution and mixing time). The probabilistic approach uses the idea of “coupling”: run two copies of the walk from different starting points and analyze when they first meet. The spectral approach uses the eigenvalues of the transition matrix. We will develop the spectral approach in detail in subsequent chapters. The last two questions (hitting time and cover time) are best answered via the connection to electrical networks, which we study in Chapter 14.

10.2 Markov Chains and the Transition Matrix

A random walk on a directed graph is a special case of a Markov chain — a random process with the property that the next state depends only on the current state, not on the full history. Formally, letting \( X_t \) denote the state at time \( t \):

\[ \Pr(X_t = a_t \mid X_{t-1} = a_{t-1}, X_{t-2} = a_{t-2}, \ldots, X_0 = a_0) = \Pr(X_t = a_t \mid X_{t-1} = a_{t-1}). \]This “memoryless” property allows the entire dynamics to be captured by the transition matrix \( P \), where \( P_{i,j} \) is the probability of moving from state \( i \) to state \( j \). For a random walk on an undirected graph where each step moves to a uniformly random neighbor, we have \( P_{i,j} = 1/d(i) \) if \( ij \in E \) and \( P_{i,j} = 0 \) otherwise, where \( d(i) \) is the degree of vertex \( i \).

Let \( \vec{p}_t \) be the row vector giving the probability distribution over states at time \( t \). The update rule is simply \( \vec{p}_{t+1} = \vec{p}_t P \), and by induction, \( \vec{p}_{t+m} = \vec{p}_t P^m \). This means the \( t \)-step distribution is determined entirely by the initial distribution and powers of \( P \) — making the spectral decomposition of \( P \) (or a related symmetric matrix) the key analytical tool.
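The update rule is short enough to run by hand on a small graph. The sketch below uses plain Python lists; the helper names `transition_matrix`, `step`, and `evolve`, and the four-vertex example graph, are illustrative assumptions.

```python
def transition_matrix(adj):
    """P[i][j] = 1/d(i) if j is a neighbour of i, else 0 (adj: list of neighbour lists)."""
    n = len(adj)
    P = [[0.0] * n for _ in range(n)]
    for i, nbrs in enumerate(adj):
        for j in nbrs:
            P[i][j] = 1.0 / len(nbrs)
    return P

def step(p, P):
    """One update p_{t+1} = p_t P, with p treated as a row vector."""
    n = len(P)
    return [sum(p[i] * P[i][j] for i in range(n)) for j in range(n)]

def evolve(p0, P, t):
    """Apply the update t times, i.e. compute p_0 P^t."""
    p = list(p0)
    for _ in range(t):
        p = step(p, P)
    return p

# Triangle 0-1-2 with a pendant vertex 3 attached to vertex 2.
adj = [[1, 2], [0, 2], [0, 1, 3], [2]]
P = transition_matrix(adj)
p0 = [1.0, 0.0, 0.0, 0.0]   # the walk starts at vertex 0
p50 = evolve(p0, P, 50)     # distribution after 50 steps
```

Each call to `step` costs \( O(n^2) \) here; a sparse implementation would iterate over edges instead, which is what matters for large graphs.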

Two structural properties of Markov chains determine their long-run behavior. A chain is irreducible if every state can reach every other state (the underlying directed graph is strongly connected). The period of state \( i \) is \( \gcd\{t \geq 1 : P^t_{i,i} > 0\} \), and the chain is aperiodic if all states have period 1. For random walks on undirected graphs, irreducibility corresponds to connectivity, and for a connected graph aperiodicity corresponds to the graph being non-bipartite (since in a bipartite graph every return to a vertex takes an even number of steps, so all periods equal 2).

10.3 The Stationary Distribution

A stationary distribution is a probability vector \( \vec{\pi} \) satisfying \( \vec{\pi} = \vec{\pi} P \) — a fixed point of the dynamics. If the chain is at the stationary distribution, it stays there forever. The return time \( H_i = \min\{t \geq 1 : X_t = i\} \) is the first time the chain returns to state \( i \); its expected value \( h_i = E[H_i \mid X_0 = i] \) is the expected return time.

Theorem 10.1 (Fundamental Theorem of Markov Chains). For any finite, irreducible, aperiodic Markov chain:

- A stationary distribution \( \vec{\pi} \) exists.

- The distribution \( \vec{p}_t \) converges to \( \vec{\pi} \) as \( t \to \infty \), regardless of the initial distribution \( \vec{p}_0 \).

- The stationary distribution is unique.

- \( \pi_i = 1/h_i \) for all states \( i \).

The intuition for convergence is elegant: two independent copies of the chain, started from different initial distributions, will eventually “meet” at the same state. Once they meet, they are indistinguishable and from that point forward follow identical trajectories. Since the chain is irreducible and aperiodic, any two copies will meet with positive probability in every window of \( T \) steps (for some finite \( T \)), and hence they meet with probability one eventually. The relationship \( \pi_i = 1/h_i \) is intuitive: if you visit state \( i \) once every \( h_i \) steps on average, then the fraction of time spent at \( i \) is \( 1/h_i \).

For random walks on undirected graphs, the stationary distribution has a beautiful closed form.

Claim. For a random walk on a connected undirected graph \( G \) with \( m = |E| \) edges, the stationary distribution is \( \pi_v = d(v)/(2m) \).

Proof. We verify the stationarity equation \( \vec{\pi} = \vec{\pi} P \) directly using the detailed balance equations. For each edge \( uv \in E \), note that

\[ \pi_u \cdot P_{u,v} = \frac{d(u)}{2m} \cdot \frac{1}{d(u)} = \frac{1}{2m} = \frac{d(v)}{2m} \cdot \frac{1}{d(v)} = \pi_v \cdot P_{v,u}. \]This says the probability flux from \( u \) to \( v \) equals the flux from \( v \) to \( u \). Summing over all neighbors \( u \) of \( v \):

\[ \sum_{u \in N(v)} \pi_u P_{u,v} = \sum_{u \in N(v)} \frac{1}{2m} = \frac{d(v)}{2m} = \pi_v. \]Since \( \sum_v \pi_v = \sum_v d(v)/(2m) = 2m/(2m) = 1 \), this is a valid probability distribution. \( \square \)
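The claim can also be checked exactly on a small example. The following sketch uses rational arithmetic so that the equality \( \vec{\pi} P = \vec{\pi} \) is tested without rounding; the helper names and the example graph are assumptions chosen for illustration.

```python
from fractions import Fraction

def degree_distribution(adj):
    """pi_v = d(v) / (2m) for an undirected graph given as neighbour lists."""
    two_m = sum(len(nbrs) for nbrs in adj)
    return [Fraction(len(nbrs), two_m) for nbrs in adj]

def apply_walk(pi, adj):
    """Return the row vector pi P, where P[u][v] = 1/d(u) for each edge uv."""
    out = [Fraction(0)] * len(adj)
    for u, nbrs in enumerate(adj):
        for v in nbrs:
            out[v] += pi[u] * Fraction(1, len(nbrs))
    return out

# Triangle 0-1-2 with a pendant vertex 3 attached to vertex 2 (m = 4 edges).
adj = [[1, 2], [0, 2], [0, 1, 3], [2]]
pi = degree_distribution(adj)
assert apply_walk(pi, adj) == pi and sum(pi) == 1
```

Here `pi` is \( (2/8, 2/8, 3/8, 1/8) \), proportional to the degrees \( (2, 2, 3, 1) \).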

The stationary distribution assigns each vertex weight proportional to its degree: high-degree vertices are visited more often. This is natural — a vertex with more edges is more “accessible” from its neighborhood.

Equivalently, one can check stationarity using matrix notation with column vectors. Let \( W = AD^{-1} = P^{\top} \) be the transition matrix acting on column vectors (where \( A \) is the adjacency matrix and \( D \) is the diagonal degree matrix), and let \( \vec{d} = D\vec{1} \) be the vector of degrees. Then \( (AD^{-1}) \cdot \vec{d}/(2m) = A\vec{1}/(2m) = \vec{d}/(2m) \), confirming stationarity.
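Combining \( \pi_v = d(v)/(2m) \) with \( \pi_v = 1/h_v \) from the Fundamental Theorem predicts an expected return time of \( h_v = 2m/d(v) \). A small simulation makes this concrete; the function name `mean_return_time`, the sample size, and the example graph are assumptions of this sketch, and the estimates are only approximate.

```python
import random

def mean_return_time(adj, v, num_returns=20_000, seed=0):
    """Estimate h_v = E[first return time to v] by simulating excursions from v."""
    rng = random.Random(seed)
    total_steps = 0
    for _ in range(num_returns):
        current = rng.choice(adj[v])   # first step away from v
        steps = 1
        while current != v:
            current = rng.choice(adj[current])
            steps += 1
        total_steps += steps
    return total_steps / num_returns

# Triangle 0-1-2 with a pendant vertex 3 attached to vertex 2.
adj = [[1, 2], [0, 2], [0, 1, 3], [2]]
m = sum(len(nbrs) for nbrs in adj) // 2
for v in range(len(adj)):
    predicted = 2 * m / len(adj[v])
    print(v, round(mean_return_time(adj, v), 2), predicted)
```

The low-degree pendant vertex has the longest predicted return time (8 steps), matching the intuition that high-degree vertices are visited more often.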

10.4 PageRank: Stationary Distribution on the Web

The PageRank algorithm, originally developed by Larry Page and Sergey Brin, is a canonical application of stationary distributions. The World Wide Web can be modeled as a directed graph where each webpage is a vertex and a hyperlink from page \( i \) to page \( j \) is a directed arc. The question is: which pages are most “important”?

The key insight is that importance is recursive: a page is important if many important pages link to it. This motivates the following system of equations:

\[ \text{rank}(j) = \sum_{i : ij \in E} \frac{\text{rank}(i)}{d^{\text{out}}(i)}, \]where \( d^{\text{out}}(i) \) is the out-degree of page \( i \). This is precisely the stationarity equation \( \vec{\pi} = \vec{\pi} P \) for the transition matrix with \( P_{i,j} = 1/d^{\text{out}}(i) \). The PageRank values are the stationary probabilities of a random walk that, at each step, follows a uniformly random outgoing link.

In practice, the directed web graph may not be strongly connected and may have sink vertices (pages with no outgoing links). To guarantee a unique stationary distribution, the actual PageRank algorithm uses a modified random walk: with probability \( s \), follow a random outgoing link; with probability \( 1 - s \), teleport to a uniformly random page. This “damping factor” modification ensures that the underlying Markov chain is irreducible and aperiodic, guaranteeing convergence to a unique stationary distribution. The constant \( 1 - s \approx 0.15 \) represents the fraction of time a random surfer gets “bored” and starts fresh. Importantly, this modification does not significantly change the relative importance of pages while ensuring mathematical well-definedness.
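The modified walk can be turned into a short power iteration. This is a minimal sketch rather than the production algorithm: the function name `pagerank`, the stopping rule, and the treatment of sink pages (they always teleport) are assumptions, since the text above does not fix those details.

```python
def pagerank(out_links, s=0.85, tol=1e-12, max_iter=1000):
    """Power iteration for the teleporting random walk.

    out_links[i] lists the pages that page i links to. With probability s the
    surfer follows a uniformly random out-link; with probability 1 - s the
    surfer teleports to a uniformly random page. Sink pages always teleport
    (an assumption of this sketch).
    """
    n = len(out_links)
    rank = [1.0 / n] * n
    for _ in range(max_iter):
        new = [0.0] * n
        teleport = 0.0                      # total mass redistributed uniformly
        for i, links in enumerate(out_links):
            if links:
                teleport += (1 - s) * rank[i]
                share = s * rank[i] / len(links)
                for j in links:
                    new[j] += share
            else:
                teleport += rank[i]
        new = [x + teleport / n for x in new]
        change = sum(abs(a - b) for a, b in zip(new, rank))
        rank = new
        if change < tol:
            break
    return rank
```

For instance, `pagerank([[1, 2], [2], [0], [2]])` ranks page 2 highest, since three pages link to it, while page 3, which has no incoming links, receives only the teleportation mass \( (1 - s)/n \).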

The connection to the return time (the identity \( \pi_i = 1/h_i \) in the Fundamental Theorem) gives additional insight: a page with high PageRank is one that a random surfer returns to frequently, since its expected return time is \( h_i = 1/\pi_i \) steps.

10.5 Fast Perfect Matching in Regular Bipartite Graphs

One of the most elegant applications of random walks is an \( O(n \log n) \)-time algorithm for finding a perfect matching in a \( d \)-regular bipartite graph. This is remarkable because such a graph has \( dn \) edges, so the algorithm runs in sublinear time in the input size when \( d \) is large (say \( d = n \)).

The classical approach builds a perfect matching incrementally, at each stage finding an augmenting path: a path \( v_1, v_2, \ldots, v_{2\ell} \) whose edges alternate between non-matching and matching edges, with \( v_1 \) and \( v_{2\ell} \) unmatched. The path has \( \ell \) non-matching edges and \( \ell - 1 \) matching edges, so flipping the matching along it increases the matching size by one. By Hall’s theorem, a regular bipartite graph has a perfect matching, so an augmenting path exists whenever the current matching is not perfect (in fact, every edge is in some perfect matching). The standard algorithm uses BFS/DFS to find augmenting paths in \( O(m) \) time each, giving \( O(mn) = O(dn^2) \) total, which is too slow for large \( d \).
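For reference, the classical augmenting-path approach can be sketched as follows. This is the standard DFS-based baseline, not the random-walk algorithm; the function name `perfect_matching` and the input encoding (left vertices indexed `0..n-1`, each with a list of right-side neighbours) are assumptions made for the example.

```python
def perfect_matching(adj_left, n_right):
    """Augmenting-path bipartite matching.

    adj_left[u] lists the right-side neighbours of left vertex u. Returns
    match_right, where match_right[v] is the left vertex matched to right
    vertex v, or None if v is unmatched.
    """
    match_right = [None] * n_right

    def augment(u, visited):
        # Depth-first search for an alternating path from left vertex u to a
        # free right vertex; the matching is flipped as the recursion unwinds.
        for v in adj_left[u]:
            if v in visited:
                continue
            visited.add(v)
            if match_right[v] is None or augment(match_right[v], visited):
                match_right[v] = u
                return True
        return False

    # One O(m) search per left vertex, hence O(mn) overall.
    for u in range(len(adj_left)):
        augment(u, set())
    return match_right
```

On the 3-regular bipartite graph with `adj_left[u] = [u % 4, (u + 1) % 4, (u + 2) % 4]` for `u` in `range(4)`, every right vertex ends up matched. The recursion depth can reach the number of vertices, so a production version would use an explicit stack.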

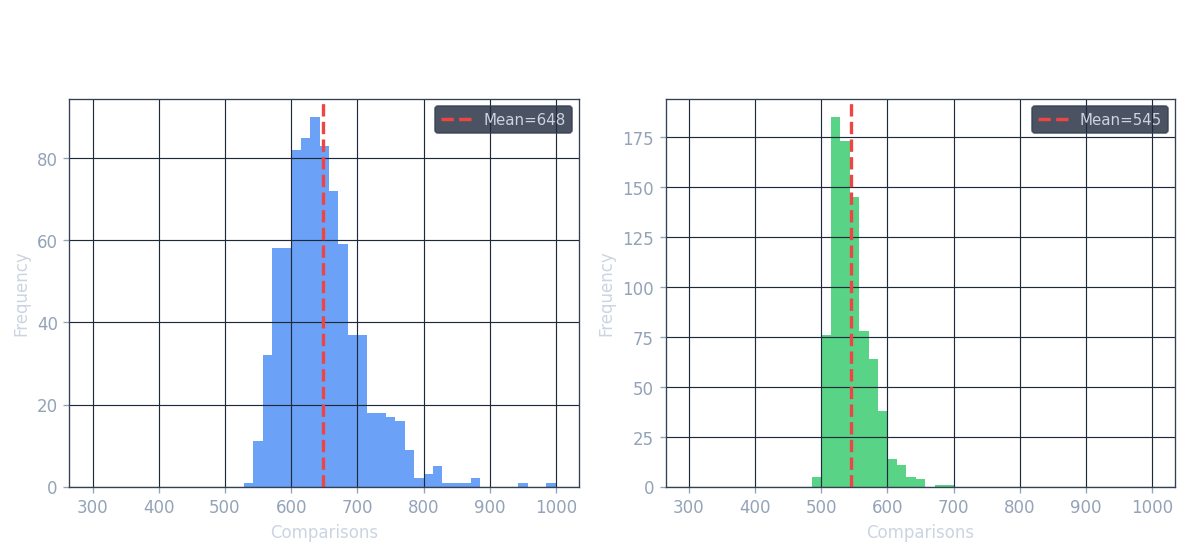

The new idea (Goel, Kapralov, Khanna, 2010) is to replace BFS/DFS with a random walk to find augmenting paths. The key insight is that in an Eulerian directed graph, a random walk finds a cycle from the source in expected time proportional to \( 1/\pi_s \), which can be computed easily.