CO 342: Introduction to Graph Theory

Estimated reading time: 1 hr 56 min

Table of contents

These notes are based on Felix Zhou’s course notes. Additional explanations, visualizations, and examples have been added for clarity.

Graph theory is one of the most beautiful branches of combinatorics. Its objects — vertices, edges, paths, and cycles — are simple enough to draw on a napkin, yet they give rise to deep theorems whose proofs require genuine ingenuity. This course, CO 342 at the University of Waterloo (Fall 2019), covers the classical core of the subject: trees, coloring, matchings, connectivity, planar graphs, and the interplay between them.

Chapter 1: Review

This chapter revisits foundational material from prerequisites (Math 249 / CO 250 level), establishing notation and the key theorems we will use throughout. Even familiar results are stated precisely, because graph theory rewards careful definitions.

Trees and Forests

Before we can analyze more intricate graph properties, we need to understand the simplest connected structures. Trees are graphs with exactly enough edges to stay connected — remove any one edge and they fall apart; add any one edge and they gain a cycle. This minimality is what makes them so fundamental.

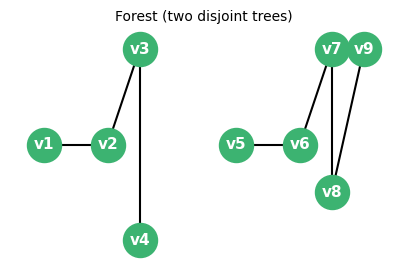

A tree is a connected acyclic graph. A forest is an acyclic graph (equivalently, a disjoint union of trees). These are among the most fundamental objects in combinatorics: they appear in algorithm design, network routing, phylogenetics, and data structures.

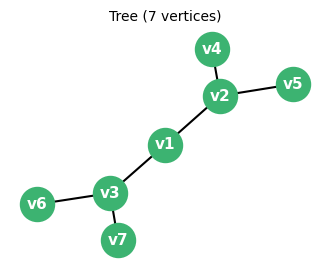

A balanced binary tree with 7 vertices — a prototypical tree structure.

One elementary but important fact about forests is that they always have “leaf” vertices — degree-1 vertices that can be removed without disconnecting anything. The proof is elegant: just follow the longest path.

One elementary but important fact about forests is that they always have “leaf” vertices — degree-1 vertices that can be removed without disconnecting anything. The proof is elegant: just follow the longest path.

The proof strategy here is extremal: pick the longest possible path, then argue that its endpoint must be a leaf. This “longest path” trick recurs throughout graph theory. The existence of leaves is what makes induction on trees so natural — one peels off leaves one at a time.

A useful equivalent characterisation: a graph on \(n\) vertices is a tree if and only if it is connected and has exactly \(n-1\) edges. Trees have exactly one face when drawn as plane graphs, reflecting the formula \(v - e + f = 2\) applied to forests.

A forest consisting of two disconnected trees — the union of trees with no extra edges.

Graph Colorings

Coloring problems are among the oldest questions in graph theory, tracing back to the four-color problem for maps posed in 1852. The central question is: how few colors suffice to color the vertices of a graph so that no two adjacent vertices share a color? This models scheduling conflicts, register allocation in compilers, and frequency assignment in wireless networks.

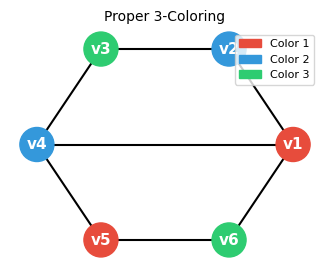

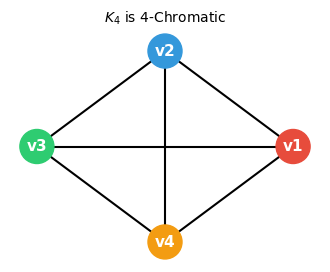

A proper \(k\)-coloring of a graph \(G\) assigns one of \(k\) colors to each vertex such that adjacent vertices receive different colors. The chromatic number \(\chi(G)\) is the minimum \(k\) for which a proper \(k\)-coloring exists.

The chromatic number captures a fundamental tension: dense local structure (large cliques, odd cycles) forces many colors, while sparse or bipartite structure allows few. It is generally NP-hard to compute, but upper bounds from simple greedy algorithms are both easy to prove and quite useful.

The simplest upper bound on \(\chi(G)\) comes from a greedy argument: color vertices one at a time, assigning each vertex the smallest color not used by its already-colored neighbors.

The proof uses a “greedy extension” strategy: the inductive hypothesis provides a coloring of a smaller graph, and we verify that the removed vertex can always be reintegrated. This technique — remove one element, induct, reattach — is ubiquitous in graph theory.

The bound \(\Delta + 1\) is tight for complete graphs \(K_n\) (where \(\Delta = n-1\) and \(\chi = n\)) and for odd cycles \(C_{2k+1}\) (where \(\Delta = 2\) and \(\chi = 3\)). Brooks’ theorem (beyond the scope of this course) strengthens the bound: if \(G\) is connected, not a complete graph, and not an odd cycle, then \(\chi(G) \leq \Delta\).

A proper 3-coloring: no two adjacent vertices share a color.

The complete graph \(K_4\) requires exactly 4 colors — it is 4-chromatic.

The celebrated Four Color Theorem (Appel-Haken, 1976) states that every planar graph is 4-colorable. This is one of the deepest results in combinatorics and was the first major theorem proved with computer assistance.

Matchings

Matchings are a central topic in combinatorial optimization. A matching captures the idea of pairing up elements of a set subject to compatibility constraints — scheduling workers to shifts, assigning residents to hospitals, or routing packets in a network. The goal is typically to find the largest or most efficient such pairing.

A matching is a collection of vertex-disjoint edges; each vertex can be “used” at most once. This models one-to-one assignments: each worker gets at most one shift, each resident gets at most one hospital.

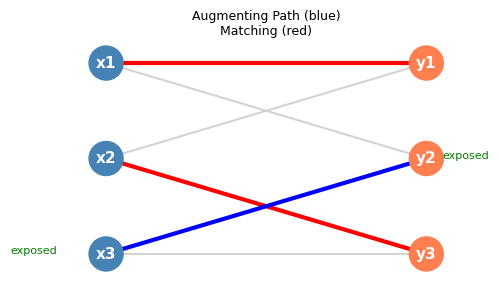

Whether a vertex is “covered” by a matching is crucial for characterizing optimal matchings; exposed vertices are precisely those that an augmentation can eliminate.

A perfect matching is the most desirable outcome: everyone gets paired. The parity restriction is a simple but fundamental obstruction — odd-order graphs can never be perfectly matched.

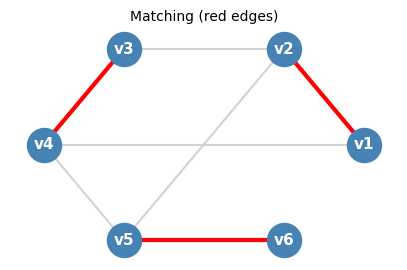

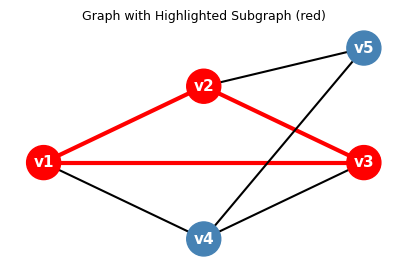

Red edges form a matching: each vertex is incident to at most one red edge.

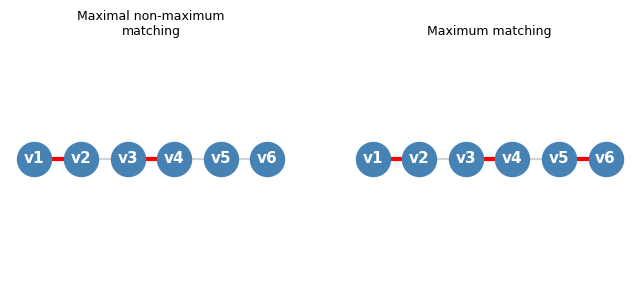

The distinction between maximal and maximum matchings deserves emphasis. A matching is maximal if no edge can be added to it; it is maximum if no matching has more edges. Every maximum matching is maximal, but the converse fails.

Left: a maximal matching of size 2 (cannot extend, but not maximum). Right: a maximum matching of size 3 on the same path graph \(P_6\).

1.3.1 Hall’s Theorem

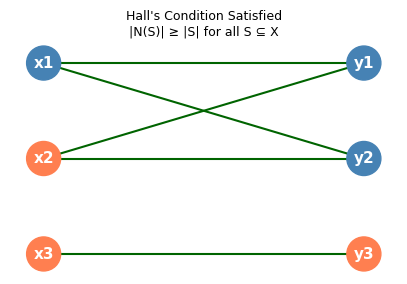

With the definitions in place, the central question is: when does a bipartite graph have a matching that covers one entire side? Clearly a necessary condition is that every group of vertices on the left side must collectively have “enough” neighbors on the right. Remarkably, this necessary condition is also sufficient.

The most important theorem in matching theory is Hall’s Marriage Theorem (1935), which gives a complete combinatorial characterization of when a bipartite graph has a matching saturating one side. Its name comes from the following metaphor: we have a set \(X\) of men and a set \(Y\) of women, with edges indicating compatibility. When can every man be matched to a compatible woman?

To state Hall’s theorem, we need the notion of neighborhood — the “reach” of a set of vertices.

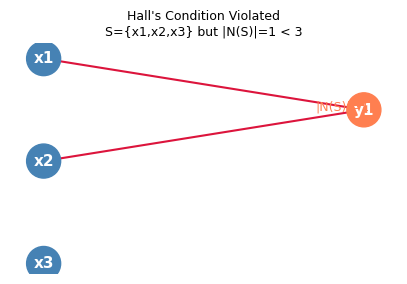

The neighborhood measures how many “slots” on the right side are accessible from a set \(S\) on the left. If \(|\Gamma(S)| < |S|\) for some \(S\), then \(S\) has too few neighbors to be matched — a combinatorial obstruction.

Hall’s Condition is a necessary condition for a matching saturating \(X\): if any \(k\) vertices on the left have fewer than \(k\) neighbors on the right, they cannot all be matched. Remarkably, it is also sufficient.

The following key theorem establishes that Hall’s Condition is not merely necessary but sufficient — a local neighborhood condition completely controls a global matching property.

(\(\Leftarrow\)) By induction on \(|X|\). For \(|X|=1\), Hall’s Condition gives at least one neighbor to match to.

Case I (Tight set exists): Pick any \(x \in X\) and any neighbor \(y \in Y\). If Hall’s Condition holds in the subgraph \(G - x - y\) (with parts \(X-x\) and \(Y-y\)), the inductive hypothesis gives a matching saturating \(X-x\), which combined with the edge \(xy\) saturates all of \(X\).

Case II (No tight set): If Hall’s Condition fails for \(G - x - y\), there is \(S_0 \subseteq X - x\) with \(|\Gamma_{G-x-y}(S_0)| < |S_0|\). Since Hall’s Condition holds for \(G\) and removing \(y\) reduces the neighborhood of \(S_0\) by at most 1, we get \(|\Gamma_G(S_0)| = |S_0|\). We find disjoint matchings: one saturating \(S_0\) into \(\Gamma_G(S_0)\) (by Hall’s Condition applied to \(S_0\)), and one saturating \(X \setminus S_0\) in the subgraph avoiding \(S_0\) and \(\Gamma_G(S_0)\) (Hall’s Condition verified via inclusion-exclusion). Their union saturates \(X\). \(\square\)

The proof strategy is inductive, splitting into cases based on whether a “tight” set (one where equality \(|\Gamma(S_0)| = |S_0|\) holds) exists. Tight sets are natural bottlenecks: they must be matched entirely within their neighborhood, and this constraint propagates through the induction cleanly.

Hall’s Condition satisfied: every subset \(S \subseteq X\) has \(|\Gamma(S)| \geq |S|\). A perfect matching exists.

Hall’s Condition violated: \(S = \{x_1,x_2,x_3\}\) but \(|N(S)| = 1 < 3\). No matching saturating \(X\) exists.

Hall’s theorem immediately yields a satisfying consequence for symmetric, regular graphs.

The proof uses a double-counting argument on edges — a technique that will recur in Euler’s formula and König’s theorem. Regularity enforces uniform Hall’s Condition almost for free.

1.3.2 Berge’s Theorem

Hall’s theorem tells us when a perfect matching exists. Berge’s theorem tells us when a matching is as large as possible — and provides an algorithmic way to check. The key concept is an augmenting path: a path that starts and ends at unmatched vertices, which we can “flip” to gain one more matching edge.

Berge’s theorem characterizes maximum matchings via augmenting paths — alternating paths that start and end at exposed vertices, and can be used to increase the matching size.

Alternating paths weave in and out of the current matching; they are the “modification paths” along which we can locally restructure the matching.

An augmenting path begins and ends outside the matching, so swapping matched and unmatched edges along it strictly increases the matching size by one. The existence of such paths is exactly the obstruction to optimality.

Current matching in red; augmenting path in blue connecting two exposed vertices. Swapping along the blue path increases the matching by one.

Animated: the augmenting path algorithm on a bipartite graph, step by step.

Berge’s 1957 theorem establishes the elegant equivalence between the absence of augmenting paths and the optimality of the matching.

This theorem provides the conceptual basis for all matching algorithms: to find a maximum matching, repeatedly search for augmenting paths and augment until none remain. It also shifts the computational question from “is this matching maximum?” to “does an augmenting path exist?” — the latter being answerable by graph search.

Vertex Covers

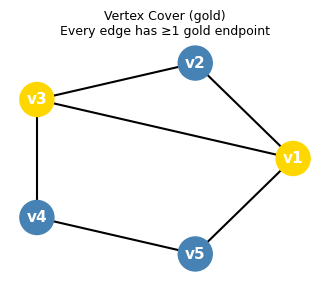

Having understood matchings — sets of edges with no shared vertices — we now turn to their natural “dual” structure. A vertex cover is a set of vertices that “hits” every edge. The interplay between the two is one of the most beautiful min-max dualities in combinatorics.

Intuitively, a vertex cover “guards” every edge by selecting a sentinel at one endpoint. Every complement of an independent set is a vertex cover — the two concepts are dual to each other.

Gold vertices form a vertex cover: every edge touches at least one gold vertex.

Matchings and vertex covers are dual to each other in the following fundamental sense: a large matching forces a large cover, and the two sizes are always within a factor of two. The following inequality is immediate:

The factor-of-two gap between \(\nu\) and \(\tau\) is tight for general graphs (achieved by \(K_3\)), but for bipartite graphs it collapses to perfect equality. This is König’s theorem.

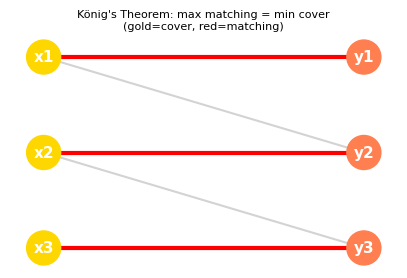

König’s Theorem

For bipartite graphs, the inequality \(\nu \leq \tau\) becomes an equality — a remarkable fact with no analogue for general graphs.

König’s theorem is the cornerstone of bipartite matching theory, establishing a perfect min-max duality between matchings and covers that will be echoed throughout the course.

We apply Hall’s Theorem to find matchings: for any \(Q \subseteq S\), if \(Q\) had fewer than \(|Q|\) neighbors in \(B \setminus T\), we could replace \(Q\) in the cover by \(\Gamma_G(Q) \setminus T\) (a smaller set covering the same edges), contradicting minimality. So Hall’s Condition holds, and we find a matching saturating \(S\) into \(B \setminus T\). Symmetrically, we find a matching saturating \(T\) into \(A \setminus S\). These two matchings are disjoint and together have \(|S| + |T| = \tau(G)\) edges, so \(\nu(G) \geq \tau(G)\). Combined with \(\nu(G) \leq \tau(G)\), we conclude. \(\square\)

The proof is a beautiful example of using one theorem (Hall’s) to prove another: the minimum cover structure forces Hall’s Condition on the two sides of the bipartition, which supplies the matchings that witness the lower bound on \(\nu\).

Gold nodes form a minimum vertex cover; red edges form a maximum matching. König’s theorem guarantees their sizes are equal in bipartite graphs.

König’s theorem fails for non-bipartite graphs: the triangle \(K_3\) has \(\nu = 1\) but \(\tau = 2\). The obstruction is odd cycles, which cannot be perfectly matched.

Chapter 2: Introduction

Chapter 1 used informal graph-theoretic language, relying on intuition. Chapter 2 lays the rigorous foundations — the axiomatic definition of a graph — that make it possible to state theorems precisely and handle degenerate cases (loops, parallel edges, disconnected graphs) without ambiguity. This precision pays dividends immediately in the theory of planar graphs and their duals.

Formal Definitions

Graph theory requires a careful general definition of “graph” that handles loops and parallel edges. Felix Zhou’s notes follow the Diestel convention:

This formal triple-based definition is more general than the familiar “set of vertices and set of edges” description from undergraduate courses. The advantage appears in dual graph constructions: if \(G = (V, E, i)\) is a plane graph with faces \(F\), its dual \(G^* = (F, E, j)\) uses the same edge set \(E\) with a new incidence function \(j\), naturally handled by this framework.

Example 2.1.1 (Dual Graph). If \(G = (V, E, i)\) is a planar graph with a fixed planar embedding with face set \(F\), then \(H = (F, E, j)\) is the dual graph of \(G\), where \(j\) is the incidence function determined by which faces are adjacent to each edge. The general triple definition makes the dual construction natural, since the same edge set \(E\) is reused with a different incidence function.

The dual construction exemplifies why the triple \((V, E, i)\) formulation is preferable to the pair \((V, E)\): the same edge set plays different roles in \(G\) and \(G^*\).

Definition 2.1.2 (Adjacent). Vertices \(u, v \in V\) are adjacent in \(G\) if either (1) \(i(u,e) = i(v,e) = 1\) for some \(e \in E\) (when \(u \neq v\)), or (2) \(i(u,e) = 2\) for some \(e \in E\) (a loop at \(u\)). An edge \(e\) and a vertex \(v\) are incident if \(i(v,e) \neq 0\).

Adjacency and incidence are the elementary relationships between vertices and edges; all higher graph structure is built from them.

Definition 2.1.4 (Degree). The degree of vertex \(v\) is \(\deg(v) = \sum_{e \in E} i(v, e)\). Loops contribute 2 to the degree.

Definition 2.1.5 (Ends). The ends of an edge \(e\) are the vertices \(u, v\) with \(i(u,e) > 0\) and \(i(v,e) > 0\).

The degree measures how many edge-ends attach to a vertex — the fundamental quantity in handshaking arguments and in characterizing regular graphs.

The Handshaking Lemma states that \(\sum_{v \in V} \deg(v) = 2|E|\), since each edge contributes exactly 2 to the total degree sum.

Definition 2.1.6 (Walks and Paths). A walk in \(G\) is an alternating sequence \(v_0, e_1, v_1, e_2, \ldots, e_k, v_k\) of vertices and edges where each \(e_i\) is incident to \(v_{i-1}\) and \(v_i\). A path is a walk with all vertices distinct. A circuit (or cycle) is a closed walk \(v_0, e_1, \ldots, e_k, v_0\) with \(k \geq 1\) and all vertices \(v_0, \ldots, v_{k-1}\) distinct. A forest is a graph with no circuit. An isolated vertex has degree 0; a leaf has degree 1.

These basic notions — walks, paths, circuits — are the building blocks for all connectivity arguments. Paths are the most restrictive: no repeated vertices. Circuits are the minimal “loops” in a graph; their absence defines forests and trees.

Definition 2.1.7 (Connected). Vertices \(u, v \in V(G)\) are connected in \(G\) if there is a walk from \(u\) to \(v\). A graph \(G\) is connected if \(V(G) \neq \emptyset\) and every pair of vertices is connected in \(G\).

Proposition 2.1.2. Connectedness is an equivalence relation on \(V(G)\). Its equivalence classes are the components of \(G\) — they are maximal connected induced subgraphs.

Components partition the vertex set into maximal connected pieces; the entire theory of graph decomposition rests on this elementary observation.

To study local structure, we need the notion of a subgraph — a graph contained within another.

Induced subgraphs are the “natural” restriction to a vertex subset — they inherit all relationships between chosen vertices. They arise constantly when studying connectivity: removing a vertex \(v\) yields the induced subgraph \(G[V \setminus \{v\}]\).

Red vertices and edges form an induced subgraph: all edges between red vertices are included.

Graph Families and Basic Notions

With the formal definition in place, we can catalogue the most important named graph families. These serve as both examples and extremal cases throughout the course.

- Complete graph \(K_n\): all \(\binom{n}{2}\) possible edges on \(n\) vertices; \(\chi(K_n) = n\).

- Cycle \(C_n\): a single circuit on \(n\) vertices.

- Path \(P_n\): a path on \(n\) vertices.

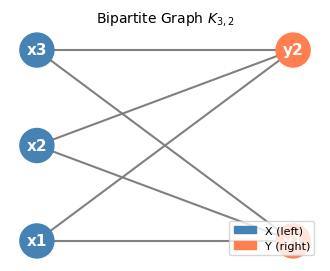

- Bipartite graph: vertex set partitioned into \(X \cup Y\) with all edges between \(X\) and \(Y\); equivalent to being 2-colorable.

- Complete bipartite graph \(K_{m,n}\): all \(mn\) edges between parts of size \(m\) and \(n\).

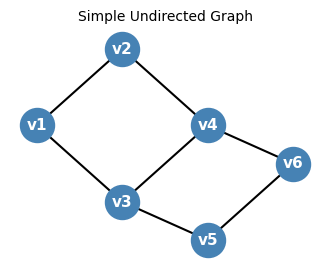

A simple undirected graph on 6 labeled vertices: the fundamental combinatorial object.

The complete bipartite graph \(K_{3,2}\): every vertex on the left is adjacent to every vertex on the right.

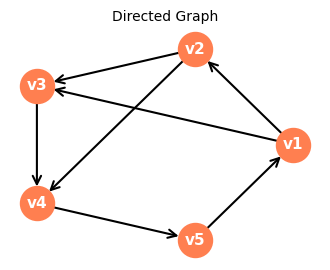

A directed graph (digraph): edges have orientation, represented by arrows.

Connectivity is the most basic structural property of a graph: does it hang together as a single piece, or does it split into isolated islands? (See Definition 2.1.7 above for the formal statement.)

Components partition the vertex set into maximal connected pieces. Much of graph theory — Euler’s formula, Menger’s theorem, the block-cut tree — is stated for connected graphs, and the general case reduces to component-by-component analysis.

A key fact: a bipartite graph is characterized by having no odd cycles. The BFS 2-coloring check assigns alternating colors during a breadth-first traversal; a contradiction (adjacent vertices of the same color) occurs precisely when an odd cycle is found.

BFS coloring animation: vertices are colored alternately as the BFS frontier expands. If a conflict arises, the graph is not bipartite.

Chapter 3: Connectedness

Moving from local structure to global robustness: Chapter 3 asks not merely whether a graph is connected, but how strongly it is connected. Connectivity measures how “robustly” a graph is connected: how many vertices or edges must be removed to disconnect it. This is crucial in network reliability, VLSI design, and algorithm complexity. The chapter culminates in Menger’s theorem, a min-max result that will reappear in a different guise as the max-flow min-cut theorem in network flows.

Connectivity and Cut Vertices

Before defining connectivity, we set up the foundational vocabulary of paths, separators, and graph operations.

Definition 3.1.1 (Components). A component of \(G\) is an induced subgraph \(G[X]\) where \(X\) is an equivalence class under the connectedness relation. Components are maximal connected subgraphs.

The component decomposition is the coarsest structural fact about a graph: it partitions vertices into maximal islands that have no edges between them. Once we understand components, connectivity theory asks a sharper question — not just whether two vertices are connected, but how robustly so.

Definition 3.1.2 (Graph Union). Given graphs \(G_1 = (V_1, E_1, i_1)\) and \(G_2 = (V_2, E_2, i_2)\) that agree on their common vertices and edges, their union \(G_1 \cup G_2\) has vertex set \(V_1 \cup V_2\), edge set \(E_1 \cup E_2\), and incidence determined by either \(G_1\) or \(G_2\). The direct sum \(G_1 \oplus G_2\) is their union when \((V_1 \cup E_1) \cap (V_2 \cup E_2) = \emptyset\).

Graph union formalizes what it means to “paste” two graphs together at shared structure. The direct sum is the cleaner notion: the two graphs share nothing, so there is no ambiguity about edges between them. This becomes essential when we state that a graph is the disjoint union of its components.

Proposition 3.1.2. Every graph is uniquely the direct sum of its connected components (defining the empty graph as disconnected).

The direct sum is simply the disjoint union — the formal counterpart of the intuitive picture of a graph falling into separate pieces. This unique decomposition means we can almost always reduce proofs about general graphs to proofs about connected graphs, handling each component independently.

Definition 3.1.4 (Subtraction). For \(X \subseteq V \cup E\), write \(G - X\) for the subgraph of \(G\) with vertex set \(V \setminus X\) and edge set \(E \setminus X'\), where \(X'\) is the set of edges in \(X\) or incident with a vertex in \(X\).

Subtraction is the key operation for studying robustness: deleting a set \(X\) of vertices or edges and asking whether the remainder is still connected is exactly how we measure connectivity. Notice that deleting a vertex automatically removes all its incident edges — we do not leave “dangling” edge-ends behind.

Definition 3.1.5 (Path). A path is a graph whose vertices and edges can be written as a sequence \(v_0, e_1, v_1, \ldots, e_k, v_k\) with all vertices distinct. The ends of a path are its degree-1 vertices (or the sole vertex if there are no edges). A circuit is a graph forming a closed cycle with all vertices distinct. An \(AB\)-path (for disjoint vertex sets \(A, B\)) is a path with one end in \(A\), one end in \(B\), and all internal vertices in \(V(G) \setminus (A \cup B)\). An \(ab\)-path is an \(\{a\}\{b\}\)-path.

The \(AB\)-path formulation is crucial for Menger’s theorem: it captures the idea of a path “traveling from one side to the other” without touching either side internally, exactly what is needed for the combinatorial counting argument to work.

Definition 3.1.10 (Separates). A set \(X \subseteq V \cup E\) separates \(A\) from \(B\) in \(G\) if there is no \(AB\)-path in \(G - X\).

Definition 3.1.11 (Cut Edge / Bridge). An edge \(e\) is a cut edge (or bridge) if there exist vertices \(u, v\) that are not separated by \(\emptyset\) but are separated by \(\{e\}\).

A separator consisting of a single edge — a bridge — represents the most extreme form of fragility: one edge stands between connectivity and disconnection. Think of a bridge as a literal bridge over a river: if it collapses, the two banks have no way to communicate. The definition parallels the cut vertex definition above, simply replacing the vertex by an edge in the separator.

Bridges are the most fragile edges in a graph: they single-handedly hold together two otherwise disconnected regions. Networks relying on bridge edges are highly vulnerable — losing that one edge disrupts all communication. The block-cut tree, defined shortly, organizes the bridge structure into a clean hierarchy.

To quantify robustness, we define \(k\)-connectivity: a graph is \(k\)-connected if no set of fewer than \(k\) vertices can disconnect it.

Definition 3.1.12 (\(k\)-Connectivity). A graph \(G\) is \(k\)-connected (for \(k \geq 1\)) if \(|V(G)| > k\) and there is no set \(X \subseteq V(G)\) with \(|X| < k\) such that \(G - X\) is disconnected. The connectivity \(\kappa(G)\) is the largest \(k\) for which \(G\) is \(k\)-connected.

Higher connectivity means greater fault tolerance: a 3-connected network remains functional after any two nodes fail. The connectivity \(\kappa(G)\) is a single number that summarizes this structural robustness.

Cut vertices are the individual points of failure — vertices whose removal breaks the graph apart. They are the local manifestation of low connectivity.

Definition 3.1.13 (Cut Vertex). A vertex \(v\) is a cut vertex of \(G\) if there exist vertices \(a, b\) not separated by \(\emptyset\) but separated by \(\{v\}\). Equivalently, \(G - v\) has more components than \(G\).

Key equivalences: being 1-connected is the same as being connected (and not a singleton). Being 2-connected means connected with no cut vertex (with the exception of the single-edge graph \(K_2\)). Cut vertices are precisely the bottlenecks through which all traffic between certain parts of the graph must pass.

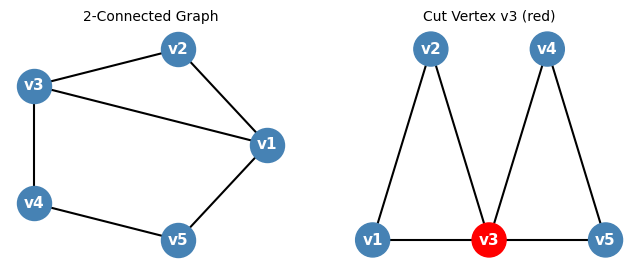

Left: a 2-connected graph (no cut vertex). Right: vertex \(v_3\) in red is a cut vertex — removing it disconnects the graph into two triangles.

Rather than characterizing 2-connectivity by what is absent (cut vertices), the following theorem characterizes it constructively — showing how 2-connected graphs can be built up step by step.

Think of an ear as a “bridge addition”: rather than attaching a single edge, we attach a whole path whose two endpoints already exist in the graph but whose interior is entirely new. Each ear adds new internal vertices and creates new cycles, incrementally increasing the connectivity and the number of independent paths.

Definition 3.2.1 (Adding a Path). A graph \(G'\) arises from \(G\) by adding a path if there is a non-trivial path \(P\) such that \(G' = G \cup P\) and \((E(P) \cup V(P)) \cap (E(G) \cup V(G))\) is exactly the set of the two ends of \(P\). The path \(P\) is called an ear.

This constructive characterization is very useful: it shows that 2-connected graphs have a “layered” structure that can be built incrementally. Ear decompositions are also the basis for efficient algorithms that find 2-connected components in linear time.

The Block Graph

With cut vertices identified, we can decompose any graph into its irreducible 2-connected pieces. These pieces — the blocks — are joined together at cut vertices, and the global structure is captured by a tree.

Blocks are the “atoms” of connectivity: within a block, there are no bottleneck vertices. Bridges — single edges that are blocks — are the most vulnerable connections in the graph.

The block-cut tree is a beautiful structural decomposition: it reveals exactly how the 2-connected pieces are joined together at cut vertices, like beads on a string. That the block-cut tree is always a tree (never has cycles) reflects the fact that blocks can only share vertices at cut vertices — never at interior vertices — so the graph of their “interactions” is acyclic.

The key structural fact is that blocks partition the edges, and overlap only at cut vertices.

The proof argument is a “union of 2-connected graphs sharing two vertices is 2-connected” fact. This tells us blocks genuinely partition the edge set: every edge belongs to exactly one block.

Strong Orientation

The block-cut tree and ear decompositions characterize the undirected structure of a 2-connected graph. Here we add a direction to each edge — asking: when can we orient every edge of \(G\) so that the resulting directed graph is strongly connected, meaning there is a directed path from any vertex to any other vertex? This question arises naturally in traffic engineering (one-way roads), network routing, and tournament scheduling.

An orientation of a graph \(G\) is an assignment of a direction to each edge: each edge \(\{u,v\}\) becomes either the arc \(u \to v\) or \(v \to u\). A directed \(u\)-\(v\) path is a sequence \(u = v_0, v_1, \ldots, v_k = v\) where each consecutive pair forms a directed arc \(v_i \to v_{i+1}\). A strong orientation is one in which there exists a directed \(u\)-\(v\) path for every ordered pair of vertices \((u, v)\). In a strongly oriented graph, you can navigate from any location to any other location — even though every road is one-way.

The fundamental obstacle to a strong orientation is immediately apparent: if \(G\) has a cut edge (bridge), then any orientation of that edge makes traversal in one direction impossible. Removing a bridge disconnects the graph, so the two components cannot communicate after one direction is chosen. This suggests that the right condition is 2-edge-connectivity.

The forward direction is the observation above: a bridge forces a bottleneck that cannot be navigated in both directions. The reverse direction says that the absence of bridges is sufficient — a remarkable fact that says 2-edge-connectivity is already enough. The proof of sufficiency uses the block structure and the ear decomposition to construct the orientation inductively.

We first establish the key building block: 2-connected graphs are strongly orientable.

Suppose \(G_i\) has been given a strong orientation. To form \(G_{i+1}\), we attach an ear \(P = w_1, w_2, \ldots, w_m\) with \(w_1, w_m \in V(G_i)\) but all internal vertices new. Keep the existing orientation of \(G_i\), and direct the ear from \(w_1\) to \(w_m\) (i.e., orient each edge \(w_j \to w_{j+1}\)). We claim this is a strong orientation of \(G_{i+1}\).

Given any two vertices \(x, y \in V(G_{i+1})\), we need a directed \(x\)-\(y\) path. There are five cases: (i) both in \(G_i\) — use the strong orientation of \(G_i\); (ii) \(x \in G_i\), \(y\) on the ear — use a directed path from \(x\) to \(w_1\) in \(G_i\), then follow the ear to \(y\); (iii) \(x\) on the ear, \(y \in G_i\) — follow the ear to \(w_m\), then use a directed path from \(w_m\) to \(y\) in \(G_i\); (iv) both on the ear with \(x\) before \(y\) — traverse the ear directly; (v) both on the ear with \(x\) after \(y\) — follow the ear from \(x\) to \(w_m\), use the strong orientation of \(G_i\) to reach \(w_1\), then follow the ear to \(y\). In all cases a directed path exists, so \(G_{i+1}\) is strongly oriented. \(\square\)

Notice the proof is constructive: the ear decomposition algorithm simultaneously finds the decomposition and builds the strong orientation. The orientation direction of each ear is arbitrary; flipping it would give a different but equally valid strong orientation.

Now we extend to the general case using the block-cut tree. The key observation is that blocks are the “atoms” of orientation — a strong orientation of the whole graph is equivalent to a strong orientation of each block independently.

(\(\Leftarrow\)) Suppose each block has a strong orientation. Orient \(G\) by combining these block-level orientations. Given \(x, y \in V(G)\), consider the path in the block-cut tree from the block \(B_1\) containing \(x\) to the block \(B_k\) containing \(y\): say it passes through blocks \(B_1, B_2, \ldots, B_k\) and cut vertices \(v_1, v_2, \ldots, v_{k-1}\) with \(v_i \in B_i \cap B_{i+1}\). Find a directed path from \(x\) to \(v_1\) in \(B_1\), from \(v_1\) to \(v_2\) in \(B_2\), and so on, ending with a directed path from \(v_{k-1}\) to \(y\) in \(B_k\). Concatenating these gives a directed \(x\)-\(y\) path in \(G\). \(\square\)

Combining both theorems yields Robbins’ theorem: since a block is either an isolated vertex (no orientation needed), a bridge (which has no strong orientation), or a 2-connected graph (which does have a strong orientation), a connected graph is strongly orientable if and only if none of its blocks are bridges — which is precisely the condition of 2-edge-connectivity.

This characterization is clean and satisfying. The proof of the ear decomposition theorem (that 2-connected graphs have ear decompositions) is the hidden engine: it converts the global property of 2-connectivity into a constructive inductive structure that can carry the orientation forward. The ear decomposition is the right notion of “how 2-connected graphs are built,” and this theorem is one of its first payoffs.

Contractions and Tutte’s Theorem

Edge contraction is the operation of “collapsing” an edge into a single vertex, merging the two endpoints. This simple operation is the key to inductive proofs about 3-connected graphs and to the theory of graph minors, which culminates in one of the deepest theorems in mathematics (Robertson-Seymour, 2004).

Contraction is the dual of subdivision. Together with deletion, it generates the minor relation, which underlies some of the deepest theorems in graph theory (Robertson-Seymour). After contracting \(e\), the new vertex \(x_{uv}\) represents the “merged” pair \(\{u,v\}\); the neighbors of \(x_{uv}\) are exactly all former neighbors of either endpoint.

Lemma 3.4.1. If \(G\) is \(k\)-connected and \(X \subseteq V(G)\) with \(|X| = k\), then each vertex in \(X\) has a neighbor in each component of \(G - X\).

Proof. Suppose some \(x \in X\) has no neighbor in some component \(C\) of \(G - X\). Then \(X \setminus \{x\}\) separates \(C\) from the rest, giving a separator of size \(k-1\), contradicting \(k\)-connectivity. \(\square\)

This lemma says that a minimum separator does not “waste” any of its vertices — every member of a minimum cut genuinely participates in blocking some connection. It is used in Tutte’s proof to derive a contradiction: if every edge of a 3-connected graph created a problem when contracted, one could extract an impossibly small separator.

Proposition 3.4.2. If \(e\) is an edge of \(G\) with ends \(u, v\) and \(X \subseteq V \cup E\) contains none of \(e, u, v\), then \((G - X)/e = (G/e) - X\).

Notice that this commutativity requires the sets acted on to be disjoint: the deletion set \(X\) must not touch the contracted edge or its endpoints. When this disjointness holds, the two operations commute — we can delete first and contract second, or vice versa, with identical results.

This commutativity of deletion and contraction is used repeatedly in inductive arguments — it allows us to freely switch the order of these operations when they act on disjoint parts of the graph.

The following result shows that 2-connectivity is “stable” under edge operations: we can always contract or delete without completely destroying connectivity.

Proposition 3.4.3. If \(G\) is 2-connected, \(e \in E(G)\), and \(|V(G)| > 3\), then at least one of \(G/e\) or \(G - e\) is 2-connected.

This proposition enables inductive proofs on 2-connected graphs: either \(G/e\) or \(G - e\) is a smaller 2-connected graph to which we may apply the inductive hypothesis. The case \(|V(G)| > 3\) is necessary: the triangle \(K_3\) is 2-connected, but deleting any edge gives a path (not 2-connected) and contracting any edge gives \(K_2\) (not 2-connected either). Beyond four vertices, however, at least one operation always preserves 2-connectivity, giving the inductive step we need.

Proposition 3.4.4. Any graph with a degree-2 vertex is not 3-connected.

Proof. If \(v\) has degree 2, removing its two neighbors disconnects \(v\) from the rest of the graph. \(\square\)

A basic degree condition on connectivity: high connectivity forces high minimum degree, since a vertex of low degree can be disconnected by deleting its small neighborhood.

Proposition 3.4.5. Every \(k\)-connected graph has minimum vertex degree at least \(k\).

This provides a simple necessary condition that rules out many graphs from being highly connected at a glance.

Example 3.4.6. Consider \(K_{3,3}\): removing any vertex lowers the degrees of all adjacent vertices. More generally, if every vertex of a graph \(G\) has degree 3, then \(G - e\) is not 3-connected for any edge \(e\), since \(G - e\) has degree-2 vertices.

The \(K_{3,3}\) example highlights an important subtlety: deletion can immediately violate 3-connectivity even when the original graph is highly structured. It also shows that for cubic (3-regular) graphs, the only available operation to preserve 3-connectivity is contraction — deletion always creates degree-2 vertices. This makes Tutte’s theorem — which guarantees a contractible edge in every 3-connected graph — especially powerful for the cubic setting.

Example 3.4.7. Consider the 8-wheel (a wheel graph \(W_8\)): its spoke edges can be neither deleted nor contracted while maintaining 3-connectedness.

The 8-wheel example is instructive precisely because it shows that not every edge of a 3-connected graph can be contracted — some edges are “essential” in both directions. The power of Tutte’s theorem is that it guarantees at least one contractible edge always exists, even if most edges are essential.

Proposition 3.4.8. An \(n\)-wheel for \(n \geq 3\) is 3-connected. However, its spoke edges cannot be deleted nor contracted while maintaining 3-connectedness.

Wheels are the extremal examples showing Tutte’s Wheel Theorem is tight: every edge of a wheel is “essential” for 3-connectivity, yet the theorem guarantees at least one contractible edge in any 3-connected graph with more than 4 vertices.

The analogous statement for 3-connectivity is Tutte’s Wheel Theorem, one of the most elegant results in structural graph theory:

Lemma 3.4.10. If Tutte’s Theorem does not hold, then for every edge \(e\) with distinct ends \(x, y\), there is a vertex \(z \notin \{x,y\}\) such that \(G - \{x,y,z\}\) is disconnected and each of \(x, y, z\) has a neighbor in each component of \(G - \{x,y,z\}\).

It is worth pausing to appreciate what this lemma asserts: in a hypothetical counterexample to Tutte’s theorem, every single edge of the graph “witnesses” a 3-vertex separator. The vertices \(x, y\) are the edge’s endpoints and \(z\) is a third vertex that completes the separator — and crucially, all three members of the separator must have neighbors in every component of what remains. Lemma 3.4.1 (proved earlier) provides exactly this neighbor condition for minimum separators. The proof then feeds this highly constrained structure into a component-size argument, eventually reaching the conclusion that \(G\) must be a wheel.

This lemma is the key technical tool in the proof of Tutte’s Wheel Theorem: it characterizes what a minimal counterexample must look like, then derives a contradiction by finding a smaller component. Notice that every edge \(e\) produces a triple \(\{x, y, z\}\) that acts as a separator — the 3-connectivity of \(G/e\) failing is encoded in the existence of this third vertex \(z\). The proof then shows that the component structure under all these triples simultaneously is too constrained to be consistent, forcing the graph to be a wheel.

This theorem implies that every 3-connected graph can be built from a wheel \(W_k\) by successively splitting vertices or adding edges — a precise analogue of the ear decomposition for 2-connectivity. The theorem is the inductive engine powering the proof of Kuratowski’s theorem in Chapter 4.

Menger’s Theorem

Having characterized connectivity structurally (block-cut tree, ear decompositions), we now characterize it quantitatively. Menger’s theorem (1927) is the cornerstone of connectivity theory. It establishes a min-max duality between paths and separators, deeply related to the max-flow min-cut theorem in network flows.

The central objects in Menger’s theorem are internally disjoint paths: multiple routes between two vertices that avoid each other’s intermediate nodes.

Multiple internally disjoint paths represent independent “communication channels” between \(a\) and \(b\). The more such paths exist, the more robustly \(a\) and \(b\) are connected — removing any single vertex cannot block all of them simultaneously.

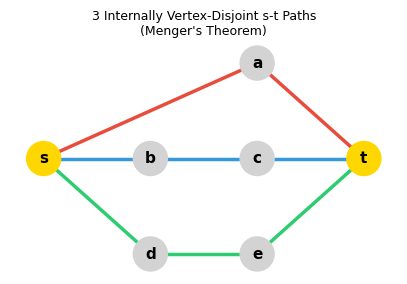

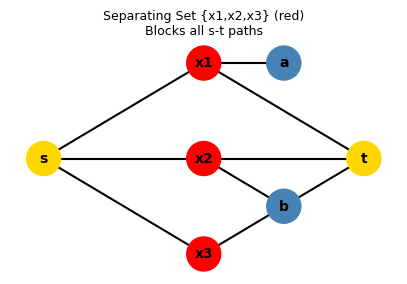

Three internally vertex-disjoint \(s\)–\(t\) paths (colored differently). Menger’s theorem guarantees this is possible whenever no set of fewer than 3 vertices separates \(s\) from \(t\).

A vertex cut \(\{x_1, x_2, x_3\}\) (red) that blocks all \(s\)–\(t\) paths. Menger’s theorem says the minimum such cut size equals the maximum number of internally disjoint paths.

Menger I gives a “global” reformulation of \(k\)-connectivity in terms of disjoint paths. This is often a more useful characterization because it describes what the connectivity number means structurally — not just what removal breaks the graph, but how many independent paths exist.

- \(G\) is \(k\)-connected.

- For every pair \(a \neq b \in V(G)\), there are \(k\) internally disjoint \(a\)–\(b\) paths.

Menger II is the “local” pairwise version: for any specific pair \(a, b\), the maximum number of internally disjoint paths equals the minimum size of a separator. This is the combinatorial prototype of max-flow equals min-cut.

- There exist \(k\) internally disjoint \(a\)–\(b\) paths.

- There is a set \(X \subseteq V(G) \setminus \{a,b\}\) with \(|X| < k\) such that \(G - X\) contains no \(a\)–\(b\) path.

Menger II already has an elegant min-max flavor, but it is restricted to a single pair of vertices. The most general form, Menger III, lifts this to arbitrary sets \(A\) and \(B\) — which is the version that most directly corresponds to the max-flow min-cut theorem in network flows, where \(A\) is the source set and \(B\) is the sink set.

Theorem 3.5.3 (Menger III). Given sets \(A, B \subseteq V(G)\) and integer \(k \geq 1\), exactly one of the following holds:

- There are \(k\) vertex-disjoint \(A\)–\(B\) paths in \(G\).

- There is some \(X \subseteq V(G)\) with \(|X| < k\) such that \(G - X\) has no \(A\)–\(B\) path.

Menger III tells us that one of the two alternatives always holds, but it is not yet a clean min-max equality — it only shows the maximum cannot exceed the minimum. The strengthened version below establishes that the two quantities are in fact equal:

- There are \(k\) vertex-disjoint \(A\)–\(B\) paths in \(G\).

- There is some \(X \subseteq V(G)\) with \(|X| < k\) such that \(G - X\) has no \(A\)–\(B\) path.

The proof proceeds by induction on edges, using contraction to reduce to a smaller graph. The key idea is that any minimum separator in the contracted graph “lifts” to a minimum separator in the original — contraction cannot accidentally merge separator vertices. Together, Menger I, II, and III give a complete min-max picture of vertex connectivity.

Corollary 3.5.4.1 (Menger’s Theorem II, derived). Let \(a, b\) be distinct vertices and \(k\) be the size of the smallest \(a\)–\(b\) separator in \(V(G) \setminus \{a,b\}\). Then there are \(k\) internally disjoint \(a\)–\(b\) paths in \(G\).

Proof. Let \(A = N(a)\) and \(B = N(b)\). Any \(AB\)-separator in \(G\) is also an \(ab\)-separator, so the minimum \(AB\)-separator has size at least \(k\). Apply Menger III* to get \(k\) vertex-disjoint \(AB\)-paths; prepend \(a\) and append \(b\) to each to obtain \(k\) internally disjoint \(ab\)-paths. \(\square\)

What makes this derivation elegant is the neighborhood reduction trick: by working with the neighbors of \(a\) and \(b\) rather than \(a\) and \(b\) themselves, we convert internally-disjoint paths (a condition on internal vertices) into vertex-disjoint paths (a cleaner combinatorial condition), enabling a clean application of Menger III*.

The Fan Lemma is a useful special case of Menger III where one endpoint is a single vertex. It characterizes when a vertex “fans out” to a large set.

A fan from \(a\) to \(B\) is a collection of \(k\) paths that branch out from \(a\) and reach distinct members of \(B\) without crossing each other. The Fan Lemma is the tool used in the proof of Kuratowski’s theorem: in a 3-connected graph, fans of size 3 must exist, and their structure forces planarity or the presence of \(K_5\)/\(K_{3,3}\).

A charming corollary shows that high connectivity forces any small set of vertices to lie on a common cycle:

Chapter 4: Planar Graphs

The jump from connectivity to planarity is a jump from combinatorics to topology. Planar graphs are those that can be drawn in the plane without edge crossings. They arise naturally in geography (maps), VLSI chip design, and network layout. This chapter develops the theory around two landmark theorems: Euler’s formula (a topological identity relating vertices, edges, and faces) and Kuratowski’s theorem (a complete obstruction characterization of planarity). Together they give a remarkably complete picture of which graphs are planar and which are not.

Plane Graphs and Embeddings

A key distinction: a plane graph is a specific geometric realization in \(\mathbb{R}^2\), while a planar graph is an abstract graph that admits such a realization. A given planar graph may have many non-isomorphic plane embeddings.

Planarity is an intrinsic graph property — it does not depend on any particular drawing, only on the existence of a good one. A useful theorem (Thomassen’s) says straight-line drawings are equivalent in power to curved-edge drawings: we may assume edges are polygonal arcs without loss of generality.

The topological setting requires precise vocabulary. A curve is a subset of \(\mathbb{R}^2\) homeomorphic to \([0,1]\); it is polygonal (an arc) if it is a finite union of line segments. A closed curve (or polygon if polygonal) is the image of a continuous map \(f: [0,1] \to \mathbb{R}^2\) injective on \([0,1)\) with \(f(0) = f(1)\). The edges of a plane graph are arcs.

Definition 4.1.7 (Abstract Graph). A plane graph \(G = (V, E)\) naturally corresponds to an abstract graph \(G' = (V, E, i)\) (its abstract graph), where \(G\) is a plane drawing or plane embedding of \(G'\).

The distinction between the plane graph and its abstract graph reflects a fundamental tension in planarity theory: the same abstract graph may admit many non-equivalent planar embeddings, with different faces and different combinatorial structure. Whitney’s theorem (a consequence of Kuratowski’s theorem) shows that 3-connected planar graphs have essentially unique embeddings — the combinatorics locks in the topology.

Definition 4.1.14 (Linked). Points \(x_1, x_2 \in X \subseteq \mathbb{R}^2\) are linked in \(X\) if there is an arc contained in \(X\) with endpoints \(x_1, x_2\). The equivalence classes under “linked in \(X\)” are the components of \(X\).

Linkedness is the topological notion of connectivity in a subset of the plane. The components of \(\mathbb{R}^2 \setminus G\) under this relation are exactly the faces of \(G\) — the open regions the drawing carves out. Each face is a maximal arc-connected piece of the complement.

Once we have a plane graph, the plane is divided into regions by the drawing. These regions are the faces — the “countries” on the map.

Proposition 4.2.1. Every plane graph has exactly one unbounded face.

The uniqueness of the unbounded face is intuitively obvious — the “outside” of any finite drawing is a single connected region — but it requires the Jordan Curve Theorem to make rigorous. It motivates a useful trick: by stereographic projection onto the sphere, all faces become bounded and symmetric. On the sphere, we can choose any face to serve as the “outer” face, which is why Proposition 4.7.5 (below) allows any vertex to appear on the outer face of a planar graph.

Proposition 4.2.2. Faces of a plane graph are always open sets.

The openness of faces is a topological necessity: the boundary of each face — the edges and vertices of \(G\) that “frame” it — belongs to the graph itself, not to the face. This is why the frontier and the face are distinct notions, with the face being the open region and the frontier being its closure inside \(G\).

Definition 4.2.2 (Frontier). The frontier of a face \(f\) of a plane graph \(G\) is \(\{x \in G : \text{every disc centered at } x \text{ contains a point in } f\}\). The boundary of \(f\) is the subgraph of \(G\) corresponding to its frontier.

Theorem 4.2.3 (Jordan Edge Theorem for Polygons). If \(G\) is a plane graph whose abstract graph is a circuit, then \(G\) has exactly two faces.

This is the graph-theoretic Jordan Curve Theorem: a simple closed polygon divides the plane into exactly two regions (inside and outside). It underpins all face-counting in planar graph theory. The proof uses the fact that a polygon separates any two points in different regions — any path between them must cross the polygon — which can be made rigorous using the theory of winding numbers or the intermediate value theorem.

Proposition 4.2.4. If \(e\) is a cut edge of a plane graph \(G\), then the interior of \(e\) lies on the frontier of exactly one face of \(G\).

Proposition 4.2.5. (1) If \(X\) is the frontier of a face \(f\) of a plane graph \(G\), then every edge \(e\) of \(G\) either has its interior \(\dot{e} \subseteq X\) or \(\dot{e} \cap X = \emptyset\). (2) For each edge \(e\), the interior \(\dot{e}\) is contained in the frontier of at most 2 faces.

Corollary 4.2.5.1. The frontier of a face \(f\) is a subgraph of \(G\) (a union of vertices and edges of \(G\)).

The frontier being a genuine subgraph is the critical fact that makes face boundaries combinatorially tractable: we can count their edge lengths, apply König-style double-counting arguments, and invoke connectivity properties of the bounding subgraph. This is not obvious from the topological definition of a face as an open set — the corollary bridges the topological and combinatorial viewpoints.

Propositions 4.2.4 and 4.2.5 together set up the crucial double-counting argument for Euler’s formula: each non-bridge edge borders exactly two faces, contributing 2 to the sum of face-boundary lengths. This makes the sum \(\sum_f |\partial f| = 2|E|\) precise, which is the algebraic engine behind Euler’s formula and the edge-density bounds for planar graphs.

Faces are the topological dual of edges: just as edges separate vertices, faces are separated by edges. The unbounded face is an artifact of working in \(\mathbb{R}^2\) rather than on the sphere; projecting to the sphere would eliminate this asymmetry.

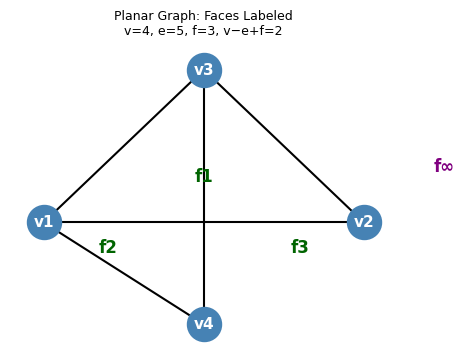

A planar graph drawn without crossings. The faces \(f_1, f_2, f_3\) are the bounded regions; \(f_\infty\) is the unbounded outer face. Here \(v=4, e=5, f=3\) and \(4-5+3=2\).

The Jordan Curve Theorem for polygons states that a circuit drawn in the plane divides it into exactly two faces. This is the topological backbone of planarity theory.

Corollary 4.2.6.1. If \(G\) is a plane forest, then \(G\) has exactly one face, and the boundary of this face is all of \(G\).

This corollary is a useful sanity check for Euler’s formula: a forest on \(n\) vertices has \(n-1\) edges and exactly 1 face, giving \(n - (n-1) + 1 = 2\) — the formula holds. The entire plane “wraps around” a forest since it has no interior, so there is really only one region surrounding all the tree branches.

A bridge, lying on only one face boundary, is a topological “spike” protruding into a single face. Non-bridge edges, by contrast, separate two distinct faces and appear on both boundaries.

Proposition 4.2.7. Let \(G\) be a plane graph and \(P\) a path of \(G\) such that \(G\) is obtained from a graph \(H\) by adding the path \(P\). Then: (1) there is a single face \(f\) of \(H\) containing the interior of \(P\); (2) each face of \(H\) other than \(f\) is a face of \(G\); (3) the face \(f\) splits into two faces \(f_1, f_2\) of \(G\) plus the interior of \(P\). If \(f\) is bounded by a circuit, then so are \(f_1, f_2\). Consequently, \(G\) has exactly one more face than \(H\).

Proposition 4.2.7 is the inductive step for Euler’s formula: adding a path increases \(|E|\) by the path’s length and increases \(|F|\) by exactly 1, while leaving \(|V|\) unchanged (except for the interior vertices of \(P\), which increase \(|V|\) and \(|E|\) equally). The net effect on \(v - e + f\) is always zero, preserving the invariant.

This explains why 2-connectivity is the natural hypothesis for many planar graph results: it ensures faces are “well-shaped” — bounded by genuine cycles rather than paths or trees.

Euler’s Formula

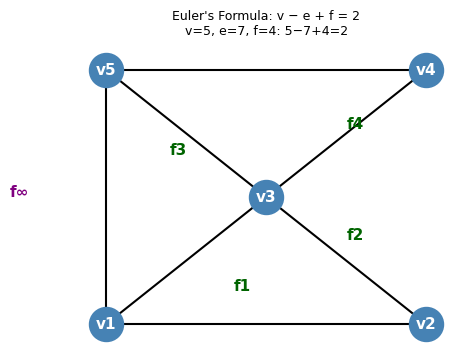

With vertices, edges, and faces all defined, we can now state and prove the single most important fact about plane graphs. Euler’s formula is the fundamental identity relating the combinatorial structure of a planar graph to its topology. Discovered by Euler in 1752, it was one of the first theorems in topology.

Euler’s formula says that the quantity \(v - e + f\) (the Euler characteristic) is a topological invariant: it equals 2 for any connected plane graph, regardless of which graph it is or how it is drawn.

The proof peels off edges one at a time, starting from any spanning tree (which satisfies the formula trivially) and adding edges back, each time increasing both \(|E|\) and \(|F|\) by one so their contribution cancels. The formula is the origin of all the edge-count bounds that make planarity so powerful as a structural hypothesis.

\(v=5\), \(e=7\), \(f=4\) (including the outer face): \(5-7+4 = 2\). Euler’s formula holds.

Proposition 4.3.2. If \(f\) is a face of a plane graph \(G\) that is not a forest, then the boundary of \(f\) contains a circuit of \(G\).

This proposition is the bridge between the topological world of faces and the combinatorial world of cycles. A face boundary that contains a cycle means the face is “enclosed” by that cycle — the Jordan Curve Theorem applies, and the face lies in one of the two regions created by the cycle. This is exactly the situation needed to apply the double-counting argument for Euler’s edge-density bound: faces with cycle boundaries contribute at least 3 to the face-edge count, while faces with tree boundaries contribute fewer edges but are handled separately.

A powerful consequence of Euler’s formula is an edge count bound — planarity severely limits how many edges a graph can have.

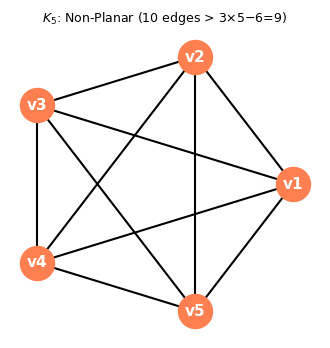

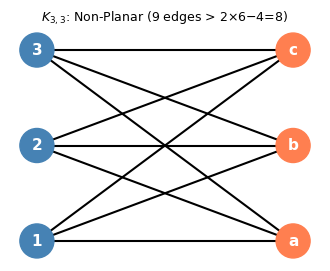

The proof is a double-counting argument on face-edge incidences, combined with Euler’s formula to translate a face count into an edge bound. The edge density bound \(|E| \leq 3|V| - 6\) is one of the most practically useful facts about planar graphs: it immediately certifies non-planarity by comparing edge counts alone.

This corollary is the key step toward Kuratowski’s theorem: \(K_5\) and \(K_{3,3}\) are non-planar by pure counting. The deep result ahead is that these are the only “minimal” non-planar graphs.

\(K_5\) has 10 edges, exceeding the bound \(3 \times 5 - 6 = 9\). It cannot be planar.

\(K_{3,3}\) is triangle-free with 9 edges, exceeding the bound \(2 \times 6 - 4 = 8\). It cannot be planar.

Subdivisions and Graph Minors

To make Kuratowski’s theorem precise, we need two ways of “containing” a graph inside another: subdivisions (inserting degree-2 vertices into edges) and minors (contracting edges). Both preserve non-planarity — if a non-planar graph appears inside \(G\) in either sense, then \(G\) itself cannot be planar.

Definition 4.4.2 (Edge Subdivision). The graph \(H\) obtained from \(G\) by subdividing an edge \(e\) is formed by deleting \(e\), adding a new vertex \(v_e\), and adding edges \(v_e u_1\) and \(v_e u_2\) where \(u_1, u_2\) were the ends of \(e\). A subdivision of \(G\) is obtained by repeatedly subdividing edges.

Definition 4.4.1 (Subdivision / Topological Minor). A subdivision of a graph \(H\) is obtained by replacing each edge with a path (inserting new degree-2 vertices). A graph \(G\) has \(H\) as a topological minor if some subdivision of \(H\) is a subgraph of \(G\).

Think of a topological minor as a “stretched copy” of \(H\) living inside \(G\): the edges of \(H\) are replaced by longer paths, but the high-degree branching structure is preserved. The key insight is that subdivision does not change whether a graph is planar — you can always place new degree-2 vertices in the interior of an edge without introducing crossings. So if \(H\) is non-planar, any graph containing a subdivision of \(H\) as a subgraph is also non-planar.

Proposition 4.4.1. \(G\) is planar if and only if any subdivision \(H\) of \(G\) is planar. In fact, \(G\) and \(H\) have plane drawings corresponding to the same set of points in \(\mathbb{R}^2\).

Corollary 4.4.1.1. If \(H\) is non-planar and \(G\) is a subdivision of \(H\), then \(G\) is non-planar.

Corollary 4.4.1.2. If \(G\) has a subdivision of a non-planar graph \(H\) as a subgraph, then \(G\) is non-planar.

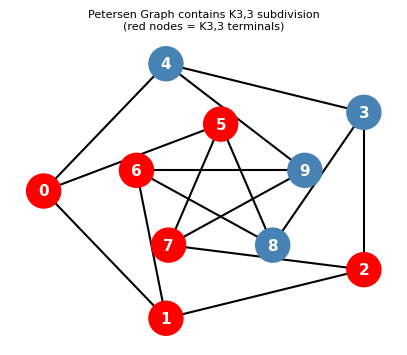

Subdividing an edge introduces degree-2 “relay” vertices but preserves the drawing topology: if \(H\) cannot be drawn without crossings, neither can any subdivision of \(H\). The Petersen graph, for instance, contains a subdivision of \(K_{3,3}\) as a subgraph, proving it non-planar.

Proposition 4.6.1. \(G\) has an \(H\)-minor if and only if there is a function \(\varphi\) mapping (i) vertices of \(H\) to connected subgraphs of \(G\), and (ii) edges of \(H\) to edges of \(G\), such that: (a) the subgraphs \(\varphi(v)\) are vertex-disjoint; (b) for each edge \(e\) of \(H\) with ends \(u, v\), the edge \(\varphi(e)\) has one end in \(\varphi(u)\) and one in \(\varphi(v)\); (c) \(\varphi\) is injective on edges.

Definition 4.6.2 (Topological Minor). A graph \(G\) has \(H\) as a topological minor if some subdivision of \(H\) is a subgraph of \(G\).

The functional characterization in Proposition 4.6.2 makes the topological minor relation tractable for algorithmic purposes: instead of searching through all possible subdivisions, we look for a mapping of vertices to terminals and edges to internally disjoint paths. This is exactly the structure a planarity algorithm needs to exhibit when certifying that a non-planar graph contains a \(K_5\) or \(K_{3,3}\) subdivision.

Proposition 4.6.2. \(H\) is a topological minor of \(G\) if and only if there is a function \(\varphi\) mapping (i) vertices of \(H\) to distinct vertices of \(G\) (terminals), and (ii) edges of \(H\) to internally vertex-disjoint paths of \(G\) with the correct endpoints, and such that paths for different edges only share vertices at terminals.

Minors are more general than subdivisions: a contraction can merge vertices of arbitrarily high degree, whereas a subdivision only affects degree-2 vertices. The distinction is important for the equivalence stated next.

The distinction between topological minors (subdivisions) and minors (contractions) is subtle but important. Topological minors are the more restrictive notion:

The converse is false in general: having \(H\) as a minor does not imply having \(H\) as a subdivision. However, for \(K_5\) and \(K_{3,3}\) specifically, the two notions coincide.

Proposition 4.6.5. If \(H\) has maximum degree at most 3 and \(G\) has an \(H\)-minor, then \(G\) has an \(H\)-topological-minor.

The proof uses the fact that both \(K_5\) and \(K_{3,3}\) have maximum degree \(\leq 4\) and \(3\) respectively, so their minors can be “expanded” back into topological copies. This equivalence is what makes the formulation of Kuratowski’s theorem in terms of either minors or subdivisions equally valid.

The Petersen graph contains a subdivision of \(K_{3,3}\) (red terminals). This proves the Petersen graph is non-planar via Kuratowski’s theorem.

Kuratowski’s Theorem

The proof of Kuratowski’s theorem uses two key lemmas about circuits and vertex sets on those circuits.

Lemma 4.7.2 (A-interval). Let \(C\) be a circuit and \(A \subseteq V(C)\). An \(A\)-interval is a path contained in \(C\) with both endpoints in \(A\) but no internal vertices in \(A\).

The \(A\)-interval is simply an arc of the cycle \(C\) delimited by consecutive members of \(A\). When two vertex sets \(A\) and \(B\) both mark points on the cycle, the relative positions of these marks — whether they interleave or nest — determine whether a \(K_5\) or \(K_{3,3}\) minor is forced. This is exactly what the Circle Lemma classifies.

Lemma 4.7.3 (Circle Lemma). Let \(C\) be a circuit and \(A, B \subseteq V(C)\). Exactly one of the following holds:

- \(|A \cup B| \leq 2\).

- \(|A \cap B| \geq 3\).

- There are distinct vertices \(a_1, b_1, a_2, b_2\) in cyclic order around \(C\) with \(a_1, a_2 \in A\) and \(b_1, b_2 \in B\).

- There is an \(A\)-interval containing \(B\), or a \(B\)-interval containing \(A\).

The Circle Lemma is the technical core of the proof of Lemma 4.7.4: given neighbors of the two ends of a contracted edge lying on a facial circuit, the four outcomes correspond to a small separator (contradicting 3-connectivity), a \(K_5\) minor, a \(K_{3,3}\) minor, or a valid planar embedding.

All the machinery of this chapter — Euler’s formula, minors, subdivisions, Tutte’s Wheel Theorem, and the Fan Lemma — converges on one theorem. Kuratowski’s theorem (1930) gives a complete characterization of planar graphs — arguably the most celebrated theorem in graph theory.

- \(G\) is planar.

- \(G\) contains no topological minor in \(\{K_5, K_{3,3}\}\).

- \(G\) contains no minor in \(\{K_5, K_{3,3}\}\).

The theorem says that \(K_5\) and \(K_{3,3}\) are the only “minimal” obstructions to planarity: every non-planar graph contains one of them in disguise. This is a profound combinatorial fact — there are infinitely many non-planar graphs, yet they all share a finite set of witnesses.

The key step is the 3-connected case, which uses Tutte’s Wheel Theorem as its inductive engine:

The proof strategy is a minimal counterexample argument combined with Tutte’s theorem: contract an edge to get a smaller 3-connected graph, embed it by induction, then “uncontracting” the edge must either work (giving a planar embedding) or reveal a forbidden minor. The Circle Lemma is the technical tool that classifies all cases.

For the general (not necessarily 3-connected) case, one reduces to the 3-connected case by showing that non-3-connected planar graphs can be built from planar pieces:

Lemma 4.5.1. If \(f\) is a face of a plane graph \(G\), then there is a plane graph \(G^+\) obtained by adding a vertex \(v\) inside the face \(f\) and an edge from \(v\) to each vertex in the boundary of \(f\).

This lemma is used in the proof of Theorem 4.5.2: adding a vertex inside a face and connecting it to the boundary preserves planarity, and allows the Circle Lemma to be applied.

Facial Circuit Characterization for 3-Connected Graphs

- induced (chordless), and

- non-separating: \(G - C\) is connected.

This is a purely combinatorial characterization — it does not depend on the choice of planar embedding! For 3-connected planar graphs, the embedding is essentially unique (by Whitney’s theorem), so the facial circuits are combinatorially determined.

Algorithmic Planarity Testing

Planarity can be tested in \(O(n)\) time (Hopcroft-Tarjan, 1974). The approach in these notes is:

1. Check if G is 3-connected (O(n²) via pairwise vertex deletion + BFS).

2. If G is 3-connected:

a. Find edge e such that G/e is 3-connected (Tutte's theorem guarantees existence).

b. Recursively test G/e.

c. If G/e is planar, use Circle Lemma to either find a K5/K33 minor or embed G.

3. If not 3-connected:

a. Handle cut vertices and 2-separators by splitting into simpler pieces.

b. Embed pieces separately and combine using Proposition 4.7.5.

Straight-Line Embeddings

The spring embedding is constructed as a linear system: fix the outer face as a convex polygon, then set each interior vertex to the average (centroid) of its neighbors’ positions. This system has a unique solution, and Tutte’s theorem proves the resulting drawing is planar with convex faces. The name “spring embedding” comes from a physical analogy: imagine replacing every edge with an ideal spring of unit stiffness and natural length zero — the equilibrium configuration of the spring system is exactly the centroid condition, and the unique equilibrium is the unique solution to the linear system.

Definition 4.9.1 (Spring Embedding). Given a circuit \(C\) of a 3-connected plane graph \(G = (V, E)\), a spring embedding is a function \(\varphi: V \to \mathbb{R}^2\) such that: (1) the vertices of \(C\) are mapped to a prescribed convex polygon; (2) \(\varphi(u) = \frac{1}{\deg u} \sum_{v \sim u} \varphi(v)\) for each \(u \in V \setminus C\).

Theorem 4.9.3 (Tutte). A spring embedding of a 3-connected simple planar graph \(G\) gives a straight-line drawing with convex faces.

The proof proceeds via four key lemmas about the geometry of the spring embedding. The logic is layered: Lemma I gives a local balancing property for every interior vertex, Lemma II lifts this to a global connectedness statement, Lemma III rules out the degenerate case where neighbors collapse onto a line, and Lemma IV uses the circuit structure to separate adjacent faces. Together they force the faces to be genuine convex polygons with disjoint interiors.

Lemma 4.9.4 (I). In a spring embedding, for each \(u \in V \setminus C\) and each line \(L\) through \(\varphi(u)\), either all neighbors of \(u\) lie on \(L\), or \(u\) has a neighbor strictly on each side of \(L\).

The key insight here is the centroid condition: if \(\varphi(u)\) is the average of its neighbors’ positions, then \(\varphi(u)\) lies in the convex hull of its neighbors. Any line through \(\varphi(u)\) must therefore have neighbors on both sides — otherwise the centroid would be pushed off that line, a contradiction.

Lemma 4.9.5 (II). For each open half-plane \(P\) with \(P \cap \varphi(V) \neq \emptyset\), the vertices in \(P \cap \varphi(V)\) induce a connected subgraph of \(G\).

This connectivity-in-half-planes property is what guarantees the drawing does not “fold back” on itself. It is a global consequence of the local balancing: no subset of embedded vertices can be isolated in a half-plane unless they are already isolated in the graph, which 3-connectivity rules out.

Lemma 4.9.6 (III). No vertex \(u \notin C\) has all its neighbors on a single line through \(u\) (i.e., case (i) of Lemma 4.9.4 does not occur for interior vertices).

Lemma III rules out the degenerate scenario where all neighbors of an interior vertex collapse onto a single line. This would cause the embedding to be “flat” at \(u\) — all neighbors on one line means the faces meeting at \(u\) have no angular separation, and the drawing folds. The proof uses the 3-connectivity of \(G\): if all neighbors of \(u\) lay on a line, the half-plane connectivity of Lemma II would be violated. This is where the 3-connectivity hypothesis does its deepest work in the entire proof.

Lemma 4.9.7 (IV). For every pair \(C_1, C_2\) of facial circuits of \(G\) sharing an edge \(e = uv\), the line through \(\varphi(u), \varphi(v)\) separates \(C_1\) from \(C_2\) in the embedding.

Lemma IV is where the combinatorial structure of the planar graph meets the geometry of the embedding: adjacent faces, sharing an edge, are separated by the line through that edge’s endpoints. This is what ensures the drawing is crossing-free — no two face interiors overlap.

Lemma 4.9.8 (V). The facial circuits map to convex polygons (tiles), and no two tiles intersect.

Together these lemmas establish that the spring embedding produces a valid straight-line plane drawing with all faces convex. What makes this remarkable is that convexity is achieved purely from the linear system — no geometric optimization is needed; the equilibrium condition of the spring system automatically produces a convex embedding, a beautiful interplay between linear algebra and graph topology.

Dual Graphs

Every plane graph has a natural “mirror image” — its plane dual — where faces become vertices and edges connect faces that share a boundary. This duality swaps the roles of faces and vertices, turning cuts into cycles and cycles into cuts. It is one of the most beautiful correspondences in graph theory.

Given a plane graph \(G\), its plane dual \(G^*\) is constructed as follows: for each face \(f\) of \(G\), place a vertex \(f^*\) inside that face; for each edge \(e\) of \(G\) shared by faces \(f\) and \(g\), draw an edge \(e^*\) connecting \(f^*\) and \(g^*\), crossing only the edge \(e\). The result is another plane graph, with one vertex per face of \(G\) and one edge per edge of \(G\).

Two immediate observations: (1) loops arise in \(G^*\) when \(e\) is a cut edge of \(G\) (both sides of \(e\) are in the same face after removing \(e\)); (2) multiple edges arise when two faces share more than one boundary edge. So even if \(G\) is simple, \(G^*\) may have loops and multiple edges.

The planarity of \(G^*\) is immediate from the construction: we explicitly drew it in the plane without crossings. The double-dual identity holds for connected plane graphs but fails for disconnected ones: a disconnected plane graph has a connected dual (since any two face-vertices are connected through the outer face), but the dual of that connected dual cannot recover the original disconnected graph.

The dual construction respects the operations of deletion and contraction in a beautifully symmetric way:

- If \(e\) is not a cut edge, then \((G - e)^* \cong G^* / e^*\).

- If \(e\) is not a loop, then \((G / e)^* \cong G^* - e^*\).

In words: deleting an edge from \(G\) corresponds to contracting its dual edge in \(G^*\), and vice versa. This is a perfect duality of operations: the two “simpler” operations — edge deletion and edge contraction — are swapped by the dual. It implies that the class of planar graphs is closed under both deletion and contraction, a key ingredient in the proof of Kuratowski’s theorem via the minor relation.

The deepest connection between a graph and its dual involves cycles and cuts. A cycle in \(G\) separates the plane into an interior and exterior, so the faces inside and outside the cycle each form a connected set. This means the corresponding edges in \(G^*\) form a bond (a minimal cut):

- If \(C\) is a cycle in \(G\), then \(C^* = \{e^* : e \in C\}\) is a bond in \(G^*\).

- If \(B\) is a bond in \(G\), then \(B^* = \{e^* : e \in B\}\) is a cycle in \(G^*\).

The intuition for the first statement: a cycle \(C\) encloses a region of the plane; the faces inside and outside form two connected sets, and the edges of \(G^*\) crossing \(C\) are exactly those separating these two sets — a bond. The algebraic formulation says the cycle and cut spaces swap roles under duality, which is a profound structural symmetry.

This suggests a purely combinatorial notion of “dual” that does not require a planar embedding. Two graphs \(G\) and \(H\) on the same edge set are abstract duals if every cycle in \(G\) is a bond in \(H\) and every bond in \(G\) is a cycle in \(H\). Connected plane graphs and their plane duals are abstract duals, but the definition applies to any pair of graphs. Whitney’s theorem (1932) characterizes exactly which graphs have abstract duals:

This is yet another characterization of planarity — this time in terms of the algebraic relation between cycles and bonds. The forward direction follows from the plane dual construction above. The reverse uses MacLane’s theorem (below): if \(G\) has an abstract dual \(G^*\), then the cycle space of \(G\) equals the cut space of \(G^*\), and the cuts of any graph are spanned by the \(\delta(v)\) (the edge-cuts at individual vertices), each edge appearing in at most two such cuts — giving a 2-basis for the cycle space of \(G\), hence \(G\) is planar by MacLane’s theorem.

MacLane’s Theorem

Kuratowski’s theorem characterizes planar graphs by what they cannot contain (no subdivision of \(K_5\) or \(K_{3,3}\)). MacLane’s theorem (1937) characterizes planarity positively — by a special algebraic property of the cycle space. It says that a graph is planar if and only if its cycle space has a particularly efficient spanning set.

Recall that the cycle space \(\mathcal{C}(G)\) is the subspace of the edge space over \(\mathbb{F}_2\) spanned by all cycles. A 2-basis for a subspace is a basis where each edge of \(G\) appears in at most 2 of the basis elements.

The motivation for this definition comes from plane graphs: in any 2-connected plane embedding, the facial cycles — the cycles bounding the faces — span the cycle space (as we showed via fundamental cycles of the dual spanning tree), and every edge borders exactly 2 faces, so the facial cycles form a 2-basis. MacLane’s theorem says this property characterizes planarity:

The forward direction follows from plane graphs: embed \(G\) in the plane, take any 2-connected block, and use its facial cycles as the 2-basis (every edge borders exactly 2 faces). For disconnected graphs, combine the 2-bases of each block.

The reverse direction requires more work. The key idea is to use Wagner’s theorem: if \(G\) is not planar, then \(G\) has \(K_5\) or \(K_{3,3}\) as a minor. The proof then shows two things:

The 2-basis property is inherited by minors. If the cycle space of \(G\) has a 2-basis, then so does the cycle space of any minor of \(G\). This is proved by tracking how a 2-basis transforms under each minor operation: removing an isolated vertex does not change the edge set; deleting an edge either removes a non-essential basis element or reduces the dimension by one; contracting an edge modifies each basis element containing the contracted edge by removing it, and the parity constraints are preserved.

\(K_5\) and \(K_{3,3}\) do not have 2-bases. For \(K_5\): the cycle space has dimension \(|E| - |V| + 1 = 10 - 5 + 1 = 6\). If \(\{B_1, \ldots, B_6\}\) were a 2-basis, form \(B_7 = B_1 \oplus \cdots \oplus B_6\). Now \(B_7\) contains exactly the edges appearing in an odd number of basis elements. The collection \(B_1, \ldots, B_7\) together contains each of the 10 edges exactly twice (2-basis condition), so \(20\) edge-appearances total. But \(7\) elements with \(\geq 3\) edges each would require \(\geq 21\) appearances — a contradiction by the pigeonhole principle. For \(K_{3,3}\), a similar dimension argument works.

Combining: if \(G\) is not planar, it has \(K_5\) or \(K_{3,3}\) as a minor; those do not have 2-bases; hence \(G\) does not have a 2-basis either (since the property is preserved under taking minors).

MacLane’s theorem is remarkable because it translates a topological property (embeddability in the plane) into a purely algebraic statement about a vector space. The 2-basis condition is checkable in polynomial time: one can compute a basis for the cycle space and test whether each edge appears at most twice. This gives an algebraic planarity algorithm complementary to Kuratowski’s combinatorial criterion.

Chapter 5: Matchings (Advanced)

The bipartite matching theory of Chapter 1 — Hall’s theorem, König’s theorem — relied on the absence of odd cycles. In general graphs, odd cycles create an obstruction that has no bipartite analogue: they prevent perfect matchings in a fundamentally different way. This chapter develops the matching theory for general graphs, building toward Edmonds’ celebrated Blossom Algorithm (1965), which was one of the first examples of a polynomial-time algorithm for a seemingly difficult combinatorial problem.

Chapter 5 re-develops the matching vocabulary in the general (non-bipartite) setting, then builds toward the Tutte-Berge formula and the Blossom Algorithm. The key obstacle that makes general matching harder than bipartite matching is the existence of odd cycles: a single triangle prevents a perfect matching on 3 vertices, and the pattern generalizes. The theory must somehow quantify and account for this parity obstruction.

Definition 5.1.1 (Matching). A matching in a graph \(G\) is a set of edges with no two sharing an endpoint. \(\nu(G)\) denotes the size of a maximum matching.

Definition 5.1.2 (Maximum Matching). A maximum matching of \(G\) is a matching of size \(\nu(G)\).

Definition 5.1.3 (Saturated). A vertex that is an end of an edge in a matching is saturated; otherwise it is unsaturated.

The Chapter 1 definitions are re-stated here in the general (non-bipartite) setting because the subsequent theory demands precision: the notions of saturation and exposure are exactly what augmenting paths and blossoms interact with. In bipartite graphs, Berge’s augmenting path theorem sufficed algorithmically. In general graphs, the same theorem holds (Proposition 5.4.5 below), but finding augmenting paths becomes much subtler because odd cycles create “fake dead ends” in any straightforward BFS search.

Definition 5.1.4 (Vertex Cover). A vertex cover of \(G\) is a set \(U \subseteq V(G)\) such that every edge has an end in \(U\) (i.e., \(G - U\) has no edges). \(\tau(G)\) denotes the size of a minimum vertex cover.

Matchings and vertex covers are dual problems: one packs as many vertex-disjoint edges as possible, the other selects the fewest vertices to “guard” all edges. The fact that \(\nu \leq \tau\) is trivial — every vertex in the cover must hit every matching edge, and matching edges are disjoint. The non-trivial question is whether equality holds, and the answer depends crucially on the bipartiteness of the graph.

Proposition 5.2.1. If \(U\) is a vertex cover and \(M\) is a matching, then \(|M| \leq |U|\), so \(\nu(G) \leq \tau(G)\).

Proposition 5.2.2. If \(M\) is a matching and \(U\) is a cover with \(|M| = |U|\), then \(M\) is a maximum matching and \(U\) is a minimum cover. Moreover: (i) every vertex in \(U\) is an end of an edge in \(M\); (ii) every edge in \(M\) has exactly one end in \(U\).

Proposition 5.2.2 gives a certificate of optimality: if you exhibit a matching and a cover of the same size, you have simultaneously proved both are optimal without needing to check any other matchings or covers. This “primal-dual” certificate is the foundation of LP duality applied to matchings.

Theorem 5.2.3 (König, 1931). If \(G\) is bipartite, then \(\nu(G) = \tau(G)\).

Proof (via Menger’s Theorem). Let \(A, B\) be the bipartition. A vertex cover is a set \(X\) such that \(G - X\) has no \(AB\)-paths. So \(\tau(G) = \min_{X} |X|\) over \(AB\)-separators, which by Menger’s Theorem equals the number of vertex-disjoint \(AB\)-paths, which equals \(\nu(G)\). \(\square\)