CO 255: Introduction to Optimization (Advanced)

Levent Tuncel

Estimated study time: 3 hr 3 min

Table of contents

These notes are based on Felix Zhou’s course notes. Additional explanations, visualizations, and examples have been added for clarity.

Chapter 1: Introduction and Problem Formulations

Optimization is the mathematical backbone of modern engineering, economics, and data science. At its core, an optimization problem asks: over a set of feasible choices, which one achieves the best objective? This course focuses primarily on linear programs — the most tractable class of optimization problems — but places them in the broader context of convex programs and integer programs. Understanding when a problem is “easy” (solvable in polynomial time) versus “hard” (NP-complete) is one of the course’s major themes.

By convention we minimize, since any maximization problem \(\max f\) is equivalent to \(\min (-f)\). This symmetry allows a unified treatment of all LP problems regardless of objective direction.

1.1 Outcomes of an Optimization Problem

Every optimization problem falls into exactly one of four categories:

- Infeasible: \(S = \emptyset\). No solution satisfies all constraints.

- Optimal: There exists \(x_0 \in S\) with \(f(x_0) \le f(x)\) for all \(x \in S\). We call \(x_0\) an optimal solution and \(f(x_0)\) the optimal value.

- Unbounded: \(S \ne \emptyset\) but for every \(M \in \mathbb{R}\) there exists \(x \in S\) with \(f(x) < M\). The objective can be made arbitrarily small.

- Feasible but no optimum attained: \(S \ne \emptyset\) and the infimum is finite but not achieved (unusual in LP but possible in NLP).

1.2 Classes of Optimization Problems

1.2.1 Linear Programs

Linear programs are the workhorse of applied optimization: they are rich enough to model most real-world allocation problems, yet tractable enough to solve in polynomial time. Their defining feature — linearity — allows a complete geometric and algebraic theory.

Definition 2.1.1 (Affine Function). \(f : \mathbb{R}^n \to \mathbb{R}\) is affine if \(f(x) = \alpha^T x + \beta\) for some \(\alpha \in \mathbb{R}^n,\ \beta \in \mathbb{R}\).

Definition 2.1.2 (Linear Function). An affine function with \(\beta = 0\).

Definition 2.1.3 (Linear Constraint). An inequality of the form \(f(x) \le g(x)\), \(f(x) = g(x)\), or \(f(x) \ge g(x)\) where \(f, g\) are affine.

Definition 2.1.4 (Linear Program). An optimization problem such that the objective function is affine, the variables satisfy \(x \in \mathbb{R}^n\), and the variables are subject to finitely many linear constraints. A feasible solution is any \(\bar x \in \mathbb{R}^n\) satisfying the constraints; an optimal solution is a feasible solution with maximal objective value; the feasible region is the set of all feasible solutions.

Definition 2.1.5 (Standard Inequality Form). \(\max\ c^T x\) subject to \(Ax \le b,\ x \ge 0\).

Definition 2.1.6 (Standard Equality Form). \(\max\ c^T x\) subject to \(Ax = b,\ x \ge 0\).

Proposition 2.1.1. The Standard Inequality Form and Standard Equality Form are general enough to express any linear program.

The two forms are interconvertible: introduce slack variables \(x_{n+1}, \ldots\) to turn SIF into SEF; reverse \(\ge\) constraints by multiplying by \(-1\) and split equalities into two inequalities to turn any LP into SIF.

Standard Inequality Form (SIF): \(\max\ c^T x\) subject to \(Ax \le b,\ x \ge 0\).

Standard Equality Form (SEF): \(\max\ c^T x\) subject to \(Ax = b,\ x \ge 0\).

These are equivalent: slack variables convert SIF to SEF, and splitting equality constraints converts any LP to SIF.

The two standard forms are algebraically equivalent: each can be transformed into the other by introducing slack or surplus variables. Working in SEF simplifies the simplex method’s algebraic description (bases, pivots), while SIF is more natural for duality theory.

Adding integrality constraints makes the problem dramatically harder in general (NP-complete), but for special constraint matrices — such as those arising from network flow and bipartite matching — the LP relaxation automatically produces integer solutions. This is the content of Chapter 5’s results on totally unimodular matrices.

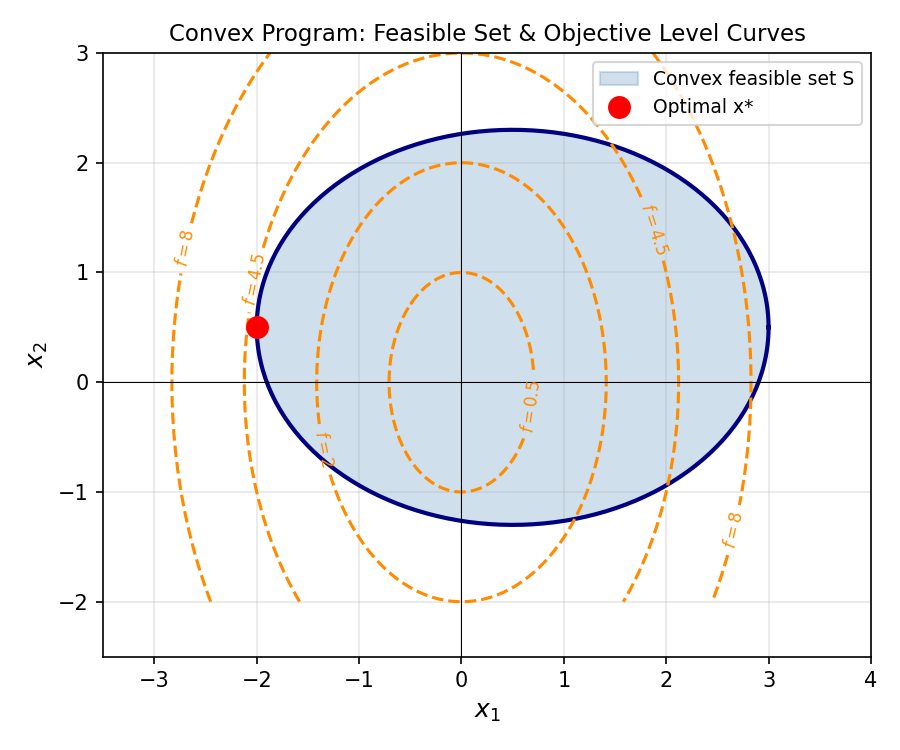

1.2.2 Convex Programs

Convex programs generalize linear programs by allowing curved objective functions and constraints, provided they are all convex. The fundamental insight is that convexity guarantees “no false local minima”: every local minimum of a convex function over a convex set is a global minimum.

Convexity of the feasible set rules out “holes” or disconnected regions — the feasible region is a single connected chunk, which simplifies both analysis and algorithms.

\(f\) is concave if \(-f\) is convex.

A convex function lies below the chord connecting any two of its points. Geometrically, the “bowl shape” of a convex function ensures that gradient methods always descend toward the global minimum.

Convex programs occupy the “tractable” end of the optimization spectrum: the KKT conditions (Chapter 5) provide necessary and sufficient optimality conditions, and interior-point methods solve them in polynomial time.

1.3 Motivating Examples

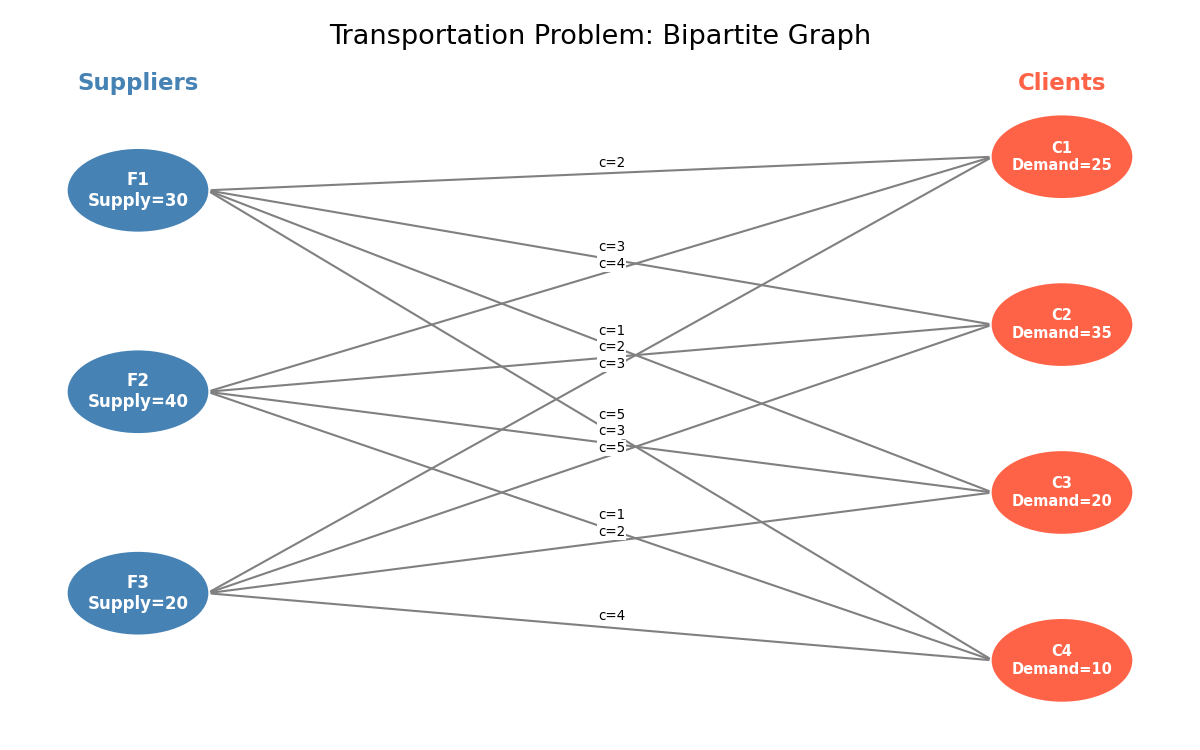

Transportation Problem

A company has a set \(F\) of distribution centers (facilities) and a set \(C\) of clients. Facility \(i\) can supply at most \(u_i\) units; client \(j\) demands exactly \(d_j\) units; the unit cost of shipping from \(i\) to \(j\) is \(c_{ij}\). We seek a minimum-cost shipping plan.

Let \(x_{ij}\) be the number of units shipped from \(i\) to \(j\). The LP is:

\[ \min \sum_{i \in F}\sum_{j \in C} c_{ij} x_{ij} \]subject to \(\sum_{j \in C} x_{ij} \le u_i\) for all \(i \in F\), \(\sum_{i \in F} x_{ij} = d_j\) for all \(j \in C\), and \(x_{ij} \ge 0\).

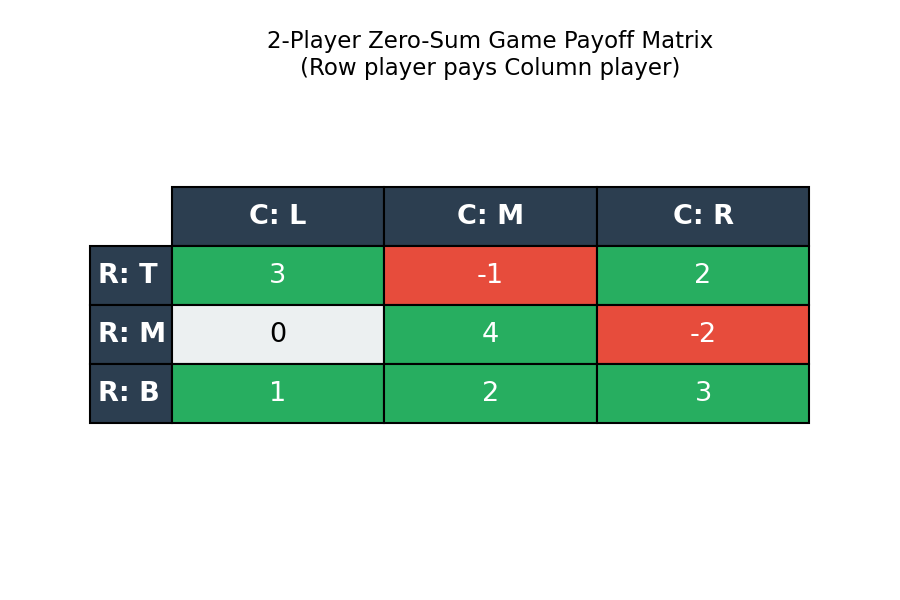

2-Player Zero-Sum Game

Given a payoff matrix \(A \in \mathbb{R}^{m \times n}\), player R (row) chooses row \(i\), player C (column) chooses column \(j\), and R pays C the amount \(a_{ij}\). If C plays a randomized strategy \(p\) (probability vector over columns), C’s guaranteed expected payoff is \(\min_i \sum_j a_{ij} p_j\). C’s LP (C-LP) is:

\[ \max\ v \quad \text{s.t.} \quad \sum_j a_{ij} p_j \ge v\ \forall i,\quad \sum_j p_j = 1,\ p \ge 0. \]Symmetrically, R’s LP (R-LP) minimizes the maximum expected payout over columns. A remarkable consequence of LP duality is that the optimal values of (C-LP) and (R-LP) are equal — this is the minimax theorem.

Job Assignment and Fair Division

The assignment problem — matching \(n\) workers bijectively to \(n\) jobs at minimum total cost — is an IP. Crucially, its LP relaxation (dropping integrality) automatically has integer extreme points because the constraint matrix is totally unimodular (see Chapter 5).

The fair division problem seeks to distribute items among agents to maximize the Nash welfare \(\prod_i (\sum_j u_{ij} x_{ij})\), equivalently \(\max \sum_i \ln(\sum_j u_{ij} x_{ij})\), a convex program.

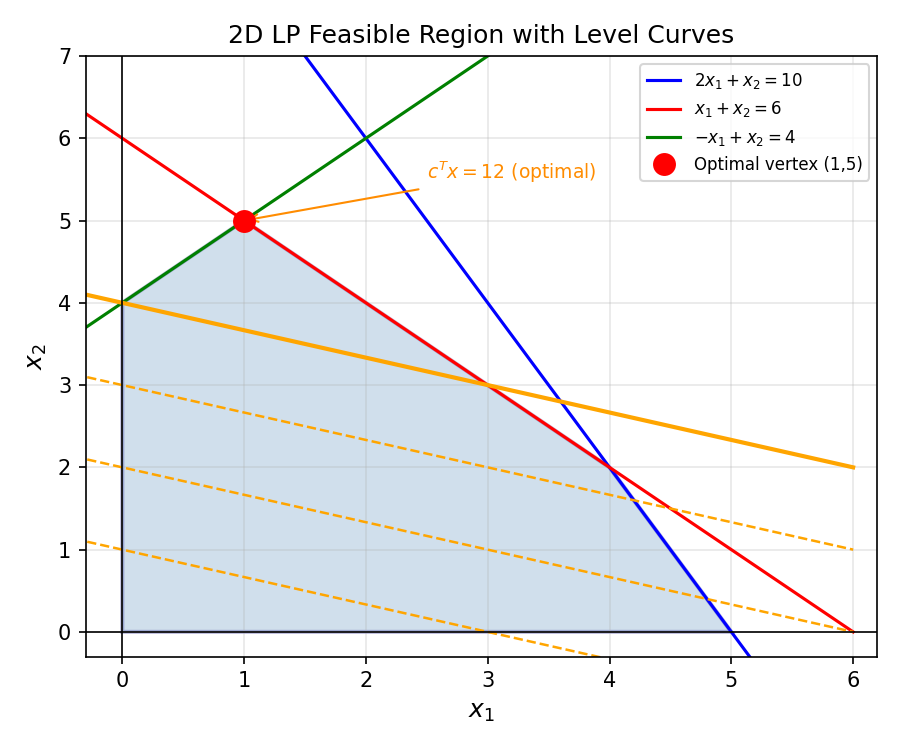

Chapter 2: Linear Programming Geometry

Chapter 1 introduced LP abstractly. Chapter 2 develops the geometric language — polyhedra, vertices, faces — that makes LP theory visual and intuitive. The key insight is that LP feasible regions are polyhedra, and optimal solutions (when they exist) always occur at vertices. This reduces the infinite optimization problem to a finite combinatorial one.

2.1 Polyhedra and Feasible Regions

The feasible region of an LP is defined by finitely many linear inequalities, each cutting the space with a hyperplane and keeping one side. The intersection of all these half-spaces is a polyhedron.

Polytopes are finite, compact objects — all their “directions” are bounded. The simplex method works especially cleanly on polytopes because the number of vertices is finite, though potentially exponentially large.

The feasible region of any LP is a polyhedron. This geometric fact underpins all of LP theory — algorithms like the simplex method walk along the vertices (extreme points) of this polyhedron.

Extreme points are the “corners” of the polyhedron — the points that cannot be expressed as the midpoint of two other feasible points. These are precisely where optimal solutions live.

Extreme points are “pinned down” by constraints: they cannot wiggle in any direction while staying feasible. The following theorem characterizes them algebraically, connecting the geometric notion to the rank of the tight constraint matrix.

Definition 2.7.4 (Convex Hull). \(\mathrm{conv}(S)\) for \(S \subseteq \mathbb{R}^n\) is the smallest convex set containing \(S\): \(\mathrm{conv}(S) := \bigcap_{S \subseteq H,\ H \text{ convex}} H\).

Definition 2.7.5 (Convex Combination). For \(x^{(1)}, \ldots, x^{(k)} \in \mathbb{R}^n\), a convex combination is \(\sum_{i=1}^k \lambda_i x^{(i)}\) with \(\sum_{i=1}^k \lambda_i = 1,\ \lambda_i \ge 0\).

Proposition 2.7.4. \(S \subseteq \mathbb{R}^n\) is convex if and only if it contains all convex combinations of its elements.

Corollary 2.7.4.1. The convex hull of \(S\) is the set of all convex combinations of elements of \(S\).

What makes this remarkable is that it bounds the “complexity” of any convex combination: no matter how many points are in \(S\), and no matter how elaborate their convex combinations, you never need more than \(n+1\) of them. In the plane (\(n=2\)), any point in the convex hull of an arbitrary set can be written as a convex combination of just three points — a triangle suffices. In three dimensions, four points (a tetrahedron). This dimensionality bound is tight and beautiful.

The two versions of Carathéodory’s theorem — for convex hulls and for cones — are closely related via a lifting argument. For any set \(S \subseteq \mathbb{R}^n\), define the lifted set \(S^+ = \{[s; 1] : s \in S\} \subseteq \mathbb{R}^{n+1}\). Then \(x \in \operatorname{conv}(S)\) if and only if \([x; 1] \in \operatorname{cone}(S^+)\). This “homogenization” trick — appending a 1 to every vector — converts convex hull membership into cone membership, allowing the cone version of Carathéodory to imply the convex hull version: a point in \(\operatorname{cone}(S^+) \subseteq \mathbb{R}^{n+1}\) is a non-negative combination of at most \(n+1\) linearly independent vectors from \(S^+\), which after projecting back to \(\mathbb{R}^n\) gives a convex combination of at most \(n+1\) points of \(S\). The same lifting device appears throughout the course: Farkas’ Lemma lifts the constraint vectors \(a_i\) to \([a_i; b_i]\) in the proof of Helly’s theorem, and polyhedral cones are compactified via the unit box to extract extreme rays.

Theorem 2.7.5 (Carathéodory, 1907). Let \(S \subseteq \mathbb{R}^n\). Every point in \(\mathrm{conv}(S)\) can be expressed as a convex combination of at most \(n+1\) points in \(S\).

The proof is an elegant application of linear independence: if a convex combination uses more than \(n+1\) points, the coefficients satisfy a linear dependence relation (there are too many for \(\mathbb{R}^n\)), which can be used to eliminate one point while maintaining a valid convex combination. Repeating this reduction arrives at \(n+1\) or fewer points.

The following theorem offers an equivalent, and somewhat surprising, characterization of extreme points. It says \(\bar x\) is extreme if and only if removing it from \(S\) does not destroy convexity. Intuitively, a non-extreme point is a “midpoint” of two others — removing it creates a gap in the line segment connecting those two others, breaking convexity. An extreme point, by contrast, is not “needed” as a midpoint by any pair, so removing it leaves convexity intact.

Theorem 2.7.6. Let \(\bar x \in S \subseteq \mathbb{R}^n\) be convex. \(\bar x\) is an extreme point of \(S\) if and only if \(S \setminus \{\bar x\}\) is convex.

Theorem 2.7.7 below is the algebraic heart of the theory of extreme points. It translates the geometric notion — “cannot be expressed as a midpoint” — into a linear-algebraic rank condition that is checkable by computation.

Theorem 2.7.7. Let \(P = \{x \in \mathbb{R}^n : Ax \le b\}\), \(\bar x \in P\), and let \(A^= x \le b^=\) be the constraints tight at \(\bar x\). Then \(\bar x\) is an extreme point of \(P\) if and only if \(\mathrm{rank}(A^=) = n\).

The corollary gives a useful finiteness bound: since we choose \(n\) linearly independent rows from the \(m\) rows of \(A\) to determine each extreme point, there are at most \(\binom{m}{n}\) extreme points. This bound is exponential, but it is the quantity that controls the worst-case complexity of the simplex method.

Corollary 2.7.8.1. (i) If \(\mathrm{rank}(A) < n\) there are no extreme points. (ii) Every polyhedron has at most \(\binom{m}{n}\) extreme points.

A polyhedron with no extreme points must contain an entire line — it extends infinitely in both directions along some direction. The following definition and proposition capture exactly this dichotomy.

Definition 2.7.8 (Pointed Polyhedron). A polyhedron is pointed if it does not contain a line (i.e., there is no \(d \ne 0\) such that \(\{x + \lambda d : \lambda \in \mathbb{R}\} \subseteq P\)).

Proposition 2.7.9. \(P \subseteq \mathbb{R}^n\) is a nonempty pointed polyhedron if and only if \(P\) has an extreme point.

Full rank of tight constraints means \(\bar x\) is uniquely determined by those constraints — it is a “vertex” in the algebraic sense. If the rank falls short, a direction exists along which we can move while staying on all tight constraints, so \(\bar x\) lies in the interior of an edge or face.

Theorem 2.7.10. Let \(P = \{x \in \mathbb{R}^n : Ax \le b\}\) be a polyhedron with no lines. If the LP \(\max\ c^T x,\ x \in P\) has an optimal solution, then it has an optimal solution that is an extreme point of \(P\).

Theorem 2.7.11. Let \(P = \{x \in \mathbb{R}^n : Ax \le b\}\) be a polyhedron and \(\bar x \in P\). Then \(\bar x\) is an extreme point of \(P\) if and only if there exists \(c \in \mathbb{R}^n\) such that \(\bar x\) is the unique optimal solution to \(\max\ c^T x,\ x \in P\).

Two fundamental structural theorems about polytopes follow from the extreme point characterization. The first says a bounded polyhedron is nothing more than the convex hull of its corners; the second says the converse — any finite set of points generates a polytope. Together they close a duality between the “inequality description” and the “vertex description” of polytopes.

Definition 2.7.9 (Polytope). A polytope is a bounded polyhedron.

Theorem 2.7.12. Let \(F \subseteq \mathbb{R}^n\) be a polytope. Then \(F\) is the convex hull of its extreme points.

Theorem 2.7.13. The convex hull of any finite subset of \(\mathbb{R}^n\) is a polytope.

What about unbounded polyhedra? A polyhedron that extends to infinity cannot be described purely by its extreme points — it also has extreme rays, directions in which the feasible region extends without bound. The Minkowski sum allows us to decompose any pointed polyhedron into a compact “core” (a polytope spanned by extreme points) and a cone of extreme rays. This decomposition is the key to understanding unbounded LPs: an LP is unbounded precisely when there exists an extreme ray along which the objective improves.

The Minkowski sum gives us a powerful algebraic operation on sets: adding two sets pointwise. Geometrically, \(S + T\) is obtained by “sliding” every copy of \(T\) to every point of \(S\). This operation is exactly what we need to decompose an unbounded polyhedron into its bounded “core” and its cone of unbounded directions.

Definition 2.7.10 (Minkowski Sum). \(S + T := \{s + t \in \mathbb{R}^n : s \in S,\ t \in T\}\).

Definition 2.7.11 (Polyhedral Cone). A set that is simultaneously a cone and a polyhedron.

The next two theorems — together known as the Minkowski-Weyl theorem — provide the complete structural description of polyhedra. Theorem 2.7.14 handles the pointed case, decomposing any pointed polyhedron into a compact part (a polytope) plus a cone of unbounded directions. Theorem 2.7.15 generalizes to all polyhedra, including those containing lines.

Theorem 2.7.14. Let \(F \subseteq \mathbb{R}^n\) be a nonempty pointed polyhedron. There is a polytope \(P \subseteq \mathbb{R}^n\) and pointed polyhedral cone \(K \subseteq \mathbb{R}^n\) such that \(F = P + K\).

Theorem 2.7.15. \(P\) is a polyhedron if and only if \(P = Q + C\) where \(Q\) is a polytope and \(C\) is a polyhedral cone.

Theorem 2.7.15 is the Minkowski-Weyl theorem: every polyhedron can be built by taking a polytope and “extending” it in the directions of a polyhedral cone. This is the complete structural description of polyhedra. When the polyhedral cone is trivial (just the origin), we recover a polytope. When there is no polytope part (the cone is the whole thing), we have a polyhedral cone. The general case is always a sum of these two extreme cases.

Some polyhedra contain entire lines — they extend to infinity in both directions along some vector. Such polyhedra have no extreme points at all. The lineality space captures exactly which directions allow this bidirectional extension.

The two key structural concepts needed to handle polyhedra containing lines are affine subspaces and lineality spaces. An affine subspace is a “shifted” linear subspace — it passes through a specific point but is otherwise flat. The lineality space is the collection of all directions in which the polyhedron extends without bound in both directions simultaneously; it is the “linear part” of the polyhedron.

Definition 2.7.12 (Affine Subspace). The solution set of \(Ax = b\) for some \(A, b\). Every affine subspace of \(\mathbb{R}^n\) can be written as \(l + \{x : Ax = 0\}\) for some \(l\).

Definition 2.7.13 (Lineality Space). The largest affine subspace contained in a polyhedron.

The culminating structural theorem of this section completes the Minkowski-Weyl decomposition by adding the lineality space. Notice how \(F = P + K + L\) breaks the polyhedron into three orthogonal parts: the bounded core \(P\), the “one-directional” cone \(K\) of extreme rays, and the “two-directional” flat \(L\). This is the most general description of any nonempty polyhedron.

Theorem 2.7.18. Let \(F \subseteq \mathbb{R}^n\) be a nonempty polyhedron. There exist a pointed polyhedral cone \(K \subseteq \mathbb{R}^n\) and a polytope \(P \subseteq \mathbb{R}^n\) such that \(F = P + K + L\) where \(L\) is the lineality space of \(F\).

The full decomposition \(F = P + K + L\) is beautiful: every nonempty polyhedron is a “translated” polytope, extended by a cone of rays and a lineality space of lines. From an LP perspective, the lineality space contains directions that never affect objective value — they are the “neutral” directions. The cone contains directions that may improve the objective (unboundedness), and the polytope contains the bounded part where optimal vertices live.

Theorems 2.7.20 and 2.7.21 are the “vertex description” counterparts to the “halfspace description” of polytopes and cones. They say that the two perspectives — describing a set by the halfspaces that contain it (the \(H\)-representation) or by the vertices and rays that generate it (the \(V\)-representation) — are equivalent. Every polytope can be built as the convex hull of finitely many points, and every polyhedral cone can be built as the conic hull of finitely many rays. Converting between these representations is a fundamental computational problem in LP.

Theorem 2.7.20 (Characterization of Polytopes). \(P\) is a polytope if and only if \(P = \mathrm{conv}(S)\) for a finite set \(S \subseteq \mathbb{R}^n\). Moreover, we may take \(S\) to be the set of extreme points of \(P\).

Theorem 2.7.21. \(P\) is a polyhedral cone if and only if \(P = \mathrm{cone}(S)\) for a finite set \(S \subseteq \mathbb{R}^n\).

This theorem says that a polytope is completely described by its vertices — a finite set of points. Every other feasible point is a convex combination of vertices. This is both a structural fact and the basis for LP optimality theory.

The proof is a “greedy tightening” argument: start at any optimal point, and if it is not a vertex, slide along the constant-objective face until a new constraint becomes tight. Repeat until full rank — an extreme point — is reached. This justifies the simplex method’s vertex-walking strategy: we need only search among vertices.

2.2 Feasibility: Fourier-Motzkin and Farkas

Having characterized optimal solutions geometrically (vertices of polyhedra), we now address the prior question: is the system \(Ax \le b\) feasible at all? Fourier-Motzkin elimination gives a constructive procedure, and Farkas’ lemma extracts from it a clean algebraic certificate of infeasibility.

Fourier-Motzkin Elimination (FME) is a procedure to determine whether a system \(Ax \le b\) is feasible by successively eliminating variables. Partition rows by the sign of coefficient \(a_{in}\): let \(I^+, I^-, I^0\) index positive, negative, zero entries. For each pair \((k,l) \in I^+ \times I^-\), generate the inequality:

\[ \frac{1}{a_{ln}}\left(b_l - \sum_{j=1}^{n-1} a_{lj}x_j\right) \le \frac{1}{a_{kn}}\left(b_k - \sum_{j=1}^{n-1} a_{kj}x_j\right). \]The new system in \(n-1\) variables is feasible if and only if the original is. Repeating until no variables remain: the final system is a set of scalar inequalities \(0 \le \gamma_i\); feasibility holds iff all \(\gamma_i \ge 0\). If infeasible, FME produces a certificate \(u \ge 0\) with \(u^T A = 0\) and \(u^T b < 0\), which is exactly the content of Farkas’ Lemma.

Theorem 2.2.2 (Fourier-Motzkin Elimination). Let \(Ax \le b\) be the given system where \(A \in \mathbb{R}^{m \times n}\). Define \(I^+ = \{i : a_{in} > 0\}\), \(I^- = \{i : a_{in} < 0\}\), \(I^0 = \{i : a_{in} = 0\}\). Generate the reduced system \(A'x' \le b'\) in \(n-1\) variables using inequalities from \(I^0\) and the \((k,l)\)-inequalities for all \(k \in I^+,\ l \in I^-\). Then \(Ax \le b\) is feasible if and only if \(A'x' \le b'\) is feasible. After eliminating all variables, the final system has only trivial scalar inequalities \(0 \le \gamma_i\) and is feasible iff all \(\gamma_i \ge 0\).

Proposition 2.2.3. (1) If either \(I^+\) or \(I^-\) is empty, there are no \((k,l)\)-inequalities. (2) Every inequality of \(A'x' \le b'\) is a nonnegative linear combination of inequalities from \(Ax \le b\). (3) \(A'x' \le b'\) has \(n-1\) variables but can have as many as \(\tfrac{|I^+| \cdot |I^-|}{1}\) constraints from the new \((k,l)\)-pairs alone, so in the worst case \(O(m^2)\) new constraints per variable eliminated.

The exponential blow-up in FME is severe in practice: after \(k\) rounds of elimination, the system can have up to \(O(m^{2^k})\) inequalities. This makes FME impractical as an algorithm for solving LP — it has theoretical significance (it proves the projection theorem and implies Farkas’ Lemma) but the simplex method is far more efficient. The key theoretical use of FME is that every derived inequality is a certified non-negative combination of original inequalities — so the Farkas certificate \(y\) can be traced back through the elimination steps.

Lemma 2.2.4. \(Ax \le b\) is feasible if and only if \(A'x' \le b'\) is feasible.

Farkas’ lemma is one of the most important results in LP theory. It says feasibility and infeasibility have “certificates” — proofs that can be verified in polynomial time. The great philosophical point is this: if you claim a system is infeasible, how do you convince someone else? You need to exhibit a short proof — a Farkas certificate. The lemma says such a certificate always exists.

There are three variants of Farkas’ lemma, each suited to a different LP form. They all say the same thing in different algebraic disguises: feasibility and infeasibility are dual to each other, and exactly one holds. Understanding which variant applies to which situation is a core skill in LP.

The first variant handles the purest case: linear equations with no sign restrictions on \(x\).

Theorem 2.2.5 (Fundamental Theorem of Linear Algebra). Let \(A \in \mathbb{R}^{m \times n},\ b \in \mathbb{R}^m\). Exactly one of the following holds:

- \(\exists x \in \mathbb{R}^n\) with \(Ax = b\).

- \(\exists y \in \mathbb{R}^m\) with \(A^T y = 0\) and \(b^T y = -1\).

The key insight here is that alternative (II) is exactly a certificate of linear dependence: \(y\) shows that \(b\) is not in the column span of \(A\). This is precisely the familiar statement from linear algebra about the column space.

The second variant handles inequality systems — the most common form in LP. Notice how the non-negativity of \(u\) in alternative (II) corresponds to the requirement that the “combination” of constraints uses the inequalities in their stated direction.

Theorem 2.2.6. Let \(A \in \mathbb{R}^{n \times m},\ b \in \mathbb{R}^m\). Exactly one of the following has a solution:

- \(x\) such that \(Ax \le b\).

- \(u\) such that \(A^T u = 0,\ u \ge 0,\ b^T u < 0\).

Notice how the non-negativity \(u \ge 0\) in alternative (II) corresponds to the fact that the certificate combines the inequalities of (I) in their stated direction — you may scale each inequality by a non-negative factor but not flip it. If you could flip inequalities, you could manufacture contradictions out of thin air; the sign constraint on \(u\) prevents this.

The third variant is the most directly useful for LP: it handles inequality systems with non-negative variables, matching the Standard Inequality Form of LP. What makes this remarkable is that the certificate \(y\) in alternative (II) provides an explicit linear combination of the constraints that “derives” a contradiction — it witnesses infeasibility with arithmetic you can check by hand.

Theorem 2.2.7 (Farkas’ Lemma). Let \(A \in \mathbb{R}^{m \times n},\ b \in \mathbb{R}^m\). Exactly one of the following holds:

- \(\exists x \ge 0\) with \(Ax \le b\).

- \(\exists y \ge 0\) with \(A^T y \ge 0\) and \(b^T y < 0\).

The proof shows mutual exclusivity by a “dot product sandwich” argument: if (I) holds, then any \(y\) satisfying the constraints in (II) would give \(0 > b^T y \geq 0\), a contradiction. The existence of (II) when (I) fails follows from FME.

Geometric interpretation: \(b\) lies in the cone \(\text{cone}\{A_1, \ldots, A_n\}\) if and only if no hyperplane \(\{y : y^T \delta = 0\}\) separates \(b\) from the cone. Farkas’ Lemma says either \(b\) is in the cone (I), or a separating hyperplane exists (II). This is the “alternative theorem” of linear algebra: exactly one of the two alternatives holds.

2.3 Helly’s Theorem

Helly’s theorem is a remarkable result in combinatorial geometry: the failure of a family of convex sets to share a common point is always witnessed by a small subfamily — small in a size determined only by the ambient dimension, not by the size of the family.

Theorem 2.3.1 (Helly, 1923). Let \(H_1, \ldots, H_m\) be closed half-spaces in \(\mathbb{R}^n\) with \(\bigcap_{i=1}^m H_i = \emptyset\). Then some \(n+1\) of them have empty intersection.

The proof is beautifully modular: Farkas converts infeasibility into a cone membership problem in \(\mathbb{R}^{n+1}\), and Carathéodory reduces the cone representation to at most \(n+1\) generators. The bound \(n+1\) in Helly’s theorem is exactly the Carathéodory bound for cones in \(\mathbb{R}^{n+1}\) — these two theorems are dual to each other in a deep sense.

The half-space version extends to general convex sets by a finite approximation argument.

Theorem 2.3.2 (Helly for Convex Sets). Let \(S_1, \ldots, S_m \subseteq \mathbb{R}^n\) be convex sets with \(\bigcap_{i=1}^m S_i = \emptyset\). Then some \(n+1\) of them have empty intersection.

Helly’s theorem fails for infinite families: the sets \(S_k = [k, \infty)\) have every finite subcollection feasible but empty total intersection. Compactness restores the theorem — if every \(S_i\) is compact, an infinite family with pairwise non-empty intersections has a common point. This is a convex-set formulation of the Heine-Borel theorem.

Chapter 3: The Simplex Method

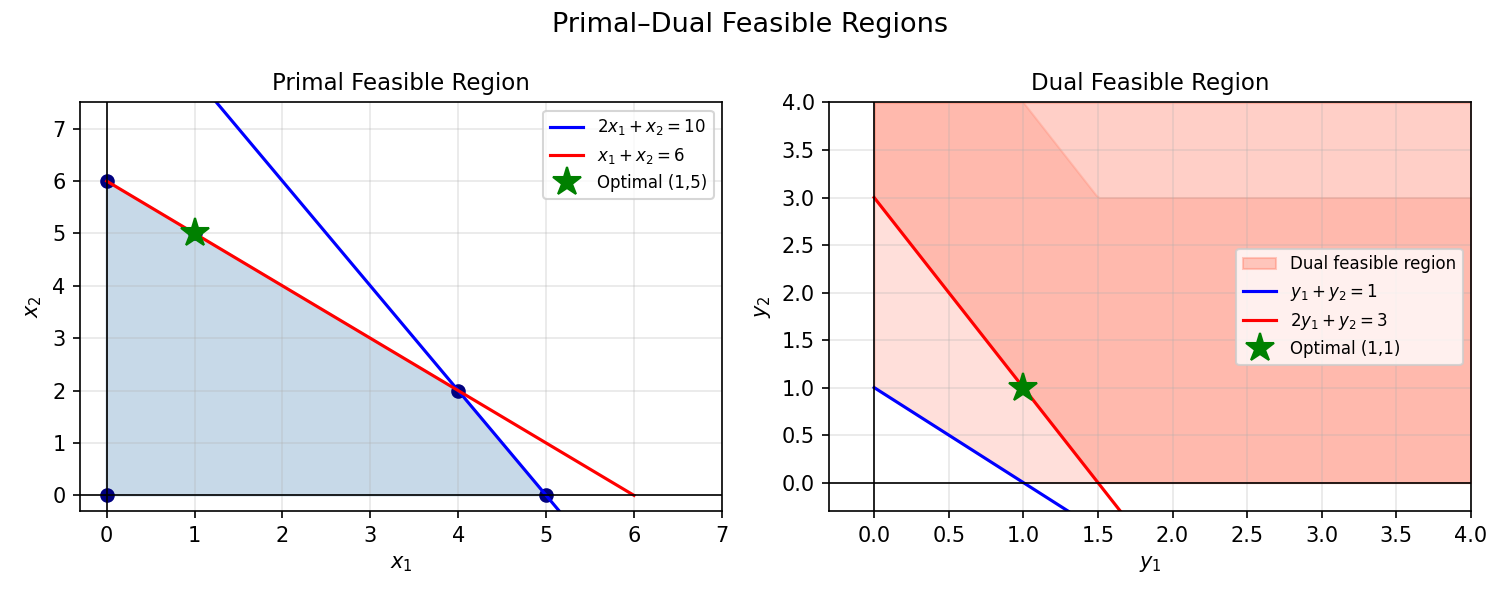

Chapter 2 established the geometry: LP optimal solutions occur at vertices of polyhedra. Chapter 3 develops the algebra and algorithms that exploit this structure. The simplex method walks along edges from vertex to vertex, improving the objective at each step. But before the algorithm, we need the dual LP — the “other side” of the problem that provides optimality certificates.

3.1 LP Duality: Motivation

How can we certify the optimality of a feasible solution? By taking nonnegative linear combinations of the constraints. For the LP \(\max\ 2x_1 + x_2\) subject to \(x_1 - 2x_2 \le 4,\ -x_1 + x_2 \le 10,\ \ldots\), a particular nonnegative combination of constraints yields \(2x_1 + x_2 \le 10\). Since the objective equals 10 at the feasible point \((0, 10)\), this proves optimality.

This motivates a systematic “dual” LP whose feasible solutions provide upper bounds on the primal objective.

Example 2.3.1 (LP). Consider \(\max\ z(x) := 2x_1 + x_2\) subject to \(x_1 - 2x_2 \le 4,\ -x_1 + x_2 \le 10,\ -x_2 \le 0,\ x \ge 0\). The combination \((0,1,2,0) \cdot Ax \le (0,1,2,0) \cdot b\) gives \(2x_1 + x_2 \le 10\). Since \(\bar x^T = (0,10)\) is feasible with \(z(\bar x) = 10\), this proves \(\bar x\) is optimal.

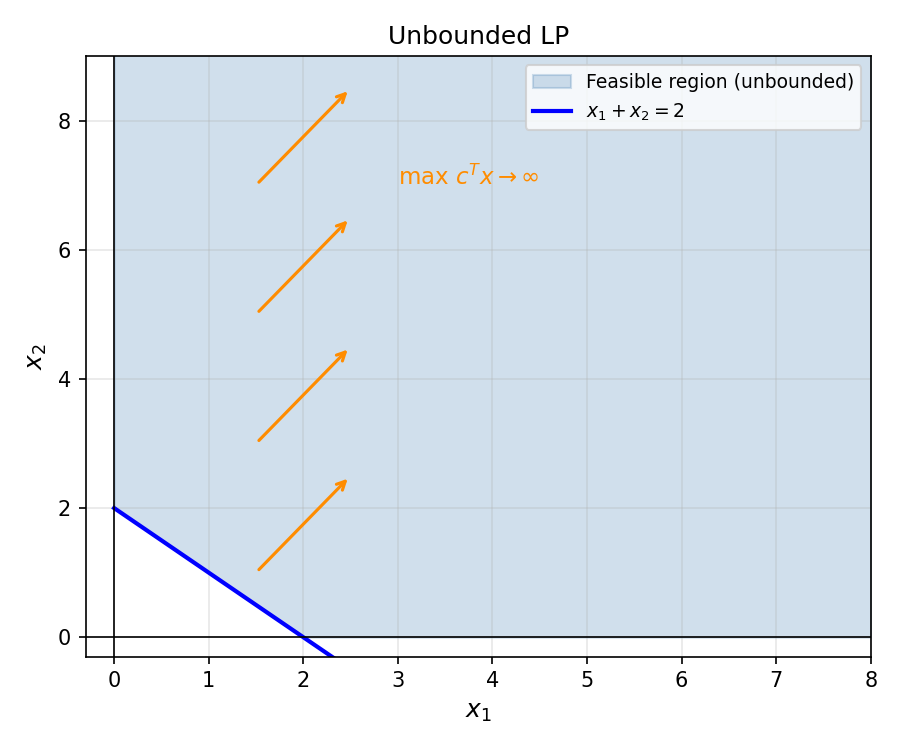

Definition 2.3.1 (Unbounded). An LP is unbounded if for every \(M \in \mathbb{R}\) there is a feasible \(x\) such that \(z(x)\) has a better objective value than \(M\). An unbounded LP is certainly feasible.

Definition 2.3.2 (Bounded). \(S \subseteq \mathbb{R}^n\) is bounded if there is some \(M \in \mathbb{R}_{++}\) such that \(S \subseteq [-M, M]^n\).

The dual LP is constructed by associating one variable \(y_i\) with each primal constraint. Multiplying the \(i\)-th constraint by \(y_i \geq 0\) and summing gives an upper bound on the primal objective — and minimizing this upper bound over all such \(y\) is the dual problem.

Definition 2.3.3 (Dual). Let \(A \in \mathbb{R}^{m \times n},\ b \in \mathbb{R}^m,\ c \in \mathbb{R}^n\). The primal LP is \((P): \max\ c^T x\) s.t. \(Ax \le b,\ x \ge 0\). Its dual is \((D): \min\ b^T y\) s.t. \(A^T y \ge c,\ y \ge 0\). Each primal constraint gives rise to a dual variable; each primal variable gives rise to a dual constraint. \((P)\) being a max-LP gives \((D)\) a min-LP.

Each primal constraint \(a_i^T x \le b_i\) gives rise to a dual variable \(y_i \ge 0\); each primal variable \(x_j\) gives rise to a dual constraint \((A^T y)_j \ge c_j\). Taking the dual twice returns the original primal: \(\text{dual}(\text{dual}(P)) = P\).

The dual is another LP. Its feasible solutions certify upper bounds on the primal: if \(b^T y = 17\) for some feasible dual \(y\), then the primal optimum is at most 17. Strong duality says the best (smallest) such certificate exactly matches the primal optimum.

3.2 Weak and Strong Duality

Weak duality is the trivial direction: any feasible dual solution provides an upper bound on the primal.

Theorem 2.3.2 (Weak Duality). Let \((P)\) be a max LP with objective \(c^T x\) and \((D)\) its dual min LP with objective \(b^T y\). Let \(x\) be feasible for \((P)\) and \(y\) feasible for \((D)\). Then \(c^T x \le b^T y\).

Corollary 2.3.2.1. If \(\bar x, \bar y\) are feasible for \((P), (D)\) respectively and \(c^T \bar x = b^T \bar y\), then \(\bar x, \bar y\) are both optimal.

Corollary 2.3.2.2. \((P)\) is unbounded implies \((D)\) is infeasible, and vice versa.

Proposition 2.3.3. \(\mathrm{dual}(\mathrm{dual}(P)) = P\).

Weak duality says the primal and dual objectives form a “sandwich”: any primal feasible objective value is at most any dual feasible objective value. But it leaves open the question: how large is the gap? Could the primal optimum be strictly below the dual optimum? Strong duality — one of the most powerful results in all of optimization — says the answer is no. Under feasibility of both sides, the gap is exactly zero.

Notice the elegance of what this achieves. The primal problem asks “how good can we do?” The dual asks “how tight can we certify a bound?” Strong duality says these two processes converge to the same answer. The algorithm (simplex, interior point) and the certificate (dual solution) agree.

Theorem 2.3.4 (Strong Duality). If both \((P)\) and \((D)\) have feasible solutions, they both have optimal solutions \(\bar x, \bar y\) with equal optimal values: \(c^T \bar x = b^T \bar y\).

Theorem (Uber Theorem). For any LP \((P): \min\ c^T x,\ Ax \ge b\), exactly one of the following holds:

- Both \((P)\) and its dual \((D): \max\ b^T y,\ A^T y = c,\ y \ge 0\) have optimal solutions with equal values.

- \((P)\) is infeasible: there exists \(\bar y \ge 0\) with \(A^T \bar y = 0\) and \(b^T \bar y > 0\) (Farkas certificate).

- \((P)\) is feasible and unbounded: there exists a direction \(\bar d\) with \(A\bar d \ge 0\) and \(c^T \bar d < 0\).

Claim 1: Exactly one of System (I) (primal-dual feasibility with equal objectives) and System (B) is feasible. This follows from a Farkas-type argument: System (B) is feasible if and only if System (I) is infeasible. The argument is a block-Farkas computation.

Claim 2: If System (I) is infeasible, any solution \((\bar y, \bar d)\) to (B) satisfies \(b^T \bar y - c^T \bar d = s \ge 1 > 0\). If \(b^T \bar y > 0\) then \(\bar y / b^T \bar y\) certifies outcome (II). If \(b^T \bar y = 0\) then \(c^T \bar d < 0\) and \(A\bar d \ge 0\), giving outcome (III) together with any feasible \(\bar x\) (if (P) is infeasible, outcome (II) holds directly).

Strong duality follows immediately: since both \((P)\) and \((D)\) are feasible, outcome (II) is impossible (a Farkas certificate for infeasibility would contradict the existence of a feasible point) and outcome (III) is impossible (an unbounded direction \(A\bar d \ge 0,\ c^T \bar d < 0\) combined with a dual feasible \(y\) gives \(0 \le (A\bar d)^T y = \bar d^T A^T y = c^T \bar d < 0\), a contradiction). So outcome (I) holds. \(\square\)

The Uber Theorem is more powerful than strong duality alone: it gives a complete classification of LP outcomes in a single theorem, with explicit certificates for each case. This makes it the master tool of LP theory — every LP algorithm either finds an outcome-(I) witness (a primal-dual optimal pair) or an outcome-(II)/(III) certificate.

The proof technique is characteristic of LP theory: assume the desired system fails, derive a Farkas certificate, observe it contradicts a known fact, conclude the system must hold. This pattern — “Farkas applied one level up” — repeats throughout the theory.

Lemma 2.3.5. If \((P)\) is feasible and \((D)\) is infeasible, then \((P)\) is unbounded.

Lemma 2.3.6. If \((P)\) is feasible and \((D)\) is infeasible, then \((P)\) is unbounded. (Proof via Farkas’ Lemma: there is \(d \ge 0\) with \(Ad \le 0\) and \(c^T d > 0\), so \(x(\lambda) = \bar x + \lambda d\) is feasible for all \(\lambda \ge 0\) with \(c^T x(\lambda) \to \infty\).)

Notice what Lemma 2.3.6 says geometrically: dual infeasibility means no nonneg combination of constraints pins down the objective, so there is an unbounded ray in the primal feasible region along which the objective grows without limit. The Farkas certificate \(d\) is literally this unbounded direction.

Theorem 2.3.7. If \((P)\) has an optimal solution then \((D)\) also has an optimal solution, and they have equivalent optimal values.

Theorem 2.4.1 (Fundamental Theorem of Linear Programming). Every LP is exactly one of: (i) has an optimal solution, (ii) unbounded, (iii) infeasible.

Moreover, if \((P)\) is feasible and \((D)\) is infeasible, then \((P)\) is unbounded.

This “trichotomy” is the complete classification of LP outcomes. The four-way classification from Chapter 1 reduces to three: the “feasible but no optimum attained” case cannot occur for LPs because the feasible region is a closed polyhedron (compact level sets). The interplay between primal and dual gives a 3×3 grid of possible outcomes for the pair \((P), (D)\), and remarkably only four entries in that grid can actually occur: both optimal, \((P)\) unbounded / \((D)\) infeasible, \((P)\) infeasible / \((D)\) unbounded, and both infeasible. This is a complete characterization.

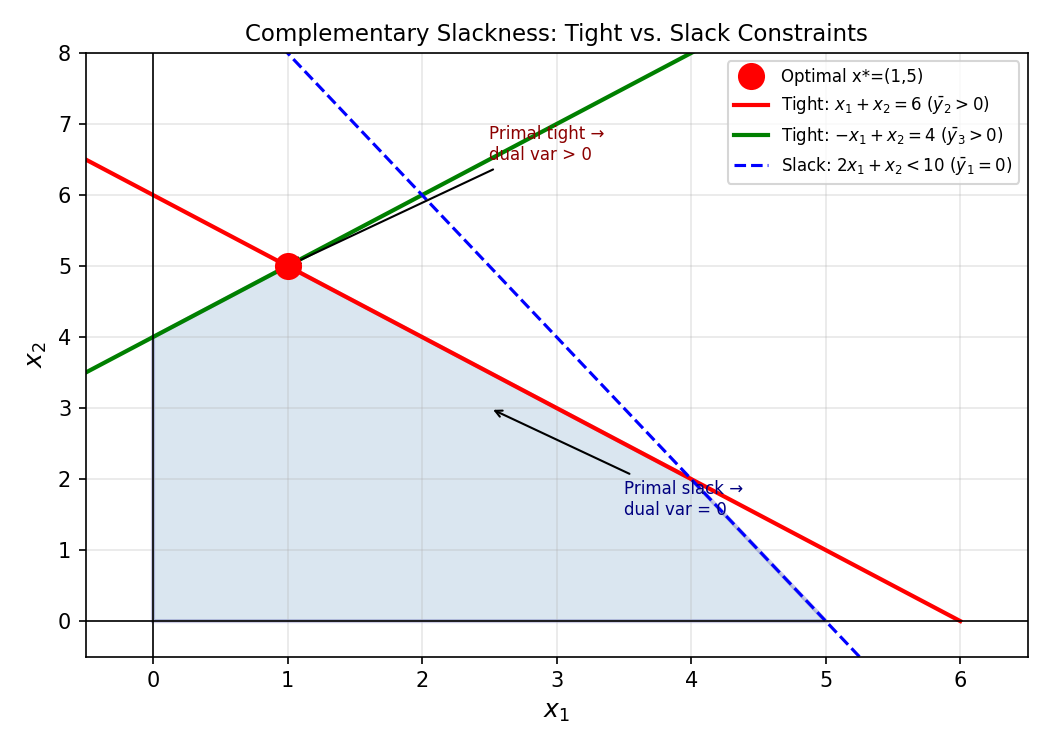

3.3 Complementary Slackness

Strong duality tells us that at optimality, the primal and dual objective values are equal. But “the two objectives are equal” is a global condition — it is hard to check without already knowing both optima. Complementary slackness translates this global equality into a system of local “on/off” conditions on individual variables and constraints. The key insight is that the equality \(c^T \bar x = b^T \bar y\) forces each term in a sum of nonnegative quantities to be exactly zero, giving sharp pointwise conditions.

In practice, complementary slackness is used in two ways. First, given a candidate pair \((\bar x, \bar y)\), one can verify optimality by checking the CS conditions — no need to solve the LP again. Second, one can use the CS conditions to find the optimal dual given a primal solution, or vice versa: fix one solution, enforce CS conditions, and solve for the other.

Complementary slackness (CS) translates the condition \(c^T \bar x = b^T \bar y\) into a system of “on/off” conditions on the variables and constraints.

Theorem 2.5.1 (Complementary Slackness Theorem). \(\bar x, \bar y\) feasible for \((P), (D)\) respectively are optimal if and only if:

- For all \(j\): \(\bar x_j = 0\) or the \(j\)-th dual constraint is tight for \(\bar y\): \((A^T \bar y)_j = c_j\).

- For all \(i\): \(\bar y_i = 0\) or the \(i\)-th primal constraint is tight for \(\bar x\): \((A\bar x)_i = b_i\).

The proof extracts CS from the “sandwich” equality in weak duality: the sum of nonneg terms equals zero, so every term must be zero individually. This “each term is zero” condition is exactly the complementary slackness. In practice, CS conditions allow us to verify optimality of a claimed solution by checking a list of “either-or” conditions — each variable is either zero, or its corresponding dual constraint is tight.

The definitions of valid inequalities, cones, and active constraints are the vocabulary of duality geometry. A valid inequality is any constraint that every feasible point satisfies; dual variables generate valid inequalities by taking nonneg combinations of the primal constraints. Active constraints are the ones “touching” the current point — the boundary the point rests against. These concepts connect the algebra of CS conditions to the geometry of extreme points.

Definition 2.5.1 (Valid Inequality). We say that an inequality \(\alpha^T x \le \beta\), \(\alpha, x \in \mathbb{R}^n\), \(\beta \in \mathbb{R}\), is valid for a set \(S \subseteq \mathbb{R}^n\) if \(\alpha^T \bar x \le \beta\) for all \(\bar x \in S\).

Definition 2.5.2 (Cone). \(K \subseteq \mathbb{R}^n\) is a cone if: (1) \(0 \in K\); (2) \(\forall x \in K,\ \forall \lambda \ge 0,\ \lambda x \in K\); (3) \(\forall x, y \in K,\ x + y \in K\). For \(S \subseteq \mathbb{R}^n\), \(\mathrm{cone}(S)\) denotes the smallest cone containing \(S\).

Lemma 2.5.4. The intersection of an arbitrary family of cones is a cone.

Lemma 2.5.5. If \(S \subseteq \mathbb{R}^n\) is finite, \(S = \{a^{(1)}, \ldots, a^{(k)}\}\), then \(\mathrm{cone}(S) = \{x : x = \sum_i \lambda_i a^{(i)},\ \lambda_i \ge 0\}\).

Definition 2.5.3 (Tight/Active). We say \(\alpha^T x \le \beta\) is tight or active at \(\bar x\) if \(\alpha^T \bar x = \beta\).

Proposition 2.5.6 below is the geometric heart of LP optimality. It says a feasible point is optimal precisely when the objective direction \(c\) lies in the cone generated by the active constraint normals. Intuitively: if you stand at a corner of the feasible region and the “uphill” direction of the objective is blocked by the walls you are touching, you are at the optimum. If the objective points “outside” the cone of active walls, you can move along the boundary and improve.

Proposition 2.5.6 (Geometric Interpretation of Duality). Let \((P): \max\ c^T x\) s.t. \(Ax \le b\), and let \(\bar x\) be feasible. Let \(A^= x \le b^=\) be the constraints tight at \(\bar x\). Then \(\bar x\) is an optimal solution to \((P)\) if and only if \(c \in \mathrm{cone}(\text{rows of } A^=)\).

Proposition 2.5.8 shows that strong duality subsumes Farkas’ lemma — the two results are not independent but are part of the same duality fabric. Farkas is “strong duality for the trivial LP.”

Proposition 2.5.8 (Strong Duality Implies Farkas’ Lemma). Consider \((P): \max\ 0^T x\) s.t. \(Ax \le b,\ x \ge 0\). This is infeasible or has optimal value 0. Its dual \((D): \min\ b^T y\) s.t. \(y^T A \ge 0,\ y \ge 0\) is feasible with \(\bar y = 0\). Therefore, \((P)\) is infeasible if and only if \((D)\) is unbounded, i.e., there is a dual feasible solution with \(b^T y < 0\).

Physical interpretation: Think of each hyperplane \(a_i^T x = b_i\) as a wall in the feasible region. The objective \(c\) acts like a force on a particle. The particle reaches equilibrium (optimality) when \(c\) is balanced by normal forces from the walls it touches (tight constraints), with the normal force magnitudes being the dual variables. Walls not touching the particle exert no force, so their dual variables are zero.

3.4 Duality Table and Shadow Prices

The strong duality theorem and CS conditions extend beyond the standard SIF formulation to general LP forms. The duality table below is a mechanical rule for constructing the dual from any LP: match each primal constraint type to a dual variable type, and vice versa. This table should be memorized — it is the “grammar” of LP duality.

For general LPs the primal-dual correspondence is:

| Primal (max) | Dual (min) |

|---|---|

| \(\le\) constraint | \(\ge 0\) variable |

| \(\ge\) constraint | \(\le 0\) variable |

| \(=\) constraint | free variable |

| \(\ge 0\) variable | \(\ge\) constraint |

| \(\le 0\) variable | \(\le\) constraint |

| free variable | \(=\) constraint |

Economic interpretation: In the resource-allocation LP \(\max c^T x\) s.t. \(Ax \le b,\ x \ge 0\), where \(b_i\) is the supply of resource \(i\), the optimal dual variable \(\bar y_i\) is the shadow price — the rate of change of the optimal value with respect to an increase in \(b_i\). This follows directly from strong duality: if \(b_i \to b_i + \varepsilon\), the optimal value changes by approximately \(\varepsilon \bar y_i\).

LP equivalence is important because the simplex method, duality theory, and Farkas’ lemma are each stated for specific standard forms. Equivalence ensures that all these tools apply regardless of how the LP is originally written. Two LPs are equivalent not just when they have the same feasible region, but when certificates of optimality, infeasibility, and unboundedness translate efficiently between them.

Definition 2.6.1 (LP Equivalence). LPs \((P)\) and \((P')\) are equivalent if: (1) \((P)\) has an optimal solution iff \((P')\) does; (2) \((P)\) is infeasible iff \((P')\) is; (3) \((P)\) is unbounded iff \((P')\) is; (4) certificates of optimality, infeasibility, and unboundedness can be “easily” converted between the two problems.

The following strong duality theorem for general LPs is the culmination of all the duality theory developed so far. It applies to any LP, regardless of whether variables are free or sign-constrained, and regardless of whether constraints are equalities or inequalities. The proof reduces to the SIF case using equivalence transformations.

Theorem 2.6.4 (Strong Duality for General LPs). Let \((P), (D)\) be primal-dual LPs.

- If \((P), (D)\) both have feasible solutions, they both have optimal solutions with equal optimal objective values.

- If one of \((P), (D)\) has an optimal solution, then both do, with equal optimal objective values.

Theorem 2.6.5 (CS Conditions for General LPs). Let \((P), (D)\) be a primal-dual LP pair. Let \(\bar x\) be feasible in \((P)\) and \(\bar y\) feasible in \((D)\). Then \(\bar x, \bar y\) are optimal in their respective problems if and only if: \(\forall j \in [n],\ \bar x_j = 0\) or the \(j\)-th dual constraint is tight; \(\forall i \in [m],\ \bar y_i = 0\) or the \(i\)-th primal constraint is tight.

3.5 Geometry of Duality

The algebraic optimality conditions have a beautiful geometric interpretation. A feasible point is optimal if and only if the objective vector is “trapped” by the normals of the active constraints — it cannot point in any direction that would increase the objective while staying feasible.

Valid inequalities are constraints that every feasible point satisfies — they can be added to the LP formulation without changing the feasible region. They are also the “witnesses” in duality: a dual feasible \(y\) produces a valid inequality \(c^T x \le b^T y\) for the primal.

This says: \(\bar x\) is optimal precisely when the objective direction \(c\) lies in the cone generated by the active constraint normals — exactly the equilibrium condition described physically above. If \(c\) pointed outside this cone, we could move in the direction of \(c\) while staying feasible (by moving away from the active constraints), increasing the objective — contradicting optimality.

3.6 Game Theory and the Minimax Theorem

LP duality provides an elegant proof of one of the foundational results in game theory. A two-player zero-sum game is specified by a payoff matrix \(A \in \mathbb{R}^{m \times n}\): if the row player (Rose) plays row \(i\) and the column player (Colin) plays column \(j\), Rose pays Colin the amount \(A_{ij}\). In a zero-sum game the two players have directly opposing interests — every dollar Colin gains is a dollar Rose loses.

In a mixed strategy, each player randomizes. Rose picks a probability distribution \(p \in \Delta_m = \{p \ge 0 : \mathbf{1}^T p = 1\}\) and Colin picks \(q \in \Delta_n\). The expected payout is \(p^T A q\). Rose wants to minimize this; Colin wants to maximize it.

\[ (\text{Rose}): \quad v^* = \min_{p \in \Delta_m} \max_{j \in [n]} (Ap)_j. \]\[ \min\ v \quad \text{s.t.} \quad (Ap)_j \le v\ \forall j,\quad \mathbf{1}^T p = 1,\quad p \ge 0. \]\[ (\text{Colin}): \quad w^* = \max_{q \in \Delta_n} \min_{i \in [m]} (A^T q)_i, \]\[ \max\ w \quad \text{s.t.} \quad (A^T q)_i \ge w\ \forall i,\quad \mathbf{1}^T q = 1,\quad q \ge 0. \]The minimax theorem was regarded as a deep result when von Neumann proved it in 1928 using fixed-point theory. LP duality reduces it to a one-line argument. The common optimal value \(v^* = w^*\) is the value of the game: it is the amount Colin can guarantee receiving (by playing \(\bar q\)) and simultaneously the maximum Rose can guarantee paying (by playing \(\bar p\)). The strategies \((\bar p, \bar q)\) form a Nash equilibrium — neither player benefits from deviating unilaterally.

A striking feature is the symmetry: it does not matter who commits first. Whether Rose reveals her strategy before Colin responds, or vice versa, the equilibrium payout is the same \(v^*\). This is LP strong duality in game-theoretic language: the min-over-primal equals the max-over-dual.

Chapter 4: The Simplex Method

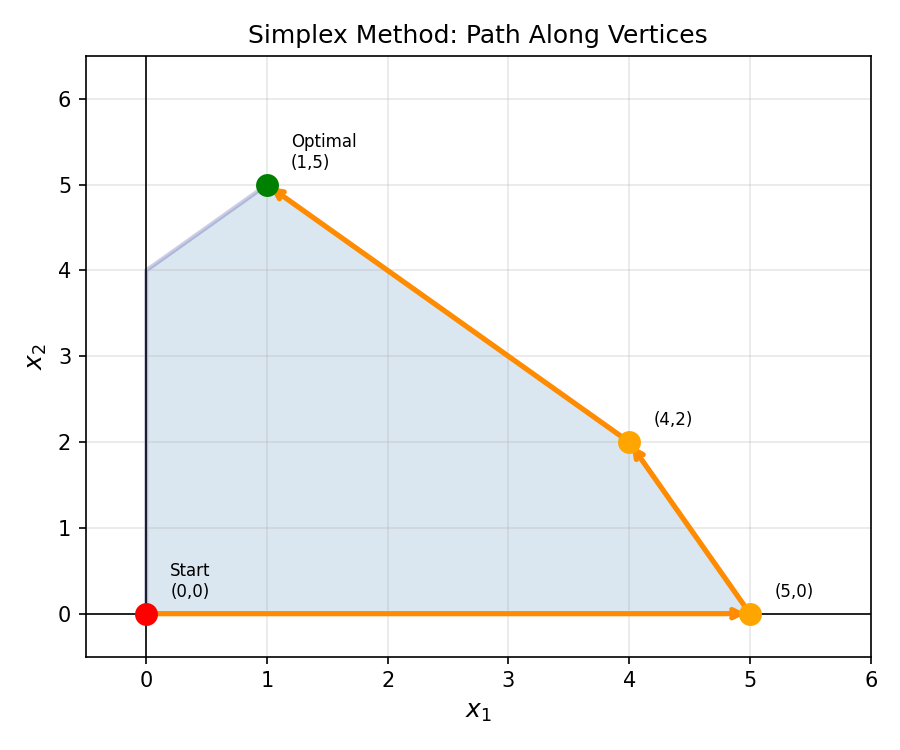

Chapter 3 developed LP duality and optimality conditions. Chapter 4 turns these conditions into a concrete algorithm. The simplex method is a vertex-walking algorithm for LPs in Standard Equality Form (SEF). It exploits the theorem that an optimal solution, if it exists, can always be found at a vertex (extreme point) of the feasible polyhedron. Each pivot moves from one vertex to an adjacent one with a strictly better objective value — a finite process that must terminate at an optimum.

4.1 Bases and Basic Feasible Solutions

Work with \((P): \max\ c^T x,\ Ax = b,\ x \ge 0\) where \(A \in \mathbb{R}^{m \times n}\), \(\operatorname{rank}(A) = m\).

The algebraic notion of a basis corresponds to the geometric notion of a vertex: each vertex of the polyhedron is determined by exactly \(m\) linearly independent tight constraints. To turn this geometric insight into an algorithm, we need an algebraic handle on vertices — that is what bases and basic feasible solutions provide.

Think of it this way. We have \(n\) variables and \(m\) equality constraints. The equality constraints form an underdetermined system: there are infinitely many solutions. A basis \(B\) of size \(m\) picks out \(m\) “special” variables (the basic variables) and forces all other variables to zero. The resulting unique solution \(x_B = A_B^{-1} b,\ x_N = 0\) is the basic solution. If it happens to be non-negative, it is a basic feasible solution — and this corresponds exactly to a vertex of the feasible polytope.

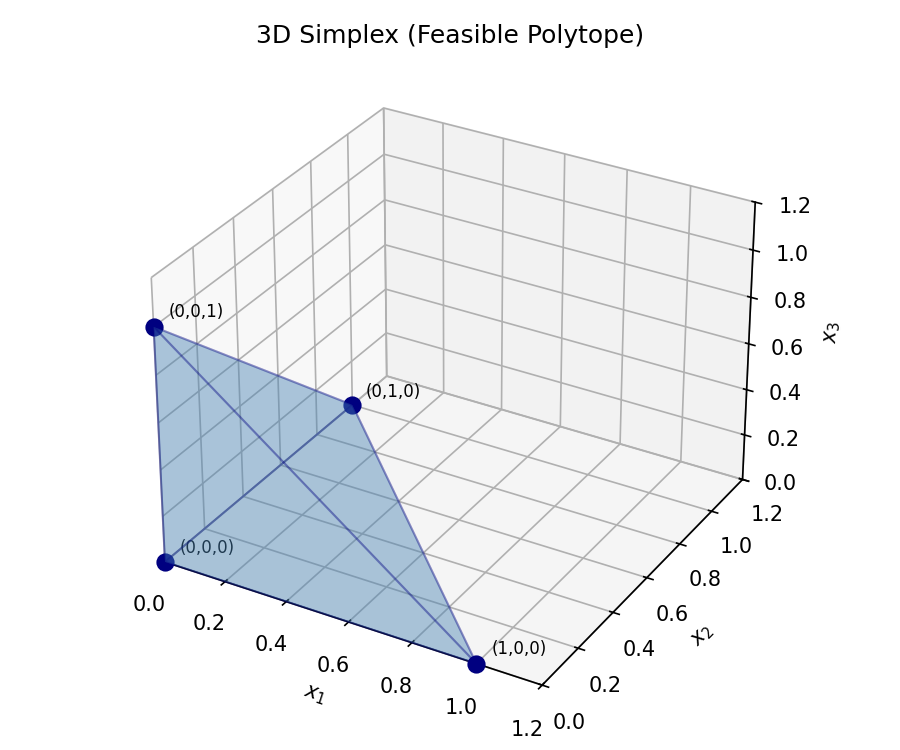

The word “simplex” in the simplex method comes from the following geometric object — in low dimensions, the simplest possible polytope with the most basic structure.

Definition 2.8.1 (Simplex). \(S \subseteq \mathbb{R}^n\) is a simplex if \(S = \mathrm{conv}(v^{(1)}, \ldots, v^{(n+1)})\) where the \(v^{(i)}\) are affinely independent.

Definition 2.8.2 (Basis). We say \(B \subseteq [n]\) is a basis of \(A\) if \(A_B\) is square and nonsingular, equivalently \(|B| = m\) and \(\mathrm{rank}(A_B) = m\). The complementary index set is \(N = [n] \setminus B\). The unique solution \(x_B = A_B^{-1} b,\ x_N = 0\) is the basic solution corresponding to \(B\).

Definition 2.8.3 (Basic Solution). \(\bar x\) is a basic solution to \(Ax = b\) if there is a basis \(B\) such that \(\bar x\) is the basic solution corresponding to \(B\).

Definition 2.8.4 (Basic Feasible Solution). A basic solution \(\bar x \ge 0\) is a basic feasible solution (BFS). Variables \(x_j,\ j \in B\) are basic variables; the others are non-basic variables.

Definition 2.8.5 (Feasible Basis). \(B \subseteq [n]\) is a feasible basis for \((P)\) if \(B\) is a basis of \(A\) and the BFS determined by \(B\) is feasible in \((P)\). A feasible basis determines a unique BFS.

A BFS corresponds to a vertex of the feasible polyhedron: the non-basic variables at zero are the “tight constraints” \(x_j = 0\), and together with \(Ax = b\) they pin down the vertex uniquely.

The equivalence between BFS, linear independence, and extreme points is the cornerstone of the simplex method’s correctness:

Theorem 2.8.1. Let \(A \in \mathbb{R}^{m \times n}\), \(\mathrm{rank}\ A = m\), \(b \in \mathbb{R}^m\), \(F = \{x \in \mathbb{R}^m : Ax = b,\ x \ge 0\}\). Suppose \(\bar x \in F\). The following are equivalent:

- \(\bar x\) is a basic feasible solution to \(Ax = b,\ x \ge 0\).

- The columns \(\{A_j : \bar x_j > 0\}\) are linearly independent.

- \(\bar x\) is an extreme point of \(F\).

This three-way equivalence confirms that the simplex method — which moves between BFS — is indeed walking along the vertices of the feasible polytope. The algebraic pivot corresponds precisely to the geometric step from one vertex to an adjacent one.

4.2 Simplex Algorithm: Pivot Steps

Each simplex pivot moves from the current BFS to a better adjacent one. The key insight is that each step decomposes the problem into: (a) which non-basic variable to “bring in” (the entering variable), and (b) which basic variable to “kick out” (the leaving variable). The entering variable is chosen to improve the objective (positive reduced cost); the leaving variable is determined by the ratio test, which ensures we do not violate feasibility by overshooting.

The reduced costs \(\bar c_N = c_N - A_N^T \bar y\) are the true “value” of increasing each non-basic variable, accounting for the indirect effect through the basic variables. A positive reduced cost means increasing that variable strictly improves the objective — it is “under-priced” relative to its shadow price. When all reduced costs are non-positive, no non-basic variable is worth increasing, and the current BFS is optimal.

The ratio test in step 7 deserves special attention. When we increase the entering variable \(x_k\) from zero, the basic variables change as \(x_B \leftarrow x_B - d x_k\). The minimum ratio \(\bar\alpha = \min\{x_j/d_j : d_j > 0\}\) tells us how much we can increase \(x_k\) before some basic variable hits zero (and must leave the basis). This is exactly the constraint that keeps all variables non-negative.

Given a feasible basis \(B\) and corresponding BFS \(\bar x\):

- Compute the dual variable \(\bar y = A_B^{-T} c_B\) (solution to \(A_B^T y = c_B\)).

- Compute reduced costs \(\bar c_N = c_N - A_N^T \bar y\).

- If \(\bar c_N \le 0\): stop — \(\bar x\) is optimal (and \(\bar y\) is dual optimal).

- Pick any entering variable \(k \in N\) with \(\bar c_k > 0\) (improving direction).

- Compute pivot direction \(d = A_B^{-1} A_k\).

- If \(d \le 0\): stop — \((P)\) is unbounded.

- Compute step size \(\bar\alpha = \min\{x_j / d_j : j \in B,\ d_j > 0\}\) and leaving variable \(l\) achieving the minimum (ratio test).

- Update basis: \(B' = B \cup \{k\} \setminus \{l\}\) and \(\bar x' = \bar x + \bar\alpha \bar d\).

Input: \((A, b, c)\) defining (P) in SEF; initial feasible basis \(B\) with BFS \(\bar x\).

Loop:

- Solve \(A_B^T \bar y = c_B\). Compute \(\bar c_N = c_N - A_N^T \bar y\).

- If \(\bar c_N \le 0\): return optimal \((\bar x, \bar y)\).

- Pick \(k \in N\) with \(\bar c_k > 0\) (e.g., smallest index: Bland's rule).

- Solve \(A_B d = A_k\).

- If \(d \le 0\): return unbounded.

- Compute \(\bar\alpha = \min\{x_j/d_j : j \in B, d_j > 0\}\); let \(l\) achieve the min.

- Update: \(B \leftarrow B \cup \{k\} \setminus \{l\}\); \(\bar x \leftarrow \bar x + \bar\alpha \bar d\).

- Go to step 1.

Correctness of a pivot step. The three key properties of each non-degenerate pivot are captured by three claims:

Claim 1: the direction \(d\) satisfies \(c^T d < 0\). \textit{Proof}: \(c^T d = \bar y^T A^= d = \bar y^T e_j = \bar y_j < 0\), where \(j\) is the leaving constraint index with \(\bar y_j < 0\), and \(e_j\) is the \(j\)th standard basis vector.

Claim 2: for sufficiently small \(\varepsilon > 0\), the point \(\tilde x + \varepsilon d\) is feasible. \textit{Proof}: tight constraints remain satisfied (\(A^= (\tilde x + \varepsilon d) = b^= + \varepsilon A^= d \ge b^=\) since the pivot step ensures the entering constraint activates), and slack constraints remain satisfied by continuity.

Claim 3: after the pivot, the new point \(\tilde x + \bar\lambda d\) is an extreme point with strictly better objective. \textit{Proof}: it is a BFS by Proposition 2.8.2, and \(c^T(\tilde x + \bar\lambda d) = c^T \tilde x + \bar\lambda c^T d < c^T \tilde x\) since \(\bar\lambda > 0\) and \(c^T d < 0\) by Claim 1.

Why does the simplex method improve the objective? The objective rewrites as:

\[ c^T x = c_B^T A_B^{-1} b + \sum_{j \in N} \bar c_j x_j. \]The constant term is the current value. Since \(\bar c_k > 0\) and we increase \(x_k\), the objective strictly increases (when \(\bar\alpha > 0\)).

4.3 Termination and Degeneracy

The simplex method must terminate because there are only finitely many bases. But degeneracy — where multiple bases correspond to the same BFS — can cause the algorithm to cycle indefinitely without making progress.

The next two propositions confirm that each step of the simplex method maintains the invariants that make it work: after a pivot, the new solution is still a BFS (Proposition 2.8.2), and when no pivot can improve the objective, the current solution is optimal (Proposition 2.8.3). Together they establish the correctness of the algorithm.

Proposition 2.8.2. If the pivot step does not result in an unbounded direction, then the updated solution \(x'\) is a BFS with basis \(B' = B \cup \{k\} \setminus \{l\}\).

Proposition 2.8.3. If \(\bar c_N \le 0\), then the current BFS \(\bar x\) is optimal in \((P)\).

Proposition 2.8.3 is the “certificate of optimality” half of the simplex method: when the algorithm halts with \(\bar c_N \le 0\), the dual solution \(\bar y\) computed in step 1 is dual feasible, and by construction the primal-dual pair \((\bar x, \bar y)\) satisfies complementary slackness. Strong duality then confirms they are both optimal. The simplex method does not merely find a solution — it simultaneously finds a proof of optimality.

Theorem 2.8.4. The Simplex Method applied to LP problems in SEF with a basic feasible solution terminates in at most \(\binom{n}{m}\) iterations provided that \(\bar\alpha > 0\) in each iteration. When it stops, it gives either a certificate of optimality or unboundedness.

When \(\bar\alpha > 0\), every pivot strictly improves the objective, so no basis can be revisited. The bound \(\binom{n}{m}\) is the number of possible bases — an exponential bound, but one that is rarely approached in practice.

Definition 2.8.6 (Degenerate). A BFS \(\bar x\) determined by basis \(B\) of \(A\) is degenerate if \(\bar x_i = 0\) for some \(i \in B\). When degeneracy occurs, \(\bar\alpha = 0\) and the algorithm may not make progress (it may cycle).

Definition 2.8.7 (Nondegenerate). An LP problem \((P)\) with constraints \(Ax = b,\ x \ge 0\) is nondegenerate if every basis of \(A\) is nondegenerate.

Degeneracy occurs when more than \(m\) constraints are tight at a vertex — the vertex is an intersection of “too many” hyperplanes. Geometrically, degenerate vertices look like regular vertices but may cause the algorithm to walk in circles through different bases all representing the same vertex.

Bland’s Rule: Choose the entering variable \(k\) and leaving variable \(l\) both with the smallest index among all eligible candidates. This guarantees termination.

Bland’s rule breaks the symmetry that causes cycling: by imposing a total order on variable choices, it ensures the algorithm always makes a definite “progress” signal even when the objective does not improve.

4.4 Lexicographic Simplex and Two-Phase Method

The degeneracy problem — stalling at a degenerate vertex — and the initialization problem — finding an initial BFS — are the two practical obstacles to running the simplex method on a real LP. The lexicographic pivot rule addresses the former; the two-phase method addresses the latter.

An elegant alternative to Bland’s rule is perturbation: slightly modify the right-hand side \(b\) to eliminate all degeneracy. The perturbed LP has no degenerate vertices, so the simplex method terminates by the basic finite termination theorem.

To handle degeneracy more elegantly, perturb \(b\) formally by replacing \(b_i\) with \(b_i - \varepsilon^i\), where \(\varepsilon\) is a formal indeterminate and computations take place in the polynomial ring \(\mathbb{R}[\varepsilon]\) with the ordering: \(p(\varepsilon) < q(\varepsilon)\) if \(p(\varepsilon') < q(\varepsilon')\) for all sufficiently small \(\varepsilon' > 0\). The lexicographic simplex compares ratio-test candidates under this polynomial ordering, breaking all ties. The perturbed LP is automatically nondegenerate, guaranteeing strict improvement at every step.

Proposition 2.9.1. The perturbed LP problem \((P')\) (obtained by replacing \(b_i\) with \(b_i - \varepsilon^i\)) is nondegenerate.

Theorem 2.9.2. The Lexicographic Simplex Method applied to \((P)\) with a starting BFS terminates in at most \(\binom{n}{m}\) iterations.

The initialization problem is the other practical challenge: the simplex method requires a starting BFS, but none may be obvious from the LP’s original formulation. The two-phase method is a beautiful resolution: solve a modified LP (Phase I) whose optimal value tells us whether the original LP is feasible, and whose optimal solution gives us the starting BFS for Phase II. The auxiliary LP always has an obvious starting BFS — the artificial variables \(s_i\) are set to \(b_i\) (assuming \(b \ge 0\) after possibly flipping rows).

To find an initial feasible basis when none is obvious, the Two-Phase Method introduces artificial variables \(s_1, \ldots, s_m\) and solves:

\[ \text{(P-AUX):}\ \max\ -\mathbf{1}^T s \quad \text{s.t.}\ Ax + Is = b,\ x,s \ge 0. \]The basis \(B = \{n+1,\ldots,n+m\}\) gives an immediate BFS with \(s = b \ge 0\). If the optimal value of (P-AUX) is zero, then a feasible basis for (P) has been found; otherwise (P) is infeasible.

Proposition 2.10.1. \((\text{P-AUX})\) has an optimal objective value equal to zero if and only if \((P)\) has a feasible solution.

The simplex method not only finds a primal optimal solution \(\bar x\) — it simultaneously computes a dual optimal solution \(\bar y = A_B^{-T} c_B\). At termination, the pair \((\bar x, \bar y)\) satisfies complementary slackness. The abridged CS theorem below gives a stronger form of this: not only is one side of each CS pair zero, but their sum is strictly positive. This “complementary” relationship is exact — no partial zeroes are possible.

Theorem 2.11.1 (Abridged Complementary Slackness). Consider \((P): \max\ c^T x,\ Ax = b,\ x \ge 0\) and \((D): \min\ b^T y,\ A^T y \ge c\). Suppose \((P)\) has an optimal solution. Then \((P), (D)\) have optimal solutions \(\bar x, \bar y\) such that for all \(j = 1, 2, \ldots, n\):

\[ \bar x_j (A^T \bar y - c)_j = 0 \quad \text{and} \quad \bar x_j + (A^T \bar y - c)_j > 0. \]This is a strengthening of ordinary CS: not only does one of the two quantities vanish, but their sum is strictly positive — so exactly one is zero and the other is strictly positive.

Chapter 5: Applications and Special Structure

With the theory and algorithm of LP developed, Chapter 5 turns to applications: combinatorial optimization (matchings, flows, games), special matrix structure (totally unimodular matrices), and extensions to nonlinear optimization (convex programs, KKT conditions). The common thread is that linear programming, when applied to problems with special structure, yields much more than just a solution — it yields structural insight, integer optima automatically, and a bridge to combinatorial min-max theorems.

5.1 LP Unboundedness and Infeasibility (Geometric)

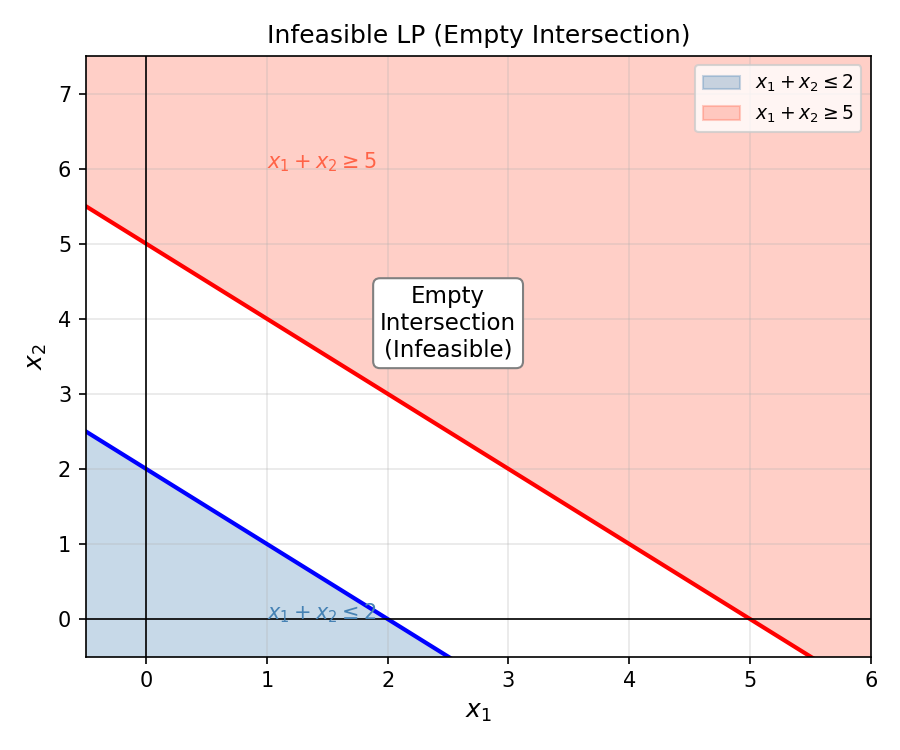

An LP can fail in two ways. Geometrically:

- Infeasible: the constraint halfspaces have empty intersection.

- Unbounded: the feasible region extends infinitely in the direction of improving objective.

5.2 Combinatorial Optimization and Integer Programs

Many optimization problems arising from combinatorics — who matches to whom, which path is shortest, how much flow fits through a network — are naturally formulated as integer programs. The key insight is that for many such problems, the LP relaxation (dropping integrality) automatically produces integer solutions, making them solvable in polynomial time despite their integer formulation.

Definition 3.1.1 (Graph). \(G = (V, E)\) is a graph where \(E\) is a subset of pairs \(\{u, v\}\) with \(u \ne v \in V\). We take graphs to be simple by default.

Definition 3.1.2 (Matching). A matching in a graph \(G\) is a subset \(M \subseteq E\) such that every vertex \(v \in V(G)\) is incident with at most one edge in \(M\).

Definition 3.1.3 (Perfect Matching). A matching in \(G\) is perfect if it is incident with every vertex (i.e., its cardinality is exactly \(|V|/2\)).

Definition 3.1.4 (Maximum Weight Matching Problem). Let \(w_e \in \mathbb{R}\) for every \(e \in E\). The maximum weight matching problem in \(G\) is to find a matching \(M\) such that \(\sum_{e \in M} w_e\) is maximized.

Definition 3.1.5 (Maximum Weight Perfect Matching Problem). The maximum weight matching problem with the added constraint that the matching must be perfect.

Definition 3.1.6 (Bipartite). A graph \(G = (V, E)\) is bipartite if there is a bipartition \(A, B\) of \(V\) such that every edge \(\{u,v\} \in E\) has \(u \in A,\ v \in B\) or vice versa.

Definition 3.1.7 (Complete). A graph is complete if \(\{u, v\} \in E\) for every pair \(u, v \in V\).

Many combinatorial problems (matching, shortest path, max-flow) are naturally formulated as integer programs. The LP relaxation drops the integrality constraint:

\[ (\text{IP})\ \max c^T x,\ Ax \le b,\ x \in \mathbb{Z}^n \quad \le \quad (\text{LP})\ \max c^T x,\ Ax \le b. \]The LP optimal value is an upper bound on the IP optimal value. Equality holds when the LP solution is automatically integral — a situation governed by totally unimodular matrices.

The integer hull captures all integer points that can be convexly combined — it is the “best LP relaxation” of the integer program.

The integer hull is the most important polyhedral object in integer programming. It is the convex hull of all integer feasible points — the “LP relaxation of the LP relaxation” that is tight for integer solutions. If we knew the integer hull explicitly (as a set of linear inequalities), we could solve any IP over these constraints as an LP. The challenge of IP is precisely that the integer hull may require exponentially many inequalities to describe. Theorems 3.2.1 and 3.2.2 confirm that the integer hull is always a polyhedron — it is a well-structured object, even if its description is complex.

Definition 3.2.1 (Integer Hull). If \(P := \{x \in \mathbb{R}^n : Ax \le b\}\), the integer hull of \(P\) is \(P_I := \mathrm{conv}(P \cap \mathbb{Z}^n)\).

Theorem 3.2.1. Let \(A \in \mathbb{R}^{m \times n}\), \(b \in \mathbb{R}^m\), \(S := \{x \in \mathbb{Z}^n : Ax \le b\}\) be bounded. Then \(\mathrm{conv}(S)\) is a polytope.

Theorem 3.2.2. Let \(A \in \mathbb{Q}^{m \times n}\), \(b \in \mathbb{Q}^m\), \(P := \{x \in \mathbb{Z}^n : Ax \le b\}\). Then \(P\) is a polyhedron.

Corollary 3.2.2.1. Let \(A \in \mathbb{Q}^{m \times n}\), \(b \in \mathbb{R}^m\), \(S := \{x \in \mathbb{Z}^n : Ax \le b\}\). Then \(\mathrm{conv}(S)\) is a polyhedron.

When \(P = P_I\), the LP and the IP have the same optimal value for every objective — the LP is an “exact” relaxation. This is the ideal situation, and it holds whenever \(A\) is totally unimodular.

Theorem 3.2.3. Let \(A \in \mathbb{Q}^{m \times n}\), \(b \in \mathbb{Q}^m\), \(P := \{x \in \mathbb{R}^n : Ax \le b\}\) nonempty and bounded. Then \(P = P_I\) if and only if for all \(c \in \mathbb{Z}^n\), the LP \(\max\ c^T x,\ x \in P\) has an integer optimal value.

Theorem 3.2.4 collects five equivalent characterizations of the condition \(P = P_I\). This five-way equivalence is remarkable: it shows that the condition “the LP relaxation always has an integral optimal solution” is equivalent to “every extreme point is integral” (a structural condition), “the LP optimal is always integral” (an objective-value condition), and even “the dual LP optimal is always integral” (a condition on the dual). Each equivalence opens a different avenue for proving or refuting \(P = P_I\). In practice, the most useful direction is (1) \(\Rightarrow\) (3): if we can verify that every extreme point of \(P\) is integral (e.g., by showing \(A\) is TU), then we conclude the LP always has an integral optimal solution.

Theorem 3.2.4. Let \(A \in \mathbb{Q}^{m \times n}\), \(b \in \mathbb{Q}^m\). Suppose \(P := \{x \in \mathbb{R}^n : Ax \le b\}\) is nonempty and bounded. The following are equivalent:

- \(P = P_I\)

- Every extreme point of \(P\) is in \(\mathbb{Z}^n\)

- \(\forall c \in \mathbb{R}^n\), the LP \(\max\{c^T x : Ax \le b\}\) has an optimal solution \(\bar x \in \mathbb{Z}^n\)

- \(\forall c \in \mathbb{Z}^n\), \(\max\{c^T x : Ax \le b\} \in \mathbb{Z}\)

- \(\forall c \in \mathbb{Z}^n\), \(\min\{b^T y : A^T y = c,\ y \ge 0\} \in \mathbb{Z}\)

5.3 Totally Unimodular Matrices

The condition that makes LP relaxations automatically integral is total unimodularity — a condition on the constraint matrix \(A\) that ensures all bases have determinant \(\pm 1\), forcing Cramer’s rule to produce integers.

Definition 3.3.1 (Submatrix). Let \(I \subseteq [m],\ J \subseteq [n]\); then \(A_{IJ}\) is the submatrix of \(A\) with rows indexed by \(I\) and columns by \(J\): \([a_{ij}]_{i \in I, j \in J}\).

Definition 3.3.2 (Totally Unimodular). \(A \in \mathbb{Z}^{m \times n}\) is totally unimodular (TU) if for every \(k \in [m]\), the determinant of every \(k \times k\) submatrix of \(A\) is in \(\{-1, 0, 1\}\). In particular, every entry of \(A\) must lie in \(\{-1, 0, 1\}\).

Total unimodularity is a strong algebraic condition: not just the full matrix, but every square submatrix must have determinant in \(\{-1, 0, 1\}\). Yet many natural constraint matrices from combinatorics satisfy it.

Theorem 3.3.3 establishes that total unimodularity is equivalent to two other properties: determinant \(\pm 1\) for every basis, and integer-valued inverse for every basis. The equivalence (1) \(\Leftrightarrow\) (3) is immediate from Cramer’s rule: if \(\det A_B = \pm 1\), then \(A_B^{-1} = \frac{\text{adj}(A_B)}{\det A_B}\) has integer entries. The equivalence (2) \(\Leftrightarrow\) (3) is the crucial LP statement: integer right-hand sides and integer inverse bases produce integer extreme points.

Theorem 3.3.3. Let \(A \in \mathbb{Z}^{m \times n}\), \(\mathrm{rank}\ A = m \le n\). The following are equivalent:

- \(|\det A_B| = 1\) for every basis \(B\) of \(A\).

- Every extreme point of \(\{x \in \mathbb{R}^n : Ax = b,\ x \ge 0\}\) is in \(\mathbb{Z}^n\) for every \(b \in \mathbb{Z}^m\).

- \(A_B^{-1} \in \mathbb{Z}^{m \times m}\) for all bases \(B\) of \(A\).

Proposition 3.3.4 lists the closure properties of TU matrices — operations that preserve total unimodularity. These are extremely useful in practice: they tell us that we can augment a TU matrix with an identity block, take its transpose, or scale its rows by \(\pm 1\), and the result is still TU. This allows us to recognize TU structure even when it is disguised by routine LP transformations such as adding slack variables or switching to SEF.

Proposition 3.3.4. Let \(A \in \{-1, 0, 1\}^{m \times n}\). The following are equivalent: (i) \(A\) is TU; (ii) \(A^T\) is TU; (iii) \([A \mid I]\) is TU; (iv) \(\begin{pmatrix}A \\ I\end{pmatrix}\) is TU; (v) \([A \mid A]\) is TU; (vi) \(DA\) is TU for any diagonal matrix \(D\) with \(\pm 1\) entries.

Theorems 3.3.5 and 3.3.6 are the payoff of TU for LP in practice. They say that if \(A\) is TU and \(b\) is integral, then the LP over \(\{Ax \le b\}\) or \(\{Ax \le b, x \ge 0\}\) automatically has integral extreme points — no matter what the objective is. This means: solve the LP (ignoring integrality), and the optimal solution you get is already integral. No branch-and-bound, no cutting planes, no extra work.

Theorem 3.3.5. Let \(A \in \{-1, 0, 1\}^{m \times n}\) be TU and \(b \in \mathbb{Z}^m\). Then every extreme point of \(\{x \in \mathbb{R}^n : Ax \le b\}\) is in \(\mathbb{Z}^n\).

Theorem 3.3.6. Let \(A \in \{-1, 0, 1\}^{m \times n}\) be TU and \(b \in \mathbb{Z}^m\). Then every extreme point of \(\{x \in \mathbb{R}^n : Ax \le b,\ x \ge 0\}\) is in \(\mathbb{Z}^n\).

Now the critical question is: which matrices actually arise in practice are TU? The two most important examples come from graphs. Theorem 3.3.7 says the node-arc incidence matrix of any directed graph is TU — this single fact implies that network flow problems (shortest path, maximum flow, minimum cost flow) all have integral LP relaxations. The corollary then gives us the bipartite case, which drives the matching theory.

Theorem 3.3.7. The node-arc incidence matrix of every directed graph is TU.

Corollary 3.3.8.1. The incidence matrix of every undirected bipartite graph is TU.

The proof is a simple application of Cramer’s rule: inverse of an integer matrix with determinant \(\pm 1\) is another integer matrix. TU makes every basis inversion produce an integer result, so every extreme point is integral.

Key examples of TU matrices:

The node-arc incidence matrix of any directed graph is TU. Each column (arc) has exactly one \(+1\) (head) and one \(-1\) (tail), so any square submatrix has determinant in \(\{-1, 0, 1\}\) (by induction on size).

The incidence matrix of any bipartite graph is TU. Proof: orient all edges from one side of the bipartition to the other to get a directed graph; its incidence matrix is TU by (1), and the undirected incidence matrix differs only by row signs.

These facts are tight: the incidence matrix of an odd cycle (non-bipartite graph) is not TU. The determinant of the incidence matrix of a triangle is \(\pm 2\), violating TU. This is why bipartiteness is necessary for König’s theorem: non-bipartite graphs have incidence matrices that are not TU, so their LP relaxations may have fractional optima.

5.4 König’s Theorem

König’s theorem — first proved in 1916 by purely combinatorial means — follows from total unimodularity as an almost immediate corollary. The LP lens makes the underlying structure transparent. What makes this theorem striking is that it asserts the equality of two quantities that are trivially in a one-sided relationship: a matching and a vertex cover cannot have the same size unless both are optimal. König says they always can be made equal in bipartite graphs — a min-max equality that has no analog in general graphs.

The key insight here is that maximum matching is a primal LP (maximize the number of edges selected, subject to each vertex being covered at most once), and minimum vertex cover is its dual (minimize the number of vertices selected, subject to each edge being covered by at least one selected vertex). Strong LP duality gives equal objective values; TU of the bipartite incidence matrix gives integral solutions. These two facts together prove König in two lines.

Definition 3.4.1 (Vertex Cover). A set \(C \subseteq V(G)\) such that every edge is incident with some vertex in \(C\).

Theorem 3.4.1 (König’s Theorem, 1916). In every bipartite graph, the cardinality of a maximum matching equals the cardinality of a minimum vertex cover.

The proof is beautifully concise: the two facts (TU gives integrality; LP duality gives equal optima) combine to prove a non-trivial combinatorial equality in two lines. This is the power of the LP/TU framework: combinatorial theorems become consequences of algebraic structure.

Theorem 3.8.1 is a clean dichotomy theorem for perfect matchings in balanced bipartite graphs: either the graph has a perfect matching, or it has a “deficient set” — a subset of one side that is too small in the neighborhood to be matched. This is the contrapositively stated form of Hall’s theorem, and it gives an explicit obstruction certificate whenever a perfect matching does not exist.

Theorem 3.8.1. A bipartite graph \(G = (V, E)\) with bipartition \((W, J)\), \(|W| = |J|\), either (i) has a perfect matching, or (ii) has a deficient set.

5.5 Regular Bipartite Graphs, Edge Coloring, and the Card Puzzle

The LP relaxation framework is particularly powerful for regular graphs. An \(r\)-regular graph has every vertex with degree exactly \(r\). The key observation is that for regular bipartite graphs, an explicit fractional solution lies in the perfect matching polytope — and TU forces integer extreme points.

Theorem 5.5.1. Every \(r\)-regular bipartite graph has a perfect matching.

This proof is a template for the LP/TU proof machine: exhibit a fractional point in the polytope, invoke TU for integrality of extreme points, and conclude by convexity. No alternating-path argument is needed.

Corollary 5.5.2. Every \(r\)-regular bipartite graph (or multigraph) is \(r\)-edge-colorable.

The same result holds for multigraphs by the same proof, since the incidence matrix of a bipartite multigraph is also TU.

The card puzzle. Take a standard 52-card deck and deal all cards into a \(4 \times 13\) grid. Can you always rearrange the cards within each column so that every row contains each of the 13 ranks exactly once? Yes. Model this as a 13-regular bipartite multigraph \(G\) with parts \(\{\text{rows } 1,2,3,4\}\) and \(\{\text{ranks } A, 2, \ldots, K\}\): for each card in the grid, add an edge between its row and its rank. Since each row has 13 cards (one per column) and each rank has 4 cards (one per suit), \(G\) is 13-regular on the row side and 4-regular on the rank side. A proper 4-edge-coloring of \(G\) assigns each card a column slot (1–13) such that each row has all 13 ranks and each rank appears once in each row — exactly the desired arrangement. By Corollary 5.5.2, such a coloring exists.

5.6 Maximum Flow — Minimum Cut

The Max-Flow Min-Cut theorem admits a proof identical in structure to König’s, once we observe that the flow LP has a TU constraint matrix. This unifies two seemingly different theorems under the same algebraic roof. Notice how the “LP + TU” framework becomes a proof machine: once you identify the right LP formulation and verify TU of the constraint matrix, you get (a) an integral optimal solution automatically, and (b) via LP duality, a min-max equality theorem. The Max-Flow Min-Cut theorem, König’s theorem, and Hall’s theorem are all instances of this same machine applied to different combinatorial structures.

Definition 3.5.1 (Cut). For a directed graph \(\tilde G = (V, \tilde E)\) and \(U \subseteq V\), the cut is \(\delta(U) := \{ij \in \tilde E : i \in U,\ j \notin U\}\).

Definition 3.5.2 (st-Cut). Let \(U \subseteq V\) with \(s \in U,\ t \notin U\). Then \(\delta(U)\) is an st-cut.

Definition 3.5.3 (Cut Capacity). The capacity of an st-cut \(W\) is \(\sum_{ij \in \delta(W)} u_{ij}\).

Theorem 3.5.1. The maximum st-flow is equal to the minimum st-cut (capacity).

Theorem 3.5.2 (Maximum-Flow Minimum-Cut). Let \(\tilde G = (V, \tilde E)\) be a directed graph with two distinct nodes \(s, t \in V\) and \(u \in \mathbb{R}^{\tilde E}_+\). The value of the maximum flow in \(\tilde G\) equals the capacity of the minimum st-cut. Furthermore, if \(u \in \mathbb{Z}^{\tilde E}_+\), there exists a maximum flow that is integral.

The proof is identical in structure to König’s: the flow LP has a TU constraint matrix (the node-arc incidence matrix of the directed graph), so the LP and its dual both have integral optima; the dual LP encodes the minimum cut problem. The integrality of the maximum flow is a free consequence — it follows from TU rather than from the Ford-Fulkerson algorithm.