CS 480: Introduction to Machine Learning

Pascal Poupart, Yao-Liang Yu, Gautam Kamath

Estimated study time: 3 hr 35 min

Table of contents

Chapter 1: Introduction to Machine Learning

What Is Machine Learning?

Computer science has historically revolved around one central activity: writing instructions that tell a computer exactly what to do. From algorithms and data structures to compilers and operating systems, the programmer must think through every step and encode it explicitly. This paradigm works brilliantly for well-understood problems, but it breaks down when we face tasks where we cannot articulate the rules. How do you write a program to translate between languages, recognise faces in photographs, or predict tomorrow’s weather from sensor data? Decades of effort in rule-based systems showed that manually specifying these rules simply does not scale.

Machine learning is a fundamentally different paradigm. Rather than programming a computer with explicit instructions, we provide it with examples and let it discover the underlying patterns. As Arthur Samuel put it in 1959, machine learning is “the field of study that gives computers the ability to learn without being explicitly programmed.” The computer receives data, searches through a space of possible functions, and finds one that explains the data well enough to generalise to new, unseen inputs. We are not writing the program directly; we are writing a meta-program that learns the program from data.

Consider machine translation. Instead of encoding thousands of grammar rules and exception tables, modern systems ingest millions of parallel sentence pairs and learn to map one language onto another. The same story repeats across domains: in computer vision, a convolutional neural network trained on labelled images outperforms any hand-crafted edge detector; in speech recognition, end-to-end models trained on thousands of hours of audio surpass traditional phoneme-based pipelines. In each case, the key ingredient is data, not hand-coded rules.

The landscape of machine learning in 2024–2026 has been reshaped by large language models such as GPT-4, Claude, and Gemini, which demonstrate that scaling data and compute can yield remarkably general capabilities. Diffusion models generate photorealistic images, reinforcement learning from human feedback aligns model behaviour with human preferences, and multimodal architectures process text, images, and audio within a single framework. Understanding the foundational algorithms covered in these notes is essential for making sense of these modern advances.

Types of Learning

Machine learning problems are traditionally grouped into three families, distinguished by the nature of the feedback available during training.

Supervised learning is the setting where we have a dataset of input-output pairs \}_{i=1}^n). The goal is to learn a function \ that maps inputs to outputs so that \ \approx y) on unseen test data. When the output is categorical — for instance, “spam” or “not spam” — the problem is called classification. When the output is a continuous value — for instance, predicting a house price — it is called regression. Much of the first half of this course deals with supervised learning.

Unsupervised learning operates on data without labels. Given only inputs , the goal is to discover structure: clusters of similar points, a low-dimensional representation, or a generative model of the data distribution. Clustering algorithms like -means and density estimation methods like Gaussian mixture models fall here.

Reinforcement learning addresses sequential decision-making. An agent interacts with an environment, receives rewards, and must learn a policy that maximises cumulative reward over time. Applications range from game playing to robotics and recommendation systems.

The Learning Pipeline

A typical supervised learning pipeline proceeds through several stages. First, raw data is collected and cleaned. Next, features are extracted: the raw inputs are transformed into a numerical representation \ suitable for the learning algorithm. A model is chosen — for example, linear functions, decision trees, or neural networks. The model’s parameters are fit to the training data by minimising some loss function. Finally, the learned model is evaluated on held-out test data to estimate its performance on unseen inputs.

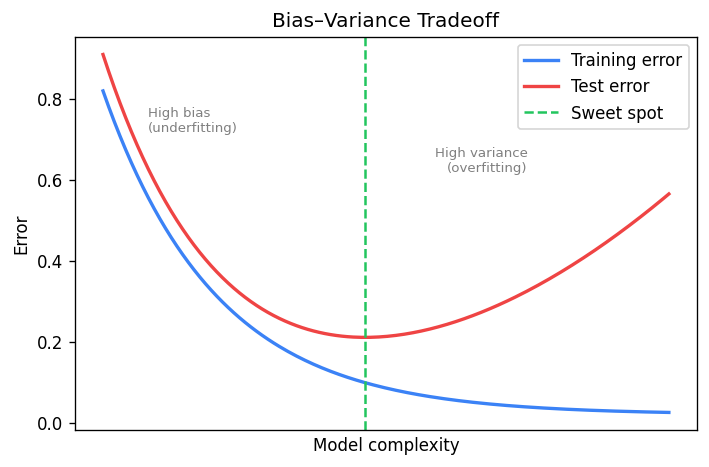

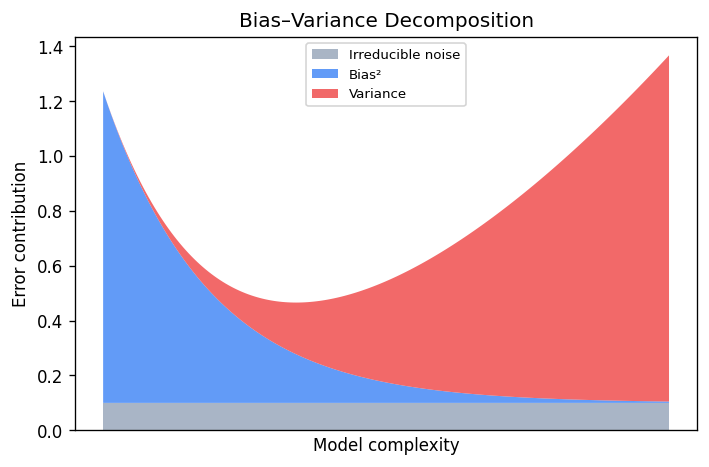

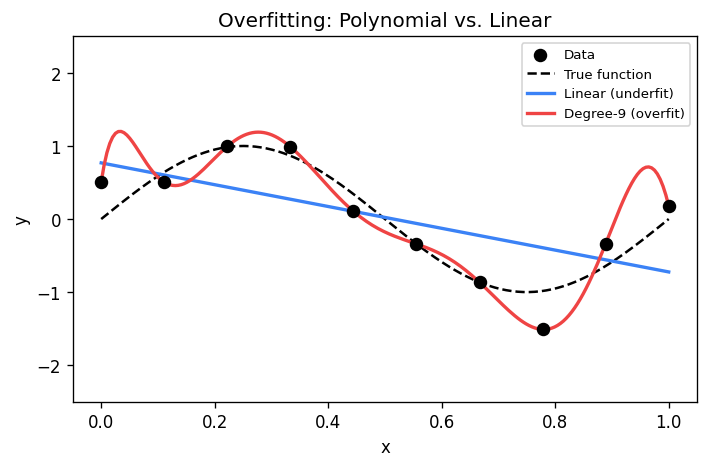

The choice of hypothesis class is critical. If we restrict ourselves to linear functions when the true relationship is nonlinear, no amount of data will save us. If we use a model that is too flexible, we risk fitting noise rather than signal. This tension is formalised by the bias-variance tradeoff.

The Bias-Variance Tradeoff

Suppose the true relationship between inputs and outputs is \ + \epsilon), where \ is noise with mean zero. We train a model \ on a finite sample. For a given test point , the expected prediction error decomposes as:

\[ \mathbb{E}[ - y)^2] = \underbrace{\bigl] - f\bigr)^2}_{\text{bias}^2} + \underbrace{\mathbb{E}\bigl[ - \mathbb{E}[\hat{f}])^2\bigr]}_{\text{variance}} + \underbrace{\mathbb{E}[\epsilon^2]}_{\text{irreducible noise}} \]The bias measures how far the average prediction is from the truth. A model with high bias is too simple; it underfits. The variance measures how much the predictions fluctuate across different training sets. A model with high variance is too complex; it overfits. The irreducible noise is a property of the problem itself and cannot be reduced by any model.

Much of machine learning is about navigating this tradeoff. Regularisation, cross-validation, and careful model selection are all tools for finding the sweet spot where the sum of squared bias and variance is minimised.

Why Machine Learning Matters

Machine learning is no longer a niche academic pursuit. It powers web search, recommendation engines, autonomous vehicles, drug discovery, protein structure prediction, and countless other applications. The explosive growth of data and compute has made previously intractable problems solvable. Every student of computer science should understand the fundamental algorithms that underpin this revolution — not because these exact algorithms are always deployed in production, but because they provide the conceptual vocabulary for understanding, designing, and evaluating more advanced methods.

Chapter 2: K-Nearest Neighbours

The Idea Behind KNN

Suppose you want to classify a new data point but you have no parametric model of the data. The simplest strategy is to look at the training examples that are most similar to the query and let them vote. This is the essence of the -nearest neighbours algorithm: to predict the label of a test point , find the \ training points closest to \ and return the majority label or the average target value.

KNN is a non-parametric method. Unlike linear regression or logistic regression, it does not learn a fixed-size parameter vector . Instead, it stores the entire training set and computes predictions on the fly. This makes training trivial — there is nothing to learn — but prediction expensive, since every query requires scanning the dataset.

Distance Metrics

The notion of “closest” depends on the choice of distance metric. The most common choices for \ are:

| Metric | Formula | Properties |

|---|---|---|

| Euclidean) | ^2}) | Rotation invariant, most common |

| Manhattan) | \ | Robust to outliers in single coordinates |

| Minkowski) | ^{1/p}) | Generalises both; \ is max metric |

The choice of metric can dramatically affect performance. In practice, features are often normalised so that no single dimension dominates the distance computation.

The KNN Algorithm

For classification with \ neighbours, the algorithm is:

- Given a test point , compute the distance from \ to every training point .

- Select the \ training points with the smallest distances. Call this set \).

- Return the majority vote: } \mathbf{1}[y_i = c]).

For regression, step 3 is replaced by returning the average: } y_i).

The implicit assumption is that if two feature vectors \ and \ are close, their labels \ and \ are likely the same. This is a form of smoothness assumption on the underlying data distribution.

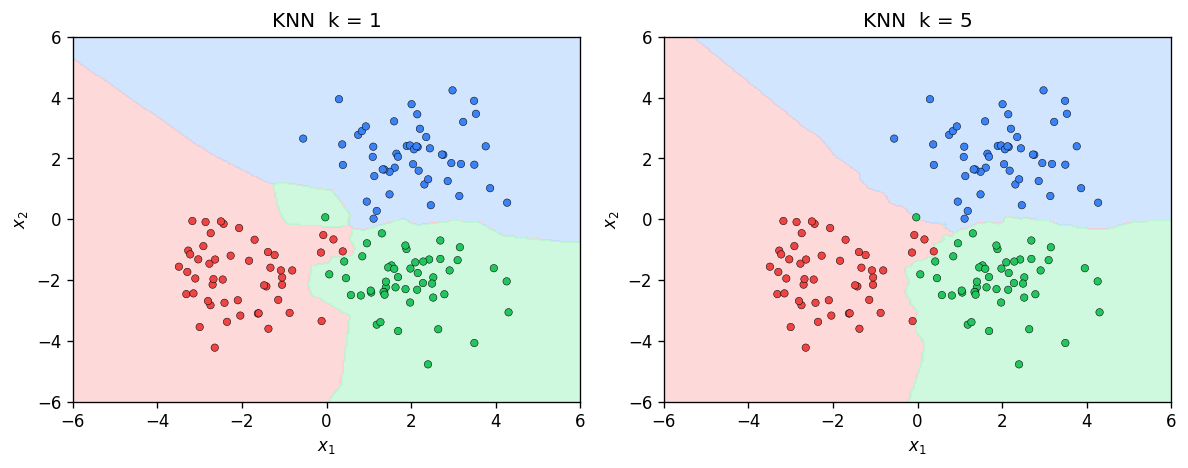

The Role of \

The hyperparameter \ controls the complexity of the model. When , the decision boundary is extremely jagged: every training point creates its own Voronoi cell, and the model memorises the training set exactly. This corresponds to high variance and low bias. As \ increases, the decision boundary becomes smoother. In the extreme case , the classifier always predicts the majority class in the entire training set — high bias, zero variance.

Choosing \ well is critical. A standard approach is to use cross-validation: split the training data into folds, evaluate different values of , and select the one that minimises validation error.

The Bayes Classifier and the Cover-Hart Theorem

To understand the theoretical properties of KNN, we need the concept of the Bayes optimal classifier. Given the true data distribution , the Bayes classifier predicts:

\[ f^* = \arg\max_c \Pr \]No classifier can achieve lower error than the Bayes classifier. The Bayes error \ is the error rate of this optimal classifier.

This is a remarkable result: with enough data, the simplest possible classifier — just copy the label of the nearest neighbour — achieves an error rate within a factor of two of the best possible. For the general -NN classifier, as both \ and , the error converges to the Bayes error.

The Curse of Dimensionality

The Cover-Hart guarantee is asymptotic: it requires . In practice, when the dimension \ is large, we may need an exponentially large number of training points to fill the space densely enough for nearest neighbours to be meaningful. This phenomenon is the curse of dimensionality.

In high dimensions, distances between points concentrate: the ratio of the distance to the nearest neighbour to the distance to the farthest neighbour approaches 1. When all points are roughly equidistant, the notion of “nearest” becomes meaningless. This is why KNN often performs poorly on raw high-dimensional data without dimensionality reduction.

Computational Considerations

Naively, classifying a single test point requires computing \ distances, each costing \), for a total of \) per query. Storing the entire dataset requires \) space. For large datasets, this is prohibitive.

Several data structures can accelerate nearest-neighbour queries. KD-trees partition the feature space along coordinate axes, enabling average-case \) queries in low dimensions. However, their performance degrades to \) as \ grows. Ball trees and locality-sensitive hashing offer alternatives that trade exactness for speed, providing approximate nearest neighbours in sublinear time.

Chapter 3: Linear Regression

Setting Up the Problem

Linear regression is the workhorse of supervised learning for continuous-valued outputs. We are given a training set \}_{i=1}^n) where each \ is a feature vector and \ is a scalar target. The goal is to find a linear function that maps inputs to outputs:

\[ \hat{y} = h = w_0 + w_1 x_1 + w_2 x_2 + \cdots + w_d x_d = \mathbf{w}^\top \bar{\mathbf{x}} \]where ^\top) includes a constant 1 for the bias term , and ^\top). This padding trick lets us absorb the bias into the weight vector and write the prediction compactly as an inner product.

The assumption that the hypothesis is linear may seem restrictive, but it serves as a fundamental building block. Many powerful methods — polynomial regression, kernel methods, neural networks — generalise linear regression in various ways.

Ordinary Least Squares

To find the best linear function, we minimise the sum of squared errors:

\[ L = \sum_{i=1}^n^2 = \|\mathbf{y} - \bar{X}^\top \mathbf{w}\|_2^2 \]where \ \times n}) is the matrix whose columns are the padded feature vectors , and ^\top).

Expanding and taking the gradient:

\[ \nabla_{\mathbf{w}} L = -2\bar{X} \]Setting this to zero yields the normal equations . If \ is invertible, the closed-form solution is:

The matrix \ is always positive semidefinite: for any , we have . This confirms that the loss function is convex, and any critical point is a global minimum.

Gradient Descent for Linear Regression

When \ and \ are large, computing and inverting \ can be expensive. An alternative is gradient descent, which iteratively updates the weights:

\[ \mathbf{w}_{t+1} = \mathbf{w}_t - \eta \nabla_{\mathbf{w}} L \]where \ is the learning rate. For linear regression with the squared loss:

\[ \nabla_{\mathbf{w}} L = 2A^\top \]Each gradient step costs \), which is cheaper than the \) cost of the closed-form solution when we only need a few iterations. Stochastic gradient descent further reduces the per-step cost by computing the gradient on a random subset of the data.

Since the squared loss is convex, gradient descent with a sufficiently small step size is guaranteed to converge to the global minimum. The convergence rate depends on the condition number of : the ratio of its largest to smallest eigenvalue. Ill-conditioned problems converge slowly; this is where regularisation and preconditioning help.

Regularisation: Ridge and Lasso

With many features and limited data, the OLS solution can overfit. Small changes in the training data lead to large changes in , producing predictions with high variance. Regularisation controls this by adding a penalty on the complexity of .

Ridge regression regularisation) adds a penalty proportional to :

\[ L_{\text{ridge}} = \|A\mathbf{w} - \mathbf{y}\|_2^2 + \lambda \|\mathbf{w}\|_2^2 \]The closed-form solution is ^{-1}A^\top \mathbf{y}). The regularisation parameter \ shrinks the weights towards zero and ensures invertibility even when \ is singular. Geometrically, the penalty constrains \ to lie within a ball centred at the origin.

Lasso regression regularisation) uses the penalty :

\[ L_{\text{lasso}} = \|A\mathbf{w} - \mathbf{y}\|_2^2 + \lambda \|\mathbf{w}\|_1 \]The Lasso produces sparse solutions: many components of \ are exactly zero, effectively performing feature selection. There is no closed-form solution; iterative methods such as coordinate descent are used instead.

| Method | Penalty | Sparsity | Closed form? |

|---|---|---|---|

| OLS | None | No | Yes |

| Ridge | \ | No | Yes |

| Lasso | \ | Yes | No |

The Statistical Perspective: Maximum Likelihood

Linear regression can be motivated from a probabilistic viewpoint. Suppose the data is generated by a linear model corrupted by Gaussian noise:

\[ y = \mathbf{w}^\top \mathbf{x} + \epsilon, \qquad \epsilon \sim \mathcal{N} \]Then the conditional distribution of \ given \ is:

\[ p = \frac{1}{\sqrt{2\pi\sigma^2}} \exp\!\left^2}{2\sigma^2}\right) \]The likelihood of the entire dataset, assuming the data points are independent, is:

\[ L = \prod_{i=1}^n p \]Maximising the log-likelihood is equivalent to minimising the negative log-likelihood. Dropping constants that do not depend on :

\[ -\log L = \frac{1}{2\sigma^2}\sum_{i=1}^n^2 + \text{const} \]This is precisely the sum of squared errors! The maximum likelihood estimate under Gaussian noise coincides with the ordinary least squares solution. This beautiful connection tells us that choosing the squared loss is not arbitrary — it is the principled choice when the noise is Gaussian.

Maximum A Posteriori and Regularisation

If we place a prior distribution on , Bayes’ theorem gives the posterior:

\[ p \propto p \cdot p \]The MAP estimate maximises this posterior. With a Gaussian prior \), the MAP objective becomes:

\[ \min_{\mathbf{w}} \sum_{i=1}^n^2 + \frac{\sigma^2}{\tau^2}\|\mathbf{w}\|_2^2 \]This is exactly ridge regression with . Similarly, a Laplace prior on \ leads to the Lasso penalty. The statistical perspective thus provides a principled justification for regularisation: it encodes our prior belief about the plausible magnitude of the weights.

Cross-Validation for Model Selection

The regularisation parameter \ must be chosen carefully. Too small and we overfit; too large and we underfit. Cross-validation is the standard technique.

In -fold cross-validation, the training set is partitioned into \ roughly equal folds. For each candidate value of , we train on \ folds and evaluate on the held-out fold, rotating through all folds. The \ with the lowest average validation error is selected. Common choices are \ or ; the special case \ is called leave-one-out cross-validation.

The Bias-Variance Decomposition for Regression

Returning to the bias-variance tradeoff with the notation of Yu’s notes, the regression function \ = \mathbb{E}[Y \mid X = \mathbf{x}]) is the optimal predictor under squared loss. No function \ can reduce the term \|^2]), which represents the intrinsic difficulty of the problem. When we restrict to a function class , we introduce approximation error. Using a finite training set introduces estimation error. Linear regression with a small number of features has low variance but potentially high bias; adding polynomial features reduces bias at the cost of higher variance.

Chapter 4: Statistical Learning Theory

The Challenge of Generalisation

Every machine learning algorithm faces the same fundamental question: will it perform well on data it has never seen? Training error alone tells us nothing about generalisation. A model that memorises the training set achieves zero training error but may fail catastrophically on new data. Statistical learning theory provides the mathematical framework for understanding when and why learning from finite data is possible.

The PAC Learning Framework

The Probably Approximately Correct framework, introduced by Leslie Valiant in 1984, formalises the idea that a learning algorithm should, with high probability, output a hypothesis that is approximately correct.

The key point is that the sample complexity must be polynomial in \ and . The framework captures the idea that we want to be “probably”) “approximately”) correct.

Empirical Risk Minimisation

In practice, we do not know the true data distribution . Instead, we observe a training set drawn i.i.d. from \ and minimise the empirical risk:

\[ \hat{R} = \frac{1}{n}\sum_{i=1}^n \ell, y_i) \]where \ is a loss function. This approach is called empirical risk minimisation. The hope is that for large , the empirical risk is close to the true risk \ = \mathbb{E}_{ \sim P}[\ell, y)]) uniformly over the hypothesis class.

Concentration Inequalities

The bridge between empirical and true risk is provided by concentration inequalities. The most fundamental is:

For a single, fixed hypothesis , Hoeffding’s inequality tells us that \) concentrates around \) at a rate of \). But ERM searches over a hypothesis class , and we need a uniform guarantee over all .

The Union Bound Argument

For a finite hypothesis class , we can apply the union bound:

\[ \Pr\!\left - R| \geq t\right) \leq \sum_{h \in \mathcal{H}} \Pr - R| \geq t) \leq 2M \exp\!\left \]Setting the right-hand side equal to \ and solving for , we find that with probability at least :

\[ \sup_{h \in \mathcal{H}} |\hat{R} - R| \leq \sqrt{\frac{\log}{2n}} \]This means the sample complexity for uniform convergence is \). The dependence on \ itself) is crucial: it means that even exponentially large hypothesis classes can be learnable if their “effective size” is controlled.

VC Dimension

For infinite hypothesis classes), the union bound over a finite class does not directly apply. The Vapnik-Chervonenkis dimension provides the right notion of complexity.

For example, the class of linear classifiers in \ has VC dimension . Three non-collinear points in \ can be shattered by lines, but no set of four points can be.

The VC dimension replaces \ in the generalisation bound. Specifically, the fundamental theorem of statistical learning states that a hypothesis class is PAC-learnable if and only if its VC dimension is finite. The sample complexity scales as:

\[ n = O\!\left + \log}{\epsilon^2}\right) \]Structural Risk Minimisation

The bias-variance tradeoff from Chapter 1 has a precise analogue in learning theory. Consider a sequence of nested hypothesis classes \ with increasing VC dimension. For each class, we can bound the true risk:

\[ R \leq \hat{R} + \text{complexity penalty}, n, \delta) \]Structural risk minimisation selects the hypothesis class \ that minimises this bound. Using a richer class reduces the empirical risk but increases the complexity penalty. SRM provides a principled way to balance these competing effects without needing a separate validation set.

Chapter 5: Logistic Regression and Generalised Linear Models

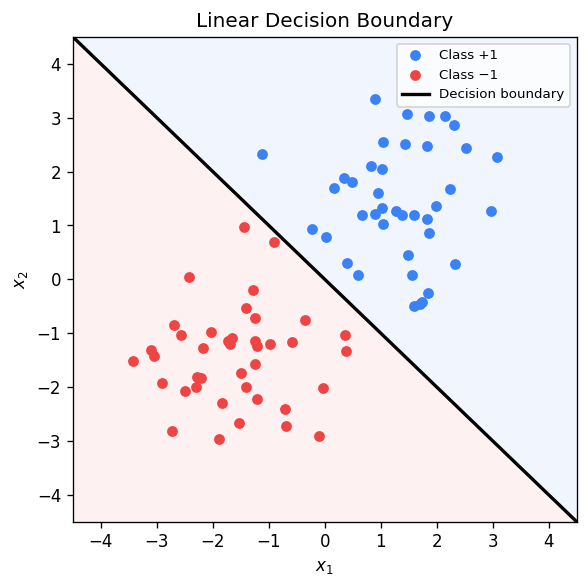

From Regression to Classification

In the previous chapters, linear regression addressed continuous outputs. But many real-world problems require predicting discrete labels. Can we adapt the linear framework for classification?

A naive approach is to use linear regression with labels \ and threshold the output: predict class 1 if . This works poorly because the linear function is unbounded, so we may get predictions far outside , and the squared loss treats all errors equally rather than penalising confident wrong predictions more. As Yu’s notes observe, reducing classification to regression solves a harder problem than necessary.

What we really want is a model that outputs a probability \ = \Pr \in [0, 1]). This probability should depend on the features through a linear combination , but mapped into .

The Sigmoid Function and Log-Odds

The key insight is to model the log-odds as a linear function:

\[ \log \frac{p}{1 - p} = \mathbf{w}^\top \mathbf{x} \]The left-hand side ranges over all of , matching the range of the linear function on the right. Solving for \ gives:

The sigmoid function is an S-shaped curve that maps \ to \). It satisfies the useful identity \ = 1 - \sigma), and its derivative is \ = \sigma)).

Prediction is straightforward: predict class 1 if \ > 1/2), equivalently if . This is the same decision rule as the perceptron! The difference is that logistic regression also provides calibrated probability estimates and uses a different training objective.

Maximum Likelihood Estimation

We model the data with a Bernoulli distribution: \ = p^{y})^{1 - y}) for . Given an i.i.d. training set, the conditional log-likelihood is:

\[ \ell = \sum_{i=1}^n \bigl[y_i \log p +\log)\bigr] \]Maximising this is equivalent to minimising the cross-entropy loss:

\[ L = -\ell = \sum_{i=1}^n \bigl[-y_i \log p_i -\log\bigr] \]Using the \ encoding for labels, the loss takes the elegant form:

\[ L = \sum_{i=1}^n \log\bigl\bigr) \]where . This is the sum of the logistic loss \ = \log) evaluated at the “margin” . When the margin is large and positive, the loss is near zero. When it is negative, the loss grows linearly.

Convexity and Optimisation

A crucial property of the cross-entropy loss is that it is convex in . This can be verified by computing the Hessian. The gradient of the loss labels) is:

\[ \nabla_{\mathbf{w}} \ell_i = - y_i)\,\mathbf{x}_i \]and the Hessian contribution is:

\[ H_i = p_i\,\mathbf{x}_i \mathbf{x}_i^\top \]Since \ > 0), each \ is positive semidefinite, confirming convexity.

Unlike linear regression, there is no closed-form solution. We must resort to iterative methods:

Gradient descent updates \mathbf{x}_i). A safe step size is , where \ is the spectral norm of the data matrix. Stochastic gradient descent uses mini-batches for efficiency.

Newton’s method uses second-order information: , where \mathbf{x}_i\mathbf{x}_i^\top). This converges quadratically near the optimum but requires \) memory and \) per step for the matrix inversion.

Multiclass Logistic Regression: The Softmax

For \ classes, we generalise the sigmoid to the softmax function. Each class \ has its own weight vector , and:

\[ \Pr = \frac{\exp}{\sum_{\ell=1}^c \exp} \]This is known as multinomial logistic regression or softmax regression. The loss function becomes the multiclass cross-entropy, and the optimisation is again convex.

Generalised Linear Models and the Exponential Family

Logistic regression is a special case of the broader framework of generalised linear models. The key observation, as Poupart emphasises in his lectures, is that when the class-conditional distributions belong to the exponential family, the posterior probability takes the form of a sigmoid or softmax that is linear in .

The Gaussian, Bernoulli, Poisson, gamma, and many other common distributions are members of the exponential family. When we use a categorical prior over classes and exponential family class-conditionals, Bayes’ theorem yields a posterior that is precisely a softmax of linear functions. Logistic regression directly parametrises this posterior, bypassing the need to estimate the class-conditional distributions. This is the distinction between generative models and discriminative models) directly).

Chapter 6: The Perceptron

Historical Context

The perceptron, introduced by Frank Rosenblatt in 1958, was the first algorithmically defined learning machine and one of the foundational results of artificial intelligence. Inspired by biological neurons, the perceptron receives a weighted sum of inputs, applies a threshold, and outputs a binary decision. Despite its simplicity, the perceptron carries deep lessons about linear classifiers, convergence guarantees, and the limits of single-layer models.

The Perceptron Model

Using the padding trick into \ and appending a 1 to ), we can write the prediction as \) where \ and \ are now \)-dimensional.

The Perceptron Algorithm

The perceptron is an online algorithm: it processes training examples one at a time and updates only when it makes a mistake.

Algorithm:

- Initialise .

- For each training example \):

- Compute \).

- If \ \leq 0)):

- Update: .

- Repeat until no mistakes are made on a full pass through the data.

The update rule has an elegant geometric interpretation. When the perceptron misclassifies a positive example), adding \ to \ rotates the weight vector towards , increasing the inner product \ and making a correct classification more likely next time. Conversely, for a misclassified negative example, subtracting \ rotates \ away from .

As Yu’s notes observe, the key insight is that after an update, . The update strictly improves the margin on the misclassified point — and the remarkable fact is that it does not ruin the possibility of satisfying all other constraints.

Linear Separability and the Geometric Margin

A large margin means the classes are well-separated and classification is “easy.” A small margin means the classes are nearly overlapping and classification is difficult.

The Perceptron Convergence Theorem

The most important theoretical result about the perceptron is its finite convergence guarantee.

Proof sketch. We track two quantities across the sequence of updates. After \ mistakes:

Lower bound on growth of : Each update increases the inner product with the reference solution by at least :

\[ \langle \mathbf{w}_{k+1}, \mathbf{w}^*\rangle = \langle \mathbf{w}_k, \mathbf{w}^*\rangle + \langle \mathbf{a}, \mathbf{w}^*\rangle \geq \langle \mathbf{w}_k, \mathbf{w}^*\rangle + s \]By telescoping: .

Upper bound on growth of : Each update increases the squared norm by at most ):

\[ \|\mathbf{w}_{k+1}\|_2^2 = \|\mathbf{w}_k\|_2^2 + 2\langle \mathbf{w}_k, \mathbf{a}\rangle + \|\mathbf{a}\|_2^2 \leq \|\mathbf{w}_k\|_2^2 + R^2 \]By telescoping: .

Combining: By Cauchy-Schwarz, . Squaring both sides: , giving . \

The bound is tightest when we optimise over the choice of reference solution . Since both \ and \ scale together if we rescale , we can normalise to , in which case the bound becomes , where \ is the geometric margin.

Limitations: The XOR Problem

The perceptron can only learn linearly separable functions. The classic counterexample is the XOR function: \ \to -1), \ \to +1), \ \to +1), \ \to -1). No single hyperplane can separate the positive and negative examples. When presented with linearly inseparable data, the perceptron will cycle endlessly through the training set, never converging.

This limitation was famously highlighted by Minsky and Papert in 1969, leading to a prolonged “AI winter” during which interest in neural networks waned. The resolution came decades later with the development of multi-layer networks and the backpropagation algorithm, which can represent nonlinear decision boundaries.

Connections to SVMs and Neural Networks

The perceptron’s convergence bound \ reveals that the margin is the key geometric quantity. Support vector machines take this insight to its logical conclusion: instead of finding any separating hyperplane, they find the one that maximises the margin. The perceptron can be viewed as a stochastic approximation to the SVM objective.

Similarly, the perceptron is a single-layer neural network with a sign activation function. By stacking multiple layers of perceptron-like units with differentiable activation functions, we obtain multi-layer perceptrons that can represent arbitrarily complex decision boundaries — overcoming the XOR limitation.

Chapter 7: Mixture Models and the EM Algorithm

Unsupervised Learning and Clustering

So far, every algorithm we have studied has relied on labelled data: pairs \) where the correct output is known. In many settings, labels are expensive or unavailable, and we must discover structure in the data without supervision. Clustering is the canonical unsupervised learning problem: given points , partition them into groups such that points within a group are similar and points across groups are dissimilar.

-Means Clustering

The -means algorithm is the simplest and most widely used clustering method. It seeks to partition \ points into \ clusters \ by minimising the total within-cluster variance:

\[ \min_{C_1, \ldots, C_k} \sum_{j=1}^k \sum_{\mathbf{x}_i \in C_j} \|\mathbf{x}_i - \boldsymbol{\mu}_j\|_2^2, \qquad \boldsymbol{\mu}_j = \frac{1}{|C_j|}\sum_{\mathbf{x}_i \in C_j} \mathbf{x}_i \]where \ is the centroid of cluster .

Lloyd’s algorithm is the standard heuristic for -means. It alternates between two steps:

- Assignment step: Assign each point to the cluster with the nearest centroid: .

- Update step: Recompute each centroid as the mean of its assigned points.

These steps are repeated until convergence. Each step decreases or maintains the objective, so the algorithm is guaranteed to converge, though only to a local minimum. The -means problem is NP-hard in general, even to approximate within a constant factor, so multiple random restarts are standard practice.

From Hard Clustering to Soft Clustering

-means assigns each point to exactly one cluster — a “hard” assignment. But what if a point lies between two clusters? It may be more natural to say that the point belongs 60% to cluster 1 and 40% to cluster 2. This motivates a probabilistic approach where cluster membership is represented by a probability distribution.

Gaussian Mixture Models

A Gaussian mixture model assumes that the data is generated by a mixture of \ Gaussian distributions:

\[ p = \sum_{j=1}^k \pi_j \,\mathcal{N} \]where \ are the mixing weights), and each component is a multivariate Gaussian with mean \ and covariance . The parameters are \}_{j=1}^k).

The generative story is: to produce a data point, first sample a component \), then sample \). The latent variable \ tells us which component generated the point, but it is unobserved.

If we knew the latent assignments , estimation would be straightforward: for each component, compute the empirical mean, covariance, and proportion from the assigned points. The difficulty is that we do not know the assignments, and directly maximising the log-likelihood

\[ \ell = \sum_{i=1}^n \log \sum_{j=1}^k \pi_j \,\mathcal{N} \]is intractable because the log of a sum does not simplify nicely.

The EM Algorithm

The Expectation-Maximisation algorithm, introduced by Dempster, Laird, and Rubin, is a general technique for maximum likelihood estimation in the presence of latent variables. Applied to GMMs, it alternates between computing soft assignments and updating the parameters.

The derivation begins with a lower bound on the log-likelihood. For any distributions \ over the latent variable , Jensen’s inequality gives:

\[ \log p = \log \sum_j q_i \frac{p}{q_i} \geq \sum_j q_i \log \frac{p}{q_i} \]This lower bound is tight when \ = p), the posterior distribution of the latent variable. EM alternates between maximising the ELBO over \ and over .

E-step: Fix . For each data point \ and each component , compute the responsibility:

\[ r_{ij} = q_i = \frac{\pi_j \,\mathcal{N}}{\sum_{\ell=1}^k \pi_\ell \,\mathcal{N}} \]This is the posterior probability that point \ was generated by component . It is the “soft assignment” analogue of -means’ hard assignment.

M-step: Fix the responsibilities . Update the parameters using weighted statistics:

\[ n_j = \sum_{i=1}^n r_{ij}, \qquad \boldsymbol{\mu}_j = \frac{1}{n_j}\sum_{i=1}^n r_{ij}\,\mathbf{x}_i, \qquad \Sigma_j = \frac{1}{n_j}\sum_{i=1}^n r_{ij}^\top, \qquad \pi_j = \frac{n_j}{n} \]These are simply the weighted mean, covariance, and proportion, where the weights are the responsibilities.

Convergence Properties

Each iteration of EM is guaranteed to increase the log-likelihood:

\[ \ell}) \geq \ell}) \]This follows from the fact that the E-step makes the lower bound tight, and the M-step increases the lower bound. Since the log-likelihood is bounded above, the sequence converges. However, like -means, EM converges only to a local maximum of the likelihood, not necessarily the global maximum. Multiple random initialisations are recommended.

-Means as a Special Case of EM

There is a beautiful connection between -means and EM. Consider a GMM where all covariance matrices are \ and we take the limit . In this limit, the responsibilities become “hard”: \ for the closest centroid and 0 for all others. The E-step reduces to the assignment step of -means, and the M-step reduces to recomputing the centroids. Thus, -means is a “hard” version of EM for spherical Gaussians with vanishing variance.

Model Selection: How Many Components?

Choosing the number of components \ is a model selection problem. Simply maximising the likelihood does not work: adding more components always increases the likelihood. We need a criterion that penalises model complexity.

The Bayesian Information Criterion and Akaike Information Criterion are two widely used approaches:

\[ \text{BIC} = -2\ell + p \log n, \qquad \text{AIC} = -2\ell + 2p \]where \ is the number of free parameters and \ is the MLE. Both criteria balance goodness of fit against complexity. BIC imposes a heavier penalty for large \ and tends to select simpler models; AIC is better suited when the goal is prediction rather than model identification. In practice, one fits GMMs for \ and selects the \ that minimises BIC or AIC.

Another approach is to use cross-validation, holding out data and evaluating the log-likelihood on the held-out set. This is more computationally expensive but avoids the assumptions built into BIC and AIC.

Beyond Gaussians

The EM framework is not limited to Gaussian components. Any mixture model with tractable E-step and M-step computations can be fit using EM. Mixtures of Bernoullis, mixtures of multinomials, and mixtures of other exponential family distributions are all common in practice. The general principle remains the same: introduce latent variables, compute posterior responsibilities in the E-step, and update parameters in the M-step.

Chapter 8: Support Vector Machines

Support vector machines occupied the throne of machine learning for nearly two decades before deep neural networks took over around 2010. In the low-data regime they remain remarkably effective, and the mathematical framework they rest on — maximum-margin separation, Lagrangian duality, and the kernel trick — continues to inform modern algorithm design. This chapter develops the theory from first principles.

The Maximum-Margin Classifier

Consider a binary classification problem with a training set \ \subseteq \mathbb{R}^d \times {+1,-1} : i=1,\dots,n}). When the data are linearly separable, there exists a hyperplane \ such that every training point is correctly classified:

\[ \forall\, i,\quad y_i > 0. \]Infinitely many such hyperplanes exist — perturbing \) slightly preserves the separation. The perceptron algorithm finds any separating hyperplane. SVMs ask a sharper question: among all separating hyperplanes, which one is optimal?

The margin of the dataset is the maximum over all hyperplanes: \ = \max_{\mathbf{w}} \gamma).

The distance from a point \ to the hyperplane \ equals . When the hyperplane correctly classifies \), the signed quantity \ / |\mathbf{w}|_2) is positive and equals the geometric distance. The SVM seeks to maximize this worst-case distance:

\[ \max_{\mathbf{w},b} \min_{i=1,\dots,n} \frac{y_i}{\|\mathbf{w}\|_2}. \]This formulation is scale-invariant: replacing \) by \) for any \ does not change the hyperplane or the objective. We exploit this freedom by fixing the minimum functional margin to be 1, that is, requiring \ = 1). Under this normalization the margin becomes , and maximizing it is equivalent to minimizing . We arrive at the hard-margin SVM:

\[ \min_{\mathbf{w}, b} \; \frac{1}{2}\|\mathbf{w}\|_2^2 \quad \text{subject to} \quad y_i \ge 1, \;\; \forall\, i=1,\dots,n. \]This is a quadratic program: a quadratic objective with linear constraints. In contrast, the perceptron solves a linear feasibility problem with objective identically zero. The SVM objective yields a unique solution, whereas the perceptron admits infinitely many.

Geometry: Three Parallel Hyperplanes and Support Vectors

At the optimum, the constraints partition into active) and inactive). The active constraints correspond to points lying exactly on one of two bounding hyperplanes:

\[ H_+ = \{\mathbf{x} : \langle \mathbf{x}, \mathbf{w} \rangle + b = +1\}, \qquad H_- = \{\mathbf{x} : \langle \mathbf{x}, \mathbf{w} \rangle + b = -1\}. \]The decision boundary \ lies equidistant between \ and . The strip between \ and \ is a “dead zone” containing no training points. Each bounding hyperplane has distance \ from , so the total margin width is .

At optimality there must be at least one support vector from each class. This sparsity property is what makes SVMs attractive: the decision boundary depends on only a handful of critical training examples.

The Lagrangian Dual Formulation

To solve the constrained QP, we introduce Lagrange multipliers , one per constraint:

\[ \mathcal{L} = \frac{1}{2}\|\mathbf{w}\|_2^2 - \sum_{i=1}^n \alpha_i \bigl[y_i - 1\bigr]. \]The primal problem is . By strong duality, we can swap the min and max. We first minimize over \ and \ by setting partial derivatives to zero:

\[ \frac{\partial \mathcal{L}}{\partial \mathbf{w}} = \mathbf{w} - \sum_{i=1}^n \alpha_i y_i \mathbf{x}_i = 0 \quad \Longrightarrow \quad \mathbf{w} = \sum_{i=1}^n \alpha_i y_i \mathbf{x}_i, \]\[ \frac{\partial \mathcal{L}}{\partial b} = -\sum_{i=1}^n \alpha_i y_i = 0. \]Substituting back yields the dual problem:

\[ \max_{\boldsymbol{\alpha} \ge 0} \sum_{i=1}^n \alpha_i - \frac{1}{2}\sum_{i,j} \alpha_i \alpha_j y_i y_j \langle \mathbf{x}_i, \mathbf{x}_j \rangle \quad \text{subject to} \quad \sum_{i=1}^n \alpha_i y_i = 0. \]Several observations are crucial. First, the dual depends on the data only through inner products \ — this will enable the kernel trick in the next chapter. Second, the KKT complementary slackness conditions require \ - 1] = 0) for all . Thus \ only for support vectors, confirming sparsity.

The weight vector \ is a linear combination of support vectors. At test time, prediction is \ = \text{sign}\bigl), again involving only inner products. The bias \ is recovered from any support vector: \ for any \ with .

The Dual Geometric View

There is an elegant geometric interpretation due to Rosen. A dataset is linearly separable if and only if the convex hulls of the positive and negative examples are disjoint: \ \cap \text{conv} = \emptyset). The SVM hyperplane is the perpendicular bisector of the shortest line segment connecting \) to \). This minimum-distance pair \) may involve convex combinations of training points, not necessarily individual data points. The maximum margin equals half the distance between the two convex hulls.

Soft-Margin SVM

Real datasets are rarely perfectly separable. The soft-margin SVM relaxes the hard constraints by introducing slack variables :

\[ \min_{\mathbf{w}, b, \boldsymbol{\xi}} \; \frac{1}{2}\|\mathbf{w}\|_2^2 + C \sum_{i=1}^n \xi_i \quad \text{subject to} \quad y_i \ge 1 - \xi_i, \;\; \xi_i \ge 0. \]The hyperparameter \ controls the trade-off between maximizing the margin small) and minimizing training errors small). When , we recover the hard-margin SVM. When , we ignore the data entirely.

The dual of the soft-margin SVM is:

\[ \max_{0 \le \alpha_i \le C} \sum_{i=1}^n \alpha_i - \frac{1}{2}\sum_{i,j} \alpha_i \alpha_j y_i y_j \langle \mathbf{x}_i, \mathbf{x}_j \rangle \quad \text{subject to} \quad \sum_{i=1}^n \alpha_i y_i = 0. \]The only difference from the hard-margin dual is the box constraint . Complementary slackness now yields a richer structure: if , the point lies on the correct side of the margin; if , the point sits exactly on the margin boundary; if , the point is within the margin or misclassified.

The Hinge Loss Perspective

The soft-margin SVM admits an equivalent unconstrained formulation:

\[ \min_{\mathbf{w}, b} \; \frac{1}{2}\|\mathbf{w}\|_2^2 + C \sum_{i=1}^n_+, \quad \hat{y}_i = \langle \mathbf{x}_i, \mathbf{w} \rangle + b, \]where _+ = \max{0, t}) is the hinge loss. This loss is zero when the functional margin \ and grows linearly as the margin decreases below 1. The hinge loss is a convex upper bound on the 0-1 loss , and it is classification-calibrated: minimizing it in population leads to the Bayes-optimal classifier. This perspective connects SVMs to empirical risk minimization with regularization. The \ term is the regularizer, and \ plays the role of the inverse regularization strength.

Other surrogate losses lead to different classifiers: the logistic loss \) gives logistic regression, the exponential loss \ leads to AdaBoost, and the squared hinge _+^2) is sometimes preferred for its smoothness.

Optimization

Training an SVM can be done by several methods. The dual QP can be solved by general-purpose QP solvers, but for large-scale problems, specialized algorithms are preferred. The Sequential Minimal Optimization algorithm decomposes the problem into a sequence of two-variable subproblems that admit closed-form solutions. Alternatively, the primal hinge-loss formulation can be optimized directly by stochasticgradient descent: at each step, sample a data point \) and update

\[ \mathbf{w} \leftarrow \mathbf{w} - \eta\biggl, \]where \). This scales linearly in \ and , making it feasible for very large datasets.

Chapter 9: Kernel Methods

Kernel methods provide a principled framework for extending linear algorithms to handle nonlinear patterns. The central insight is the kernel trick: by replacing inner products with a kernel function, we can implicitly operate in a high-dimensional feature space without ever computing the feature vectors explicitly.

Feature Maps and the Kernel Trick

Consider the XOR problem: four points in \ that no hyperplane can separate. A quadratic classifier \ = \langle \mathbf{x}, Q\mathbf{x} \rangle + \sqrt{2}\langle \mathbf{x}, \mathbf{p} \rangle + b) can separate them. We can rewrite this as a linear function in a lifted feature space by defining the feature map

\[ \phi = \bigl^\top, \]so that \ = \langle \phi, \mathbf{w} \rangle) for an appropriate weight vector . The feature map \ is nonlinear, but the classifier is linear in the new space.

The key observation is that inner products in feature space can be computed cheaply:

\[ \langle \phi, \phi \rangle =^2. \]The right-hand side costs \) to evaluate — the same as computing an inner product in the original space — even though the feature space has dimension 6.

Given a feature map , the kernel is determined. Conversely, given a kernel, there exists a canonical feature map \ = k), where \) is viewed as a function in the reproducing kernel Hilbert space. The reproducing property states \ \rangle_{\mathcal{H}_k} = f) for any .

Mercer’s Theorem and Positive Definite Kernels

Not every function of two arguments is a valid kernel. The characterization is given by the following:

This means \ \ge 0) for all . If equality holds only when , the kernel is strictly positive definite. A kernel can be thought of as a similarity measure that respects the geometry of some feature space.

Common Kernels

Polynomial kernel. \ =^p), where \ and . The corresponding feature space contains all monomials up to degree . Setting \ recovers the linear kernel.

Gaussian kernel. \ = \exp), where \ is the bandwidth parameter. This kernel corresponds to an infinite-dimensional feature space. It is universal: its RKHS is dense in the space of continuous functions over any compact domain.

Laplace kernel. \ = \exp). Like the Gaussian kernel but based on the unsquared distance, producing rougher functions.

Sigmoid kernel. \ = \tanh). Not positive definite for all parameter values, so it must be used with care.

Kernel Calculus

Kernels are closed under several operations, enabling construction of complex kernels from simpler ones. If \ and \ are kernels, then so are: \ for ; ; ; and \ when the limit exists. These closure properties follow directly from the PSD characterization.

The Representer Theorem

where \ is any loss function. The optimal solution has the form \ = \sum_{j=1}^n \alpha_j k) for some coefficients .

The proof uses an orthogonal decomposition: write \ where \ lies in the span of \}). The loss depends only on function values at training points, which depend only on . The regularizer , so setting \ cannot increase the objective.

This theorem justifies the kernel SVM: replacing \ with \) in the dual gives the kernelized soft-margin SVM, which implicitly finds the maximum-margin hyperplane in . At test time, prediction is \ = \sum_i \alpha_i y_i k + b), requiring only kernel evaluations and the support vectors.

Kernel Ridge Regression and Kernel PCA

The kernel trick applies to any algorithm that depends on data only through inner products. Kernel ridge regression replaces the linear prediction \ with \), where ^{-1} \mathbf{y}) and \ is the \ kernel matrix. Kernel PCA computes principal components in feature space by eigendecomposing the centered kernel matrix, enabling nonlinear dimensionality reduction.

Reproducing Kernel Hilbert Spaces

The RKHS \ associated with kernel \ is the unique Hilbert space of functions satisfying the reproducing property. Formally, \ : \mathbf{x} \in \mathcal{X}}}), with inner product , k \rangle_{\mathcal{H}_k} = k). The RKHS norm \ measures the complexity of ; regularizing by this norm in empirical risk minimization controls model complexity. A kernel is universal if its RKHS is dense in \) for compact , meaning it can approximate any continuous function. The Gaussian kernel is universal; the polynomial kernel is not.

Computational Trade-offs

Kernelization shifts the computational bottleneck from the feature dimension to the number of training points. Without kernels, training costs \) and testing \). With kernels, forming the Gram matrix costs \), solving the dual is \), and testing costs \) per new point) where \ is the number of support vectors). This makes kernel methods impractical for very large datasets but powerful when \ is moderate and the feature space is high-dimensional or infinite.

Chapter 10: Gaussian Processes

Gaussian processes provide a Bayesian, nonparametric approach to regression and classification. Where kernel SVMs find a single best function, GPs maintain an entire distribution over functions, enabling principled uncertainty quantification.

From Bayesian Linear Regression to GPs

Recall Bayesian linear regression with features \): we place a prior \), assume \ + \epsilon) with \), and compute the posterior \). This is the weight-space view. Alternatively, we can work directly in function space: since \ = \mathbf{w}^\top \phi) is linear in the Gaussian , the function values at any finite collection of inputs are jointly Gaussian.

The mean function is \ = \mathbb{E}[f] = \phi^\top \mathbb{E}[\mathbf{w}] = 0), and the covariance function is

\[ k = \mathbb{E}[ff] = \frac{1}{\alpha}\phi^\top \phi. \]This is a kernel! The function-space view replaces a distribution over weight vectors with a distribution over functions, described by the mean and covariance functions.

GP Prior and Posterior

The prior \) encodes our beliefs about the function before seeing data. Given training data \ and targets , with a Gaussian likelihood \ + \epsilon_i), the posterior is also a GP. For a test point , the predictive distribution is Gaussian with

\[ \mu_* = \mathbf{k}_*^\top^{-1} \mathbf{y}, \qquad \sigma_*^2 = k - \mathbf{k}_*^\top^{-1} \mathbf{k}_*, \]where \) is the training kernel matrix and , \dots, k]^\top). The predictive mean \ interpolates the training data, while the predictive variance \ shrinks near observed points and grows in unobserved regions. At a noiseless training point, : there is no uncertainty where we have observed the function.

Kernel Design

The choice of kernel profoundly shapes the GP’s behavior. The Gaussian kernel \ = \exp) produces smooth, infinitely differentiable sample functions. The exponential kernel \ = \exp) yields rougher, continuous but not differentiable samples — resembling Brownian motion paths. The bandwidth \ controls the correlation length: small \ means rapid variation, large \ means slowly varying functions. Kernel hyperparameters are typically set by maximizing the marginal likelihood \), which integrates out the function and naturally trades off data fit against model complexity.

GP for Regression

The computational bottleneck of GP regression is the inversion of the \ matrix , costing \). This is cubic in the number of training points rather than in the feature dimension, which is the complementary trade-off to the weight-space view where complexity is cubic in the number of features. For the Gaussian kernel with infinite-dimensional features, the weight-space approach is impossible, but the function-space GP computation is well-defined.

Sparse GP approximations reduce the cost to \) where \ is the number of inducing points, making GPs feasible for moderately large datasets.

GP for Classification

For binary classification, the likelihood is Bernoulli: \ = \sigma)) where \ is the logistic function. The posterior \) is no longer Gaussian. The Laplace approximation finds a Gaussian approximation centered at the mode of the posterior, then uses this Gaussian to make predictions. Expectation propagation provides an alternative that is often more accurate.

Relationship to Kernel Methods and Neural Networks

GP regression with a Gaussian likelihood is equivalent to kernel ridge regression: the predictive mean is identical. However, GPs additionally provide uncertainty estimates through the predictive variance. A remarkable connection to neural networks was established by Neal: a single-hidden-layer neural network with i.i.d. random weights, as the width tends to infinity, converges in distribution to a GP. The kernel of this limiting GP depends on the activation function. This equivalence has been extended to deep networks, where the Neural Tangent Kernel describes the training dynamics of infinitely wide networks trained by gradient descent.

Chapter 11: Neural Networks and Backpropagation

We now turn from kernel methods, which use fixed feature maps, to neural networks, which learn the feature map jointly with the linear predictor.

From Perceptron to Multi-Layer Perceptron

The single-layer perceptron computes \), which defines a linear decision boundary. It cannot solve the XOR problem. The multi-layer perceptron stacks linear transformations and nonlinear activations:

\[ \mathbf{z} = U\mathbf{x} + \mathbf{c}, \quad \mathbf{h} = \sigma, \quad \hat{y} = \langle \mathbf{h}, \mathbf{w} \rangle + b, \]where , , and \ is a nonlinear activation function applied element-wise. The hidden layer \ constitutes a learned representation of the input. For XOR, choosing , , , , and \ correctly classifies all four points.

More generally, a depth-\ MLP computes

\[ \mathbf{h}_0 = \mathbf{x}, \quad \mathbf{h}_k = \sigma \;\text{ for } k=1,\dots,L, \quad \hat{\mathbf{y}} = W_{L+1}\mathbf{h}_L + \mathbf{b}_{L+1}, \]where \ and . For classification into \ classes, the output is passed through a softmax: \ / \sum_j \exp).

The key difference from kernel methods is that the feature map \ = \mathbf{h}_L) is learned through gradient-based optimization rather than fixed a priori.

The Universal Approximation Theorem

is dense in \) if and only if \ is not a polynomial almost everywhere.

This guarantees that a sufficiently wide two-layer network can approximate any continuous function. The caveat is that the required width may be astronomically large. In practice, deeper networks are more parameter-efficient than very wide shallow ones, which motivates deep learning.

An even stronger result is the Kolmogorov-Arnold representation theorem: any continuous function \ can be written as a finite superposition of continuous univariate functions and addition, matching the structure of a two-hidden-layer network.

Forward Pass

The forward pass evaluates the network layer by layer. Each layer applies a linear transformation followed by a nonlinear activation. For a classification task with cross-entropy loss, the forward pass computes:

- Hidden representations .

- Logits .

- Predicted probabilities \).

- Loss .

Backpropagation

Training requires computing the gradient of the loss with respect to all parameters. Backpropagation is simply the chain rule applied systematically to the computational graph, combined with dynamic programming to avoid redundant computation.

The reverse-mode differentiation algorithm proceeds as follows. Let \ be the “adjoint” of node . Initialize . Then propagate backwards:

\[ V_i = \sum_{j \in \text{successors}} \frac{\partial f_j}{\partial v_i} \cdot V_j. \]For a depth-\ MLP, this gives the familiar layer-wise backpropagation:

\[ \frac{\partial \ell}{\partial W_k} = \frac{\partial \mathbf{z}_k}{\partial W_k} \cdot \frac{\partial \ell}{\partial \mathbf{z}_k}, \quad \frac{\partial \ell}{\partial \mathbf{h}_{k-1}} = W_k^\top \frac{\partial \ell}{\partial \mathbf{z}_k}, \quad \frac{\partial \ell}{\partial \mathbf{z}_k} = \text{diag}) \cdot \frac{\partial \ell}{\partial \mathbf{h}_k}. \]Automatic Differentiation

Backpropagation is a special case of automatic differentiation, which computes exact derivatives of any function expressible as a composition of elementary operations.

For neural networks where , reverse-mode AD computes the full gradient in time proportional to a single forward pass. This is why training neural networks is feasible despite having millions of parameters. The forward mode is preferable when the input dimension \ is small and the output dimension \ is large. Modern deep learning frameworks implement reverse-mode AD automatically.

Activation Functions

The choice of activation function significantly affects training dynamics:

Sigmoid: \ = 1/). Outputs in \), saturates for large , causing vanishing gradients.

Tanh: \ = 2\sigma - 1). Zero-centered, but still saturates.

ReLU: \ = \max). Non-saturating for positive inputs, but “dead” neurons can occur when inputs are always negative. Dominates modern architectures due to computational simplicity and strong gradient signal.

GELU: \ = t \cdot \Phi), where \ is the standard Gaussian CDF. Smooth approximation to ReLU that is differentiable everywhere. Used extensively in Transformers.

Leaky ReLU and ELU address the dead neuron problem by allowing small gradients for negative inputs.

Chapter 12: Training Deep Neural Networks

Building a deep network architecture is only half the challenge; training it effectively requires navigating a complex, non-convex loss landscape. This chapter covers the practical toolkit for training deep networks.

Loss Landscapes and Optimization Challenges

The loss function of a deep network, as a function of its parameters, is highly non-convex with many local minima, saddle points, and flat regions. Despite this, gradient-based optimization works remarkably well in practice. Key challenges include: vanishing or exploding gradients as depth increases; sensitivity to initialization; balancing learning speed with generalization.

Stochastic Gradient Descent and Variants

Full-batch gradient descent computes the gradient over all \ training examples per step:

\[ \boldsymbol{\theta} \leftarrow \boldsymbol{\theta} - \eta \cdot \frac{1}{n}\sum_{i=1}^n \nabla_{\boldsymbol{\theta}} \ell, y_i). \]Stochastic gradient descent replaces the full sum with a minibatch :

\[ \boldsymbol{\theta} \leftarrow \boldsymbol{\theta} - \eta \cdot \frac{1}{|B|}\sum_{i \in B} \nabla_{\boldsymbol{\theta}} \ell, y_i). \]The minibatch gradient is an unbiased estimate of the full gradient. Smaller batches introduce more noise but can escape sharp local minima and often lead to better generalization.

Momentum accelerates SGD by accumulating a running average of past gradients:

\[ \mathbf{v}_{t+1} = \beta \mathbf{v}_t + \eta \nabla \ell, \quad \boldsymbol{\theta}_{t+1} = \boldsymbol{\theta}_t - \mathbf{v}_{t+1}. \]This dampens oscillations and speeds convergence along consistent gradient directions. The coefficient \) controls the “memory” of past gradients.

Nesterov momentum evaluates the gradient at a “look-ahead” position: \), where \). This achieves the optimal \) convergence rate for convex problems.

AdaGrad adapts the learning rate per parameter by dividing by the accumulated sum of squared gradients. It works well for sparse gradients but can prematurely shrink the learning rate.

RMSProp fixes this by using an exponential moving average of squared gradients:

\[ s_t =s_{t-1} + \lambda^2, \quad \boldsymbol{\theta} \leftarrow \boldsymbol{\theta} - \frac{\eta}{\sqrt{s_t + \epsilon}} \odot \nabla \ell. \]Adam combines momentum with adaptive learning rates:

\[ \mathbf{v}_t =\mathbf{v}_{t-1} + \gamma \nabla \ell, \quad s_t =s_{t-1} + \lambda^2, \]with bias correction applied to both estimates. Adam is the default optimizer in most deep learning applications, though SGD with momentum can sometimes achieve better generalization with careful tuning.

Learning Rate Schedules

The learning rate \ is perhaps the single most important hyperparameter. Common strategies include:

Step decay: Reduce \ by a factor every fixed number of epochs.

Cosine annealing: )), smoothly decaying from \ to \ over \ iterations.

Warmup: Start with a very small learning rate and linearly increase it over a warmup period before applying the main schedule. Used in Transformer training, where the original Attention Is All You Need paper used \) with warmup steps .

Normalization

Batch normalization normalizes activations across the minibatch. For a layer with activations , BN computes /\sqrt{\sigma_j^2 + \epsilon}) where \ and \ are the minibatch mean and variance of the -th feature. Learnable scale \ and shift \ parameters are applied afterwards: . During testing, running averages of the training statistics are used. BN stabilizes training, allows higher learning rates, and acts as a regularizer.

Layer normalization normalizes across features rather than across the batch, making it suitable for variable-length sequences and small batches. It is the standard in Transformers.

Regularization

Dropout randomly sets each hidden unit to zero with probability \ during training. At test time, no units are dropped, but weights are scaled by \ during training). Dropout prevents co-adaptation of neurons and can be interpreted as an implicit ensemble of exponentially many sub-networks.

Weight decay adds an \ penalty \ to the loss, equivalent to decaying the weights by a factor \) at each SGD step.

Early stopping monitors performance on a validation set and halts training when validation loss begins to increase, preventing overfitting. It can be shown to be equivalent to a form of \ regularization.

Initialization Strategies

Proper initialization is critical to prevent vanishing or exploding signals at the start of training.

Xavier initialization sets weights as )) or uniformly in }, \sqrt{6/}]). This maintains variance of activations across layers for linear or tanh activations.

He initialization uses \), which accounts for the fact that ReLU zeros out roughly half the inputs. This is the standard for networks using ReLU activations.

Chapter 13: Convolutional Neural Networks

Convolutional neural networks exploit the spatial structure of images through three key ideas: local connectivity, weight sharing, and translation equivariance. They have been the dominant architecture for computer vision since AlexNet’s breakthrough on ImageNet in 2012.

The Convolution Operation

In an MLP, each output neuron connects to every input. For images, this is wasteful: a pixel’s neighbors carry far more information than distant pixels. A convolutional layer restricts connections to a local window and slides it across the input:

\[ _{ij} = \sigma\Bigl_{i+a, j+b, c} + \text{bias}\Bigr), \]where \ is a filter of spatial size \ and depth . The same weights are applied at every spatial position — this is weight sharing. Multiple filters produce multiple output feature maps, each detecting a different pattern.

The output spatial dimensions are determined by the input size, filter size, stride, and padding. For input size , filter , stride , and padding :

\[ \text{output size} = \left\lfloor\frac{m + 2p - a}{s}\right\rfloor + 1 \quad \times \quad \left\lfloor\frac{n + 2q - b}{t}\right\rfloor + 1. \]Pooling Layers

Pooling reduces spatial resolution, decreasing computation and providing some translation invariance. Max pooling takes the maximum value in each local window; average pooling takes the mean. Pooling operates independently on each channel and has no learnable parameters. Global average pooling reduces each feature map to a single value by averaging over the entire spatial extent, often used before the final classification layer.

Architecture Evolution

The evolution of CNN architectures illustrates key design principles:

LeNet was among the first successful CNNs, applied to handwritten digit recognition. It uses two convolutional layers with average pooling, followed by fully connected layers.

AlexNet scaled up to 8 layers and won the ImageNet Large Scale Visual Recognition Challenge by a wide margin. Key innovations included ReLU activations, dropout regularization, and GPU training.

VGGNet demonstrated that depth matters: using only \ filters, it achieved excellent results with 16–19 layers. Three stacked \ filters have the same receptive field as one \ filter but with fewer parameters: \ = 27) versus \).

GoogLeNet/Inception introduced the Inception module with parallel branches of different filter sizes, and notably used \ convolutions to control channel dimensionality. It was deeper yet more efficient, eliminating fully connected layers in favor of global average pooling.

Skip Connections and Residual Learning

ResNet solved the degradation problem — deeper networks can paradoxically perform worse than shallower ones — by introducing skip connections. A residual block computes

\[ \mathbf{h}_{l+1} = \sigma), \]where \ is a stack of convolutional layers. The network only needs to learn the residual \ rather than the full mapping. Skip connections provide a direct path for gradients during backpropagation, enabling training of networks with over 100 layers. If the optimal transformation is close to the identity, learning a small residual is far easier than learning the full function from scratch.

Transfer Learning and Fine-Tuning

Training a CNN from scratch requires large datasets and significant compute. Transfer learning leverages a network pre-trained on a large dataset and adapts it to a new task. In feature extraction, the pre-trained convolutional layers are frozen and only the final classifier is retrained. In fine-tuning, some or all pre-trained layers are unfrozen and trained with a small learning rate. Transfer learning is effective because early CNN layers learn generic features that transfer well across tasks.

Modern Directions

Vision Transformers apply the Transformer architecture to images by splitting them into \ patches, linearly embedding each patch, and processing the sequence of patch embeddings with standard self-attention. With sufficient data and compute, ViTs match or exceed CNN performance.

ConvNeXt revisited pure CNN designs, incorporating Transformer-inspired modifications to match ViT performance while retaining the inductive biases and efficiency of convolutions. The boundary between CNNs and Transformers continues to blur.

Chapter 14: Recurrent Neural Networks

Feed-forward networks assume fixed-size inputs. Many real-world problems — language modeling, speech recognition, time-series forecasting — involve sequences of variable length. Recurrent neural networks address this by maintaining a hidden state that summarizes past inputs.

Sequence Modeling Motivation

Consider next-word prediction: given a prefix “The cat is”, predict the next word. The prefix length varies, and the prediction depends on the entire history. We need a model that can process sequences of arbitrary length while maintaining a compressed summary of past information.

Vanilla RNN

A recurrent neural network processes a sequence \ by maintaining a hidden state \ that is updated at each time step:

\[ \mathbf{h}_t = \tanh, \quad \hat{\mathbf{y}}_t = \text{softmax}, \]where \ are weight matrices and \ are biases. Crucially, the parameters are shared across all time steps — the same function is applied at each step, with only the hidden state and input changing. The hidden state \ serves as a compressed memory of the sequence .

RNNs can handle various input-output configurations: many-to-many, many-to-one, one-to-many, and sequence-to-sequence with an encoder-decoder structure.

Backpropagation Through Time

Training an RNN is conceptually straightforward: unroll the network across time steps to create a deep feed-forward network, then apply standard backpropagation. This is called backpropagation through time. The loss is typically summed over time steps: \). The gradient with respect to \ involves a sum over contributions from each time step, each of which requires propagating gradients backward through the recurrence.

Vanishing and Exploding Gradients

The gradient of the loss at time \ with respect to the hidden state at time \ involves the product . Each factor is approximately ) \cdot W). If the spectral radius of this Jacobian is consistently less than 1, gradients shrink exponentially — the vanishing gradient problem. If greater than 1, they grow exponentially — the exploding gradient problem.

Because the same function is applied at every step, RNNs are particularly susceptible: in a feed-forward network, different layers have different Jacobians that may partially cancel, but in an RNN the repeated multiplication amplifies the effect.

Gradient clipping addresses the exploding gradient problem: if , rescale . Truncated BPTT limits backpropagation to a fixed number of steps , approximating the true gradient. Neither solves the vanishing gradient problem, which requires architectural innovations.

Long Short-Term Memory

The LSTM introduces a cell state \ that flows through time with minimal interference, controlled by three gates:

Forget gate: \). Decides what information to discard from the cell state.

Input gate: \). Decides what new information to store.

Output gate: \). Decides what to output from the cell state.

The update equations are:

\[ \tilde{\mathbf{c}}_t = \tanh, \quad \mathbf{c}_t = \mathbf{f}_t \odot \mathbf{c}_{t-1} + \mathbf{i}_t \odot \tilde{\mathbf{c}}_t, \quad \mathbf{h}_t = \mathbf{o}_t \odot \tanh. \]The cell state \ provides a direct additive path for gradients, analogous to skip connections in ResNets. When the forget gate is close to 1 and the input gate is close to 0, the cell state is preserved unchanged, enabling information to persist over long distances.

Gated Recurrent Unit

The GRU simplifies the LSTM by merging the cell state and hidden state and using two gates:

Reset gate: \).

Update gate: \).

\[ \tilde{\mathbf{h}}_t = \tanh + \mathbf{b}), \quad \mathbf{h}_t = \mathbf{z}_t \odot \mathbf{h}_{t-1} + \odot \tilde{\mathbf{h}}_t. \]If , the candidate \ ignores past history. If , the hidden state is copied unchanged. The GRU achieves comparable performance to LSTM with fewer parameters.

Bidirectional RNNs and Encoder-Decoder

A bidirectional RNN processes the sequence in both directions, producing forward hidden states \ and backward hidden states , which are concatenated: . This allows each position to incorporate context from both the past and future.

The encoder-decoder architecture uses one RNN to read the input sequence into a fixed-length context vector, and another RNN to generate the output sequence. This is the foundation of sequence-to-sequence models for machine translation, though it has been largely superseded by the attention mechanism and Transformers.

Chapter 15: Attention and Transformers

The Transformer architecture replaced recurrence with attention, achieving state-of-the-art results first in machine translation and then across essentially all of NLP, vision, and beyond. Transformers underpin GPT, BERT, and virtually all modern large language models.

The Attention Mechanism

where the attention weights /\lambda)_i) form a probability distribution over the keys.

The attention weights can be derived as the solution to an entropy-regularized optimization problem:

\[ \boldsymbol{\pi}^* = \arg\min_{\boldsymbol{\pi} \in \Delta_{m-1}} \sum_{i=1}^m \pi_i \cdot \text{dist} + \lambda \sum_i \pi_i \log \pi_i, \]where the first term measures query-key similarity and the second term is an entropic regularizer. Solving via the KKT conditions yields the softmax form.

Scaled Dot-Product Attention

The dominant choice in Transformers is the dot-product similarity \ = -\mathbf{q}^\top \mathbf{k}), giving:

\[ \text{Attention} = \text{softmax}\!\left V, \]where , , \ are matrices of queries, keys, and values. The scaling factor \ prevents the dot products from growing large in magnitude, which would push the softmax into saturated regions with near-zero gradients. When \ and \ have entries drawn independently from \), \ = d_k), so dividing by \ normalizes the variance to 1.

Multi-Head Attention

A single attention function can only focus on one type of relationship. Multi-head attention runs \ attention functions in parallel, each with its own learned projections:

\[ \text{head}_i = \text{Attention}, \quad \text{MultiHead} = [\text{head}_1; \dots; \text{head}_h]W^O, \]where , , and . Setting \ keeps the total parameter count comparable to single-head attention. Different heads can attend to different positions and capture different types of relationships.

The Transformer Architecture

The Transformer follows an encoder-decoder structure. The encoder consists of \ identical layers, each containing:

- Multi-head self-attention.

- A position-wise feed-forward network: \ = \sigmaW_2), where , , and \ is GELU.

- Residual connections and layer normalization after each sub-layer.

The decoder adds a third sub-layer: cross-attention where queries come from the decoder and keys/values from the encoder output. In the decoder’s self-attention, a causal mask prevents positions from attending to future tokens by setting \ = -\infty) for .

Positional Encoding

Since attention treats its input as a set, positional information must be injected explicitly. The original Transformer uses sinusoidal positional encoding:

\[ p_{t,2i} = \sin, \quad p_{t,2i+1} = \cos, \]which is added to the token embeddings. The key property is that the encoding of position \ is a linear function of the encoding at position , enabling the model to learn relative positions. The sinusoidal form also allows generalization to sequence lengths unseen during training.

Learned positional embeddings simply treat positions as learnable parameters, which works comparably well for fixed maximum lengths. Rotary positional embeddings, used in modern LLMs, encode relative position information by rotating the query and key vectors, providing better extrapolation to longer sequences.

Self-Attention vs Cross-Attention

Self-attention from the same source) allows each token to attend to all other tokens within the same sequence. It is the core building block of both encoder and decoder. Cross-attention uses queries from one sequence and keys/values from another, enabling the decoder to attend to encoder representations. In encoder-only models, only self-attention is used. In decoder-only models, only masked self-attention is used.

Complexity and Why Transformers Dominate

The computational comparison between architectures is revealing:

| Layer Type | Per-layer Complexity | Sequential Ops | Max Path Length |

|---|---|---|---|

| Self-attention | \) | \) | \) |

| Recurrent | \) | \) | \) |

| Convolution) | \) | \) | \) |

Here \ is sequence length and \ is model dimension. The critical advantage of self-attention is the \) maximum path length: any two tokens can interact directly, whereas RNNs require \) sequential steps and CNNs require \) layers of dilated convolutions. This direct interaction, combined with massive parallelism, enables Transformers to scale to enormous model sizes.

The quadratic cost \) in sequence length is the main bottleneck, motivating efficient attention variants for very long sequences.

Pre-training Paradigms

The Transformer’s success is inseparable from large-scale pre-training. GPT pre-trains a decoder-only Transformer as a causal language model: predict the next token given all previous tokens. BERT pre-trains an encoder-only Transformer with masked language modeling: randomly mask 15% of tokens and predict them from context. GPT excels at generation; BERT at understanding tasks requiring bidirectional context. Modern large language models build on the decoder-only paradigm at massive scale.

Chapter 16: Graph Neural Networks

Many real-world datasets have an underlying graph structure: molecules, social networks, knowledge graphs, and traffic networks. Graph neural networks extend deep learning to non-Euclidean domains where the data lives on graphs of varying size and topology.

Graphs in Machine Learning

A graph \) consists of a node set , edge set , node features \ for each , and optionally edge features \ for each . Learning tasks can be at the node level, edge level, or graph level. For instance, predicting whether a molecular graph is active against certain cancer cells is a graph-level task, while classifying users in a social network is a node-level task.

The Message Passing Framework

where \ is a learnable function and \) denotes the neighbors of . The parameters \ are shared across all nodes.

This message passing paradigm has three steps at each layer: each node sends a “message” based on its current representation; each node aggregates messages from its neighbors; each node updates its representation based on the aggregated messages. The aggregation function must be permutation-invariant since there is no canonical ordering of neighbors.

PageRank can be viewed as a special case with linear aggregation: \sum_{ \in E} \frac{1}{|\mathcal{N}|} h_u). Unlike GNNs, PageRank uses a fixed, non-learnable aggregation.

Graph Convolutional Networks

The GCN defines a simple and effective layer:

\[ X^{l+1} = \sigma\!\left, \]where \ adds self-loops to the adjacency matrix, \ is the degree matrix of , \ contains node features at layer , and \ is a learnable weight matrix. In vector form for a single node:

\[ \mathbf{h}_v^{l+1} = \sigma\!\left} \frac{a_{vu}}{\sqrt{}}\mathbf{h}_u^l\right) W^l. \]One layer of GCN aggregates information from 1-hop neighbors. Stacking \ layers aggregates from -hop neighborhoods.

Spectral vs Spatial Methods

GNNs can be motivated from two perspectives. The spectral approach defines graph convolution via the graph Fourier transform. The graph Laplacian ) plays the role of the frequency operator. Given the eigendecomposition , the spectral convolution of graph signals \ and \ is:

\[ \mathbf{x} * \mathbf{g} = U[ \odot], \]analogous to the convolution theorem in classical signal processing: convolution in the graph domain equals pointwise multiplication in the spectral domain.

The Chebyshev net avoids the expensive eigendecomposition by approximating the spectral filter with a Chebyshev polynomial of order : \mathbf{x}), where \ has spectrum in \ and \ are Chebyshev polynomials. This requires only \ sparse matrix-vector multiplications, costing \).

GCN can be seen as a first-order Chebyshev approximation with a renormalization trick: setting \ and adding self-loops to improve numerical conditioning.