CS 240: Data Structures and Data Management

Éric Schost

Estimated study time: 4 hr 11 min

Table of contents

Chapter 1: Introduction and Algorithm Analysis

What This Course Is About

When you first learned to program, the primary question was correctness: does the program produce the right output? CS 240 introduces a second, equally important dimension — efficiency. A correct program that takes a billion years to run is useless. We want programs that are both correct and fast, and this course provides the mathematical tools to reason rigorously about “fast.”

The subject matter is data structures and data management: efficient methods for storing, accessing, and manipulating large collections of data. The typical operations of interest are insertion, deletion, search, and sorting. Throughout, we treat these problems at the level of abstract data types (ADTs) — precise specifications of what a data structure must do, independent of how it is implemented — and we study concrete implementations (data structures coupled with algorithms) that realize those specifications efficiently.

The Algorithm Analysis Framework

Before we can say an algorithm is “efficient,” we need a precise, implementation-independent way to measure its cost. Direct measurement is too fragile: the same code runs at different speeds on different hardware, different operating systems, and with different compilers. Instead, we count primitive operations — arithmetic, comparisons, memory reads and writes — under the Random Access Machine (RAM) model.

The RAM model assumes a simplified computer in which every memory cell holds one word of data, any memory access takes constant time, and any elementary arithmetic or logical operation takes constant time. Under this model, the running time of an algorithm on an input instance \(I\) is the total number of primitive operations executed. We write this as \(T_A(I)\).

Two refinements matter immediately. First, running time depends on the size of the input, not just its contents. We write \(\text{Size}(I)\) for a positive integer measuring how large the instance is (for sorting, this is typically the number of elements \(n\)). Second, two inputs of the same size can require very different amounts of work. This leads to the twin notions of worst-case complexity:

\[ T_A(n) = \max\{\, T_A(I) : \text{Size}(I) = n \,\} \]and average-case complexity:

\[ T_A^{\text{avg}}(n) = \frac{1}{|\{I : \text{Size}(I)=n\}|} \sum_{\{I:\text{Size}(I)=n\}} T_A(I). \]Worst-case complexity is the more conservative and most commonly used measure: it guarantees the algorithm will never be slower than \(T_A(n)\), regardless of input.

Asymptotic Notation

Counting operations exactly introduces messy constants that depend on machine details. What really matters is growth rate — how running time scales as \(n\) grows large. Asymptotic notation captures this rigorously by abstracting away constant factors and lower-order terms.

Big-O gives an asymptotic upper bound: \(f\) grows no faster than \(g\), up to a constant. For example, to prove \(2n^2 + 3n + 11 \in O(n^2)\) from first principles, we need to find \(c\) and \(n_0\) such that \(2n^2 + 3n + 11 \leq c\,n^2\) for all \(n \geq n_0\). Taking \(c = 3\) works when \(n \geq 14\), since for such \(n\) we have \(3n \leq n^2\) and \(11 \leq n^2\), giving \(2n^2 + 3n + 11 \leq 3n^2\).

Big-O alone is too loose for meaningful comparison — after all, \(2n^2 + 3n + 11 \in O(n^{10})\) as well. We need a lower bound.

Big-Theta is a tight, two-sided bound. When we can establish a \(\Theta\)-bound, we have characterized the growth rate completely. For comparing algorithms, we should always use \(\Theta\)-notation where possible; a statement like “algorithm A has running time \(O(n^3)\)” does not rule out the possibility that it is actually \(\Theta(n)\).

The family is rounded out by little-o and little-omega for strict inequalities:

Intuitively, \(f \in o(g)\) means \(f\) is strictly smaller in growth than \(g\); \(f \in \omega(g)\) means strictly larger. These are analogous to \(<\) and \(>\), while \(O\), \(\Omega\), and \(\Theta\) are analogous to \(\leq\), \(\geq\), and \(=\).

Key Relationships and Algebraic Rules

The five notations are related symmetrically: \(f \in O(g) \Leftrightarrow g \in \Omega(f)\), and \(f \in \Theta(g) \Leftrightarrow g \in \Theta(f)\). Little-o implies big-O but not big-Omega.

Two rules make calculation easier. The identity rule states \(f(n) \in \Theta(f(n))\). The maximum rule states that for positive \(f\) and \(g\),

\[ O(f(n) + g(n)) = O(\max\{f(n),\, g(n)\}), \]and similarly for \(\Omega\). This means the dominant term wins: \(O(n^2 + n\log n) = O(n^2)\).

Transitivity holds for all five notations: if \(f \in O(g)\) and \(g \in O(h)\), then \(f \in O(h)\).

The Limit Technique

A convenient shortcut uses limits. Suppose \(f(n) > 0\), \(g(n) > 0\) for large \(n\), and the limit \(L = \lim_{n \to \infty} f(n)/g(n)\) exists (possibly \(+\infty\)). Then:

\[ f(n) \in \begin{cases} o(g(n)) & \text{if } L = 0, \\ \Theta(g(n)) & \text{if } 0 < L < \infty, \\ \omega(g(n)) & \text{if } L = \infty. \end{cases} \]This follows directly from the definitions. For example, since \(\lim_{n\to\infty} \log n / n = 0\) (by L’Hôpital’s rule), we have \(\log n \in o(n)\), i.e., any polynomial of positive degree grows faster than any power of \(\log n\). More precisely, for any constants \(c > 0\) and \(d > 0\), \((\log n)^c \in o(n^d)\).

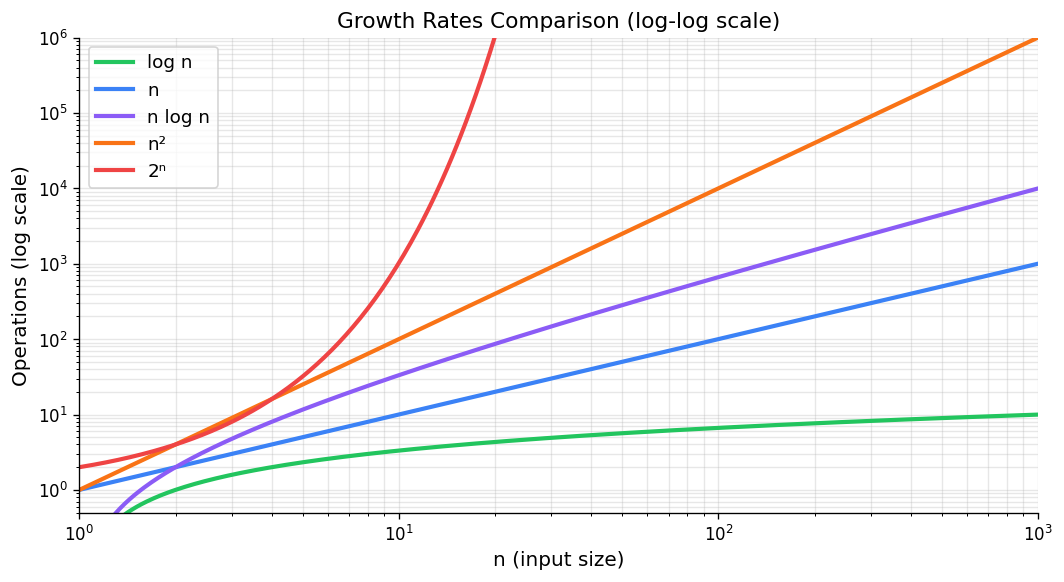

Growth rates comparison — log-log scale shows relative asymptotic behavior.

Common Growth Rates

The growth rates appearing most often in algorithm analysis, in increasing order, are:

\[ \Theta(1) \;\prec\; \Theta(\log n) \;\prec\; \Theta(n) \;\prec\; \Theta(n \log n) \;\prec\; \Theta(n^2) \;\prec\; \Theta(n^3) \;\prec\; \Theta(2^n), \]where \(\prec\) means “strictly slower growth.” These correspond to constant, logarithmic, linear, linearithmic, quadratic, cubic, and exponential complexity. The practical significance becomes clear when the problem size doubles from \(n\) to \(2n\): a constant-time algorithm is unaffected; a logarithmic one gains only one extra unit of time; a linear one doubles; a quadratic one quadruples; an exponential one squares.

Any polynomial \(f(n) = c_d n^d + \cdots + c_0\) with \(c_d > 0\) satisfies \(f(n) \in \Theta(n^d)\), since the limit \(f(n)/n^d \to c_d\). Also, \(\log(n!) = \Theta(n \log n)\), a fact used in lower bound arguments.

Analyzing Algorithms in Practice

To analyze a concrete algorithm, we identify the elementary operations (each costing \(\Theta(1)\)), sum their counts as the algorithm executes, and simplify using the rules above.

For a simple nested loop, we work from the inside out. Consider:

Test1(n):

sum <- 0

for i <- 1 to n do

for j <- i to n do

sum <- sum + (i - j)^2

return sum

The innermost statement executes \(\Theta(1)\) work. The inner loop runs \(n - i + 1\) times for each \(i\). Summing over \(i\):

\[ \sum_{i=1}^{n} (n - i + 1) = \sum_{k=1}^{n} k = \frac{n(n+1)}{2} \in \Theta(n^2). \]So Test1 runs in \(\Theta(n^2)\) time.

When an algorithm has branches or data-dependent early exits, proving a tight bound may require establishing \(O\) and \(\Omega\) separately. For \(O\), use upper bounds on loop counts; for \(\Omega\), exhibit a specific family of inputs that always hit the worst case.

Useful Summation Formulas

Several standard series appear frequently:

Arithmetic series: \(\displaystyle\sum_{i=0}^{n-1}(a + di) = na + \frac{dn(n-1)}{2} \in \Theta(n^2)\) when \(d \neq 0\).

Geometric series: \(\displaystyle\sum_{i=0}^{n-1} r^i = \frac{r^n - 1}{r - 1} \in \Theta(r^{n-1})\) for \(r > 1\), and \(\in \Theta(1)\) for \(0 < r < 1\).

Harmonic series: \(\displaystyle H_n = \sum_{i=1}^{n} \frac{1}{i} = \ln n + \gamma + o(1) \in \Theta(\log n)\), where \(\gamma \approx 0.5772\) is the Euler–Mascheroni constant.

Power sums: \(\displaystyle\sum_{i=1}^{n} i^k \in \Theta(n^{k+1})\) for \(k \geq 0\).

Case Study: Analysis of MergeSort

Merge sort recursion tree for 8 elements — divide-and-conquer, O(n log n) total work.

MergeSort is the canonical example of divide-and-conquer analysis. The algorithm splits the input array in half, recursively sorts each half, and then merges the two sorted halves. In pseudocode:

MergeSort(A, l, r):

if r <= l: return

m <- floor((l + r) / 2)

MergeSort(A, l, m)

MergeSort(A, m+1, r)

Merge(A, l, m, r)

The Merge procedure takes two adjacent sorted subarrays A[l..m] and A[m+1..r] and merges them using an auxiliary array S. It scans both halves with two pointers, always copying the smaller front element into the output. This takes exactly \(r - l + 1\) steps, so merging \(n\) elements costs \(\Theta(n)\).

Let \(T(n)\) be the worst-case running time of MergeSort on an array of length \(n\). The two recursive calls handle halves of size \(\lceil n/2 \rceil\) and \(\lfloor n/2 \rfloor\), and the merge costs \(\Theta(n)\), giving the recurrence:

\[ T(n) = T\!\left(\left\lceil \tfrac{n}{2} \right\rceil\right) + T\!\left(\left\lfloor \tfrac{n}{2} \right\rfloor\right) + \Theta(n), \qquad T(1) = \Theta(1). \]When \(n = 2^k\) is a power of two, the floors and ceilings vanish, yielding the clean recurrence \(T(n) = 2T(n/2) + cn\). Unrolling this \(k = \log_2 n\) levels produces a recursion tree: at level \(j\), there are \(2^j\) subproblems of size \(n/2^j\), each doing \(cn/2^j\) work merging, for a total of \(cn\) work per level. With \(\log n\) levels, the total is \(cn \log n\), giving:

This analysis generalizes to all \(n\) by a careful argument on the exact recurrence with floors and ceilings. The following recurrence table summarizes several important divide-and-conquer patterns:

| Recurrence | Solution | Example |

|---|---|---|

| \(T(n) = T(n/2) + \Theta(1)\) | \(\Theta(\log n)\) | Binary search |

| \(T(n) = 2T(n/2) + \Theta(n)\) | \(\Theta(n \log n)\) | MergeSort |

| \(T(n) = 2T(n/2) + \Theta(\log n)\) | \(\Theta(n)\) | Heapify |

| \(T(n) = T(cn) + \Theta(n)\) for \(0 < c < 1\) | \(\Theta(n)\) | QuickSelect (avg) |

| \(T(n) = T(\sqrt{n}) + \Theta(1)\) | \(\Theta(\log \log n)\) | Interpolation search |

Once a solution is conjectured, it can usually be verified by substitution (induction). More systematic techniques appear in CS 341.

Chapter 2: Priority Queues and Heaps

Abstract Data Types and the Priority Queue

A recurring theme in this course is the separation between what a data structure does (its abstract interface) and how it does it (its concrete implementation). An abstract data type (ADT) specifies a collection of data and a set of operations on that data, with no commitment to any particular internal representation. Different realizations of the same ADT can offer radically different performance characteristics.

The Stack ADT (push/pop, LIFO order) and the Queue ADT (enqueue/dequeue, FIFO order) are familiar examples, both realizable with arrays or linked lists. In this chapter we study a richer ADT: the priority queue.

insert(x): add item \(x\) with its associated key to the collection.

deleteMax(): remove and return the item with the largest key.

A minimum-oriented priority queue replaces deleteMax with deleteMin; the two are symmetric and can be interconverted by negating keys. Applications abound: a task scheduler processes jobs in order of urgency; a simulation system fires events in chronological order; an event-driven game engine processes collisions by time. And, as we will see, priority queues can sort.

PQ-Sort and the Challenge of Implementation

Any priority queue can be turned into a sorting algorithm. Given an array \(A[0..n-1]\), insert all \(n\) elements, then extract them in order via deleteMax:

PQ-Sort(A):

initialize PQ to an empty priority queue

for k <- 0 to n-1 do

PQ.insert(A[k])

for k <- n-1 down to 0 do

A[k] <- PQ.deleteMax()

The total time is \(O(n + n \cdot \text{insert} + n \cdot \text{deleteMax})\). The choice of implementation determines the practical cost:

If we use an unsorted array, insertion is \(O(1)\) (append to the end, expanding with doubling if needed — amortized \(O(1)\) per insert), but deleteMax must scan the whole array, costing \(O(n)\). PQ-Sort with an unsorted array is \(\Theta(n^2)\), which corresponds exactly to selection sort.

If we use a sorted array, deleteMax is \(O(1)\) (remove from the end), but maintaining sorted order requires inserting each new element into its correct position in \(O(n)\) time. PQ-Sort with a sorted array gives insertion sort, also \(\Theta(n^2)\).

Both naive approaches are quadratic. To do better, we need a data structure that balances the cost of insertion and deletion, achieving \(O(\log n)\) for each. The answer is the binary heap.

Binary Heaps: Structure and Representation

Structural property: All levels are completely filled except possibly the last, which is filled left-to-right (i.e., the tree is a complete binary tree).

Heap-order property: For every node \(i\), the key of \(i\)'s parent is greater than or equal to the key of \(i\).

The structural property ensures the tree is “as balanced as possible.” An immediate consequence is:

Proof sketch. A complete binary tree with all levels full has height \(\lfloor \log_2 n \rfloor\). The last (possibly incomplete) level can add at most a constant factor. Since any binary tree with \(n\) nodes has height at least \(\log_2(n+1) - 1 \in \Omega(\log n)\), and the structural property gives height at most \(\lfloor \log_2 n \rfloor \in O(\log n)\), the height is \(\Theta(\log n)\).

The heap-order property ensures the maximum is always at the root, giving \(O(1)\) access to the maximum element.

Binary max-heap — complete binary tree with heap-order property, and its array representation.

Array Representation

Heaps are stored compactly in an array, not as a linked tree. Level-order traversal gives the mapping: the root goes to A[0], then the two children of the root go to A[1] and A[2], and so on. For a heap of size \(n\) stored in A[0..n-1]:

Index of root: 0

Left child of A[i]: A[2i + 1]

Right child of A[i]: A[2i + 2]

Parent of A[i] (i != 0): A[floor((i-1)/2)]

Last node: A[n - 1]

This is illustrated by the following example heap with keys [50, 29, 34, 27, 15, 8, 10, 23, 26]:

50 <- A[0]

/ \

29 34 <- A[1], A[2]

/ \ / \

27 15 8 10 <- A[3..6]

/ \

23 26 <- A[7], A[8]

The array representation eliminates the memory overhead of pointers entirely, and all navigation formulas are simple arithmetic. In practice, implementation should hide these formulas behind helper functions root(), parent(i), leftChild(i), etc., to improve readability and correctness.

Heap Operations

Insertion: Fix-Up (Bubble Up)

To insert a new key \(x\) into a heap of size \(n\), place \(x\) at position A[n] (the next available leaf position) and then restore the heap-order property by bubbling up:

fix-up(A, k):

while parent(k) exists and A[parent(k)] < A[k]:

swap A[k] and A[parent(k)]

k <- parent(k)

The new element climbs toward the root, swapping with its parent each time its key is larger. It stops when either it reaches the root or its parent’s key is at least as large. Since the heap has height \(\Theta(\log n)\), fix-up traverses at most \(\Theta(\log n)\) edges.

Time: \(O(\log n)\).

DeleteMax: Fix-Down (Bubble Down)

To remove the maximum (the root), we cannot simply delete A[0] without leaving a gap. Instead, swap A[0] with the last leaf A[n-1], reduce the heap size by one (the former root is now outside the heap at A[n-1]), and restore the heap-order property by bubbling down from the root:

fix-down(A, n, k):

while k is not a leaf:

j <- left child of k

if j is not the last node and A[j+1] > A[j]:

j <- j + 1 // j is now the larger child

if A[k] >= A[j]: break

swap A[j] and A[k]

k <- j

At each step, the displaced element is swapped with the larger of its two children. This guarantees that after the swap, both the swapped element and the child that did not move still satisfy the heap-order property with respect to their own subtrees. The procedure runs in \(O(\log n)\) time.

The full deleteMax operation wraps fix-down:

deleteMax():

l <- last(size)

swap A[root()] and A[l]

decrease size

fix-down(A, size, root())

return A[l]

Both insert and deleteMax run in \(O(\log n)\) time, and PQ-Sort with a heap implementation takes \(O(n \log n)\).

Building a Heap: Heapify in Linear Time

When all \(n\) elements are available in advance, there is a much faster way to build a heap than inserting elements one by one.

Approach 1 (Bubble-up): Insert elements sequentially, running fix-up after each insertion. This is \(\Theta(n \log n)\) in the worst case: elements near the end of the array may travel all the way to the root.

Approach 2 (Heapify — Bubble-down): Observe that all leaves are already trivially valid single-element heaps. Work backwards from the last internal node to the root, applying fix-down at each position:

heapify(A):

n <- A.size()

for i <- parent(last(n)) downto 0:

fix-down(A, n, i)

The last internal node is at index parent(n-1) = floor((n-2)/2).

heapify runs in \(\Theta(n)\) time.Proof. The key observation is that nodes near the bottom of the tree have small subtrees, and fix-down at those nodes is cheap. At height \(h\) above the leaves, there are at most \(\lceil n/2^{h+1} \rceil\) nodes, and fix-down costs at most \(O(h)\). Summing over all heights:

\[ \sum_{h=0}^{\lfloor \log n \rfloor} \left\lceil \frac{n}{2^{h+1}} \right\rceil \cdot O(h) = O\!\left(n \sum_{h=0}^{\infty} \frac{h}{2^h}\right) = O(n), \]since the series \(\sum_{h=0}^{\infty} h/2^h = 2\) converges. Therefore heapify is \(O(n)\); and clearly it is \(\Omega(n)\) since it must examine each element, so the bound is \(\Theta(n)\).

This is a striking result: despite each individual fix-down taking \(O(\log n)\) time, the combined cost of all fix-downs is only linear, because most nodes sit near the bottom and their fix-downs terminate quickly.

HeapSort

Heapify combined with repeated deleteMax gives an elegant in-place sorting algorithm. Crucially, we reuse the input array as the heap, avoiding any extra space:

HeapSort(A, n):

// Phase 1: build the heap in-place

for i <- parent(last(n)) downto 0:

fix-down(A, n, i)

// Phase 2: extract elements in sorted order

while n > 1:

swap A[root()] and A[last(n)]

decrease n

fix-down(A, n, root())

In Phase 1, heapify establishes the max-heap in \(\Theta(n)\) time. In Phase 2, we repeatedly swap the maximum (at the root) to the end of the current heap, shrink the heap by one, and restore the heap property. After \(n-1\) extractions, the array is sorted in ascending order.

Phase 2 performs \(n-1\) fix-down operations on heaps of decreasing size. Each fix-down costs \(O(\log n)\), so Phase 2 is \(O(n \log n)\). The total running time is \(\Theta(n) + O(n \log n) = O(n \log n)\). Since Phase 2 also requires \(\Omega(n \log n)\) in the worst case (a sorted-order input forces each fix-down to traverse the full height), HeapSort is \(\Theta(n \log n)\).

HeapSort has the remarkable property of using only \(O(1)\) auxiliary space (beyond the input array itself), unlike MergeSort which needs \(\Theta(n)\) extra space. It is the only \(\Theta(n \log n)\) sorting algorithm we have seen with this property.

The Selection Problem

We close this chapter with the selection problem: given an array \(A\) of \(n\) numbers and an index \(0 \leq k < n\), find the element that would appear at position \(k\) in the sorted array. Finding the median is the special case \(k = \lfloor n/2 \rfloor\).

Heaps offer several approaches:

Solution 1 (repeated scan): Make \(k\) passes, deleting the maximum each time. Cost: \(\Theta(kn)\), which is \(\Theta(n^2)\) for the median.

Solution 2 (sort first): Sort, then index. Cost: \(\Theta(n \log n)\).

Solution 3 (sliding min-heap): Scan the array, maintaining a min-heap of the \(k\) largest elements seen so far. The top of the min-heap is the \(k\)th largest. Cost: \(\Theta(n \log k)\).

Solution 4 (heapify then extract): Call heapify to build a max-heap in \(\Theta(n)\), then call deleteMax exactly \(k\) times. Cost: \(\Theta(n + k \log n)\).

For the median (\(k = n/2\)), Solutions 2–4 all give \(\Theta(n \log n)\). The question of whether selection can be done faster — in linear time — is addressed in Chapter 3 via the QuickSelect algorithm.

Advanced Heap Structures

The binary heap is an excellent all-around priority queue: simple to implement, cache-friendly in its array layout, and \(O(\log n)\) for both insert and deleteMax. Its one significant limitation is the merge operation. Given two binary heaps of sizes \(n_1\) and \(n_2\), the only known approach using the array representation is to concatenate the two arrays and run heapify, which costs \(\Theta(n_1 + n_2)\) — linear in the combined size. For applications that frequently merge priority queues (external sorting, network routing, Dijkstra’s algorithm with edge relaxations across components), this linear merge cost is a real bottleneck. Two alternative structures overcome it: meldable heaps achieve \(O(\log n)\) expected merge time through randomisation, and binomial heaps achieve \(O(\log n)\) worst-case merge time through a more elaborate structural invariant.

Meldable Heaps

A meldable heap is a binary tree satisfying the heap-order property — every node’s key is at least as large as the keys of its children — but with no structural property whatsoever. The tree can be completely skewed, arbitrarily unbalanced, or have height anywhere from \(\Theta(\log n)\) to \(\Theta(n)\). Abandoning the structural property is what makes merging tractable.

All priority queue operations reduce to a single subroutine: merge(P1, P2), which combines two meldable heaps into one. The algorithm is recursive and randomised:

Input: roots r1, r2 of two meldable heaps (r1 is not null)

1. If r2 is null, return r1

2. If r1.key < r2.key, swap r1 and r2

3. Randomly pick one of r1's two children, call it c (with equal probability for each child)

4. Replace c with merge(r2, c)

5. Return r1

The key insight is step 2: by ensuring the heap with the larger root plays the role of r1, we guarantee that r1’s root remains the maximum in the merged heap, preserving the heap-order property from the top down. The random choice of which child to recurse into is the trick that keeps the expected cost small.

The other operations are immediate reductions to merge:

- insert(k): Create a new single-node heap \(P'\) containing key \(k\). Call

merge(P, P'). This is \(O(\log n)\) expected. - deleteMax: The maximum is at the root. Remove it, exposing two sub-heaps \(P_L\) and \(P_R\) rooted at the root’s two children. Call

merge(P_L, P_R). This is \(O(\log n)\) expected.

Expected runtime of merge. The merge algorithm traces a random downward walk in each of the two heaps simultaneously: at each level, we pick a child uniformly at random and descend into it. The walk terminates when it hits an empty subtree. The run-time of merge is therefore proportional to the total length of these two walks.

The proof is a clean induction on \(n\). Let \(T(n)\) denote this expected length. The base case \(n=1\) is immediate: the walk visits exactly 1 node and \(\log 2 = 1\). For \(n > 1\), let the left and right subtrees have sizes \(n_L\) and \(n_R\) with \(n_L + n_R = n - 1\). With probability \(1/2\) we go left (expected total length \(1 + T(n_L)\)) and with probability \(1/2\) we go right (expected total length \(1 + T(n_R)\)), so

\[ T(n) \leq 1 + \frac{1}{2}T(n_L) + \frac{1}{2}T(n_R) \leq 1 + \frac{1}{2}\log(n_L+1) + \frac{1}{2}\log(n_R+1). \]By the concavity of \(\log\), and using \(\frac{1}{2}(n_L+1) + \frac{1}{2}(n_R+1) = \frac{n+1}{2}\),

\[ T(n) \leq 1 + \log\!\left(\frac{n+1}{2}\right) = 1 + \log(n+1) - 1 = \log(n+1), \]completing the induction. Since merge does two such walks (one in each heap), its expected run-time is \(O(\log n_1 + \log n_2) = O(\log n)\) where \(n = n_1 + n_2\).

The worst case for merge is still \(\Theta(n)\) — a maximally skewed heap where every random choice descends to the lowest leaf — but this event has probability \(2^{-n}\), so it is essentially irrelevant in practice.

Binomial Heaps

Meldable heaps are conceptually simple but offer only expected guarantees. Binomial heaps replace randomisation with a carefully engineered structural invariant that yields \(O(\log n)\) worst-case merge time.

Binomial trees. The building block is a family of trees \(B_0, B_1, B_2, \ldots\) defined recursively:

- \(B_0\) is a single node.

- \(B_k\) is formed by taking two copies of \(B_{k-1}\) and making one the leftmost child of the other’s root.

(1) \(B_k\) has exactly \(2^k\) nodes.

(2) \(B_k\) has height \(k\).

(3) The root of \(B_k\) has exactly \(k\) children.

(4) The children of \(B_k\)'s root are \(B_0, B_1, \ldots, B_{k-1}\) (from right to left).

The name “binomial” reflects that at depth \(d\), \(B_k\) has exactly \(\binom{k}{d}\) nodes — the binomial coefficient. The properties follow easily from the recursive definition by induction.

Binomial heap structure. A binomial heap is a collection (list) of binomial trees such that no two trees have the same order \(k\). Given a heap of size \(n\), write \(n\) in binary: the collection contains \(B_k\) if and only if the \(k\)-th bit of \(n\) is 1. For example, \(n = 13 = 1101_2\) means the heap contains trees \(B_0\), \(B_2\), and \(B_3\) (13 = 1 + 4 + 8 nodes total). Since the binary representation of \(n\) has at most \(\lfloor \log n \rfloor + 1\) bits, a binomial heap contains at most \(\lfloor \log n \rfloor + 1\) trees.

Each tree satisfies the heap-order property: every node’s key is at least as large as the keys of all nodes in its subtrees. The maximum of the entire heap is therefore the maximum among the roots of the constituent binomial trees, found in \(O(\log n)\) by scanning the root list.

Merging two binomial trees. If we have two copies of \(B_k\), we can merge them into a single \(B_{k+1}\) in \(O(1)\) time: compare the two roots, make the smaller root a child of the larger root (to preserve heap order), and the former left subtrees attach appropriately. This mirrors binary addition: two trees of order \(k\) produce one carry of order \(k+1\).

All operations in \(O(\log n)\). Merging two binomial heaps of sizes \(n_1\) and \(n_2\) is exactly like adding two binary numbers: walk through the tree lists from smallest order to largest, combining any two trees of equal order into a carry for the next order. The process visits at most \(O(\log n)\) trees total and each combining step is \(O(1)\), giving \(O(\log n)\) merge.

Insert is a degenerate merge: a single new element is a \(B_0\), and merging it into the existing heap propagates at most \(O(\log n)\) carries. DeleteMax finds the root list’s maximum in \(O(\log n)\), removes its tree \(B_k\), then splits \(B_k\) into its children \(B_0, B_1, \ldots, B_{k-1}\) — which themselves form a valid binomial heap of size \(2^k - 1\) — and merges that back into the remainder in \(O(\log n)\). All standard priority queue operations thus cost \(O(\log n)\) in the worst case.

Comparison. The binary heap remains preferable when merge is not required: the array layout gives excellent cache behaviour and small constant factors. But if the application merges heaps frequently, binomial heaps (or meldable heaps for a simpler implementation) are the right choice. A further refinement, Fibonacci heaps, achieves amortised \(O(1)\) insert and decreaseKey, which is exploited by Dijkstra’s algorithm in dense graphs — but that analysis is reserved for a later course.

Chapter 3: Sorting

The Selection Problem Revisited: QuickSelect

The heap-based selection algorithms of Chapter 2 achieve \(\Theta(n + k \log n)\) time. For median finding with \(k = n/2\), this is \(\Theta(n \log n)\) — the same cost as sorting. Can we find the \(k\)th element in \(O(n)\) time, for any fixed \(k\)?

The QuickSelect algorithm answers yes, in the average case. It is built from two subroutines that are also central to QuickSort.

The Partition Subroutine

choose-pivot(A) selects an index \(p\); the simplest strategy returns the rightmost index.

partition(A, p) rearranges \(A\) so that all elements smaller than the pivot \(v = A[p]\) appear to its left, and all elements larger appear to its right, returning the final index \(i\) of \(v\):

After partition: A[0..i-1] <= v == A[i] <= A[i+1..n-1]

A conceptually simple implementation creates auxiliary lists small and large and concatenates them. More practically, Hoare’s in-place partition uses two pointers scanning from opposite ends:

partition(A, p):

swap(A[n-1], A[p])

i <- -1, j <- n-1, v <- A[n-1]

loop:

do i <- i+1 while i < n and A[i] < v

do j <- j-1 while j > 0 and A[j] > v

if i >= j: break

else: swap(A[i], A[j])

swap(A[n-1], A[i])

return i

The left pointer i advances until it finds an element \(\geq v\); the right pointer j retreats until it finds an element \(\leq v\). When both are “stuck,” we swap the out-of-place pair and repeat. When the pointers cross, the pivot is swapped into its final position. This runs in \(\Theta(n)\) time and uses \(O(1)\) extra space.

The QuickSelect Algorithm

quick-select(A, k):

p <- choose-pivot(A)

i <- partition(A, p)

if i == k: return A[i]

else if i > k: return quick-select(A[0..i-1], k)

else: return quick-select(A[i+1..n-1], k - i - 1)

After partitioning, the pivot is in its correct sorted position \(i\). If \(i = k\), we are done. If \(i > k\), the \(k\)th element is in the left sub-array; if \(i < k\), it is in the right sub-array. Only one recursive call is made (unlike QuickSort, which makes two).

Worst-case analysis: If the pivot always lands at one extreme (e.g., the array is sorted and we always pick the rightmost element), each call reduces the problem by only 1:

\[ T(n) = T(n-1) + cn \implies T(n) \in \Theta(n^2). \]Average-case analysis: Assume all \(n!\) permutations of the input are equally likely (or equivalently, that the relative order of elements is what matters — the sorting permutation model). For each pivot index \(i\) (which occurs for \((n-1)!\) out of \(n!\) permutations), the recursive call has size at most \(\max(i, n-i-1)\). Therefore:

\[ T(n) \leq cn + \frac{1}{n} \sum_{i=0}^{n-1} \max\{T(i),\, T(n-i-1)\}. \]Proof sketch. Assume \(T(n) \leq an\) for some constant \(a\) to be determined. The sum \(\frac{1}{n}\sum_{i=0}^{n-1}\max\{T(i), T(n-i-1)\}\) can be bounded by noting that \(\max(T(i), T(n-i-1)) \leq T(n-1)\) in the worst case, but on average the pivot splits the array roughly in half. A careful calculation (treating the recurrence as a telescoping inequality) shows that \(T(n) \leq 4cn\), confirming the \(O(n)\) bound. The \(\Omega(n)\) lower bound is trivial since we must read all inputs.

Randomized Algorithms

The average-case result above assumed a uniform distribution over input permutations. In practice, adversarial or highly structured inputs can trigger the worst case. Randomized algorithms shift the source of variability from the input (which we cannot control) to the random choices made by the algorithm (which we can analyze in expectation).

For a randomized algorithm, we define the expected running time on instance \(I\) as:

\[ T^{(\text{exp})}(I) = \mathbb{E}[T(I, R)] = \sum_{R} T(I, R) \cdot \Pr[R], \]where \(R\) ranges over all possible random choices. The worst-case expected running time is then \(\max_{\text{Size}(I)=n} T^{(\text{exp})}(I)\).

There are two natural ways to randomize QuickSelect:

Shuffle first: Randomly permute the input before running the deterministic algorithm. After shuffling, every permutation is equally likely regardless of the original input, so the expected running time equals the average-case deterministic running time: \(\Theta(n)\).

Random pivot: Simply select the pivot index uniformly at random:

choose-pivot2(A): return random(n)

With probability \(1/n\) the pivot lands at each index \(i\), so the analysis is identical to the average-case analysis: the expected running time is \(\Theta(n)\).

The random-pivot approach is generally preferred in practice because it is simpler and avoids the overhead of a full shuffle. There exists a deterministic linear-time selection algorithm (the “median of medians” method), but it is slower by a constant factor and is deferred to CS 341.

QuickSort

QuickSort, developed by C.A.R. Hoare in 1960, applies the same partition idea to sorting by recursing on both sides of the pivot:

quick-sort(A):

if n <= 1: return

p <- choose-pivot(A)

i <- partition(A, p)

quick-sort(A[0..i-1])

quick-sort(A[i+1..n-1])

Worst case: If the pivot always lands at an extreme position, the recurrence is the same as for QuickSelect: \(T(n) = T(n-1) + \Theta(n)\), giving \(T(n) \in \Theta(n^2)\).

Best case: If the pivot always splits the array exactly in half, we get the MergeSort recurrence \(T(n) = 2T(n/2) + \Theta(n)\), giving \(T(n) \in \Theta(n \log n)\).

Average case: Over all \(n!\) permutations, with probability \(1/n\) the pivot has rank \(i\), leaving sub-arrays of sizes \(i\) and \(n-i-1\). The recurrence is:

\[ T^{(\text{avg})}(n) = cn + \frac{1}{n} \sum_{i=0}^{n-1} \left[T^{(\text{avg})}(i) + T^{(\text{avg})}(n-i-1)\right], \qquad n \geq 2. \]Proof sketch. Multiply both sides by \(n\) and subtract the analogous equation for \(n-1\) to telescoping. One obtains \(nT(n) - (n-1)T(n-1) = cn^2 + 2T(n-1)\), which rearranges to \(T(n)/(n+1) = T(n-1)/n + c'\) for an appropriate constant \(c'\). Unrolling gives \(T(n)/(n+1) = \sum_{k=2}^{n} c' \approx c' \ln n\), so \(T(n) \approx c'(n+1)\ln n \in \Theta(n \log n)\).

Using a random pivot (choose-pivot2) gives an expected running time of \(\Theta(n \log n)\), with the same analysis since the randomness is equivalent to a uniform permutation of the input.

Practical Improvements

Several engineering refinements make QuickSort the fastest sorting algorithm in practice for most inputs:

Auxiliary space: The recursion stack uses \(\Omega(\text{recursion depth})\) space — \(\Theta(n)\) in the worst case. By always recursing on the smaller sub-array first and replacing the larger recursion with a loop (tail-call optimization), the stack depth is reduced to \(\Theta(\log n)\) in the worst case.

Cutoff to insertion sort: When sub-arrays become small (say, \(n \leq 10\)), stop recursing and instead run a final pass of insertion sort over the entire array. Since each element is within 10 positions of its final location at that point, the insertion sort pass costs \(O(n)\) total.

Three-way partition: For inputs with many duplicate keys, the two-way partition can degrade. A three-way partition produces three regions — elements less than, equal to, and greater than the pivot — and recurses only on the outer two. This reduces the work on the duplicate elements to zero.

Lower Bound for Comparison-Based Sorting

The sorting algorithms seen so far — Selection Sort, Insertion Sort, MergeSort, HeapSort, QuickSort — all operate in the comparison model: elements can only be examined by comparing pairs, and moved around. Under this model, a fundamental lower bound holds.

Proof. Model any deterministic comparison-based sorting algorithm as a decision tree: each internal node represents a comparison between two elements, with two branches for the two outcomes; each leaf is a possible sorted permutation of the input. For the algorithm to correctly sort every input, the decision tree must have at least one leaf for each of the \(n!\) possible input permutations. A binary tree with \(\ell\) leaves has height at least \(\log_2 \ell\). Since \(\ell \geq n!\), the worst-case number of comparisons (the height of the tree) satisfies:

\[ \text{comparisons} \geq \log_2(n!) = \sum_{k=1}^{n} \log_2 k \geq \sum_{k=\lceil n/2 \rceil}^{n} \log_2 k \geq \frac{n}{2} \log_2\!\left(\frac{n}{2}\right) = \frac{n}{2}(\log_2 n - 1) \in \Omega(n \log n). \]Therefore no comparison-based algorithm can beat \(\Omega(n \log n)\) in the worst case. \(\square\)

This is a striking information-theoretic argument: just to communicate which of the \(n!\) permutations is the correct sorted order requires at least \(\log_2(n!)\) bits, and each comparison provides at most one bit.

The result does not apply to algorithms that exploit additional structure of the keys — for example, when keys are integers in a bounded range.

Non-Comparison-Based Sorting: Radix and Count Sort

When keys are integers (or can be encoded as such), we can beat \(\Omega(n \log n)\) by reading individual digits of the keys rather than comparing whole keys. These algorithms are not in the comparison model.

Single-Digit Bucket Sort

Assume keys are integers with digits from a radix \(R\) alphabet \(\{0, 1, \ldots, R-1\}\). To sort by a single digit \(d\):

Bucket sort creates \(R\) “buckets” \(B[0], B[1], \ldots, B[R-1]\) (each a linked list), distributes each element into the bucket matching its \(d\)th digit, then concatenates the buckets back into the array.

Bucket-Sort(A, d):

initialize B[0..R-1] as empty lists

for i <- 0 to n-1:

append A[i] to B[digit_d(A[i])]

i <- 0

for j <- 0 to R-1:

move all elements of B[j] into A[i++]

Bucket sort is stable: elements with the same digit value appear in the output in the same relative order as in the input. This stability is crucial for multi-digit sorting. Running time: \(\Theta(n + R)\); auxiliary space: \(\Theta(n)\) for the lists.

Count Sort

Bucket sort wastes space on linked list overhead. A space-optimized variant, count sort (also called key-indexed count sort), avoids lists by pre-computing where each group starts:

key-indexed-count-sort(A, d):

count[0..R-1] <- 0

for i <- 0 to n-1:

count[digit_d(A[i])]++

idx[0] <- 0

for i <- 1 to R-1:

idx[i] <- idx[i-1] + count[i-1]

aux[0..n-1] <- empty array

for i <- 0 to n-1:

aux[idx[digit_d(A[i])]] <- A[i]

idx[digit_d(A[i])]++

A <- copy(aux)

The count array tallies how many elements have each digit value. The idx array converts those counts into starting positions via prefix sums. Each element is then placed directly at its correct position in the auxiliary array aux. This too runs in \(\Theta(n + R)\) time.

MSD and LSD Radix Sort

For keys with \(m\) digits each, the single-digit sort extends in two natural ways.

MSD-Radix-Sort (Most Significant Digit first) sorts by the leading digit, then recursively sorts each resulting group by the next digit, and so on. This requires managing up to \(R\) recursive subproblems at each level, leading to \(R^m\) potential leaf groups and significant recursion overhead.

LSD-Radix-Sort (Least Significant Digit first) sorts by the last digit first, then the second-to-last, and so on up to the most significant digit. Because count sort is stable, each pass preserves the ordering established by earlier passes:

LSD-Radix-Sort(A):

for d <- m down to 1:

key-indexed-count-sort(A, d)

After sorting by digit \(d\), the loop invariant is that \(A\) is sorted with respect to digits \(d, d+1, \ldots, m\). When digit 1 is processed last, the array is fully sorted.

[123, 230, 021, 320, 210, 232, 101]:After sorting by digit 3 (last):

[230, 320, 210, 021, 101, 232, 123]After sorting by digit 2:

[101, 210, 320, 021, 123, 230, 232]After sorting by digit 1:

[021, 101, 123, 210, 230, 232, 320]Time: Each of the \(m\) passes runs in \(\Theta(n + R)\) time, giving a total of \(\Theta(m(n + R))\). If keys are \(b\)-bit integers and \(R = 2^r\) for some block size \(r\), then \(m = b/r\) and the cost is \(\Theta\!\left(\frac{b}{r}(n + 2^r)\right)\), which is optimized by choosing \(r = \log_2 n\) to give \(\Theta(bn/\log n)\). For fixed-length keys (\(b = O(\log n)\)), this is \(O(n)\) — linear time.

Auxiliary space: \(\Theta(n + R)\).

Sorting: The Big Picture

The following table summarizes the algorithms covered so far:

| Algorithm | Worst-Case | Average-Case | Extra Space |

|---|---|---|---|

| Selection Sort | \(\Theta(n^2)\) | \(\Theta(n^2)\) | \(O(1)\) |

| Insertion Sort | \(\Theta(n^2)\) | \(\Theta(n^2)\) | \(O(1)\) |

| MergeSort | \(\Theta(n \log n)\) | \(\Theta(n \log n)\) | \(\Theta(n)\) |

| HeapSort | \(\Theta(n \log n)\) | \(\Theta(n \log n)\) | \(O(1)\) |

| QuickSort (det.) | \(\Theta(n^2)\) | \(\Theta(n \log n)\) | \(O(\log n)\)* |

| QuickSort (rand.) | \(\Theta(n^2)\)** | \(\Theta(n \log n)\) expected | \(O(\log n)\)* |

| LSD-Radix Sort | \(\Theta(m(n+R))\) | \(\Theta(m(n+R))\) | \(\Theta(n+R)\) |

* With tail-call optimization. ** With probability exponentially small in \(n\).

The \(\Omega(n \log n)\) lower bound applies to all comparison-based algorithms, making MergeSort and HeapSort asymptotically optimal in that model. QuickSort is faster in practice despite its quadratic worst case, because its average-case constant is small. Radix sort sidesteps the lower bound by exploiting the integer structure of keys, achieving linear time when \(m\) and \(R\) are small — but it requires keys that can be decomposed digit-by-digit, unlike fully general comparison-based sorting.

Chapter 4: Dictionaries and Balanced Search Trees

4.1 The Dictionary ADT

A dictionary is one of the most fundamental abstract data types in computer science. Conceptually, it models any collection of items where each item consists of a key and associated data, forming a key-value pair (KVP). Keys are drawn from some totally ordered universe and are assumed to be unique within the dictionary. The three core operations are:

- search(k): locate the item with key \(k\), returning the value or signalling failure.

- insert(k, v): add the pair \((k, v)\) to the collection.

- delete(k): remove the item with key \(k\).

Optional extensions include closestKeyBefore(k), join, isEmpty, size, and various ordered queries. The dictionary appears throughout software engineering under many guises: symbol tables in compilers, DNS lookup tables, in-memory caches, and license plate databases are all dictionaries at heart.

Elementary Implementations

Before diving into sophisticated structures, it pays to know the landscape of naive implementations. We assume throughout that the dictionary holds \(n\) KVPs, each occupying constant space, and that key comparisons take constant time.

An unordered array or linked list stores items in no particular order. Insertion is cheap — simply append or prepend, costing \(\Theta(1)\) — but search and delete both require scanning the entire collection in the worst case, giving \(\Theta(n)\) for each.

An ordered array keeps items sorted by key, enabling binary search and thus \(\Theta(\log n)\) search time. The price is paid on mutation: inserting or deleting a key requires shifting elements to maintain order, costing \(\Theta(n)\) per operation.

Neither naive approach delivers logarithmic time for all three operations simultaneously. The solution lies in tree-based structures.

4.2 Binary Search Trees: A Review

A binary search tree (BST) organises \(n\) KVPs in a rooted binary tree satisfying the BST ordering property: for every node storing key \(k\), every key in the left subtree is strictly less than \(k\), and every key in the right subtree is strictly greater.

15

/ \

6 25

\ / \

10 23 29

/ \ \

8 24 50

Binary Search Tree — BST ordering property: left subtree < node < right subtree.

Search proceeds by comparison at each node: if the target equals the current key, return it; if the target is smaller, recurse into the left subtree; otherwise recurse right. The process terminates either at the target or at an empty subtree indicating absence. Insert is identical in traversal logic — the new leaf is placed exactly where a search for the new key would have bottomed out.

Deletion handles three cases:

- The target node is a leaf: simply remove it.

- The target node has exactly one non-empty subtree: splice the node out by linking its parent directly to its child.

- The target node has two non-empty subtrees: find the inorder successor (or predecessor) — the smallest key in the right subtree — swap keys, then delete the successor from the right subtree. The successor is guaranteed to have at most one child, reducing this case to case 1 or 2.

All three operations cost \(\Theta(h)\), where \(h\) is the height of the tree, defined as the maximum path length from root to leaf.

The Height Problem

The height of a BST depends critically on insertion order. In the worst case — inserting keys in sorted or reverse-sorted order — the tree degenerates into a path of length \(n - 1 = \Theta(n)\), making every operation linear. In the best case, a perfectly balanced binary tree on \(n\) nodes has height \(\lfloor \log_2(n+1) \rfloor - 1 = \Theta(\log n)\). It can be shown that for a uniformly random insertion sequence, the expected height is also \(\Theta(\log n)\), but an adversary can always force worst-case behaviour. This motivates the need for a self-balancing tree.

4.3 AVL Trees

The AVL tree, introduced by Adel’son-Vel’skiĭ and Landis in 1962, is the classical answer to the BST height problem. It augments the BST with a height-balance property enforced at every node:

The quantity \(\text{height}(R) - \text{height}(L) \in \{-1, 0, +1\}\) is called the balance factor of the node. A balance factor of \(-1\) means the node is left-heavy, \(0\) means balanced, and \(+1\) means right-heavy. Each node stores either its subtree height or its balance factor; the implementation in CS 240 stores heights directly.

Why AVL Trees Have Logarithmic Height

The proof proceeds by counting nodes in the skinniest possible AVL tree. Let \(N(h)\) denote the minimum number of nodes in any height-\(h\) AVL tree. Base cases: \(N(-1) = 0\) (empty tree), \(N(0) = 1\) (single root). For \(h \geq 1\), the thinnest AVL tree of height \(h\) has root, one subtree of height \(h-1\) (the taller one), and one subtree of height \(h-2\) (the shortest the AVL property permits):

\[N(h) = 1 + N(h-1) + N(h-2)\]This is structurally identical to the Fibonacci recurrence shifted by one. Setting \(F_k\) for the \(k\)-th Fibonacci number, one can show \(N(h) = F_{h+3} - 1\). Since Fibonacci numbers grow as \(F_k \approx \phi^k / \sqrt{5}\) where \(\phi = (1+\sqrt{5})/2 \approx 1.618\), we get \(n \geq N(h) \approx \phi^{h+3}/\sqrt{5}\), so \(h \leq \log_\phi(n\sqrt{5}) - 3 = O(\log n)\). The lower bound \(h = \Omega(\log n)\) follows from the fact that any binary tree with \(n\) nodes has height at least \(\lfloor \log_2(n+1) \rfloor - 1\). Together: \(h = \Theta(\log n)\), and all AVL operations cost \(\Theta(\log n)\).

4.4 AVL Insertion and Rotations

AVL rotations — left rotation (right-heavy) and right rotation (left-heavy) restore balance.

To insert \((k, v)\) into an AVL tree:

- Perform the standard BST insertion, obtaining a new leaf \(z\) with height 0.

- Walk back up toward the root, updating heights at each ancestor using

setHeightFromSubtrees. - At the first ancestor \(z\) where the balance factor becomes \(\pm 2\), the AVL property is violated. Perform a restructuring at \(z\) and stop — one restructuring is always sufficient for insertion.

AVL-insert(r, k, v)1. z ← BST-insert(r, k, v)

2. z.height ← 0

3. while z is not the root:

4. z ← parent of z

5. if |z.left.height − z.right.height| > 1 then

6. y ← taller child of z (break ties arbitrarily)

7. x ← taller child of y (prefer left-left or right-right)

8. z ← restructure(x)

9. break // one restructure suffices for insertion

10. setHeightFromSubtrees(z)

The Four Rotation Cases

The key insight is that for any three nodes \(x, y, z\) (grandchild, child, grandparent) and their four subtrees \(A, B, C, D\) in sorted order, there is exactly one perfectly balanced arrangement where the median key becomes the root. The four cases correspond to the four possible shapes of the \(x, y, z\) path.

Right Rotation (left-left case, \(z\) is right-heavy-left or “LL”): \(y\) is the left child of \(z\) and \(x\) is the left child of \(y\).

z y

/ \ / \

y D --> x z

/ \ / \ / \

x C A B C D

/ \

A B

The operation: set \(y \leftarrow z.\text{left}\), then \(z.\text{left} \leftarrow y.\text{right}\), then \(y.\text{right} \leftarrow z\). Update heights of \(z\) then \(y\). Return \(y\) as the new root of the subtree. Only two pointer changes and two height updates are needed.

Left Rotation (right-right case, RR): the symmetric mirror of the right rotation. \(y\) is the right child of \(z\), \(x\) is the right child of \(y\). The left rotation brings \(y\) up and pushes \(z\) down-left.

Double Right Rotation (left-right case, LR): \(y\) is the left child of \(z\), but \(x\) is the right child of \(y\). A single right rotation would not fix the imbalance. Instead, first apply a left rotation at \(y\) (which transforms this into an LL configuration at \(z\)), then a right rotation at \(z\).

z z x

/ \ / \ / \

y D --> x D --> y z

/ \ / \ / \ / \

A x y C A B C D

/ \ / \

B C A B

Double Left Rotation (right-left case, RL): the symmetric mirror. First a right rotation at \(y\), then a left rotation at \(z\).

The restructure(x) function examines the relative positions of \(x\), \(y = \text{parent}(x)\), and \(z = \text{parent}(y)\) to dispatch to the appropriate case. A memorable rule: the median key among \(x, y, z\) always becomes the new subtree root.

4.5 AVL Deletion

Deletion is more involved. The procedure:

- Perform BST deletion on key \(k\). Let \(z\) be the node that was structurally removed (in the two-children case, this is the inorder successor that got spliced out, not the node originally holding \(k\)).

- Starting from \(z\), walk up toward the root, updating heights and restructuring whenever the balance factor reaches \(\pm 2\).

- Unlike insertion, deletion may require up to \(\Theta(h)\) restructurings — each rotation may fix one imbalance only to expose a new one higher up.

AVL-delete(r, k)1. z ← BST-delete(r, k) // z is child of removed node

2. setHeightFromSubtrees(z)

3. while z is not the root:

4. z ← parent of z

5. if |z.left.height − z.right.height| > 1 then

6. y ← taller child of z

7. x ← taller child of y

8. z ← restructure(x)

9. setHeightFromSubtrees(z) // always continue up

Note the contrast with insertion: there is no break after restructuring. Deletion may propagate violations all the way to the root.

Runtime Summary

| Operation | Cost |

|---|---|

| AVL-search | \(\Theta(\log n)\) worst-case |

| AVL-insert | \(\Theta(\log n)\) worst-case (one restructure) |

| AVL-delete | \(\Theta(\log n)\) worst-case (up to \(O(\log n)\) restructures) |

All operations are dominated by the tree height, which is provably \(\Theta(\log n)\). The AVL tree is thus the first data structure in this course achieving worst-case logarithmic time for all dictionary operations simultaneously.

Amortized Analysis and Scapegoat Trees

The Idea Behind Amortized Analysis

Most complexity analyses focus on the worst-case cost of a single operation. But many data structures exhibit bursty behaviour: most operations are cheap, and occasional operations are expensive. If we charge the full worst-case cost to every operation, we are being pessimistic — the expensive operations cannot happen in every step because the very conditions that make them expensive take many cheap steps to create.

Amortized analysis accounts for this by analysing the total cost of a sequence of \(k\) operations and deriving a bound on the average cost per operation, called the amortized cost. Crucially, this average is taken over any possible sequence of operations in a worst-case sense — it is not an average over random inputs. If the amortized cost of each operation is \(f(n)\), then any sequence of \(k\) operations costs at most \(k \cdot f(n)\) in total.

This definition says that the amortized costs form an “account” that is never overdrawn: the sum of credited amounts always covers the sum of actual costs. An individual amortized cost can be larger than the actual cost for a given step (we are “saving up”), or it can even be zero (we are drawing from savings), as long as the total account balance remains non-negative.

The Potential Function Method

While simple arguments (counting cheap and expensive operations) suffice for textbook examples, a systematic approach is needed for more complex structures. The potential function method provides this.

Given a potential function, define the amortized cost of operation \(O\) as:

\[ T^{\text{amort}}(O) := T^{\text{actual}}(O) + \Phi_{\text{after}} - \Phi_{\text{before}} = T^{\text{actual}}(O) + \Delta\Phi. \]The change in potential \(\Delta\Phi\) acts like a “credit adjustment.” When an operation builds up structural disorder (increasing potential), it pays more than its actual cost. When an expensive operation reduces disorder (decreasing potential), the negative \(\Delta\Phi\) absorbs some of the actual cost. Since \(\Phi\) starts at zero and is always non-negative, the telescoping sum

\[ \sum_{i=1}^k T^{\text{amort}}(O_i) = \sum_{i=1}^k T^{\text{actual}}(O_i) + \Phi(k) - \Phi(0) \geq \sum_{i=1}^k T^{\text{actual}}(O_i) \]confirms that the amortized costs upper-bound the actual costs. The art is in choosing \(\Phi\) so that the amortized costs are small.

Example — dynamic array doubling. When a dynamic array of current size \(n\) fills its capacity, it allocates a new array of double the capacity and copies all elements. The actual copy costs \(\Theta(n)\), but it is preceded by \(n/2\) cheap \(O(1)\) insertions. Define

\[ \Phi = \max\{0,\; 2 \cdot \text{size} - \text{capacity}\}. \]When the array is at half capacity, \(\Phi = 0\). Each cheap insertion increases size by 1 without changing capacity, so \(\Delta\Phi = 2\), giving amortized cost \(1 + 2 = 3 = O(1)\). When the array doubles, size equals capacity so \(\Phi_{\text{before}} = \text{size}\). After doubling, capacity = 2·size so \(\Phi_{\text{after}} = 0\), giving \(\Delta\Phi = -\text{size}\) and amortized cost \(\text{size} + (-\text{size}) = 0\). The expensive copy is fully covered by the accumulated potential, confirming that push is amortized \(O(1)\).

Scapegoat Trees

AVL trees maintain height balance by storing balance information at every node and performing rotations on every insert and delete. Scapegoat trees take a radically different approach: they do not store any balance information, they never perform rotations, but they occasionally rebuild a subtree from scratch into a perfectly balanced BST. The rebuilding is triggered only when a node becomes sufficiently unbalanced, and the amortized analysis shows that the occasional rebuild cost is spread over enough cheap operations to keep the amortized cost logarithmic.

Weight-balance. Fix a parameter \(\alpha \in (1/2, 1)\) (a typical choice is \(\alpha = 2/3\)). For a node \(v\), let \(\text{size}(v)\) denote the number of nodes in the subtree rooted at \(v\).

A BST is an \(\alpha\)-weight-balanced scapegoat tree if every node is \(\alpha\)-weight-balanced. An unbalanced node is called a scapegoat.

Height bound. If every node is \(\alpha\)-weight-balanced, the height is at most \(\log_{1/\alpha} n\). This is because on any root-to-leaf path, each step down into a subtree reduces the size by a factor of at most \(\alpha\), so after \(h\) steps the subtree has size at most \(\alpha^h n \geq 1\), giving \(h \leq \log_{1/\alpha} n = O(\log n)\).

Insert. Perform a standard BST insert, walking down and placing the new key at a leaf. After the insert, walk back up toward the root. If any ancestor \(v\) is now \(\alpha\)-weight-unbalanced — that is, one child has more than \(\alpha \cdot \text{size}(v)\) descendants — then \(v\) is the scapegoat. Rebuild the subtree rooted at \(v\) into a perfectly balanced BST using an \(O(\text{size}(v))\) algorithm (e.g., sort the keys and build recursively from the median). After rebuild, every node in the subtree is \(\alpha\)-balanced.

Delete. Rather than immediately rebuilding, scapegoat trees use lazy deletion: mark the node as deleted without removing it from the tree structure. Deleted nodes participate in size counting (affecting balance checks). When the number of deleted nodes exceeds half the total number of nodes, rebuild the entire tree from scratch (discarding all marked nodes) in \(O(n)\) time.

Amortized analysis. To analyse scapegoat trees with the potential method, define

\[ \Phi(i) := c \cdot \sum_{v \in S} \max\!\bigl\{0,\; |\text{size}(\text{left}(v)) - \text{size}(\text{right}(v))| - 1\bigr\}, \]where the sum is over all nodes \(v\) in the current tree, and \(c > 0\) is a constant to be chosen. The term for each node is the excess imbalance above 1; a perfectly balanced node contributes 0. Clearly \(\Phi(0) = 0\) and \(\Phi(i) \geq 0\) for all \(i\).

For insert (excluding any rebuild): the new node \(z\) has at most \(O(\log n)\) ancestors, and each ancestor’s imbalance term can increase by at most 1, so \(\Delta\Phi \leq c \cdot O(\log n)\). The actual cost of the walk is \(O(\log n)\). Thus \(T^{\text{amort}}(\text{insert}) = O(\log n) + c \cdot O(\log n) = O(\log n)\).

For rebuild at node \(p\) with subtree size \(n_p\): the actual cost is \(O(n_p)\) time units. Before the rebuild, \(p\) was \(\alpha\)-unbalanced, so one of its children had size greater than \(\alpha \cdot n_p\). A short calculation shows \(|\text{size}(\text{left}(p)) - \text{size}(\text{right}(p))| \geq (2\alpha - 1)n_p + 1\), which means the potential contribution of \(p\) alone is at least \(c(2\alpha - 1)n_p\). After a perfect rebuild, every node’s imbalance is at most 1, so all potential contributions within the subtree drop to 0. Therefore \(\Delta\Phi \leq -c(2\alpha-1)n_p\). Setting \(c = 1/(2\alpha - 1)\) (which is positive since \(\alpha > 1/2\)) yields

\[ T^{\text{amort}}(\text{rebuild}) = n_p - c(2\alpha-1)n_p = n_p - n_p = O(1). \]The costly rebuild is entirely paid for by the potential that accumulated during prior insertions into the unbalanced subtree. Combining both cases, every scapegoat tree operation has amortized cost \(O(\log n)\), while worst-case individual operations can cost \(\Theta(n)\) when a large subtree is rebuilt.

Scapegoat trees occupy an interesting niche in the BST design space. They have simpler code than AVL trees (no rotations, no balance fields) while guaranteeing the same \(O(\log n)\) amortized performance. The trade-off is that while AVL trees are \(O(\log n)\) worst-case, scapegoat trees are only \(O(\log n)\) amortized — occasional operations can be slow.

Chapter 5: Skip Lists and Randomized Structures

Treaps: Randomized BSTs

The Core Idea

Both AVL trees and scapegoat trees require the implementation to actively monitor and maintain balance. A fundamentally different approach to randomization asks: what if we assign each key a random priority at insertion time, and then organise the tree to respect both the BST order on keys and a heap order on priorities? The resulting structure is called a treap — a portmanteau of tree and heap.

BST property: for every node \(v\), all keys in \(v\)'s left subtree are less than \(v.k\), and all keys in \(v\)'s right subtree are greater than \(v.k\).

Heap property: for every node \(v\), \(v.p\) is greater than or equal to the priorities of all nodes in \(v\)'s subtree (max-heap on priorities).

A key structural fact: if all \(n\) keys are distinct and all \(n\) priorities are distinct, then the treap structure is uniquely determined by the set of (key, priority) pairs. This is because the node with the highest priority must be the root (heap property), keys smaller than the root’s key must go left and larger ones right (BST property), and the same argument applies recursively within each subtree.

The following small example illustrates the structure. Keys are shown above, priorities below each key:

key: 10

pri: 9

/ \

key:5 key:14

pri:7 pri:6

/ \ / \

key:3 key:8 key:12 key:17

pri:4 pri:2 pri:5 pri:1

Reading horizontally: 3 < 5 < 8 < 10 < 12 < 14 < 17 (BST order). Reading vertically from root: 9 > 7 > 4, and 9 > 6 > 5 > 1, etc. (heap order). Both invariants hold simultaneously.

Expected Height: O(log n)

The key property of treaps is that when priorities are assigned uniformly at random, the expected height is \(O(\log n)\). The proof rests on a beautiful symmetry argument.

This is because the node with the highest priority must be inserted first (it becomes the root by the heap property), the node with the second highest priority is inserted second, and so on. Since the priority permutation is uniformly random, this insertion order is uniformly random, and by the classical result on randomly built BSTs, the expected height is \(O(\log n)\).

We can make the depth bound more precise using a direct probabilistic argument. Consider nodes \(x_i\) and \(x_j\) with \(i < j\) (so \(x_i < x_j\) in key order). Node \(x_i\) is an ancestor of \(x_j\) in the treap if and only if \(x_i\) has a higher priority than every other node in \(\{x_i, x_{i+1}, \ldots, x_j\}\). By symmetry among the \(j - i + 1\) nodes in this range, the probability that \(x_i\) has the maximum priority is exactly \(1/(j-i+1)\). Therefore

\[ \Pr[x_i \text{ is an ancestor of } x_j] = \frac{1}{j - i + 1}. \]The expected depth of node \(x_j\) equals the expected number of its proper ancestors, which is

\[ \mathbb{E}[\text{depth}(x_j)] = \sum_{i=1}^{j-1} \frac{1}{j-i+1} + \sum_{i=j+1}^{n} \frac{1}{i-j+1} \leq \sum_{m=1}^{n} \frac{1}{m} = H_n, \]where \(H_n = 1 + \frac{1}{2} + \cdots + \frac{1}{n} \approx \ln n\) is the \(n\)-th harmonic number. So the expected depth of any node is at most \(H_n = O(\log n)\), and the expected height of the entire treap is \(O(\log n)\) as well.

This is a stronger statement than the meldable heap analysis: not only is the average depth logarithmic, but the constant factor is exactly the harmonic number, giving a precise expected depth of roughly \(2 \ln n \approx 1.386 \log_2 n\) — slightly worse than the perfectly balanced tree’s \(\log_2 n\), but still \(O(\log n)\).

Operations

Search. A treap is a BST, so search proceeds by the standard BST algorithm: compare the query key against the root, then recurse into the left or right subtree. Since the expected height is \(O(\log n)\), search takes \(O(\log n)\) expected time.

Insert. To insert a new key \(k\):

- Assign \(k\) a priority chosen uniformly at random from \(\{0, \ldots, n\}\) (where \(n\) is the new size).

- Walk down the BST to the leaf position where \(k\) belongs, and insert \(k\) there as a new leaf.

- The new leaf may violate the heap property (its priority may be larger than its parent’s). Fix this by performing rotations: if the new node has a higher priority than its parent, rotate the parent down (a single rotation). Repeat until the heap property is restored or the node reaches the root.

The rotation used is the same as in AVL trees but driven by a different criterion. A right rotation at node \(y\) makes \(y\)’s left child \(x\) the new local root and attaches \(y\) as \(x\)’s right child, while \(x\)’s former right subtree becomes \(y\)’s new left subtree. Left rotations are symmetric. A single rotation adjusts the parent-child relationship between two nodes while preserving both the BST order and the heap order (because a rotation only changes one edge).

The expected number of rotations during insert is at most 2, since each rotation moves the new node one level up and the node ascends at most depth levels.

Delete. Deletion is conceptually the reverse of insertion:

- Find the node with key \(k\) using standard BST search.

- Set its priority to \(-\infty\) (conceptually).

- Since the node now has the lowest priority in its neighbourhood, the heap property forces it to rotate downward. Perform rotations — always rotating the higher-priority child up — until the target node becomes a leaf.

- Remove the leaf.

At each step, the node being deleted trades places with its higher-priority child, maintaining both the BST and heap invariants throughout. The expected number of rotations is \(O(\log n)\) (proportional to the depth of the deleted node).

All operations in \(O(\log n)\) expected time. Because the treap’s shape is determined solely by the random priorities, no fixed input sequence of insertions and deletions can force the treap into a bad shape. An adversary who knows the algorithm but not the random choices cannot construct a worst-case sequence. This is the essential advantage over deterministic BSTs: the randomisation makes the algorithm’s behaviour independent of the input distribution.

Treaps in Practice

Treaps require storing one extra integer (the priority) per node, plus a pointer to the parent for rotation purposes, giving a small constant-factor space overhead compared to a plain BST. This is smaller than the overhead of AVL trees (which also need parent pointers and a balance field) and roughly comparable to red-black trees.

In practice, treaps are valued for their simplicity: the insert and delete algorithms are shorter and less error-prone than AVL or red-black tree implementations, because the rotation logic is driven entirely by two simple invariants rather than case analyses on colour or height. Split and join operations on treaps (splitting a treap at a given key, or joining two treaps whose keys are disjoint) can also be implemented in \(O(\log n)\) expected time, which makes treaps useful as a building block for more complex data structures such as order-statistic trees and interval trees.

The relationship between treaps and skip lists — both randomised dictionary implementations introduced in this chapter — is worth noting. Both achieve \(O(\log n)\) expected time for all operations by injecting randomness into the structure rather than the input order. Skip lists use coin flips to determine the height of each tower; treaps use random priorities to determine the shape of a BST. In both cases, the adversary cannot predict the outcome of our random choices, so no input sequence is inherently pathological.

5.1 The Motivation for Randomization

By the end of Module 4, we have a clear picture of the dictionary landscape. Unordered structures offer cheap insertion but expensive search. The ordered array achieves fast search at the cost of slow mutation. AVL trees deliver \(\Theta(\log n)\) across the board, but their implementation is non-trivial — rotations require careful bookkeeping and the code for deletion in particular is intricate.

A natural question is: can we achieve the same asymptotic performance with a simpler structure? The average-case height of a randomly built BST (over all insertion permutations) is \(\Theta(\log n)\), which hints that “most” BSTs are well-shaped. The challenge is that an adversary can always choose an insertion order that defeats us. Randomization is the remedy: if we make random choices ourselves during insertion, no fixed input sequence can force bad behaviour. The expected performance is then over our own coin flips, not over the input distribution.

The skip list, introduced by William Pugh in 1990, is the canonical randomized dictionary. It achieves \(O(\log n)\) expected time for all operations with a structure that is both elegant and easy to implement.

5.2 Skip List Structure

A skip list \(S\) is a hierarchy of sorted linked lists \(S_0, S_1, \ldots, S_h\), where:

- \(S_0\) is the base list, containing all \(n\) KVPs in non-decreasing order of key, plus two sentinel nodes with keys \(-\infty\) and \(+\infty\) at the two ends.

- Each \(S_i\) for \(i > 0\) is a subsequence of \(S_{i-1}\), meaning \(S_0 \supseteq S_1 \supseteq \cdots \supseteq S_h\).

- The topmost list \(S_h\) contains only the two sentinels.

Each key present in multiple lists forms a tower of nodes, one per level, linked vertically. Every node \(p\) in the structure supports two pointer operations: after(p) returns the next node in the same list, and below(p) returns the corresponding node one level down.

S3: -inf ------------------------------------ +inf

S2: -inf ---------- 37 --------- 65 -- 94 -- +inf

S1: -inf -- 23 --- 37 --------- 65 -- 94 -- +inf

S0: -inf -- 23 -- 37 -- 44 -- 65 -- 69 -- 79 -- 83 -- 87 -- 94 -- +inf

The skip list is accessed via a pointer to the topmost-left node (the \(-\infty\) sentinel in \(S_h\)).

5.3 Search

The search algorithm exploits the layered structure to skip large blocks of the base list. Starting at the topmost sentinel, the algorithm alternates between two moves: scan forward along the current level as long as the next key is still less than the target, then drop down one level. This continues until the algorithm reaches level \(S_0\), after which one final forward scan lands just before where the target would appear.

skip-search(L, k)1. p ← topmost left node of L

2. P ← stack initially containing p

3. while below(p) ≠ null:

4. p ← below(p)

5. while key(after(p)) < k:

6. p ← after(p)

7. push p onto P

8. return P

The stack \(P\) collects the predecessor of \(k\) at each level \(S_0, S_1, \ldots\). After the algorithm completes, \(k\) is present in \(S\) if and only if key(after(top(P))) = k. The stack is returned because insert and delete also need these predecessors.

Example: Searching for 87 in the structure above. Starting at \(S_3\): scan right, reach \(+\infty\), stop; drop to \(S_2\). Scan right past 37, 65, 94 is too big — stop at 65; drop to \(S_1\). Scan right past 65, 83 is not reached — actually at level \(S_1\), scan past 65, 94 is too big — stop at 65; drop to \(S_0\). Scan right past 65, 69, 79, 83, stop before 87. Return P with predecessors. after(top(P)) has key 87 — found.

5.4 Insert

Insertion uses a randomised process to determine the tower height of the new key. The tower height is determined by flipping a fair coin repeatedly until tails appears: the number of heads is the height \(i\). Formally,

\[\Pr[\text{tower height} \geq i] = \left(\frac{1}{2}\right)^i\]so the expected tower height is 1, and the structure remains sparse at higher levels.

skip-insert(S, k, v)1. Toss coin until tails; let i = number of heads (tower height)

2. Increase the height of S if needed so that h > i

3. P ← skip-search(S, k)

4. Insert (k, v) after P[0] in S0

5. For j = 1 to i: insert k after P[j] in Sj (linking into tower)

If the coin produces heads more times than the current list height \(h\), new sentinel-only levels are created at the top. The key insight is that the insert is otherwise just a sequence of linked-list insertions guided by the predecessors collected during search.

5.5 Delete

Deletion is the conceptual inverse of insertion: search for the key, then use the collected predecessor stack to remove the key’s node from every level at which it appears. Finally, any top-level lists that have become sentinel-only (except one) are discarded to shrink the structure.

skip-delete(S, k)1. P ← skip-search(S, k)

2. For each level j: if key(after(P[j])) = k, remove after(P[j]) from Sj

3. Remove all but one of the lists Si containing only the two sentinels

5.6 Expected Complexity Analysis

The cost of any skip list operation depends on two quantities: the number of drop-downs (transitions between levels) and the number of scan-forwards (horizontal steps within a level).

Height bound: A skip list with \(n\) items has height at most \(3 \log n\) with probability at least \(1 - 1/n^2\). This follows from a union bound over all keys and levels. With overwhelming probability, \(h = O(\log n)\).

Scan-forwards analysis: At each level, the expected number of forward steps before dropping down is at most 2. This is because at level \(S_i\), the probability that any given element of \(S_{i-1}\) is also in \(S_i\) is \(1/2\), so the expected gap between promoted elements is 2.

Combining: the expected total number of steps in a search is \(O(\log n)\) drop-downs \(\times\) \(O(1)\) scan-forwards per level, giving \(O(\log n)\) expected time.

Space: Each element appears at level \(S_i\) with probability \((1/2)^i\). The expected total number of nodes across all levels is \(\sum_{i=0}^{\infty} n \cdot (1/2)^i = 2n = O(n)\).

| Operation | Expected time | Worst-case time |

|---|---|---|

| Search | \(O(\log n)\) | \(O(n)\) |

| Insert | \(O(\log n)\) | \(O(n)\) |

| Delete | \(O(\log n)\) | \(O(n)\) |

| Space | \(O(n)\) | \(O(n \log n)\) |

Skip lists are celebrated in practice for their simplicity: the code is far shorter and less error-prone than a balanced BST, concurrent implementations are natural (updating a linked list is more amenable to locking than rotating a tree), and the constants are small.

5.7 Re-ordering Items in Unordered Lists

A separate thread of Module 5 concerns a different strategy for dictionary implementation: ordered sequential search in an unordered list, where items are rearranged over time to exploit the temporal locality of access patterns. If some keys are accessed far more frequently than others, placing popular keys near the front reduces average search cost.

Optimal Static Ordering: If access probabilities \(p_1, p_2, \ldots, p_n\) are known in advance, the ordering that minimises expected access cost is simply sorting items in non-increasing order of access probability. A key at position \(j\) costs \(j\) comparisons, so the expected cost of ordering \(\sigma\) is \(\sum_j j \cdot p_{\sigma(j)}\), which the rearrangement inequality tells us is minimised when the highest probabilities come first.

Move-To-Front (MTF): When access probabilities are unknown, the move-to-front heuristic is used. Upon a successful search for item \(x\), move \(x\) to the front of the list. New items are also inserted at the front. This exploits temporal locality — recently accessed items tend to be accessed again soon.

MTF can be compared favourably to the optimal static ordering: it is 2-competitive, meaning the total cost of MTF over any access sequence is at most twice the total cost of the optimal static arrangement. This result holds in an adversarial model — no knowledge of future accesses is needed.

Transpose: A more conservative heuristic — swap a found item with its immediate predecessor. Transpose adapts more slowly and does not enjoy the 2-competitive guarantee.

Chapter 6: Dictionaries for Special Keys

6.1 Lower Bounds for Comparison-Based Search

The structures studied so far — BSTs, AVL trees, skip lists — all work by comparing keys to one another. A natural question is whether this approach is fundamentally limited.

The proof is an information-theoretic argument. Each comparison has at most three outcomes (less than, equal, greater than), so after \(k\) comparisons the algorithm has distinguished at most \(3^k\) possible situations. To correctly resolve search for any of the \(n\) keys (or conclude absence), we need at least \(n + 1\) distinguishable outcomes (one for each key, plus “not found”). Therefore \(3^k \geq n + 1\), giving \(k \geq \log_3(n+1) = \Omega(\log n)\).

This bound is tight — binary search on a sorted array achieves \(\Theta(\log n)\) using only two-way comparisons (less than or not). The question then becomes: can we do better if keys have special structure beyond mere ordering?

6.2 Interpolation Search

Binary search always probes the midpoint of the remaining range, regardless of key values. Yet if keys are numerical and uniformly distributed, we can do better by using the key values themselves to predict where the target likely lives.

Interpolation search modifies the choice of probe index. Given a target key \(k\) and a sorted array \(A[\ell \ldots r]\), instead of probing index \(\lfloor (\ell + r)/2 \rfloor\), we probe:

\[m = \ell + \left\lfloor \frac{k - A[\ell]}{A[r] - A[\ell]} \cdot (r - \ell) \right\rfloor\]This is a linear interpolation: if \(k\) sits \(60\%\) of the way from \(A[\ell]\) to \(A[r]\) in value, we probe \(60\%\) of the way from \(\ell\) to \(r\) in index. The intuition mirrors how a human would look up a name in a phone book — you don’t open to the exact middle to find “Zhao”.

interpolation-search(A, n, k)1. l ← 0; r ← n − 1

2. while (l < r) and (A[r] ≠ A[l]) and (k ≥ A[l]) and (k ≤ A[r]):

3. m ← l + floor((k − A[l]) / (A[r] − A[l]) * (r − l))

4. if A[m] < k: l ← m + 1

5. elsif A[m] = k: return m

6. else: r ← m − 1

7. if k = A[l]: return l

8. else: return "not found"

The guard A[r] ≠ A[l] prevents division by zero when all remaining elements are equal.

Example: Search for 449 in the array \([0, 1, 2, 3, 449, 450, 600, 800, 1000, 1200, 1500]\).

- \(\ell=0, r=10\): \(m = 0 + \lfloor \frac{449-0}{1500-0} \cdot 10 \rfloor = \lfloor 2.99 \rfloor = 2\). \(A[2] = 2 < 449\), so \(\ell \leftarrow 3\).

- \(\ell=3, r=10\): \(m = 3 + \lfloor \frac{449-3}{1500-3} \cdot 7 \rfloor = 3 + \lfloor 2.09 \rfloor = 5\). \(A[5] = 450 > 449\), so \(r \leftarrow 4\).

- \(\ell=3, r=4\): \(m = 3 + \lfloor \frac{449-3}{449-3} \cdot 1 \rfloor = 3 + 1 = 4\). \(A[4] = 449\). Found in 3 steps.

Complexity